Apple Pencil

While I’m no heavy user of the Apple Pencil myself, I can’t deny it’s rapidly become a key element of the iPad Pro experience for millions of users who rely on it to take handwritten notes, sketch, or put together amazing works of art. iPadOS 14 brings some major improvements and additions to Apple Pencil ranging from system-wide support for any text field to deeper integration with Notes and higher performance for PencilKit-enabled apps.

Scribble

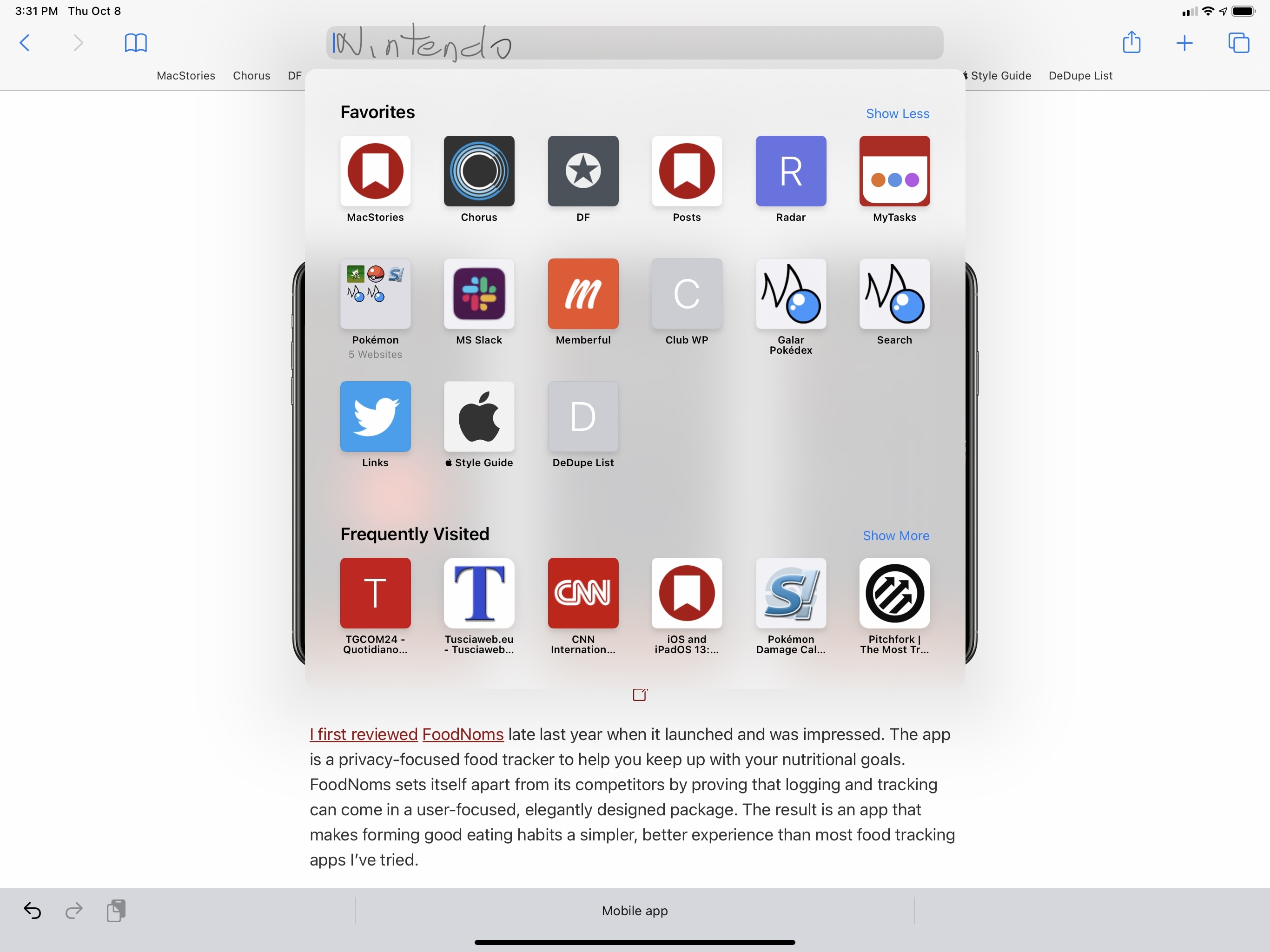

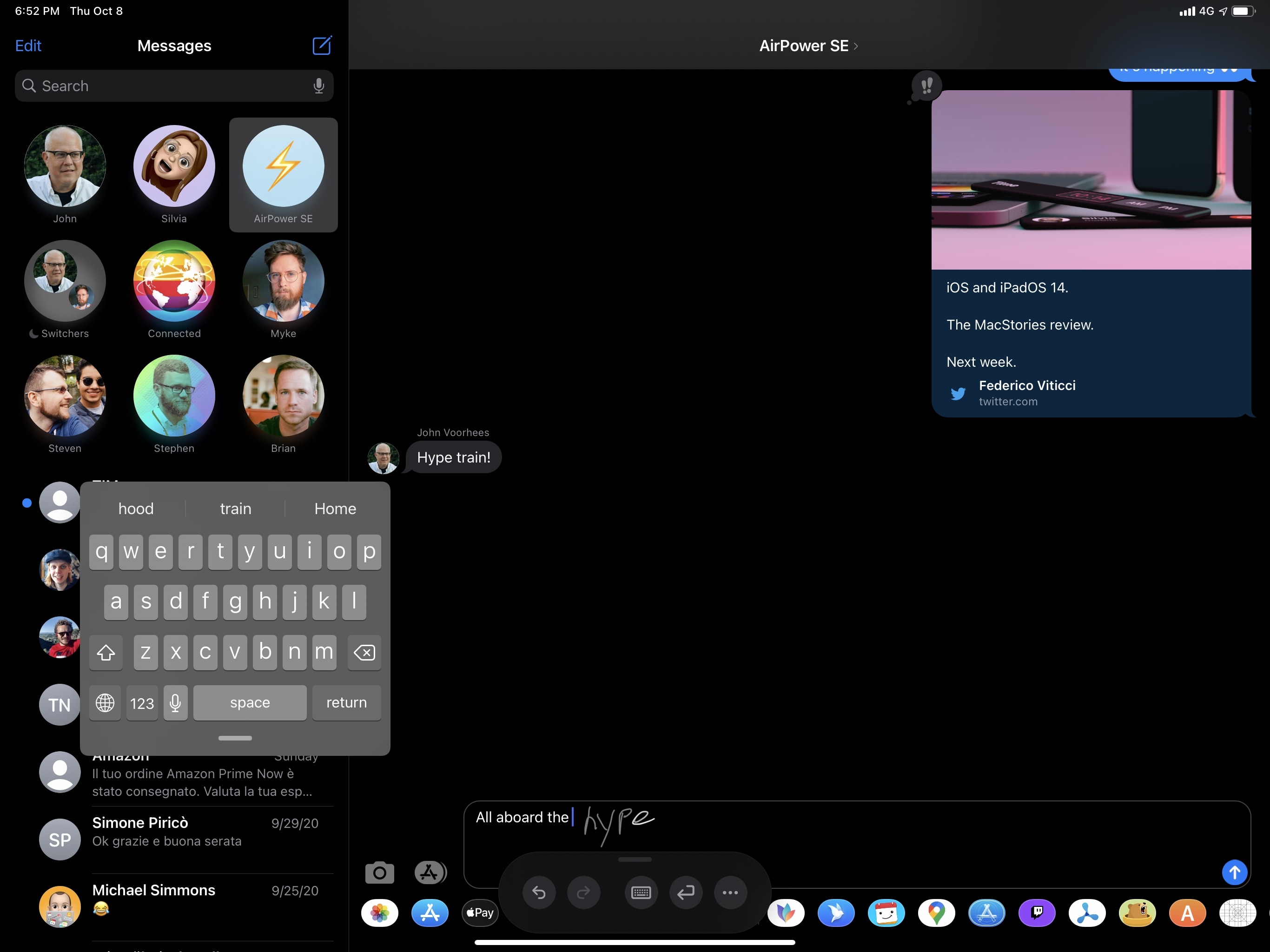

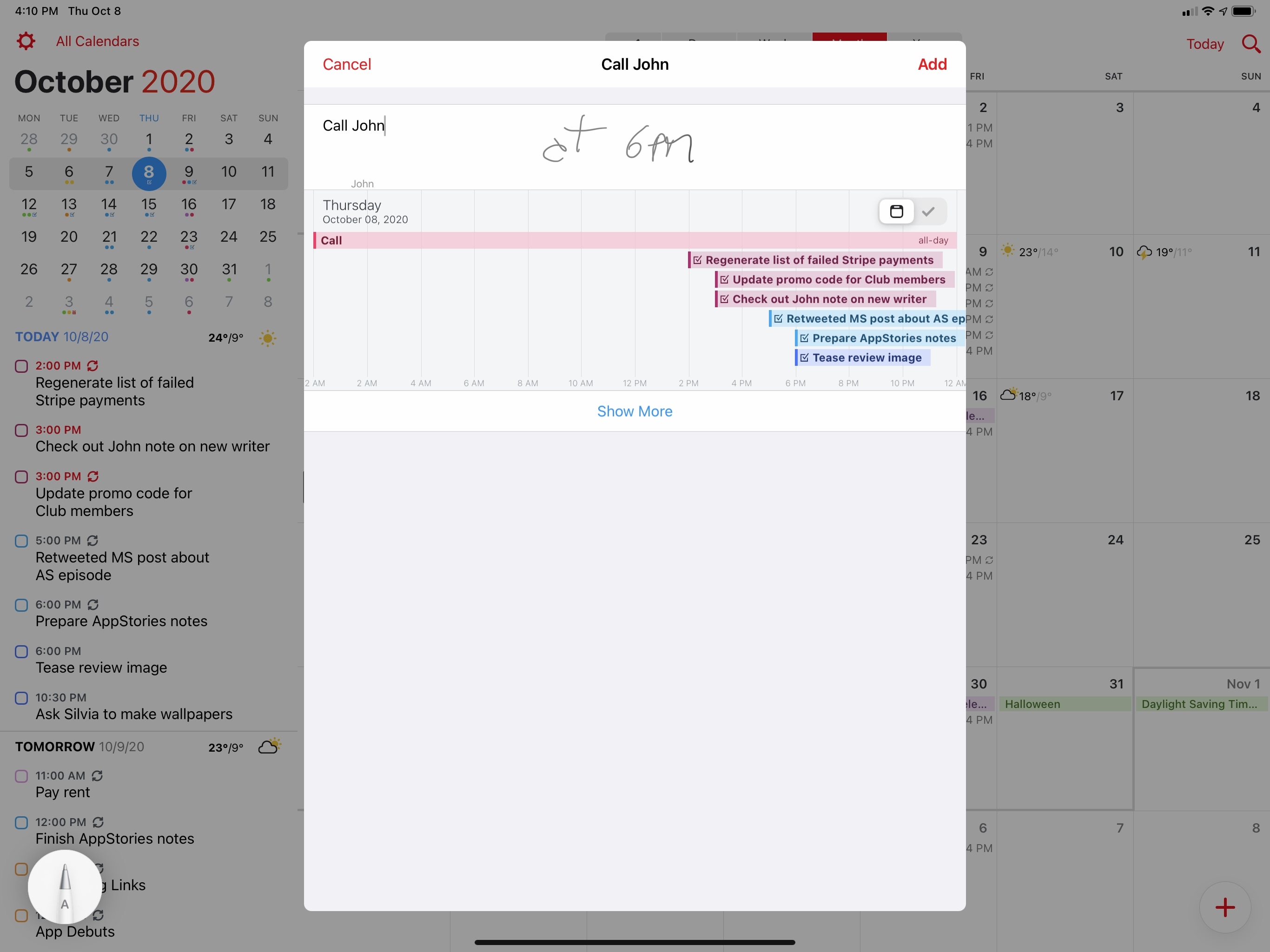

By far the marquee enhancement to Apple Pencil in iPadOS 14, Scribble is an all-new way of entering text in any text field, in any app, using handwriting recognition instead of a regular keyboard. Put simply: with Scribble, you can use the Apple Pencil to handwrite text anywhere – be it the Safari address bar or Messages’ compose box – and the system will automatically convert your handwriting to typed text. Scribble was designed for people who never want to put down the Pencil while they’re in the middle of their creative flow or a particular task; rather than placing the Pencil somewhere and temporarily typing on the software keyboard, Scribble lets them continue using the Pencil while iPadOS converts handwritten text to its typed counterpart.

Using Scribble in iPadOS 14. I apologize in advance for my terrible handwriting showcased in this review.

The idea behind Scribble is at the same time deceptively simple and technically intricate. Scribble isn’t a separate mode you need to manually activate: it’s naturally integrated system-wide with any text field; transcription from handwritten to typed text happens as you write, and there’s no particular invocation technique you need to be aware of to start using it. As Apple describes it, Scribble’s strokes are ephemeral, and they disappear as soon as they’re converted to typed text; it is, in a way, like “dictation with motion”.

Even as someone who won’t use this feature on a regular basis – I like my Magic Keyboard too much, and I effectively never use the Apple Pencil – I have to admit that Scribble’s disarming simplicity is astounding when you consider the technicalities involved. First of all, Scribble uses a private, on-device text recognition engine to convert handwritten text to its typed form; only English and Chinese are supported by Scribble right now.[21] Second, there is a new API for developers to support Scribble specifically with custom integrations; however, most apps are going to support Scribble out of the box, with no adjustments necessary, since the feature was designed to enable this new experience “for free” with behaviors that are automatically applied to apps – even if the developer didn’t explicitly add support for Scribble.

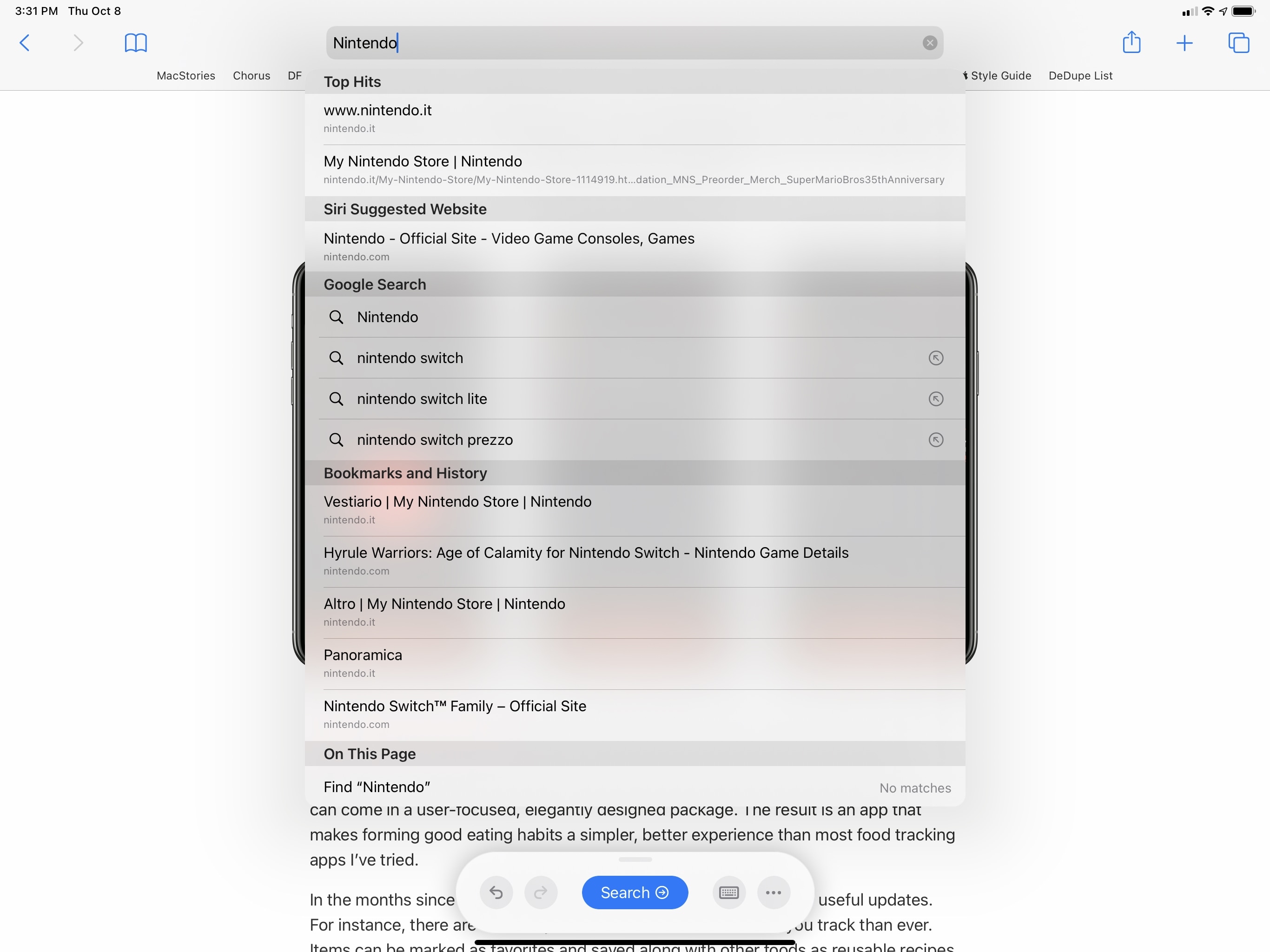

Search bars are a good example of how much iPadOS 14 does to enable Scribble integration in every app. When you write with the Pencil in a search field, two things happen: first, you’ll see a floating tools palette appear at the bottom of the screen with a big ‘Search’ button and additional controls to show the software keyboard and manage other Pencil settings. You can tap the Search button to confirm your search query; the floating palette is reminiscent of the one previously seen in Notes and Markup – you can also drag it around the screen and minimize it the same way.

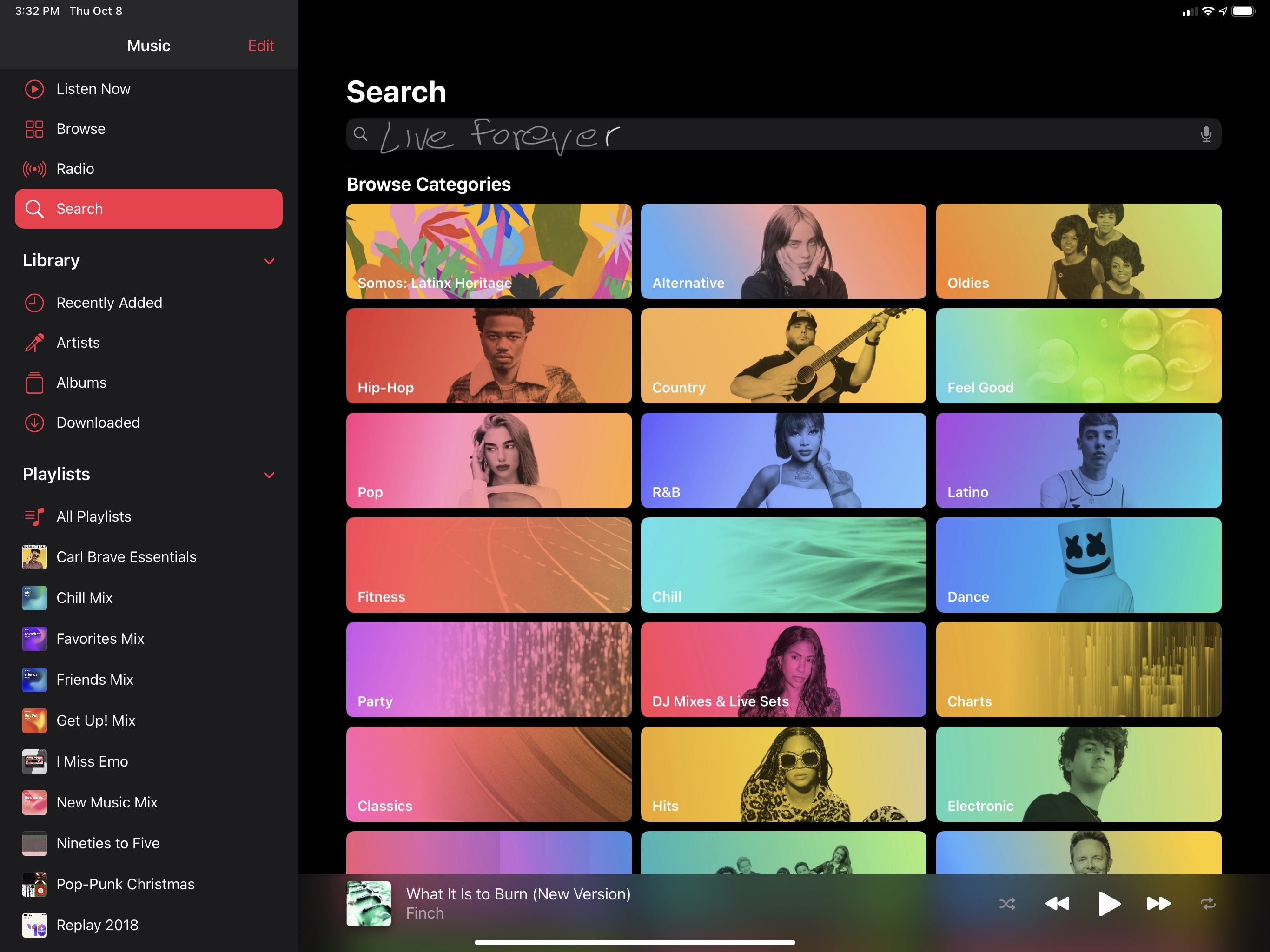

Additionally, if a search bar comes with placeholder text inside it, iPadOS 14 will automatically remove it so that you’re not scribbling on top of existing text, which could be confusing. This happens in Music (where the search field has the ‘Artists, Songs, Lyrics, and More’ placeholder text), but it also works out of the box in third-party apps like LookUp, even though its developers didn’t opt into any of the new Scribble APIs. This will work for search fields of most apps that use Apple’s native text field APIs[22] – even if they haven’t been updated for iPadOS 14 yet.

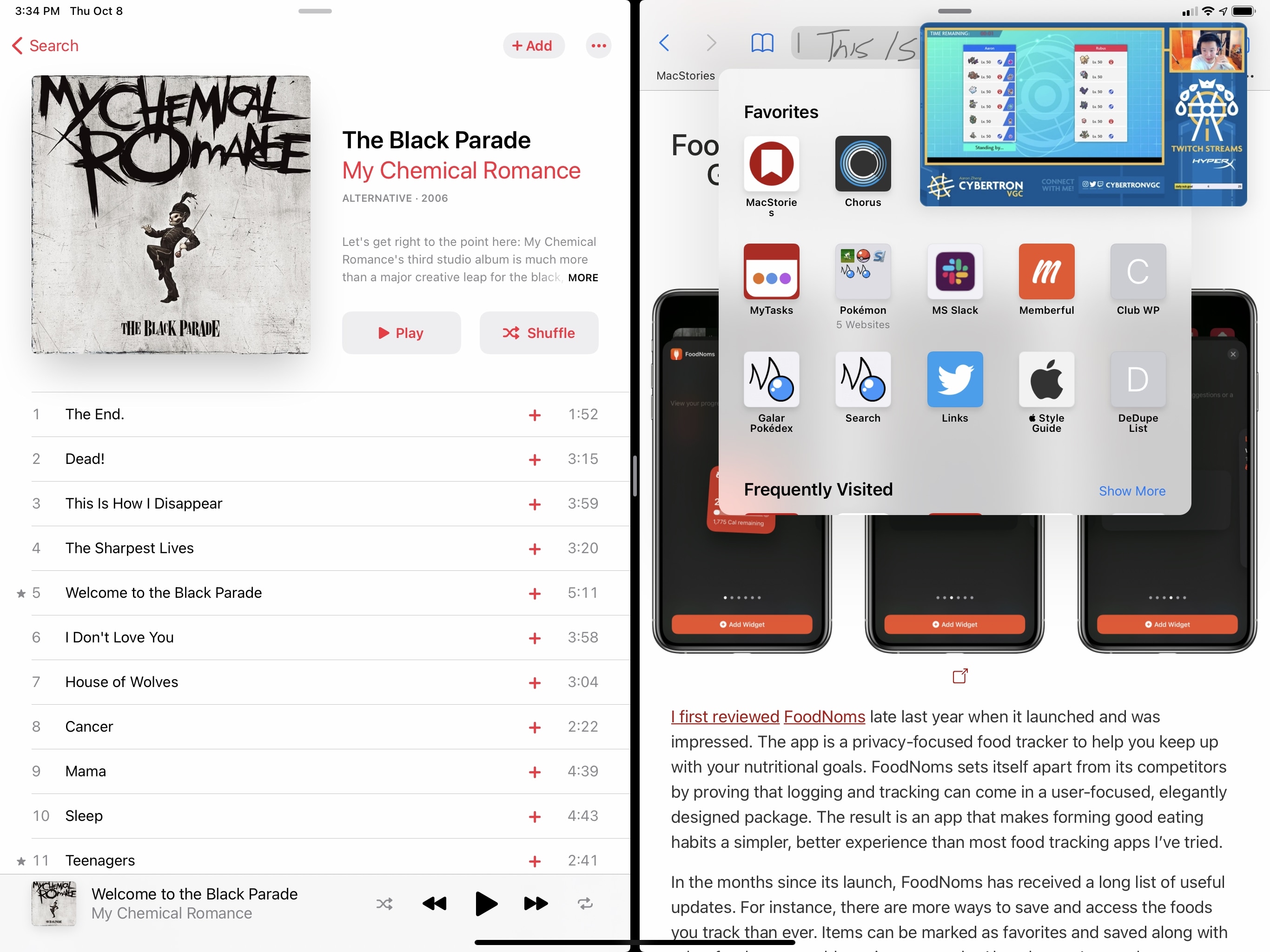

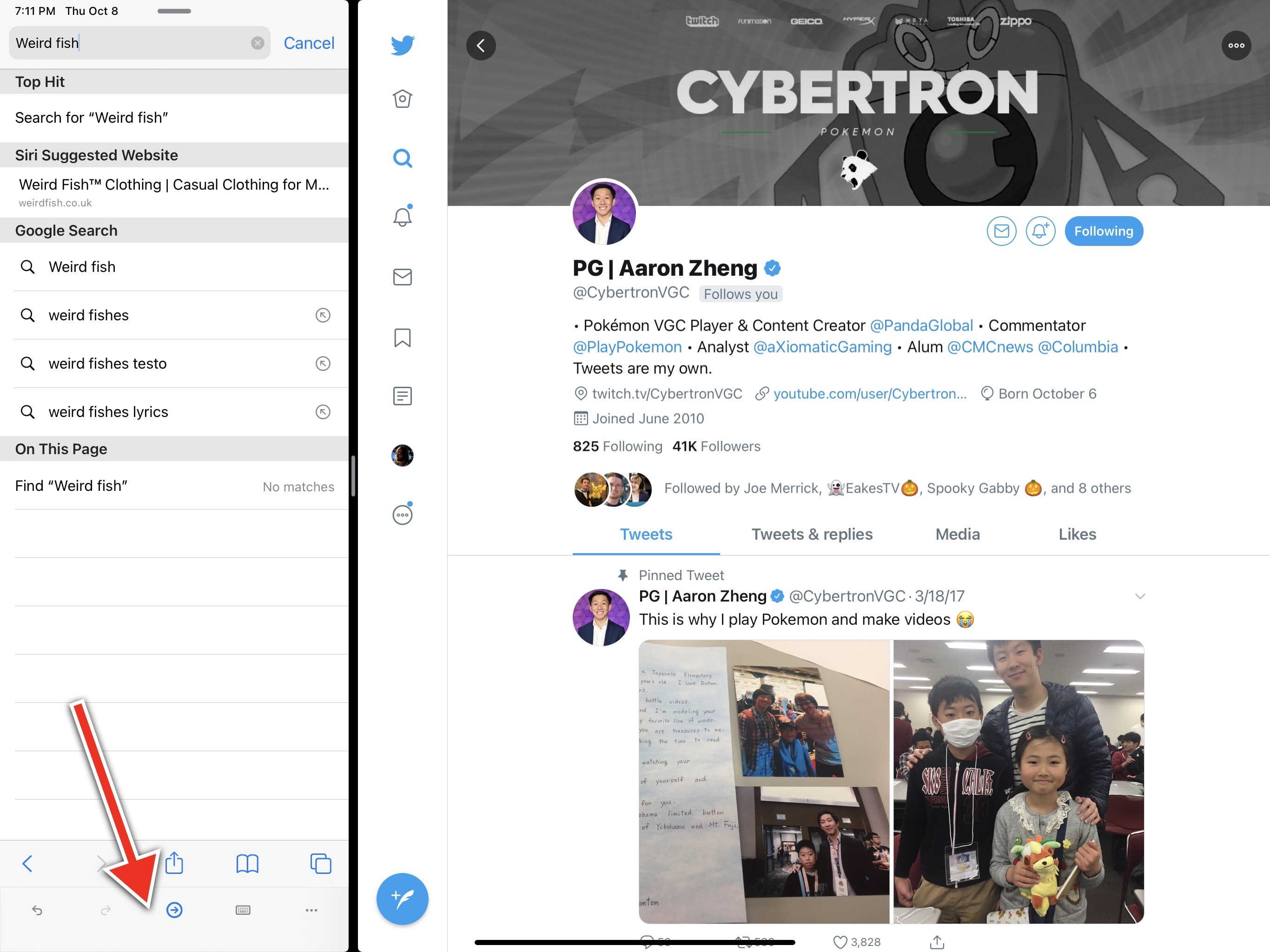

There are other behaviors Apple enabled for Scribble by default for all apps. For instance, you don’t need to “stay within the lines” when scribbling into a text field: your writing can extend outside its boundaries, and it’ll be fine – text will still be recognized and converted inside the text field. Scribble even works if you’re writing into a text field that can only be partially viewed onscreen. Consider the example below: the address bar of a compact Safari window was covered by a video playing in Picture in Picture, and I was still able to handwrite with Scribble in it.

There’s more. If you’re writing into a darkened text field, iPadOS 14 will give scribbled text a light font automatically, so it’s easier to see. And, of course, secure text input fields (i.e. password fields) are not supported by Scribble, so you won’t be able to handwrite the passwords you’ve memorized over the years.[23]

As I mentioned above, there are also instances of apps specifically adapting their UIs to Scribble in iPadOS 14. Messages, for example, detects when you’re using Scribble to handwrite a message and makes the compose field bigger so it’s easier to write into.

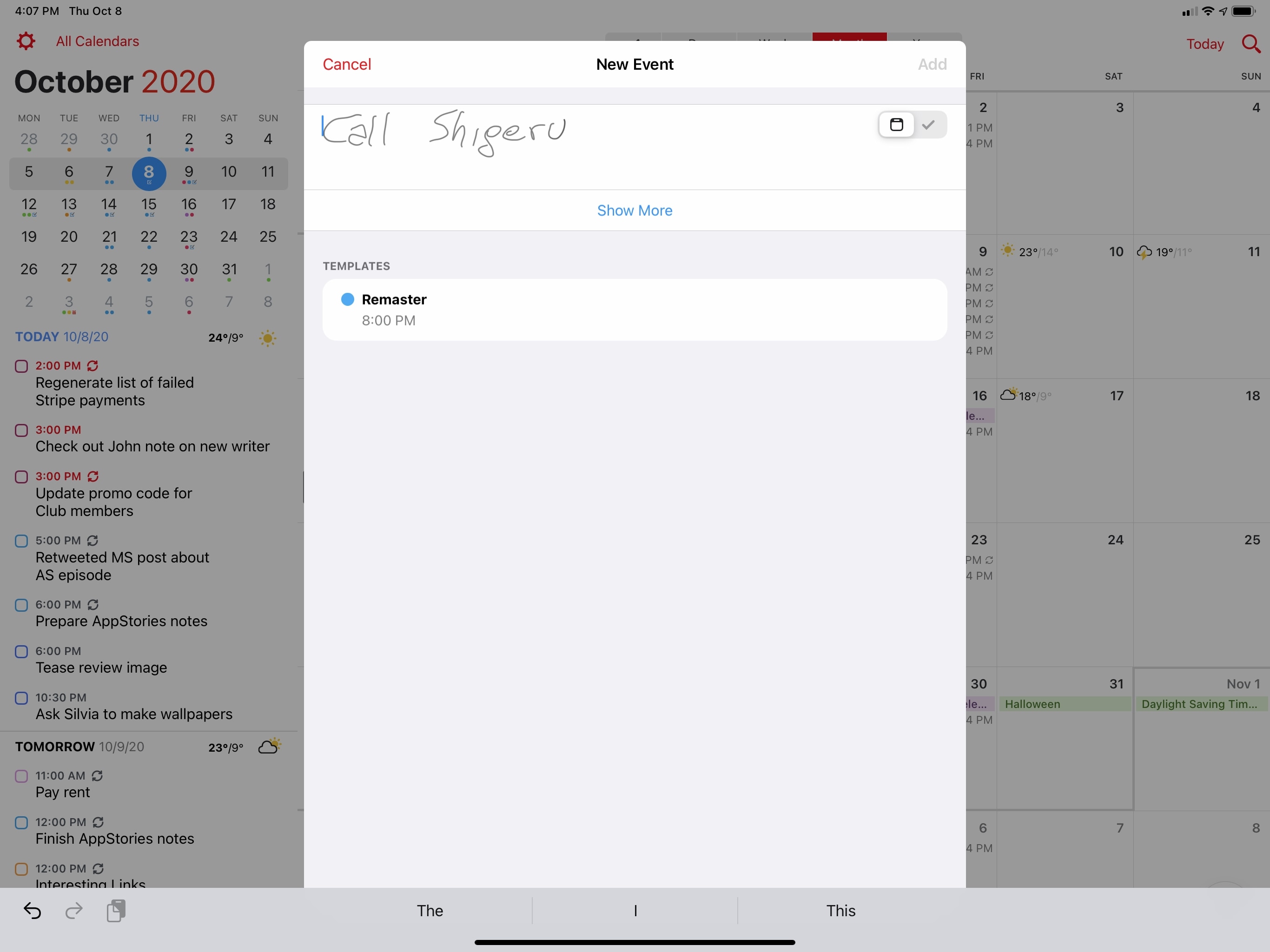

I expect this design choice to become the norm in iPad apps that want to integrate with Scribble. In the latest version of Fantastical, for instance, the compose field is automatically expanded if you tap the ‘+’ button to create a new event with the Pencil instead of your finger; the app detects a Pencil interaction, assumes you’ll want to scribble an event’s title, and automatically makes the compose field bigger for you.
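For developers curious how this kind of behavior might be wired up, here is a minimal sketch built on UIKit’s `UIScribbleInteraction`. It is not how Messages or Fantastical actually do it; the `ComposeFieldResizer` class, the `composeFieldHeight` helper, and the point values are all hypothetical names and numbers chosen for illustration, and the field is assumed to be laid out with Auto Layout.

```swift
import UIKit

/// Hypothetical helper: picks a compose-field height.
/// The point values are illustrative assumptions, not taken from any shipping app.
func composeFieldHeight(pencilInputExpected: Bool) -> CGFloat {
    pencilInputExpected ? 120 : 44
}

/// Hypothetical delegate that grows a text field while the user is scribbling.
/// Assumes the text field participates in Auto Layout.
@available(iOS 14.0, *)
final class ComposeFieldResizer: NSObject, UIScribbleInteractionDelegate {
    private let heightConstraint: NSLayoutConstraint

    init(textField: UITextField) {
        // Start tall if the system already expects Pencil input
        // (for example, the user just tapped a button with the Pencil).
        heightConstraint = textField.heightAnchor.constraint(
            equalToConstant: composeFieldHeight(
                pencilInputExpected: UIScribbleInteraction.isPencilInputExpected))
        super.init()
        heightConstraint.isActive = true
        // Attach a Scribble interaction so we get notified when writing starts and ends.
        textField.addInteraction(UIScribbleInteraction(delegate: self))
    }

    func scribbleInteractionWillBeginWriting(_ interaction: UIScribbleInteraction) {
        // Make room for handwriting.
        heightConstraint.constant = composeFieldHeight(pencilInputExpected: true)
    }

    func scribbleInteractionDidFinishWriting(_ interaction: UIScribbleInteraction) {
        // Shrink back once the handwriting has been converted.
        heightConstraint.constant = composeFieldHeight(pencilInputExpected: false)
    }
}
```

In this sketch the caller has to keep the `ComposeFieldResizer` instance alive (for example, as a property on the view controller), since the interaction is not expected to retain its delegate.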

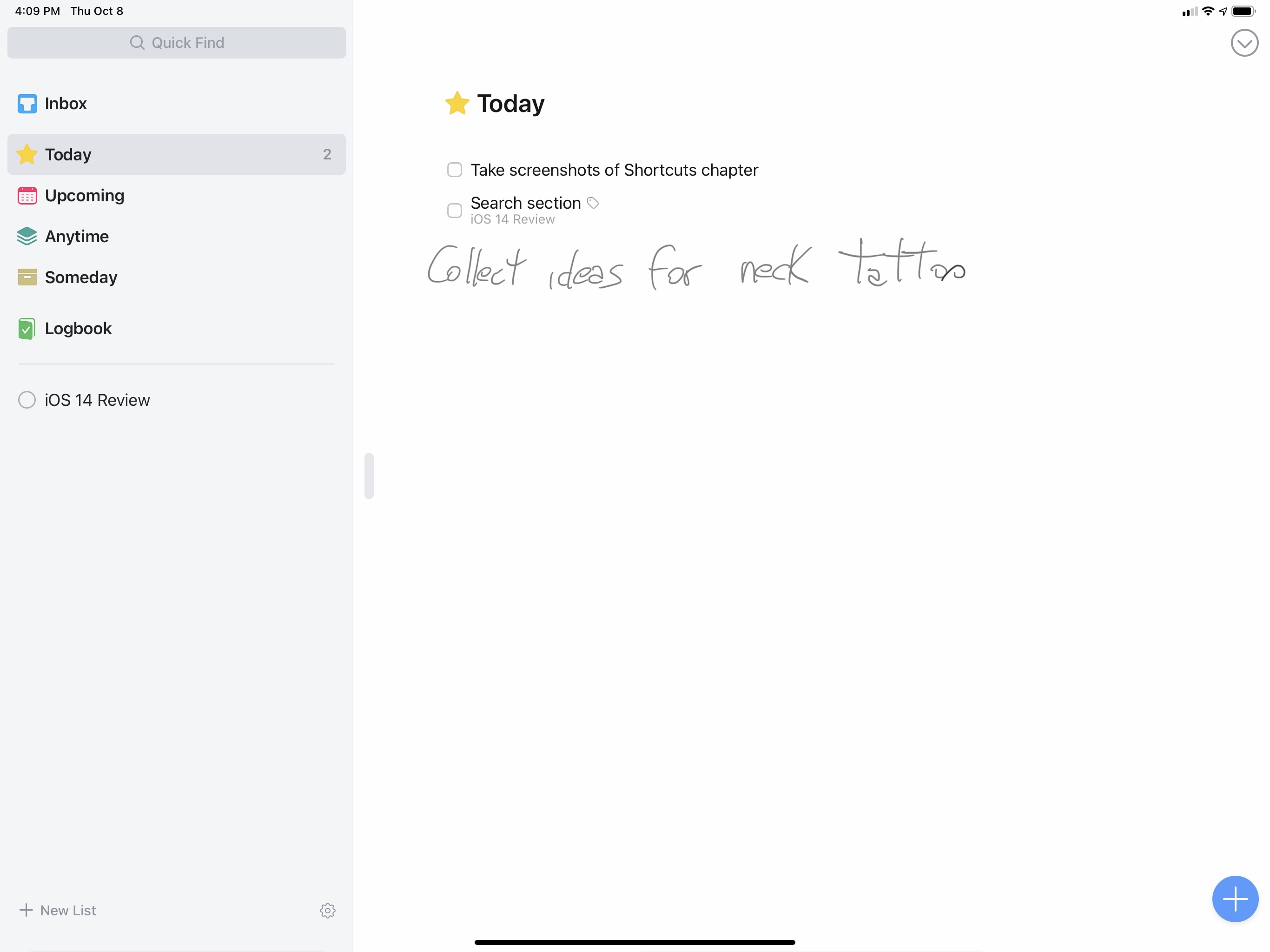

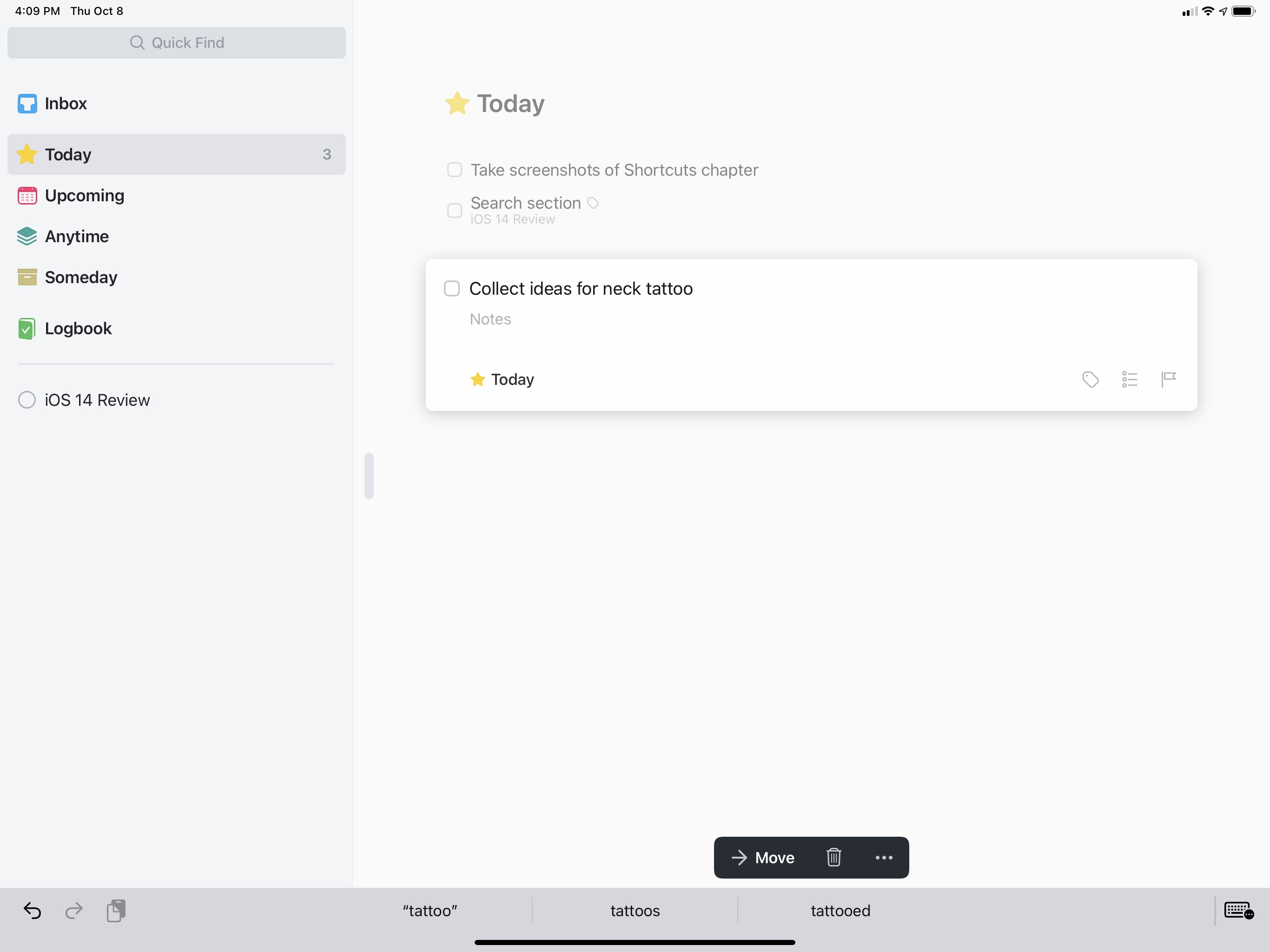

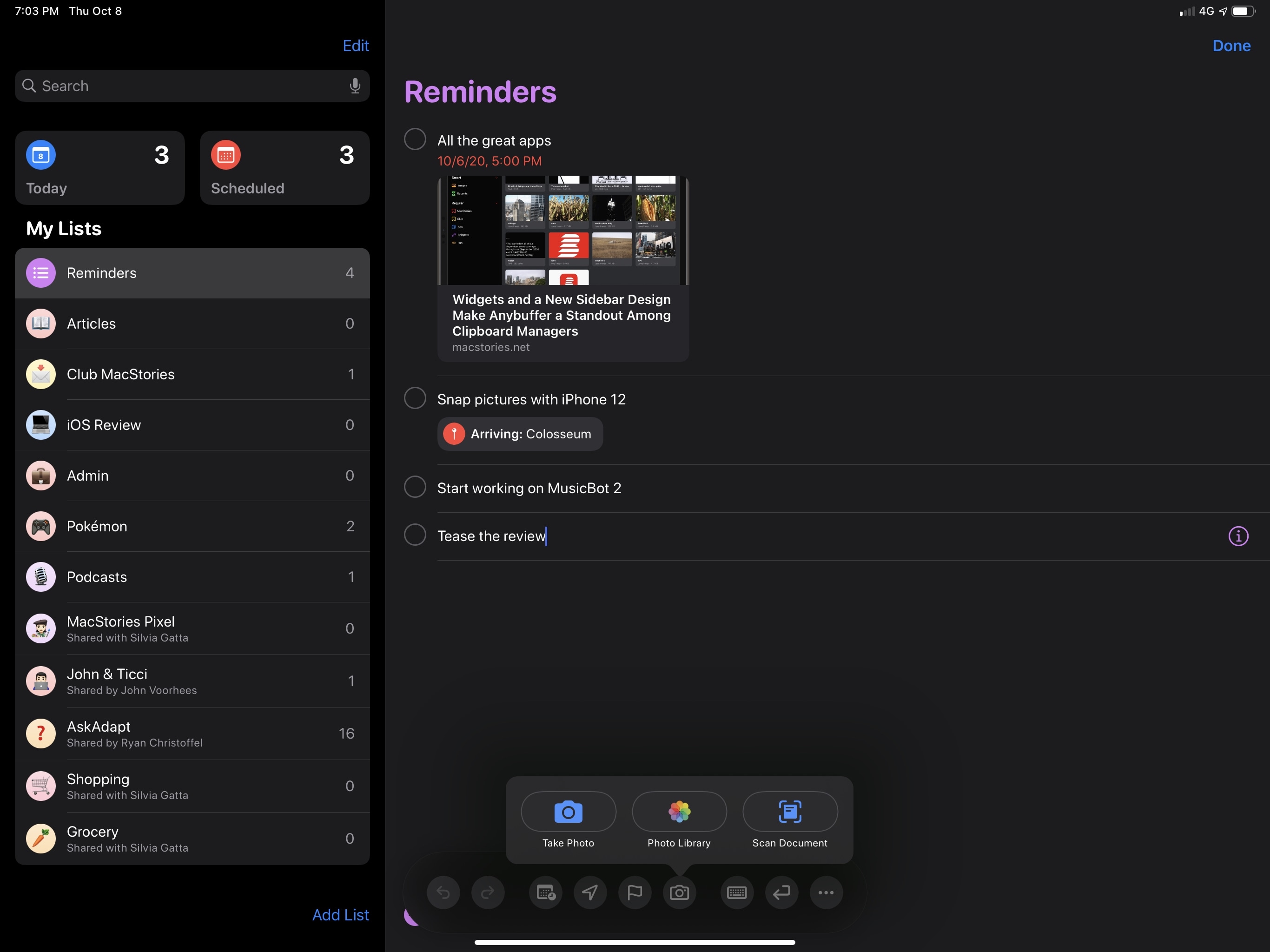

I’ve also seen examples of developers implementing Scribble as a “universal” input method that intelligently adapts to their apps’ UI. In Things for iPadOS 14, you can use the Pencil to select sections in the app’s sidebar; start writing in a blank space on any page, however, and the app will recognize Scribble input and create a new task for you based on converted text.

Fantastical has also followed a similar approach with the ability to create new events (with natural language support) with Scribble:

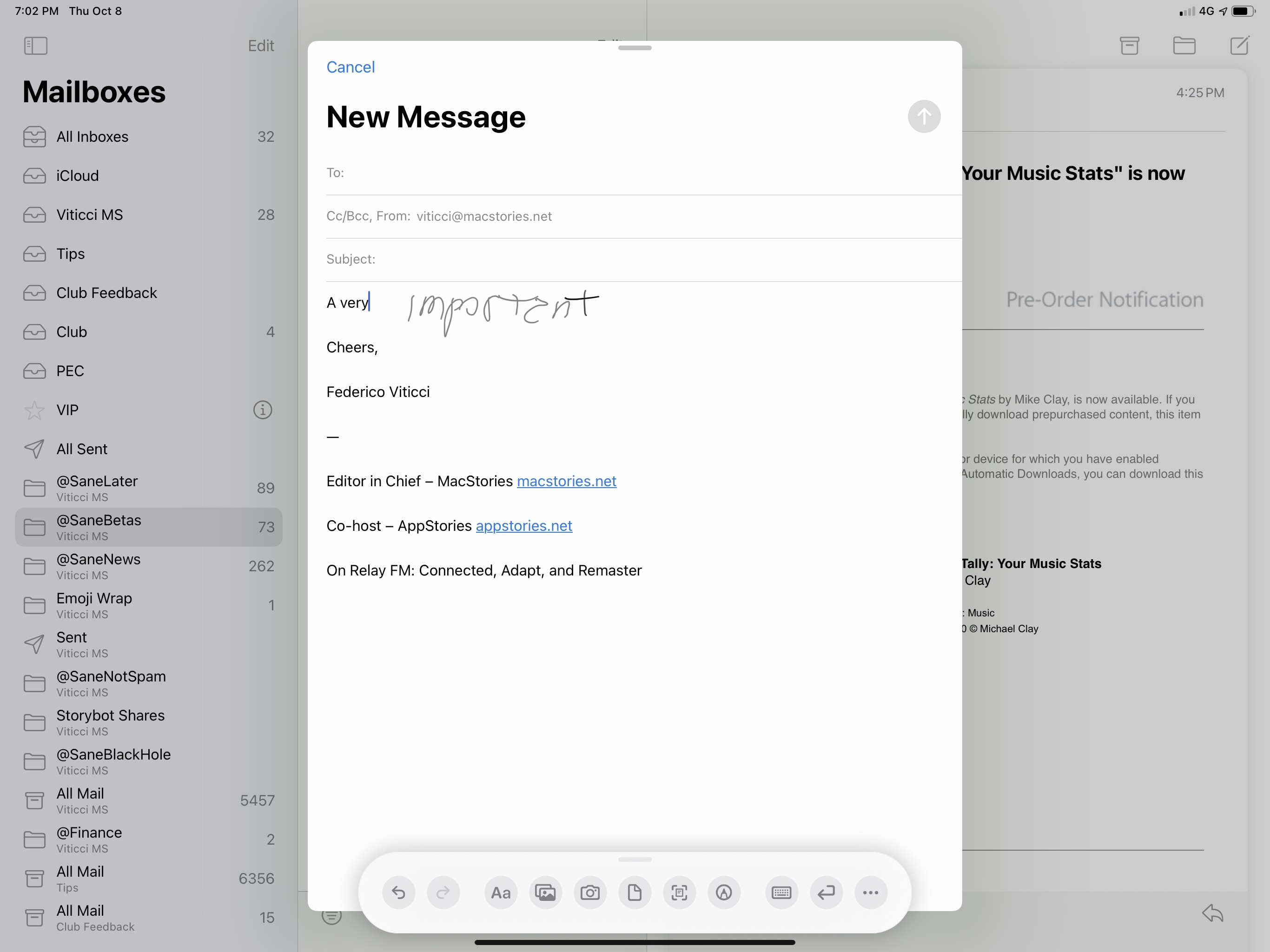

There are some fascinating implementations of Scribble’s floating palette in iPadOS 14, too. If you’re writing in Mail, the palette will give you options for the font picker, image and document insertion, and more – essentially replicating the buttons you’d get in the QuickType bar when using the keyboard. Based on the same idea, in Reminders the palette features options for time and location settings.

Interestingly enough, when the floating palette is shown in a compact size class (e.g. the app is in Slide Over), it takes on an alternate compact form at the bottom of the window, sort of like an additional toolbar.

In addition to writing into text fields, you can also manipulate text inside them with new Scribble-specific Apple Pencil gestures that let you delete words, make selections, insert spaces, and join words. These gestures (and Scribble itself) are explained to you the first time you connect an Apple Pencil to an iPad running iPadOS 14, but you can also go through the onboarding process and associated demo under Settings ⇾ Apple Pencil ⇾ Try Scribble. The gestures are:

- Delete: You can remove words in editable text fields by scratching them out, either vertically or horizontally.

- Select: Circle or draw a line over text to select it, which will make the default copy and paste popup menu appear.

- Insert: Tap and hold with the Pencil anywhere in a text field to make room to write additional text.

- Join: You can join characters or separate words by drawing a vertical line before or after any character.

In a way, these gestures remind me of last year’s gestures for text manipulation: they’re somewhat hidden, and only advanced users will likely master them. I really like the inclusion of an interactive demo for Scribble and its gestures in Settings though, which is something I’d like to see the company do for more iPad functionalities.

As I mentioned above, I’m not the target user for Scribble: I don’t think I’ll ever find myself in the position where I want to use the Pencil in lieu of a keyboard to type text – and that’s totally fine. We’ve discussed this many times before: the beauty of iPad lies in its modularity – in how a single device paired with different accessories can yield a variety of experiences for a broad range of users.

If you’re a heavy Apple Pencil user who wants to write with the Pencil as much as possible, Scribble should be downright amazing for you: in my tests with the English language and the world’s worst penmanship, performance with handwriting recognition was solid, and the system-wide integration for all apps and text fields (even before iPadOS 14’s launch) was remarkable. I can only imagine how big a deal Scribble is going to be for students, artists, designers, and other iPad users who depend on the Apple Pencil for their work and use it for several hours a day. For those users, I feel like the impact of Scribble will be comparable to the system-wide pointer Apple added in iPadOS 13.4. Plus, I think it’s just cool that handwritten text can magically morph into typed text thanks to on-device intelligence.

I’m not going to use Scribble much personally, but it’s a groundbreaking addition for Apple Pencil users, and I’m fascinated by it.

Handwritten Notes

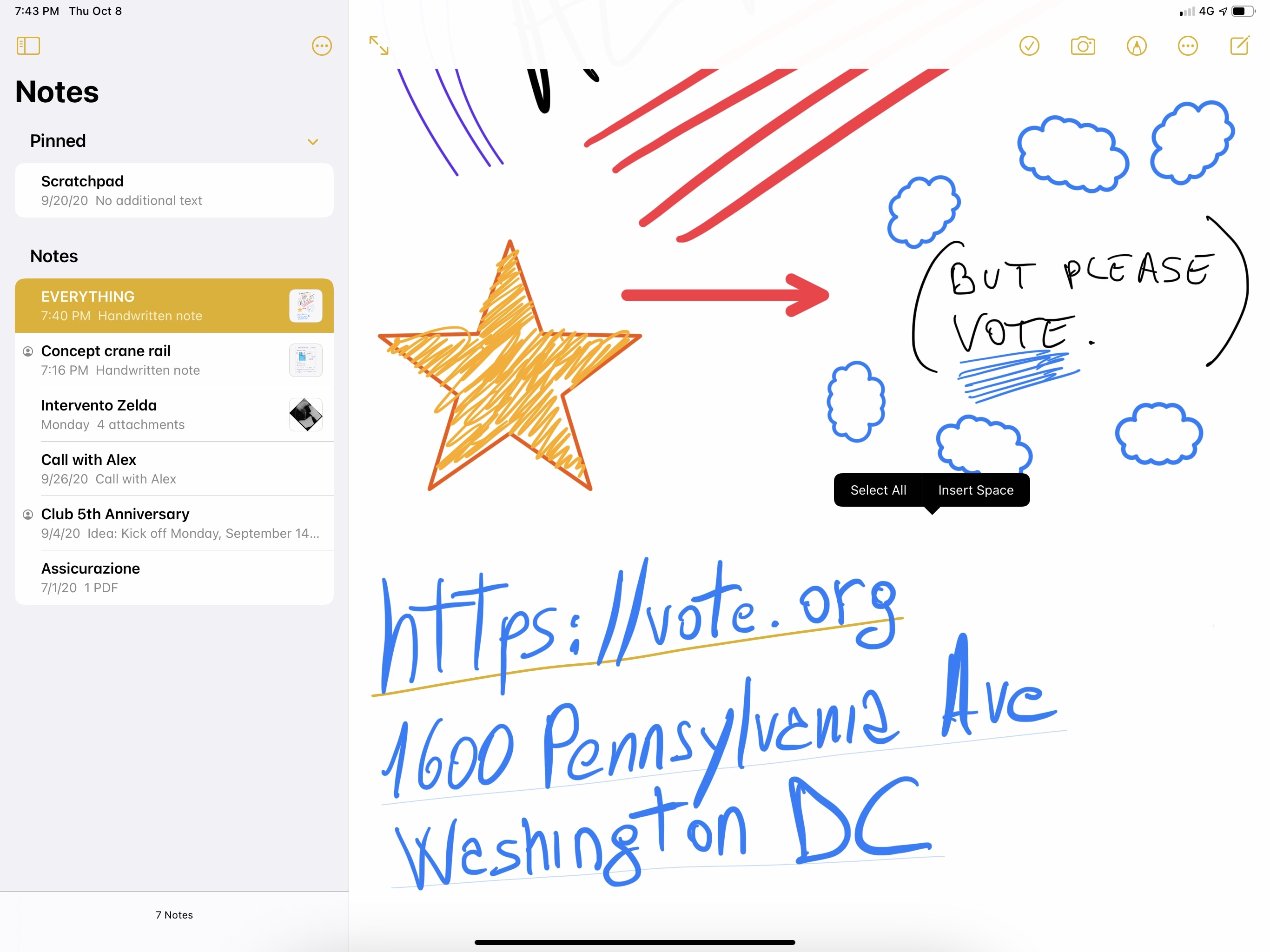

The other noteworthy addition for Apple Pencil in iPadOS 14 is deeper integration with Notes, which encompasses four major features: the ability to convert handwriting to typed text; Smart Selection for handwritten text; automatic shape recognition; and support for data detectors inside handwritten text. If you’re an Apple Pencil note-taker and rely on Apple’s Notes app, you’ll want to pay attention to what’s going on here.

iPadOS 14 builds upon the handwriting recognition originally introduced with iOS 11 in 2017: at the time, the system could only recognize text in the background, allowing you to search for specific words or passages via Notes’ built-in search feature; in iPadOS 14, you can actually select handwritten text and copy it as typed text, ready to be pasted elsewhere. As before, handwriting recognition is still limited to English and Chinese.

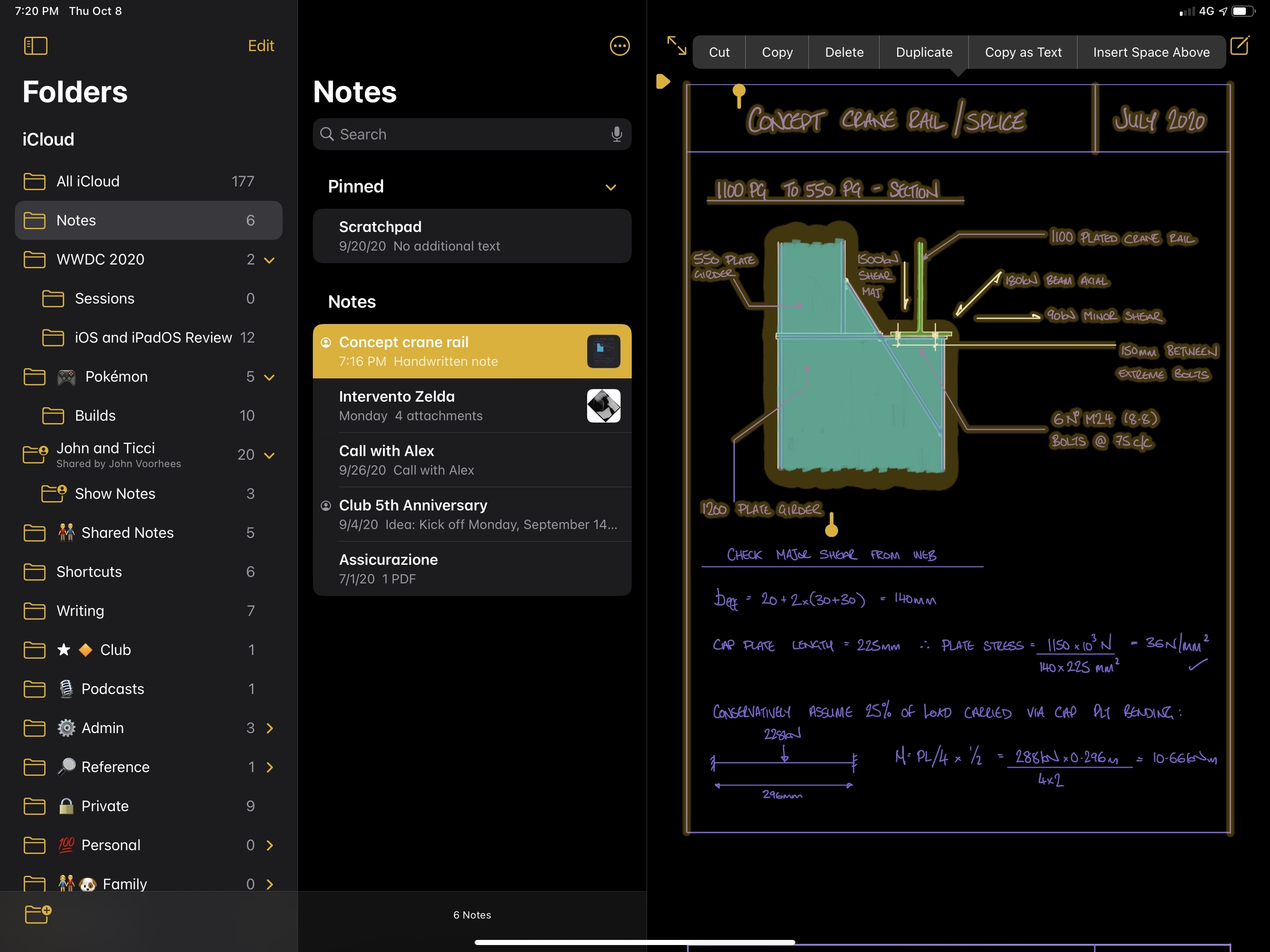

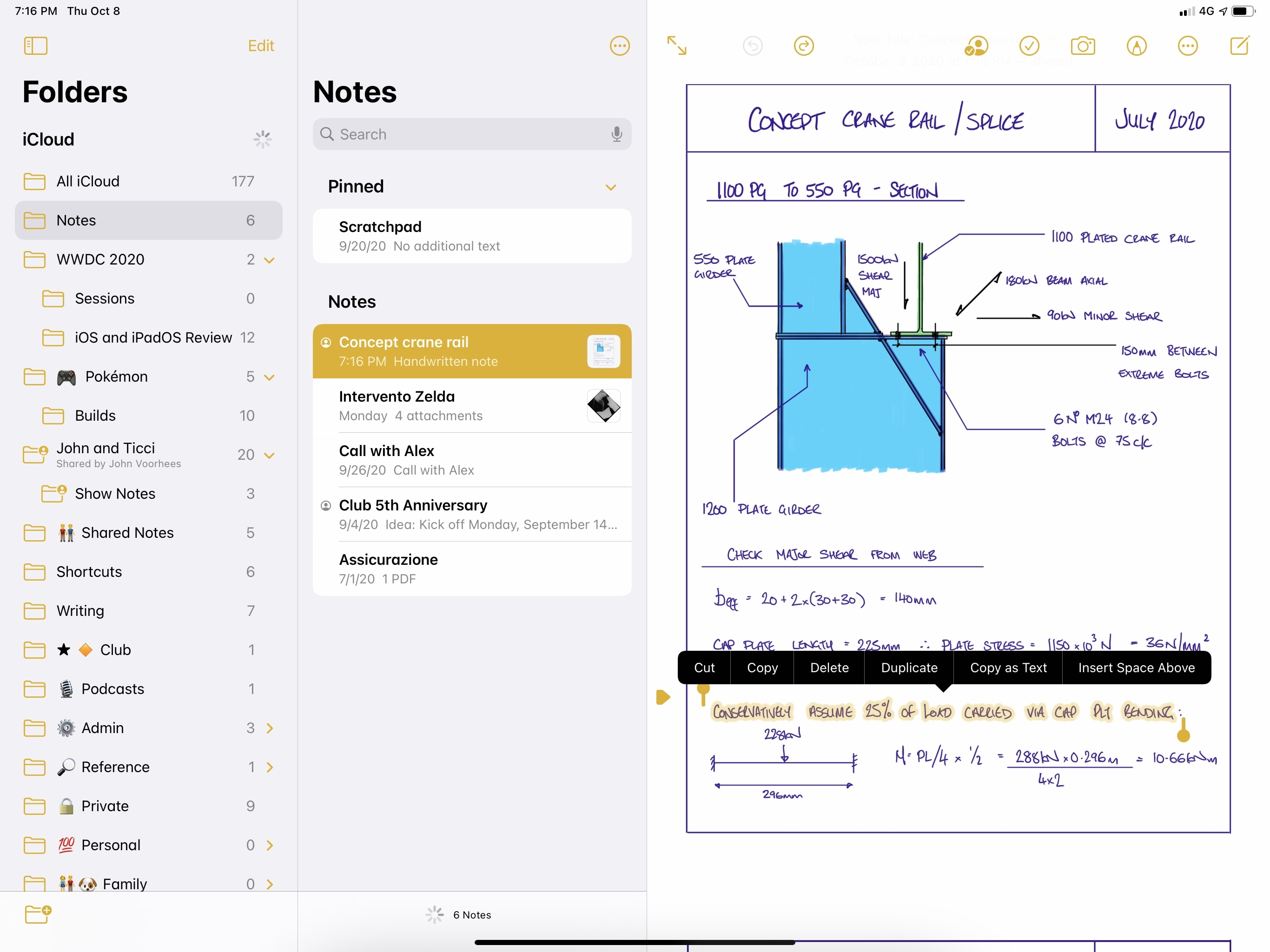

To copy handwritten text as typed text, you can select it in Notes and hit the new ‘Copy as Text’ option from the copy and paste menu. Unfortunately, there is no way to convert handwritten text to typed text inline within a note, as we’ve seen in other note-taking apps on iPad; you can only copy the typed equivalent to the clipboard and paste it somewhere else.

You can now select handwriting in Notes and copy it as text. Obviously, this beautifully handwritten note isn’t mine.

You can paste copied text anywhere – in this case, Search will find the matching note inside the Notes app.

To select handwritten text, you can brush over it with your finger or Apple Pencil. Selecting handwritten text is, in my opinion, one of iPadOS 14’s most delightful touches: this feature is dubbed Smart Selection, and it’s a beautiful selection style that progressively surrounds your handwriting as you swipe over letters and words; it’s categorized as “smart” in that it uses on-device machine learning to distinguish text from drawings and doodles, automatically excluding non-text elements from the converted text you get at the end of the process. The intelligence of Smart Selection paired with the intrinsically analog feeling of handwriting is a unique combo that stands out as one of iPadOS 14’s coolest features.

Besides its looks, a lot of fascinating tech went into Smart Selection. For instance: Smart Selection supports acceleration, which means you can select large swaths of handwriting by swiping across text quickly, or you can decelerate to gain greater precision and select individual characters. If you’ve selected text too quickly, you can slowly swipe back to deselect entire words or specific letters, and the result is a beautiful animation that is both contextual and pleasing to look at.

Additionally, Smart Selection supports non-contiguous text selections, drag and drop, and the ability to change colors for selected text. The latter is pretty self-explanatory: select some text, press the drawing button in Notes’ top toolbar again, and choose a new color for the selected text from the system’s color picker. Drag and drop is supported both for in-app rearrangement of text (so you can, say, pick up a paragraph and drop it somewhere else in the same note) and for dropping handwriting into other apps. In my tests, handwriting selected in Notes was always imported by other apps as an image; it’d be nice if apps could also opt in to receive handwriting from Notes as typed text.

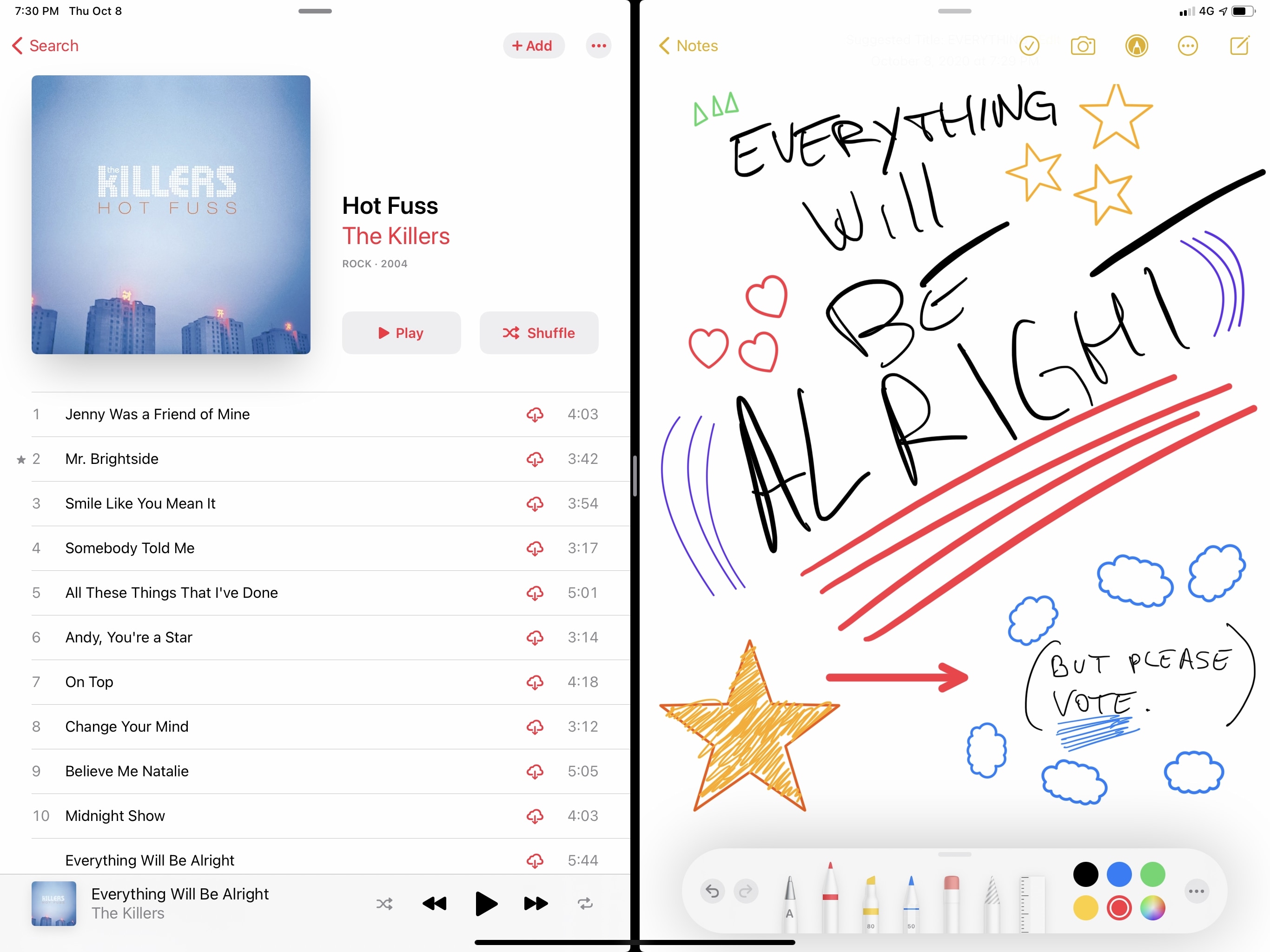

Selecting non-adjacent blocks of handwritten text ties into another addition to the Apple Pencil experience in Notes: built-in shape recognition. In both iOS and iPadOS 14, whether you’re in Notes, screenshots, or Markup, you can draw a shape, pause briefly while still holding down with your finger or Apple Pencil, and watch as your freehand shape is converted to a geometrically perfect version that respects the size and angle of your original shape. You’ve probably seen similar implementations of this before in third-party note-taking apps for iPad; Apple’s version works system-wide, supports both touch and Pencil input, and uses on-device processing to recognize 16 different types of shapes:

- Line

- Curve

- Square

- Rectangle

- Circle

- Oval

- Heart

- Triangle

- Star

- Cloud

- Hexagon

- Thought bubble

- Outlined arrow

- Continuous line with 90‑degree turns

- Line with arrow endpoint

- Curve with arrow endpoint

I was somewhat skeptical of shape recognition when I first heard about it at WWDC, but Apple delivered on their promise with this feature: as someone who often needs to annotate webpages or screenshots, I found myself enjoying the ability to draw perfect lines, arcs, and circles with my finger or Pencil. Even more complex shapes such as stars, bubbles, and hearts are recognized consistently and quickly.

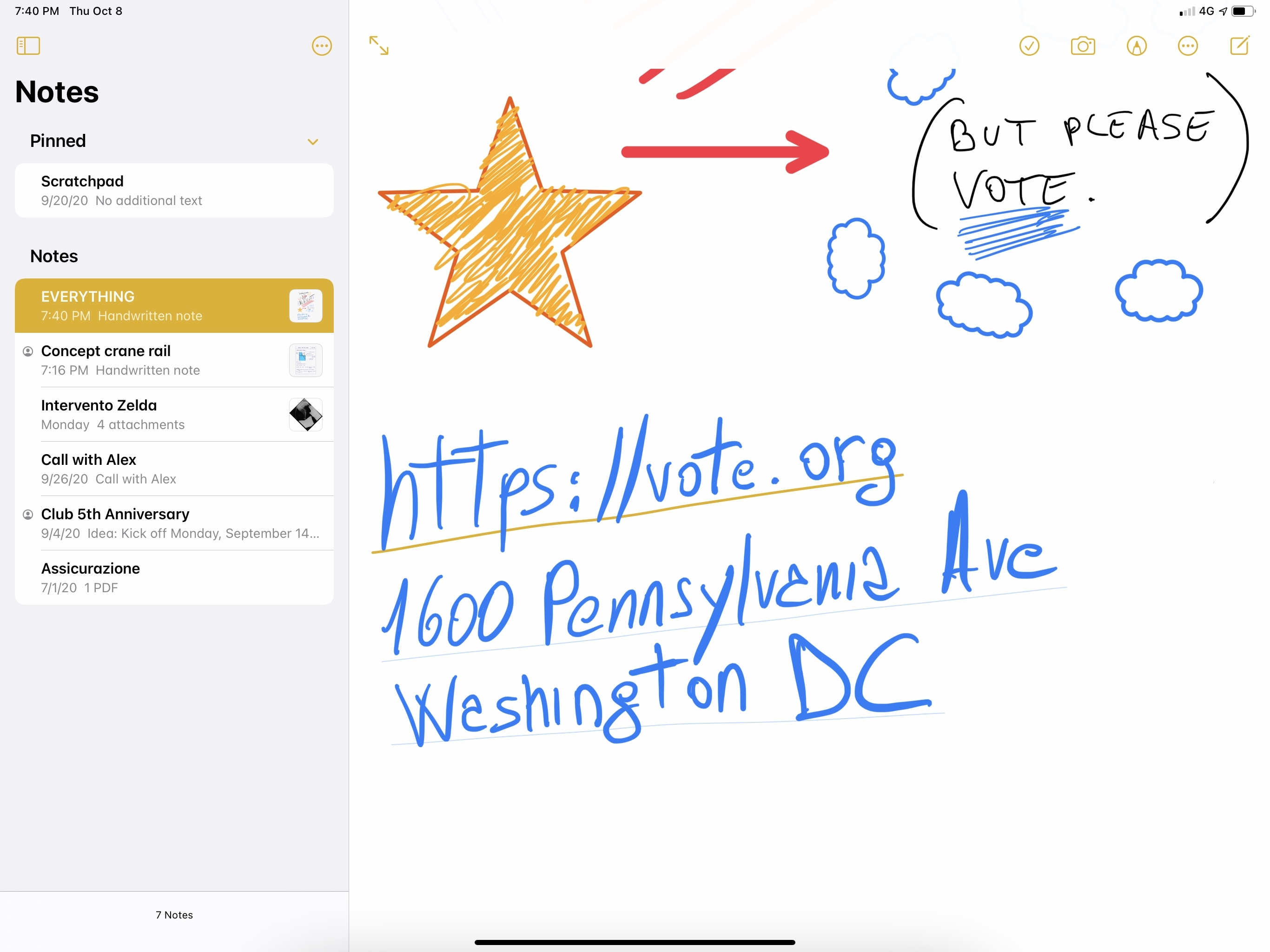

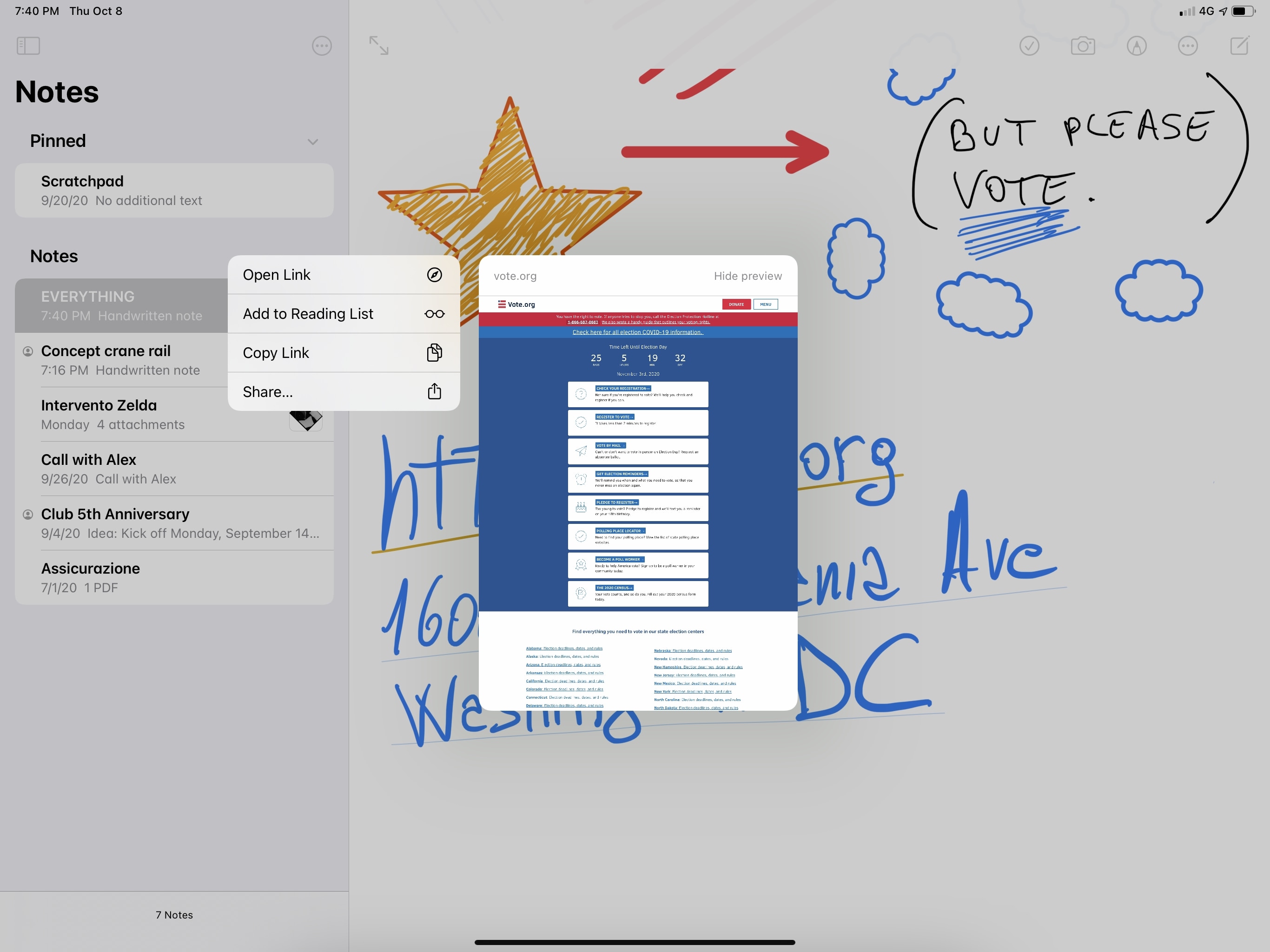

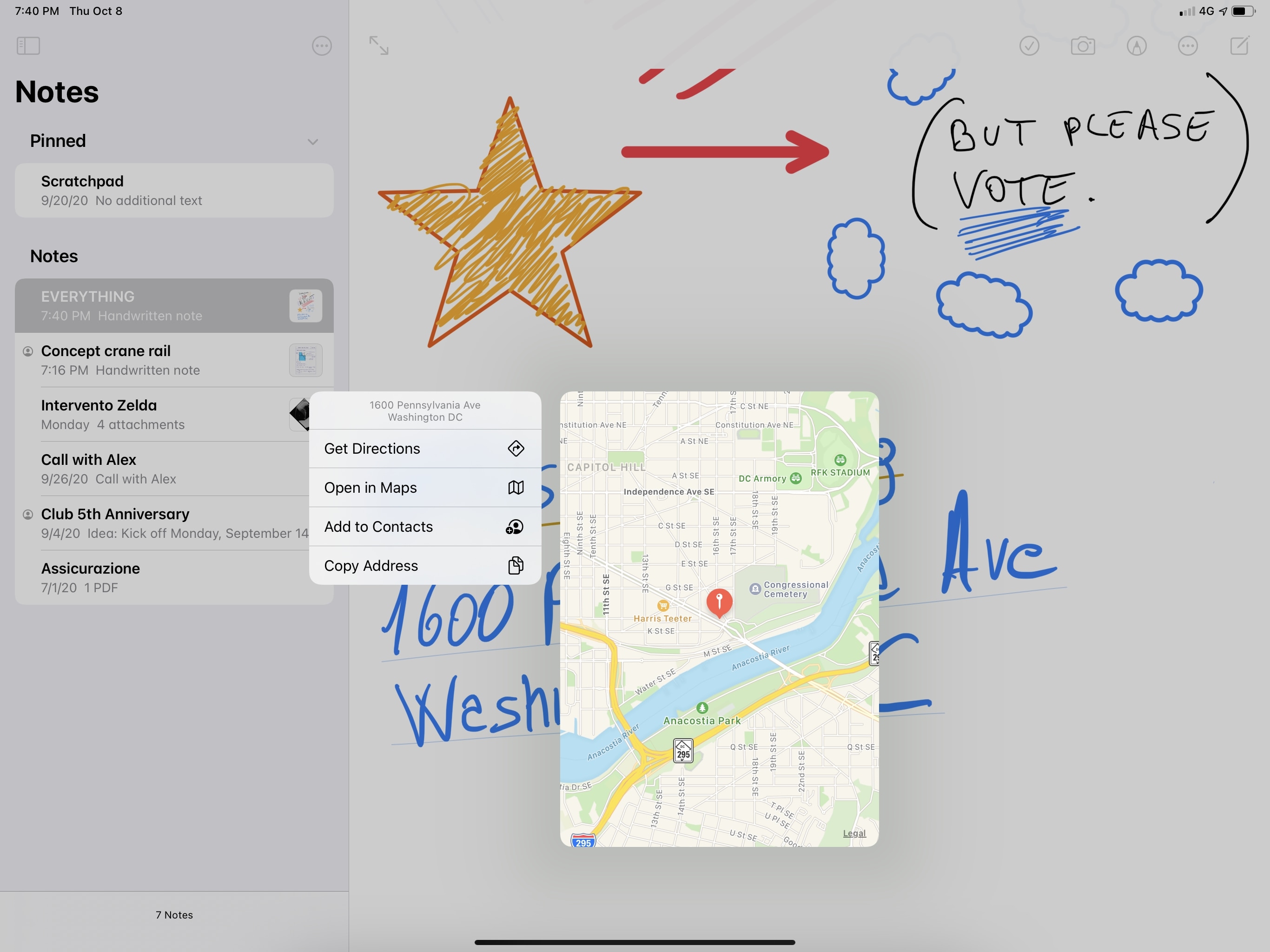

There’s still more interesting tech to point out. Handwritten text now supports data detectors: elements such as links, addresses, phone numbers, names, and email addresses will be recognized inside your handwriting and underlined to suggest they can be interacted with. Tap on them and you will kick off the associated action, such as making a phone call, viewing a location in Maps, or opening a link in Safari. You can also long-press them to preview the item or available options inline.

Once again, I don’t see myself ever taking advantage of this functionality since I don’t use my Apple Pencil much (let alone use it to jot down URLs or phone numbers), but it’s wild to consider how iOS and iPadOS can now identify entities such as URLs and addresses inside handwritten text and summon specific view controllers (such as Maps and Safari previews) when they’re long-pressed. What’s more, all of this is done privately and securely with on-device processing, in a couple of seconds, without requiring an Internet connection.

Lastly, there’s a new way of adding or deleting space between sentences or paragraphs in your handwritten notes: once you’ve selected handwritten text, you can drag a small triangle that appears on the left with your finger or Apple Pencil. Doing this will let you add or delete as much space as you want, bringing text closer together or spreading it apart to create a blank section in the middle. You can also use the new ‘Insert Space Above’ option in the copy and paste menu to add some white space above the current selection, which will make the horizontal bar and small triangle icon appear.

I’m not an analog note-taker, so I don’t think I’m qualified enough to comment on the overall quality of Apple’s text recognition engine. In my few tests this summer, English transcriptions were mostly okay, with the occasional hilarious mistake. I will say, however, that I’m impressed by the technological aspect of Apple’s new Pencil integration with Notes, which I find the ideal app to support all these new features since it’s a powerful, free, built-in solution for taking notes, searching them, and accessing them via Siri, Shortcuts, and even the Lock Screen. Shape recognition has worked wonderfully for me; Smart Selection’s visual effects are beautiful and fun to play around with; handwritten data detectors are the classic mix of technology and liberal arts that only Apple can pull off with such elegance. Notes may not be as powerful or customizable as GoodNotes or Notability, but iPadOS 14’s updates have raised its baseline considerably.

PencilKit

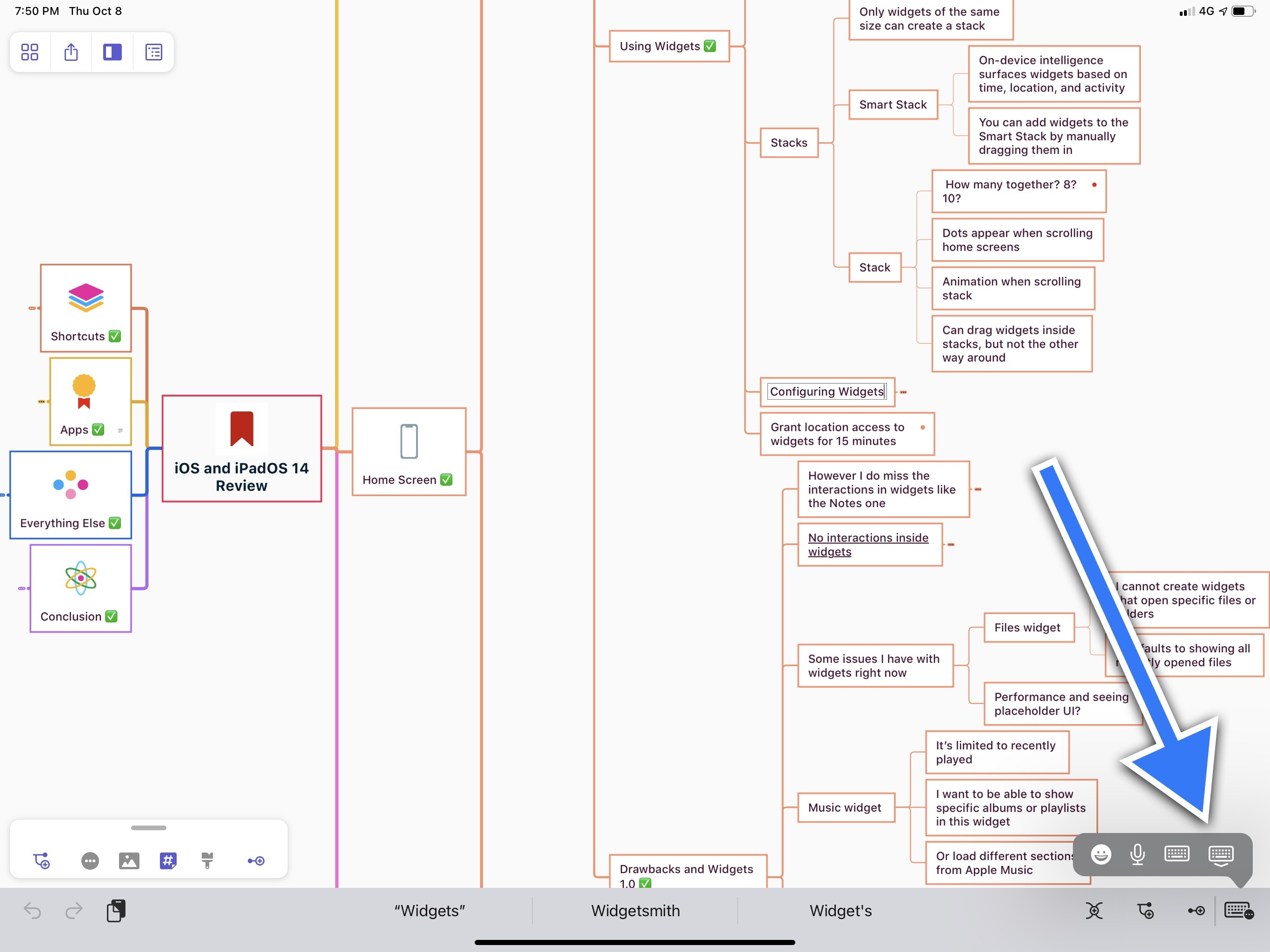

I’d be remiss not to mention some of the more technical and esoteric improvements to PencilKit, the Apple Pencil framework for developers, in iPadOS 14.

First, there’s a new option in Settings ⇾ Apple Pencil ⇾ Only Draw with Apple Pencil; once enabled, only the Apple Pencil will be used for drawing and touch input from your fingers will be exclusively employed for scrolling and interacting with apps. This option is great to have if you’ve ever been annoyed by the iPad picking up both the Apple Pencil and your finger as inputs when drawing in a note.

This setting applies globally to all PencilKit views in apps: once you enable it in Settings, it’ll work for all apps that use the PencilKit framework. Furthermore, developers can query the state of this setting (to see if the user has enabled it) and make appropriate suggestions in their apps. You can override this option with the ‘Draw with Finger’ toggle available in the tools palette of Markup and PencilKit views.
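As a rough illustration of what querying that setting could look like, here is a small sketch that reads `UIPencilInteraction.prefersPencilOnlyDrawing` and applies it to a `PKCanvasView` through its `drawingPolicy` property. The `drawingPolicy(pencilOnlyPreferred:)` and `applySystemDrawingPreference(to:)` functions are hypothetical helpers written for this example; in many apps, leaving the canvas on its default policy and letting PencilKit handle the preference is likely the simpler choice.

```swift
import UIKit
import PencilKit

/// Hypothetical mapping from the user's preference to a canvas drawing policy.
@available(iOS 14.0, *)
func drawingPolicy(pencilOnlyPreferred: Bool) -> PKCanvasViewDrawingPolicy {
    // Pencil-only: fingers scroll and interact. Any input: fingers can draw too.
    pencilOnlyPreferred ? .pencilOnly : .anyInput
}

/// Hypothetical helper that mirrors the system-wide setting on a single canvas.
@available(iOS 14.0, *)
func applySystemDrawingPreference(to canvas: PKCanvasView) {
    // Reflects Settings ⇾ Apple Pencil ⇾ Only Draw with Apple Pencil.
    let pencilOnly = UIPencilInteraction.prefersPencilOnlyDrawing
    canvas.drawingPolicy = drawingPolicy(pencilOnlyPreferred: pencilOnly)
}
```

An app could call `applySystemDrawingPreference(to:)` when its canvas appears, or simply read the preference to decide whether to show a hint explaining why finger strokes aren’t drawing.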

Also new this year, developers get access to the new color picker for free if they’re using PencilKit; as soon as you start drawing, the picker is automatically dismissed. As announced by Apple at WWDC, developers can also access individual strokes in PencilKit now, which should open up some fascinating possibilities for illustration, animation, and writing apps. Finally, in terms of latency improvements, Apple noted that both visual and blur effects on strokes are supported in PencilKit for iOS and iPadOS 14.
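To give a sense of what stroke-level access makes possible, here is a minimal sketch that walks the strokes of a `PKDrawing` and collects a few basic facts about each one. The `StrokeSummary` type and `summarize(_:)` function are hypothetical names made up for this example; only the PencilKit types and properties they read (`strokes`, `ink`, `path`, `renderBounds`) come from the framework.

```swift
import UIKit
import PencilKit

/// Hypothetical value type holding a few facts about a single stroke.
struct StrokeSummary {
    let inkType: PKInk.InkType   // pen, pencil, or marker
    let controlPointCount: Int   // number of points stored in the stroke's path
    let bounds: CGRect           // area the rendered stroke covers
}

/// Hypothetical helper: inspects every stroke in a drawing.
@available(iOS 14.0, *)
func summarize(_ drawing: PKDrawing) -> [StrokeSummary] {
    drawing.strokes.map { stroke in
        StrokeSummary(inkType: stroke.ink.inkType,
                      controlPointCount: stroke.path.count,
                      bounds: stroke.renderBounds)
    }
}
```

Given a canvas, something like `summarize(canvasView.drawing)` would return one summary per stroke, which an app could then use as a starting point for features such as per-stroke animation or selective erasing.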

Other Changes

It wouldn’t be an iPadOS chapter without a final list of other changes worth discussing. After all, you’ve made it this far; you might as well get some extra details about iPadOS 14, right?

The new keyboard button. The old chevron in the right corner of the QuickType bar (which used to toggle the software keyboard in iPadOS 13 when an external keyboard was connected) has been replaced in iPadOS 14 with a menu that includes the option of toggling the software keyboard, among other things. If you’re using your iPad with an external keyboard attached, pressing the new keyboard button reveals a small popover with options to show emoji, start dictation, toggle the software keyboard, and temporarily dismiss the extra QuickType bar.

We recently wrote about the iPad keyboard’s frustrating way of dealing with the QuickType bar and tab bars in Split View, so I won’t rehash Ryan’s list of complaints here. What should be clarified now that iPadOS 14 is out, however, is that the new keyboard button doesn’t really remedy any of the issues Ryan covered in his article. While it’s nice that Apple added a menu with more options to this button, hiding the QuickType bar isn’t a permanent tweak: as soon as you type a single keystroke in a text field, the extra QuickType bar appears again, potentially hiding the tab bar or bottom toolbar of an adjacent app in Split View – just like Ryan explained a few weeks ago.

If anything, given that there are now faster ways of activating emoji and dictation in iPadOS 14, you could argue that Apple made the process of toggling the software keyboard slower than before since you can’t long-press the chevron to instantly display the software keyboard anymore; you always have to show the menu first, then toggle the keyboard with a second tap. I realize I just spent three paragraphs describing the role and pitfalls of a single button, but this is precisely why I write these reviews.

An emoji popover…without search. As we’ll see later in this review, one of the big “finally” moments of iOS 14 is the addition of a search field to the emoji keyboard, which lets you search for specific emoji by name instead of hunting them down by category or shape. In an apparent case of Apple thinking that iPadOS users have no chill, the company decided that emoji search should remain exclusive to the iPhone this year. You can’t search for emoji by name on iPad because…

checks notes

…reasons.

Apple did, however, change how the emoji keyboard is displayed in iPadOS 14 while you’re using an external keyboard: it’s now a popover instead of a full-size keyboard (if you’re using the regular software keyboard, the emoji one is unchanged). There are also new ways to activate the emoji popover from an external keyboard: in addition to the aforementioned button in the keyboard menu, there is also a new option in Settings to instantly bring up emoji by pressing the Globe key once. This can be found in Settings ⇾ General ⇾ Keyboard ⇾ Hardware Keyboard ⇾ Press Globe for Emoji. Once enabled, pressing the Globe key on an external keyboard will bring up the emoji popover right away; you’ll still be able to cycle through installed keyboards with the ⇧⌃Space keyboard shortcut.

While I appreciate the inclusion of a faster way to activate emoji, I’m dumbfounded by the lack of search. Apple already did the work for the iPhone, so why not on iPad too? Is it because iPads are for work, and emoji are for fun? Is it a technical limitation we can’t possibly imagine? Who’s going to tell Jeremy Burge?

Easier access to dictation. Tapping the microphone button in the aforementioned keyboard menu isn’t the only way of activating dictation in iPadOS 14: if you’re using an external keyboard, you can now assign a shortcut to toggle dictation right away. You can customize this keyboard shortcut in Settings ⇾ General ⇾ Keyboard ⇾ Dictation Shortcut; by default, it’s double-pressing the Control key.

Now, any time you’re in a text field, you can double-press the assigned key on your external keyboard and dictation mode will be triggered instantly, without having to touch anything onscreen. This is a very welcome addition to iPadOS, and I’ve been using it a lot.

iPadOS 14

For Apple today, it’s clear that iPadOS has turned into the most challenging platform to design for. Unlike iOS and macOS, apps on iPadOS can run in a variety of size classes, with different multitasking conditions, with support for a multiplicity of inputs that range from touch and keyboard to the Pencil, pointer, and a combination of them all. At this point, it’s evident that any modern iPadOS app needs to feel great both when used with touch and the keyboard-trackpad combo – a unique problem Apple never faced in any of their other OSes before.

Apple’s challenge for the future of iPadOS is to rid the platform of features that are only optimized for one of the device’s modes. The changes in iPadOS 14, while not revolutionary when considered in isolation, are part of this bigger narrative, and they’re paving the way for a redefinition of the iPad app ecosystem, powered by an OS built around modularity and multiple interaction methods.

iPadOS 14, with its sidebars, multicolumn layouts, and reimagined toolbars, is Apple’s first major step into a future where the iPad, free of the preconceptions from its first decade, can embrace its intrinsic modularity. At the same time, iPadOS 14 also shows us that 13.4, seemingly dropped at random during the spring alongside the Magic Keyboard, was essential to have all these pieces in place for WWDC. iPadOS 13.4 provided Apple with a necessary foundation for iPadOS 14, and it set expectations for developers, who should stop thinking of iPad apps as glorified iPhone counterparts, and more as flexible experiences that encompass the whole spectrum of modern interactions – from touch to tablet to laptop. It’s no coincidence, after all, that Mac Catalyst, the technology that powers the conversion of iPad apps to Mac apps, is receiving a substantial update this year.

With this strategy in place, it’s clear that Apple is going to continue borrowing interface idioms and interaction principles from macOS. We’ve discussed this idea at length before, and the narrative isn’t changing with iPadOS 14: the company is not afraid to lean into lessons learned with the Mac years ago, but they’re also willing to remix those ideas and adapt them to the iPad’s peculiar modular nature.

Alas, this is also why certain issues stick out even more when this newfound approach isn’t followed.

The new universal Search in iPadOS is a fantastic improvement over the old, full-screen Search of iPadOS 13; however, there’s no way to invoke Search inside apps without an external keyboard. Similarly, while I don’t think Split View and Slide Over are the interaction disaster other folks paint them to be, they were designed for touch during the iPad era preceding the Magic Keyboard and pointer; as a result, it’s still awkward to operate multitasking via the trackpad and keyboard in iPadOS 14, which brings no meaningful improvements to Split View and Slide Over management when you’re not touching the screen. If ever there was a solid case against the current implementation of Split View and Slide Over, their lackluster support for the keyboard and trackpad in iPadOS 14 is it. I hope to see meaningful changes on this front with iPadOS 15 next year.

With iPadOS 14, Apple is asking both users and developers to reconsider what a truly great iPad app experience should look like in 2020. The answer is multifaceted, but it boils down to this: designing apps for iPad no longer means just “making a tablet version”; it means starting from the familiarity of touch while accounting for a diverse, vibrant platform where there’s no single input and no single correct way to hold a computer. It means accepting that the iPad has evolved into a flexible computing platform like no other in Apple’s ecosystem.

Five years after the iPad Pro’s debut, the iPad is changing again. But this time, iPadOS 14 is taking the whole iPad lineup along for the ride.

21. Scribble also supports mixed languages, so you can write English and Chinese words in the same sentence and they will all be transcribed.

22. As you can guess, at the moment of writing this, this feature was not supported in Google Maps. I am utterly shocked.

23. No, seriously, the point is that you shouldn’t know your passwords. Please consider using a password manager instead.