Other Apps

In addition to changes in Apple’s core iOS apps, there are miscellaneous improvements to other system apps worth mentioning.

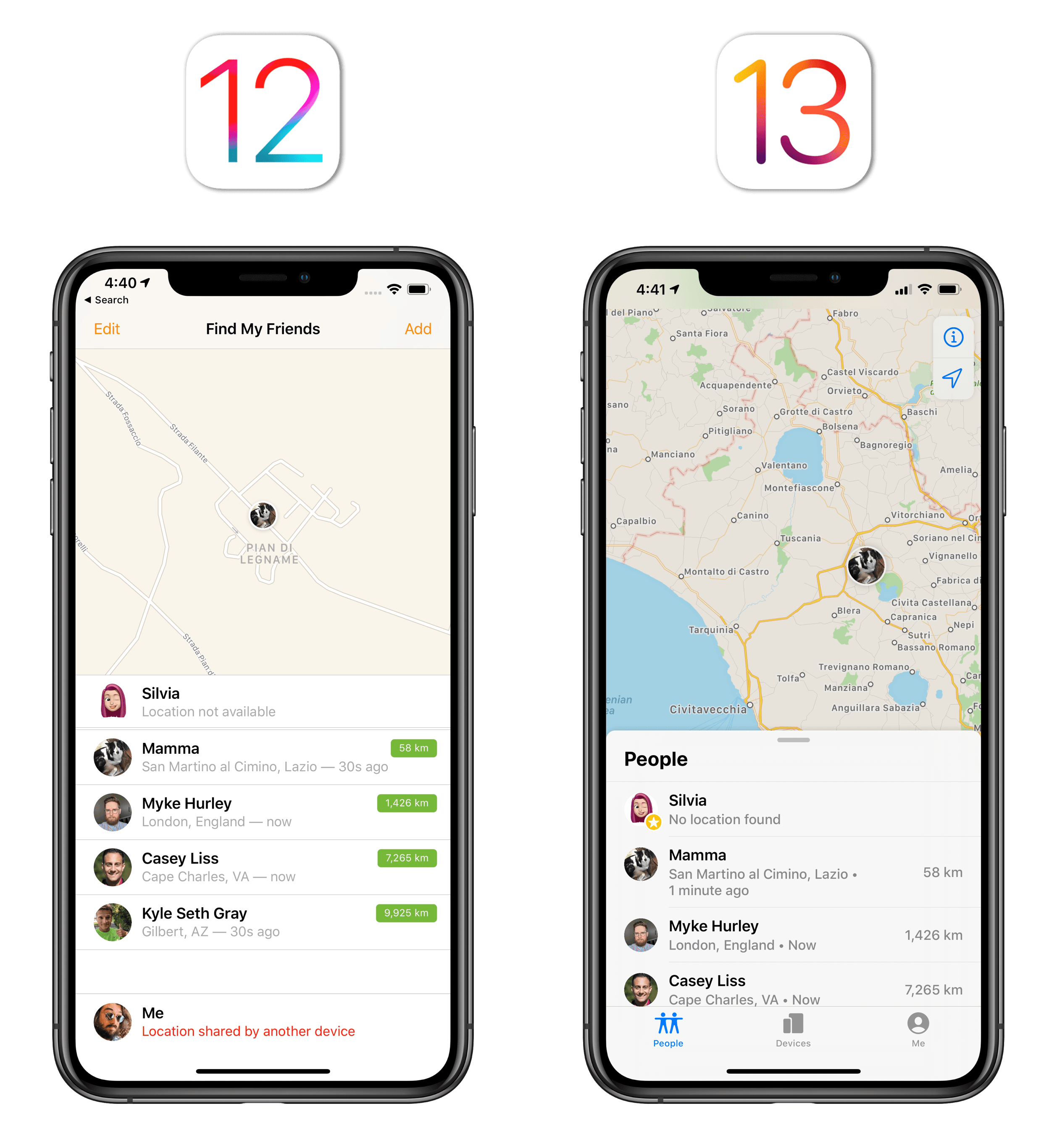

Find My

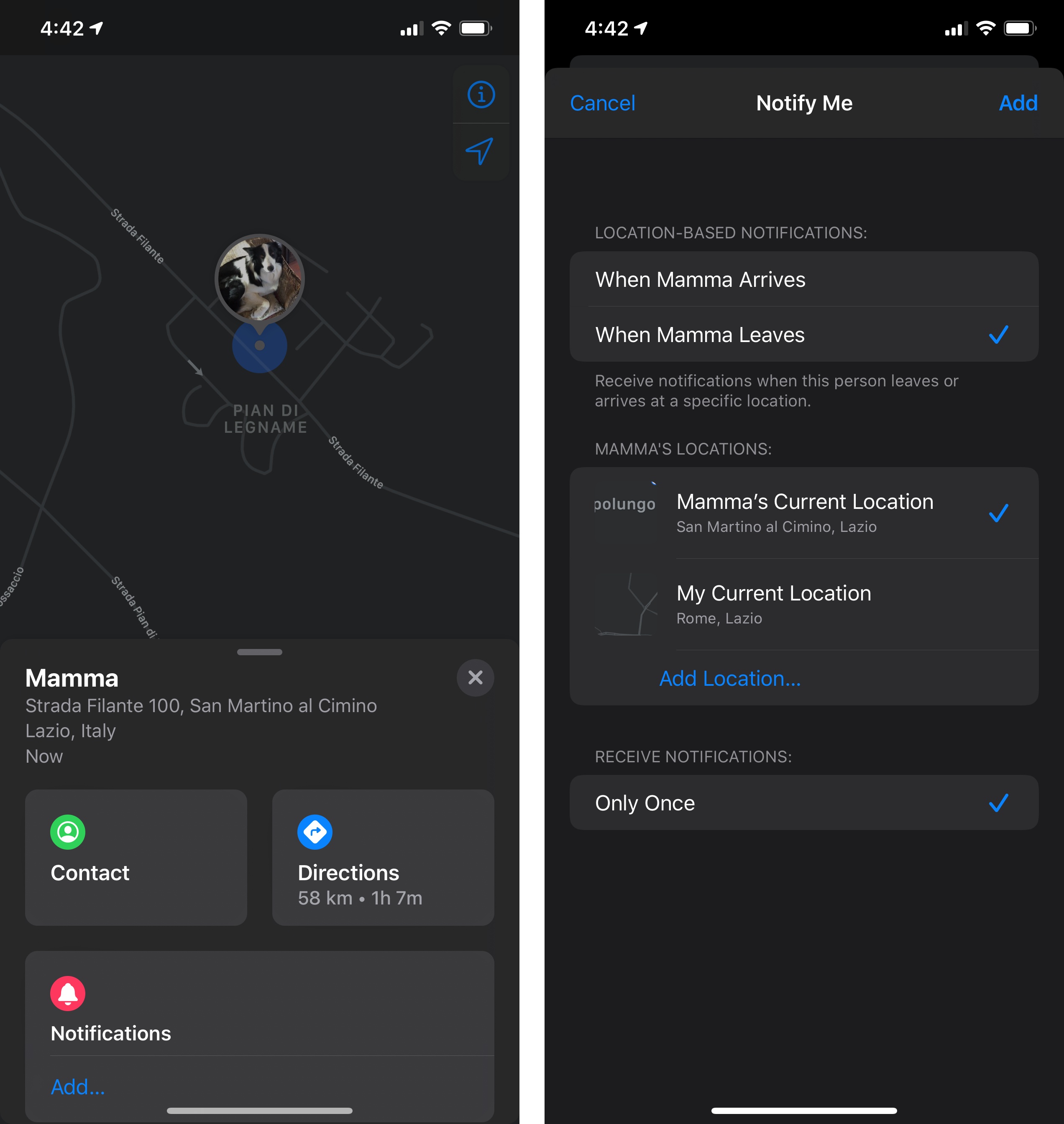

With iOS 13, Apple has merged the Find My iPhone and Find My Friends apps into a single app called Find My. The design is all-new, but the basic features are essentially unchanged. For devices, you can still locate them, play sounds on them, mark them as lost, and wipe them remotely. For friends, you can see where they are on a map, set up notifications based on their location (in iOS 13, they’ll be notified when you enable notifications for them), and label their locations with names such as ‘Home’ or ‘Work’.

It makes sense to offer both friend and device location tracking in the same app; even though I barely use these features, I’m a fan of the modernized design.

There’s a fascinating change to the underlying location-tracking system for lost devices: in iOS 13, Apple has implemented crowdsourced location reporting so that even if a device isn’t connected to Wi-Fi or the cellular network, you’ll be able to receive updates on its location. This is possible because other iOS 13 devices near one that’s been marked as missing can detect its Bluetooth signal, sort of like a distress beacon, and report its location back to you. Information is entirely anonymized and encrypted end-to-end, which means that people with a device that “found” the missing one will never know what happened or whose device it was.

Even though I hope I’ll never have to rely on it, this is a genius feature. Now that iOS 13 is available to the general public and the feature can work at scale, it has the potential to solve the problem of stolen devices being taken offline so they can’t report their location to you.

Find My’s crowdsourced location sharing seems to be working as advertised. A few months ago, a friend of mine lost his iPad at Rome’s Fiumicino airport, and the device was offline. Thanks to nearby people running the iOS 13 beta, its location was updated overnight, and he was able to retrieve it the following day. This feature is a prime example of the hardware and software integration unique to the Apple ecosystem, and I bet we’re going to hear dozens of similar stories over the next few months.

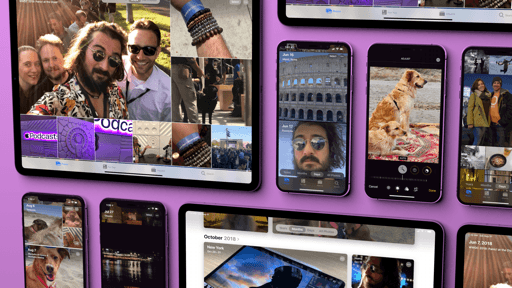

Camera

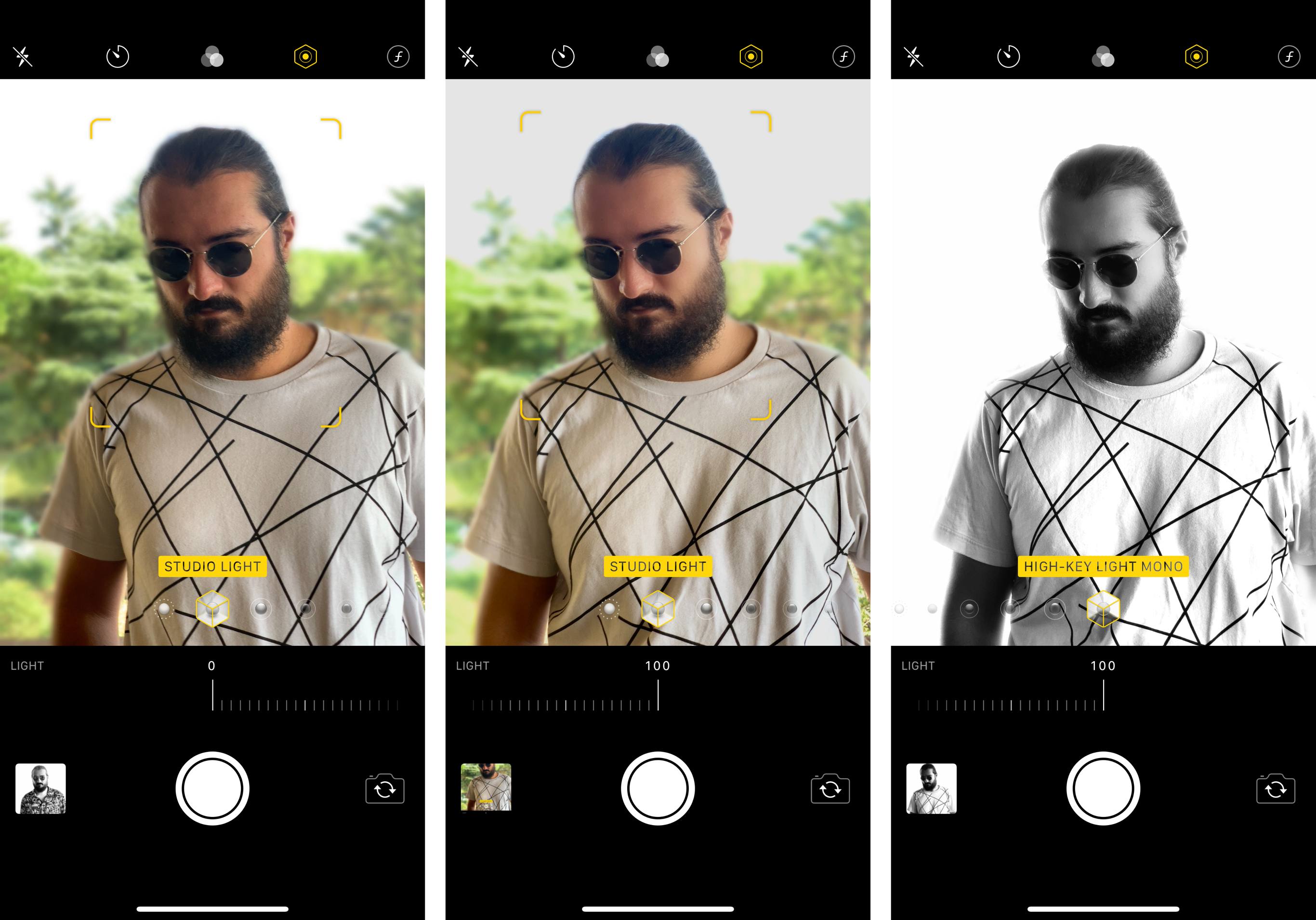

Besides capabilities exclusive to iPhone 11 hardware, the Camera app in iOS 13 has gained two new features for owners of existing iPhone models: an intensity slider to adjust the strength of Portrait Lighting, and a new effect called High-Key Light Mono.

The intensity slider appears along the top edge of the Camera app when you shoot in Portrait mode with any lighting effect other than Natural Light. It lets you adjust the intensity of the light hitting the subject: the stronger you set it, the more the subject’s skin and other facial features will be smoothed out in the final shot.

I don’t take pictures in Portrait mode often, but I’m glad Apple added a manual intensity control because I don’t like the aggressive smoothing that is often the default result on an iPhone XS. My Studio Light shots taken on iOS 13 retain a bit more detail than the default setting would produce, and I’m pretty satisfied with the results.

The new Portrait Lighting intensity slider (left and center) and the new Portrait effect in iOS 13, High-Key Light Mono.

As for High-Key Light Mono, it’s a new effect that renders the subject in monochrome while turning the entire background white. In the right hands, I’m sure it can produce some gorgeous results. In my tests, however, I always noticed some artifacts where the subject is separated from the background; I wouldn’t be surprised to discover that this effect performs much better on the iPhone models Apple introduced earlier this month.

This doesn’t necessarily apply to the system Camera app, but Apple has improved its Portrait segmentation API in iOS 13 with separate semantic segmentation mattes for skin, hair, and teeth. Building on the Portrait Effects Matte API launched last year, these new mattes are embedded in all Portrait mode captures by default, and they allow apps to isolate parts of a subject such as eyebrows, mouth, or hair. The demos Apple showed off at WWDC were, frankly, terrifying, but I expect developers of makeup apps and apps with special face effects to take advantage of this API soon.
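For developers curious about what reading these mattes involves, here is a minimal Swift sketch using Core Image. It assumes a Portrait mode photo on disk that actually contains the skin, hair, and teeth mattes; the helper name `loadSegmentationMattes(from:)` and the `portrait.heic` file name are hypothetical, while the `CIImageOption` keys are Core Image’s own (iOS 13 and macOS 10.15 or later).

```swift
import CoreImage
import Foundation

/// Hypothetical helper: loads whichever semantic segmentation mattes
/// (hair, skin, teeth) are embedded in the Portrait photo at `url`.
@available(iOS 13.0, macOS 10.15, *)
func loadSegmentationMattes(from url: URL) -> [String: CIImage] {
    // Each option asks Core Image for one auxiliary matte instead of the main image.
    let matteOptions: [String: CIImageOption] = [
        "hair": .auxiliarySemanticSegmentationHairMatte,
        "skin": .auxiliarySemanticSegmentationSkinMatte,
        "teeth": .auxiliarySemanticSegmentationTeethMatte,
    ]

    var mattes: [String: CIImage] = [:]
    for (name, option) in matteOptions {
        // The initializer is failable: we expect nil when the file can't be
        // read or doesn't carry a matte of the requested type.
        if let matte = CIImage(contentsOf: url, options: [option: true]) {
            mattes[name] = matte
        }
    }
    return mattes
}

// Usage: point this at a Portrait mode HEIC exported from Photos (assumed file name).
if #available(iOS 13.0, macOS 10.15, *) {
    let url = URL(fileURLWithPath: "portrait.heic")
    let mattes = loadSegmentationMattes(from: url)
    print("Found mattes: \(mattes.keys.sorted())")
}
```

Each returned `CIImage` is a grayscale mask, so an app could, for instance, scale the hair matte to the photo’s dimensions (mattes may be stored at a lower resolution than the photo) and use it as the mask input of a blend filter to recolor only the subject’s hair.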

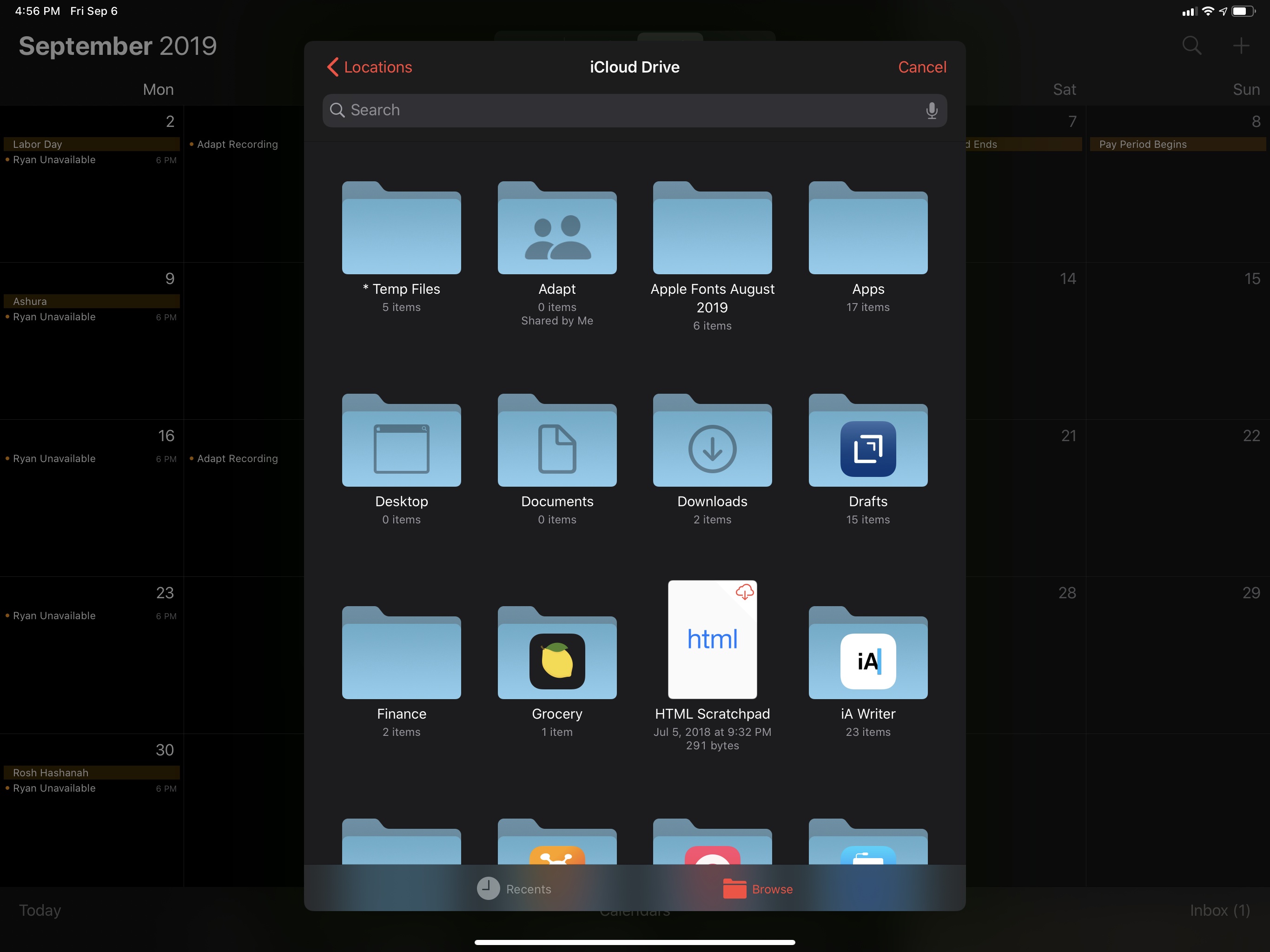

Calendar

The only addition to the Calendar app this year is the ability to add attachments to events. Unlike Reminders, which only supports scanned documents and images, Calendar uses a Files picker to let you attach any file you want to an event. I’d like Reminders to copy this approach.

Clock

The Clock app was updated with large titles, a true black background instead of dark gray, and a new circular UI for timers.

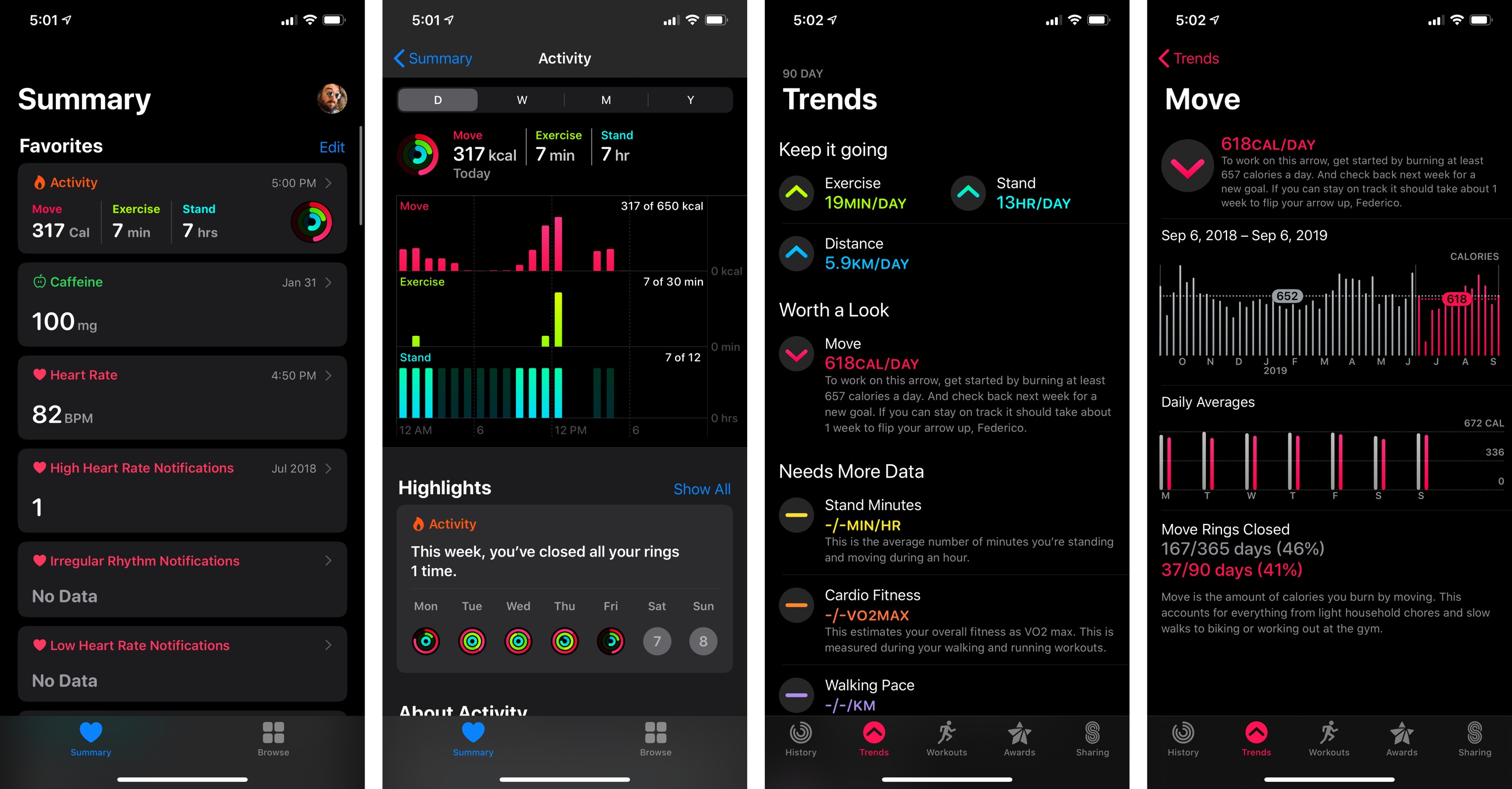

Health and Activity

Both Health and Activity have received substantial upgrades in iOS 13. Health has been redesigned with new highlights and a cleaner look for various stats, while Activity now offers a helpful Trends feature that shows how active you’ve been over time so you can focus on specific areas of your daily routine.

We covered both apps in depth in dedicated overviews earlier this year. Club MacStories members can also read updated versions of both stories in an exclusive eBook that collects additional iOS 13 coverage from the MacStories team.

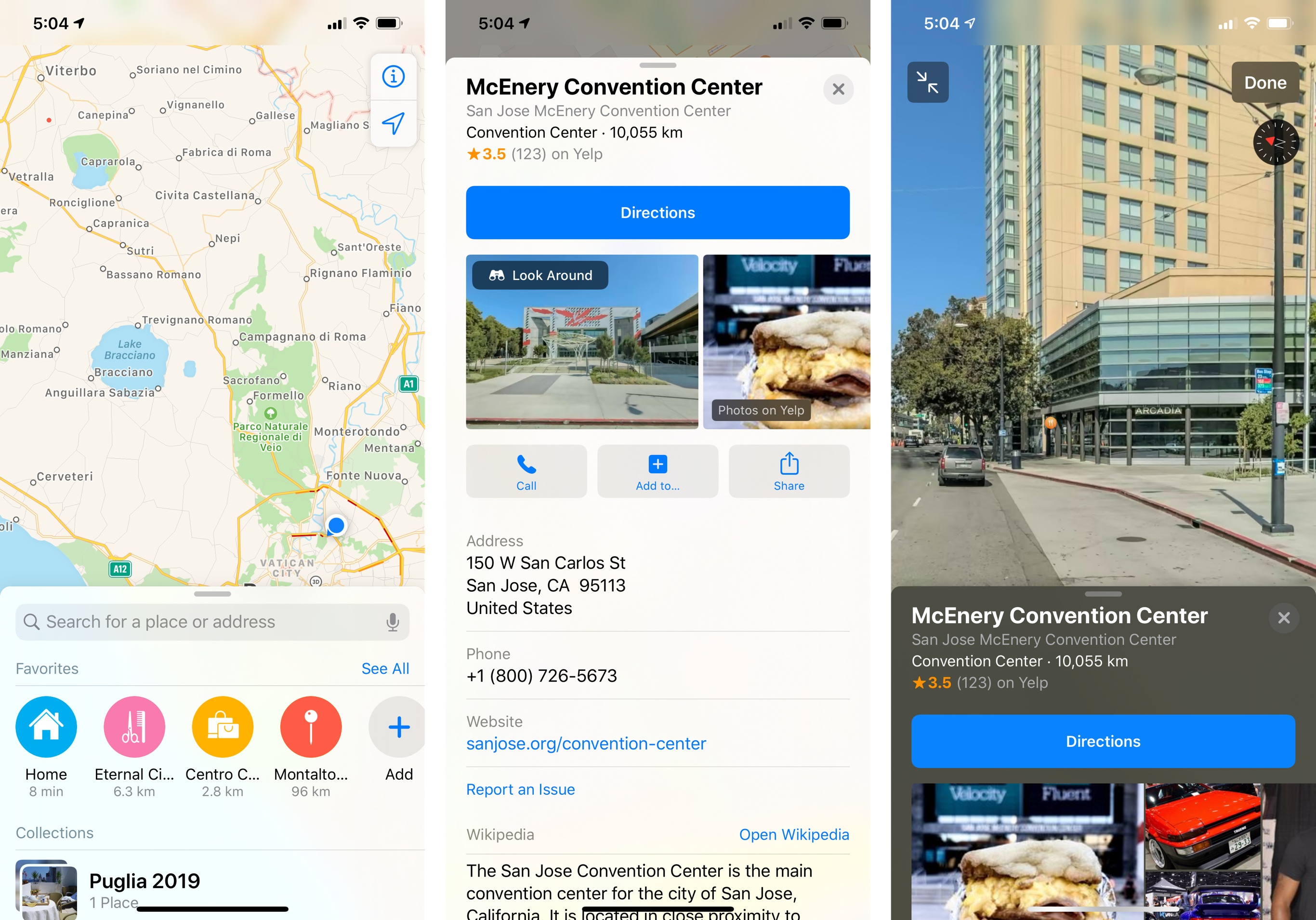

Maps

As part of Apple’s long-term plan to build its own map of the world based on an extensive collection of 3D imagery, the Maps app in iOS 13 features a host of notable enhancements that, after years of playing catch-up, finally make it a worthy competitor to Google Maps. You can now create collections of your favorite places and share them with other users, and the interface to browse nearby points of interest has been refreshed. In select cities in the United States, Apple is also debuting Look Around, an impressive and immersive 3D navigation tool that I found faster and more polished than Google’s Street View in my tests.

I live in Italy, and I don’t know when I’ll be able to take advantage of features such as the updated maps and Look Around, which are exclusive to North America for now. Our Ryan Christoffel, however, published an in-depth analysis of all the changes in the Maps app for iOS 13, which I highly recommend reading.

Books

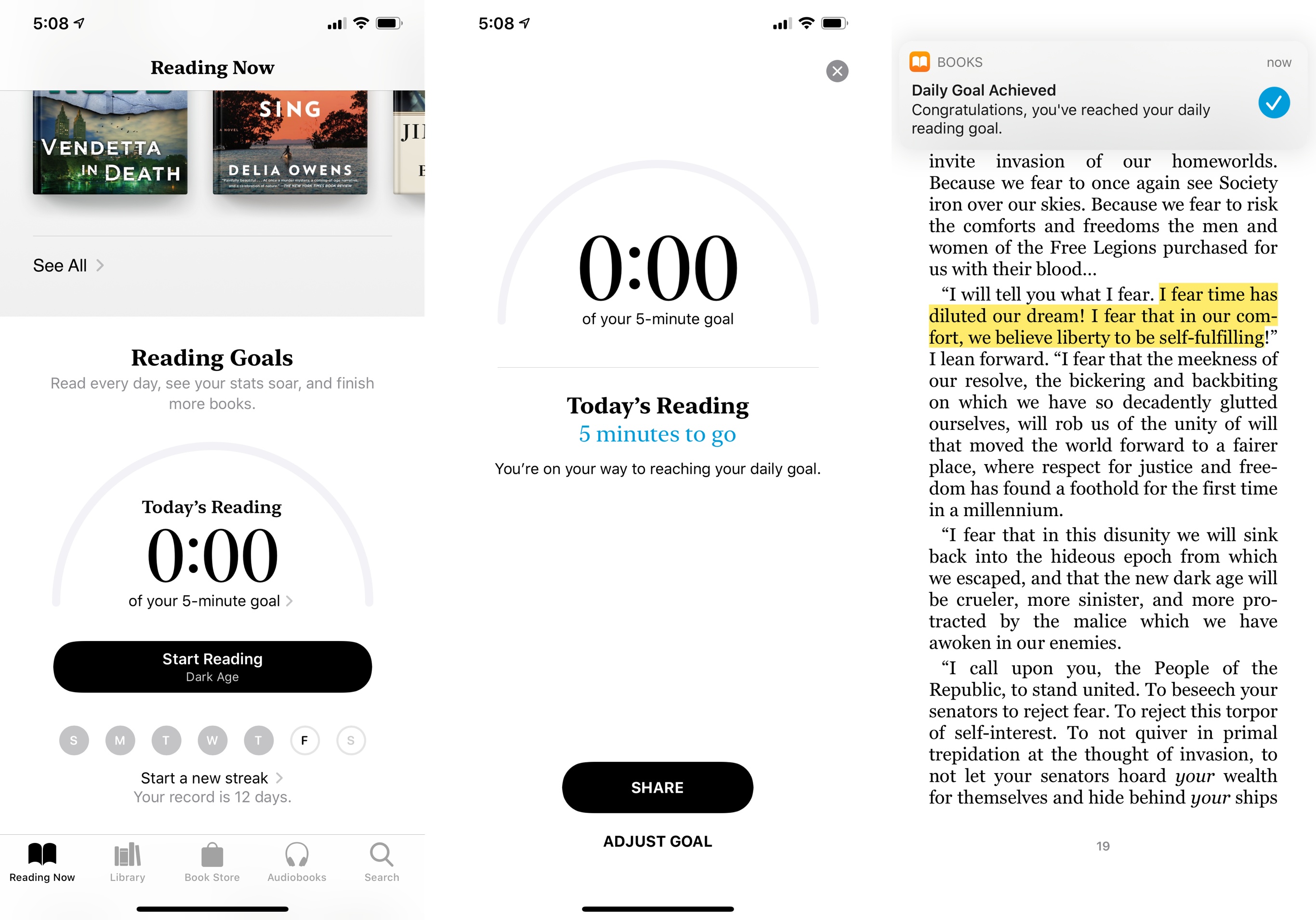

Following last year’s relaunch, Apple added only one new feature to the Books app in iOS 13: reading goals. Available at the bottom of the Reading Now page, reading goals are Apple’s attempt at nurturing a reading habit by encouraging you to spend at least five minutes reading every day.

When you reach five minutes of reading in the Books app, you’ll receive a congratulatory notification from Apple and add one day to your streak. The five-minute goal can be customized, and your current streak is shown underneath a counter that tells you how long you’ve been reading today.

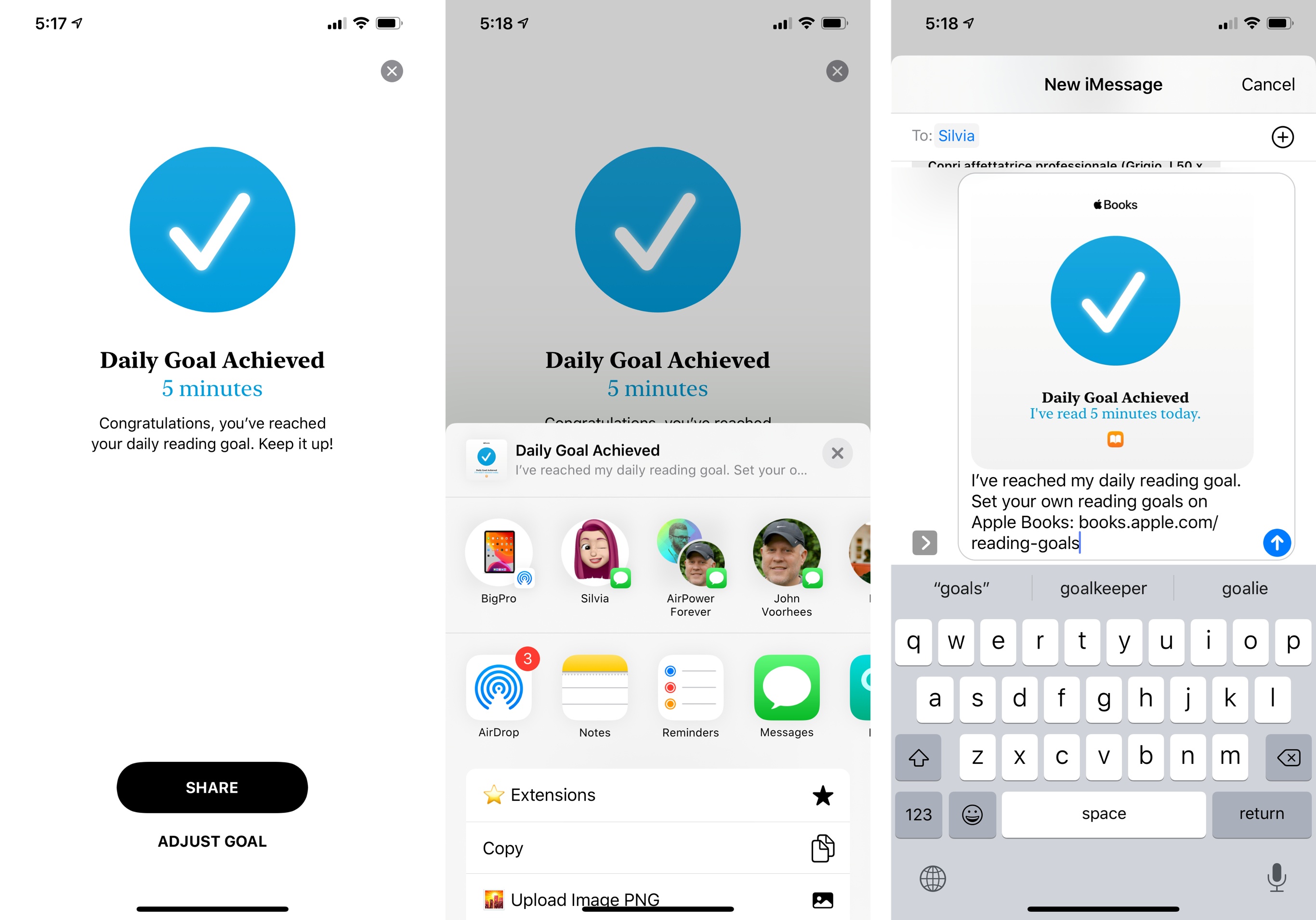

This habit-tracking aspect of Books wouldn’t be complete without a way to brag to your friends about your reading habits, so, of course, you can share your current streak with others as an image. I don’t think I’ve ever found myself in a social situation where “book reading bragging rights” are commonplace, but I suppose the most competitive book clubs could use this feature.

I don’t particularly care about sharing my reading streak, but I like the idea of Apple pushing us to read more and spend time away from Twitter and Facebook – we could all use that nowadays.

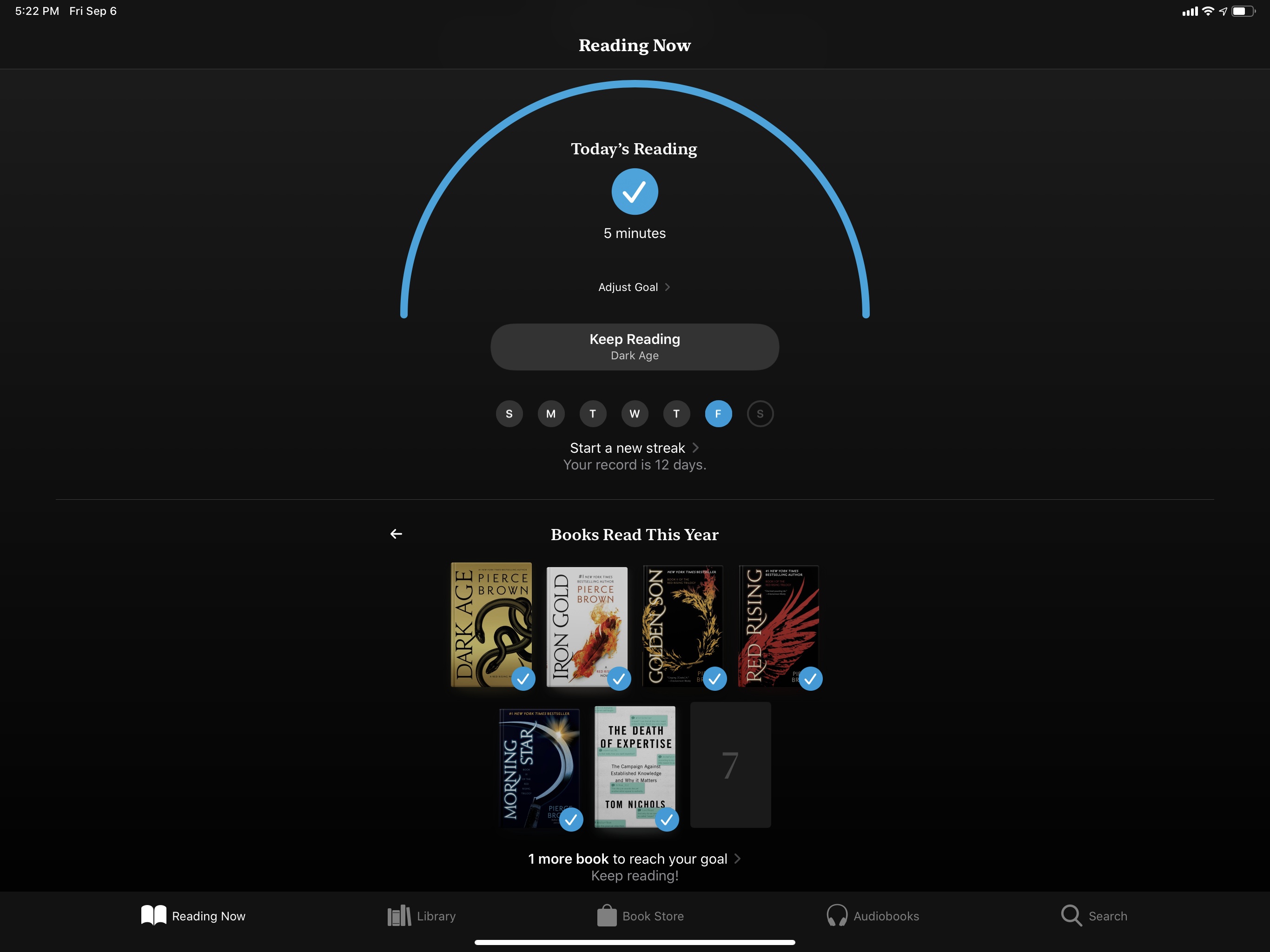

In addition to daily reading goals, Apple added annual goals too. As you can imagine, this feature automatically collects all the books you’ve read in the current year and comes with its own customizable goal. You can scroll through previous years to see books you read in the past[38], and you can tap the grid of completed books to share it as an image.

As you can see from the screenshots above, I discovered the Red Rising series this year, and I wholeheartedly recommend it if you’re into space, Roman mythology, and political intrigue. Hail Reaper.

[38] Apparently the Books app always had this data in its database, even before reading goals exposed it.