Parameters and Conversational Shortcuts

When Apple relaunched Workflow as Shortcuts last year and integrated it with Siri, it was clear that both the Shortcuts app and developer framework were first steps toward a modern “scripting language” capable of communicating with apps and letting users interact with Siri directly. The first version of Shortcuts was still limited to URL scheme-based third-party app actions, and it didn’t support user interactions within Siri’s voice context, but Apple’s trajectory was clear.

As I wrote last year, on user interactions inside Siri:

This is perhaps the most notably absent feature from Shortcuts today: to run entirely within Siri, your custom shortcuts have to omit all kinds of user interactions. Siri already features the ability to let users confirm requests, offer additional information, or choose one among multiple items in a list; shortcuts running inside Siri should provide the same options.

And earlier this year, on third-party app integrations:

What I’m imagining is something simpler, yet exponentially more difficult for Apple to build: I want Shortcuts to integrate with third-party apps just like it can request native access to Reminders, Calendar, Apple Music, HealthKit, and other Apple apps. For Shortcuts to take its integration with the iOS app ecosystem to the next level, developers have to be able to provide actions based on a secure API that replicates the kind of direct integration Apple frameworks have with Shortcuts.

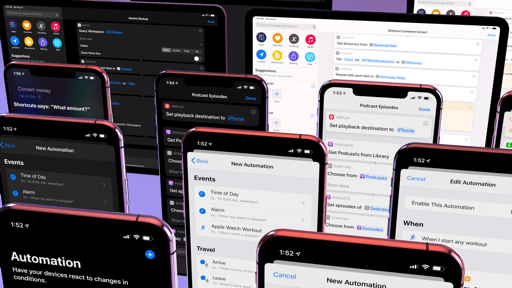

In iOS 13, Apple has delivered on both aspects of the Shortcuts experience: apps can eschew URL schemes (which Apple called “inherently insecure” at WWDC in discouraging new custom uses of them) and offer shortcuts that support inputs and outputs via parameters in what effectively amounts to a Shortcuts API; and shortcuts are fully conversational now, meaning that besides having apps “talk” to each other by exchanging data via actions, shortcuts that require user interactions can run entirely within the Siri voice context without having to launch the Shortcuts app.

This initiative represents a shift from the old type of automation that Workflow spearheaded in the days of x-callback-url but, more broadly, it confirms Apple’s plans for extending Siri, which were only partly hinted at last year: with conversational shortcuts, third-party app actions can become first-class features of the Shortcuts app and Siri, as if they were native functionalities of both.

Parameters are the Shortcuts API I’ve long argued Apple needed to move past URL schemes: they are variables that can pass dynamic input to an app through a shortcut; the app can perform the selected action in the background (without launching), and, unlike in iOS 12, pass back results to the Shortcuts app or Siri. With parameters, app shortcuts are no longer fixed actions: they can be customized by the user to perform different commands, allowing for greater flexibility than iOS 12’s Siri shortcuts and a more secure model than Shortcuts’ old x-callback-url actions.

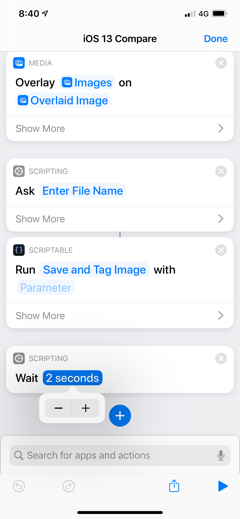

Parameters take different shapes and forms in a shortcut. At a high level, they are editable fields inside an app’s action that let you customize what a shortcut does by passing specific input to it; they look like blue empty fields, which you can select to pick from a list of pre-populated values, type some text, or enter a magic variable. When a shortcut runs, it’ll pick up the parameters you’ve filled out and perform the associated action accordingly.

Parameters let you treat actions as modular components where each piece can be customized to your needs – provided developers build support for them in their iOS 13 apps. There are different flavors of parameters: in addition to the aforementioned blue tokens, a shortcut can include on/off switches to configure specific options; there’s support for segmented controls; developers can even build shortcuts with additional parameters that accept variables of specific types (such as Text or URLs) as well as files. When a shortcut runs, it “resolves” all of these elements, performs the action without leaving the Shortcuts app or Siri (depending on where you’re running it), and returns results inline.
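To make the idea of “resolving” parameters more concrete, here is a minimal sketch in Python. It is a conceptual model, not Apple’s framework: the `Parameter` and `Action` classes, the `$Name` convention for magic variables, and the `transform` handler are all hypothetical names I made up for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


# Hypothetical model, not Apple's API: a parameter is a named, typed slot
# that the user fills in inside the shortcut editor.
@dataclass
class Parameter:
    name: str
    kind: type          # expected Python type, e.g. str for text, bool for a switch
    value: Any = None   # a literal, or "$Name" to reference a magic variable


@dataclass
class Action:
    title: str
    parameters: list[Parameter]
    handler: Callable[..., Any]

    def run(self, variables: dict[str, Any]) -> Any:
        """Resolve every parameter, then call the handler and return its result."""
        resolved = {}
        for p in self.parameters:
            value = p.value
            # Assumption: a string starting with "$" stands in for a magic variable.
            if isinstance(value, str) and value.startswith("$"):
                value = variables[value[1:]]
            if not isinstance(value, p.kind):
                raise TypeError(f"{p.name} expects {p.kind.__name__}")
            resolved[p.name] = value
        return self.handler(**resolved)


def transform(text: str, uppercase: bool) -> str:
    # Stand-in for work an app would do in the background.
    return text.upper() if uppercase else text.lower()


action = Action(
    "Transform Text",
    [Parameter("text", str, "$Title"), Parameter("uppercase", bool, True)],
    transform,
)
result = action.run({"Title": "Hello iOS"})
```

The point of the sketch is the shape of the flow: the action declares its slots, the runner fills them from literals or variables, and the result comes back inline without any app being launched.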

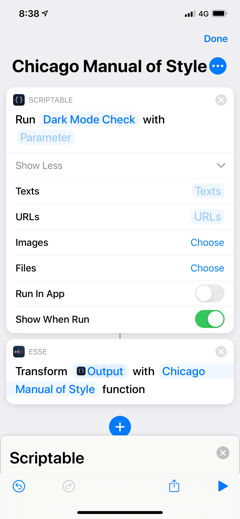

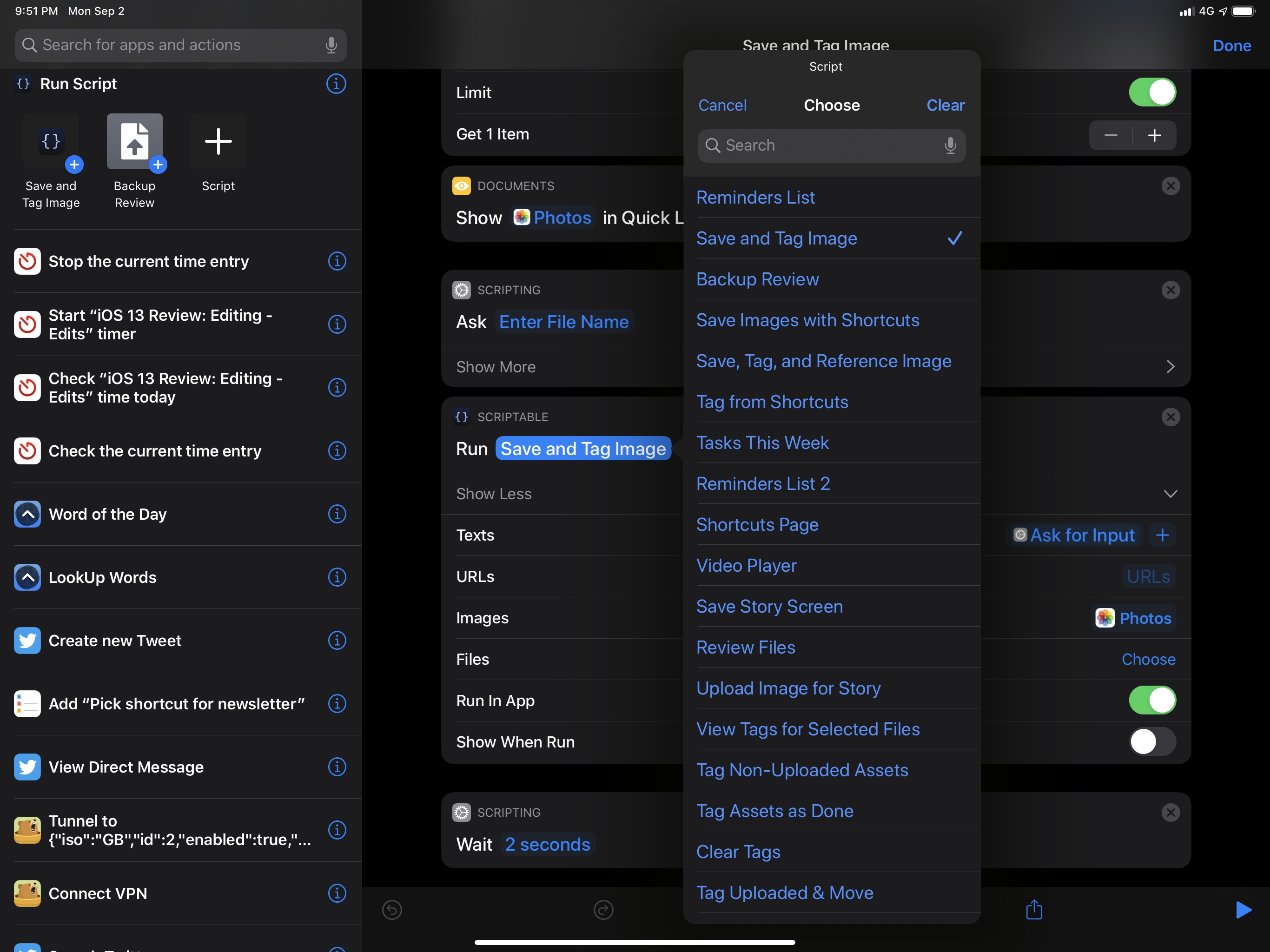

Different types of parameters for Scriptable and Esse. Scriptable lets you pick variables for files, too.

You can choose from a list of parameters.

You can add values to numeric parameters with buttons.

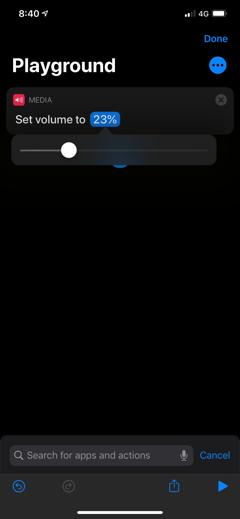

Parameters support sliders too.

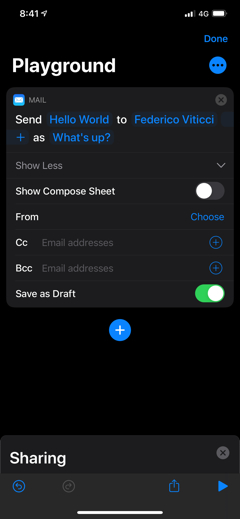

Apple’s Mail action supports multiple types of parameters.

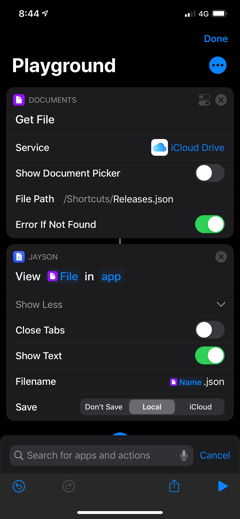

Jayson’s shortcut offers a segmented control.

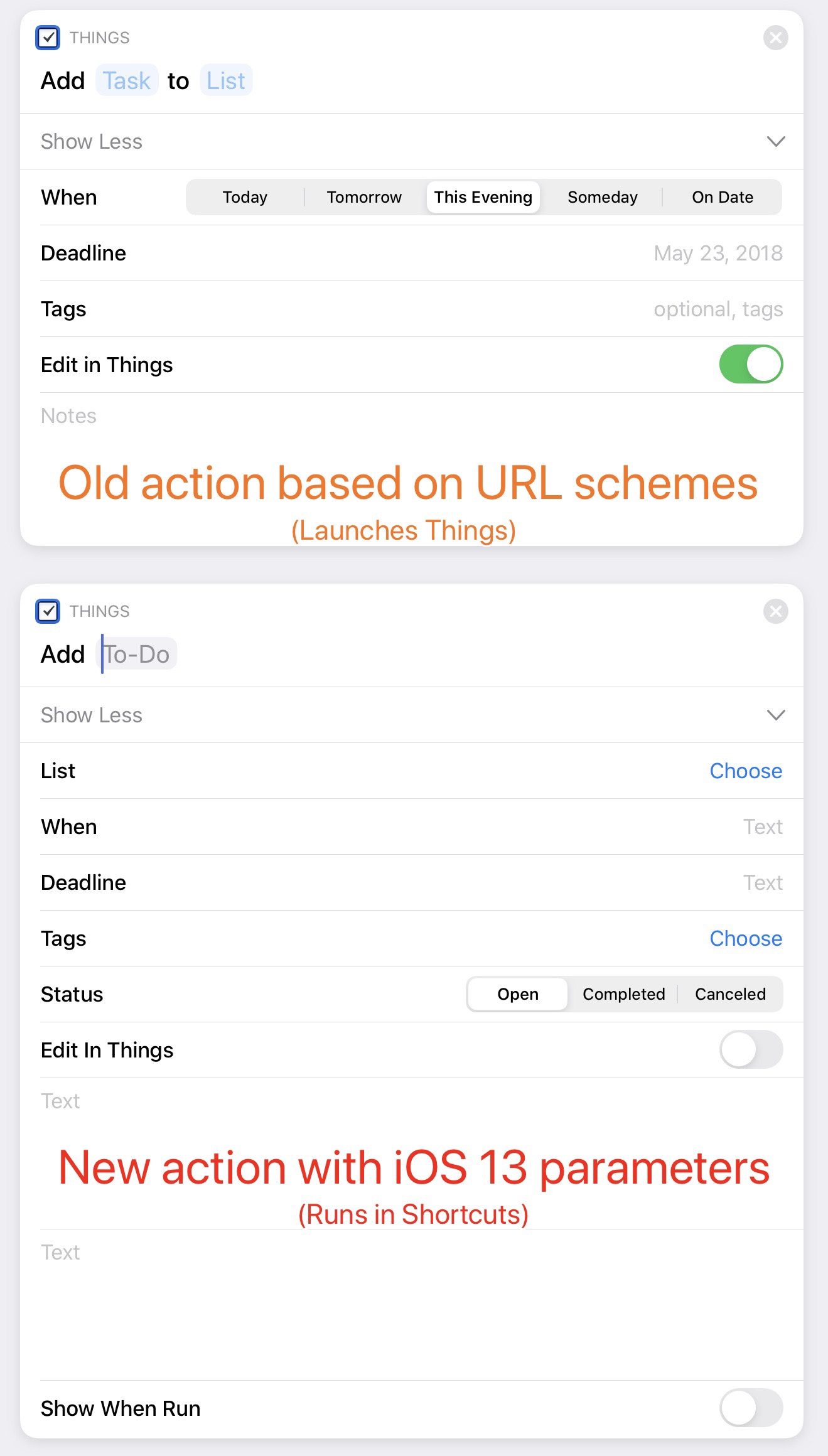

At first glance, shortcuts with parameters resemble the old “visual actions” that the Shortcuts team used to build for apps based on x-callback-url, such as Things or Bear, to make them prettier and hide URL schemes – and that’s the point. Like those actions, parameters allow you to visually customize an action with common iOS interface elements such as text fields and toggles, all while integrating with Shortcuts’ magic variable system. Here, let’s take a look at an actual example from Things, which had a custom integration with Shortcuts in iOS 12 via URL schemes and now supports iOS 13 parameters:

While Shortcuts’ old third-party actions were an interaction facade and ultimately relied on launching URL schemes to invoke actions inside apps, shortcuts with parameters are executed entirely within Shortcuts or Siri; they come with their own permission system to request access to an app’s data; and, unlike URL schemes, they can return rich results such as files, dictionaries, and even custom magic variables. And most importantly: any developer can create them now.

Passing Input Parameters

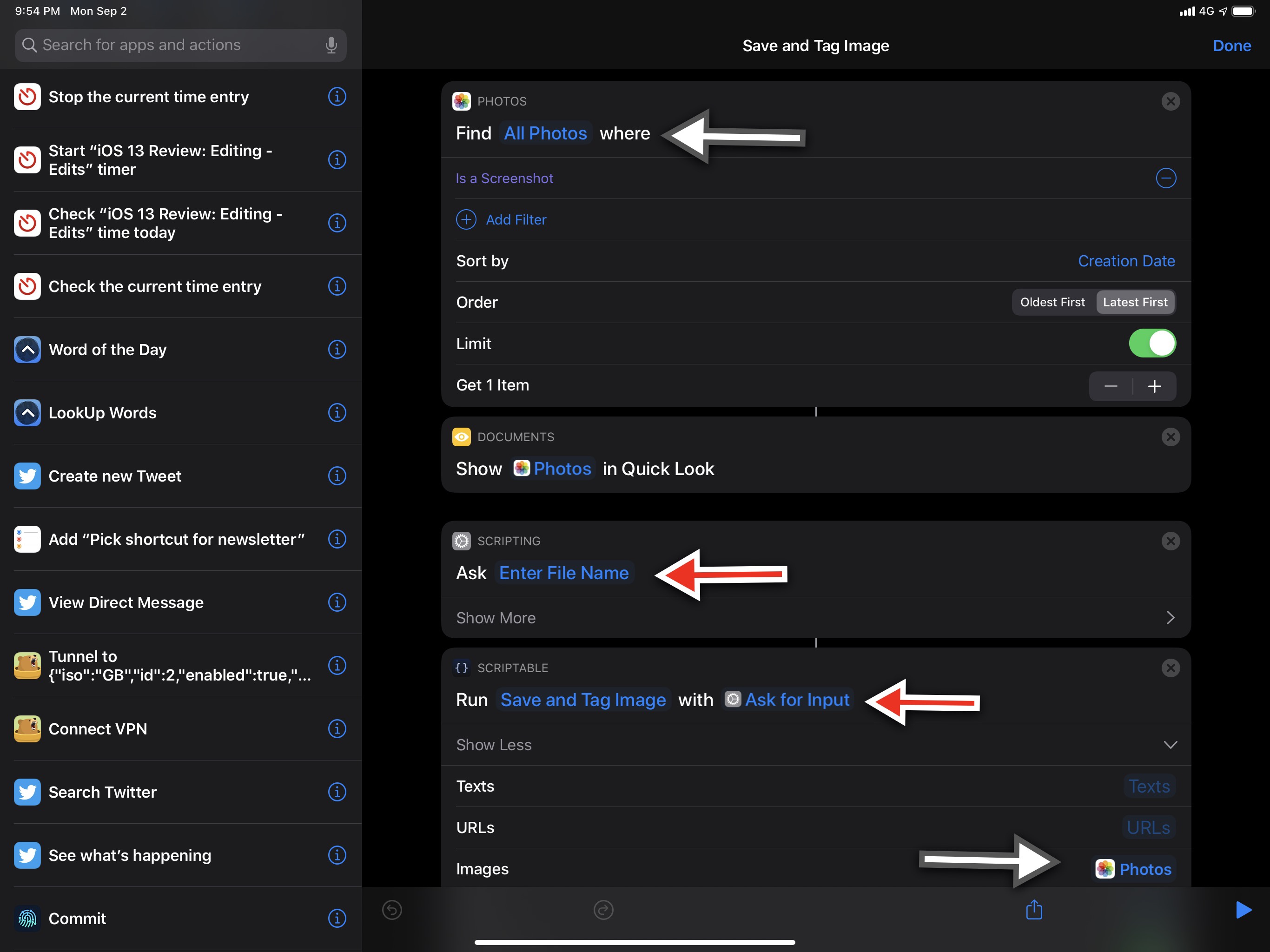

As per tradition in my Shortcuts coverage, let’s use some actual examples to demonstrate these features. Scriptable, Simon Støvring’s JavaScript IDE for iOS, now offers a ‘Run Script’ shortcut that can be customized with parameters. You can tap the ‘Script’ parameter to choose from a pre-populated list of your scripts. The list can be searched (a new option for all lists in iOS 13) so you can choose a script to run whenever the shortcut executes; however, you can also leave the ‘Ask Each Time’ parameter in there, in which case Shortcuts or Siri will ask you to pick which Scriptable script to run every time you invoke the shortcut.

Note that the list of scripts is generated at runtime by the shortcut, which communicates directly with Scriptable in the background to assemble a list of all your scripts currently in the app.
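A rough way to picture a list that is assembled at runtime and can be searched: the options are not stored in the shortcut, but requested from the app every time the picker opens. The sketch below is a Python model under that assumption; `ScriptStore`, `script_options`, and the script names are hypothetical and have nothing to do with Scriptable’s real internals.

```python
# Hypothetical stand-in for an app's script library; the names are invented.
class ScriptStore:
    def __init__(self) -> None:
        self._scripts = ["Dark Mode Check", "Orientation", "Upload Log"]

    def add(self, name: str) -> None:
        self._scripts.append(name)

    def all_names(self) -> list[str]:
        return sorted(self._scripts)


def script_options(store: ScriptStore, query: str = "") -> list[str]:
    """Ask the store for its current scripts and filter them by a search query."""
    needle = query.casefold()
    return [name for name in store.all_names() if needle in name.casefold()]


store = ScriptStore()
before = script_options(store)          # options as of the first request
store.add("Dark Sky Fetch")             # the user creates a new script in the app
after = script_options(store, "dark")   # the next request sees it, filtered by search
```

Because the list is computed on each request, a script created after the shortcut was built still shows up the next time the picker is opened.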

A shortcut can support multiple parameters at once, and Scriptable takes advantage of this to great effect in letting you pass multiple data objects to a script. You can run a script with a primary input parameter, but you can also assign individual parameters for text, URL, image, and file inputs. Plus, there’s a switch to determine whether the script should run in the app (launch Scriptable) or in the background.

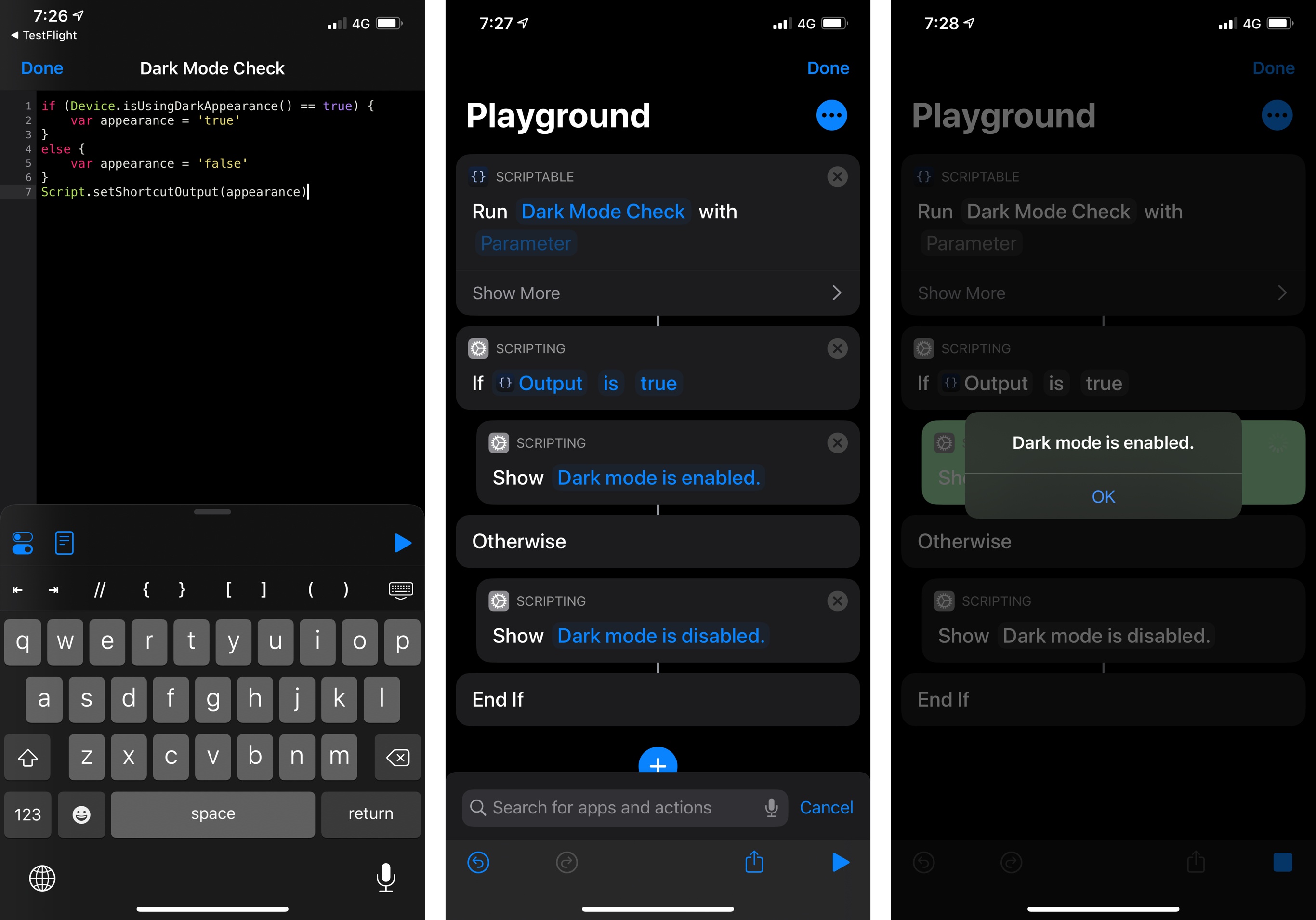

In iOS 12, if you wanted to invoke a Scriptable script and pass data to it, you either had to launch it via a URL scheme, write one piece of data at a time to the system clipboard, or run a script from the share sheet. You couldn’t pass multiple rich objects at once from Siri or the Shortcuts app. Parameters in Scriptable unlock new automation possibilities that go far beyond the clipboard and URL schemes; for instance, I’ve been using Scriptable with parameters to add features to Shortcuts that Apple didn’t build, such as a way to check whether or not a device is currently in landscape or using dark mode.

Checking with a Scriptable shortcut whether dark mode is enabled or not. The result is passed back by Scriptable as a text variable. The Scriptable app never launches.

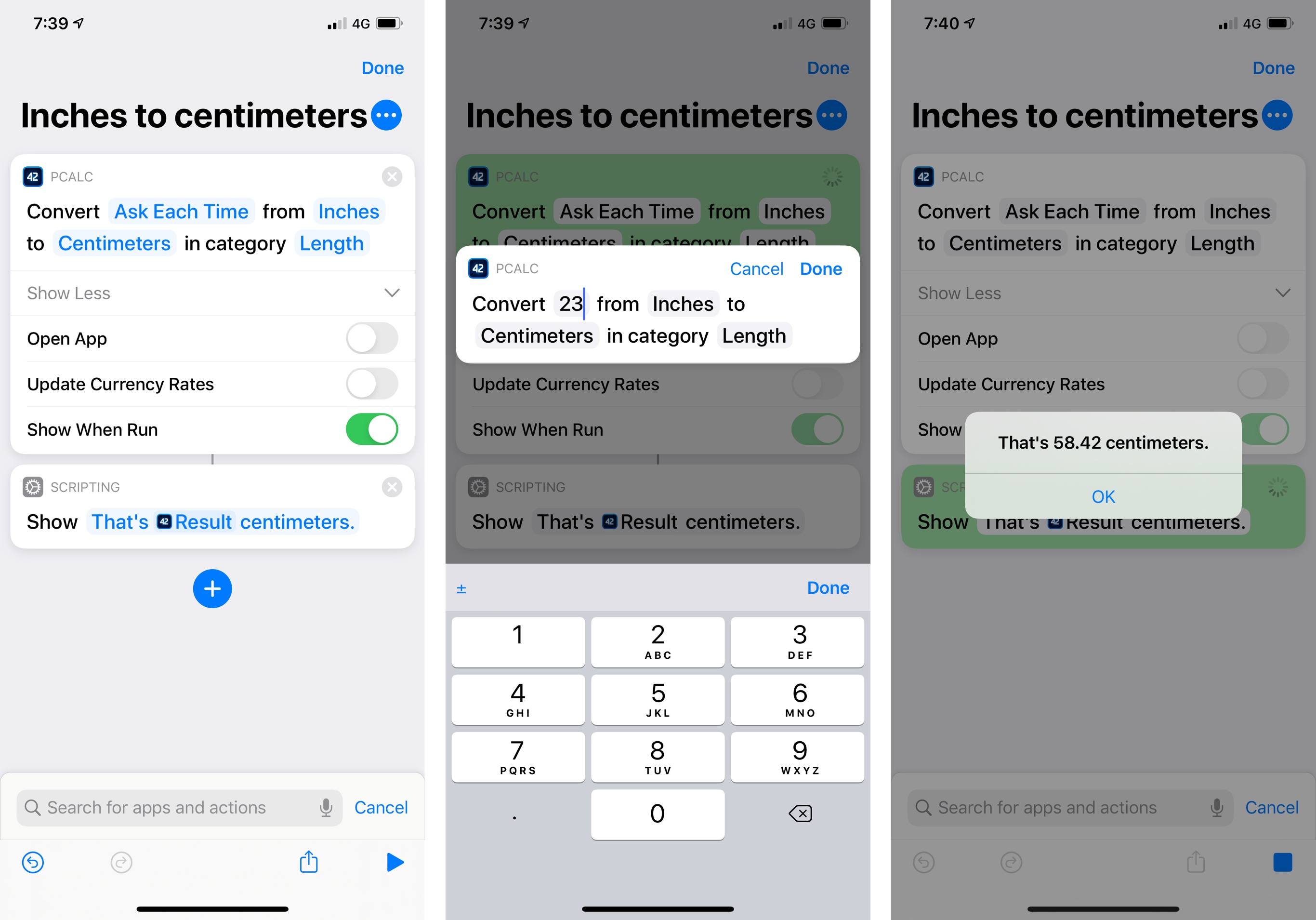

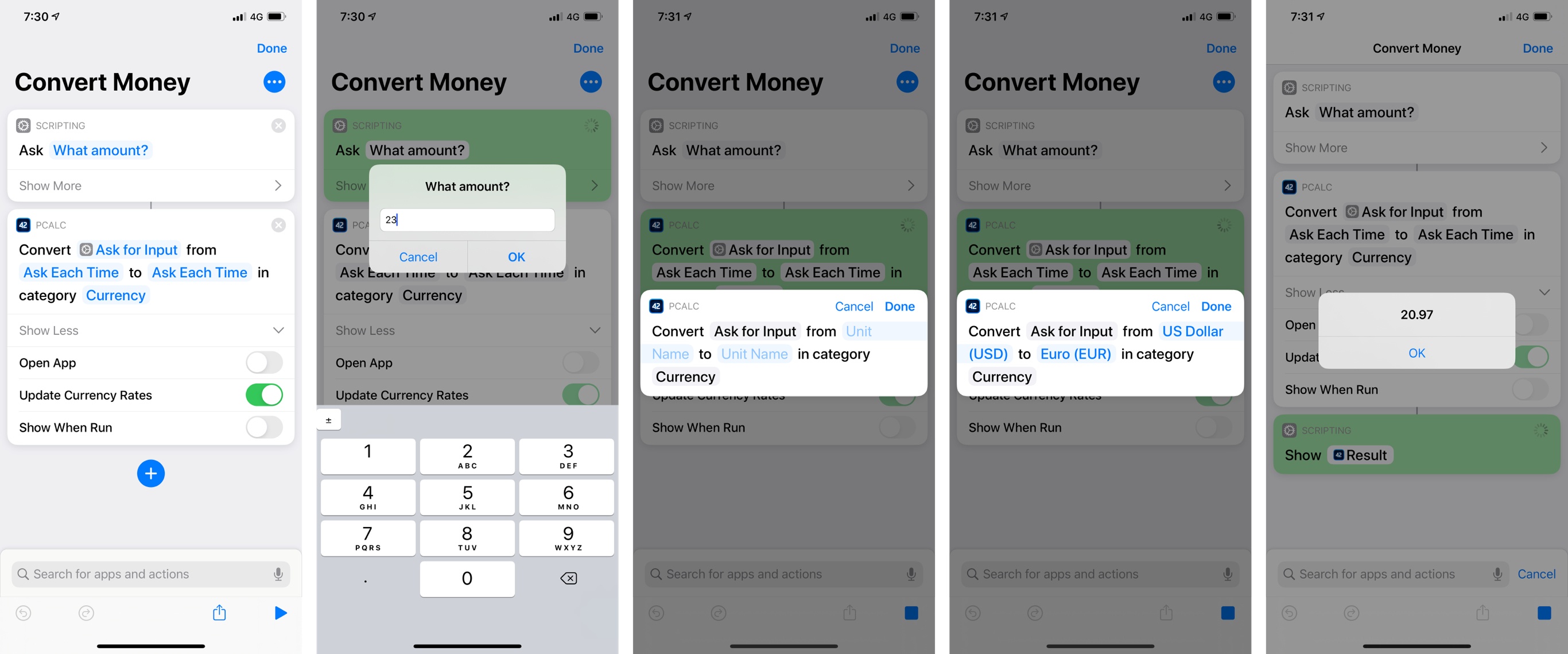

James Thomson’s PCalc, one of the apps that pioneered the idea of clipboard workarounds for Siri shortcuts in iOS 12, has fully embraced parameters in iOS 13 to let you perform calculations, pass input numbers, and retrieve results entirely inside Shortcuts and Siri. PCalc’s is among the most technically impressive implementations of parameters I’ve seen so far; like Scriptable, it has allowed me to enrich Shortcuts with native functionalities not created by Apple.

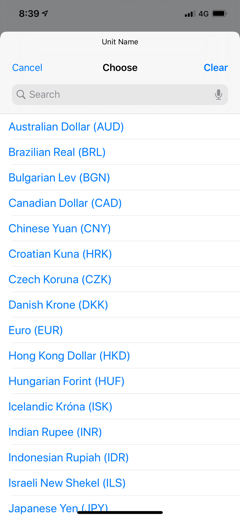

As in iOS 12, PCalc offers a variety of shortcuts to perform conversions, set register values, run commands and functions, and more. In iOS 13, these shortcuts support parameters so you can customize them from inside PCalc (with the ‘Add to Siri’ UI) or in the Shortcuts app. My favorite is the ‘Convert Value’ one, which supports parameters to set the numeric value to convert, pick a category of conversions, and choose two units. Impressively, the list of parameters for units updates dynamically depending on the category parameter you set: if you pick ‘Currency’, you’ll see a list of currency symbols; select ‘Length’, and you’ll find units such as ‘Centimeters’ and ‘Inches’ instead.
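The dependency between the category and the unit lists can be sketched as a lookup keyed on the parent parameter. This is only an illustration in Python: the table, the currency codes, and the function names are my assumptions, not PCalc’s actual data or code.

```python
# Hypothetical option table; only 'Centimeters' and 'Inches' come from the text,
# the currency codes are placeholders.
UNITS_BY_CATEGORY = {
    "Currency": ["EUR", "GBP", "USD"],
    "Length": ["Centimeters", "Inches"],
}


def unit_options(category: str) -> list[str]:
    """Return the units that make sense for the chosen category."""
    return list(UNITS_BY_CATEGORY.get(category, []))


def is_valid_conversion(category: str, from_unit: str, to_unit: str) -> bool:
    """Both units must belong to the list offered for the category."""
    allowed = unit_options(category)
    return from_unit in allowed and to_unit in allowed


length_units = unit_options("Length")
currency_units = unit_options("Currency")
```

Changing the category invalidates any unit picked earlier, which is why a picker built this way has to re-request its options whenever the parent parameter changes.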

Of course, all of these parameters can be picked at runtime (when a shortcut runs) by inserting ‘Ask Each Time’ in their field. There’s even a switch to determine whether the shortcut should update currency rates with an Internet connection when it runs – which is what you want to get an accurate conversion between currencies. Because the first parameter supports plain text user input, any variable can be put in there: you can type a number and convert it every time the shortcut runs; or if you want to make it more dynamic, you can use the numeric result of another action such as ‘Ask for Input’, which will let you enter a value with a large number pad when the shortcut runs. And as we’ll see in a bit, actions such as ‘Ask for Input’ are fully integrated with Siri’s conversational abilities, so they’ll automatically translate to voice input when you run them through the voice assistant.
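One way to think about ‘Ask Each Time’ is as a placeholder value that the runner swaps for a prompt when the shortcut executes. Here is a small Python sketch of that idea; the `ASK_EACH_TIME` sentinel, `resolve_parameters`, and the scripted answers are hypothetical and only meant to show the order of operations.

```python
from typing import Any, Callable

# Hypothetical sentinel meaning "prompt the user for this one at run time".
ASK_EACH_TIME = object()


def resolve_parameters(
    configured: dict[str, Any], ask: Callable[[str], Any]
) -> dict[str, Any]:
    """Keep preset values; call `ask` for each placeholder, in declaration order."""
    resolved = {}
    for name, value in configured.items():
        resolved[name] = ask(name) if value is ASK_EACH_TIME else value
    return resolved


configured = {
    "value": ASK_EACH_TIME,
    "category": "Length",
    "from_unit": "Inches",
    "to_unit": ASK_EACH_TIME,
}

# Scripted answers stand in for a number pad, a list picker, or a spoken reply.
answers = iter(["12", "Centimeters"])
asked = []


def scripted_ask(name: str) -> str:
    asked.append(name)
    return next(answers)


resolved = resolve_parameters(configured, scripted_ask)
```

The same loop works whether `ask` shows a number pad in the Shortcuts app or speaks a question through Siri, which is the property that matters for the conversational features discussed later.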

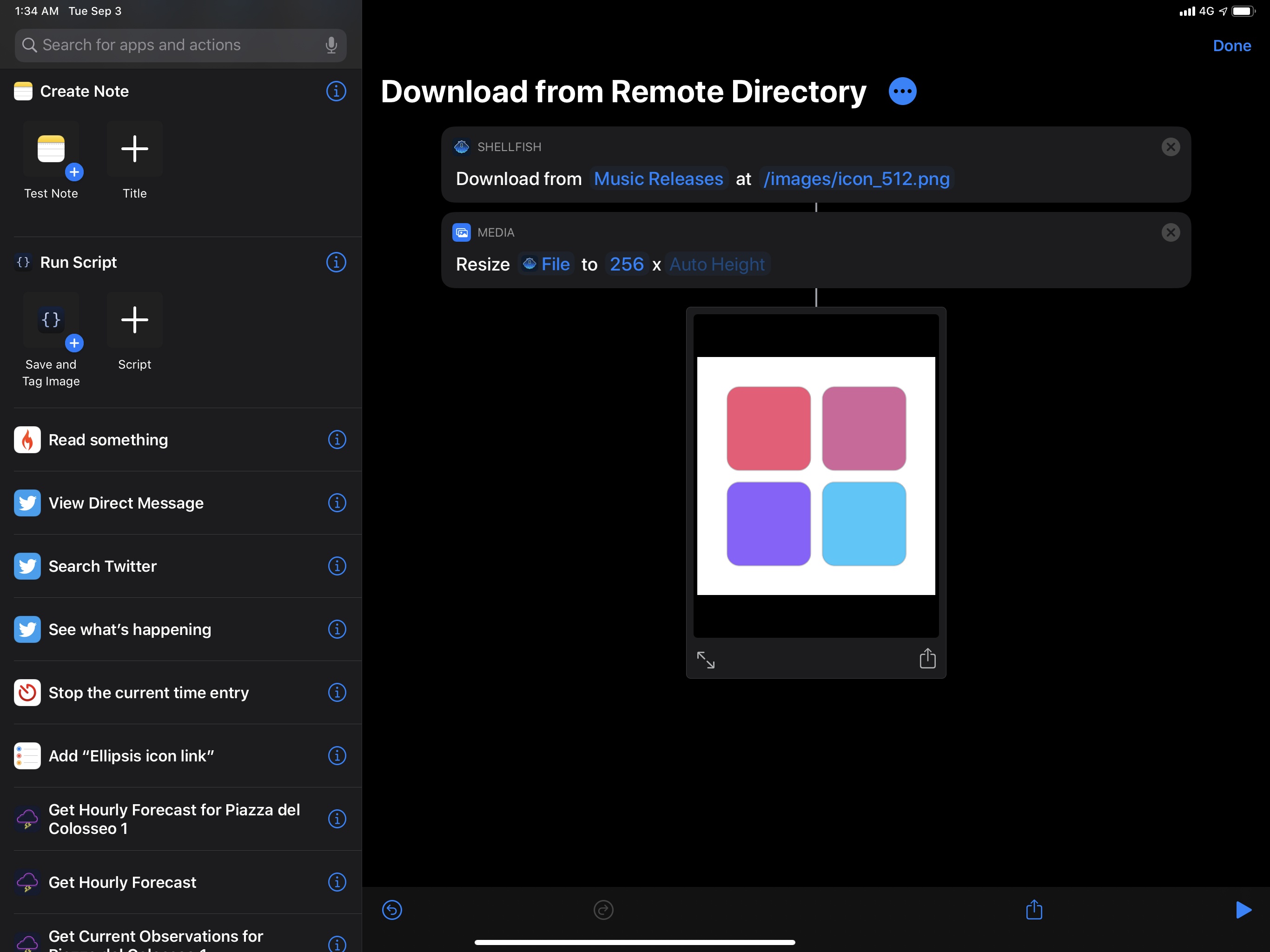

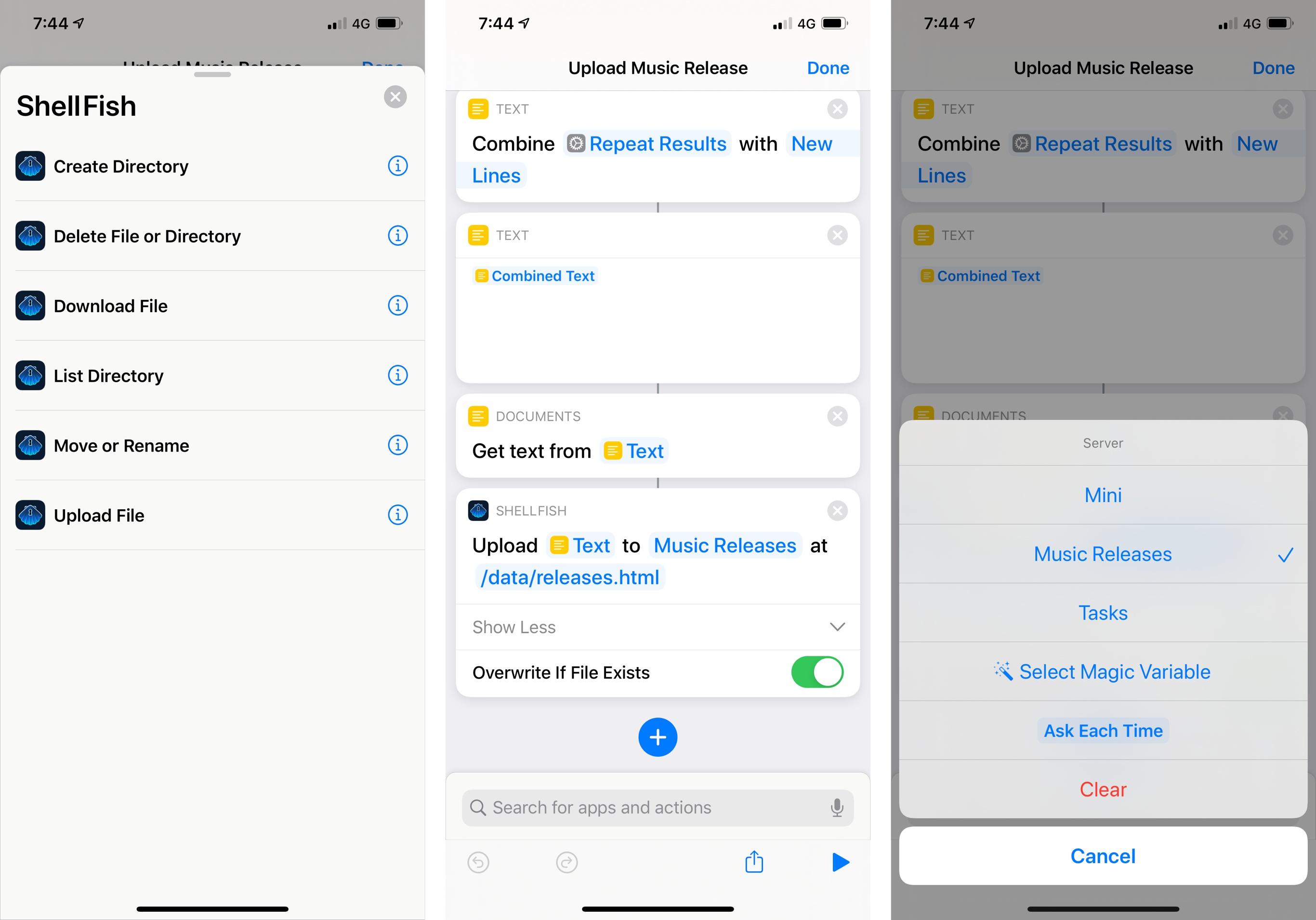

Secure ShellFish, Anders Borum’s excellent SFTP client that integrates with Files, is also adopting parameters with actions to upload files to a server, list the contents of a directory on a server, download files, and more. The ‘Upload File’ shortcut, for instance, lets you pass a file generated from Shortcuts as a parameter, supports picking one of your existing servers with a list, and has a switch to overwrite a file on the server if it already exists.

Secure ShellFish’s shortcuts in iOS 13. Thanks to parameters, the app can now upload any magic variable as a file to a remote server.

I’ve long wished Apple would add native FTP actions to Shortcuts; with the parameter-based actions provided by Secure ShellFish, I can stop waiting for Apple because Borum’s app does exactly what I need and runs inside Shortcuts, where it can be integrated with other apps or Apple’s own actions.

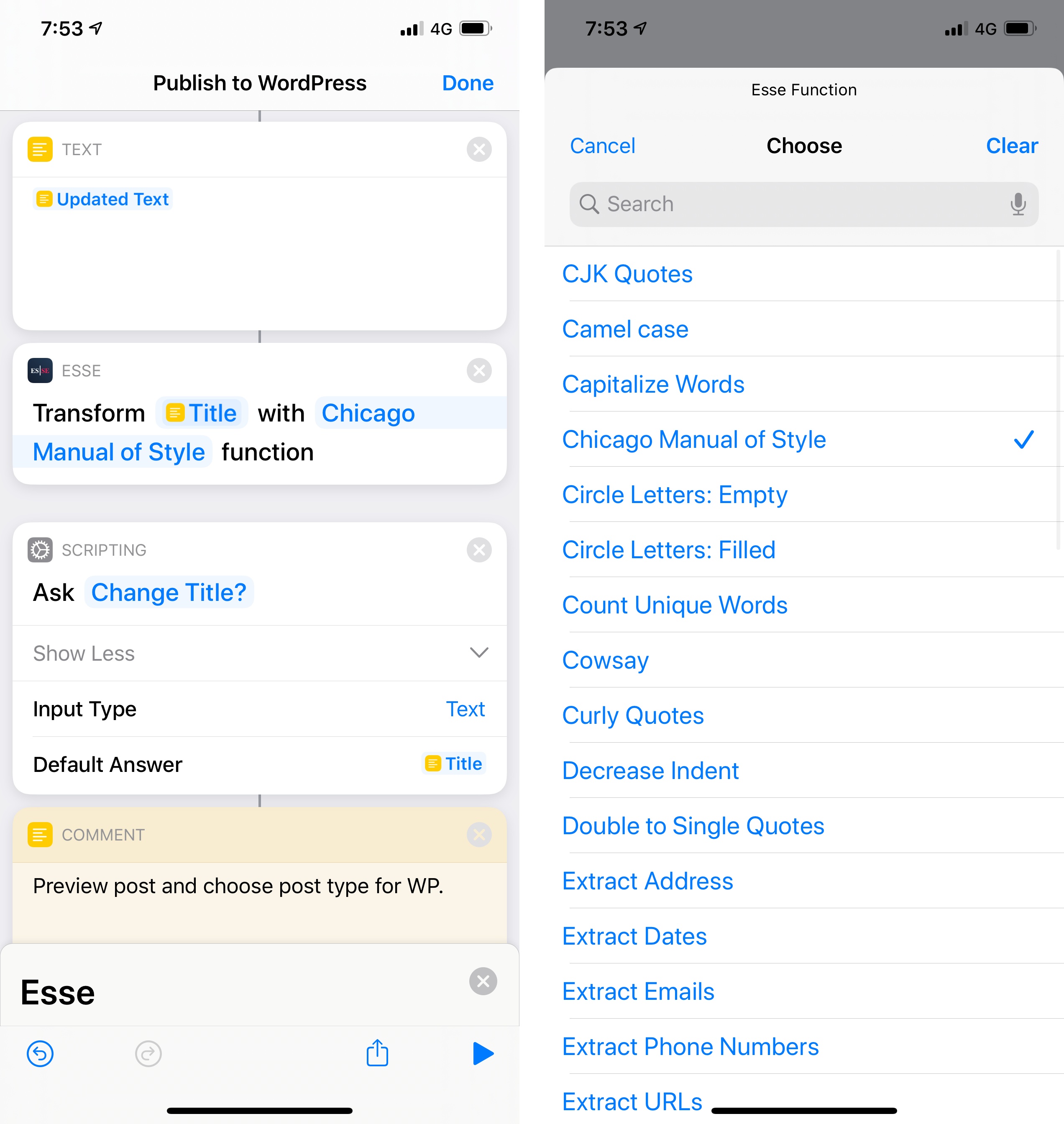

In case it’s not clear yet, parameter-based actions are Apple’s Trojan horse to bring more native functionalities into Shortcuts through third-party apps. Another great example of this is Esse, a utility to transform text into different formats. In iOS 12, Esse forced you to set up individual Siri shortcuts for each text transformation type, which was cumbersome and annoying, and it could only read text previously copied to the system clipboard.

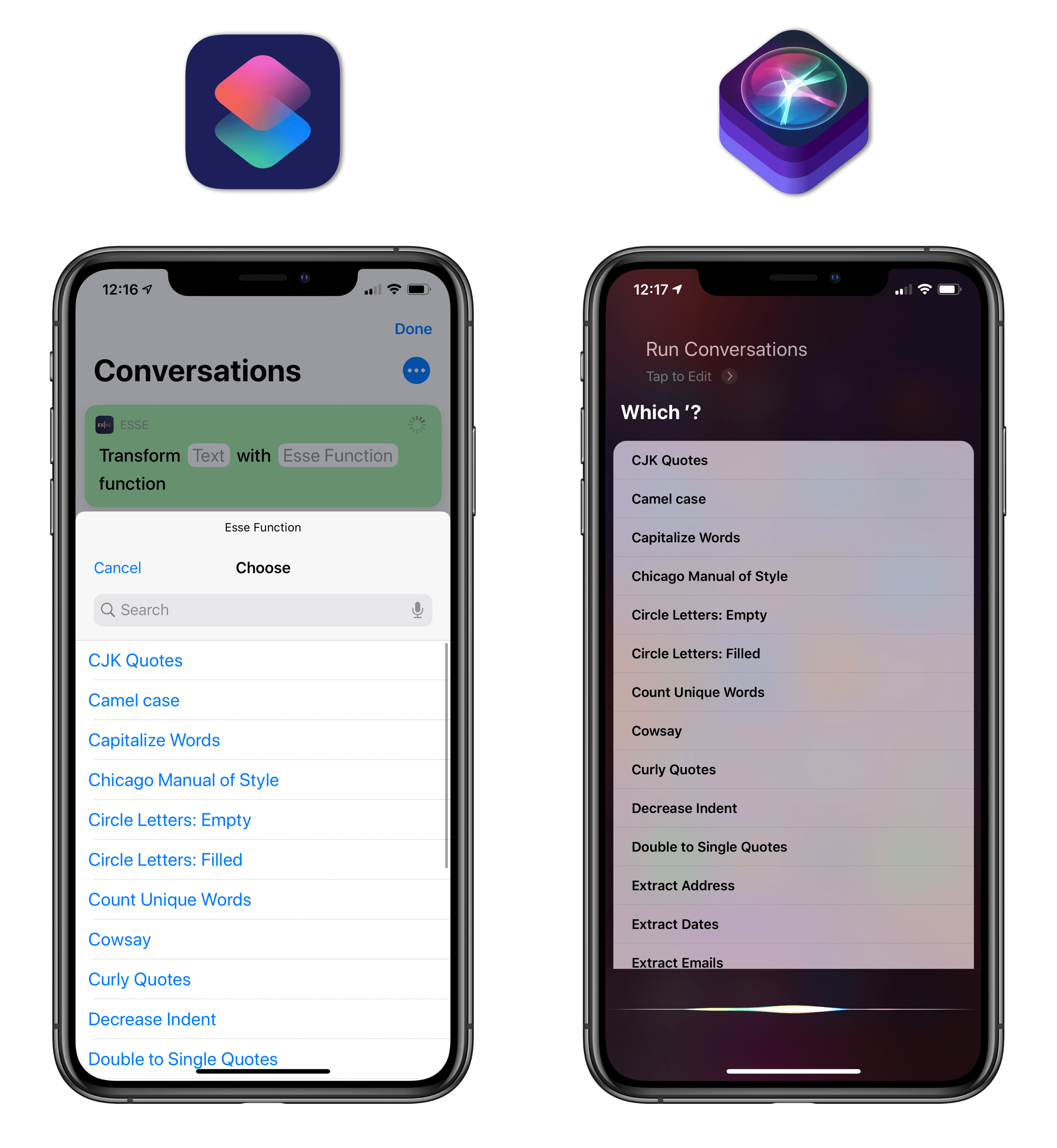

The Esse shortcut we use to capitalize headlines for MacStories (left) and Esse’s list of supported text functions.

In iOS 13, the app now offers a single ‘Transform Text’ shortcut with parameters to pick a transformation type and pass a text string to transform. As a result, Esse’s action is now better than Shortcuts’ own ‘Change Case’ one: it’s just as integrated with other variables in a shortcut, it returns a plain text variable as a result, but it supports more transformation types than Shortcuts. At MacStories, for instance, we can now use Esse’s shortcuts to capitalize titles for our posts using the Chicago Manual of Style guidelines, which aren’t supported by Apple.

Action Outputs

The integration between Shortcuts and third-party apps via parameters isn’t a one-way communication channel: in addition to passing input to apps via parameters, Shortcuts can receive results from apps in a variety of formats. Again, I have some practical examples to share that should give you an idea of what’s possible.

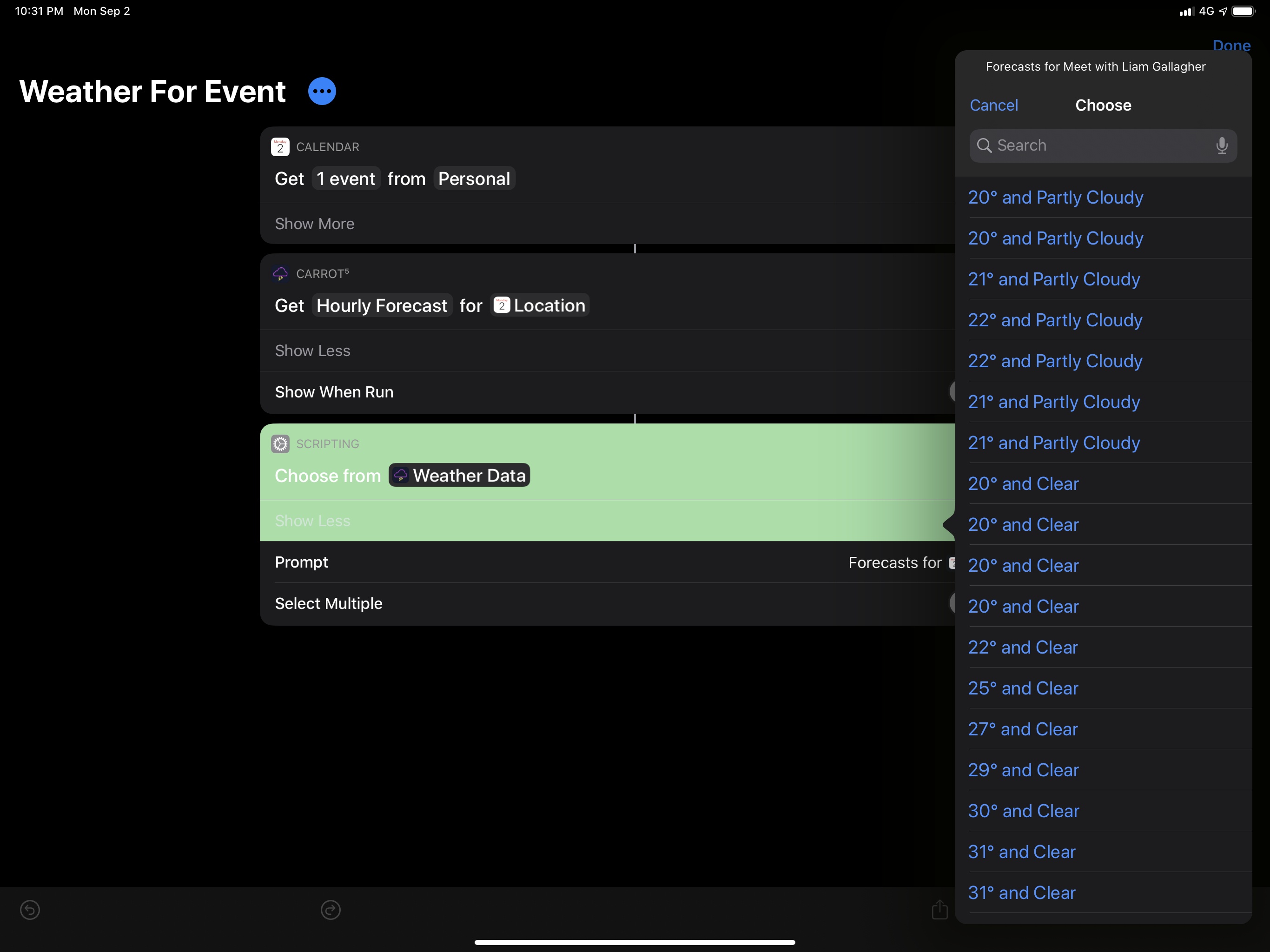

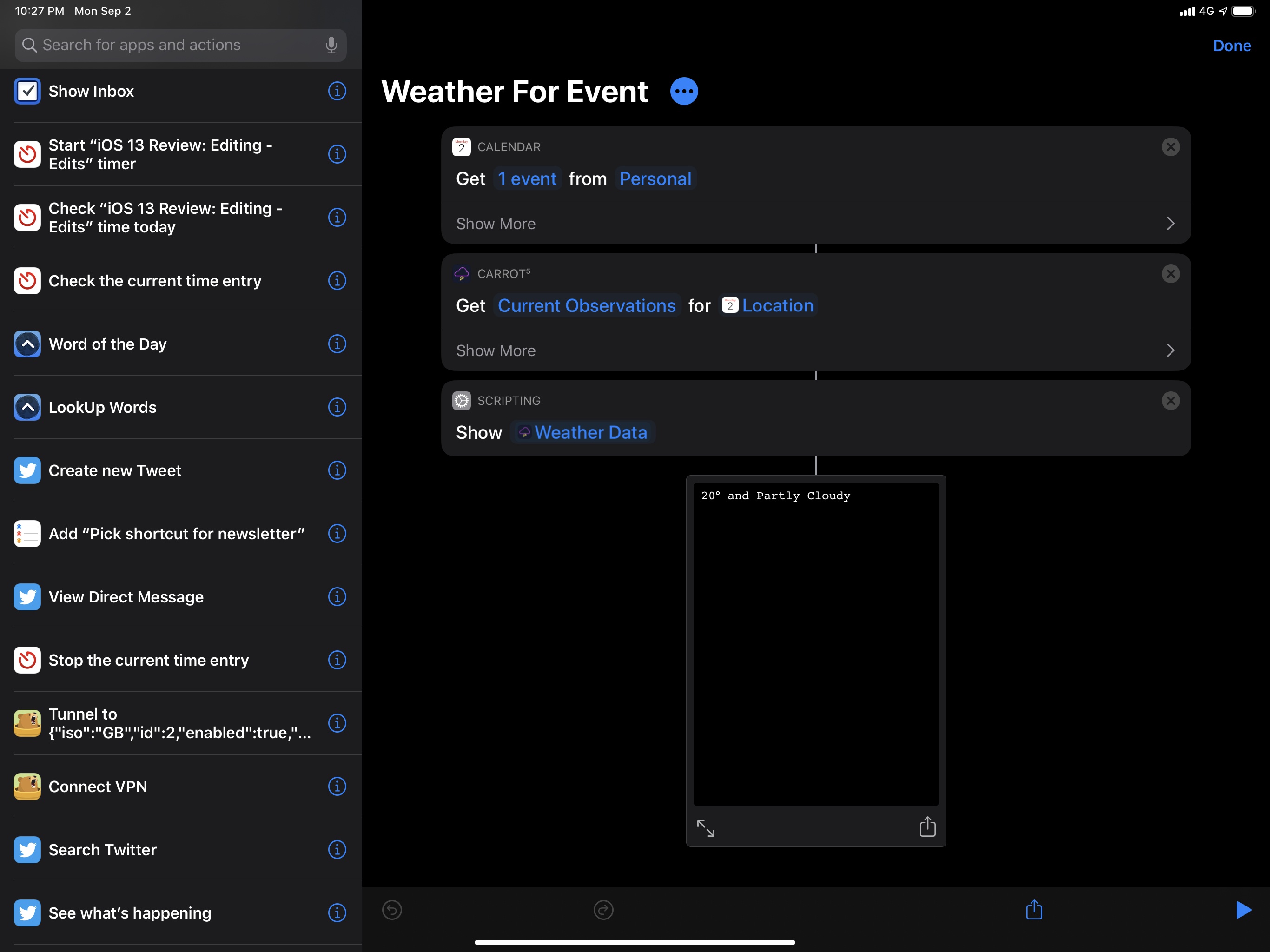

CARROT Weather has been updated for iOS 13 with a ‘Get Weather Report’ shortcut that lets you query the app’s forecast system to return current observations, hourly forecasts, or a daily forecast to the Shortcuts app – all without having to open CARROT or pass clipboard data via Siri shortcuts. By default, the shortcut uses your current location to check the weather, but you can also type an address in the ‘Choose Location’ parameter window or, even better, hook up the location parameter with a location variable returned by another action; you can, for example, get the address of a calendar event and pass that as a location parameter to the CARROT Weather shortcut.

This is all it takes to get the weather forecast from CARROT Weather for an upcoming event in iOS 13.

When the CARROT shortcut runs with the ‘Current Observations’ parameter set, it returns current weather conditions as a plain text string. Select a forecast mode, however, and the shortcut will return a list of results, which is compatible with Shortcuts’ ‘Choose from List’ action to present multiple items at once.

Thanks to the Content Graph engine, outputs returned by third-party actions can often be previewed and processed in Shortcuts with no further conversion necessary. Secure ShellFish, for example, has a shortcut to list the contents of a directory on a remote server: the result is a collection of file paths that you can swipe through using Shortcuts’ Quick Look, or which you can combine with Apple’s ‘Combine Text’ action. Secure ShellFish can also download files from a server: in that case, the result will be a ‘File’ variable, which is natively supported by Shortcuts and can be previewed with Quick Look, passed to the share sheet, or sent elsewhere.

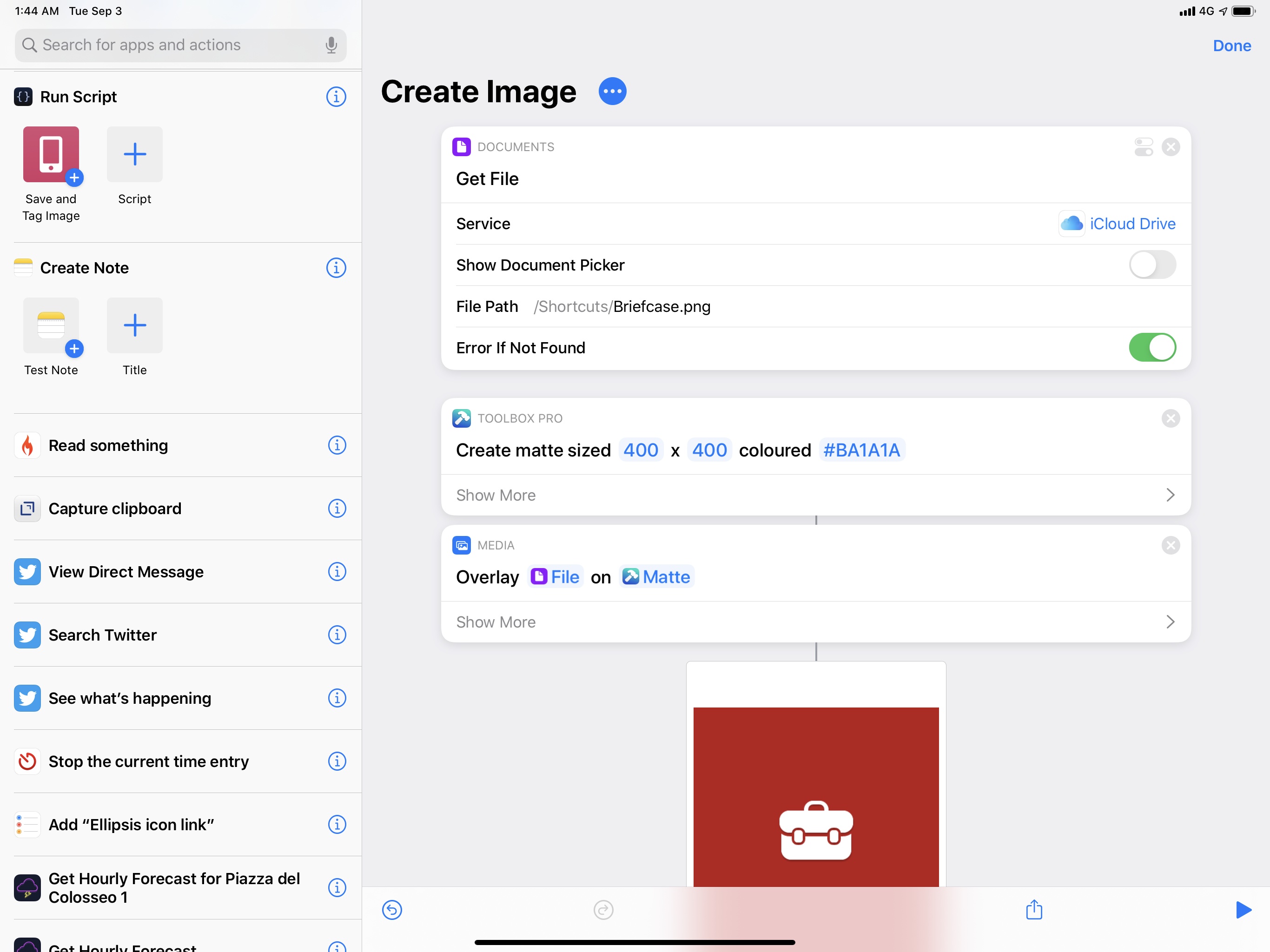

Toolbox Pro, a utility (currently in beta) that aims to complement Shortcuts with additional features through native shortcuts, comes with an action that lets you create images programmatically and use them in other actions. For instance, here’s an image (the red square) that was created inside Shortcuts with a third-party action, on which I overlaid an icon fetched from iCloud Drive:

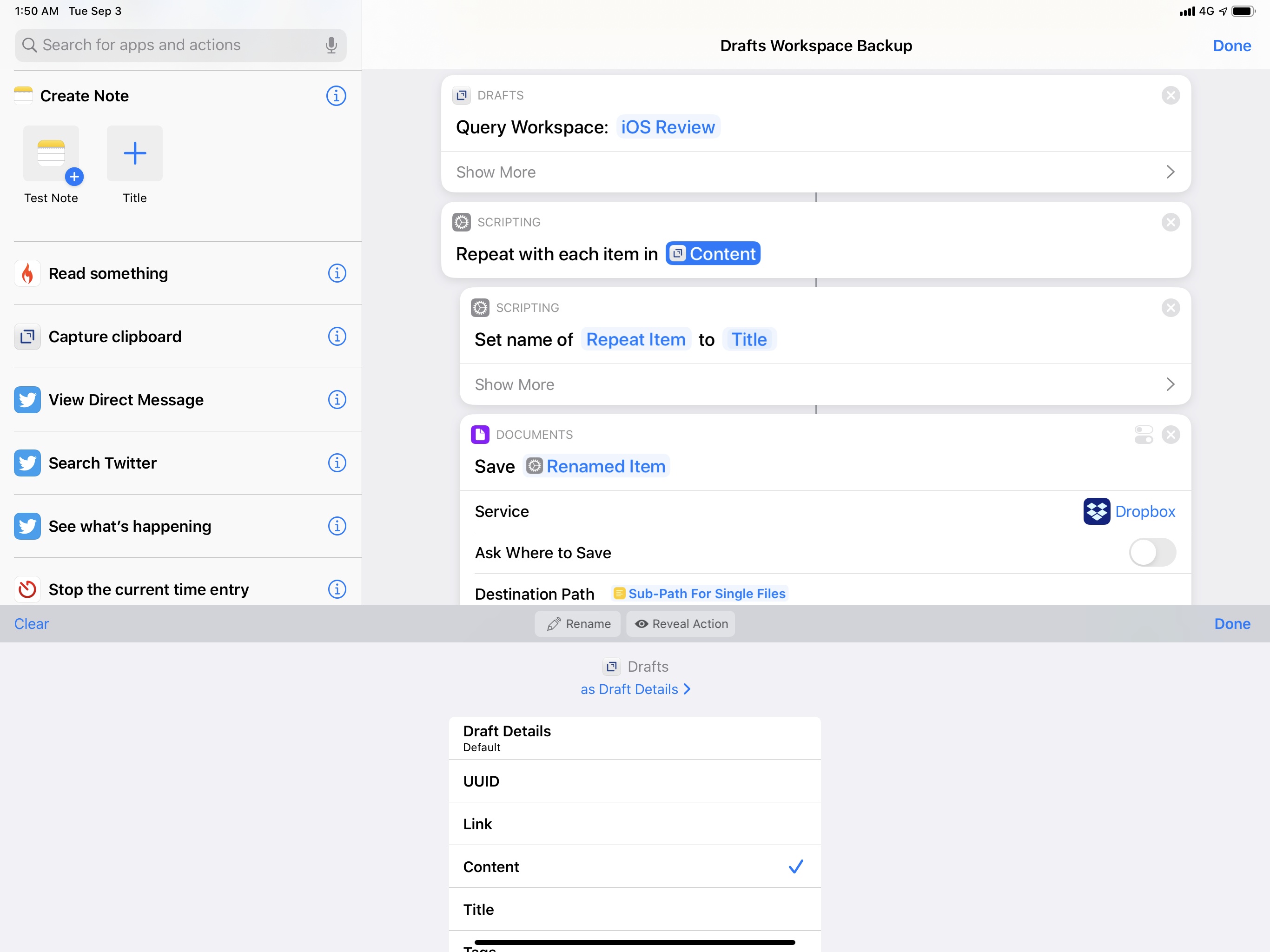

Shortcuts’ magic variable functionality comes full circle in iOS 13 with the ability for third-party actions to return their own custom magic variables for a shortcut to use. Take Greg Pierce’s Drafts as an example: in iOS 13, Drafts offers a shortcut to query the contents of an entire workspace and get all the notes contained inside it. The object returned by this action is a custom magic variable called ‘Drafts Details’, which can be expanded to specify which part of a draft you want to parse, such as its title, body text, creation date, and more. I’ve been using this action to compile my ‘iOS Review’ workspace to a single .md text file that I backed up to multiple locations earlier this year; I’ve never had a better backup system for my articles. I’d like all text editors to support similar shortcuts now.
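For readers who think in code, a custom magic variable behaves a lot like a typed record whose fields can be read individually. The Python sketch below models that under my own assumptions: the `DraftDetails` class, its field names, and the sample drafts are invented, and the real Drafts action may expose its data differently.

```python
from dataclasses import dataclass
from datetime import date


# Hypothetical record mirroring the properties mentioned above.
@dataclass(frozen=True)
class DraftDetails:
    title: str
    body: str
    created: date


def compile_workspace(drafts: list[DraftDetails]) -> str:
    """Join every draft into one Markdown document, oldest first."""
    ordered = sorted(drafts, key=lambda d: d.created)
    sections = [f"# {d.title}\n\n{d.body}" for d in ordered]
    return "\n\n".join(sections) + "\n"


drafts = [
    DraftDetails("Shortcuts", "Parameters and Siri.", date(2019, 8, 2)),
    DraftDetails("Dark Mode", "System-wide appearance.", date(2019, 7, 15)),
]
markdown = compile_workspace(drafts)
```

In Shortcuts, the equivalent is a ‘Repeat with Each’ loop that reads the title and body of each item and appends them to a text variable before saving a file.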

The freedom afforded by the ability to parse application data as custom objects in Shortcuts is unlike anything we’ve seen before in iOS automation: we have reached the point where, in just a few taps, we can request secure access to an app’s data, program actions in a visual environment, and work with rich responses that can be connected to other apps’ actions. By definition, this is an application programming interface, but it’s exposed without writing a single line of code, and it’s fully integrated with Siri on multiple Apple devices.

Which brings me to the next major feature unlocked by parameters…

Conversational Shortcuts and Siri Interactions

Conversational shortcuts, originally announced by Apple at WWDC, are among the features that have been postponed to iOS 13.1. I’m including them in my coverage today because they help us understand Apple’s strategy with dynamic parameters and third-party shortcuts, but they’re not part of iOS 13.0, and you’ll have to wait a while longer to take advantage of them.

A conversational shortcut is a shortcut you can interact with while using Siri and your voice. As I mentioned above, one of the drawbacks of iOS 12’s shortcuts framework was the lack of any kind of user interaction with shortcuts running inside Siri: if a shortcut required you to enter some text or pick an option from a list, it would launch the Shortcuts app instead of completing the task within Siri.

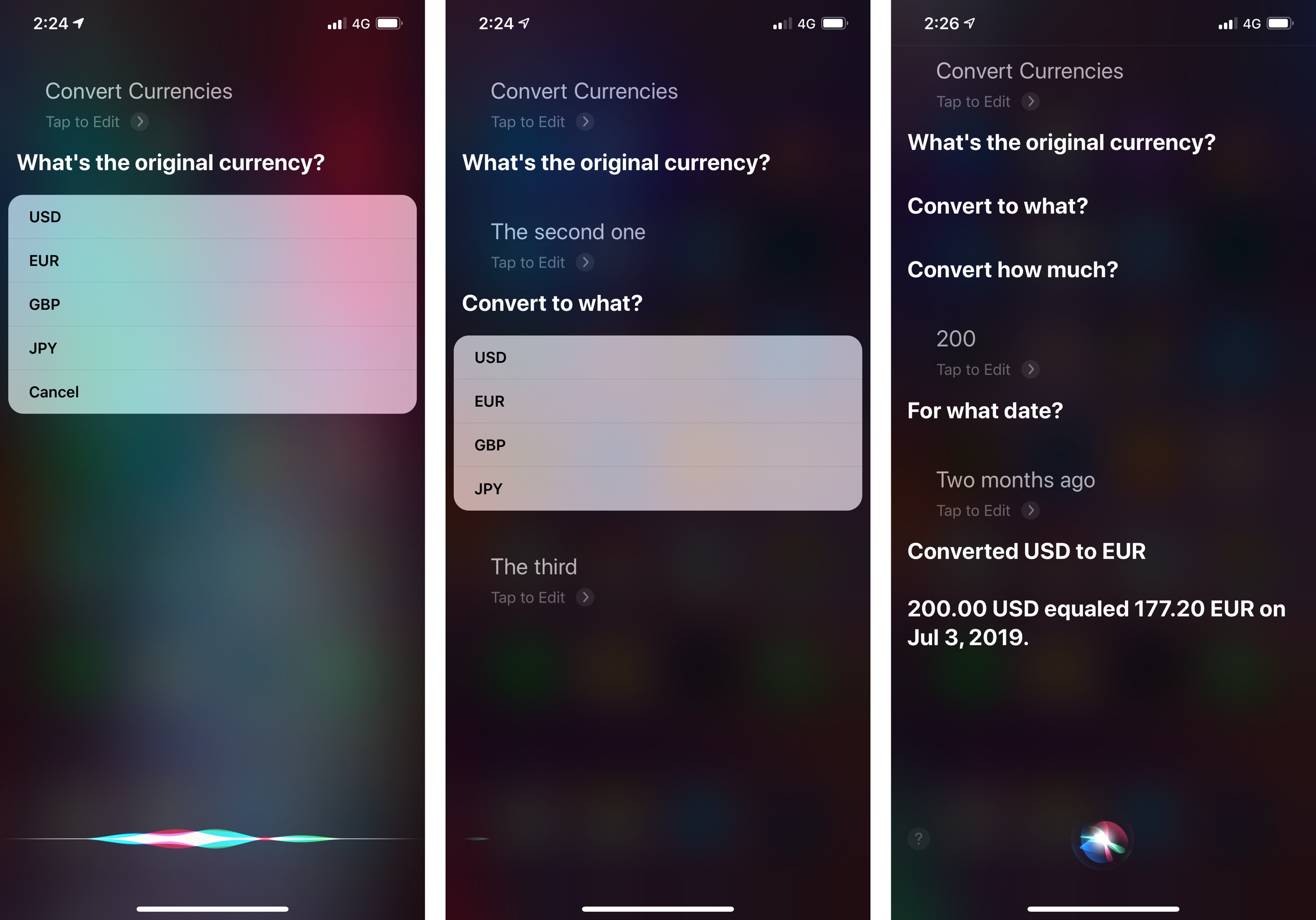

All of this is changing in iOS 13.1: if Siri hits a parameter that requires an interaction to be resolved, it’ll ask for your input before continuing with the execution of the shortcut. At a high level, this typically involves speaking a response or choosing from a list of options; both Shortcuts’ native actions and third-party actions will support conversational mode, which has been designed to allow for interactions on any Siri-capable device that can run shortcuts, including HomePod and Apple Watch.

On Apple’s side, shortcuts have been made conversational by bridging actions that require user interaction to Siri’s voice environment. When a custom shortcut running inside Siri encounters one of the following actions…

- Choose from List

- Choose from Menu

- Ask for Input

- Dictate Text

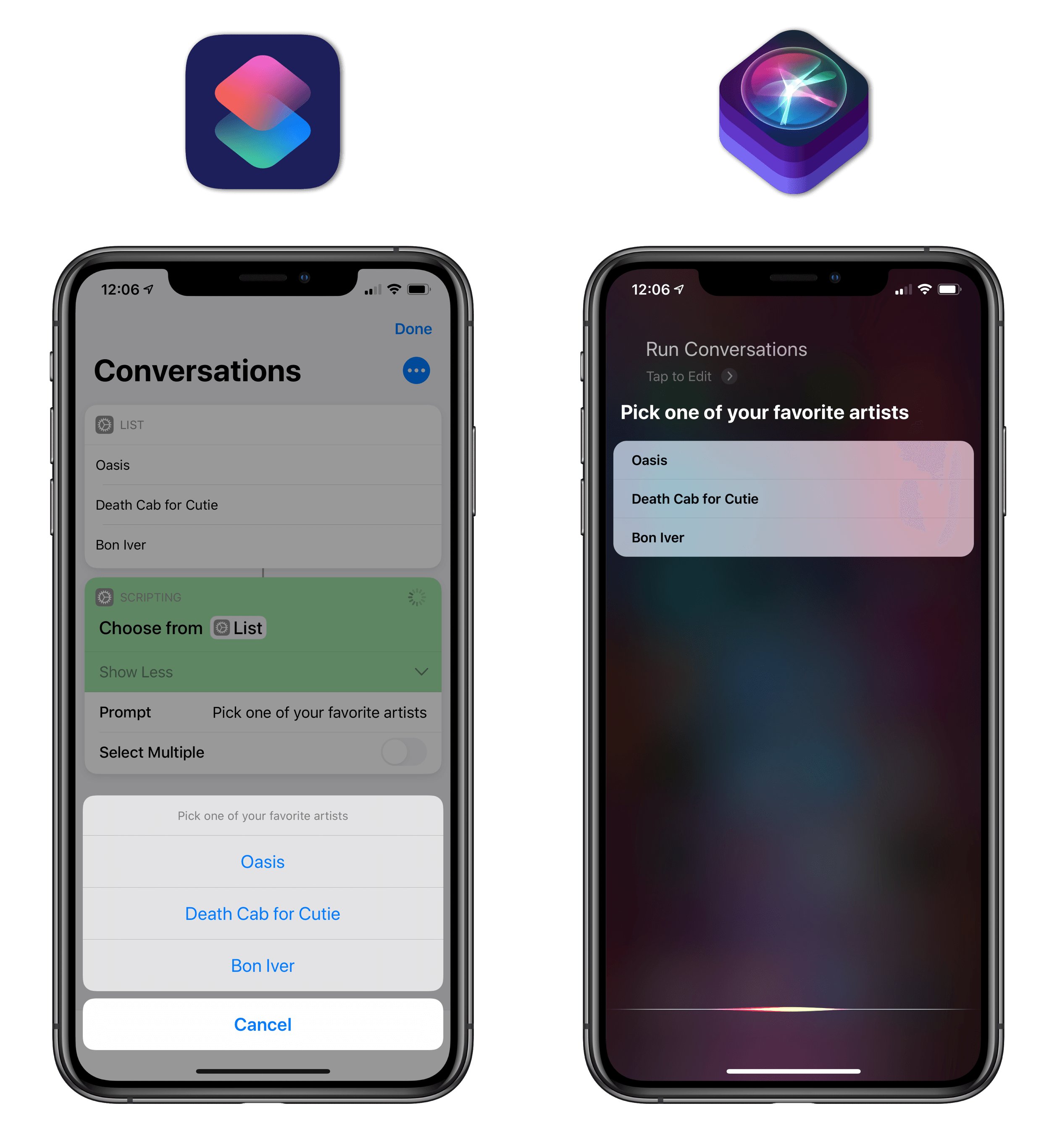

…the actions will automatically transform into Siri interactions thanks to conversational mode. Here’s what a ‘Choose from List’ action looks like when running within Siri:

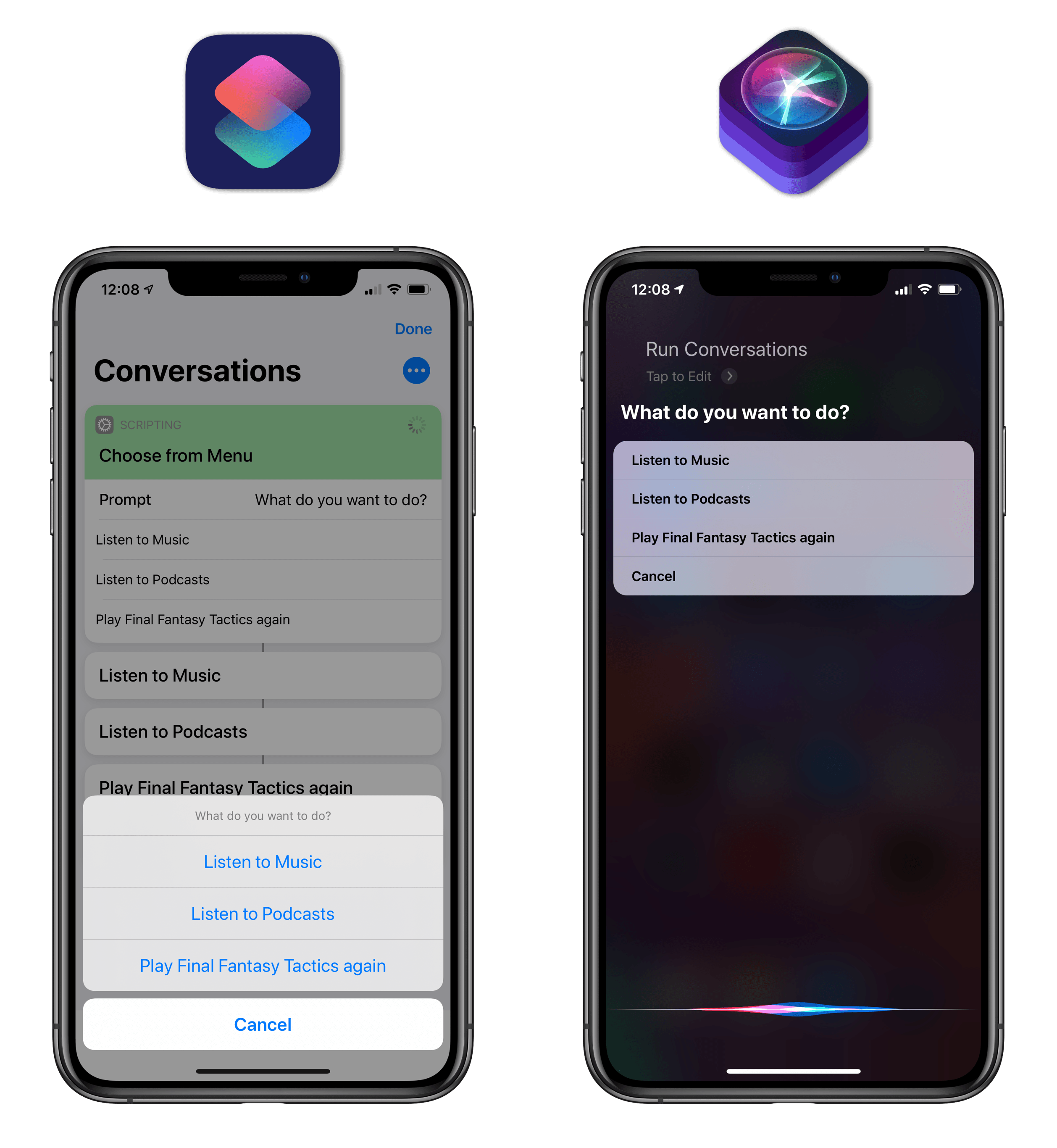

Here’s a menu:

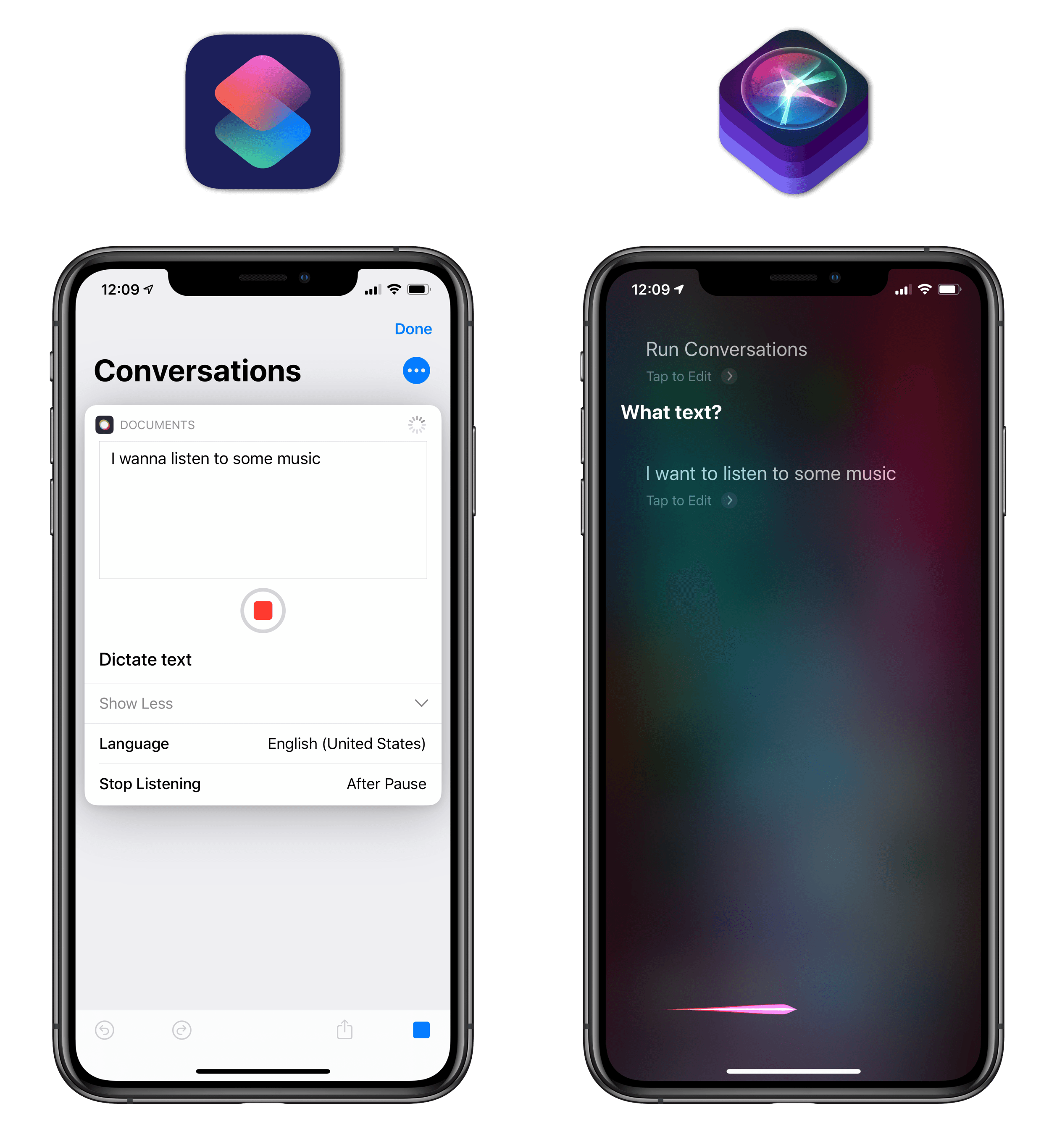

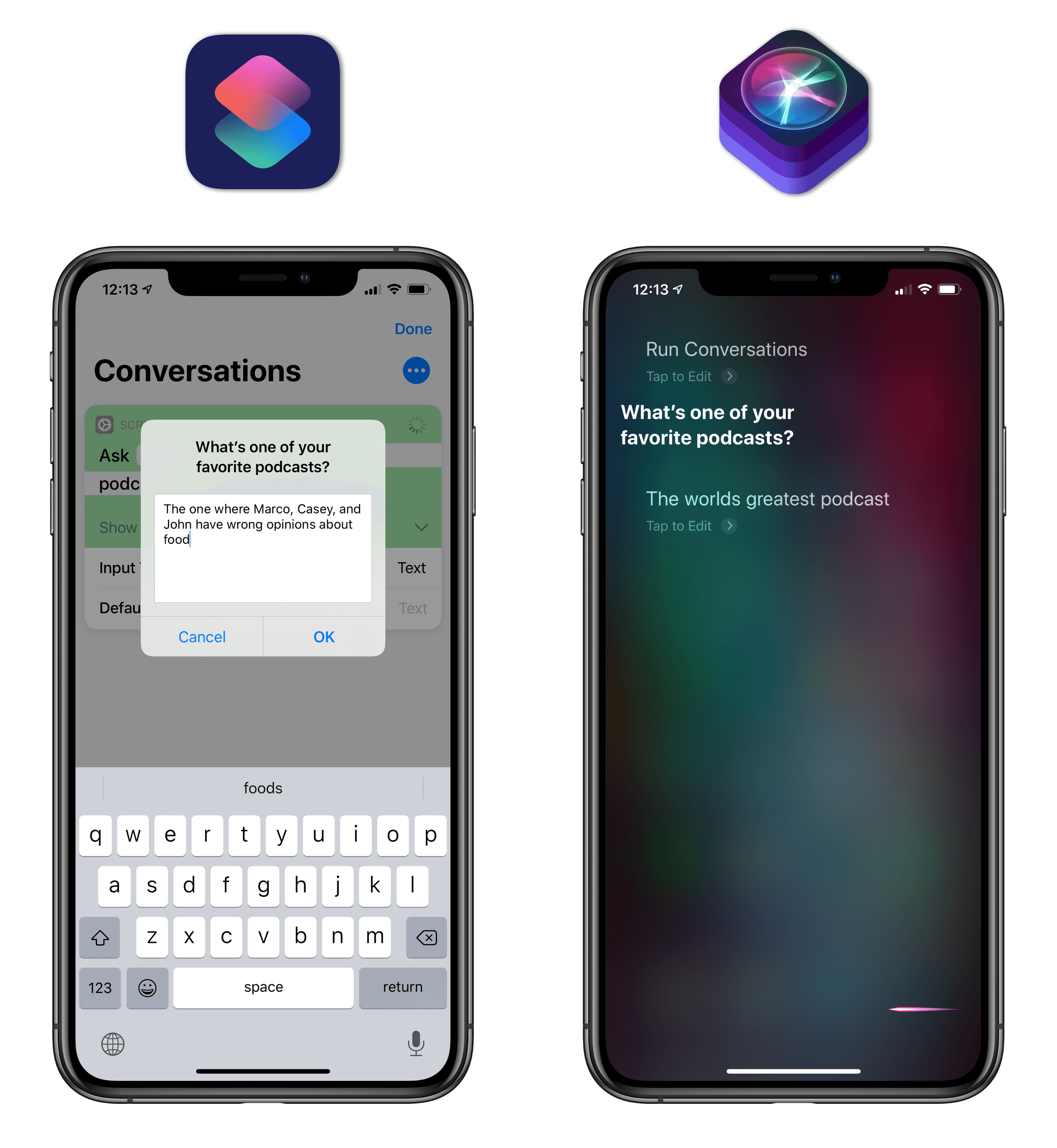

And here are requests for text input:

While this kind of Siri integration with certain actions is precisely what I imagined last year, what I didn’t foresee at the time was how Apple could leverage Siri’s linguistic abilities to make shortcuts truly conversational and well-suited for human interactions.

Actions aren’t just bridged to Siri so you can speak the name of an option or tap the screen to select a menu item: they fully support natural language input, allowing you to interact with Siri without following a specific script. For instance, when a list of multiple options comes up, you don’t have to say the exact name of an item to choose it: you can just say things like “the first one” or “pick the third option”, and Siri will know what you mean. All of Siri’s natural language capabilities for list-picking, punctuation, and text input can be tapped into when interacting with a conversational shortcut. As a result, not only are your shortcuts more useful because you can use them anywhere, but they’re optimized for voice interactions as well, so you can have a conversation with a shortcut as you normally would with Siri.
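Siri’s language understanding is obviously far more sophisticated than anything I can show here, but a toy version of list-picking helps illustrate the difference between matching an exact name and matching a position. The Python sketch below is purely hypothetical: the `ORDINALS` table and the `pick` function are my own simplification.

```python
import re

# Toy vocabulary; a real assistant understands far more than this.
ORDINALS = {"first": 0, "second": 1, "third": 2, "fourth": 3, "fifth": 4, "last": -1}


def pick(utterance: str, options: list[str]):
    """Return the option the utterance refers to, or None if it is unclear."""
    text = utterance.casefold().strip()
    # 1. The option's name is spoken somewhere in the utterance.
    for option in options:
        if option.casefold() in text:
            return option
    # 2. The utterance refers to a position in the list.
    for word in re.findall(r"[a-z]+", text):
        if word in ORDINALS:
            index = ORDINALS[word]
            if -len(options) <= index < len(options):
                return options[index]
    return None


options = ["Title Case", "Lowercase", "Uppercase"]
by_position = pick("pick the third option", options)
by_name = pick("lowercase, please", options)
```

When neither strategy finds a match, the function returns `None`, which is the point where an assistant would ask the question again instead of guessing.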

An example of a conversational shortcut in Siri. You can go back and forth with the assistant using natural language input.

Conversational shortcuts aren’t limited to a handful of Apple actions: all third-party actions based on parameters can be used in conversational mode in Siri with iOS 13.1. When actions with parameters are invoked within Siri, they behave as they would in UI form in the Shortcuts app, with the addition of custom voice responses. If a parameter was left empty or has an ‘Ask Each Time’ value, Siri will bring up a list of dynamic options to choose from, which you can then confirm using natural language input. If an action has multiple parameters that require user input, Siri will move through them sequentially, requesting that you fill them out one by one at runtime before executing a shortcut and returning a visual or vocal response (or both).

Let’s go back to the Esse shortcut I mentioned above. If both the ‘Text’ and ‘Function’ parameters are left empty and the shortcut is invoked in Siri, it will not error out, nor will it launch the Shortcuts app: in iOS 13.1, Siri will first ask you to pick a transformation to run, then it’ll let you dictate some text to transform.

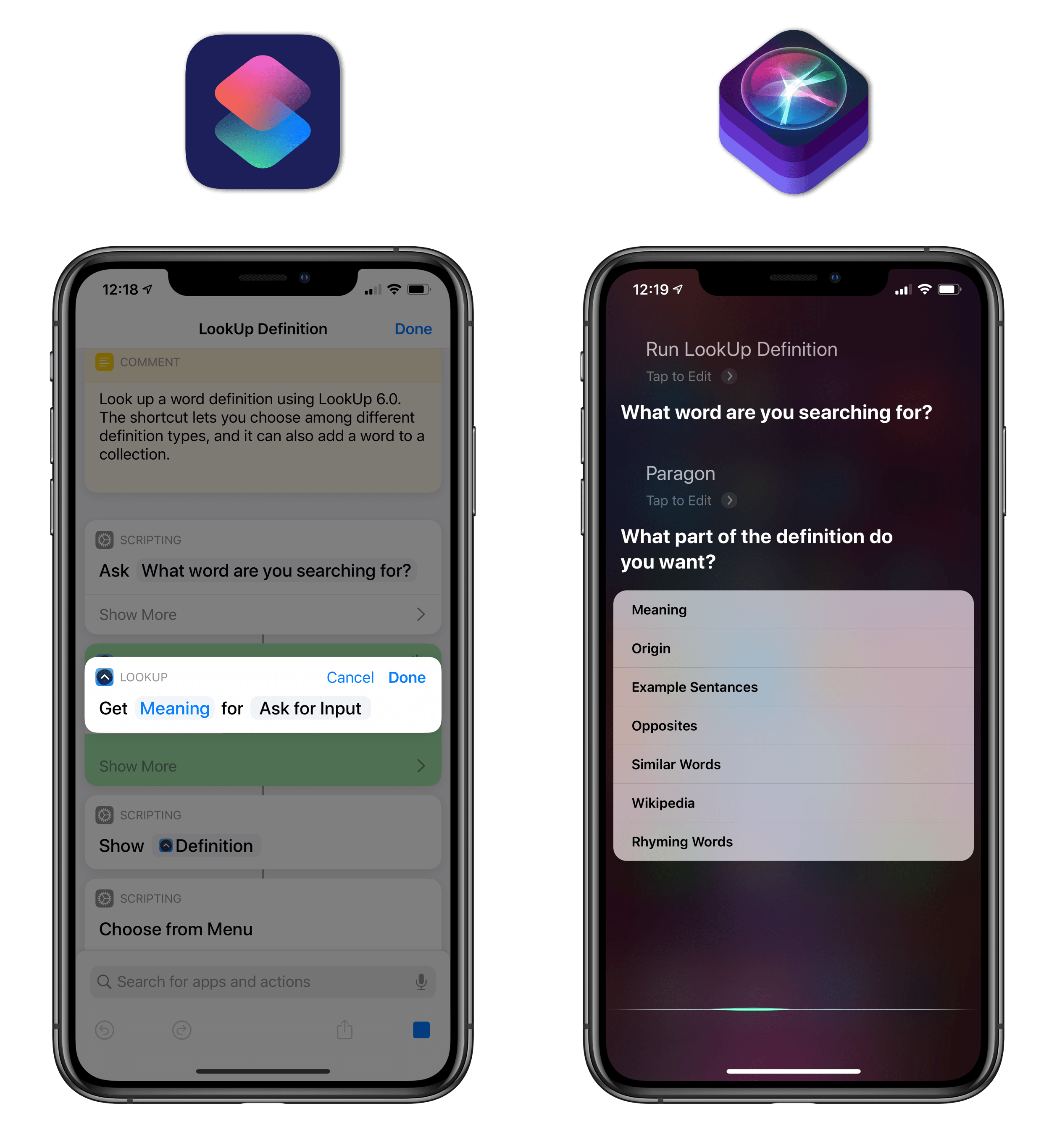

LookUp, my favorite dictionary app, is getting a major update for iOS 13 with new shortcuts based on parameters. One of these actions lets you look up the meaning of a word: if the definition parameter is left empty and the shortcut is run from Siri, you’ll be able to choose a definition type from the assistant, which will then return a printed response along with a visual snippet powered by the LookUp interface.

As you can see from the screenshots above, there’s some work developers need to do to optimize their dynamic shortcuts for iOS 13. When Siri reaches a parameter that requires user input, it can ask you a descriptive question, but that question (“What word are you searching for?” in the example above) has to be programmed by the app’s developer beforehand. Furthermore, in iOS 13 developers can provide synonyms, along with pronunciation hints, so that Siri understands less verbose options, such as “veggie” instead of “vegetarian”; these dictionaries of synonyms also have to be created by developers first, as it’s not something that the system does for them.
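The developer’s share of the work can be pictured as two pieces of data attached to each parameter: the question to ask and the alternative words that map to each option. The following Python sketch models that idea only; `Option`, `PromptedParameter`, and the diet example are hypothetical and do not reflect how apps actually declare this for Siri.

```python
from dataclasses import dataclass, field


@dataclass
class Option:
    name: str
    synonyms: list[str] = field(default_factory=list)  # supplied by the developer


@dataclass
class PromptedParameter:
    name: str
    question: str           # what the assistant says when the value is missing
    options: list[Option]

    def match(self, spoken: str):
        """Map a spoken reply to an option name, accepting declared synonyms."""
        heard = spoken.casefold().strip()
        for option in self.options:
            accepted = [option.name, *option.synonyms]
            if heard in (word.casefold() for word in accepted):
                return option.name
        return None


diet = PromptedParameter(
    "diet",
    "Which diet should I filter by?",
    [Option("vegetarian", ["veggie"]), Option("vegan")],
)
matched = diet.match("Veggie")
```

Anything the developer did not list, in this model, simply fails to match, which mirrors the point above: the system does not invent these synonyms on the app’s behalf.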

It’s not an exaggeration to say that conversational mode gives Shortcuts an entirely new dimension, making the app – and thus Siri – drastically more convenient on a daily basis.

While Shortcuts’ integration with Siri in iOS 12 was useful, it was limited to fixed actions without interactivity, which hindered Siri’s ability to perform personalized tasks on demand throughout the day. iOS 13.1 and conversational mode fulfill Apple’s vision for Shortcuts and Siri: every action, no matter whether it’s made by Apple or not, can now run in a voice-only mode on any compatible device, providing you with new ways to use your favorite apps and run your custom shortcuts wherever you are, whenever you want.

Conversational mode sums up Apple’s advantage over its competition: in a world where digital assistants are made more versatile by pushing your personal data through web-based integrations and cloud services, Apple is extending Shortcuts and Siri using a clear privacy model based on the App Store, on-device requests, and native app actions. If developers adopt actions with dynamic parameters in their apps, they won’t just offer better automation features for Shortcuts: they will effectively become new features for Siri – a unique blend of automation and services that only Apple can deliver to users today.