Photos and Camera

A world-class portable camera is one of the modern definitions of the iPhone. Among many factors, people buy an iPhone because it takes great pictures. And Apple’s relentless camera innovation isn’t showing any signs of slowing down this year.

But the importance of the iPhone’s camera goes deeper than technical prowess. The Camera and its related app, Photos, create memories. Notes, Reminders, Maps, and Messages are essential iOS apps; only the Camera and Photos have a lasting, deeply emotional impact on our lives that goes beyond utility. They’re personal. They’re us.

iOS 10 strives to improve photography in two parallel directions: the capturing phase and the discovery of memories – the technical and the emotional. Each relies on the other; together, they show us a glimpse of where Apple’s hardware and services may be heading next.

Wide Color

Let’s start with the technical bits.

The Camera Capture pipeline has been updated to support wide-gamut color in iOS 10. All iOS devices can take pictures in the sRGB color space; the 9.7-inch iPad Pro and the upcoming iPhone 7 hardware also support capturing photos in wide-gamut color formats.

When viewed on displays enabled for the P3 color space, pictures taken in wide color will be beautifully displayed with richer colors that take advantage of a wider palette of options. That will result in more accurate and deeper color reproduction that wasn’t possible on the iPhone until iOS 10 (the 9.7-inch iPad Pro was the only device with a wide color-enabled display on iOS 9).

There are some noteworthy details in how Apple is rolling out wide color across its iOS product line, using photography as an obvious delivery method.

Wide color in iOS 10 is used for photography, not video. JPEGs (still images) captured in wide color fall in the P3 color space; Live Photos, despite the presence of an embedded movie file, also support wide color when viewed on the iPad Pro and iPhone 7 (or the Retina 5K iMac).

Apple has been clever in implementing fallback options for photos displayed on older devices outside of the P3 color space. The company’s photo storage service, iCloud Photo Library, has been made color-aware and it can automatically convert pictures to sRGB for devices without wide color viewing support.
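To make the sRGB fallback more concrete, here is a minimal sketch of what converting a single Display P3 pixel to sRGB can look like. It is not Apple's implementation: the function names are hypothetical, the matrix is an approximation rounded to four decimals, and out-of-gamut colors are simply clipped, which is an assumption on my part (a real color management system may use more sophisticated gamut mapping).

```python
# Approximate matrix taking linear Display P3 values to linear sRGB values
# (rounded to four decimals; both spaces share the D65 white point).
P3_TO_SRGB = (
    (1.2249, -0.2247, 0.0000),
    (-0.0420, 1.0419, 0.0000),
    (-0.0197, -0.0786, 1.0979),
)


def decode(value):
    """Undo the sRGB transfer curve (also used by Display P3): encoded -> linear."""
    if value <= 0.04045:
        return value / 12.92
    return ((value + 0.055) / 1.055) ** 2.4


def encode(value):
    """Apply the sRGB transfer curve: linear -> encoded."""
    if value <= 0.0031308:
        return value * 12.92
    return 1.055 * value ** (1 / 2.4) - 0.055


def p3_to_srgb(rgb):
    """Convert one Display P3 pixel (components in 0..1) to sRGB.

    Assumption: colors that sRGB cannot represent are clipped to the
    nearest in-range value per channel.
    """
    linear = [decode(c) for c in rgb]
    mixed = [sum(m * c for m, c in zip(row, linear)) for row in P3_TO_SRGB]
    clipped = [min(1.0, max(0.0, c)) for c in mixed]
    return tuple(encode(c) for c in clipped)
```

In this sketch, neutral colors such as white pass through almost unchanged, while a fully saturated P3 red lands outside sRGB and is clipped to the most saturated red sRGB can show. That lost saturation is the difference a wide color display is able to preserve.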

More interestingly, wide-gamut pictures shared via Mail and iMessage are converted to an Apple Wide Color Sharing Profile by iOS 10. This color profile takes care of displaying the image file in the appropriate color space depending on the device it’s viewed on.

Even as a tentpole feature of the iPhone 7, wide-gamut photography isn’t something most users will care (or know) about. Wide color is relevant in the context of another major change for iOS photographers and developers of photo editing software – native RAW support.

RAW Capture and Editing

Apple used an apt and delicious analogy to describe RAW photo capture at WWDC: it’s like carrying around the ingredients for a cake instead of the fully baked product. Just as two chefs can use the same ingredients to produce wildly different cakes, multiple apps can edit the same RAW data to output different versions of the same photo.

RAW stores unprocessed scene data: it contains more bits because no compression is involved, which leads to larger file sizes and more processing power required to capture and edit RAW. On iOS 10, RAW capture is supported on the iPhone SE, 6s and 6s Plus, 7 and 7 Plus (only when not using the dual camera), and 9.7-inch iPad Pro. It works with the rear camera only, and it’s an API available to third-party apps (Apple’s Camera app doesn’t capture in RAW).

To store RAW buffers, Apple is using the Adobe Digital Negative (DNG) format; among the many proprietary RAW formats used by camera manufacturers, DNG is as close to an open, publicly available standard as it gets.

At a practical level, the upside of RAW capture is the ability to reverse and modify specific values in post-production to improve photos in a way that wouldn’t be possible with processed JPEGs. On iOS 10, RAW APIs allow developers to create apps that can tweak exposure, temperature, noise reduction, and more after having taken a picture, giving professionals more creative control over photo editing.

Things are looking pretty good in terms of performance, too. On iOS devices with 2 GB of RAM or more, the system can edit RAW files up to 120 megapixels; on devices with 1 GB of RAM, or if accessed from an editing extension inside Photos (where memory is more limited), apps can edit RAW files up to 60 megapixels.
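The limits above boil down to a simple rule, sketched below. The function names are hypothetical and this is not an iOS API; the thresholds come from the figures in the previous paragraph, and treating any device with less than 2 GB of RAM like the 1 GB case is my own assumption.

```python
def megapixels(width, height):
    """Pixel count of an image, expressed in megapixels."""
    return width * height / 1_000_000


def max_raw_megapixels(ram_gb, in_photos_extension=False):
    """Largest RAW file (in megapixels) that can be edited.

    Assumption: anything below 2 GB of RAM is treated like the 1 GB case.
    """
    if in_photos_extension or ram_gb < 2:
        return 60
    return 120


def can_edit_raw(width, height, ram_gb, in_photos_extension=False):
    """True if an image of the given dimensions fits within the limit."""
    limit = max_raw_megapixels(ram_gb, in_photos_extension)
    return megapixels(width, height) <= limit
```

As an example, a 4032 × 3024 frame (roughly 12 megapixels) fits comfortably under either limit. An 11648 × 8736 image (about 102 megapixels, dimensions picked purely for illustration) would pass on a 2 GB device but not from an editing extension inside Photos.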

Native RAW support opens up an opportunity for developers to fill a gap on the App Store: desktop-class photo editing and management apps for pros. If adopted by the developer community, native RAW capture and editing could enable workflows that were previously exclusive to the Mac. Imagine shooting RAW with a DSLR, or even an iPhone 7, and then sitting down with an iPad Pro to organize footage, flag pictures, and edit values with finer, deeper controls, while also enjoying the beauty and detail of wide-gamut images (which RAW files inherently are).

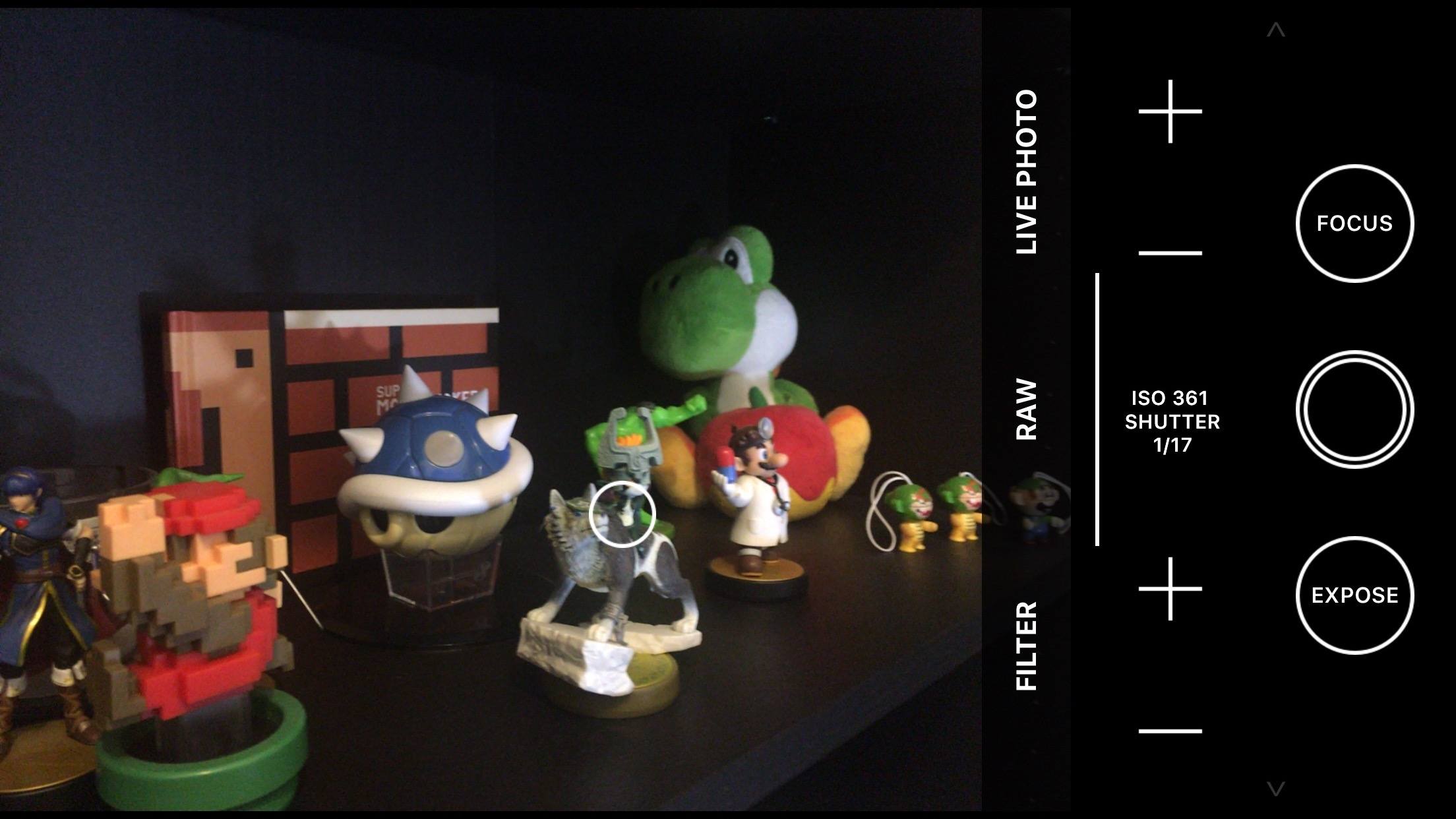

I tested an upcoming update to Obscura, Ben McCarthy’s professional photo app for iOS, with RAW support on iOS 10. RAW can be enabled from the app’s viewfinder; after shooting with Obscura, RAW photos are saved directly into iOS’ Photos app.

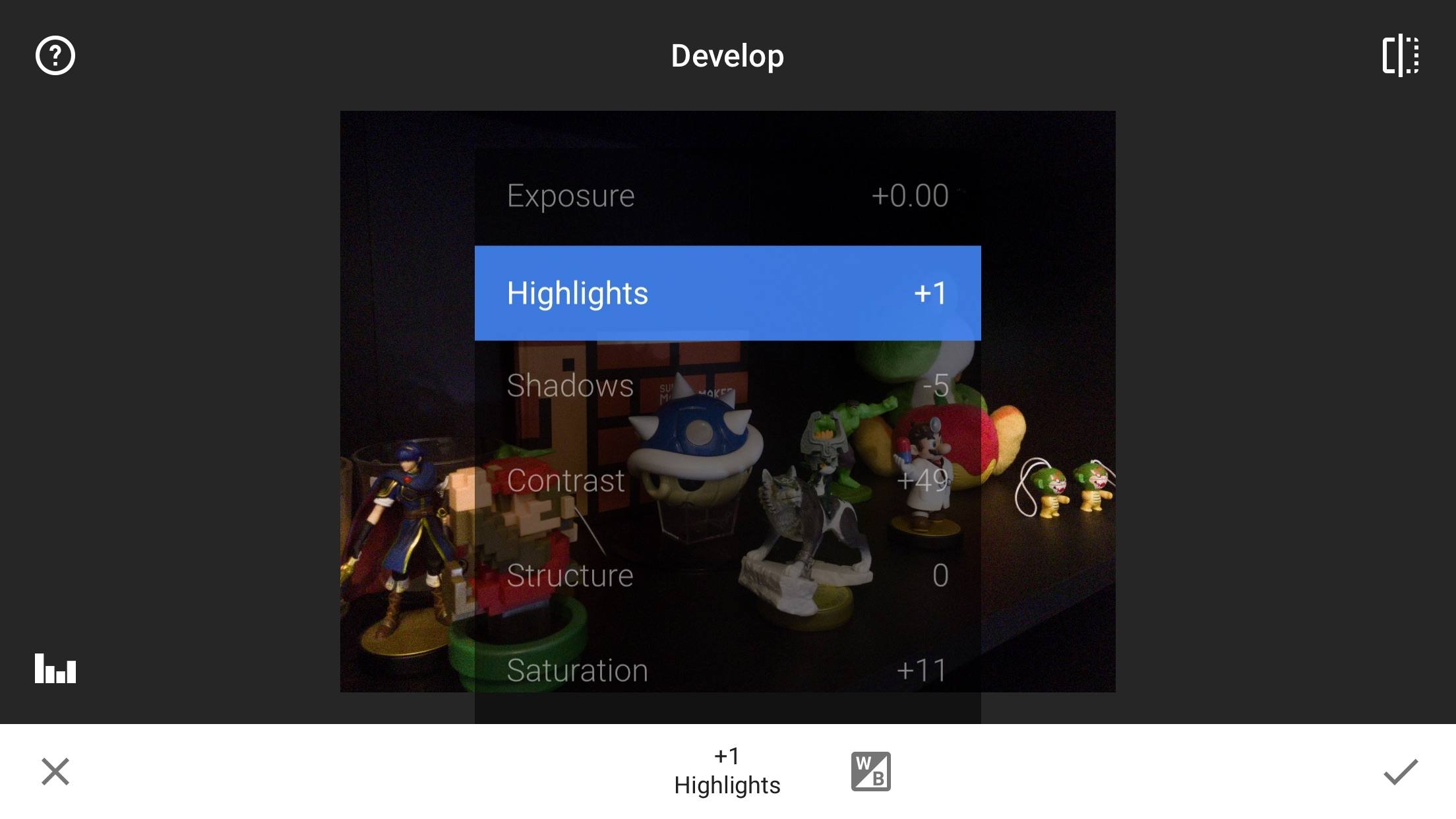

Google’s Snapseed photo editor imported RAW files shot in Obscura without issues, and I was able to apply edits with Snapseed’s RAW Develop tool, saving changes back to Photos. I’m not a professional photographer, but I was still impressed by the RAW workflows now possible with third-party apps and iOS 10.

On the other hand, while Apple has improved developer tools for RAW capture and editing, hurdles remain in terms of photo management. iCloud Photo Library, even at its highest tier, only offers 2 TB of storage; professional photographers have libraries that span decades and require several TBs. The situation is worse when it comes to local storage on an iPad, with 256 GB being the maximum capacity you can buy for an iPad Pro today. Perhaps Apple is hoping that these limitations will push users to rely on cloud-based archival solutions that go beyond what’s offered by iCloud and iOS’ offline storage. However, it’s undeniable that it’s still easier for a creative professional to organize 5 TB of RAW footage on a Mac than an iPad.

I have no reason to doubt that companies like Adobe will be all over Apple’s RAW APIs in iOS 10. I’m also curious to see how indie developers will approach standalone camera apps for RAW capture and quick edits. There’s still work to be done, but the dream of a full-featured photo capture, editing, and management workflow on iOS is within our grasp.

Live Photos

Apple isn’t altering the original idea behind Live Photos with iOS 10: they still capture the fleeting moment around a still image, which roughly amounts to 1.5 seconds before a picture is taken and 1.5 seconds after. Photos have become more than still images thanks to Live Photos, and there are some nice additions in iOS 10.

Live Photos now use video stabilization for the movie file bundled within them. This doesn’t mean that the iPhone’s camera generates videos as smooth as Google’s Motion Stills, but they’re slightly smoother than they were in iOS 9. Another nice change: taking pictures on iOS 10 no longer stops music playback.

Furthermore, editing is fully supported for Live Photos in iOS 10. Apps can apply filters to the movie inside a Live Photo, with the ability to tweak video frames, audio volume, and size. To demonstrate the new editing capabilities, Apple has enabled iOS’ built-in filters to work with Live Photos, too.

The key advantage of Apple’s Live Photos is integration with the system Camera, which can’t be beaten by third-party options. I’d like to see higher frame rates in the future of Live Photos; for now, they’re doing a good enough job at defining what capturing a moment feels like.