.](https://cdn.macstories.net/untitled-1723677211874.png)

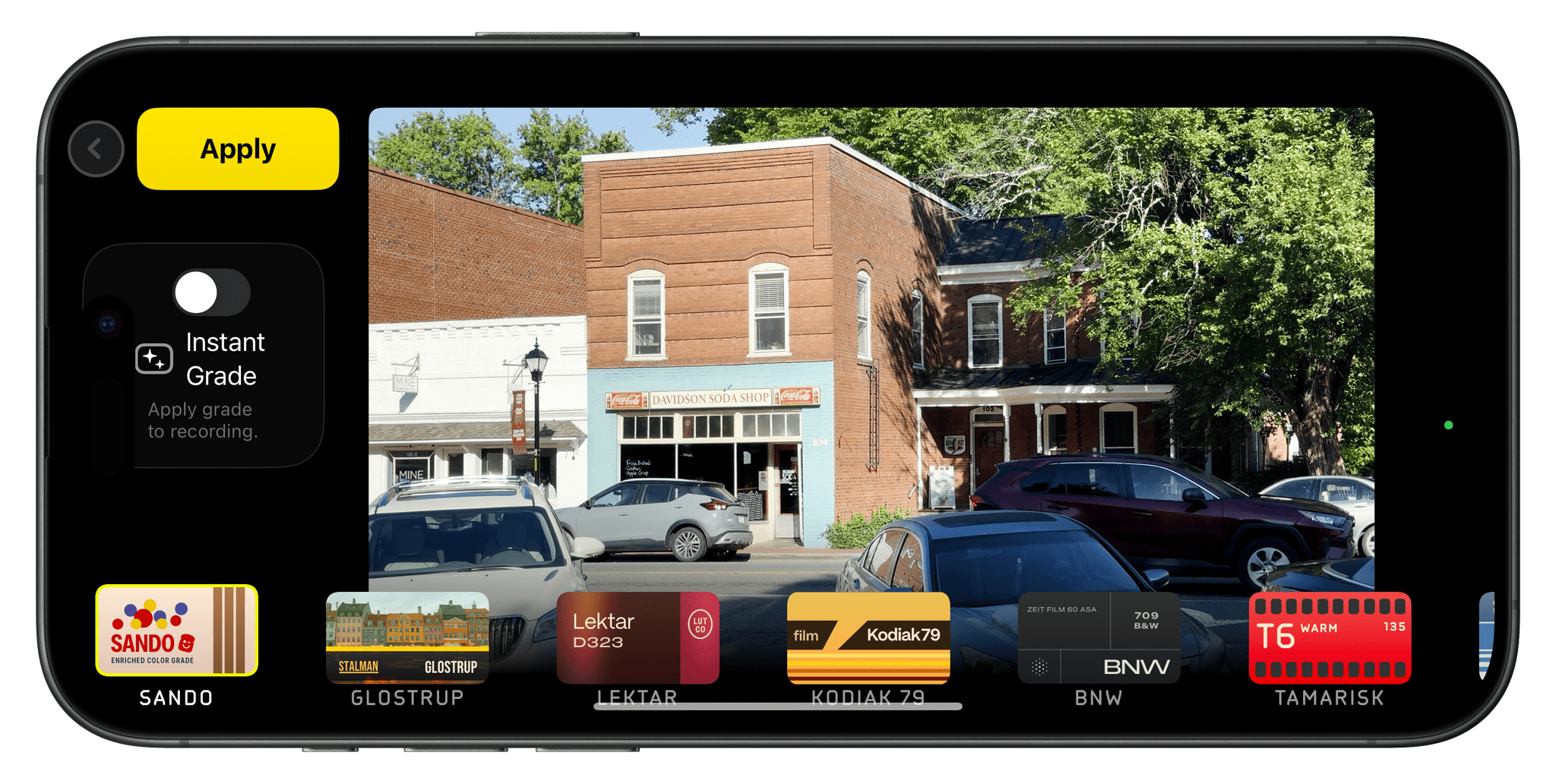

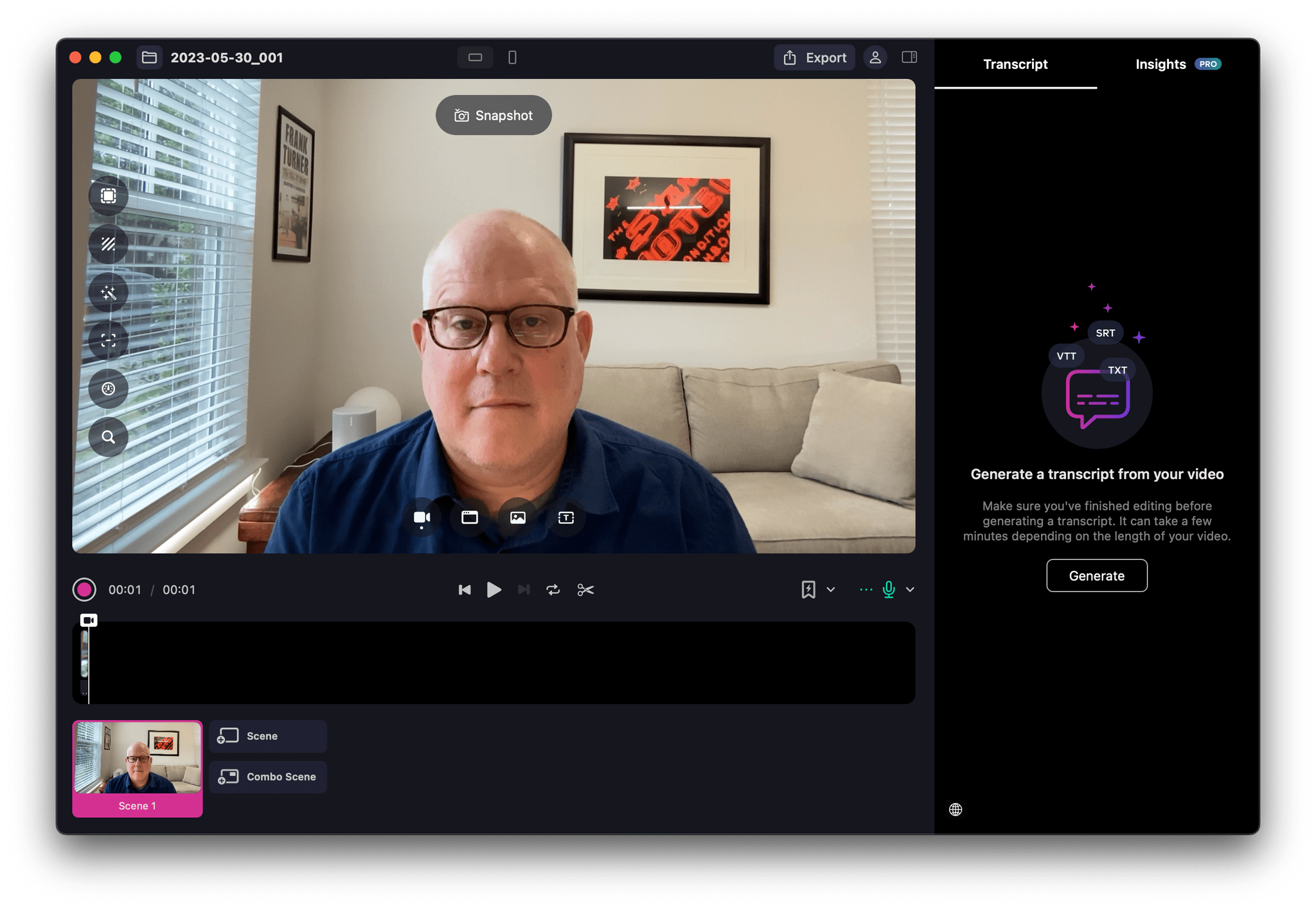

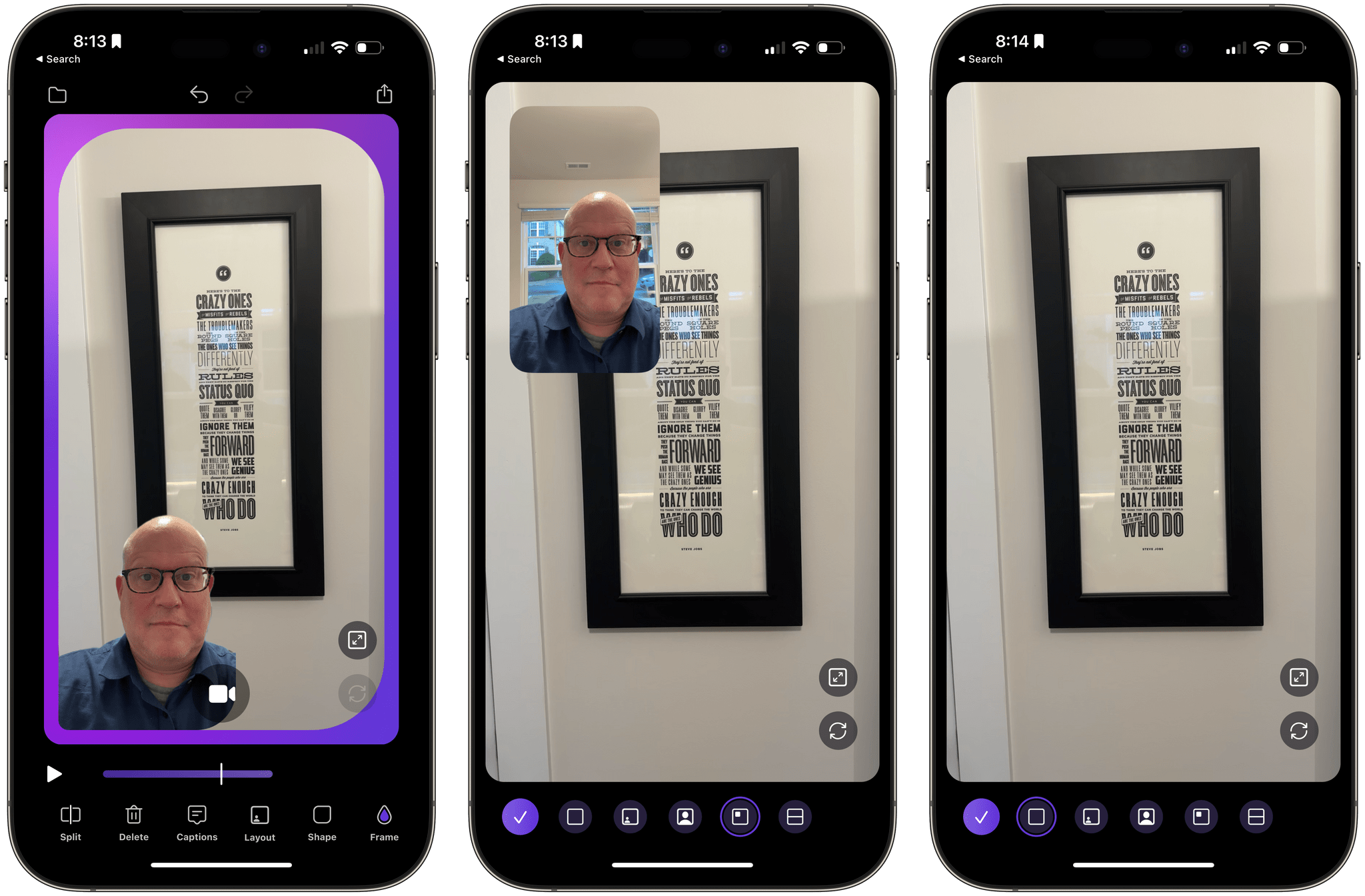

Images taken using Process Zero. Source: Lux.

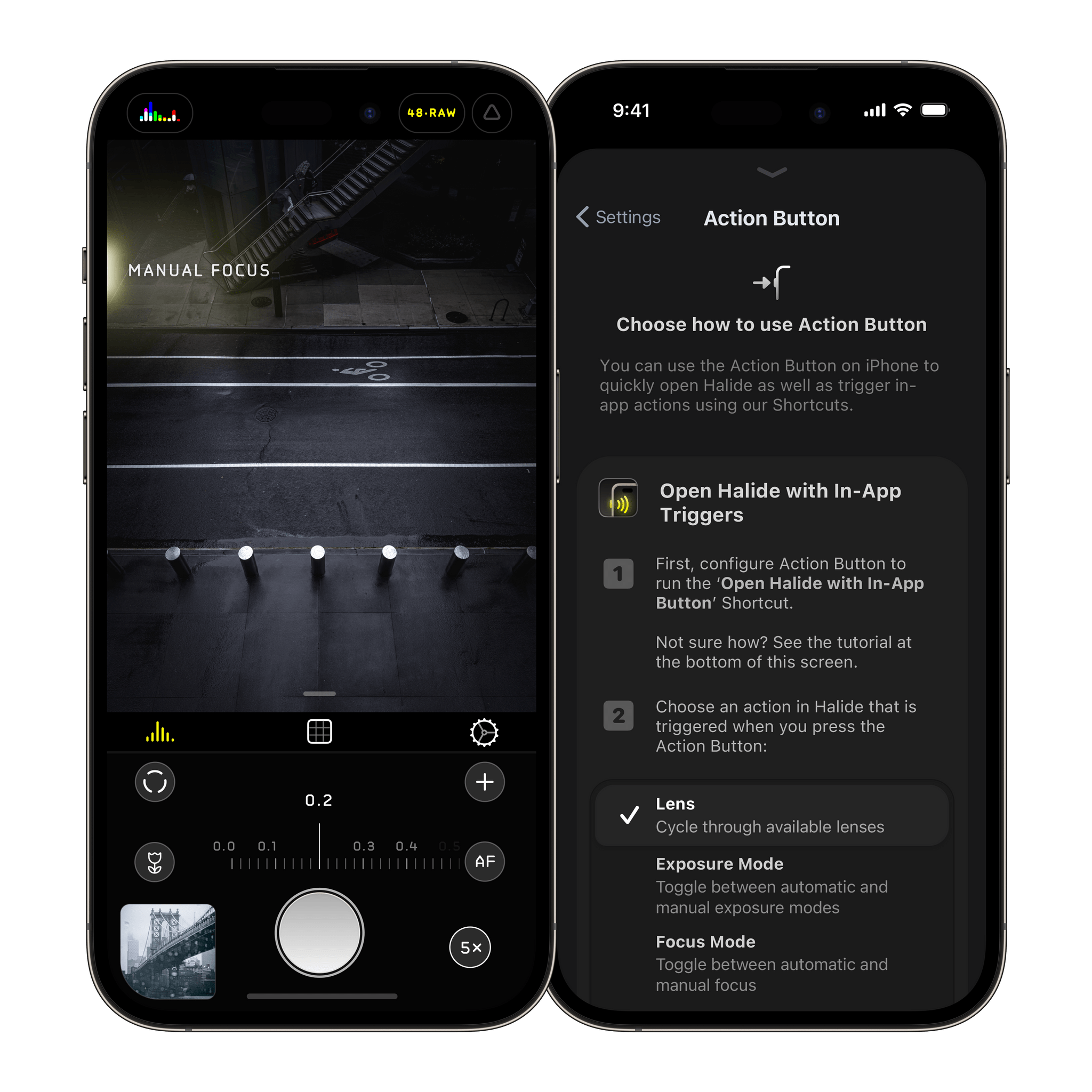

Today, Lux released an update to Halide, its manual control camera app. The marquee feature is Process Zero, a mode that allows photographers to take images with no algorithmic or AI processing. As Lux’s Ben Sandofsky explains it:

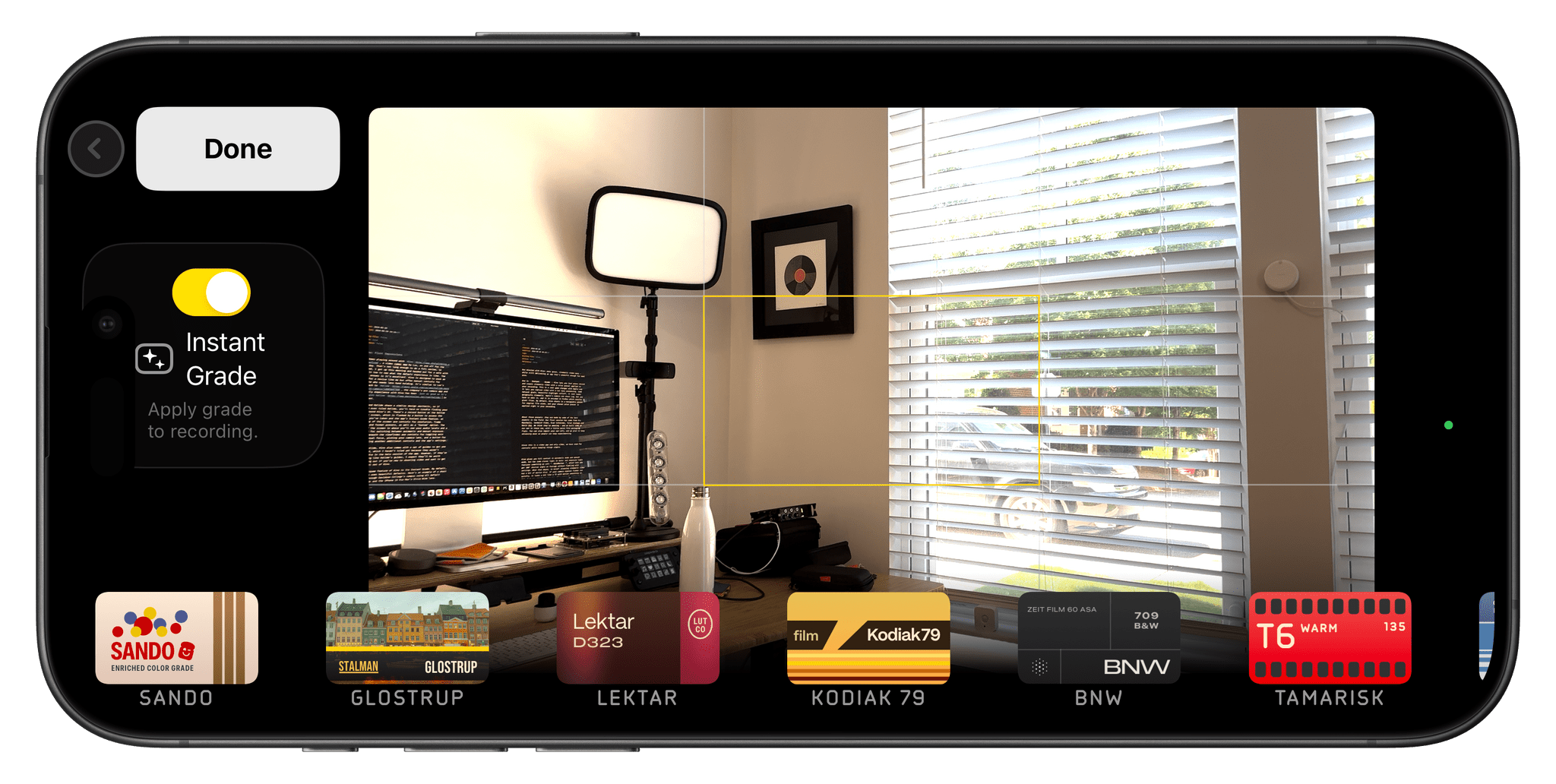

> Process Zero is a new mode in Halide that skips over the standard iPhone image processing system. It produces photos with more detail and allows the photographer greater control over lighting and exposure. This is not a photo filter— it really develops photos at the raw, sensor-data level.

The result is that, under some conditions, Process Zero can capture finer details than a processed photo. The resulting image is a 12 MB RAW file, significantly smaller than a ProRAW photo. In addition to Process Zero, the Halide team introduced Image Lab, a feature accessed from your Halide photo library that offers a single dial for adjusting your RAW photos.

Process Zero comes with some tradeoffs, which Sandofsky’s post explains in depth. The images it produces are “less saturated, softer, grainier, and quite different than what you see from most phones.”

I’ve had limited time to try Process Zero, but it was immediately apparent that taking photos with it is different from, and harder than, relying on the iPhone’s image processing. The feature requires a more deliberate, attentive approach to Halide’s manual camera settings to get a good shot. That’s not necessarily a bad thing, but it is clearly different, and I imagine it’s also the best way to really learn how the app’s manual camera settings work.

I also appreciate that the Halide team is taking a human-focused approach to photography at a time when so many developers and AI companies seem all too willing to cast aside photographers in favor of algorithms and generative AI. Process Zero’s approach to photography isn’t for everyone, and I expect that, most of the time, it won’t be for me either. However, I’m glad it’s an option because, in the hands of a skilled photographer, it’s a great tool.

If you’re interested in checking out Halide’s new Process Zero and Image Lab features, which are the foundation of what will become Halide Mark III, the app is currently on sale. For the rest of this week, Lux is offering Halide membership subscriptions for $11.99 per year, which is a 40% discount. The app is also available as a one-time $60 purchase.