Last Friday, just before midday, my new iPhone 17 Pro Max arrived at my home – a Deep Blue model with 512 GB of storage. Since then, it’s dropped neatly into my daily iPhone routine. In many ways, it’s not that different from the iPhone 16 Pro Max that I’ll be trading in. I’ve been on iOS 26 for months, so the operating system isn’t a big change, but there are some notable differences that I thought I’d share.

Setup

This year’s setup process was smoother than usual. I was in a rush to set up my iPhone because it arrived at about 11:30 AM and Federico and I were planning to record this week’s episode of AppStories all about our hardware first impressions. Given time differences, that didn’t leave me much time to get up and running.

I decided to go with restoring from an iCloud backup, which gets your iPhone to a usable state faster than other options but can take a long time to download all of your apps. It was a good call because by the time we started recording about 45 minutes later, not only did I have access to the basics, but most of the apps assigned to active Home screens had already been downloaded, giving me a chance to play around with things a bit before recording.

I had plans to go out late in the afternoon and was hoping to get as much of the transfer finished by then as possible, so I connected my iPhone 17 Pro Max to an Ethernet adapter and let it do its thing. When I left the house around 4:00 PM, I was pleasantly surprised to find that nearly all of the 520 apps I had installed were already downloaded.

Heat

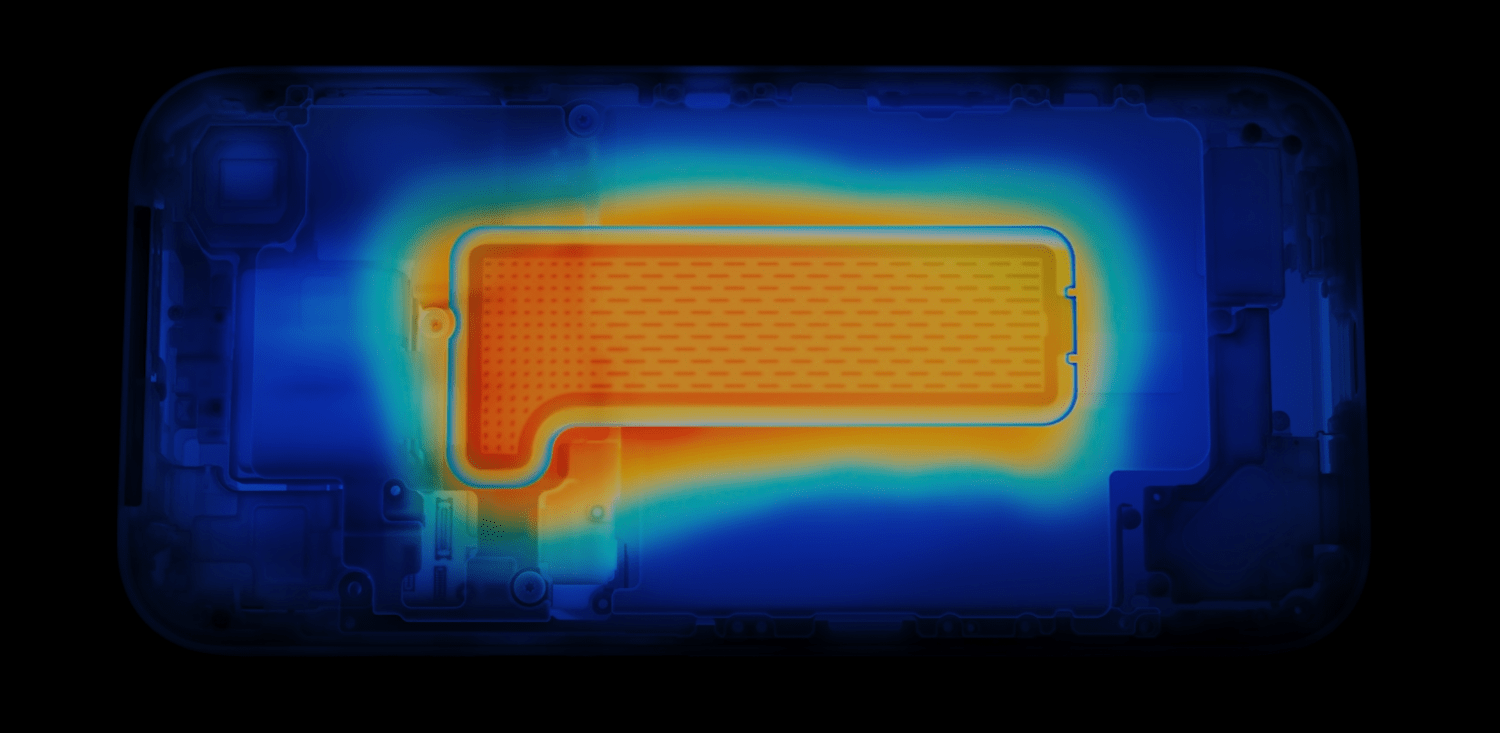

Apple made a big deal of the iPhone 17 Pro line’s new vapor chamber, which, combined with the aluminum case, is designed to dissipate heat better than before. In my experience so far, it absolutely does manage heat better, but that’s not to say the phone doesn’t get pretty warm. When I was setting up my new iPhone, it got quite warm, as did my old 16 Pro Max. However, while the 16 Pro Max got hot above the Apple logo on the back of the phone, the 17 Pro Max’s heat was more evenly distributed. That meant no one spot got as hot as it did on the 16 Pro Max, but more of the phone got warm, including the sides, which I’d never really noticed with the previous model.

In the three days since I set up the 17 Pro Max, I’ve noticed it get warm now and then, but nowhere near as warm as the 16 Pro Max typically did. I’ve been out a couple of times on warm (80–85℉) sunny days taking photos and videos, and while the 17 Pro Max gets a little warm, it’s much less noticeable than before.

Hardware Design

Initially, I was a little underwhelmed by Apple’s iPhone lineup this year. I love the Air, but the camera tradeoffs were more than I was willing to make. As for the Pro line, I’m not a huge fan of the two-tone style and giant camera bump. It feels too industrial to me, which may be the point, but I prefer a sleeker look.

I was also disappointed by the Cosmic Orange color. I like orange but prefer something brighter like the orange Apple Silicone Case. As you can see from the image above, it’s brighter than the Cosmic Orange iPhone. I had a chance to compare them in person at a local Apple Store over the weekend, and all it did was confirm to me that I’d made the right choice getting the Deep Blue model.

Apple didn’t make a black iPhone 17 Pro this year, which led some people to expect the Deep Blue model would be closer to black than blue. As it turns out, although Deep Blue is a very dark blue, it’s not a blue that would be mistaken for black. It looks great and minimizes some of the phone’s industrial look that I don’t like, so it’s a big win in my eyes.

The only caveat is that the Ceramic Shield on the back makes a rectangular patch that looks a little closer to gray than I’d prefer. It’s not a huge deal since I usually have a MagSafe wallet hiding that area, but again, I’m not that into the two-tone look.

Surprisingly, another aspect of the 17 Pro Max that I absolutely love is the unibody design. There’s something about how the metal wraps around from the back to the front that really works for me. Part of it is that the edges are rounder than on the iPhone 16 Pro Max, making the 17 Pro Max more comfortable to hold. The unibody design also makes the phone seem sturdier. Plus, while I know intellectually that my new phone weighs 6 grams more than the 16 Pro Max, it doesn’t feel that way when I hold both. I think that’s because the rounder edges of the 17 Pro Max make it feel slimmer than it really is. In what may be another mind trick, the front glass of the iPhone 17 Pro Max feels a little smoother, too.

As I mentioned, I swung by my local Apple Store on Sunday to check out the iPhone Air and the latest accessories. I’ll get to the accessories in a bit, but when I saw the Air, I had that moment of amazement that it could be so thin and light, but not one moment of regret. The Air looks great, and I’m excited for what that sort of miniaturization of iPhone hardware means for future models, but it’s not for me.

I just like having a big battery and three cameras too much to trade those for a thin iPhone. Yesterday, I charged my phone early in the day, used it relatively lightly while I was working at my desk, and then headed out in the afternoon to test the cameras some more. When I went to bed last night, I left my phone on the couch by accident, where it sat until I got up today. It still had about 50% of its battery left. I love that I rarely have to think about whether my iPhone is charged, let alone worry about it.

The Cameras

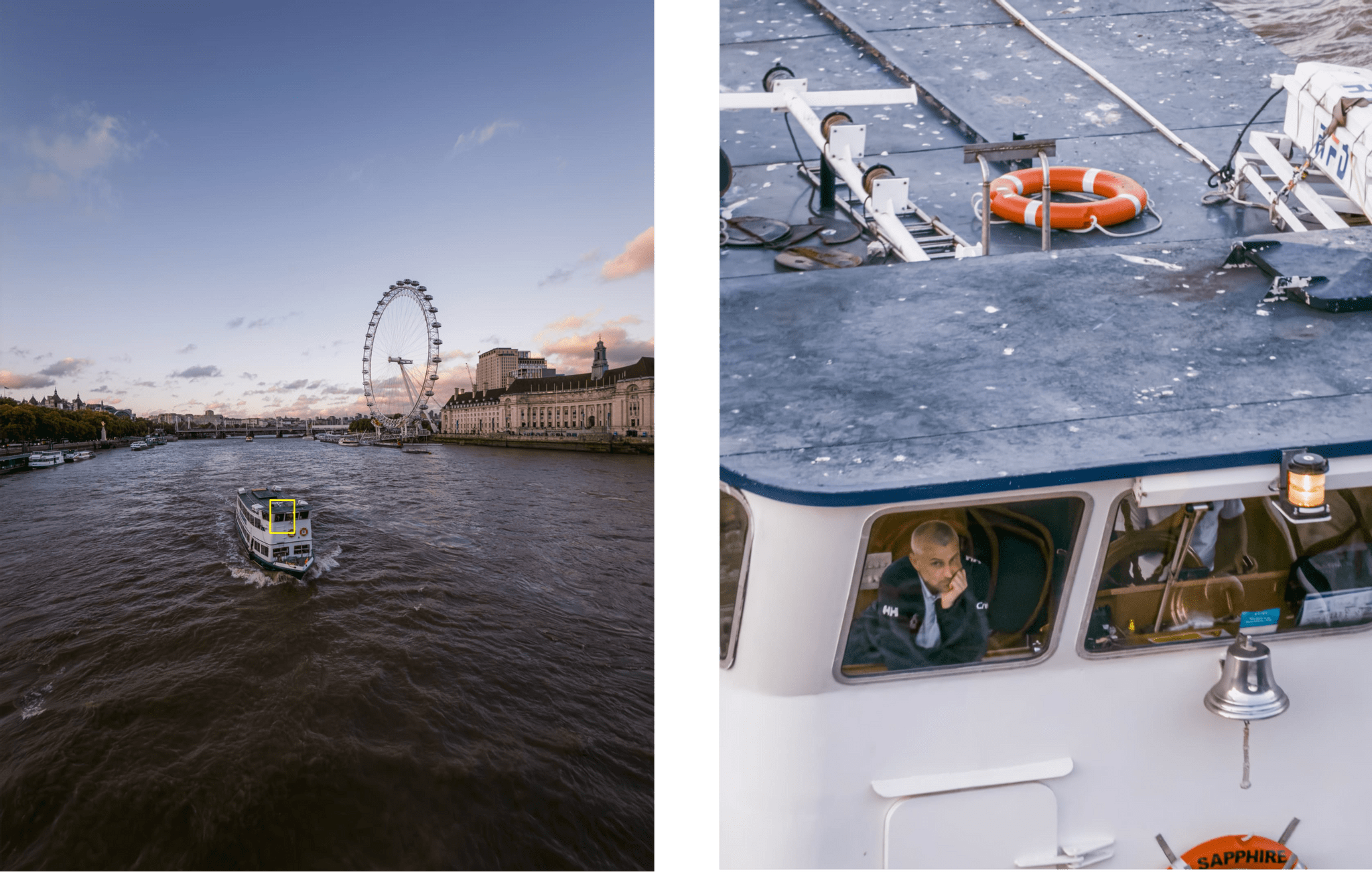

Over the past few days, I’ve been experimenting with the iPhone 17 Pro Max’s three rear-facing cameras. In Austin Mann’s review of the iPhone 17 Pro’s cameras that I linked to yesterday, he said:

“On the 16 Pro, the 5x (120mm) often felt a little too tight. The new 4x (100mm) feels far more natural and much more familiar. It’s a classic portrait and landscape focal length in the photography world, and with the bump up from 12MP to 48MP I’ve been really happy with the results.”

I’m no pro photographer, but having used the new zoom cameras, I have to agree. Both focal lengths feel great, but the real star is the detail both can capture thanks to their 48MP sensors.

To show off what these cameras can do, I picked three scenes that I might snap a quick photo of while out on a walk. I left the cameras on the Standard Photographic Style, shot at 24MP (also a default setting), and didn’t crop or edit anything. For each scene, I used the Wide, Main, 2×, 4×, and 8× options.

First up is an island in Lake Norman:

Next, I walked over to Davidson College and took a series of photos of the steeple of the campus church:

Finally, I took a similar series of shots of Chambers Hall on campus:

I’m really pleased with the results. It was a bright, sunny day, which always helps, but to my untrained eye, there’s a noticeable improvement in image quality now that all of the iPhone 17 Pro’s cameras have 48MP sensors.

Accessories

When I stopped by the Apple Store over the weekend, I checked out the latest accessories, too, but didn’t buy anything. I prefer to use my iPhone caseless, but I wanted to see what my “official” options are because I do use a case when traveling.

The TechWoven cases struck me as durable options, although I wasn’t wowed by any of the colors. Instead, I was drawn to the bright Silicone Cases. Historically, these cases haven’t been the most durable, but as someone who gets a new phone every year, that’s less of a consideration for me. The bright orange is great, but for my Deep Blue iPhone 17 Pro Max, I think I’d probably go with Neon Yellow or Purple Fog.

I also checked out the new Beats cases. I love the little pill on the lanyard that you can pull apart to create a perfectly sized divot for propping your phone up. The colors are nice in person, too, but in the end, I didn’t buy one because I don’t want a lanyard on my iPhone.

So that’s my mini-review of the iPhone 17 Pro Max. It does the same Pro Max things I love with some nice perks like better cameras and a unibody design that’s functional and comfortable to hold while making the phone feel more substantial. Photographers and fans of great battery life really can’t go wrong with the iPhone 17 Pro Max.