Everything Else

As is often the case with new versions of iOS and iPadOS, there are dozens of other features and smaller details to be discovered in the nooks and crannies of both OSes. iOS and iPadOS 15 are no different, so let’s take a look.

PDFs and Printing

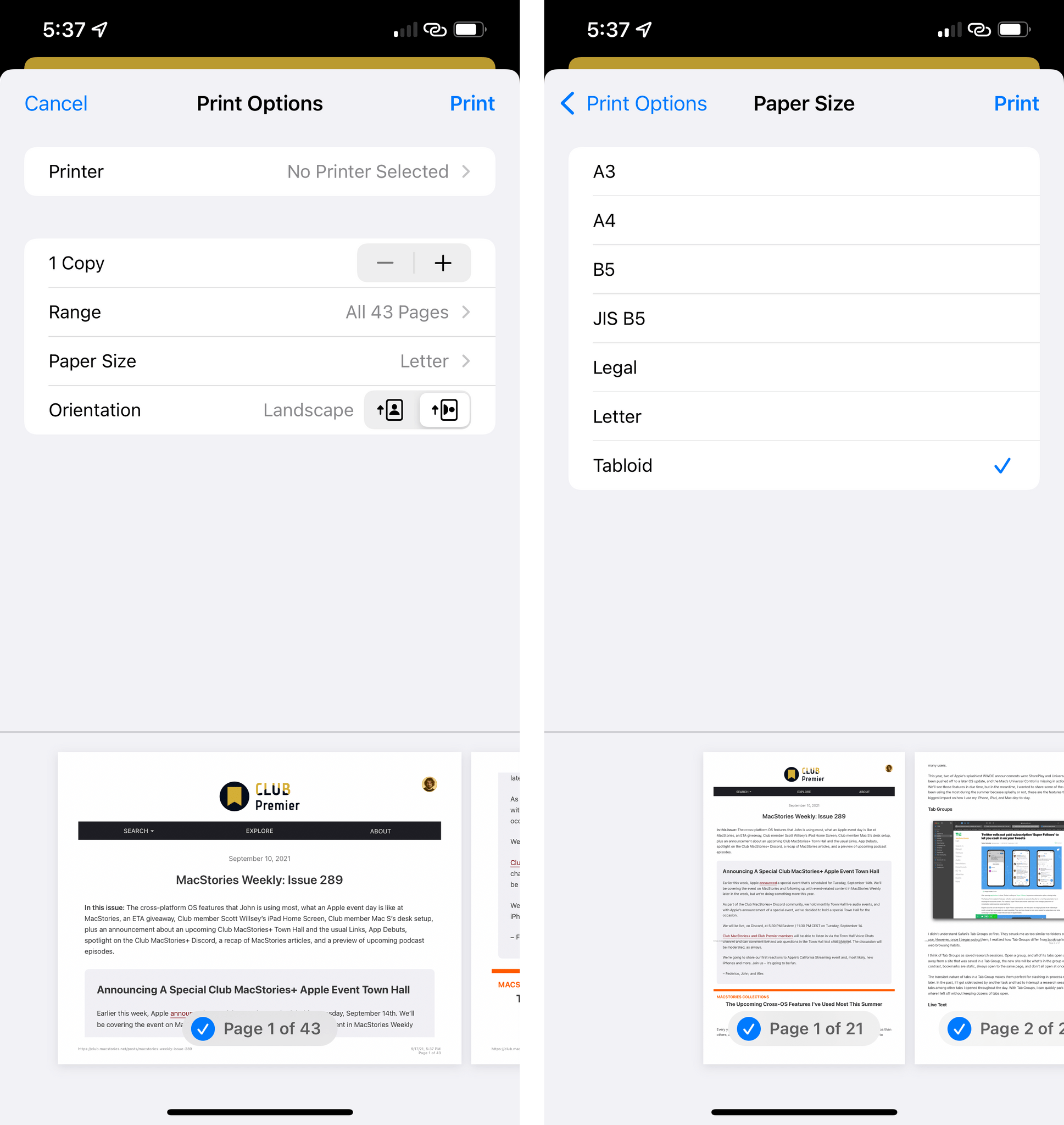

Whether you still print paper documents or, like most people these days, just want to generate PDFs, iOS 15 has a great addition in store for you: a completely redesigned Print menu.

Available in Safari and other apps that can print content, the new ‘Print Options’ screen lets you choose a paper size (including popular options such as A3 and A4) and switch your document’s orientation between portrait and landscape without physically rotating your device. As before, you can pinch-open the document preview in the Print screen to view it as a PDF and export it to Files or other apps, which makes this revamped Print functionality a fantastic way to create PDF documents from Safari.

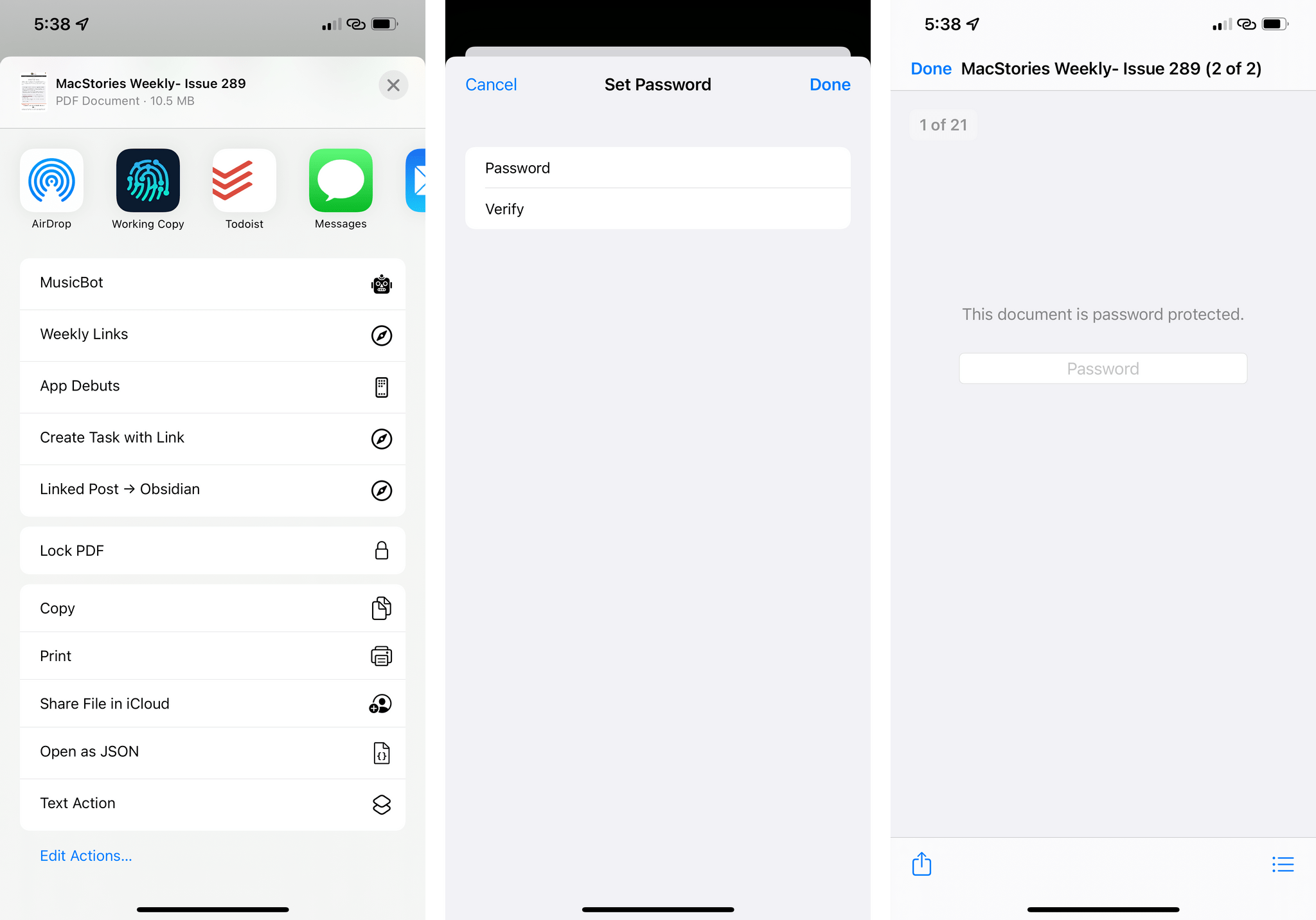

Speaking of PDFs, if you ever need to password-protect sensitive documents before sharing them with other people, iOS 15 now lets you do this with a new ‘Lock PDF’ action. To lock a document, open any PDF in a native Quick Look preview (in Files, for instance, just tap a PDF to preview it), then tap the Share button and scroll until you see the new ‘Lock PDF’ button. Tap it, and you’ll be presented with a screen where you enter a password for the document and then enter it a second time to verify it.

Once a document is locked, you’ll have to enter its password in the Quick Look preview every time you open it.

Control Center

Control Center hasn’t undergone a major update in a while, and this year’s updates continue to refine a formula that has been working for the past few years.

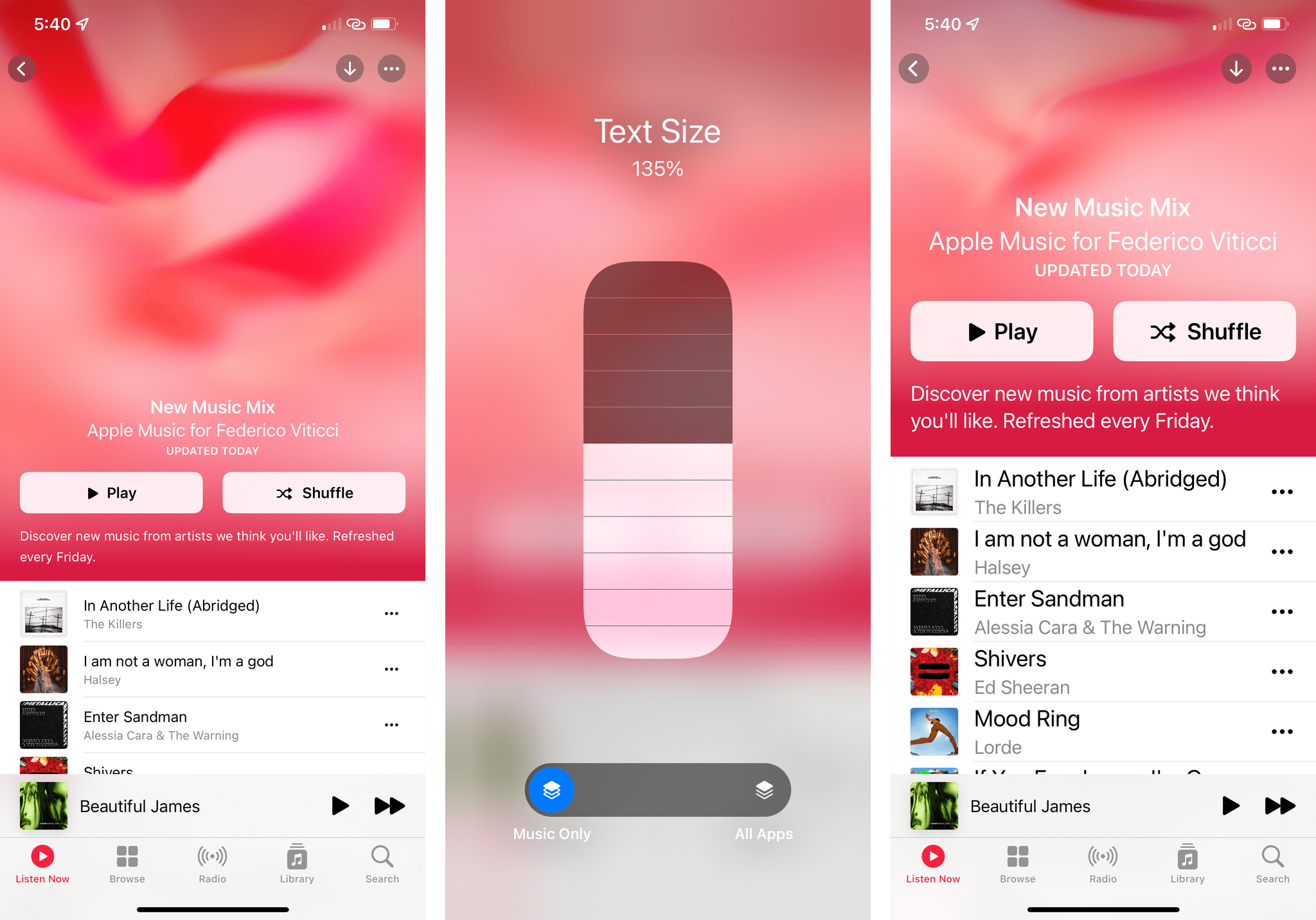

As part of iOS 15’s per-app Accessibility settings (which let you customize Accessibility options for specific apps instead of the whole OS), Control Center has gained a new ‘Text Size’ control that lets you quickly change the text size of the app you’re currently using without affecting other apps. To use it, add the Text Size control to Control Center, open the app you want to customize, and tap the control. The default view shows a text size slider for ‘All Apps’, but you can select the ‘[App] Only’ tab to reveal a secondary slider that changes text size for the current app only.

I like to keep text size on my devices relatively small (thankfully, I wear eyeglasses), but I’ve found that my preferred setting is a little too small for apps like Calendar. In iOS 15, I can change that app’s text size in just a few seconds by opening Control Center, which is terrific.

The TV remote in Control Center has also been slightly redesigned in iOS 15. The large ‘Menu’ button has been replaced by a ‘Back’ button inspired by the latest Siri remote, and the upper section of the virtual remote also includes buttons to mute and to turn an Apple TV on or off.

Lastly, the music recognition toggle powered by Shazam, originally introduced in iOS 14.2, can now be long-pressed to view a log of the songs you’ve recognized with it. Sadly, this history still doesn’t sync with the ‘My Shazam Tracks’ playlist on Apple Music that’s managed by the main Shazam app.

You can tap songs in this History panel to reopen them in the Shazam app or swipe to remove them.

Siri

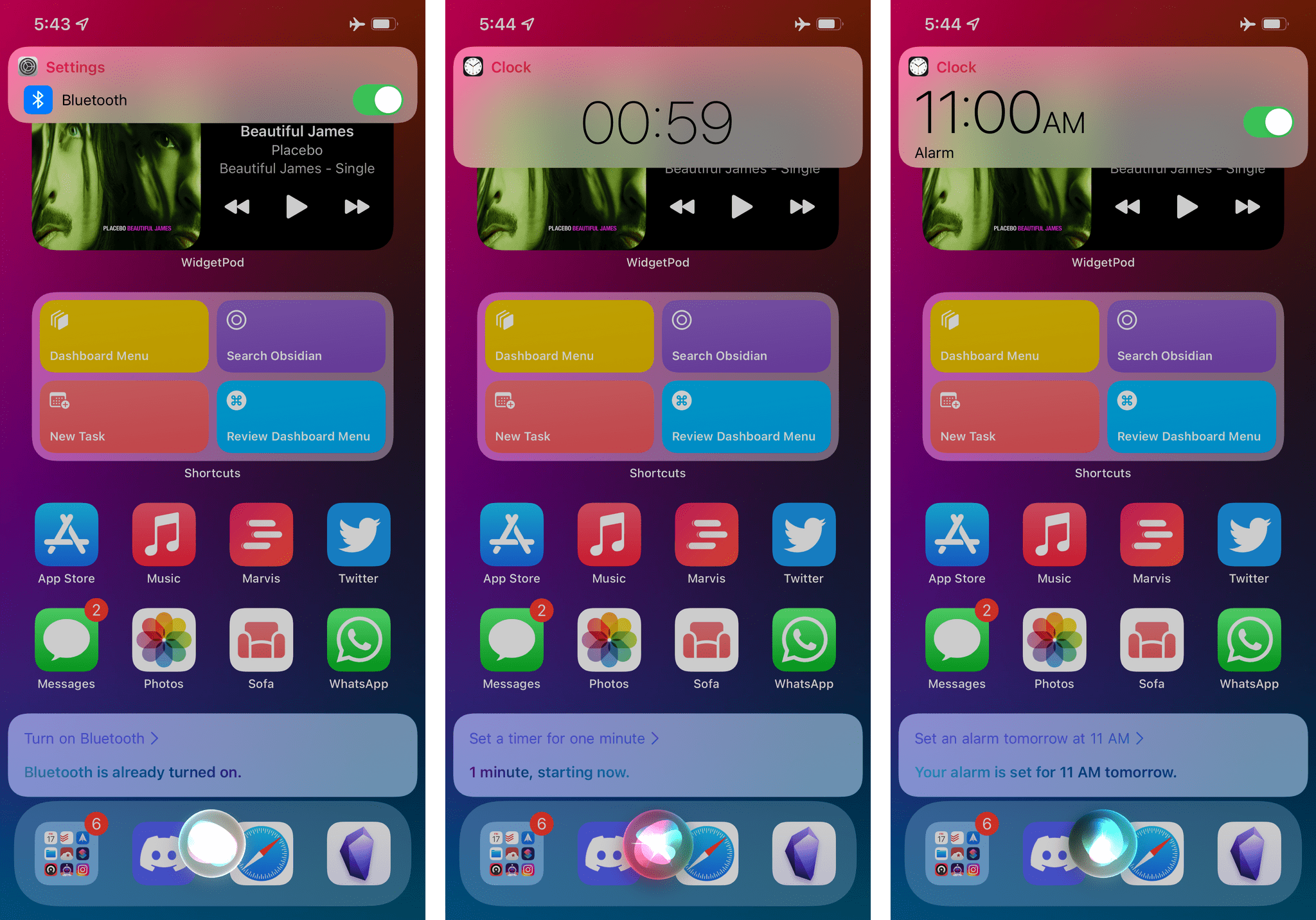

The most important addition to Apple’s assistant this year is that, starting with iOS and iPadOS 15, Siri will work offline by default. As Apple announced at WWDC, the Neural Engine can now process your requests locally, on-device, with the same quality as server-based speech recognition.

In practice, since the switch to offline processing, I haven’t noticed any degradation in how Siri interprets my requests, but it hasn’t gotten any smarter either. I suppose this is good news for Apple: at the very least, moving away from server-based processing hasn’t made Siri worse.

Obviously, only some types of requests will work offline. These include:

- Timers

- Alarms

- Launching apps

- Music and audio controls¹⁵

- Switching settings

You can try these requests offline by putting your device in Airplane mode and switching off all data connections. Previously, Siri would throw an error as soon as you activated it; now, it starts listening locally, then decides whether it can complete your request based on what you’re asking. I’ll admit I’m not a huge Siri user, but since the switch to offline requests, I’ve at least noticed that Siri no longer randomly refuses to activate because of server issues.

According to Apple, Siri should also be better at maintaining context between requests. Some of these improvements have been rolled out server-side, so they already work on iOS 14, but others are exclusive to iOS 15. For instance, if you’re looking at a contact in the Contacts app or a conversation in Messages on iOS 15, you can say, “Message them I’m almost there”, and Siri will understand how to route your request. This also works with notifications for messages and missed calls.

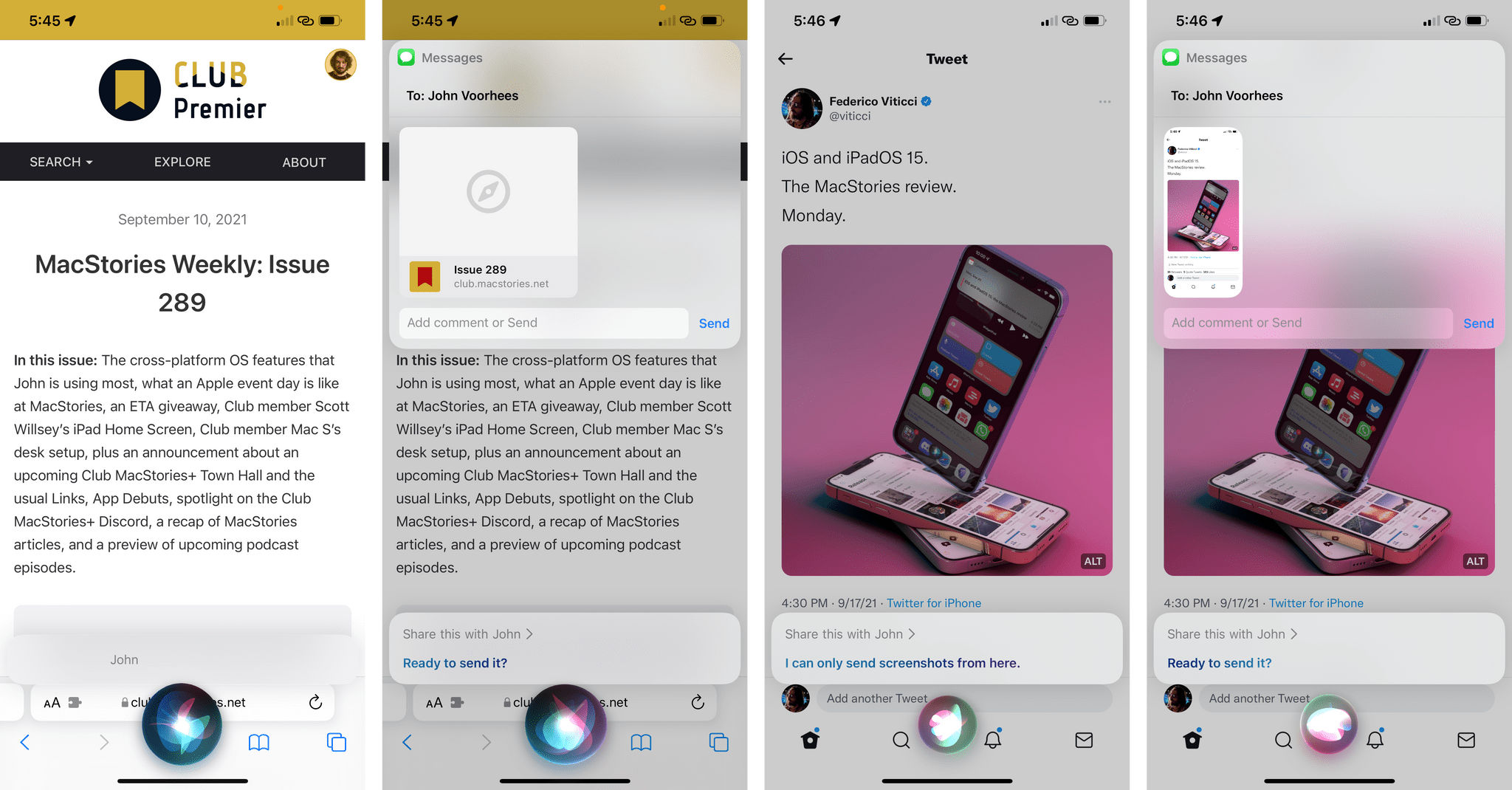

Siri in iOS 15 can also share onscreen content with people when you simply say, “Share this with [person name]”.

I’m fascinated by this feature because, as I originally suspected, it’s yet another one that piggybacks on the NSUserActivity API, which we know Apple loves to use for all kinds of deep-linking on iOS (see: Quick Note). If you ask Siri to share onscreen content while using an app that donates deep links to the system, Siri will prepare a message containing the link and ask you to confirm that you want to send it.

You can test this while reading an article in Safari: Siri will turn “this” into a link you can send to someone. Interestingly, if the app you’re using when you invoke Siri doesn’t support deep-linking, Siri falls back to taking a screenshot instead. So if you want to share an Instagram DM (we’ve all done this) or something you wrote in Notes with a friend, say “Share this…” to Siri, and it will capture a screenshot of what’s onscreen and offer to send it as an image.

Lastly, Siri comes with extended HomeKit integration, which lets you set up temporary automations that execute commands in the future. You can read more details in John’s standalone story on HomeKit.

¹⁵ If offline music playback doesn’t work for you, the trick seems to be adding “my music” to the Siri request.