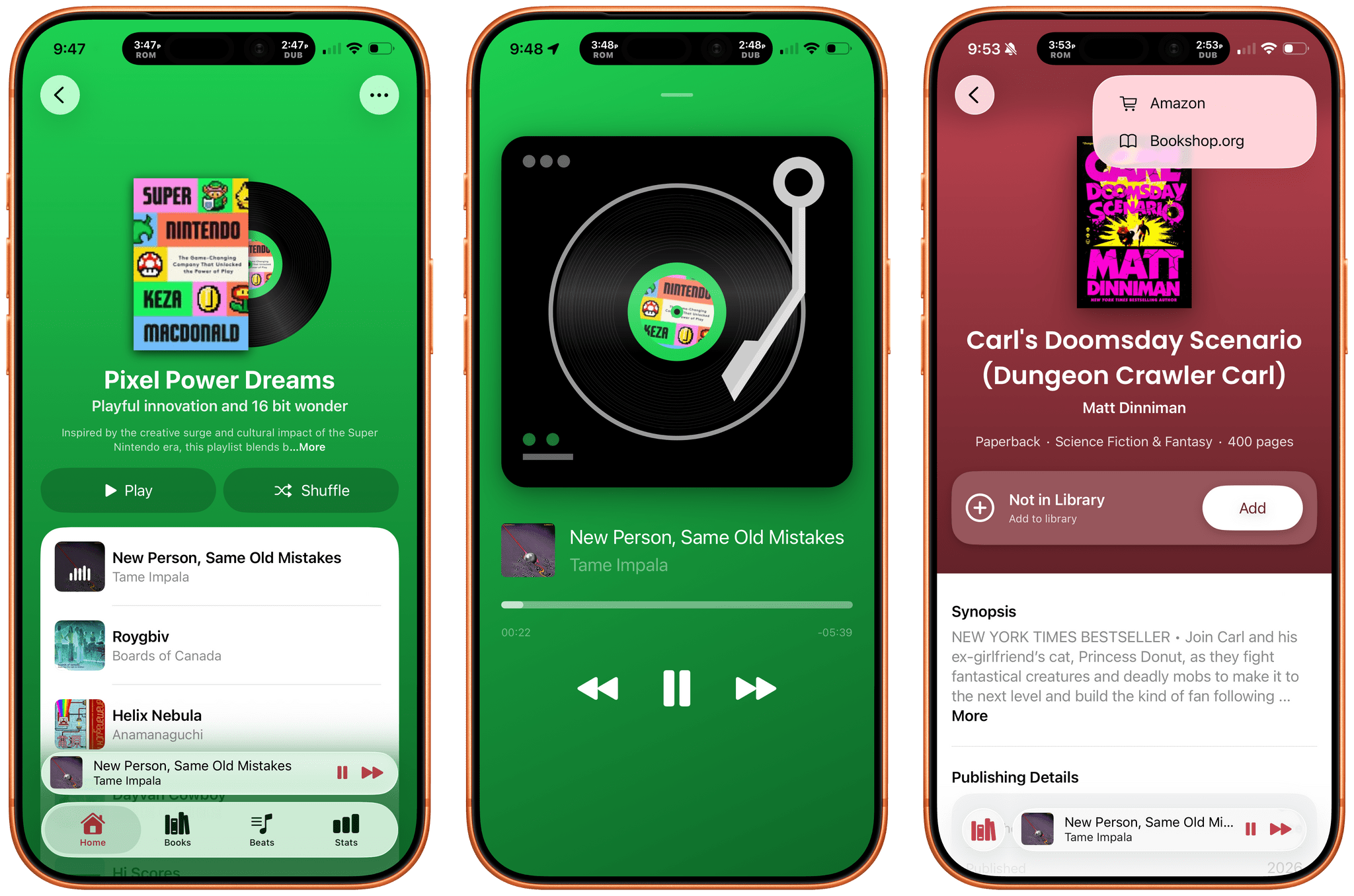

Tracking the books you read is well-trodden App Store ground. So is making music playlists. But what if you combined the two? The result is Book Beats, a new iPhone and iPad app from Olea Studios’ Casey and Lisa Doyle that does just that, elevating both books and music by bringing reading and listening together. It’s a terrific example of an app made by people who deeply understand its subject matter and who bring AI to bear in a focused, tasteful way that enhances the experience without being gimmicky.

Posts tagged with "iOS"

Book Beats: Reading to the Rhythm

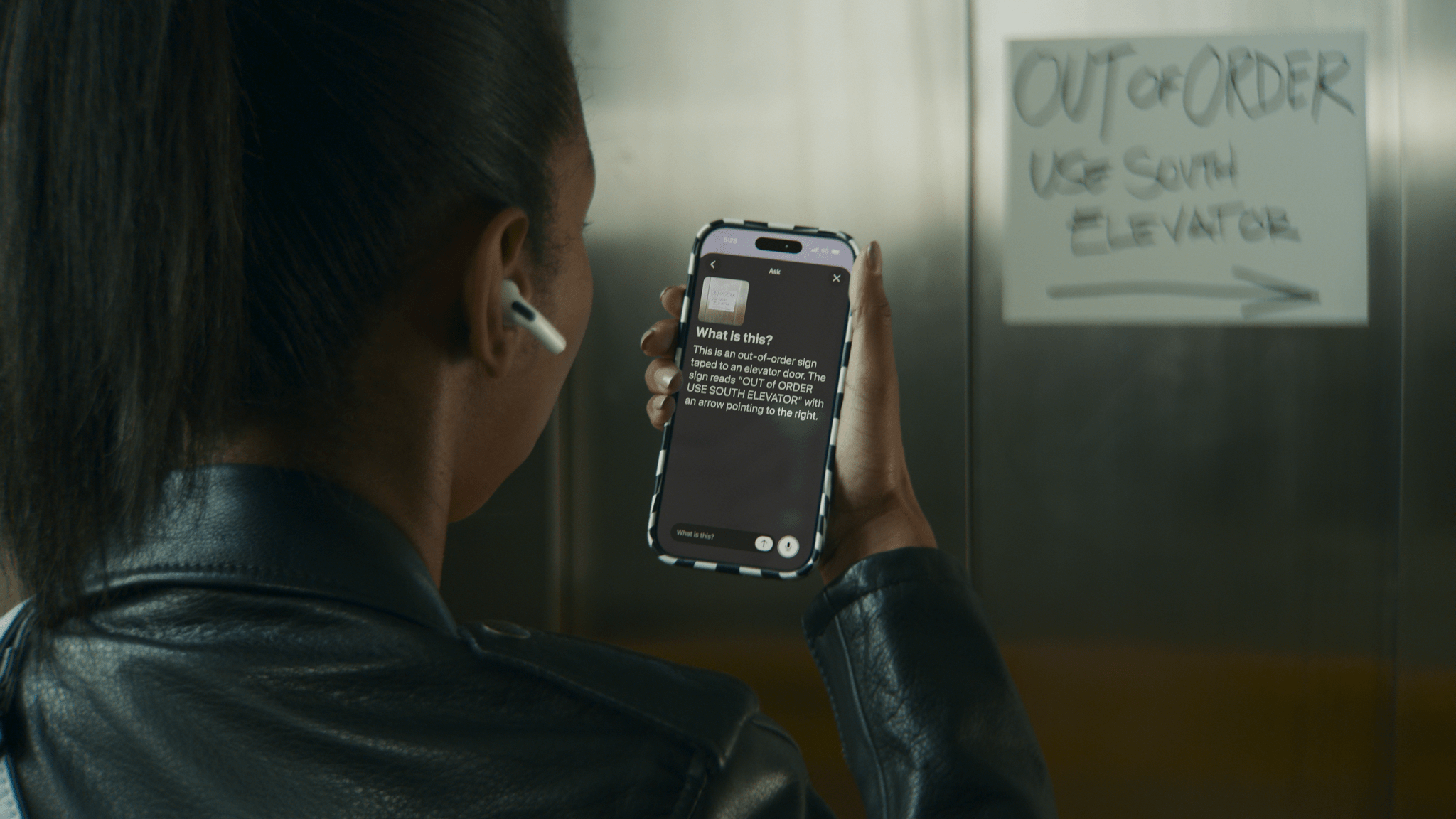

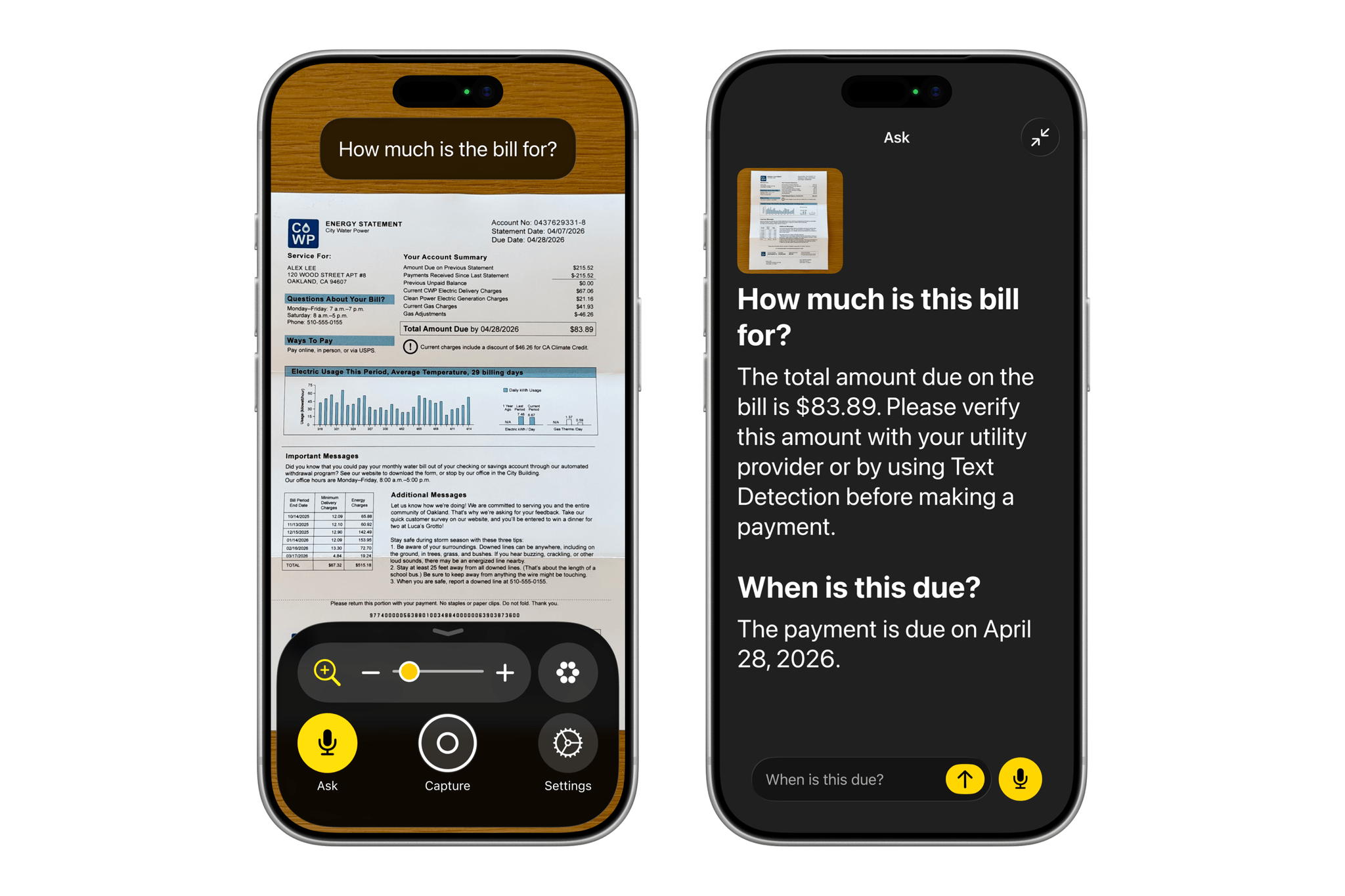

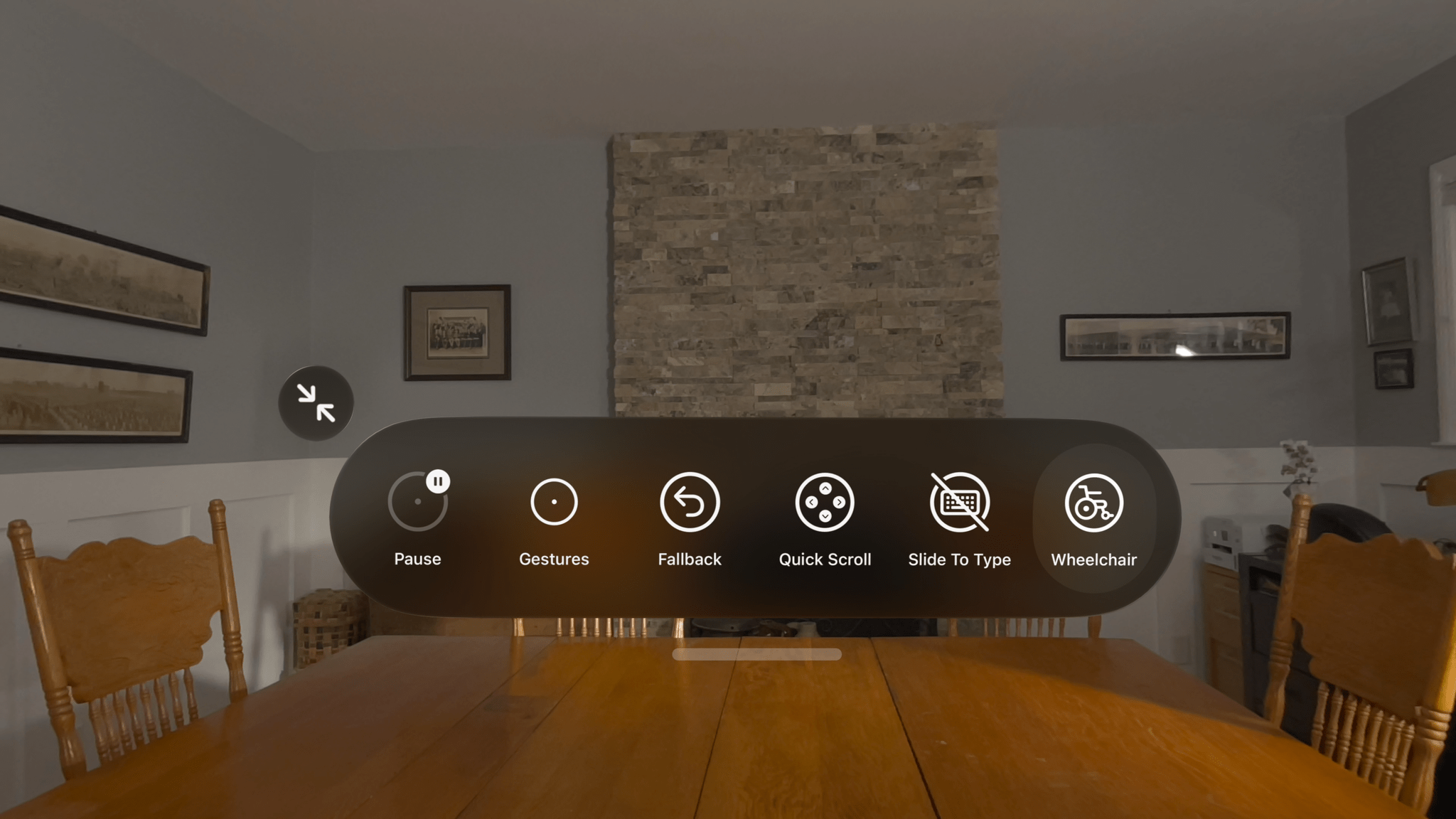

Apple Intelligence-Infused Accessibility Features Promise Greater Flexibility and Power

In a preview of what I expect will be an overarching theme at WWDC 2026, Apple’s Accessibility group took the wraps off an impressive collection of features coming later this year. The announcement, which is timed to lead into Global Accessibility Awareness Day on Thursday, emphasizes existing features and technologies that the company says will gain deeper capabilities thanks to Apple Intelligence.

For starters, VoiceOver will become more descriptive, allowing a device’s camera to be used to describe the user’s surroundings or a scanned document in greater detail. The feature will also make use of the Action button to trigger the camera and allow users to ask questions and make follow-up inquiries about what’s in the viewfinder. The Magnifier will gain voice controls, too, so users can simply ask it to zoom in, for example.

Voice Control will get similar enhancements. Rather than requiring a defined set of commands that need to be memorized to control a device, the feature will allow users to invoke actions with natural language, such as, “Tap the orange folder.”

Accessibility Reader will be able to handle more complex written layouts that include tables, columns, and other traditionally challenging formatting. If there’s one thing that LLMs have become extremely good at, it’s scraping the web and learning how to parse the meaningful parts of a webpage. While I’d have preferred that the web not have been pillaged as fuel for models in the first place, I’m glad at least part of that is going towards making the web and other text more readable for people who need it.

One of my favorite demos that Apple showed off during my briefing was a short video shot on an iPhone that had subtitles added to it on the fly using an on-device model. We’ve grown so accustomed to subtitles being available with the TV shows, movies, and YouTube videos we watch that they feel like they’re missing from the home movies we shoot and share with friends and family. Later this year, though, subtitles will be available for all types of video, generated privately on device.

The Vision Pro uses state-of-the-art eye tracking for interacting with your environment. Apple announced that it is extending that technology to motorized wheelchairs by working with partners TOLT Technologies and LUCI. The system allows a motorized wheelchair to be maneuvered by the user simply looking at controls inside the Vision Pro. The video showing off the feature was impressive and makes perfect sense if you’ve ever used the Vision Pro.

Apple also announced a new accessory with an accessibility angle. You may have seen the Hikawa Grip and Stand collection, a series of colorful accessories designed to make it easier for people to hold an iPhone more securely. Designed late last year by artist Bailey Hikawa, the Hikawa Grip and Stand is being mass-produced by PopSockets and sold in Apple retail stores in 20 markets starting today.

Finally, a bunch of other accessibility features are coming to Apple platforms later this year, including:

- Vehicle Motion Cues, face gestures for taps, and eye-select in Dwell Control for visionOS,

- Touch Accommodations setup customization,

- Improvements to MFi hearing aid pairing and handoff across devices,

- Larger Text support in tvOS,

- Name Recognition in over 50 languages,

- An API for adding human sign language interpreters to FaceTime, and

- Support for Sony’s Access game controllers on iOS, iPadOS, and macOS.

With all the overblown hype surrounding artificial intelligence, it’s refreshing to see Apple putting it to practical use in ways that are meaningful to its users. One thing I’ve learned from following the work of Apple’s Accessibility team over the years is that there’s no one-size-fits-all approach to accessibility. The solutions are as unique as the people they serve. Apple has always offered a wide range of APIs and user features to make its hardware and apps available to as many people as possible, but Apple Intelligence promises to take the company’s longstanding commitment and make it more flexible and powerful for more people than ever before.

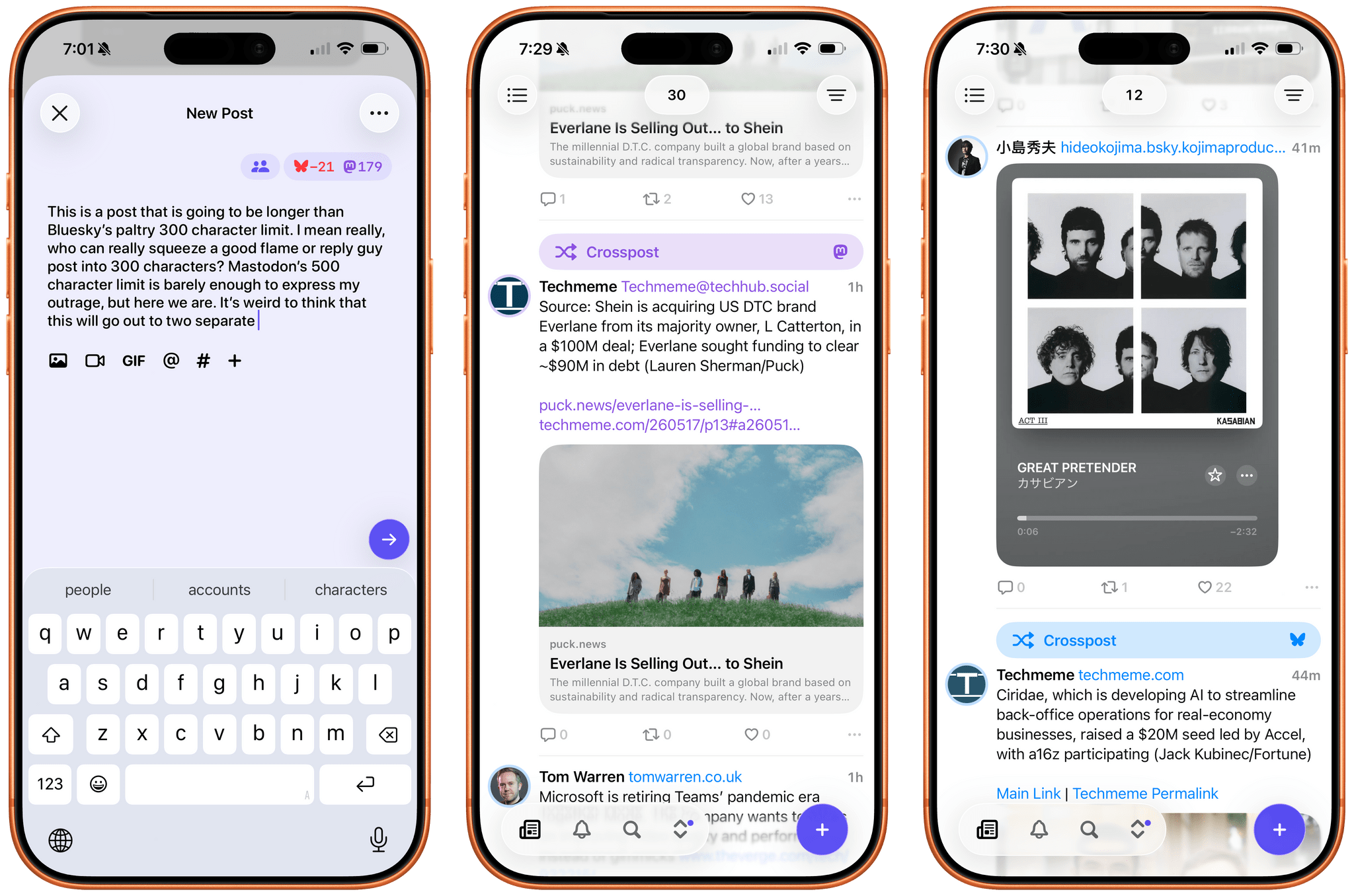

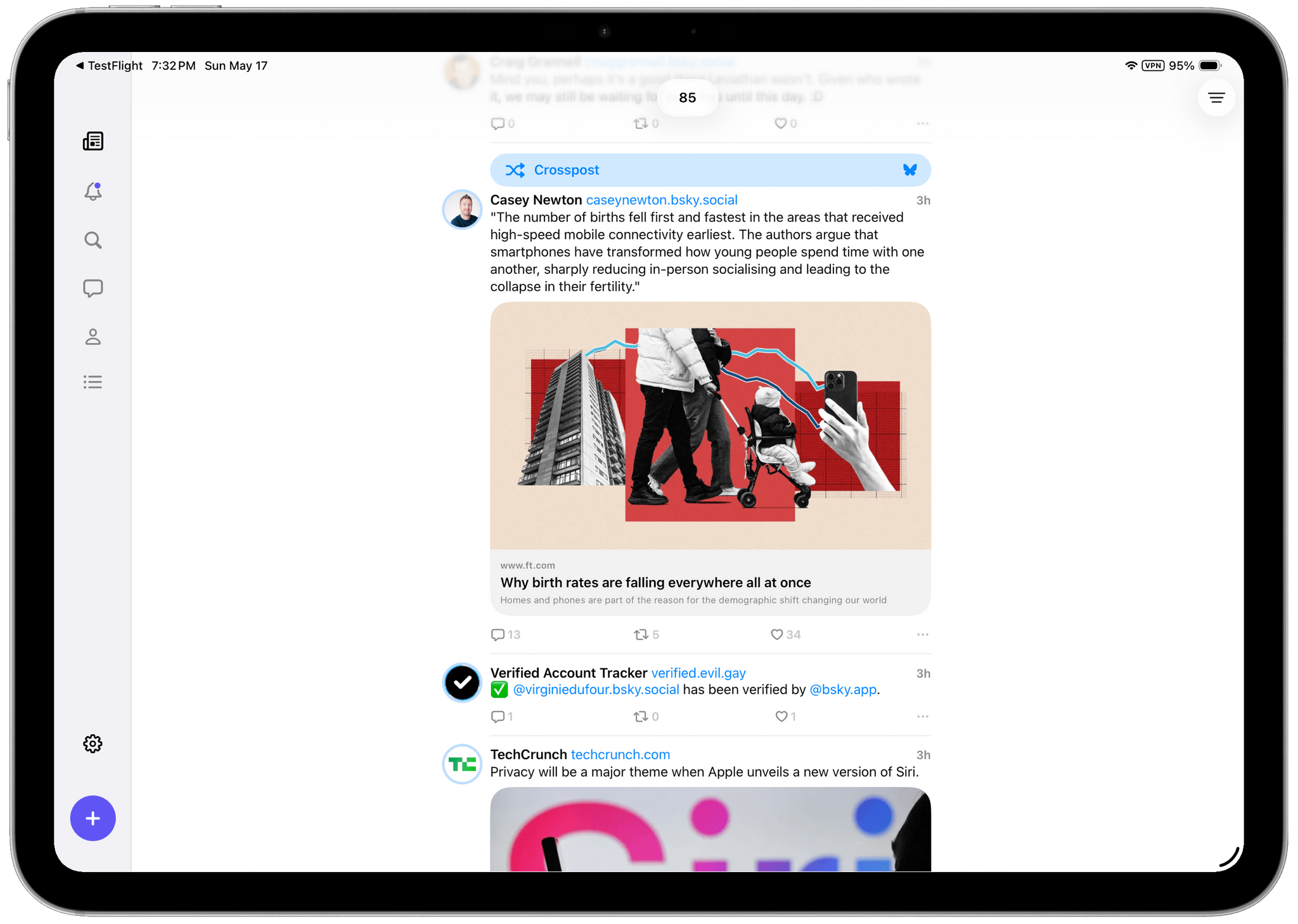

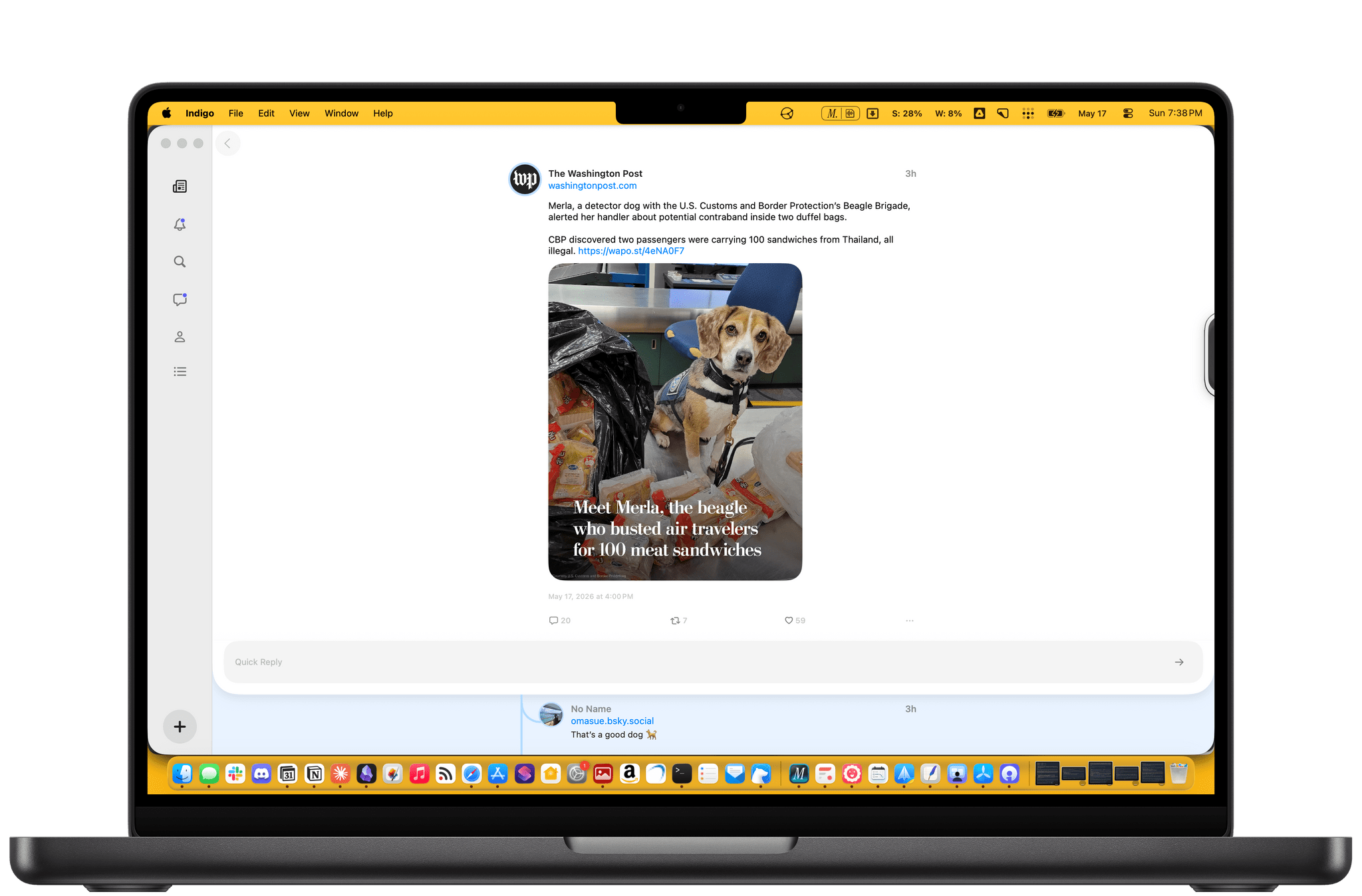

Indigo: A Clever Mashup of Bluesky and Mastodon in One Timeline

Last week, Soapbox Software (Ben McCarthy and Aaron Vegh) released Indigo, an iPhone, iPad, and Mac app that offers a unique take on social media, allowing you to log into both Bluesky and Mastodon in a single app. In the increasingly fractured social media landscape we live in, it’s a fantastic idea. Instead of bouncing back and forth between two services that have a lot of overlap for some users, why not use just one?

This isn’t Soapbox’s first collaboration. You may recall Croissant, the cross-posting utility that I covered when it was released in 2024. We were so taken by the app that we gave it the Best New App award in the 2024 MacStories Selects Awards. That pedigree shows in what is a much deeper and more complex app.

Like Croissant, Indigo lets you cross-post to Bluesky and Mastodon and is beautifully designed. But unlike Croissant, Indigo is a full-blown timeline app for catching up on your Bluesky and Mastodon feeds at the same time.

Depending on who you follow on each service, a mashup of the two has the potential to generate a timeline full of duplicates, but Soapbox took that into account with Indigo. There’s no need to change who you follow or make any other sort of adjustment yourself; instead, the app automatically detects duplicate posts and removes them from sight. However, if for some reason you want to see both, the duplicate post is always available behind the tap of a Crosspost button. It’s a great feature that alerts you to the fact that one of your timelines has been altered while also giving you the chance to check out the other post.

Other touches, such as the color of links, provide subtle clues to convey a post’s provenance, but the shades of blue and purple used are close enough that you might not notice the difference until you run across a Crosspost button. I also appreciate the separate character limit countdowns for each service on the New Post screen, which let you know when you’re going to have to forgo Bluesky for a chattier Mastodon post. Fortunately, the app lets you post to just one service or the other if you’d like by tapping on the character countdown.
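To make the countdown idea concrete, here is a minimal sketch of how per-service character budgets could be tracked. It is not Indigo’s code: the function names are hypothetical, the limits are Bluesky’s 300 characters and Mastodon’s default of 500 (which individual servers can change), and `len()` is a simplification because the real services count links and some emoji differently.

```python
# Assumed limits: Bluesky's 300 characters and Mastodon's default of 500.
# Mastodon servers can configure a different limit.
LIMITS = {"Bluesky": 300, "Mastodon": 500}


def remaining(text, limits=LIMITS):
    """Return characters left per service; a negative value means over the limit."""
    # len() counts code points, which only approximates how each service counts.
    return {service: limit - len(text) for service, limit in limits.items()}


def postable_services(text, limits=LIMITS):
    """Return the services whose limit the draft still fits within."""
    return [service for service, left in remaining(text, limits).items() if left >= 0]


draft = "x" * 350  # stand-in for a 350-character draft
print(remaining(draft))          # {'Bluesky': -50, 'Mastodon': 150}
print(postable_services(draft))  # ['Mastodon']
```

A 350-character draft is 50 over on Bluesky but has 150 to spare on Mastodon, which is exactly the moment when posting to one service alone makes sense.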

All of the other core features you’d expect are available, too. Photos, videos, and GIFs are supported, as are @mentions and hashtags. You can filter who can see your post and who can reply to it, with some inherent differences in the underlying services’ support for those features. The app also includes search, notifications, direct messages, profile viewing, and a bunch of settings you can tweak. That said, power users of apps like Ivory may feel a little constrained in Indigo. It’s an excellent 1.0, but it doesn’t yet match the full functionality of Ivory.

Indigo strikes me as a good solution for a couple of different types of users. If you want a simple, beautifully designed way to read your Bluesky or Mastodon timeline, this is a great one. While the cross-posting and deduplication features are what will set Indigo apart for many, it works well as a standalone option for either service.

However, I expect the core audience will be people who use both Bluesky and Mastodon and follow many of the same people in both places. Especially if the people you follow cross-post a lot, Indigo greatly improves the experience.

I’ve enjoyed playing around with Indigo for the past few weeks and noticed a couple of things. Despite following roughly the same number of people on both services, the Bluesky accounts I follow are a lot chattier than those on Mastodon. I also have far fewer Crosspost buttons in my timeline than I expected. I guess I just follow very different accounts on each.

If you’ve ever felt the fatigue of jumping back and forth between a Bluesky and Mastodon timeline and found it hard to keep up with both, be sure to give Indigo a try. It makes the entire experience much nicer. You can download Indigo from the App Store on iPhone, iPad, and Mac and unlock its full feature set by purchasing the Ultraviolet tier, which costs $4.99/month, $34.99/year, or a one-time payment of $119.99.

Pedometer++ 8: Glimmers of an Apple Wrist Renaissance

Today, when you mention David Smith’s name, most people probably think of Widgetsmith, his runaway success that caught fire on TikTok and is still going strong. But for me, Pedometer++ is what comes to mind first. Still a couple of years away from releasing my own apps or writing at MacStories, I was fascinated by the dynamics that made the app a success when it debuted in 2013. Part of that success was how quickly David got it onto the App Store in the wake of the iPhone 5s and its M7 coprocessor that made step counting possible.

It didn’t hurt that Pedometer++’s initial release was also free (and the core features still are), but the app’s elegant, simple design played a big part, too. Pedometer++ appealed to a wide audience who appreciated its focus and frequent updates that systematically took it from basic step counting to badges, confetti, workouts, maps, and more. It’s a great example of a developer who jumped on a new hardware feature quickly with a focused initial release and then relentlessly iterated year after year without sacrificing what made that first version a favorite of so many people.

Today’s 8.0 release is focused first and foremost on the Apple Watch, which is the other aspect of so many of David’s apps that I appreciate. Few people know the ins and outs – and frustrations – of watchOS (née WatchKit) development like David does. But despite the platform’s rudimentary beginnings, David has stuck with it, making the best watch version of Pedometer++ that was possible with each turn of the SDK and, later, OS. That’s as true with version 8.0 of the app as it has ever been.

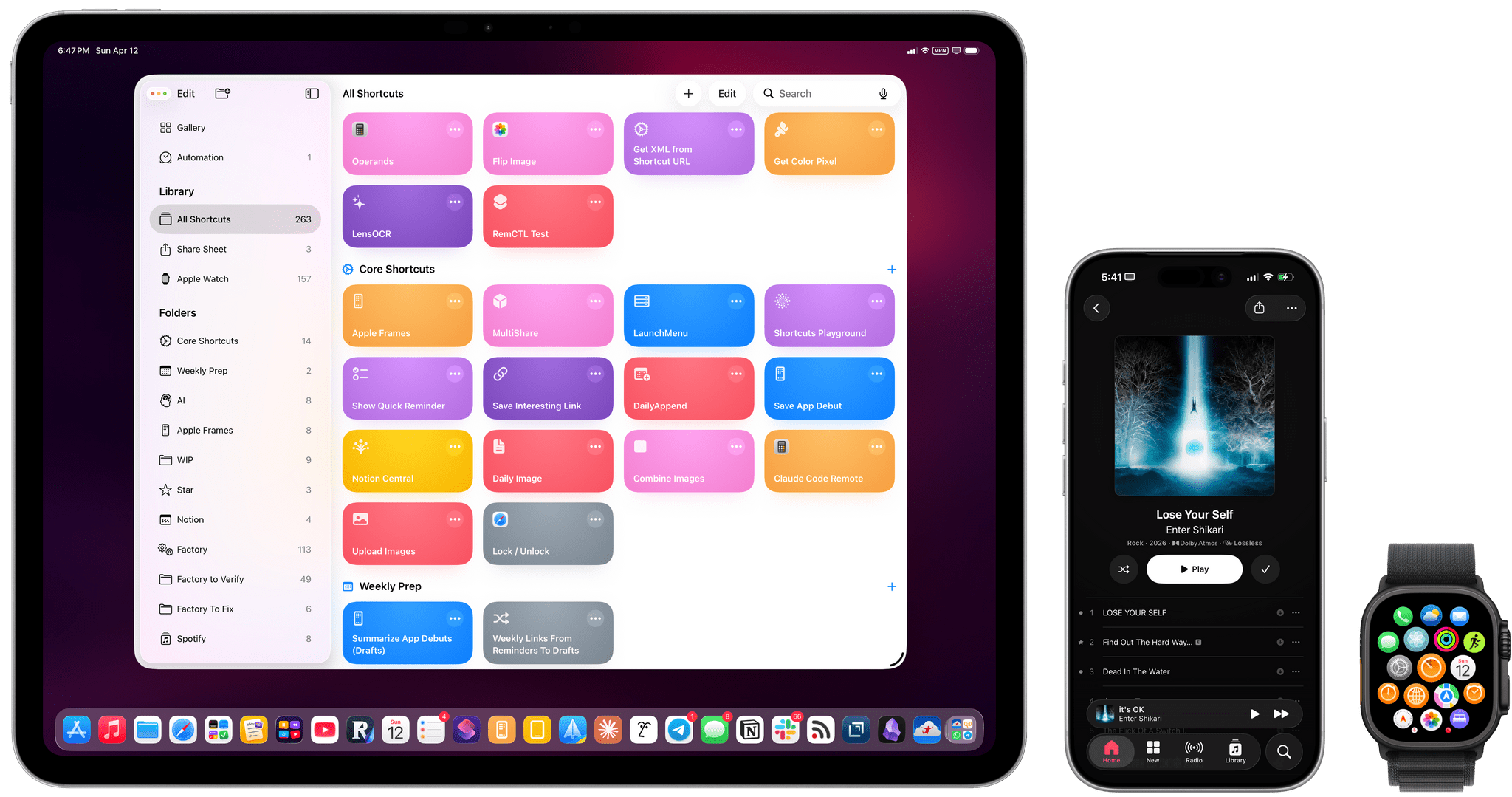

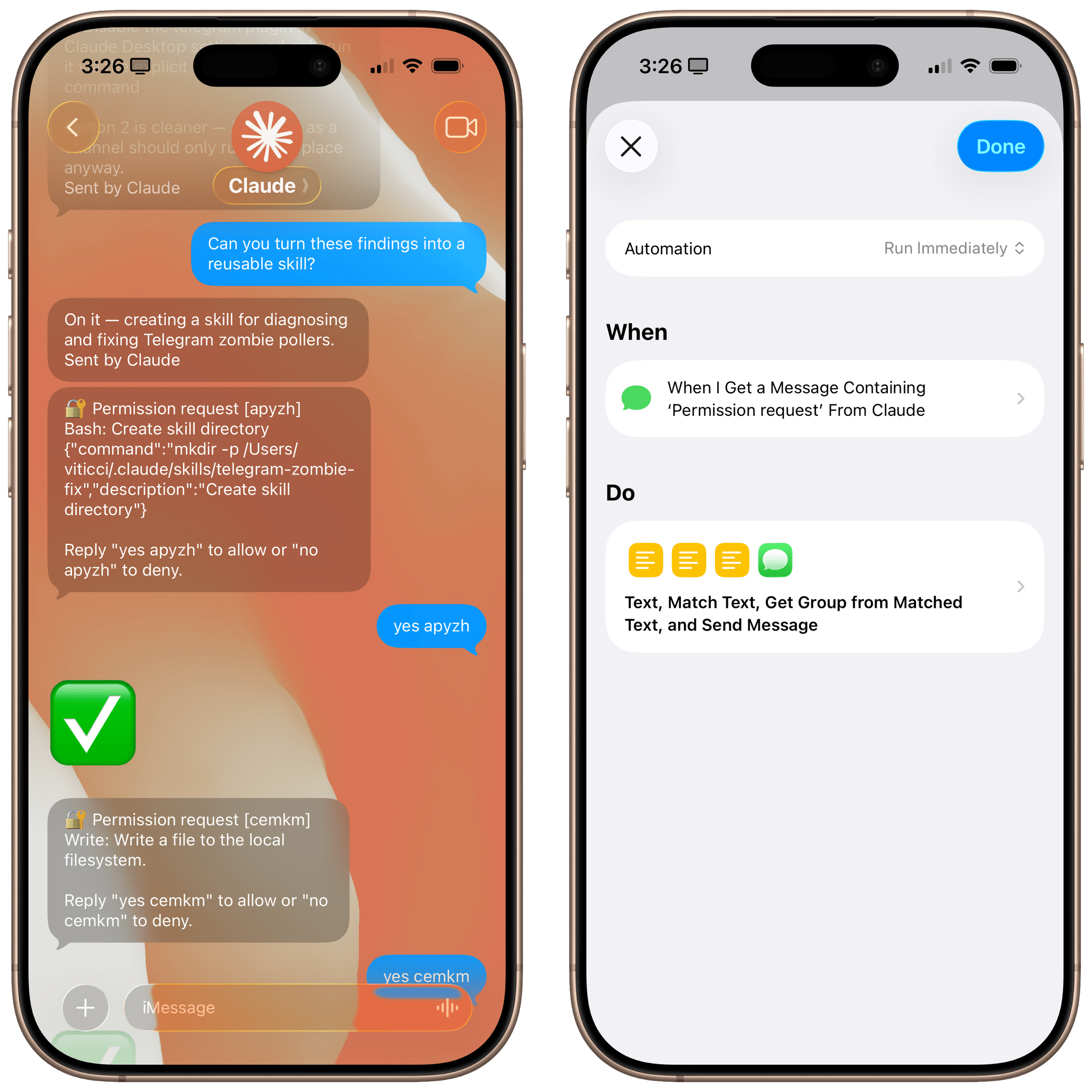

Introducing Apple Frames 4: A Revamped Shortcut, Support for Frame Colors, Proportional Scaling, and the Apple Frames CLI for Developers

Well, it’s been a minute.

Today, I’m very happy to introduce Apple Frames 4, a major update to my shortcut for framing screenshots taken on Apple devices with official Apple product bezels. Apple Frames 4 is a complete rethinking of the shortcut that is noticeably faster, updated to support all the latest Apple devices, and designed to support even more personalization options. For the first time ever, Apple Frames supports multiple colors for each device, allowing you to mix and match different colored bezels for each framed screenshot; it also supports proportional scaling when merging screenshots from different Apple devices.

But that’s not all. In addition to an updated shortcut, I’m also releasing the Apple Frames CLI, an open source command-line utility that lets developers and tinkerers automate the process of framing screenshots directly from the Mac’s Terminal. And there’s more: the Apple Frames CLI is also designed to work with AI agents, and it comes with a Claude Code/Codex skill that lets coding agents take care of framing dozens or even hundreds of screenshots in just a few seconds, from any folder on your Mac.

Apple Frames 4 is the result of an idea I had months ago that enabled me to remove more than 500 actions from the shortcut, going from over 800 steps down to ~300. I did all that work manually, but it was worth it; the improved shortcut is faster and vastly more reliable than before thanks to more intelligent logic that adapts to the growing ecosystem of Apple screen sizes and display resolutions.

Apple Frames 4 and the Apple Frames CLI represent a substantial step forward for screenshot automation, and I’ve been using both extensively for the past few weeks.

Let’s dive in.

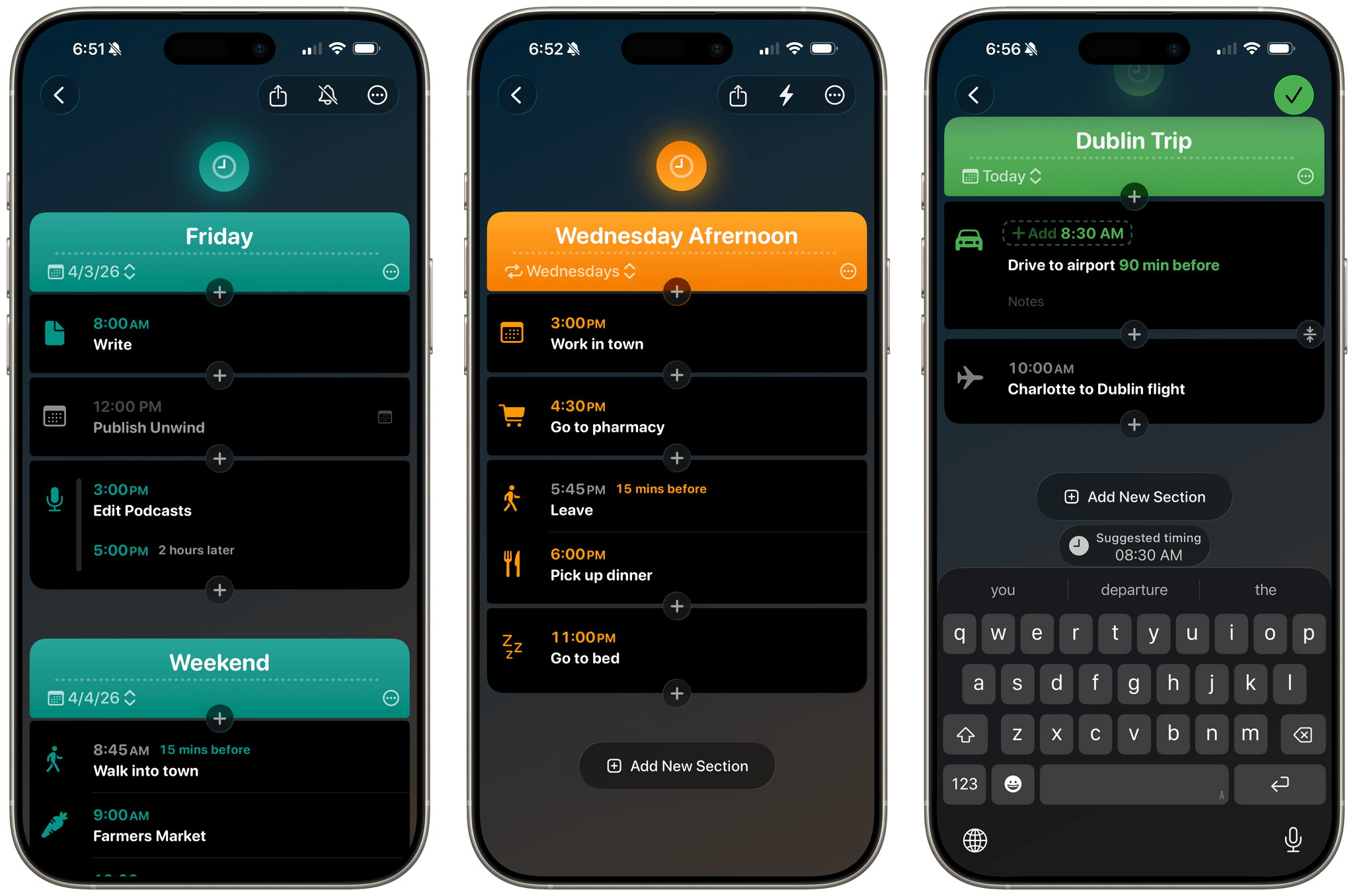

Hour by Hour: Reverse Engineering Your Schedule

Hour by Hour, from Joe Humfrey of Selkie Design, is a clever new approach to scheduling your time that took me a little while to get used to but has really grown on me.

The app was inspired by travel planning and the age-old question, “When should I leave for the airport?” You’ve probably been there before. You have a flight at, say, 2:00 pm, but you need to drive 30 minutes to the airport, add some time to park, take a shuttle to the terminal, get through security, and build in a little extra wiggle room just in case traffic is bad or something else goes sideways. Suddenly, 2:00 pm becomes an exercise in mental gymnastics as you work your way back to when you should walk out the door.

Hour by Hour solves this sort of scheduling, but for every type of event, by using the same kind of reverse planning. At the same time, it’s not really a calendar app so much as a scheduling companion for your calendar. You can pull your calendar events into Hour by Hour, but you don’t have to, and if you dive into the app expecting to use it the same way you use a traditional calendar, the assumptions you bring with you will probably trip you up.
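The reverse planning described above is simple arithmetic, and a short sketch shows the idea. This is not how Hour by Hour is implemented; the `reverse_plan` function is hypothetical, and apart from the 2:00 pm flight and the 30-minute drive, every duration and the date are made-up values for illustration.

```python
from datetime import datetime, timedelta


def reverse_plan(deadline, steps):
    """Work backward from a fixed deadline.

    steps is a list of (label, minutes) in the order the steps happen.
    Returns (label, start_time) pairs in chronological order.
    """
    schedule = []
    cursor = deadline
    # Walk the steps from last to first, subtracting each duration.
    for label, minutes in reversed(steps):
        cursor -= timedelta(minutes=minutes)
        schedule.append((label, cursor))
    schedule.reverse()
    return schedule


flight = datetime(2026, 1, 1, 14, 0)  # hypothetical date, 2:00 pm flight
steps = [
    ("Drive to the airport", 30),     # from the example above
    ("Park", 10),                     # assumed duration
    ("Shuttle to the terminal", 15),  # assumed duration
    ("Security", 45),                 # assumed duration
    ("Wiggle room", 20),              # assumed duration
]

plan = reverse_plan(flight, steps)
for label, start in plan:
    print(start.strftime("%H:%M"), label)
```

With these numbers, the steps add up to two hours, so the answer to “When should I leave for the airport?” is 12:00.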

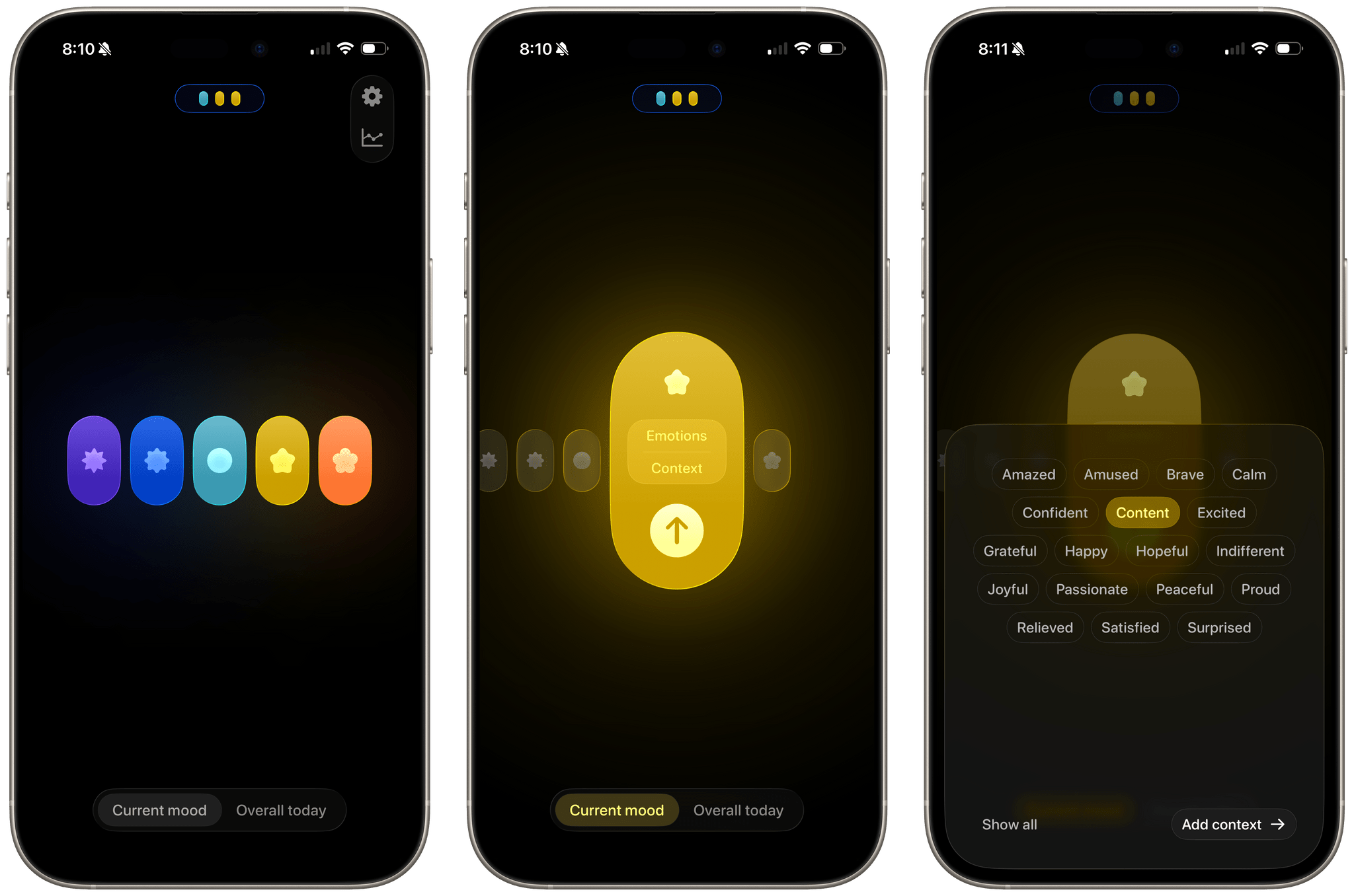

Moods Faster: Effortless Mood Tracking

I’ve said over and over that the most important feature of any habit tracker is being able to get in and out of the app quickly. For some developers, that doesn’t always come naturally. After all, doesn’t every developer want their customers to use their app more than others? Sure they do, but it’s not always the right instinct.

That’s something Nick Leith has understood for a long time. Leith is the developer of Remind Me Faster, a companion app for Apple Reminders that accelerates task entry. I’ve moved in and out of Reminders annually for my macOS reviews and every time, the first app I download after the move-in is Remind Me Faster because it makes using Reminders much easier.

Leith has been thinking about how to make data entry simple and fast for years thanks to that app, and it shows with his brand new app, Moods Faster (Get it? Moods Faster -> Move Faster). Okay, you probably didn’t need that nudge, but I like the name. It’s fun.

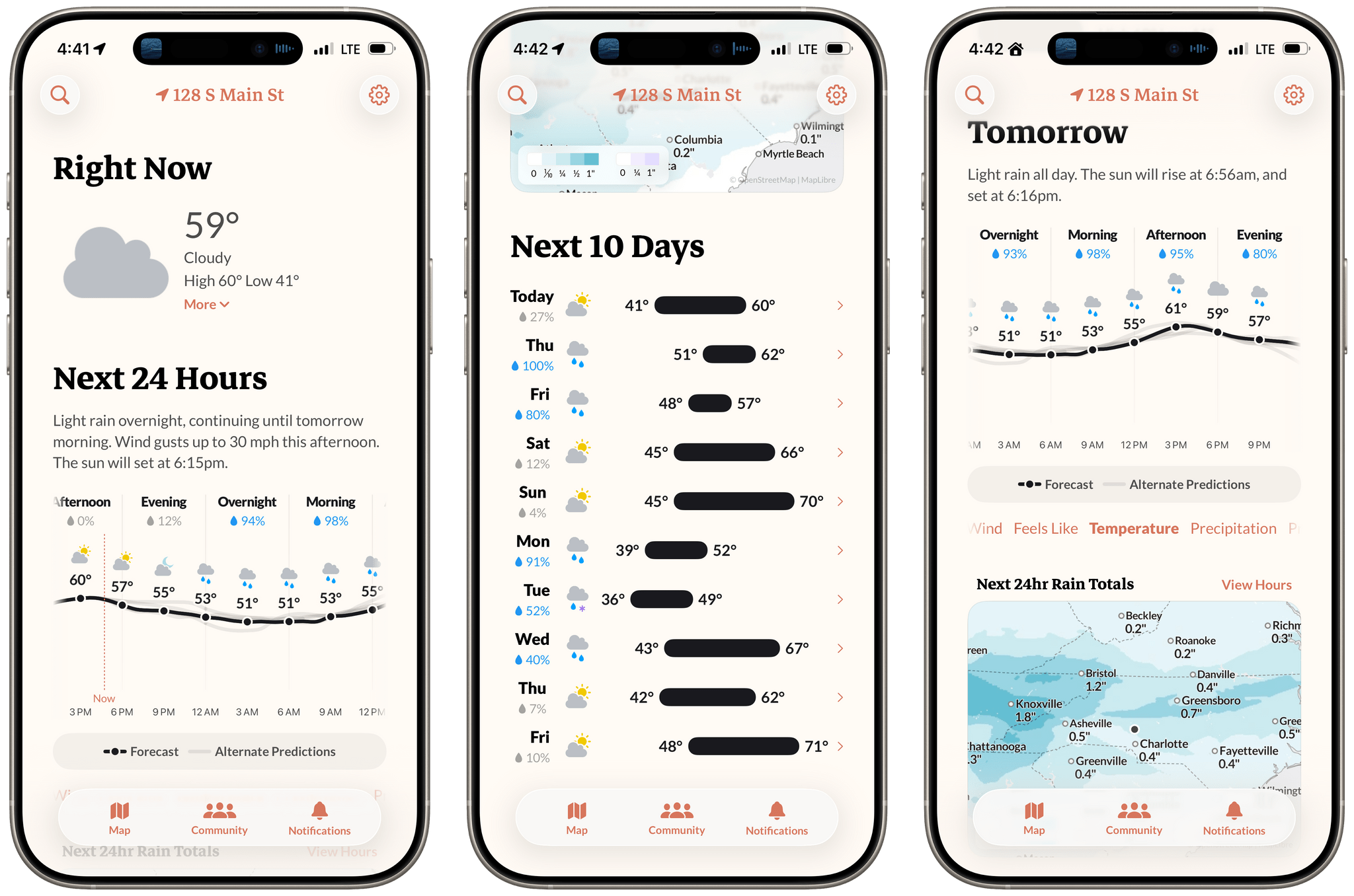

Acme Weather: A Fresh Take on Forecast Uncertainty

Earlier this week, the founders of Dark Sky made their post-Apple debut with a new weather app for the iPhone and Apple Watch: Acme Weather. It’s a terrific 1.0 with all the details you’d expect, plus a few interesting features that set it apart from other apps in its category.