Today Apple released the second major revision to iOS and iPadOS 14 since their introduction in September. The previous major update, 14.1, was largely just for iPhone 12 support and bug fixes. iOS and iPadOS 14.2 are packed with quite a few nice new additions to the operating system, and are available to the public as of this morning.

New Emoji and Wallpapers

iOS 14.2 packs over 100 new emoji, including a smiling face with a tear[1], a ninja, a toothbrush, and a pickup truck. Emojipedia covered the new emoji earlier in the beta cycle, and of course Federico attempted to guess all of their official names on an episode of Connected. My personal favorite is the new mousetrap emoji, which reminds me of the leprechaun traps that my sister and I used to set up the night before St. Patrick’s Day when we were growing up.

Eight new still wallpapers have also been released in today’s update. Some are photos of mountainous regions while others are scenic illustrations. Each new wallpaper includes a variation for light and dark mode.

Magnifier with People Detection

Apple has shipped a first-party app called Magnifier on iOS for years now, but it’s hidden by default until you enable it from the Accessibility panel in Settings. The Magnifier app shows a view through the camera, but does not save images to the camera roll. Instead, its purpose is to help visually impaired users to better see their surroundings. Users can set the camera’s zoom level, or can snap an image and then zoom in and out of it. Options also exist to set color filters and adjust brightness.

In iOS and iPadOS 14.2 the Magnifier app is receiving a major update with a feature called “people detection.” This feature takes advantage of the LiDAR sensor on iPhone 12 Pro and the latest iPad Pro models to identify people and other objects in real time. If users have VoiceOver enabled, the updated Magnifier app will audibly call out the objects and people that it identifies, which helps visually impaired users better navigate their surroundings. People detection with LiDAR also monitors the distance between the user and their surroundings, and can communicate how close objects or other people are using sound or haptics.

Apple is billing people detection as a major breakthrough in visual accessibility. Unsurprisingly, accessibility expert Steven Aquino has an excellent rundown of the feature over at Forbes.

New AirPlay Controls

The controls for AirPlay devices have been redesigned in iOS 14.2, but I still find them to be a bit confusing. For starters, the new interface is now slightly different depending on whether you open it from the Lock Screen or from Control Center. If you tap the AirPlay button from Control Center’s audio playback section, you’ll still see playback controls above the AirPlay options. If you open AirPlay controls from the Lock Screen’s Now Playing interface though, playback controls are not present. I’m not sure why this discrepancy exists, as the interfaces are otherwise identical. Having playback controls always available is nice, so I hope Apple will eventually add them to the Lock Screen AirPlay interface too. (The Lock Screen version of the interface is also used when tapping the AirPlay button in the Music app.)

The new AirPlay interface now hides control of other devices behind a button labeled “Control Other Speakers & TVs.” Tapping that button opens a list of available devices that you can control. Past versions of the AirPlay interface automatically showed other devices in a list below your selected one, which always felt a bit confusing if multiple devices were playing at once. Tapping the new button and then selecting an option switches the whole interface to controlling that device.

Once a device is selected, if playback is active you’ll see the audio’s artwork shown at the top. If nothing is playing in the Control Center or Lock Screen AirPlay interfaces then you’ll see a grid of suggestions from Apple Music. Federico also covered the new AirPlay controls in his iOS 14 review. Make sure to check that out for a closer look at the new changes, as well as more information on how the suggestions feature works.

Scene Detection and Auto FPS

Another new feature targeting devices with LiDAR sensors, scene detection improves exposure of photos taken in the Camera app. Using machine learning and LiDAR, scene detection can intelligently identify objects in the camera frame and adjust exposure settings in real time to make the image look better.

I haven’t been able to test scene detection since it doesn’t seem to be present at all on non-LiDAR devices like my iPhone 11 Pro. In my experience though, Apple’s intelligent photo improvement technologies always tend to work great for me. If you do have the need to disable scene detection on a device that supports it, you can do so from the Camera page in the Settings app (the setting will only show up if your device supports the feature).

iOS 14.2 also introduces auto FPS for videos taken in the Camera app on the new iPad Air. This feature will intelligently alter the frame rate of videos to optimize quality in low light, as well as to conserve storage space.

Shazam Toggle in Control Center

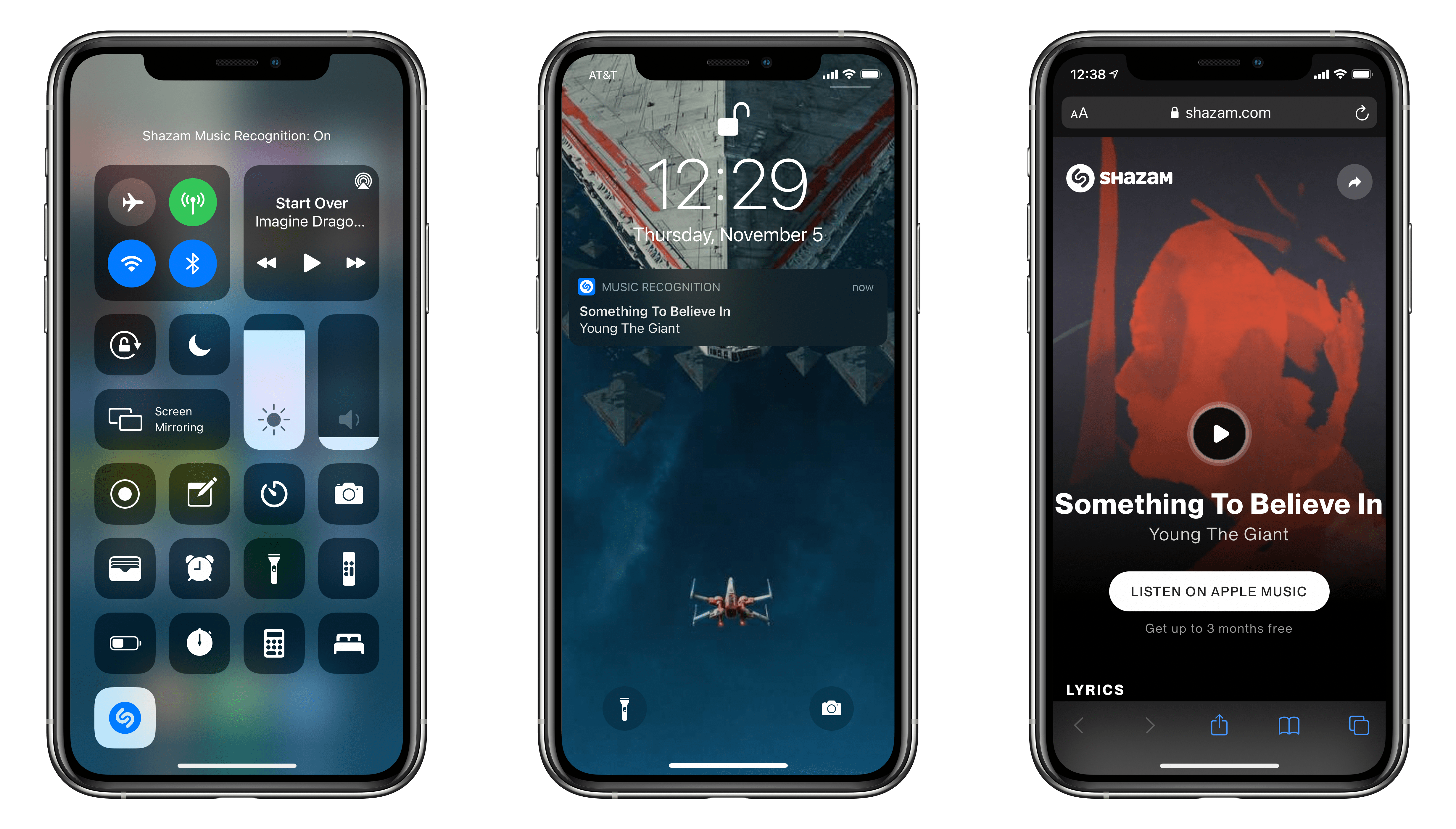

In the Control Center panel of the Settings app you can now add a new option called Music Recognition. This will enable a button in Control Center that activates the Shazam music recognition feature that has previously been available by asking Siri. Your device will listen for a few moments and then show a notification with the song that it has discovered.

Interestingly, tapping the song opens a Safari webpage to Shazam where you can tap to listen to the song in Apple Music. I’m not entirely sure why the integration isn’t able to open directly to Apple Music from the notification, as I think that would be a bit nicer of an experience.

The new Shazam toggle can also identify music that is playing through your headphones. This means you can pick up background tracks from videos that you’re watching without stopping the video playback. Federico covered this feature in his iOS 14 review as well, where he noted:

Apple went beyond embedding regular Shazam as a system feature in Control Center though. The real power of iOS 14.2’s music recognition lies in how it can recognize audio playing inside apps even if you’re wearing headphones and nothing is coming out of your device’s speakers. Shazam’s music recognition works with both external and internal audio sources – a first for Shazam on iOS, which could never recognize internal audio due to system limitations before. But since Shazam is now owned by Apple, the company has started integrating its music recognition engine across the system, so you’ll no longer have to make sure audio is playing through the speakers to recognize songs.

AirPods Battery Health Improvements

An update to AirPods in iOS 14.2 will alter the way charging works for the devices. Rather than staying topped off the entire time they’re on a charger, AirPods will now allow their charge to drop a bit before recharging back to full. This change has already been seen elsewhere in Apple’s product lines, and its purpose is to maintain the battery health of devices over time. It will be particularly nice if this feature meaningfully increases the lifespan of AirPods batteries, as degraded batteries cannot be replaced in AirPods.

Intercom for HomePod

John Voorhees covered the new Intercom feature on MacStories a few weeks ago. Previously Intercom was available on new iPhone 12 devices, but iOS 14.2 brings it to the rest of Apple’s lineup. You can enable Intercom in the Home app, and then control it from the Intercom button that will show up in the Home app’s top right corner. Make sure to check out John’s article for a full review.

Bug Fixes

Lastly, iOS 14.2 fixes a number of bugs that you may have run into. Here’s the list of notable bug fixes that Apple included in the update’s release notes:

- Camera viewfinder may appear black when launched

- The keyboard on the Lock Screen could miss touches when trying to enter the passcode

- Reminders could default to times in the past

- Photos widget may not display content

- Weather widget could display the high temperature in Celsius when set to Fahrenheit

- Voice Memos recordings are interrupted by incoming calls

- The screen could be black during Netflix video playback

- Apple Cash could fail to send or receive money when asked via Siri

Conclusion

iOS 14.2 seems like a very solid mid-cycle update. The new AirPlay controls are an improvement over what was there previously, and I really like the new Shazam toggle in Control Center. Especially when I’m in public, it always felt a little strange to ask Siri out loud what song was playing. Now that process can happen silently with the tap of a button.

The new people detection feature in the Magnifier app could be a breakthrough in visual accessibility, and I’m excited to see how Apple continues to utilize the new LiDAR sensors for features like this. Topping things off with new emoji and wallpapers leaves us with an exciting update only a month after iOS and iPadOS 14 shipped.

iOS and iPadOS 14.2 are available today from the Software Update section of the Settings app on all devices that support iOS and iPadOS 14.

[1] :’)