Today, Apple released iOS and iPadOS 15.4. The fourth major updates to iOS and iPadOS 15, originally released in September 2021, offer a long list of miscellaneous improvements and feature tweaks (which I will detail later in the story) as well as two major additions for iPad and iPhone users: the long-awaited Universal Control and the ability to use Face ID while wearing a mask, respectively.

I’ve been testing both iOS and iPadOS 15.4 since the first beta in late January, and I was able to spend some quality time with both of these features and everything else that is new and improved in these releases. Let’s take a look.

Universal Control

You’d be forgiven if you thought Universal Control was never going to ship and ended up dismissing it as vaporware. Introduced at WWDC 2021 and subsequently delayed to Spring 2022, Universal Control is finally here and, despite its long gestation, it’s labeled as a beta feature.

The underlying premise of Universal Control is simple and conceptually attractive: what if you could use the keyboard and trackpad of an iPad or Mac to control another nearby iPad or Mac, seamlessly moving the cursor between different platforms while keeping your hands on the keyboard? Unlike Sidecar, which allowed you to extend a Mac’s display by turning an iPad into a wireless receiver, Universal Control is the culmination of Apple’s love for platform integration and independence: you use one trackpad and one keyboard for both the Mac and iPad, but each device still runs its own OS. Universal Control was designed for people who like to use both macOS and iPadOS, alternating between the two operating systems in their daily workflows.

Obviously, I was intrigued by Apple’s proposition right away at WWDC 2021, but even more so once I started using a 14” M1 Max MacBook Pro (a review unit) a few months ago. This is a topic I will explore more in depth in a separate story, but, essentially, I now find myself regularly rotating between the MacBook Pro and iPad Pro for everything I do, whether it’s researching and writing articles in Obsidian, working on shortcuts for MacStories and Club MacStories, or preparing and recording podcasts. I don’t think I’m the only iPad Pro user who’s (re)discovered the joy of using macOS thanks to Apple silicon and the latest-gen MacBook Pros. Therefore, it’s not a surprise I would naturally gravitate toward Universal Control at this stage of my computing life.

Universal Control is pitched as a way to control an iPad or Mac from either device, meaning that you can also control a Mac from an iPad Magic Keyboard or keyboard-trackpad combo connected to an iPad. However, the fact that Universal Control’s preferences can only be managed from macOS seems to suggest the feature was originally conceived as a Mac-to-iPad integration rather than the other way around.

To get started with Universal Control, let’s round up the list of requirements. First, you’ll have to be running macOS Monterey 12.3 and iPadOS 15.4 on a compatible machine. The devices supported by Universal Control are:

- MacBook Pro (2016 and later)

- MacBook (2016 and later)

- MacBook Air (2018 and later)

- iMac (2017 and later)

- iMac (5K Retina 27-inch, Late 2015)

- iMac Pro

- Mac mini (2018 and later)

- Mac Pro (2019)

- iPad Pro

- iPad Air (3rd generation and later)

- iPad (6th generation and later)

- iPad mini (5th generation and later)

This is a fairly comprehensive list of modern Apple devices, so there’s a good chance your existing Mac or iPad supports Universal Control out of the box. It gets more interesting when it comes to software and wireless requirements. For Universal Control to work, these conditions apply too:

- Both devices must be signed in to iCloud with the same Apple ID and 2FA enabled;

- For wireless use, both devices must be within 30 feet of each other (10 meters) with Bluetooth, Wi-Fi, and Handoff enabled. The Mac and iPad cannot be sharing a cellular connection via Personal Hotspot;

- For wired use over USB, you must trust your Mac on the iPad.
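If it helps to see the wireless checklist above as logic, here is a minimal Python sketch. It is not an Apple API and it does not query real devices: the `Device` class, its fields, and the `wireless_blockers` function are hypothetical names I made up for illustration, and the only rules it encodes are the ones listed above (same Apple ID with 2FA, Bluetooth, Wi-Fi, and Handoff enabled, no Personal Hotspot sharing, and a 10-meter range).

```python
from dataclasses import dataclass


@dataclass
class Device:
    """Hypothetical snapshot of one Mac or iPad; every field name is illustrative."""
    name: str
    apple_id: str
    two_factor: bool
    bluetooth: bool
    wifi: bool
    handoff: bool
    sharing_hotspot: bool = False


def wireless_blockers(a: Device, b: Device, distance_m: float) -> list[str]:
    """Return the unmet wireless requirements; an empty list means none were found."""
    problems = []
    if a.apple_id != b.apple_id:
        problems.append("different Apple IDs")
    for d in (a, b):
        if not d.two_factor:
            problems.append(f"{d.name}: two-factor authentication is off")
        toggles = (("Bluetooth", d.bluetooth), ("Wi-Fi", d.wifi), ("Handoff", d.handoff))
        for label, enabled in toggles:
            if not enabled:
                problems.append(f"{d.name}: {label} is off")
        if d.sharing_hotspot:
            problems.append(f"{d.name}: sharing a cellular connection via Personal Hotspot")
    if distance_m > 10:
        problems.append("devices are more than 10 meters apart")
    return problems


# Example: an iPad with Handoff turned off sitting next to a correctly configured Mac.
mac = Device("MacBook Pro", "me@example.com", True, True, True, True)
ipad = Device("iPad Pro", "me@example.com", True, True, True, False)
print(wireless_blockers(mac, ipad, distance_m=2))
```

Returning a list of blockers rather than a single true/false value mirrors how you would troubleshoot this in practice: when Universal Control refuses to connect, it's usually one specific toggle that needs flipping.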

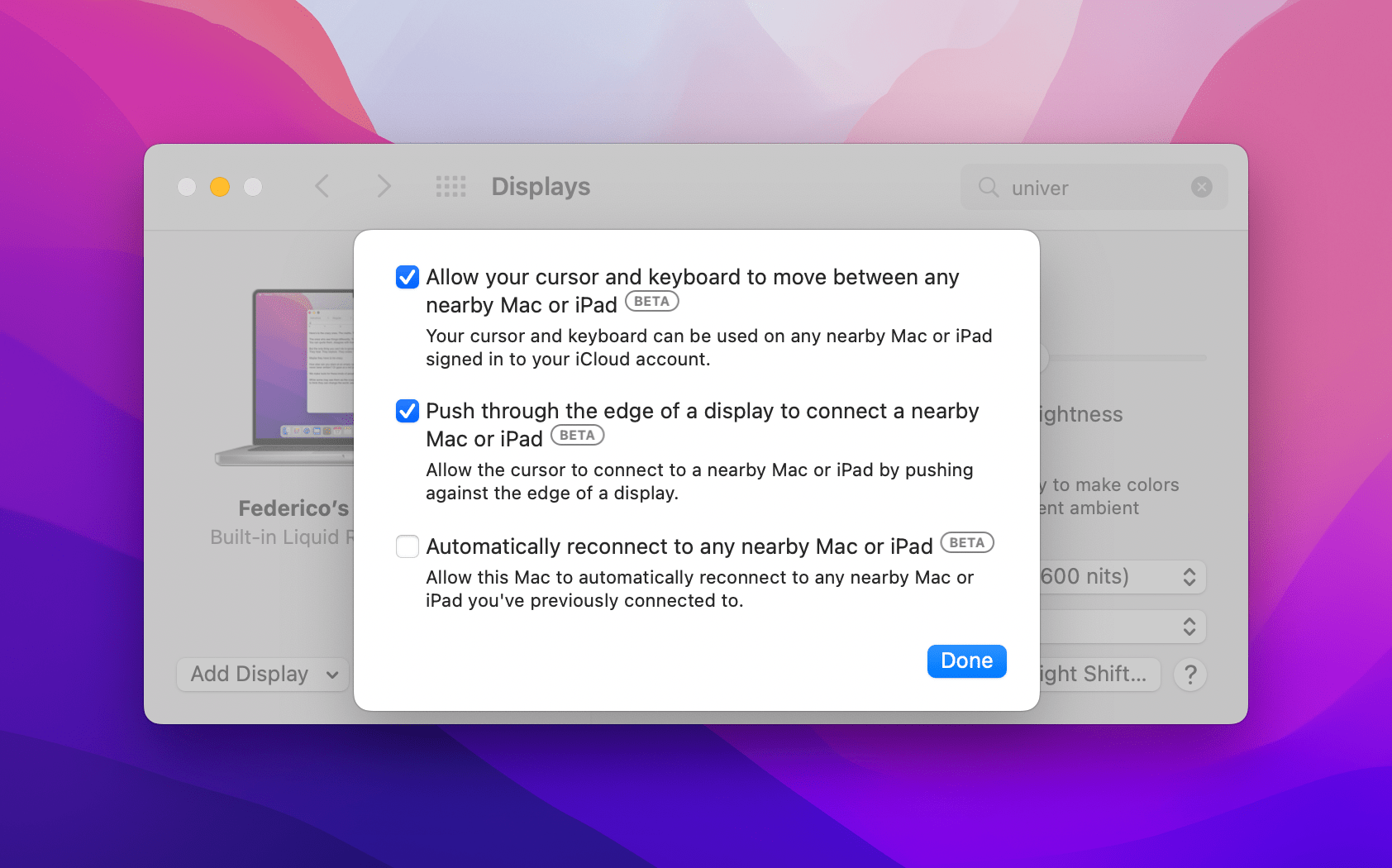

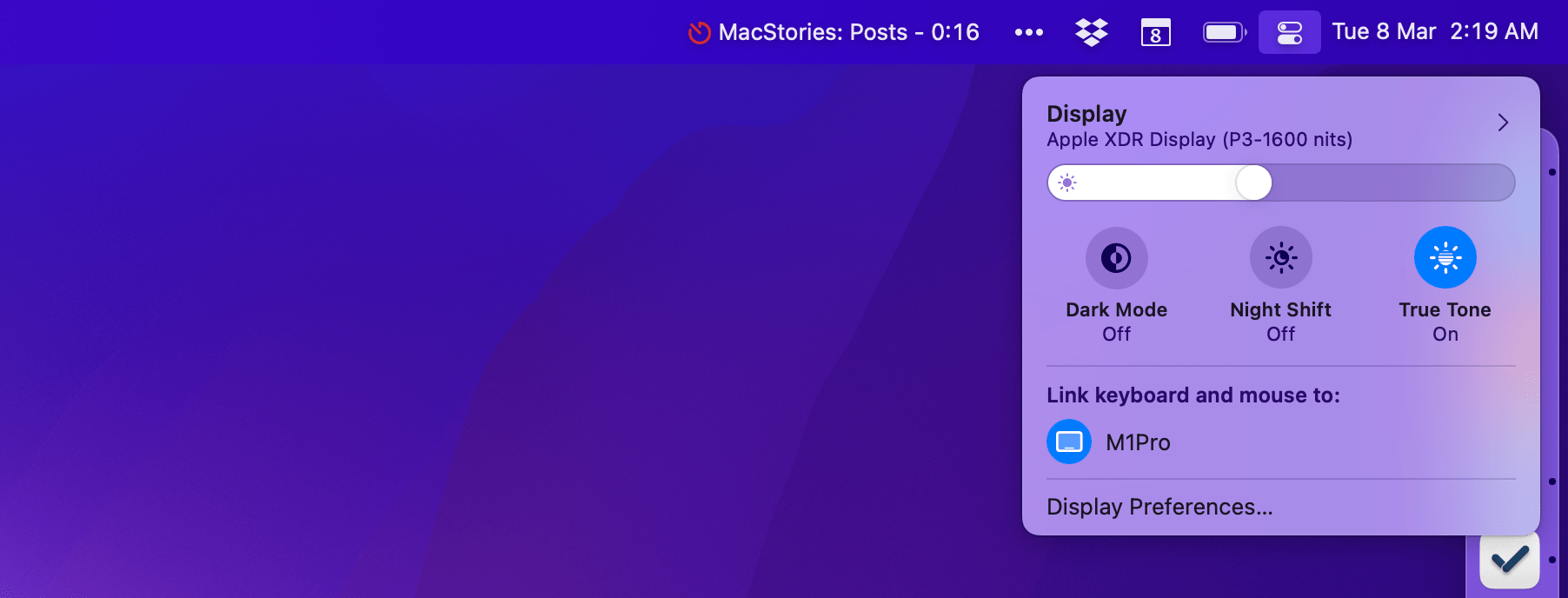

If you meet these criteria, you can start using Universal Control by heading over to System Preferences ⇾ Displays on your Mac and clicking the Universal Control button. You’ll be presented with the following options:

The first checkbox is a global switch that controls whether or not Universal Control is turned on. The second option lets you connect to a nearby device by pushing the cursor against the edge of the display; in my tests, however, disabling it had no effect on this behavior (I was always able to switch between devices by pushing against the edge), so I’m not sure what it’s supposed to do.

The third option allows you to automatically reconnect to a nearby iPad or Mac you were previously connected to. This one is disabled by default, so if you want to reconnect to a previous device, you’ll have to do so explicitly by pushing with the cursor over the edge of the screen toward where the other display was before. If you enable automatic reconnection, iPads and Macs that meet the aforementioned criteria will automatically connect and show up in the Displays preference pane as soon as they’re available. Based on these details, it’s clear that the best way to use Universal Control is to keep all three options turned on so you can achieve the seamless integration between platforms Apple has been marketing thus far.

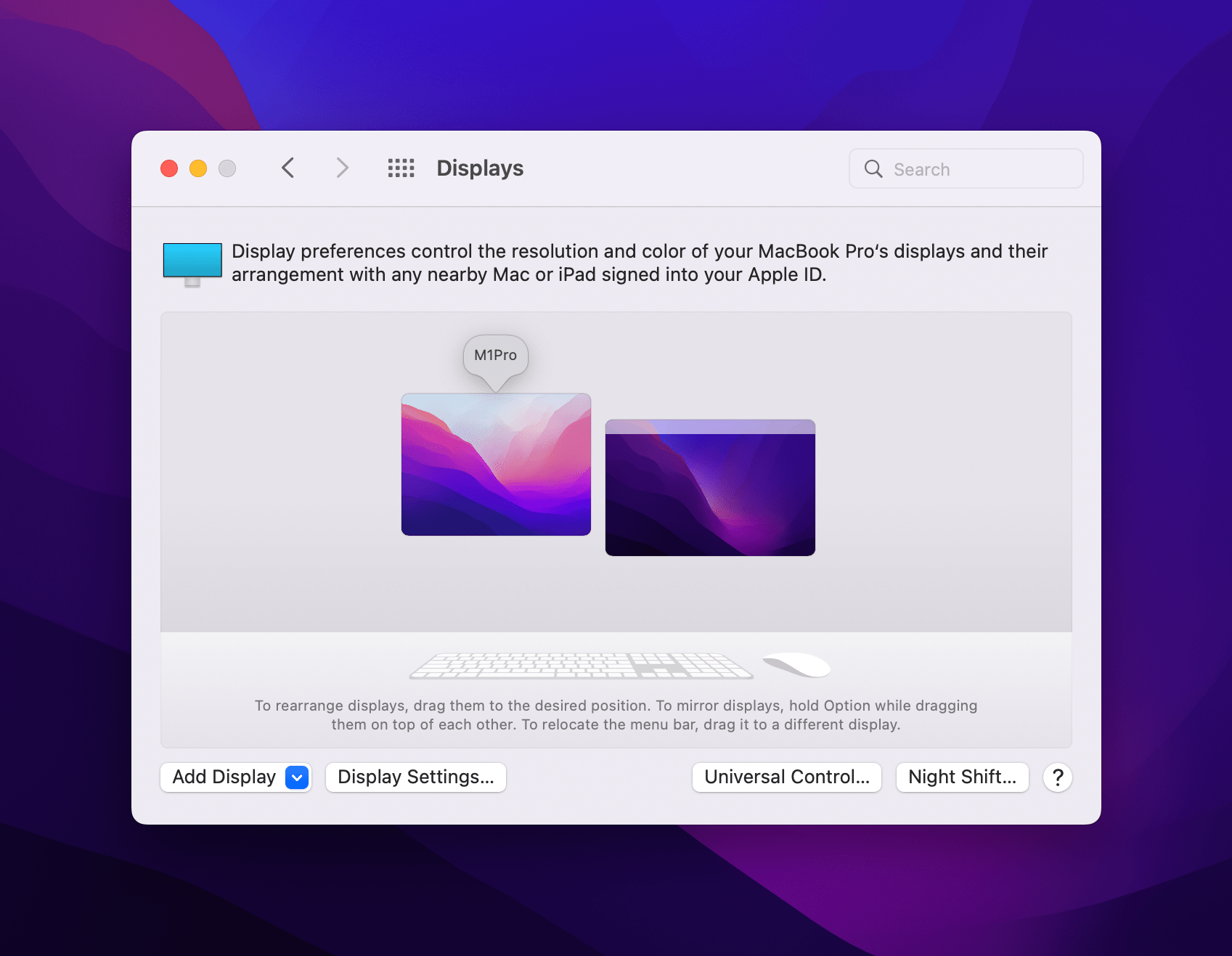

I was initially surprised by Apple’s decision to embed Universal Control’s options in the Displays section of System Preferences, but it makes sense: the same system you’ve long used to assign a placement to external displays for your Mac can also be leveraged to configure where a connected Mac or iPad should be placed relative to your host Mac. This way, if you want to, say, place an iPad Pro on the right side of your Mac but don’t want to give up the bottom-right Hot Corner on macOS for gesture activation, you can “raise” the iPad a little higher in System Preferences.

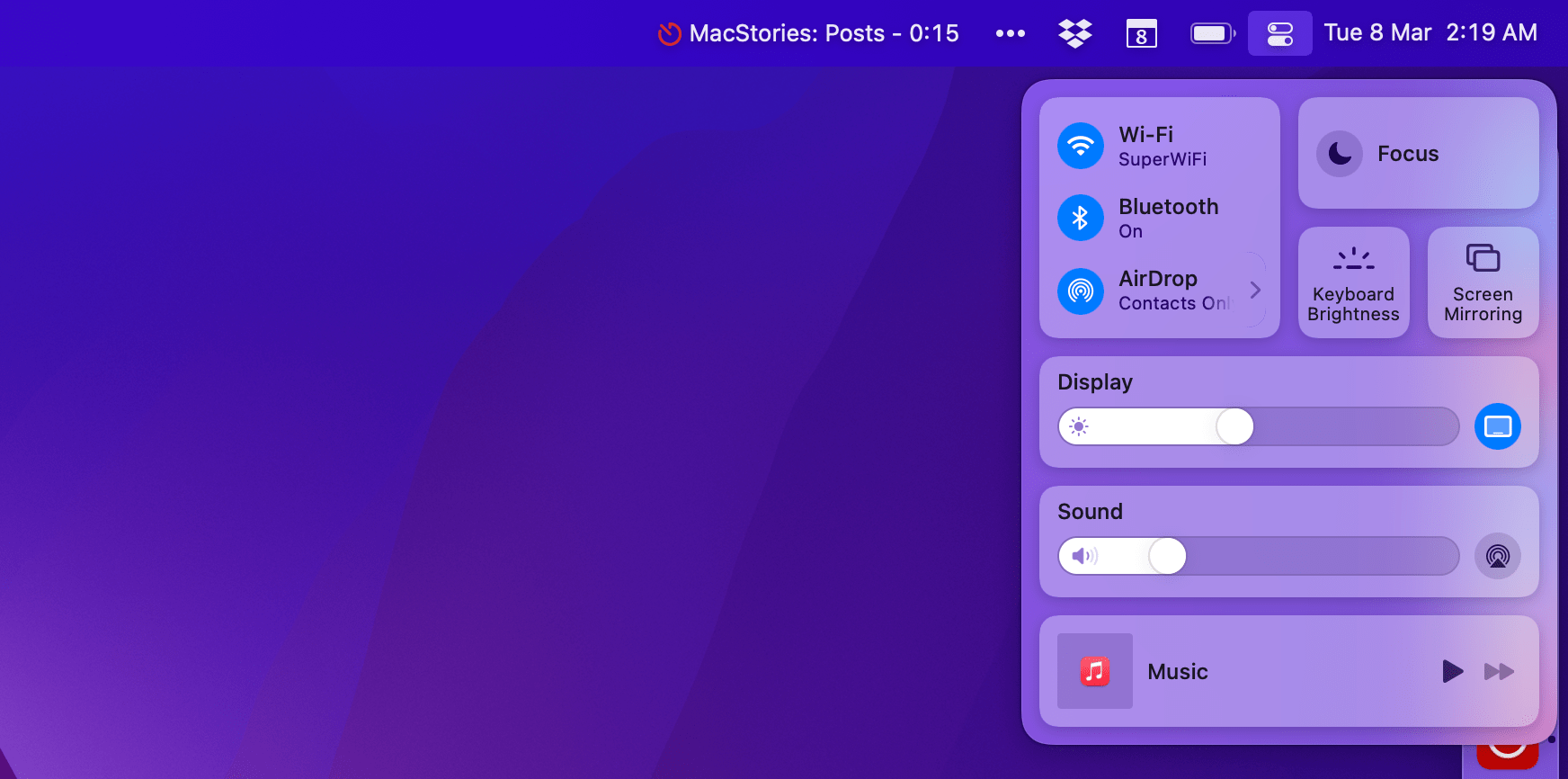

Fortunately, System Preferences isn’t the only place on macOS Monterey where you can activate Universal Control: you’ll also find a new icon in Control Center’s Display tile (which you can optionally pin to the menu bar) that you can click to open quick display settings anywhere on macOS. In this dropdown menu, you’ll find a new entry for ‘Link keyboard and mouse to…’ that lets you select devices eligible for Universal Control so you can manually connect to them from the menu bar.

Unsurprisingly, Apple hasn’t built any Shortcuts actions to reconnect to Universal Control devices.

Using Universal Control

In my tests over the past couple of months, I have exclusively used Universal Control over Wi-Fi in two different locations: at home with my modern Wi-Fi 6 network, and at Silvia’s childhood home in Viterbo, where they’re not using Wi-Fi 6. Additionally, I have used Universal Control in two configurations: MacBook Pro and 2021 iPad Pro, as well as MacBook Pro, iPad Pro, and iPad mini, since more than one iPad can participate in Universal Control.

When Universal Control works reliably, it’s the kind of feature that is indistinguishable from magic, and it’s changing how I think of my work setup split across the MacBook Pro and iPad Pro.

Here’s what I love about Universal Control: without physically relocating my hands from one keyboard to another, I can instantly “switch modes” and jump from macOS to iPadOS while keeping the interaction paradigm for each platform intact. This means that when the macOS cursor moves over to iPadOS, both keyboard shortcuts and gestures become the ones associated with iPadOS. ⌘Space triggers Raycast on my MacBook Pro, but it turns into Spotlight once I hop over to the iPad; a three-finger horizontal swipe does nothing on my Mac, but on the iPad, it lets me quickly move across recently used apps. And so forth for all kinds of gestures that exist on both iPadOS and macOS. With Universal Control, the same input device instantly adapts to the selected platform.

Years ago, I would have likely opposed this type of indirect manipulation of content on the iPad. That ship sailed two years ago with the Magic Keyboard and iPadOS 13.4. Because I’m so used to working with an external trackpad and keyboard on my iPad Pro now, performing iPad gestures on the MacBook’s trackpad doesn’t feel strange at all and I got used to it right away. If anything, the MacBook Pro’s larger trackpad makes those iPad gestures even more comfortable to perform. One could even say that the MacBook Pro’s keyboard and trackpad are the best external input devices you can get for an iPad Pro in 2022. (I’m kidding…to an extent. These folks may be onto something.)

I’ve gotten used to flipping between the Mac and iPad with Universal Control at this point, and I wouldn’t want to go back to physically moving my hands between keyboards. What’s also cool about Universal Control is that drag and drop operations are supported too. My favorite example: take a screenshot on iPad, then start dragging it onto the Mac’s desktop to save it as a PNG file in Finder. This kind of seamless integration “just works” with Universal Control; it makes you wonder why devices in the Apple ecosystem haven’t always worked this way.

That said, Universal Control is still a beta feature, which is why I used “when it works” above. Be prepared to run into bugs, occasional slowdowns, and hiccups that will require you to turn it off and on again if something gets stuck. For instance, Universal Control would visibly stutter and drop frames on Silvia’s old Wi-Fi network, and it was much faster and more reliable on my modern Wi-Fi 6 network in Rome. In my tests, I noticed that sometimes the pointer would successfully move from Mac to iPad but keyboard input would remain assigned to the Mac only. This has been a pretty common issue for me; to “fix” it, I have to lock my iPad Pro and unlock it again.

I also want to point out two issues I had with Universal Control that aren’t necessarily “bugs” but questionable decisions on Apple’s part. The ability to drag files between iPad and Mac is wild, but it works better when a file starts on the iPad and is dropped on macOS; doing so the other way around only highlights the lack of a real desktop on the iPad Home Screen and the inability to “park” items in a temporary shelf. If you want to quickly drag a file from macOS to iPadOS and save it there, you’ll have to open the Files app before dropping the file, which is awkward.

My other qualm is about how keyboard shortcuts work in Universal Control: for keyboard input to properly transfer from one device to another, you’ll have to click anywhere onscreen on the other device at least once. Otherwise, even if the pointer has moved to the second device, keyboard input will still remain active on the first one. I can’t tell you how many times I found myself moving the pointer from Mac to iPad and pressing ⌘Space only to find that Raycast was being triggered on macOS instead of Spotlight on iPadOS. I think this is confusing and Apple needs to improve Universal Control so that both pointer and keyboard input transfer at the same time the moment you switch displays. The current implementation is clunky and feels disorienting.

Despite some (understandable) beta issues, I’ve had a great time using Universal Control on my devices; this feature alone is a good enough reason to update to iPadOS 15.4 and macOS Monterey 12.3 as soon as possible.

Universal Control joins Universal Clipboard, Mac Catalyst, iCloud sync, and Universal purchases in the group of Apple technologies that make it possible to effortlessly move between platforms without sacrificing the aspects that make each unique. Universal Control feels like it was built exactly for users like me – folks who have embraced the dual Mac-iPad life thanks to Apple silicon over the past year. I’m glad Universal Control is finally out, relieved that it works about as well as Apple promised, and hopeful that it’ll only get better with time.

Face ID with a Mask

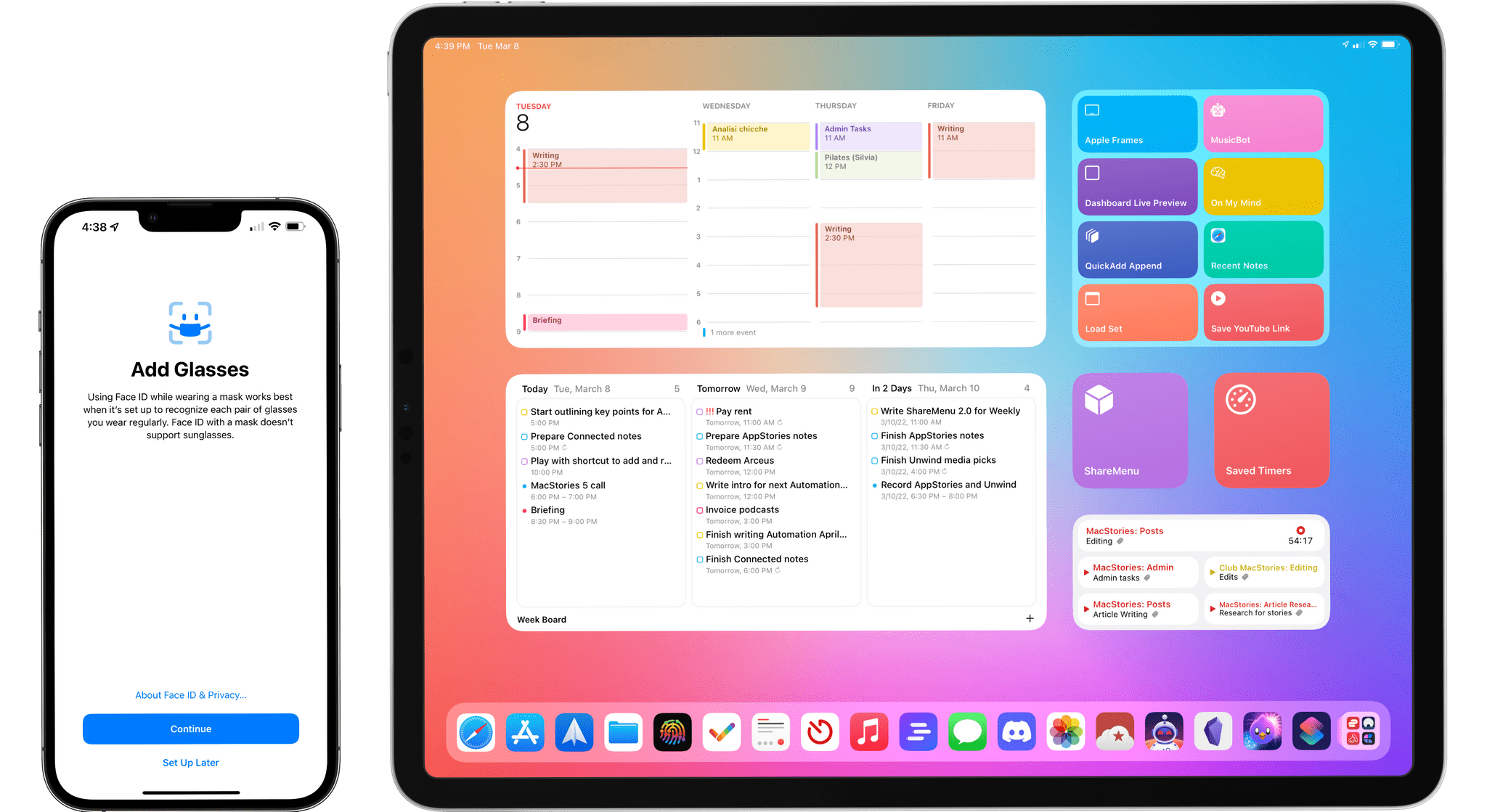

If Universal Control will push iPad users to upgrade to 15.4, there’s another major addition that will instantly convince millions of iPhone users to get iOS 15.4 immediately: Face ID with a Mask.

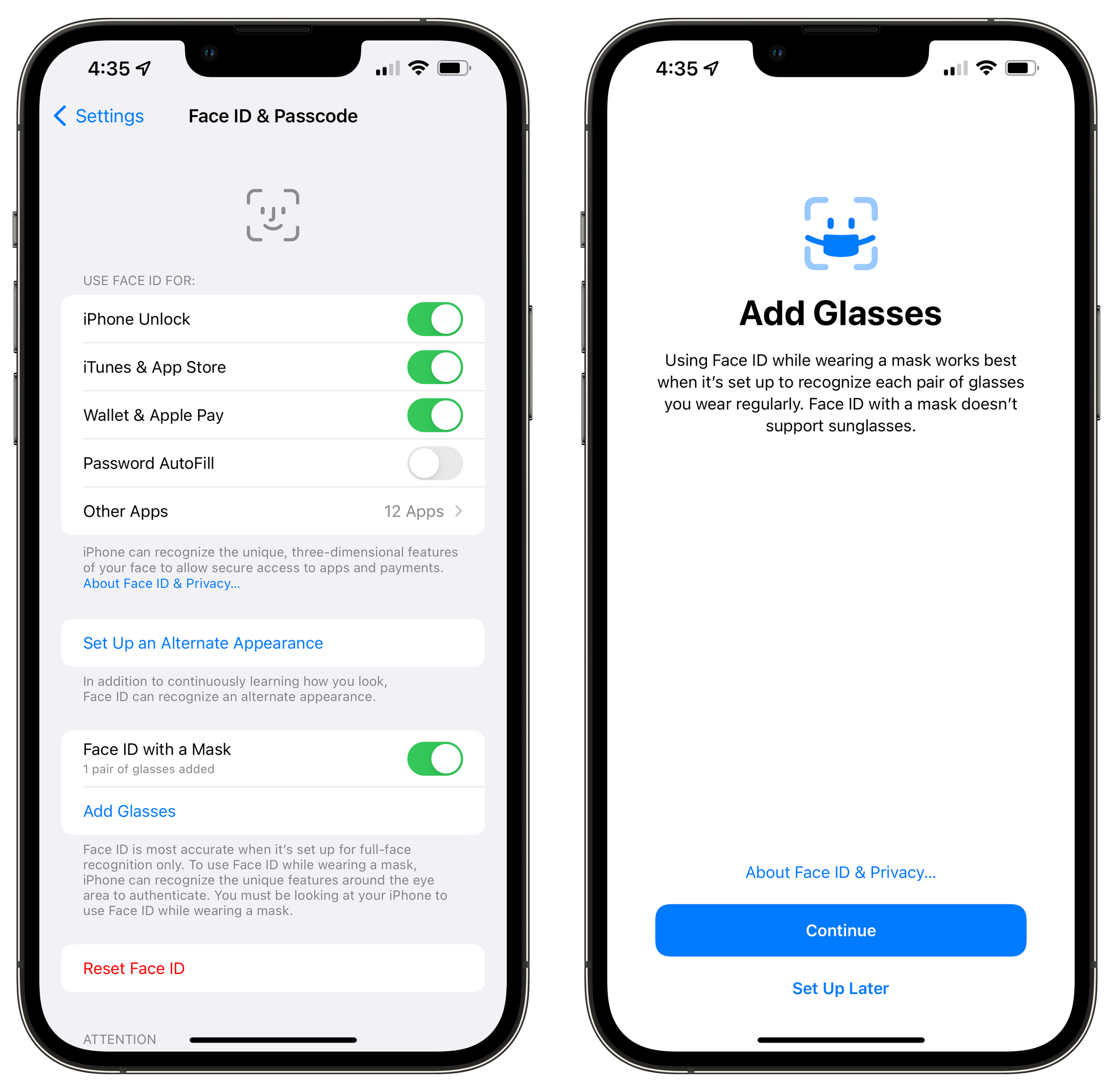

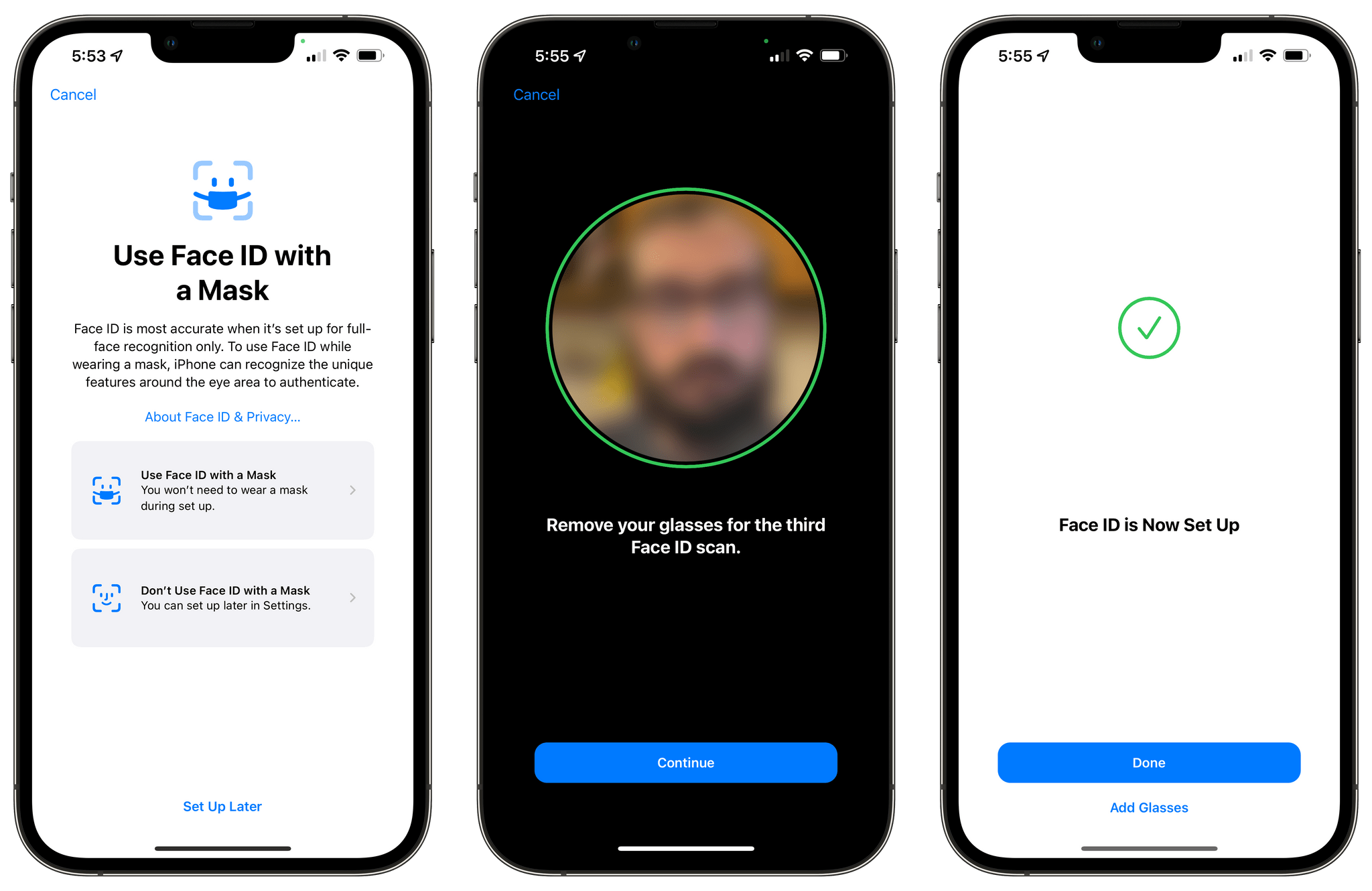

Starting with iOS 15.4, you’ll be able to unlock your iPhone with Face ID even if you’re wearing a mask by enabling the optional toggle in Settings ⇾ Face ID & Passcode labeled ‘Face ID with a Mask’. Of course, it’s not like the iPhone’s TrueDepth camera can suddenly see through masks and face coverings; instead, in enabling this option, you’re accepting a security trade-off in that Face ID will only recognize the “unique features around the eye area” to authenticate you.

While regular Face ID works with full-face recognition, enabling this toggle makes Face ID less secure but adds the convenience of unlocking a phone when you’re out and wearing a mask without having to type in your passcode or use Apple Watch unlock. It’s a compromise you have to be willing to accept, and it’s exactly the option I wanted Apple to roll out two years ago when the pandemic hit.

Here are a few things you should know about Face ID with a Mask. According to Apple, Face ID is “most accurate” when it’s set up for full-face recognition, which makes sense. Face ID with a Mask is only available on the iPhone 12 generation and later, leaving the iPhone X and 11 out of this – surprising, given that both models have a TrueDepth camera with Face ID. In a nice touch, Apple is mitigating the decrease in security by enforcing attention awareness: if you’re wearing a mask and want to unlock with Face ID, you’ll always have to look down at your iPhone, even if you don’t normally use the ‘Require Attention for Face ID’ setting.[1]

Face ID with a Mask does not support sunglasses, but Apple created a separate option for people who wear eyeglasses. Both in Settings and during Face ID setup, you’ll be able to add pairs of glasses you usually wear and have them become part of Face ID with a Mask. If you’re wearing glasses while setting up Face ID with a Mask, the system will prompt you to remove them for a third scan; otherwise, you can add glasses later in Settings and you’ll see how many pairs you’ve added in the Face ID with a Mask section.

Speaking of setup, the entire flow has been revised to include a prominent ‘Use Face ID with a Mask’ page that lets you choose whether you want to stick with full-face recognition or use Face ID with a Mask. It’s impossible to miss.

Security trade-offs notwithstanding, Face ID with a Mask is what I’ve long argued Apple should have built years ago to make face recognition less inconvenient in the pandemic era: let me choose to give up some security by telling the system to scan my eye area only, so that I can keep using Face ID as I always have without other workarounds (i.e. Apple Watch unlock). That’s precisely what Apple added in iOS 15.4, and it works incredibly well: Face ID with a Mask takes a split-second longer than regular Face ID, but it’s always worked reliably for me and I don’t need to think about it.

I don’t have anything else to say about Face ID with a Mask. I would have loved to have this feature in 2020 and early 2021 when we were wearing masks outdoors all the time. Because I’m personally going to continue wearing masks indoors for the foreseeable future, I’m still glad this feature exists and I don’t imagine disabling it any time soon. Hopefully, the next-generation iPad Pros with Face ID will get this feature too.

Shortcuts Changes

While there aren’t any new actions in Shortcuts for iOS and iPadOS 15.4, it appears Apple is listening to community feedback and they’ve included some refinements to existing actions and app features worth mentioning here.

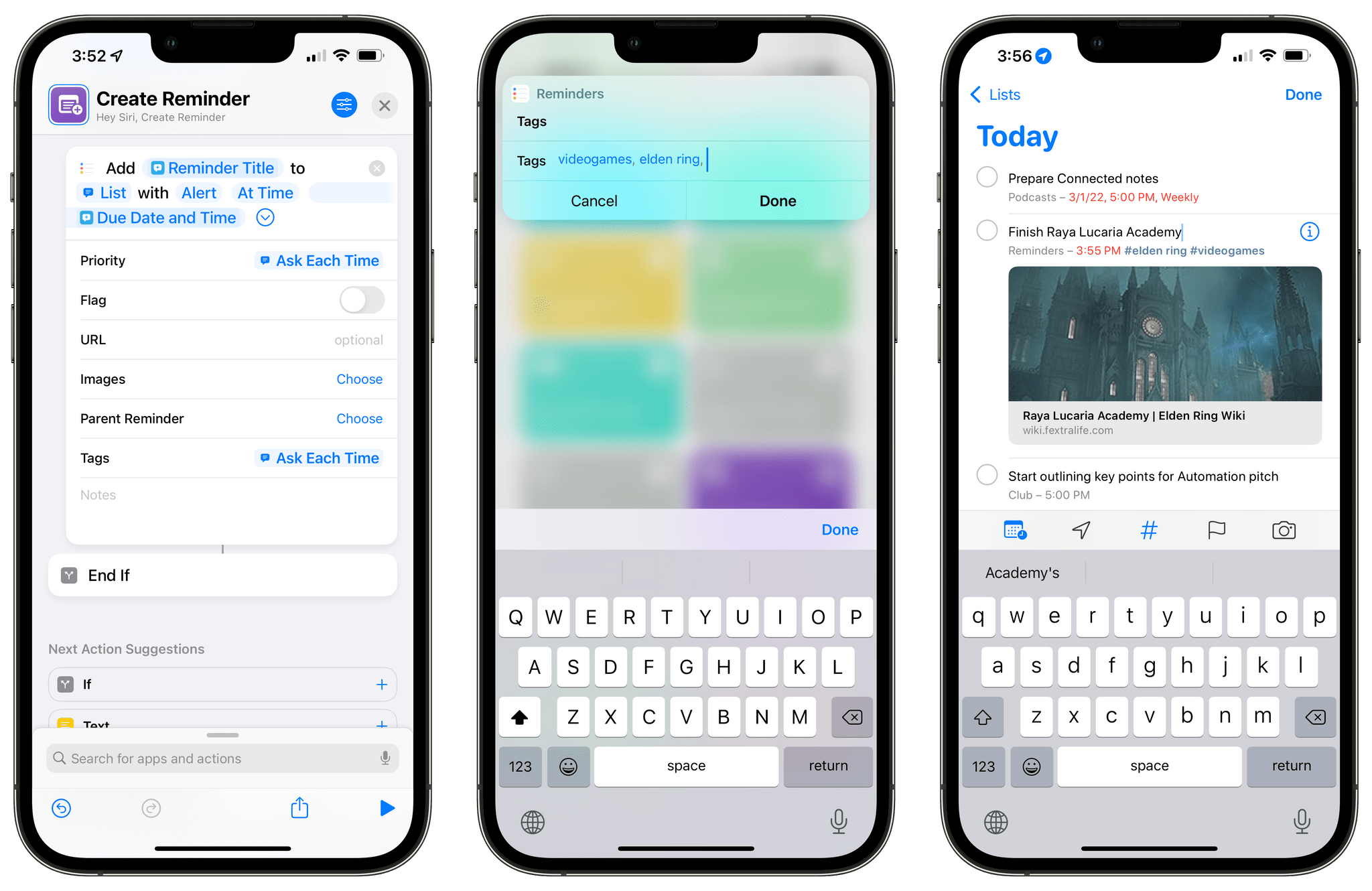

The Reminders actions in Shortcuts now fully support tagging, which Apple brought to the Reminders app with iOS 15 in September. You can now create a reminder with tags using the ‘Add Reminder’ action or filter reminders that contain specific tags via the ‘Find Reminders’ action. Tags are also supported as a property of the ‘Reminder’ object in Shortcuts, so you can always extract tags from any reminder you may be using as a variable in a shortcut.

Surprisingly, Apple didn’t add a ‘Get Reminders Tags’ action (so there’s no way to get a list of all the tags you’re already using in Reminders) and the Shortcuts app doesn’t support autocomplete when typing tags, so there’s still work to do on this front. But it’s a start, and we didn’t have to wait until iOS 16 to get tagging support for Reminders actions.

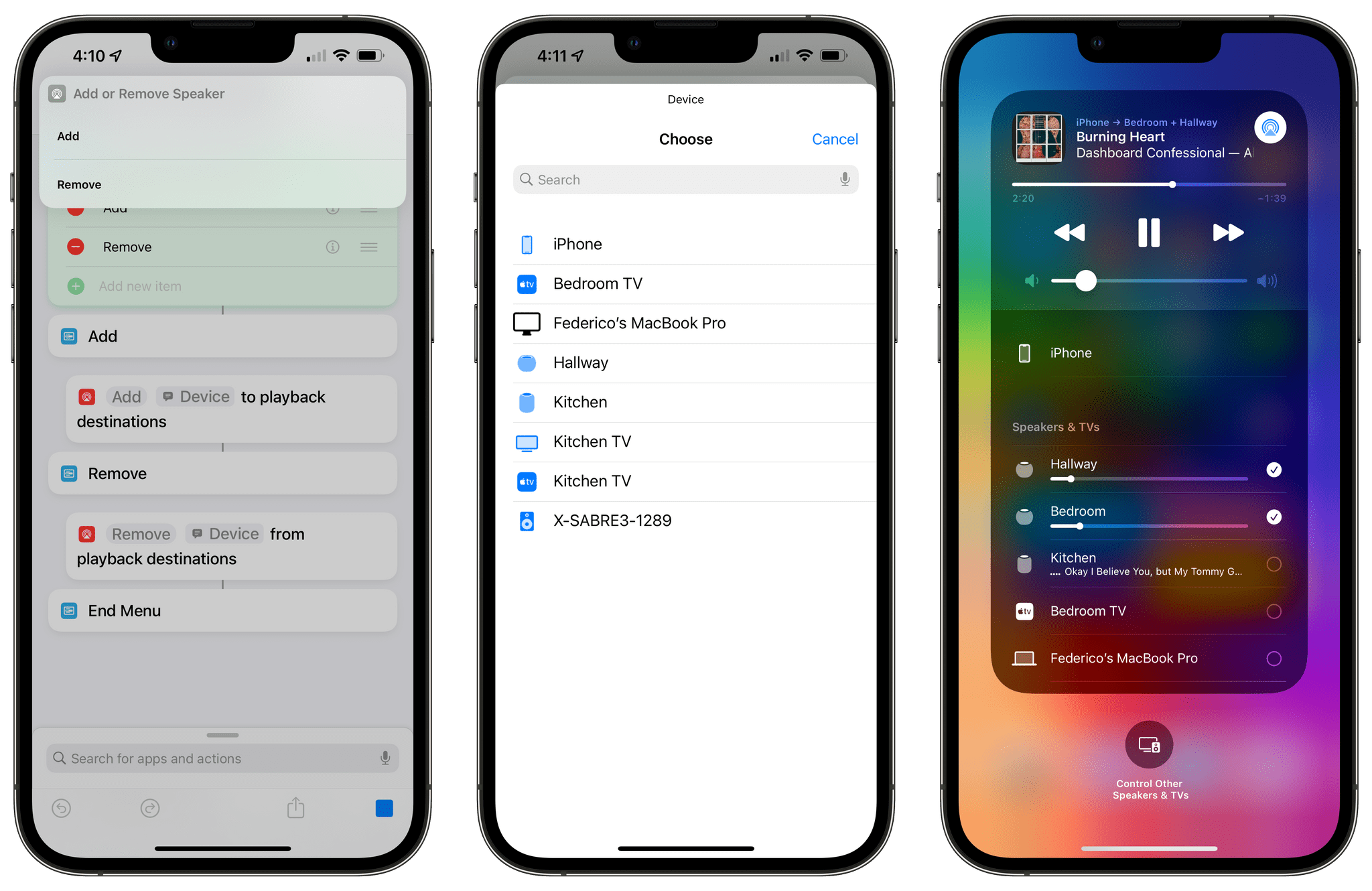

In iOS 15.4, Apple added new ‘Add’ and ‘Remove’ options to the ‘Change Playback Destination’ action, which lets you switch audio output from your iPhone or iPad to a nearby HomePod or AirPlay speaker. With these new actions, it’s now possible to add or remove speakers to and from an existing playback session without having to outright transfer it, thus replicating the behavior of Control Center and its checkboxes for nearby speakers. As you can see from the screenshots below, it’s easy to pick an additional speaker from the list of supported devices, which will be added to your current playback setup in just a couple of seconds.

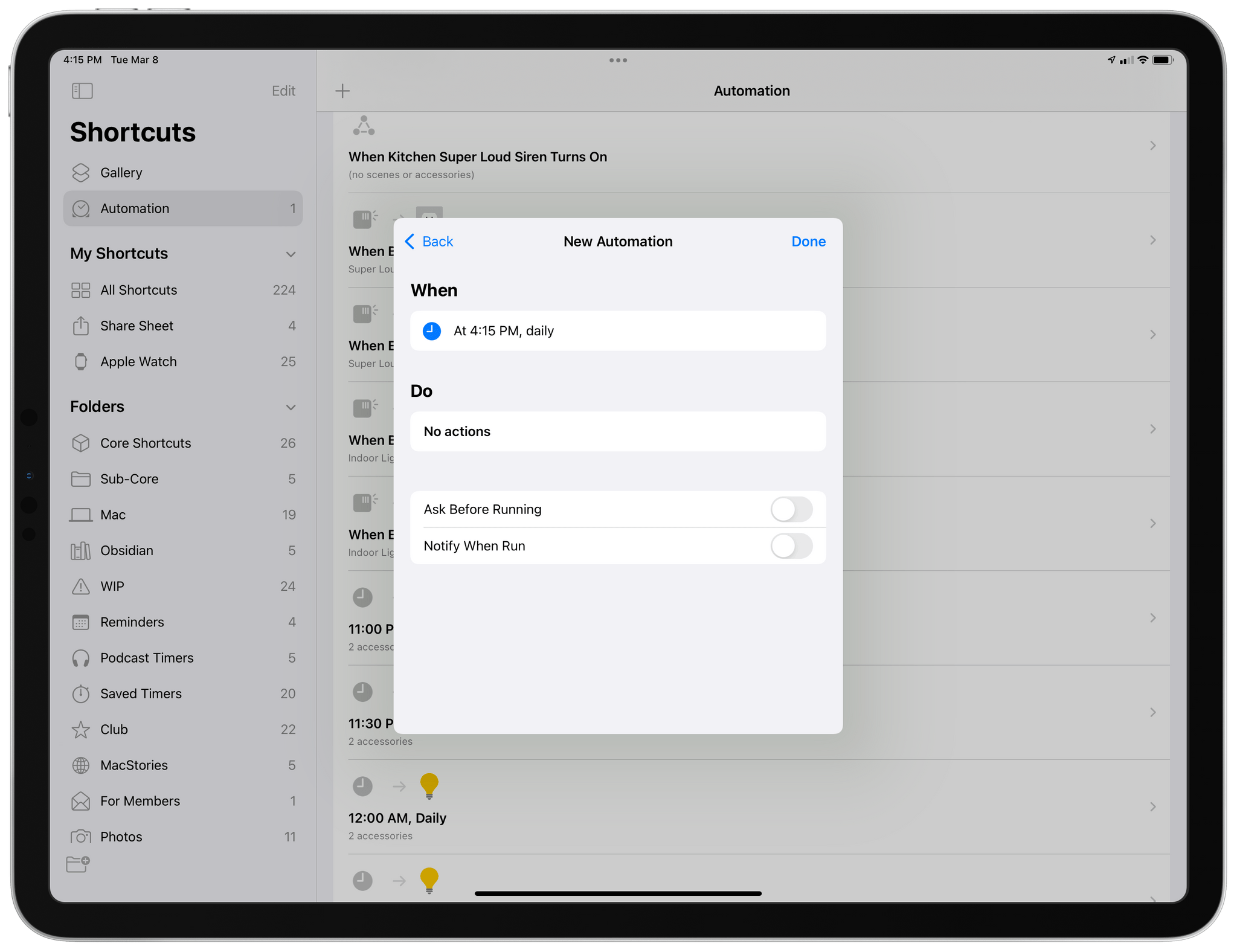

My favorite change in Shortcuts for iOS 15.4, however, is a new option for personal automations: a ‘Notify When Run’ toggle you can disable to never be notified when one of your automations runs in the background.

If you disable ‘Ask When Running’ for personal automations, iOS and iPadOS 15.4 will also let you disable notifications for when an automation runs in the background.

If you’re the kind of person who relies on automations in Shortcuts, you know why this is a big deal: it used to be that you could let most types of automations run in the background without manual user confirmation, but you’d still get notified with an alert that the automation was running. That kind of forced handholding is now gone and you can choose not to be told when an automation is triggered. This is especially handy for those who use automations as a way to open third-party apps when an Apple app is opened, such as launching Halide when the Camera app is opened on the Lock Screen. Now those automations are going to be more user-friendly since you won’t be told every single time that an automation you set up did, in fact, run. Apple can’t help itself, so you’ll still get a notification at some point with a summary of how many automations ran without notifying you, but that’s a trade-off I can live with.[2]

Oddly enough, the Notify When Run toggle hasn’t been made available for shortcuts added as icons to the Home Screen. Which means that if you, like me, run certain shortcuts as icons, you’ll still get that notification banner every time you run a shortcut from the Home Screen. I have no idea why this is still the case, and I hope the new Notify When Run toggle will find its way to the ‘Add to Home Screen’ page of Shortcuts soon.

Finally, it’s worth noting the Shortcuts app sports refreshed iconography for actions and variables in iOS 15.4. The new glyphs are consistent across iPhone, iPad, and Mac, and they look nice.

Everything Else

Here’s a rundown of everything else that has changed in iOS and iPadOS 15.4.

AirTag safety improvements. As Apple announced last month following various stories regarding AirTags being used to track other people without their knowledge and consent, the company added a series of tweaked settings and unwanted tracking prevention options in iOS 15.4. These features had been previously detailed here, and they include new privacy warnings at setup, a “refined” tracking alert logic to tell users earlier if an AirTag is found moving with them, and changes to how notifications are managed in the Find My app. MacRumors previously detailed the changes to AirTags in the iOS 15.4 beta here.

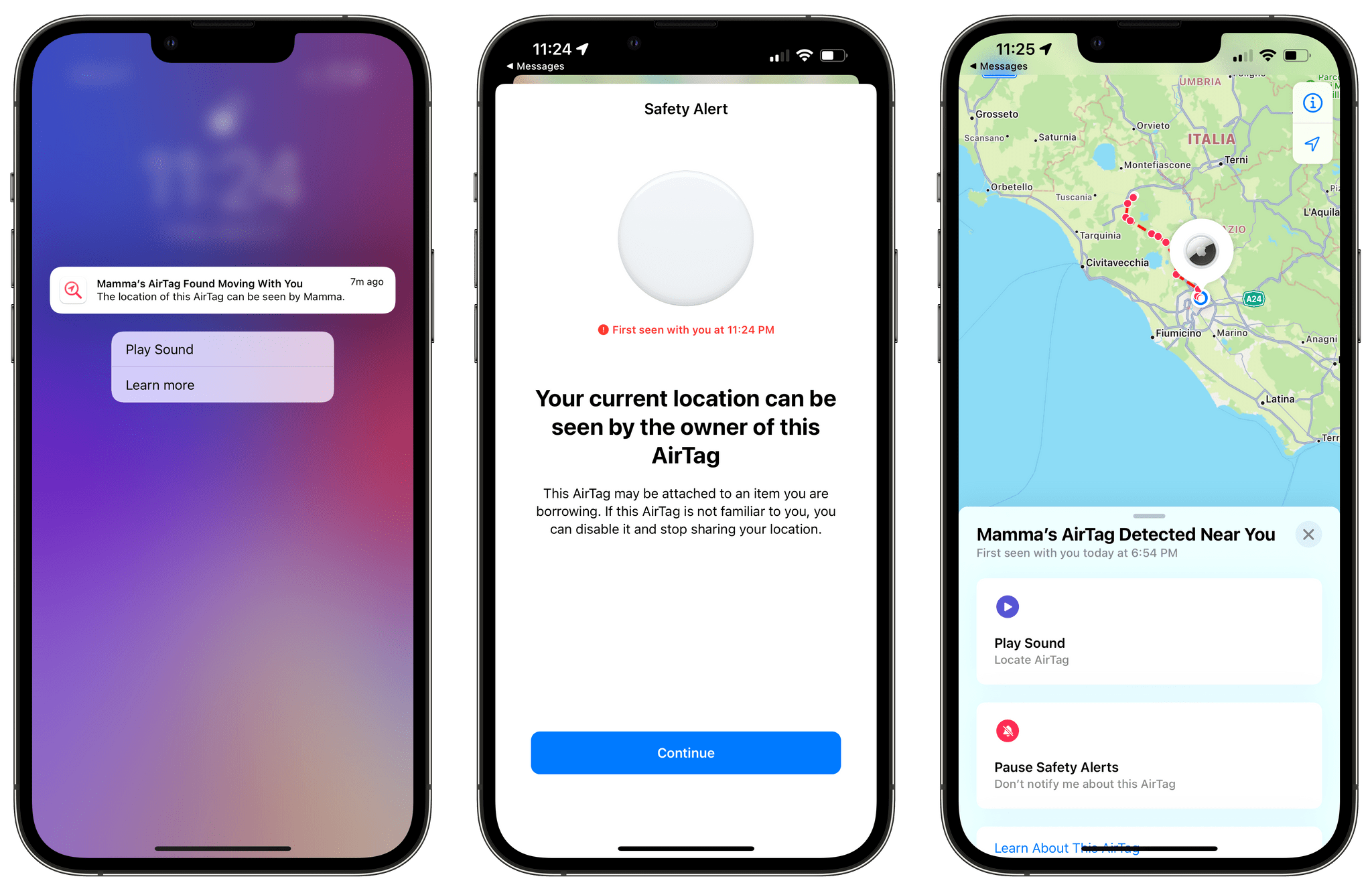

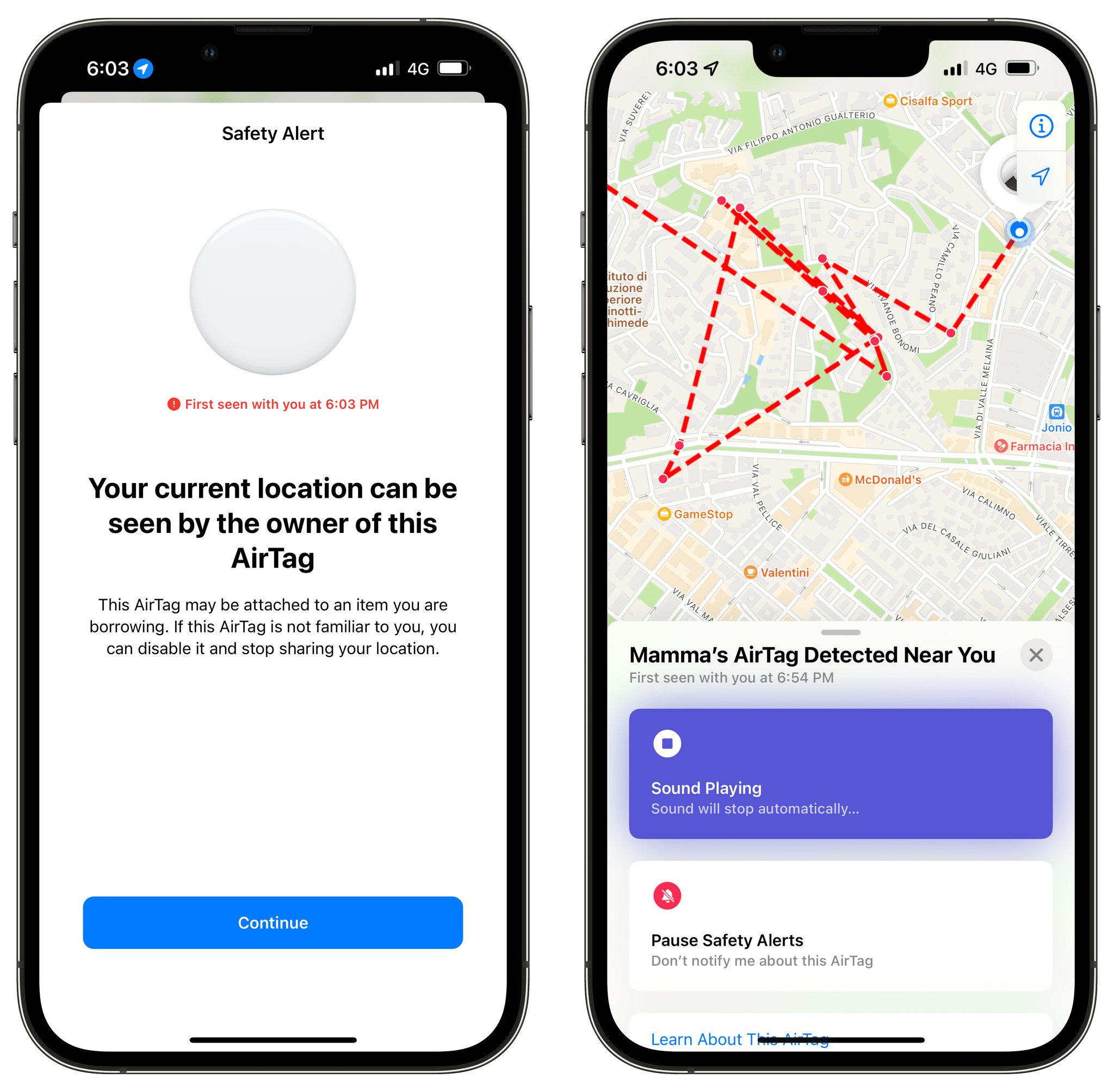

Given Apple’s commitment to this product and to the safety of its users, I wanted to highlight my experience with an AirTag that didn’t belong to me with my iPhone running iOS 15.4. Anti-stalking alerts aren’t new in iOS 15.4, but I figured the OS update would be a good opportunity to test Apple’s refined logic and various improvements for AirTags and the Find My app.

I recently borrowed my mom’s car to drive from Viterbo to Rome, and my mom has an AirTag on her keychain. It takes about an hour to drive from Viterbo to Rome; on my iPhone running iOS 15.4, I received the first alert – and the AirTag started emitting a sound – when I parked in the street where I live. As you can see in the images below, I received the first alert at about 11:18 PM and had started driving around 10:15 PM. I find it ironic that this “refined” logic fired off an alert precisely when I was outside my house: if someone was stalking me, they would have been able to track me in my neighborhood and, quite possibly, the street where I live before I could even notice. Clearly, this logic needs to be “refined” some more, even after the release of iOS 15.4.

Additionally, because I borrowed my mom’s car for three days, I noticed that the AirTag that was with me would play alerts at random times of the day when we were on the move, but not always. For instance, we went to the dog park on Saturday afternoon and the AirTag alerted me again, but it didn’t when we left the park an hour later and drove back home.

I appreciate Apple’s commitment to personal safety and the fact they have detailed their steps in public with expanded documentation, but I feel like the company still needs to do more here. In my experience, the first alert I got – arguably, the most important one if you’re being stalked by someone – was delivered too late, and that is unacceptable. Our iPhones have a detailed, private, on-device log of our movements and patterns over time; it shouldn’t take a full hour for an iPhone to determine that an AirTag found moving with us may be potentially dangerous.

Furthermore, I feel like Apple should consider proactively disabling an AirTag if it’s detected with you for multiple days at a time as an additional precaution. Users may ignore the notification that’s pinned on the Lock Screen; if the system is able to confirm that an AirTag that doesn’t belong to you is, in fact, following you, it may be worth considering whether disabling it preemptively could improve the safety of more users.

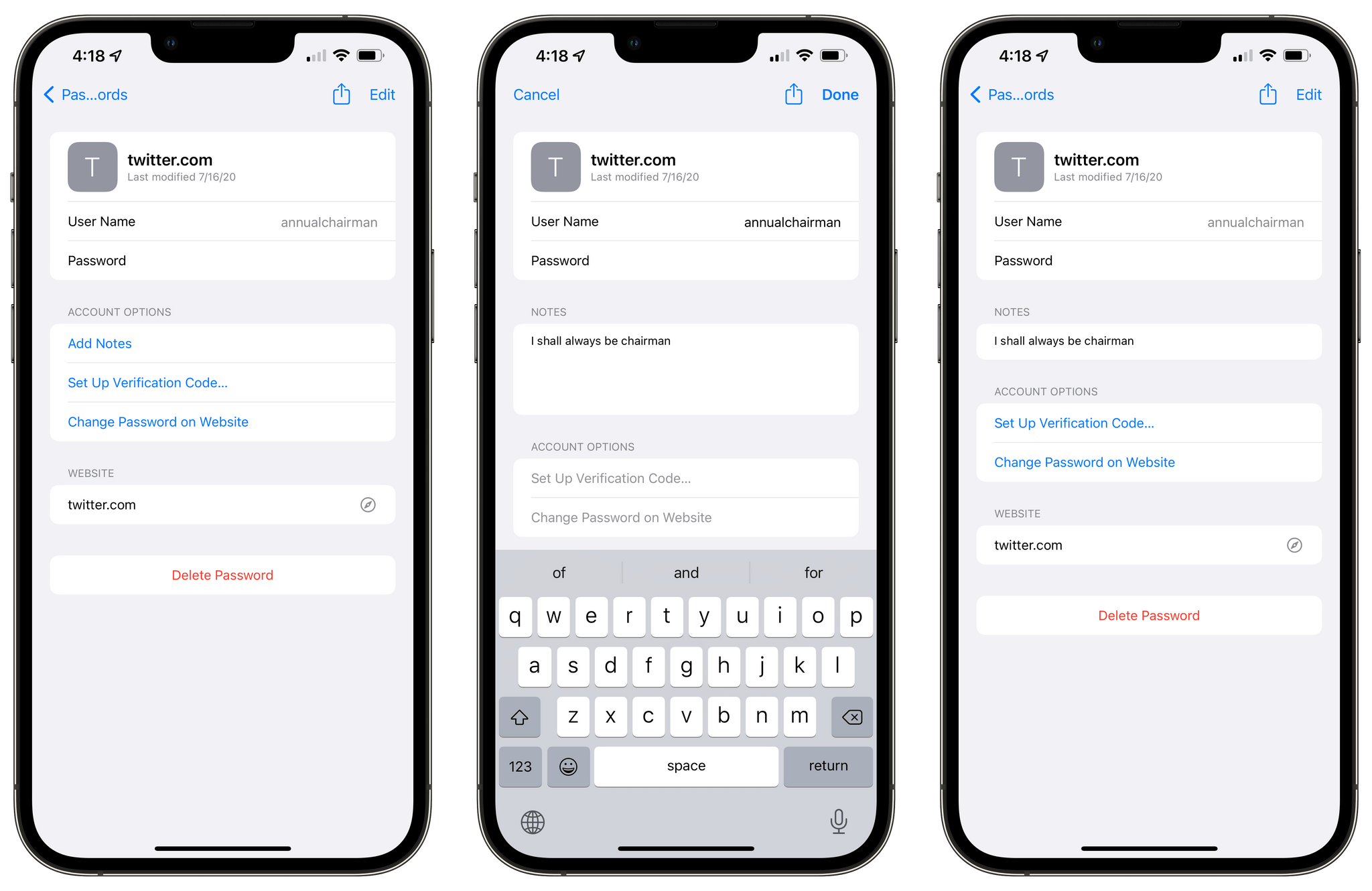

Notes in iCloud Keychain and hiding security recommendations. Apple’s iCloud Keychain feature continues to improve: following the addition of verification codes in iOS 15, it’s now possible to add plain text notes to individual entries. Notes fields for logins and other secure items are a staple of third-party password managers, so I’m glad to see Apple follow the rest of the industry here.

Additionally, you can now hide specific security recommendations in Keychain by pressing a ‘x’ button next to one you don’t want to see anymore. You’ll be able to find these later under Settings ⇾ Passwords ⇾ Security Recommendations ⇾ Hidden Security Recommendations.

New emoji and SF Symbols. Count this in the list of reasons for people to upgrade to iOS 15.4: if Face ID with a Mask isn’t enough for you, how about 37 new emoji and dozens of skin tone variations for existing emoji? Some of my favorites include saluting face, biting lip, and, of course, the new beans (!) emoji. But you already know this if you’re an avid Connected listener. In which case, I 🫡 you.

As for other new system images that aren’t emoji, iOS 15.4 comes with a handful of new SF Symbols, including glyphs for macro mode and Universal Control. As always, Geoff Hackworth has an excellent list of all the changes here.

A new Siri voice and more offline features. iOS and iPadOS 15.4 bring a fifth, gender-neutral Siri voice for American users. Labeled ‘Quinn’ under the hood, the voice was recorded by a member of the LGBTQ+ community and joins the other Siri voices that use Neural Text to Speech (Neural TTS) technology. It sounds great.

Additionally, Apple has expanded the list of features that are supported by Siri offline with on-device processing powered by the Neural Engine, first introduced in iOS 15. Starting with the iPhone XS, XR, iPhone 11, and newer models, Siri will be able to answer time and date requests even without an Internet connection. At least, that’s according to Apple. In my tests, questions like “what time is it in San Francisco” or “what day is tomorrow” would show an error message saying that the request couldn’t be completed offline. I was only able to get “what time is it” to work but, you know, that’s not so useful when you have a clock always displayed in the iPhone’s status bar and an Apple Watch on your wrist.

Keyboard brightness in Control Center for iPad. There is a new Keyboard Brightness item for Control Center in iPadOS 15.4 that lets you manually control the brightness of the Magic Keyboard from anywhere on your system. I added this to Control Center weeks ago and never looked back.

Tweak volume control behavior on all iPads. With iPadOS 15.4, Apple is making the dynamic volume control option first seen on the iPad mini available to all iPads. Found in Settings ⇾ Sounds ⇾ Fixed Position Volume Controls, this toggle determines whether you want the volume buttons to remain in a fixed position or change based on the orientation of your iPad. The setting is enabled by default, so nothing will change for existing iPad users. If you turn it off, the volume buttons will follow the direction of the software volume slider – like on the iPad mini – meaning that the “volume down” button will actually raise the iPad’s volume while in landscape mode.

Safari webpage translation for more languages. Originally introduced in iOS and iPadOS 14, Safari webpage translation has received support for Italian and Chinese (Traditional) in 15.4. I tested this with a few Italian websites I read: the translation to English was pretty good and in line with translations we’ve previously seen in Safari and the standalone Translate app.

Emergency SOS tweaks. I never understood how Apple changed the activation method for Emergency SOS a few years ago, so I’m glad this feature has been simplified in iOS 15.4. By default, the system now uses ‘Call with Hold’, so you can press the side and volume buttons and keep holding them until a countdown begins, a loud sound plays, and emergency services are called at the end. The option I always found confusing – ‘Call with 5 Presses’ – is still available, but disabled by default. The new system is much easier to explain and activate: just hold down two physical buttons until your iPhone calls emergency services for you.

Macro support for the Magnifier app. I assumed this was already the case, but I guess I was wrong: the Magnifier app is gaining support for close-up shots based on the ultra-wide camera in iOS 15.4. So if you’re an iPhone 13 Pro or 13 Pro Max user, you can now look at objects up close with the Magnifier app and it’ll automatically switch to the ultra-wide lens, just like the Camera app does for macro mode.

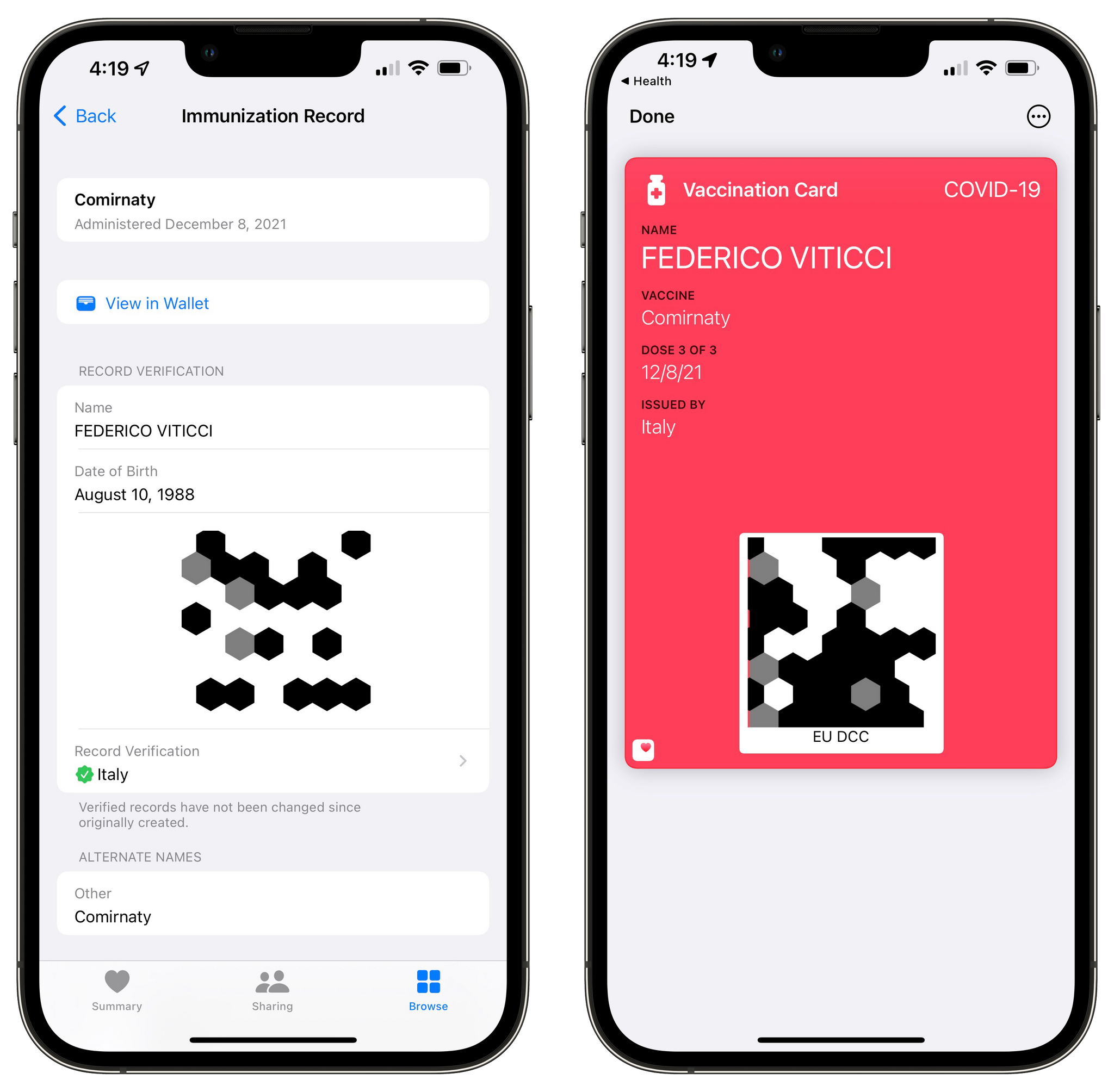

EU Digital COVID Certificates in Health and Wallet. If you live in the European Union and have a Digital COVID Certificate, iOS 15.4 lets you add it to the Health and Wallet apps as an officially recognized immunization record. To do so, all you need to do is scan the QR code provided by your local government with the Camera app, tap the ‘COVID-19 Vaccination’ data detector, and confirm that everything looks good in the Health app. After that, you’ll be able to see your record verification and details in the Health app, and you’ll also be able to add it to the Wallet app as a card with an associated QR code.

I did this for my Italian ‘Green Pass’ weeks ago, and it’s nice that I can now easily find this in Wallet when I have to show proof of vaccination at shops, restaurants, and other places.

Live Text support in Notes and Reminders. In both the Reminders and Notes apps (when adding a reminder or writing in a note, respectively) you’ll find a new ‘Scan Text’ button in the camera menu that brings up Live Text recognition to enter text by scanning it with the Camera.

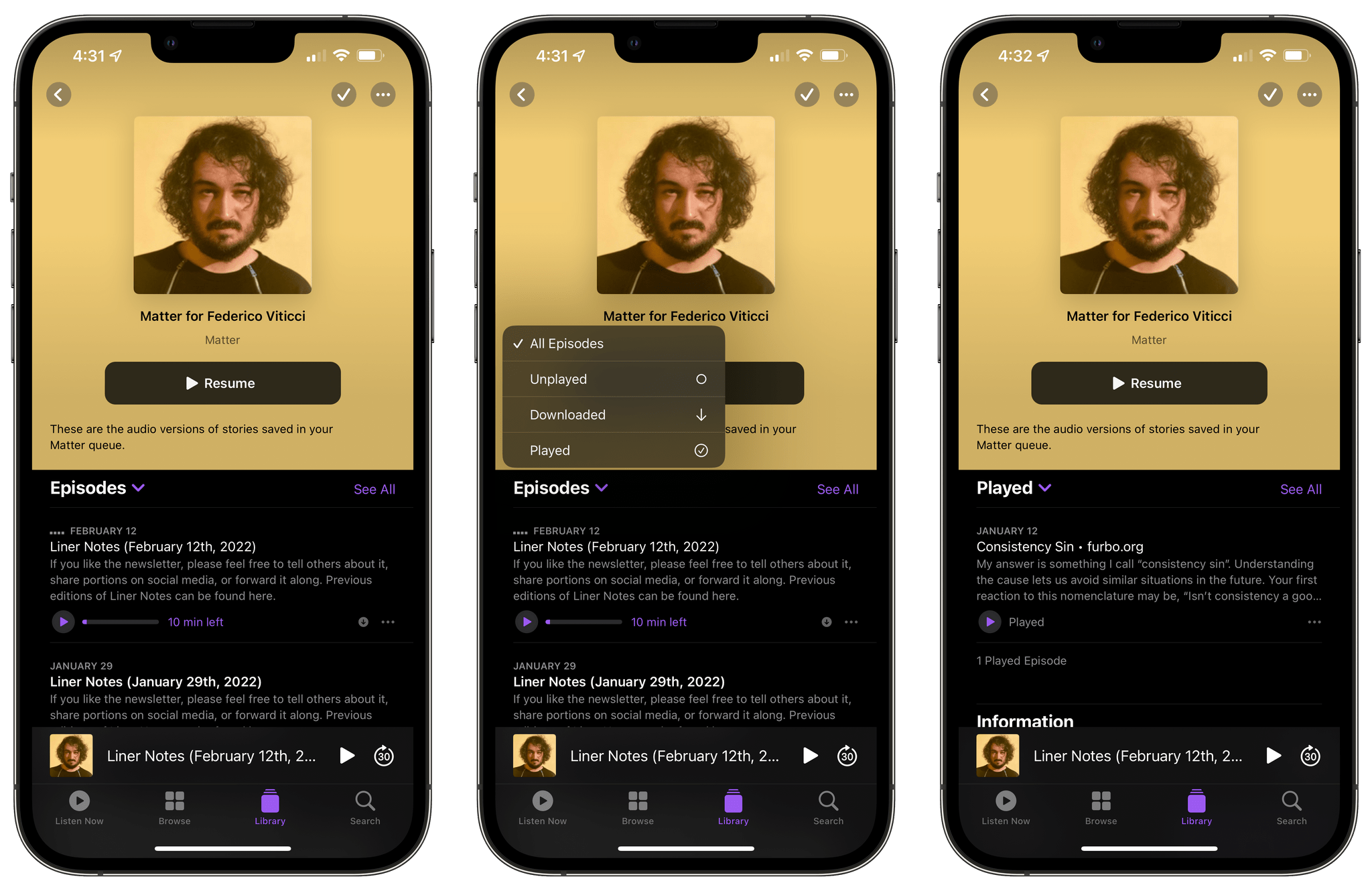

New filters for the Podcasts app. In the Podcasts app for iOS and iPadOS 15.4, you can tap the ‘Episodes’ header in an individual show page to filter its episodes by unplayed, downloaded, or played. I still find the Listen Now and Library pages of the Podcasts app overly confusing for my podcast listening habits, but these filters are nice to have.

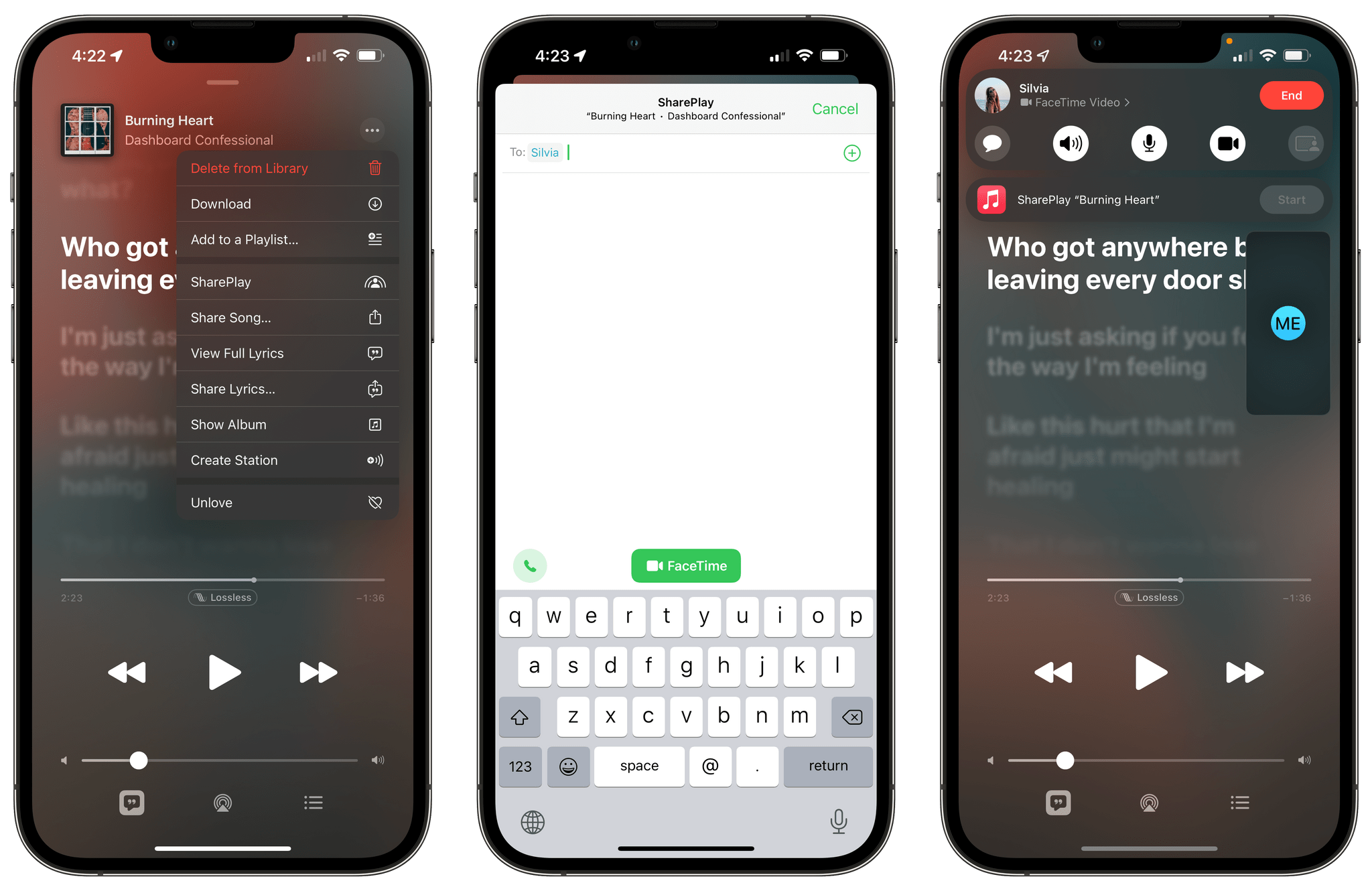

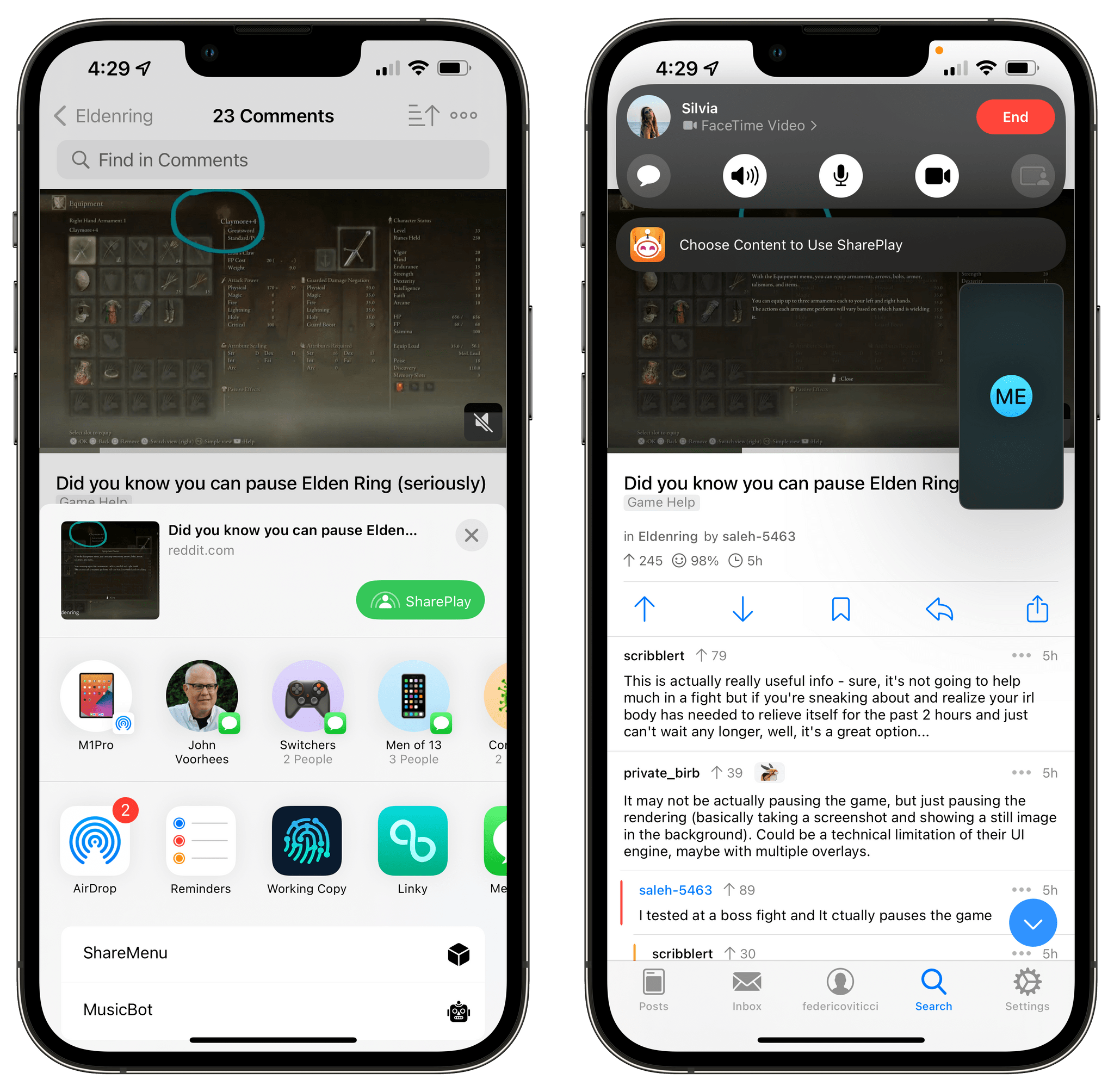

SharePlay improvements. Apple is improving the accessibility of SharePlay, the content-sharing feature based on FaceTime, by making it possible for developers to start FaceTime calls with associated SharePlay activities from their apps. Effectively, instead of having to first start a FaceTime call then pick a SharePlay-enabled app and find content to share, you can now start from the content itself and tap a SharePlay button to begin the call and share with friends in one easier, more intuitive flow.

You can demo this with the updated Music app in iOS 15.4 or via the share sheet of existing SharePlay-enabled apps. In Music, you’ll now find a new SharePlay button in the context menu for songs and albums that lets you pick participants for a new FaceTime call and shares selected Apple Music content right away. I prefer this simplified flow since it cuts the steps required to jump on a FaceTime call and share content, and I wish SharePlay integration with apps worked like this from the beginning.

As I mentioned above, I’ve also noticed the SharePlay button appear in the share sheet of compatible apps. In Apollo, my favorite Reddit client, there is now a green SharePlay button in the share sheet that appears when you try to share a Reddit post or comment.

The new SharePlay button in Apollo’s share sheet. Alas, Silvia didn’t care about my Elden Ring tips.

As far as I know, Apollo hasn’t been updated to support any specific iOS 15.4 API yet, suggesting that Apple is retroactively applying this extended SharePlay integration to all apps that supported the share sheet and shared activities in iOS 15.1.

Wrap-Up

iOS and iPadOS 15.4 are hybrid releases: they contain the dozens of tweaks and improvements we’re used to seeing in mid-cycle updates; iPadOS 15.4 marks the debut of the delayed Universal Control, which works pretty well in most scenarios; iOS 15.4 surprised us with the unexpected arrival of Face ID with a Mask. All things considered, these are two solid releases that put a nice bow on iOS and iPadOS 15 just a couple of months before WWDC 2022.

You can find both iOS and iPadOS 15.4 on Apple’s Software Update page now. Stay tuned for more Universal Control coverage on MacStories soon.

1. Face ID with a Mask is also supported elsewhere in iOS, such as Apple Pay authentication and password auto-fill in Safari and apps.

2. You can long-press this notification to see a full summary of all the automations that ran in the background.