Everything Else

As is always the case with a new version of iOS, there are dozens of other features and smaller details to be discovered in the nooks and crannies of the OS. Here are some of the other notable additions to iOS 12.

ARKit 2

Apple continues to bet heavily on augmented reality: a year after its public debut, the company’s AR framework has graduated to version 2.0 in iOS 12 with some noteworthy technical improvements.

ARKit now understands where an object is located and how the user’s device is oriented relative to the object, which results in better performance and rendering of information laid on top of the physical object. 3D objects visualized in augmented reality can now reflect a real-world scene in the camera view; this is a fascinating technology that, among other things, leverages machine learning to infer lighting conditions when creating a reflection on a virtual object. Face tracking is also faster and more accurate than last year; it now understands where the user is looking thanks to gaze tracking (which opens some amazing possibilities for accessibility) and detects when users are sticking out their tongue.

Superior performance and rendering aside, the biggest changes to ARKit for consumers in 2018 come in the form of shared AR experiences where multiple users can participate and cooperate at the same time. In iOS 12, developers can create shared ARKit apps where you and the people around you each see the same virtual objects from your own perspective, as things happen. An obvious application of this will be multiplayer games where there is one shared augmented reality for everyone, but it’s interesting to consider how museums and other organizations may also take advantage of this. Also, ARKit experiences in iOS 12 can persist across time and locations, so the user can return to a previously assembled AR environment whenever they like. Possible implementations for persistent ARKit scenes might involve visualizing new furniture for your apartment (so you don’t have to start over every time) or tracking how a landmark is changing.

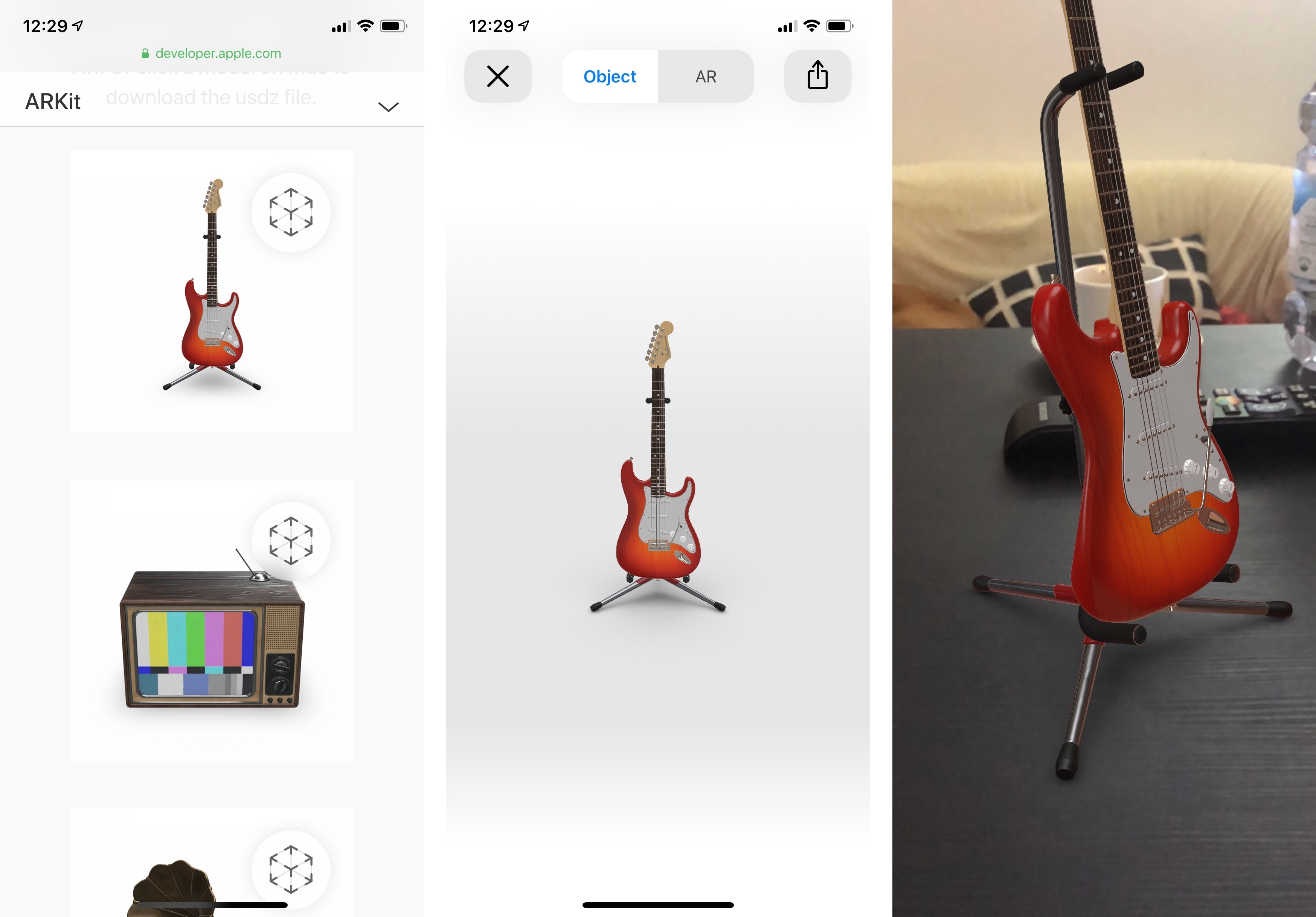

Moreover, Apple is launching a new open file format called USDZ that allows developers to package 3D objects that can be embedded in other apps and then previewed by the user on-screen or in AR. USDZ previews are supported across the entire ecosystem of Apple apps like Mail, Messages, and Safari thanks to a special Quick Look mode. As we’ve seen before, USDZ is going to be used extensively by e-commerce companies, for example, to let customers preview virtual objects in the real world.

Even though we haven’t seen ARKit’s killer app yet, Apple is only increasing its commitment to this developer framework. There’s almost a sense that Apple needs to grow ARKit’s accuracy and performance rapidly to prepare for whatever AR-focused project may be coming over the next few years. Until that happens, it’ll be fun to continue experimenting with ARKit apps and games created by third-party developers on iOS.

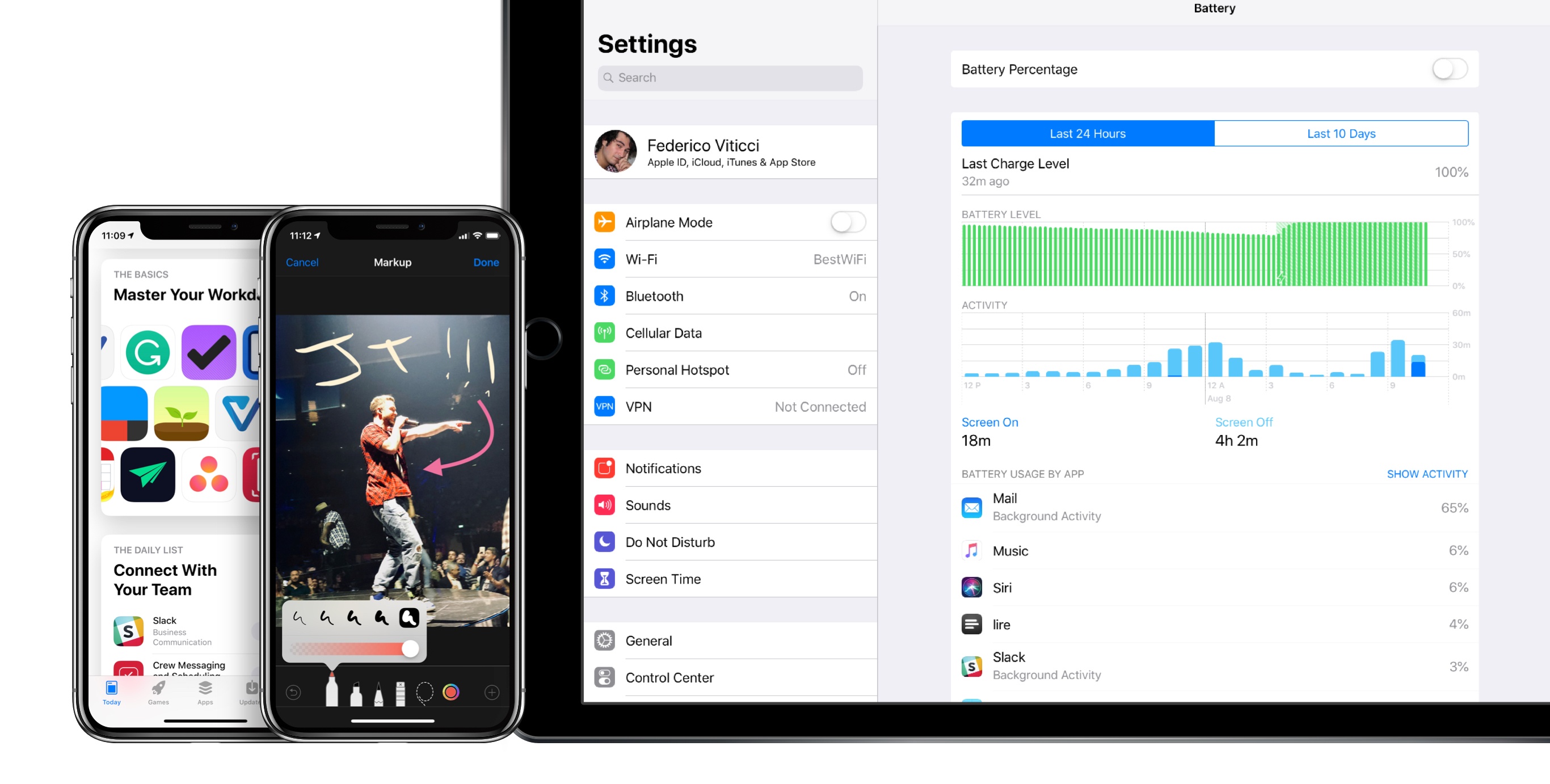

Battery Usage

Following the battery and performance kerfuffle of late 2017, Apple has enhanced the Battery screen of iOS 12 with detailed, Android-like charts about battery level and app usage.

Available for all iOS devices and modeled after Screen Time, the new battery charts in iOS 12 display two main trends for the last 24 hours or 10 days: battery level, visualized in green, and app activity with the screen on and screen off (dark blue and light blue, respectively). The two charts are useful to correlate battery level with increased app usage at specific moments of the day; for instance, I can see that jumping back and forth between Safari and Tweetbot a lot when I’m on 4G drains the battery much faster than, say, writing articles in Drafts. I knew this before, but seeing it charted puts it in better perspective.

You can tap on a chart to focus on individual data points (hours of the day or days of the week) and view how app activity correlates to the selected time period. Charts show you when a device was charging, when its battery indicator turned red, and even when it reached its lowest battery level during the day. As with previous versions of iOS, you can check out battery usage by app as a percentage or view each app’s activity details.

If you have an old iOS device and want to maximize its battery life, I bet you’re going to visit this screen a lot.

Face ID

Face ID in iOS 12 has been improved with an easier unlocking system and the ability to create an alternate appearance.

If Face ID fails when you try to unlock your device, the lock screen now lets you swipe up to instantly retry the Face ID scan. This is a more elegant solution than the confusing implementation that debuted in iOS 11 last year.

Also, while Face ID can continuously learn how you look over time (and I’ve been pretty happy with its performance), you can now set up a second face in Settings as an alternate appearance. I know of some people who have been using this feature to let their spouse unlock a device using their face, but I believe it’s designed more for letting you set up an appearance that differs from your main one – for instance, if you need to wear protective headwear at work or other accessories that would otherwise trick Face ID. I look forward to testing Face ID’s performance and support for multiple orientations on the upcoming iPad Pros.

Easier-to-Access Trackpad Mode

Previously available only on 3D Touch-enabled iPhones or with a two-finger swipe on the iPad’s keyboard, trackpad mode can be activated in a much easier way in iOS 12: just tap and hold on the space bar until the keyboard becomes a trackpad.

This mode (seemingly inspired by Gboard and other custom keyboards with a similar implementation) gives owners of iPhones without 3D Touch a way to more precisely control the cursor in text fields. This is a nice way to toggle trackpad mode if you’re using an iPhone 5s, SE, or 6; it also supports text selection if you tap somewhere on screen while dragging the cursor with another finger.

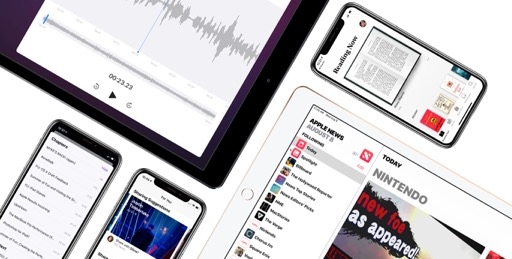

Personalized App Store

In iOS 12, Apple is using a variety of data points to provide you with a personalized App Store experience. In practice, this means the Today page – the protagonist of the App Store’s reboot in iOS 11 – is going to be slightly different for every user in the future.

According to Apple, bigger stories such as App of the Day and timely developer profiles will be available for everyone at the same time, while app collections, smaller stories, and other content may be tailored to each user based on their previous purchases, search history, In-App Purchases, and more. I’ve noticed more productivity-oriented app collections (which led to more downloads) in my experience with the Today tab in iOS 12, so I guess the system must be working. I’ll be curious to monitor the impact of personalization on developers at scale.

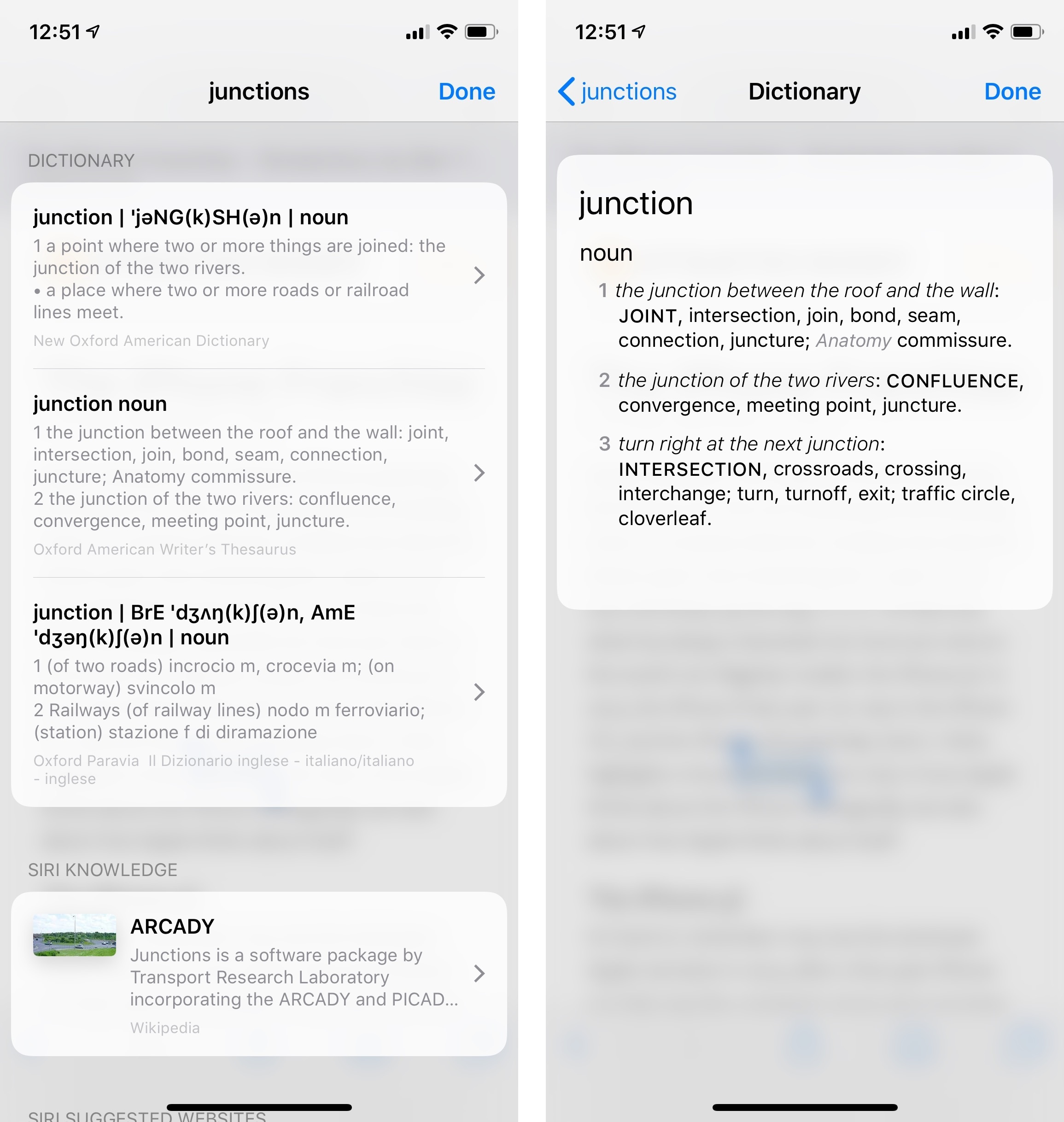

Built-In Thesaurus

If you write longform content on an iPad, or if you want to always make a great impression with your ‘Sent from iPhone’ emails, iOS 12 has just the feature for you: a built-in thesaurus that lives alongside the system dictionary.

Long available on the Mac, the Oxford American Writer’s Thesaurus can now be enabled in Settings ⇾ General ⇾ Dictionary; once activated, the thesaurus will provide you with synonyms and alternatives to the currently selected word from the Look Up menu. As someone who always keeps Safari in Slide Over to search for synonyms when writing, I’m very happy about this addition, which I used a lot this summer.

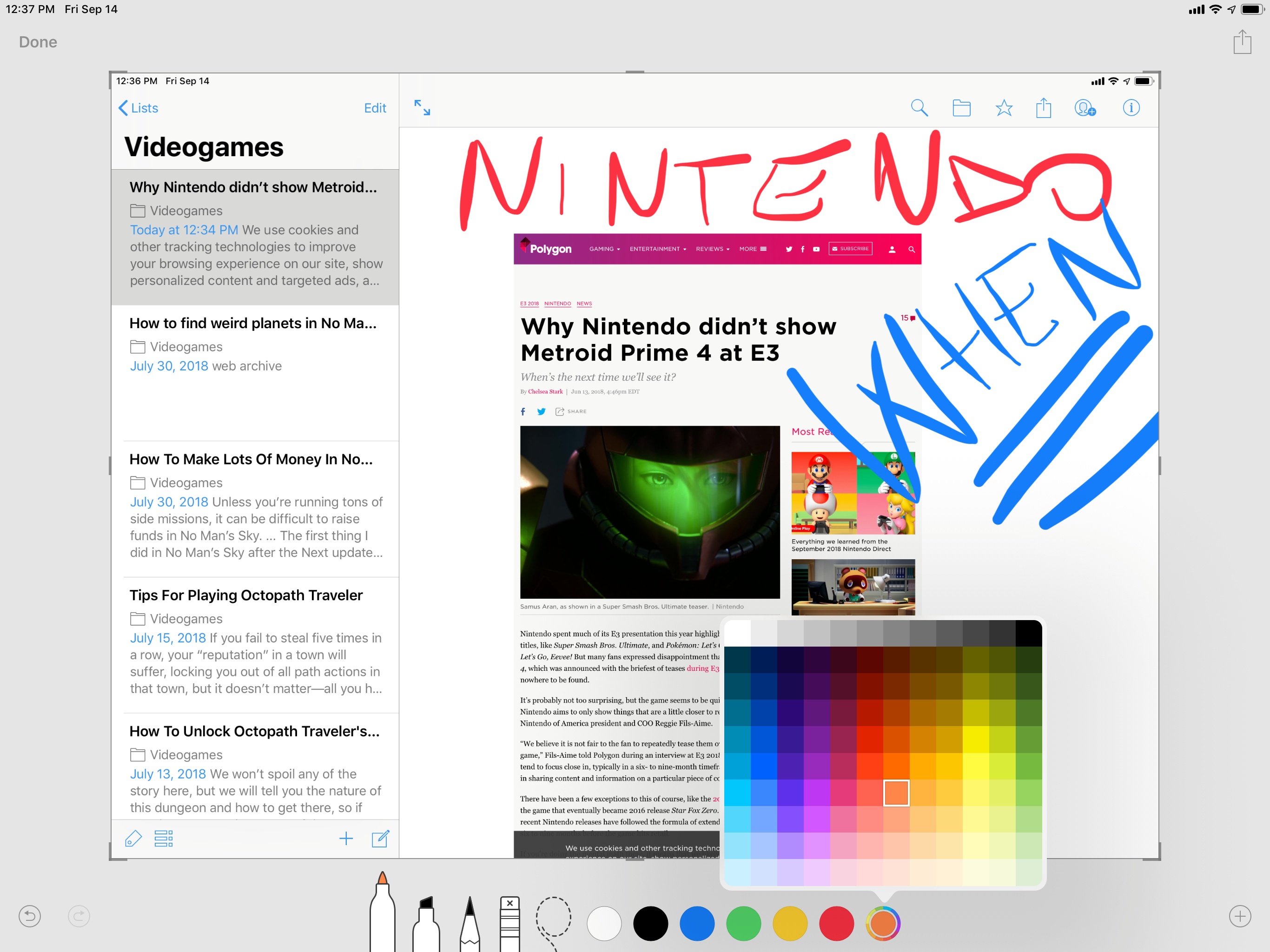

Improved Markup

For the past couple of years, iOS has offered a native Markup mode to annotate images and PDF documents. Initially rolled out for Notes and Mail, this feature eventually expanded to Quick Look for document previews as well as screenshots. In iOS 12, Apple is making Markup mode more powerful by adding new drawing options and a color picker with 120 color choices.

When editing an image in Markup mode, you can now tap on a selected drawing tool (pen, highlighter, or pencil) to open a contextual menu that lets you adjust line thickness and opacity. And while iOS still offers a limited default color palette, you can now choose any other color you want from a picker that features a 12x10 grid of colors in various shades. These additions haven’t made me stop using Annotable to edit screenshots for my articles yet, but I’m using the native Markup mode a lot more for basic image edits.
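As a quick sanity check on those numbers, a 12x10 grid does work out to the 120 choices mentioned above. The sketch below builds one possible grid of that size in Python. To be clear, the layout is an assumption made purely for illustration: Apple’s actual palette and its ordering aren’t described here, and `build_palette` is a hypothetical helper, not anything from iOS.

```python
import colorsys

COLUMNS, ROWS = 12, 10  # grid dimensions quoted in the review


def build_palette(columns=COLUMNS, rows=ROWS):
    """Return a rows x columns grid of hex colors.

    Assumed layout (not Apple's): one hue per column, with each row
    a lighter shade of that hue than the row above it.
    """
    palette = []
    for row in range(rows):
        # Spread lightness evenly from 0.15 (dark) to 0.85 (light).
        lightness = 0.15 + 0.70 * row / (rows - 1)
        line = []
        for col in range(columns):
            hue = col / columns  # evenly spaced around the color wheel
            r, g, b = colorsys.hls_to_rgb(hue, lightness, 1.0)
            line.append("#{:02X}{:02X}{:02X}".format(
                round(r * 255), round(g * 255), round(b * 255)))
        palette.append(line)
    return palette


palette = build_palette()
total = sum(len(line) for line in palette)
print(total)  # 120
```

Swapping in a different shade progression only changes the lightness line; the count of swatches stays at 12 times 10.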

And More…

Finally, here’s a grab bag of everything else worth noting about iOS 12.

Apps are easier to quit on iPhone X. To force-quit an app on an iPhone X running iOS 12, you just need to swipe up its card in the multitasking switcher instead of long-tapping it and pressing a close button. It seems like Apple has given up on the idea of making it harder for people to quit apps they don’t need to quit; no matter how many explanations and details they’re given, some people like to believe that closing apps is better for them. So be it.

No more accidental screenshots. iOS 12 prevents screenshot capture when you’re waking up a device if you accidentally press both the lock and volume-up buttons at the same time. As someone who took dozens of these screenshots due to the vertical placement of said buttons, I’m glad this has been fixed.

SMS and call spam reporting. Following call and SMS blocking (introduced in iOS 10 and 11, respectively), iOS 12 introduces a new extension point for SMS and call spam reporting. These new extensions, which like the previous ones have to be enabled manually in Settings, let you report missed calls or received messages as unwanted communication (or spam). To do this, you can swipe left on a call in the Phone app’s Recents list and tap Report, or select one or multiple messages in a conversation and report them. After you report a contact as spam, the extension can block it and add it to your device’s main list of blocked contacts.

I look forward to testing these extensions as most of the automatic spam call identification apps I tried didn’t have an accurate Italian spammer database; being able to quickly select calls and messages, report them, and block them seems like a useful compromise.

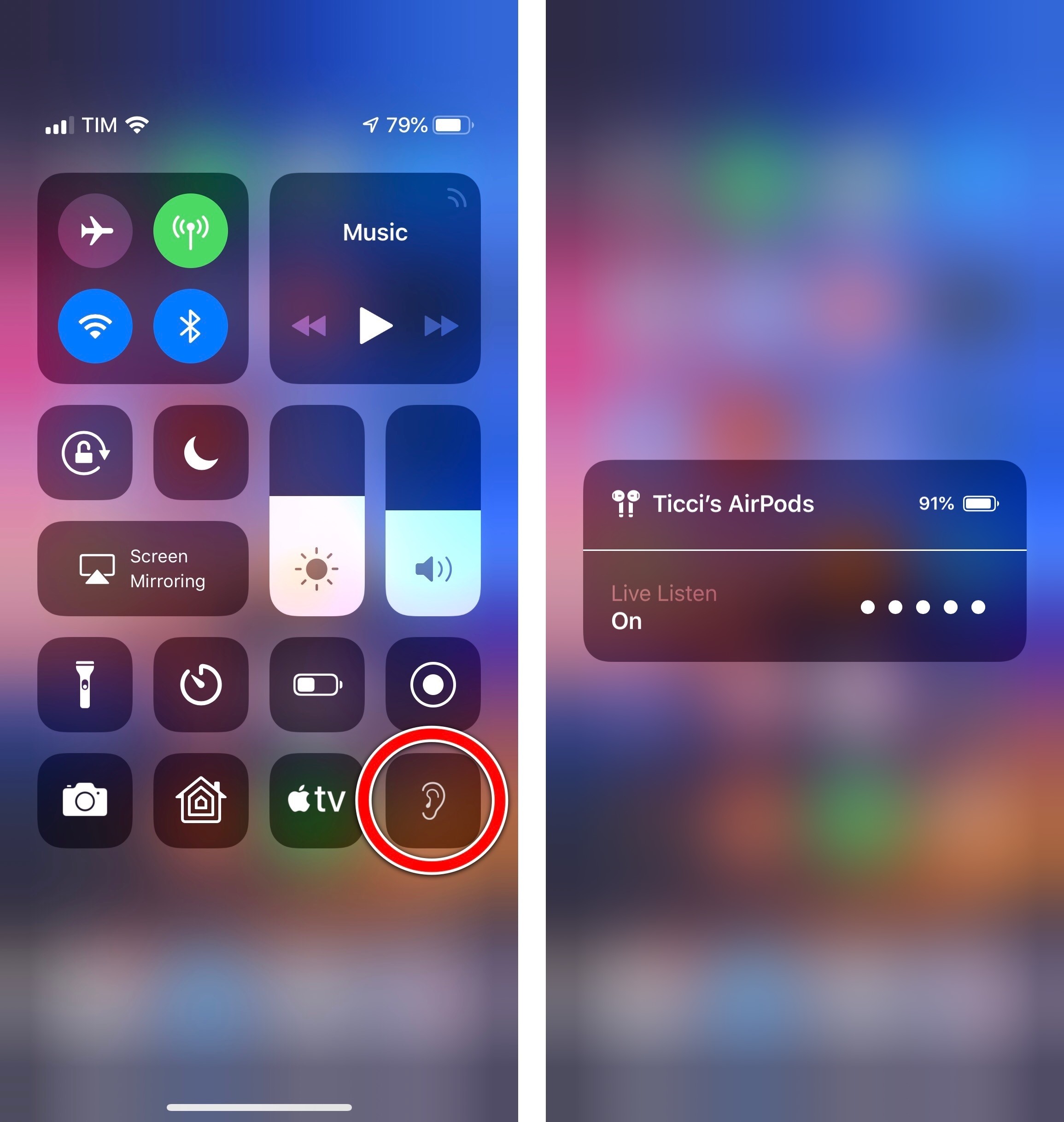

AirPods Live Listen. One of the best new accessibility features of iOS 12 is a new Control Center button that lets you use the iPhone as a directional microphone to assist with hearing through your AirPods.

It’s such a simple, clever idea: once enabled, you can put your iPhone next to a person speaking or a television and use the AirPods as an amplification tool for what the iPhone is picking up. Thanks to the W1 chip inside AirPods, you can be at a considerable distance from the iPhone (for example, if you’re sitting in an audience) and audio won’t cut out. This is one of my favorite examples of Apple’s appreciation for inclusive technology.