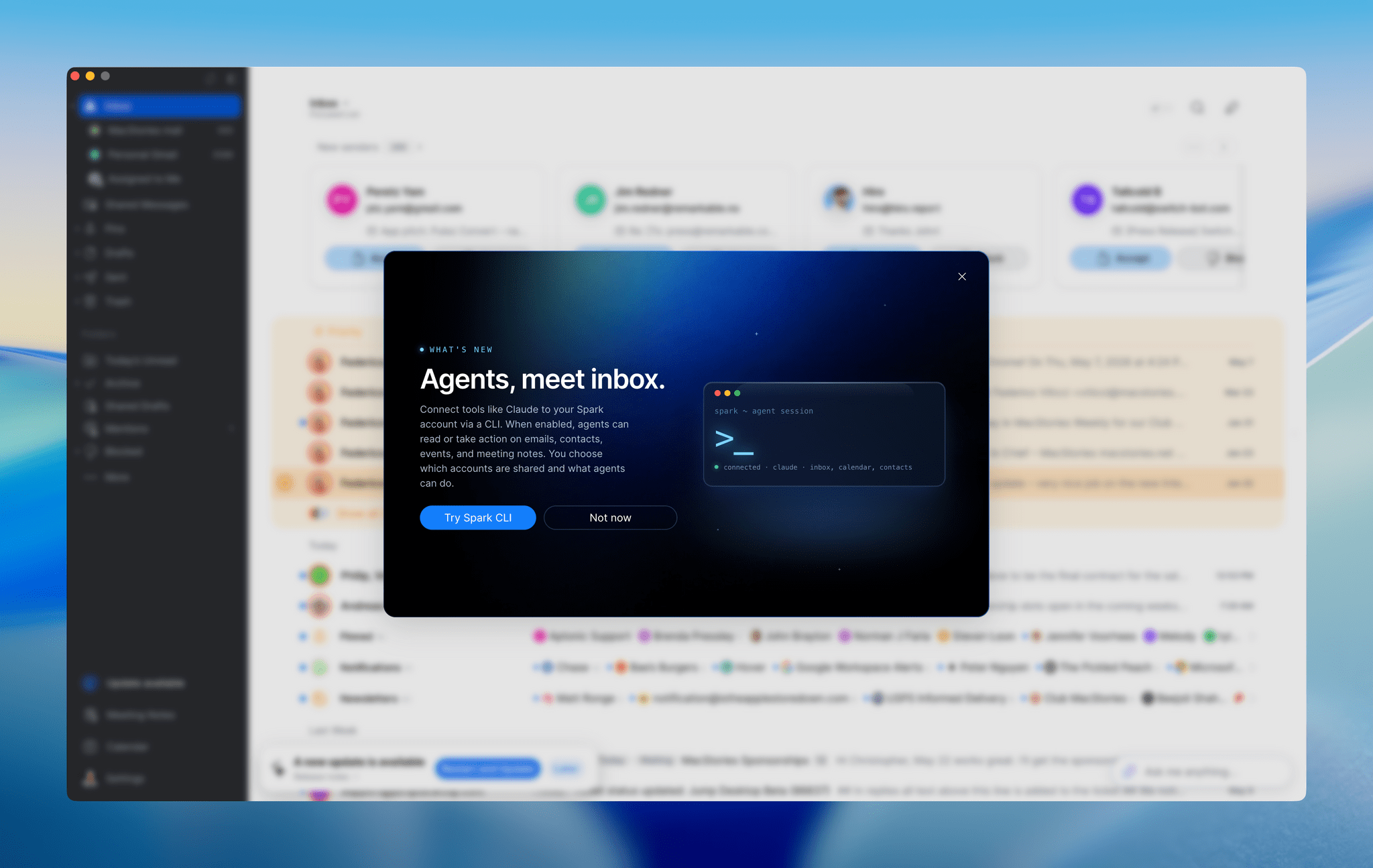

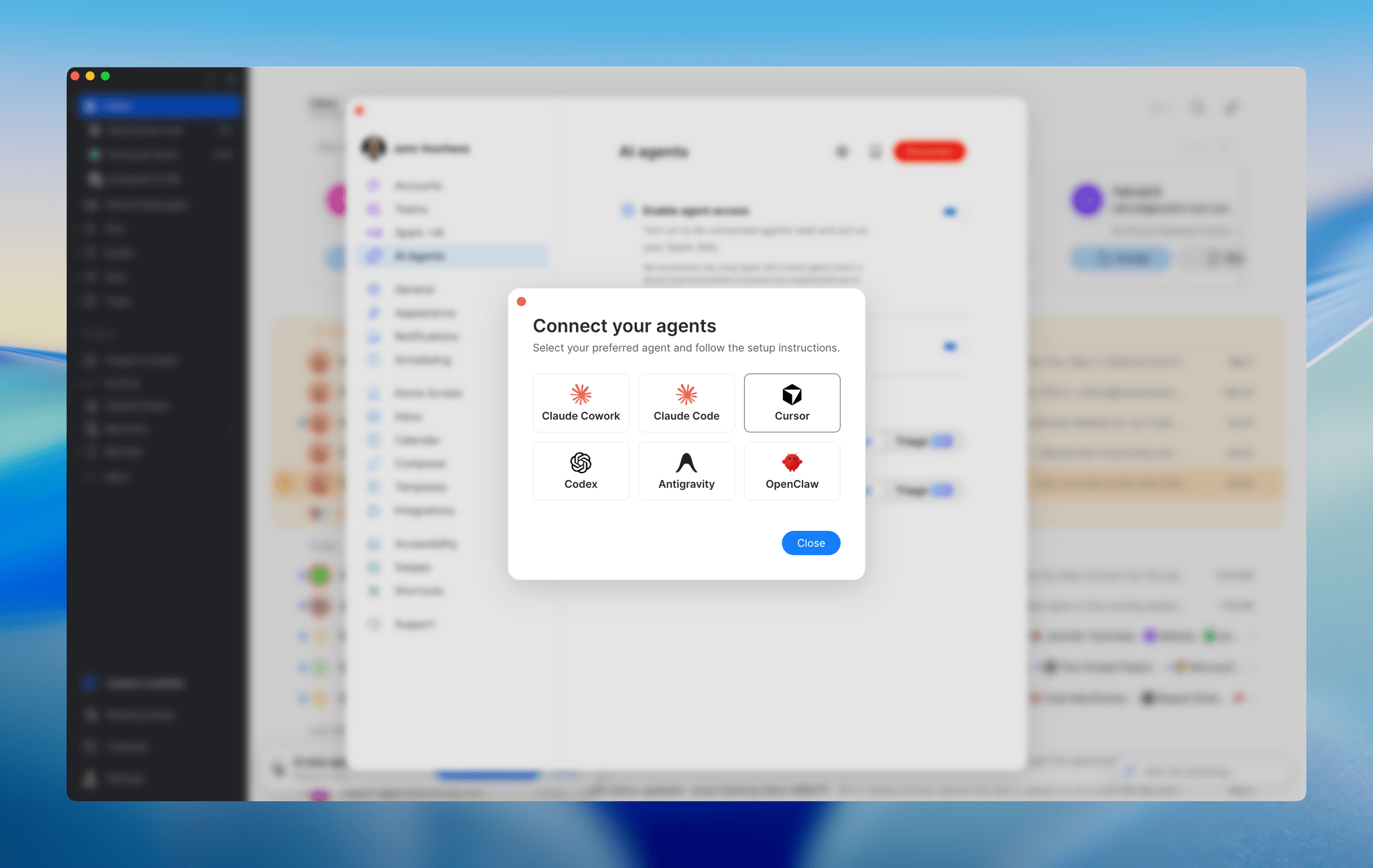

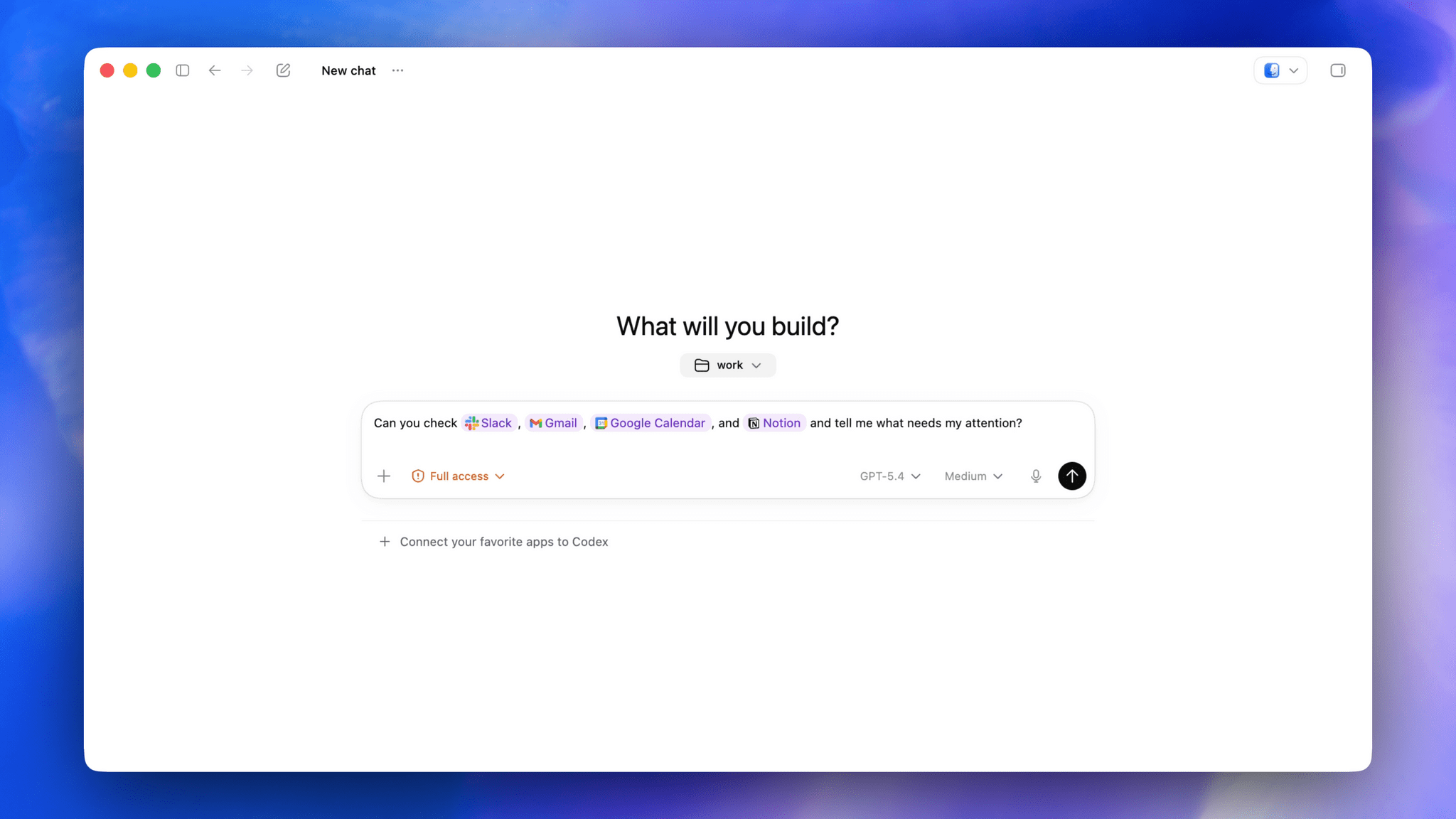

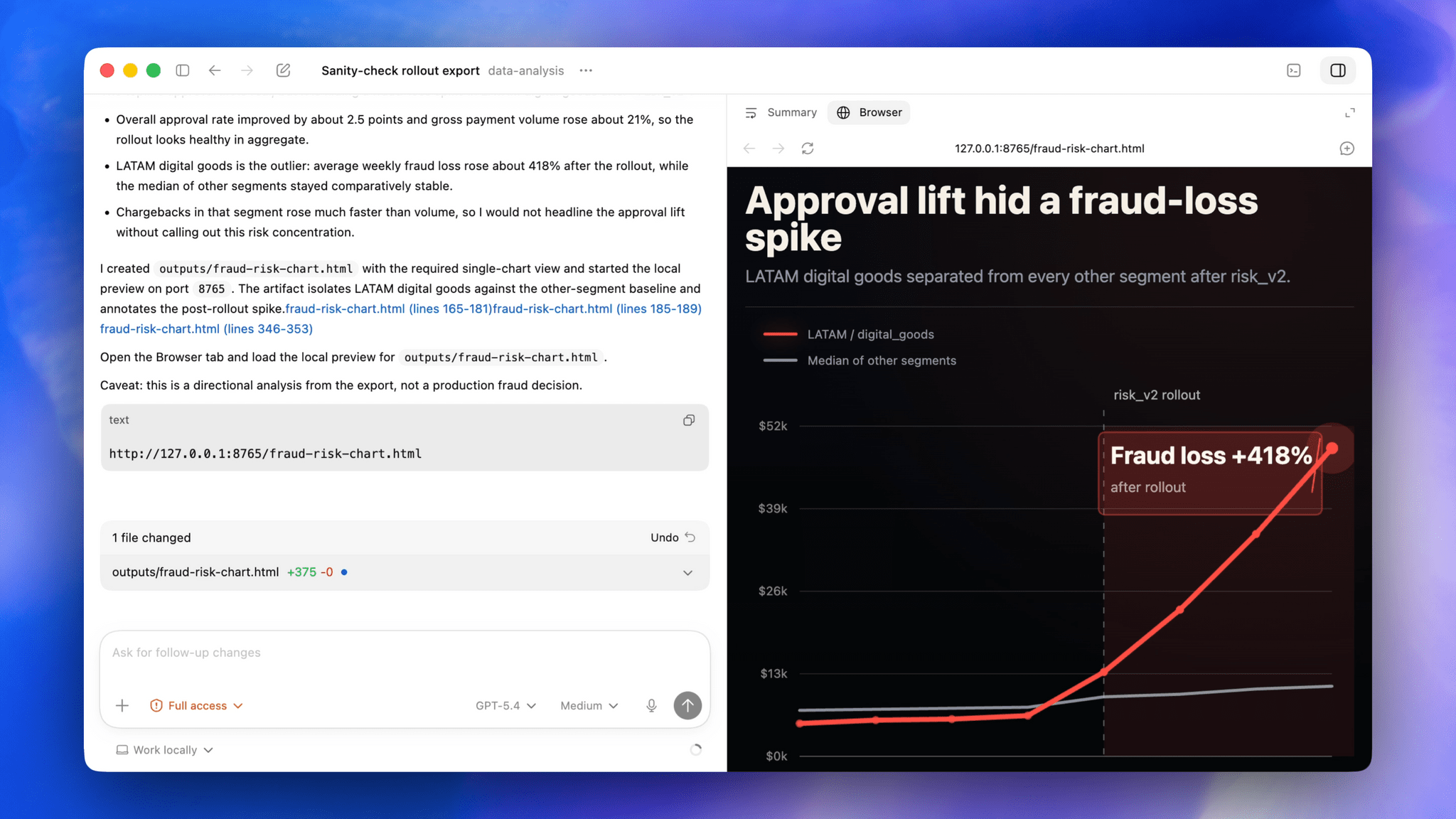

About two weeks ago, Spark, the email app by Readdle, was updated with a CLI and a set of agentic skills for Claude Code, Codex, and other agents, allowing them read-only access to messages, calendar events, contacts, and meeting notes. A few days ago, Readdle extended those features with email triage actions and more skills. What makes the approach clever is its local architecture, which keeps your message data on your Mac while making it available to agents.

CLIs are one of this year’s top app trends, with a wide variety of productivity apps adding them. The reason is simple: agents that work in the Terminal, like Claude Code and Codex, can use local CLIs, which keeps token usage down because the agent only sees a command’s text output instead of carrying tool schemas with it the way MCP servers do.
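To make that concrete, here is a minimal Python sketch of the pattern: the agent runs a local command and only the command’s text output comes back into its context. The `run_cli` helper and the stand-in command are hypothetical and purely illustrative. The stand-in is just the Python interpreter printing a line, not Spark’s actual CLI, whose commands aren’t shown here.

```python
import subprocess
import sys


def run_cli(argv: list[str], timeout: float = 30.0) -> str:
    """Run a local command and return only its trimmed standard output.

    Hypothetical helper: this is the text an agent would see after
    invoking a CLI, with no tool schema attached to it.
    """
    result = subprocess.run(
        argv,
        capture_output=True,  # collect stdout and stderr instead of printing them
        text=True,            # decode bytes to str
        timeout=timeout,      # don't hang forever on a stuck command
        check=True,           # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout.strip()


# Stand-in command (an assumption for illustration, not a real email CLI):
# the Python interpreter printing one line of text.
STAND_IN = [sys.executable, "-c", "print('3 unread threads')"]

if __name__ == "__main__":
    print(run_cli(STAND_IN))
```

Swapping the stand-in for a real CLI would only mean changing the argument list. The agent-side cost stays limited to whatever text the command prints.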

Spark isn’t the first to create an email CLI. The Google-created, but “not an official product,” googleworkspace CLI interfaces with Gmail and a bunch of other Google services, offering over 100 skills. The difference is that a CLI like googleworkspace contacts Google’s Gmail servers and acts on your messages in the cloud, whereas Spark’s CLI acts as a remote control for the Spark app itself, managing the messages locally on your Mac and then syncing them back to Gmail via the desktop app.

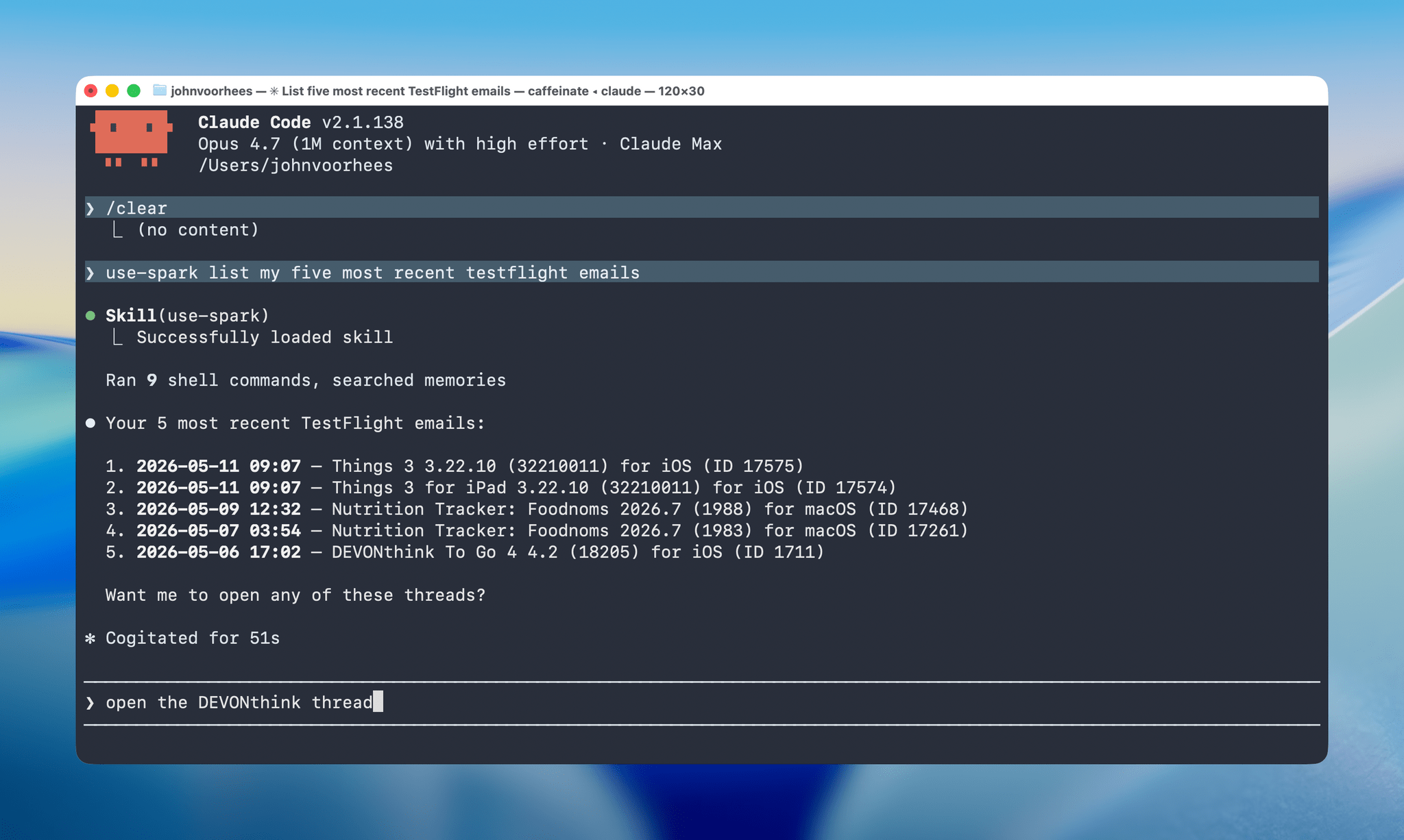

I’ve worked with both the googleworkspace CLI and Spark’s, and Spark’s is by far the easier one to use because you don’t need to set up a Google Cloud project or deal with OAuth. The only drawback is that the Spark app needs to be open for its CLI to work because everything happens on your Mac. As a practical matter, though, that limitation hasn’t affected me because my email app is already open whenever I’d want to use Spark’s CLI or skills anyway.

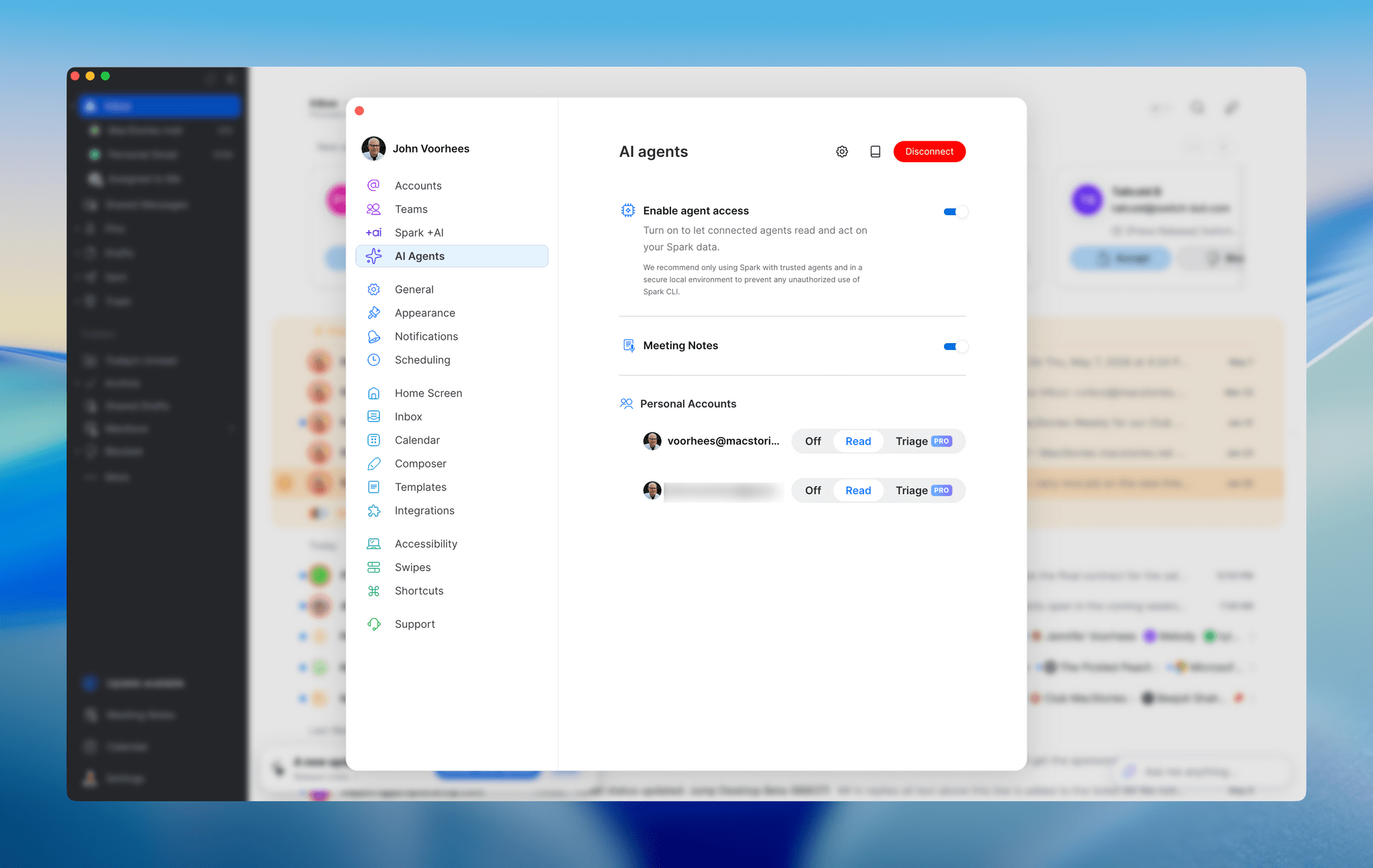

There are two levels to what Spark offers. The read-only CLI and skills are available to all users, whether or not they subscribe to Spark Pro. Those actions include the ability to search and summarize messages, fetch context, read threads, and view your calendar, contacts, and meeting notes. A Pro subscription adds message drafting, replying, snoozing, pinning, labeling, moving, and archiving, along with team commenting. It’s an excellent set of actions that uses syntax similar to Gmail, which means it should be familiar to many long-time Gmail users straight out of the box.

And there’s more. Readdle has also released a set of recipes and personas, which are open-source skills. The recipes include instructions for morning and end-of-day email reviews, reviewing new senders, catching up on messages after vacation, and more. Personas are more holistic approaches to your inbox that apply to an entire email session and have modes. For example, the Founder persona has Rapid Triage, Aggressive Delegation, and Cross-Team Oversight modes. Other personas include Executive Assistant, Freelancer, and Team Lead. Full details of every recipe and persona are available on Readdle’s GitHub page.

I’ve spent time using the read-only actions of Spark’s CLI with Claude Code, and it’s an excellent option for automating your email. Setup is simple and fast, and it works well. I’m not sure personas are for me, but there are a bunch of interesting ideas among the recipes, which I intend to explore more and use to create my own skills.

Spark Mail is available as a free download on the Mac App Store. The CLI’s triage actions are exclusive to users who subscribe to Spark Pro, which costs $20/month or $200/year.