Posts tagged with "photography"

More Than Just a Camera Grip: Belkin’s Stage PowerGrip for iPhone

For the past week, I’ve been testing the Belkin Stage PowerGrip. It’s an iPhone accessory that adds a DSLR-like grip to your iPhone while simultaneously charging it. Belkin isn’t the first company to make an accessory like this, but the Stage PowerGrip delivers a bigger battery at a more affordable price than its competitors. That’s why it initially caught my eye. However, what I didn’t expect was for the device to make a compelling case to become part of my everyday on-the-go setup, which it absolutely has. Here’s why.

Lux’s Sebastiaan de With on the iPhone 16e’s Essential Camera Experience→

As I read Sebastiaan de With’s review of the iPhone 16e’s camera, I found myself chuckling when I got to this part:

You can speculate what the ‘e’ in ‘16e’ stands for, but in my head it stands for ‘essential’. Some things that I consider particularly essential to the iPhone are all there: fantastic build quality, an OLED screen, iOS and all its apps, and Face ID. It even has satellite connectivity. Some other things I also consider essential are not here: MagSafe is very missed, for instance, but also multiple cameras. It be [sic] reasonable to look at Apple’s Camera app, then, and see what comprises the ‘essential’ iPhone camera experience according to Apple.

What amused me was that I initially planned to call my iPhone 16e review the ‘e’ Is for Essential, but I settled on ‘elemental’ instead. Whether the ‘e’ in iPhone 16e stands for either of our guesses or neither really doesn’t matter. Like Sebastiaan, I find what Apple chose to include and exclude from the 16e fascinating.

When it comes to the iPhone 16e’s camera, there are differences compared to the iPhone 16 Pro, and that comparison is the focus of Sebastiaan’s review. The 16e supports fewer features than the Pro, and the photos it takes don’t reproduce quite as much detail, especially in low-light conditions. There are other differences, too, so it’s worth studying the review’s side-by-side comparison shots of the 16e and the 16 Pro.

Overall, though, I think it’s fair to say Sebastiaan came away impressed with the 16e’s camera, which has been my experience, too. So far, I’ve only used it to shoot video for our podcasts, and with good lighting, the results are excellent. Despite some differences, the iPhone 16e, combined with the wealth of photo and video apps like Lux’s Halide and Kino, is a great way to enjoy the essential iPhone photography experience.

Our MacStories Setups: Updates Covering Video Production, Gaming, and More→

The second half of 2024 saw a lot of change to my setup and Federico’s. We launched the MacStories YouTube channel, expanded our family of podcasts, and spent time chasing the ultimate portable gaming setup for NPC: Next Portable Console. The result was that our setups have evolved rapidly. So, today, we thought we’d catch folks up on what’s changed.

Our Setups page has all the details, but you’ll notice a couple of trends from the changes we’ve made recently. As Federico recounted in iPad Pro for Everything: How I Rethought My Entire Workflow Around the New 11” iPad Pro, the linchpin to ditching his Mac altogether was recording audio and video to SD cards. He already had a solution for audio in place, but video required additional hardware, including the Sony ZV-E10 II camera.

Federico’s gaming setup has evolved, too. The Sony PS5 Pro replaced the original PS5, and he swapped the limited edition white Steam Deck in for the standard OLED version. He also revealed on NPC: Next Portable Console this week that he’s using a Lenovo y700 2024 gaming tablet imported from China to emulate Nintendo DS and 3DS games. The tablet will be available worldwide later this year as the Lenovo Legion Tab Gen 3. Other upgrades to existing hardware Federico uses include a move from the iPhone 16 Plus to the iPhone 16 Pro Max and an upgrade of the XREAL Airs to the XREAL One glasses.

As for me, CES and its bag size limitations pushed me to rethink my portable video and audio recording setups. For recording when I’m away from home, I added several items to my kit that I detailed in What’s in My CES Bag?, including:

- a Tomtoc sling bag

- the Insta360 Flow Pro gimbal

- DJI’s Mic 2 wireless microphones and receiver

- Lexar’s tiny 2TB SSD and hub accessory for the iPhone

On the gaming side of things, I added a white TrimUI Brick and a GameCube-inspired Retroid Pocket 5.

2024 was a big year for setup updates for both of us. We already have new hardware incoming for testing, so keep an eye on the Setups page. I expect we’ll update it several times in 2025, too.

What’s in My CES Bag?

Packing for CES has been a little different than packing for WWDC. The biggest differences are the huge crowds at CES and the limits the conference puts on the bags you can carry into venues.

My trusty Tom Bihn Synapse 25 backpack isn’t big, but it’s too large for CES, so the first thing I did was look for a bag that was small enough to meet the CES security rules but big enough to hold my 14” MacBook Pro and 11” iPad Pro, plus accessories. I decided on a medium-sized Tomtoc Navigator T24 sling bag, which is the perfect size. It holds 7 liters of stuff and has built-in padding to protect the corners of the MacBook Pro and iPad as well as pockets on the inside and outside to help organize cables and other things.

I don’t plan to carry my MacBook Pro with me during the day. The iPad Pro will be plenty for any writing and video production I do on the go, but it will be good to have the power and flexibility of the MacBook Pro when I return to my hotel room. For traveling to and from Las Vegas, I appreciate that the Tomtoc bag can fit everything I’m bringing.

With little room to spare, my setup is minimal. I’ll write on the iPad Pro and MacBook Pro, carrying the iPad with me tethered to my iPhone for Internet access. That’s a tried-and-true setup I already use whenever I’m away from home.

Lux Reveals Plans for Halide Mark III→

Yesterday, the team at Lux announced that they are working on the next major release of their pro camera app, Halide, which will be dubbed Halide Mark III. The next iteration of Halide, which Lux hopes to release in 2025, will focus on three areas:

- Color Grades: As with Kino, its video app and the App Store’s iPhone App of the Year, Lux plans to bring custom color grading to Halide.

- HDR: Lux is developing its own implementation of High Dynamic Range that will give Halide’s photos “a thoughtful and nuanced HDR look.”

- Redesign: Although Lux has not revealed any details, Halide will be redesigned, which should include a focus on color grading.

In addition to upcoming features, Lux announced a new community Discord for Halide and Kino, where it will collect feedback from customers and where they can share their interest in photography. The Discord and social media will also be where users can participate in the Halide and Kino 52-Week Challenge:

Every week you’ll get a photography challenge on our Discord. We’ll also include resources to help with the challenge — like app-specific tips. The challenge will be shared there and on our social media. Once you’ve got your shot, you can share your shots and see what the rest of the community came up with.

I love both Halide and Kino, and I’m intrigued by Lux’s new approach to development. Running a community can be challenging, but I expect the feedback Lux gets from users will be invaluable as the team works on the next big update to one of the App Store’s best camera apps.

Kino 1.2 Adds Camera Control Support and Higher Resolution and Frame Rate Recording

Lux Camera’s video camera app Kino has been updated to version 1.2, bringing a variety of new features and a redesigned icon. I covered the debut of Kino back in May and have been using it a lot lately because its design makes taking great-looking video so easy.

At the heart of Kino’s 1.2 update is support for the latest iPhones. Kino now works with the iPhone 16 and 16 Pro’s Camera Control for making adjustments that previously were only possible by touching your iPhone’s screen.

On the 16 Pro, the app also supports 4K video at 120fps with its Instant Grade feature enabled. That’s the feature that lets you pick a color grading preset created by video experts, including Stu Maschwitz, Sandwich Video, Evan Schneider, Tyler Stalman, and Kevin Ong. Version 1.2 of Kino lets you reorder those grades in its settings to make your favorites easier to access. Finally, Kino has added support for the following languages: Chinese, Dutch, French, German, Italian, Japanese, Korean, Portuguese, Russian, and Spanish.

If you haven’t tried Kino before, it’s available on the App Store for $9.99.

Pixelmator Team to Join Apple→

Today, the Pixelmator team (this and next week’s MacStories sponsor) announced on their company blog that they plan to join Apple after regulatory approvals are obtained. The Pixelmator team had this to say about the news:

We’ve been inspired by Apple since day one, crafting our products with the same razor-sharp focus on design, ease of use, and performance. And looking back, it’s crazy what a small group of dedicated people have been able to achieve over the years from all the way in Vilnius, Lithuania. Now, we’ll have the ability to reach an even wider audience and make an even bigger impact on the lives of creative people around the world.

There will be no material changes to the Pixelmator Pro, Pixelmator for iOS, and Photomator apps at this time.

The Pixelmator Team’s apps have always been among our favorites at MacStories. In 2022, we awarded Pixelmator Photo (now Photomator) the MacStories Selects Best Design Award, and in 2023, Pixelmator received our MacStories Selects Lifetime Achievement Award. Congratulations to everyone at Pixelmator. We can’t wait to see what this exciting new chapter means for them and their fantastic suite of apps.

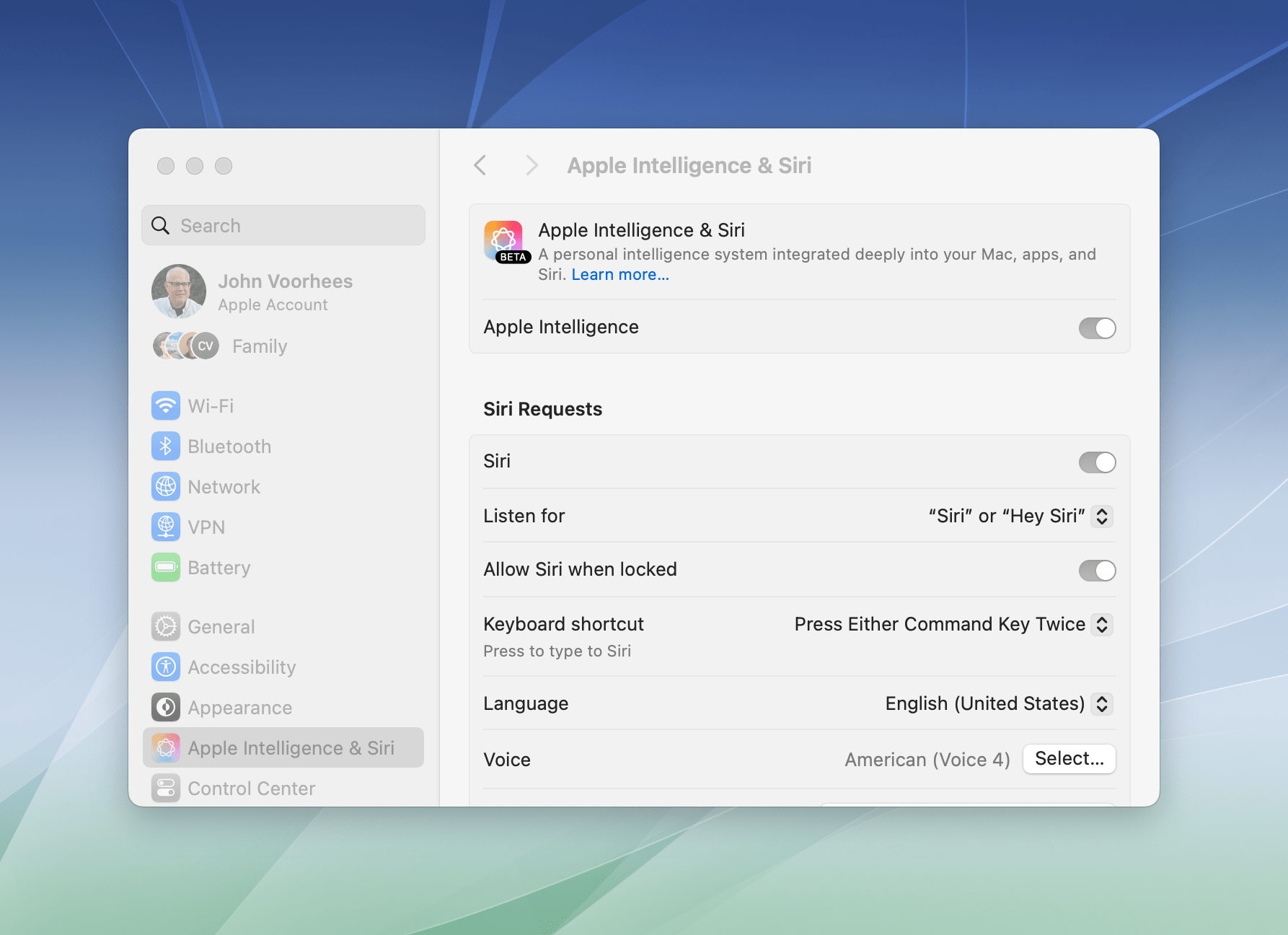

Apple’s Commitment to AI Is Clear, But Its Execution Is Uneven

The day has finally arrived. iOS 18.1, iPadOS 18.1, and macOS 15.1 are all out and include Apple’s first major foray into the world of artificial intelligence. Of course, Apple is no stranger to AI and machine learning, but the narrative took hold that the company was behind on AI because it didn’t market any of its OS features as such. Nor did it have anything resembling the generative AI tools from OpenAI, Midjourney, or a host of other companies.

However, with today’s OS updates, that has begun to change. Each update released today includes a far deeper set of new features than any other ‘.1’ release I can remember. Not only are the releases stuffed with a suite of artificial intelligence tools that Apple collectively refers to as Apple Intelligence, but there are a bunch of other new features that Niléane has written about, too.

The company is tackling AI in a unique and very Apple way that goes beyond just the marketing name the features have been given. As users have come to expect, Apple is taking an integrated approach. You don’t have to use a chatbot to do everything from proofreading text to summarizing articles; instead, Apple Intelligence is sprinkled throughout Apple’s OSes and system apps in ways that make them convenient to use with existing workflows.

If you don’t want to use Apple Intelligence, you can turn it off with a single toggle in each OS’s settings.

Apple also recognizes that not everyone is a fan of AI tools, so they’re just as easy to ignore or turn off completely from System Settings on a Mac or Settings on an iPhone or iPad. Users are in control of the experience and their data, which is refreshing since that’s far from given in the broader AI industry.

The Apple Intelligence features themselves are a decidedly mixed bag, though. Some I like, but others don’t work very well or aren’t especially useful. To be fair, Apple has said that Apple Intelligence is a beta feature. This isn’t the first time that the company has given a feature the “beta” label even after it’s been released widely and is no longer part of the official developer or public beta programs. However, it’s still an unusual move and seems to reveal the pressure Apple is under to demonstrate its AI bona fides. Whatever the reasons behind the release, there’s no escaping the fact that most of the Apple Intelligence features we see today feel unfinished and unpolished, while others remain months away from release.

Still, it’s very early days for Apple Intelligence. These features will eventually graduate from betas to final products, and along the way, I expect they’ll improve. They may not be perfect, but what is certain from the extent of today’s releases and what has already been previewed in the developer beta of iOS 18.2, iPadOS 18.2, and macOS 15.2 is that Apple Intelligence is going to be a major component of Apple’s OSes going forward, so let’s look at what’s available today, what works, and what needs more attention.

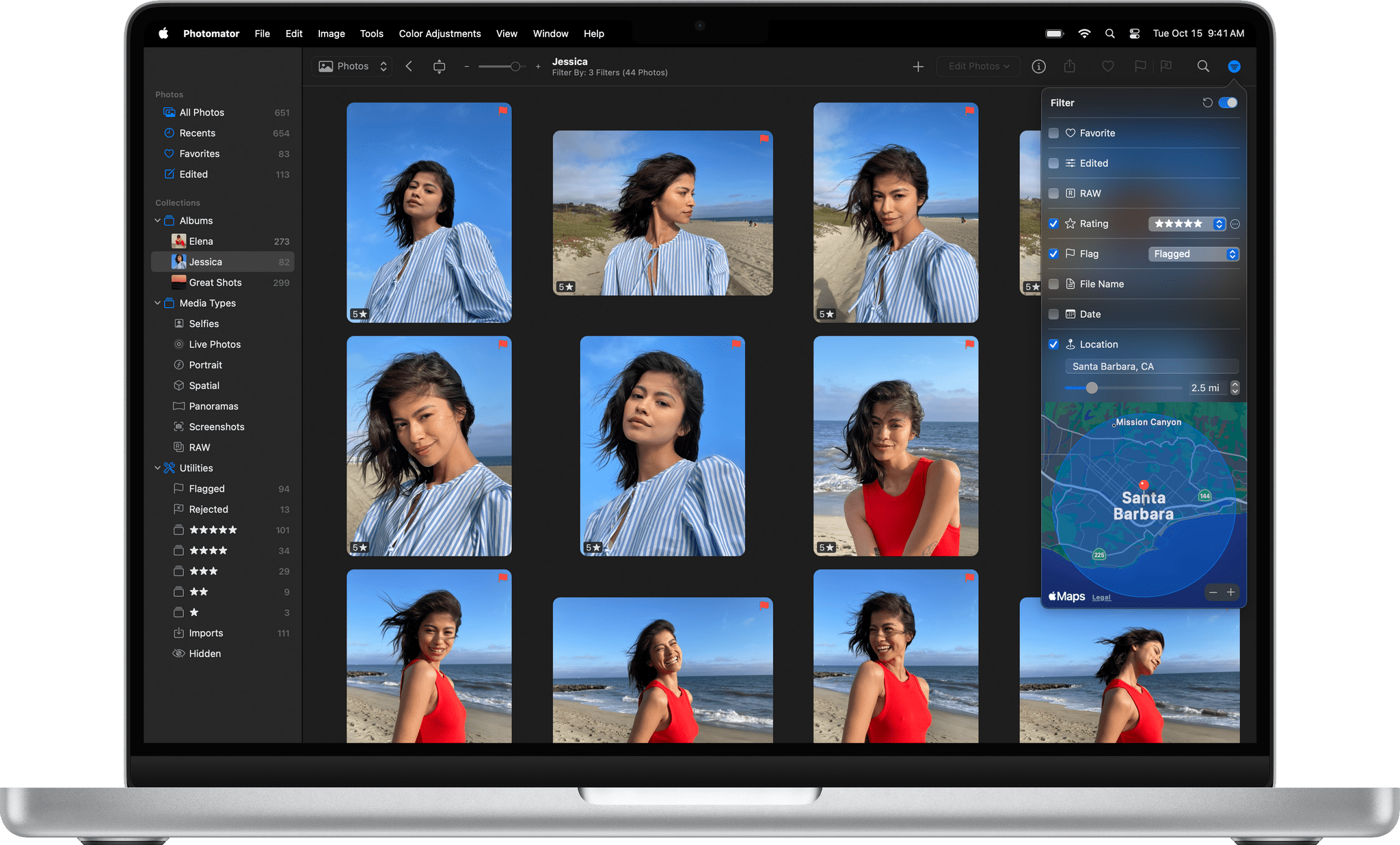

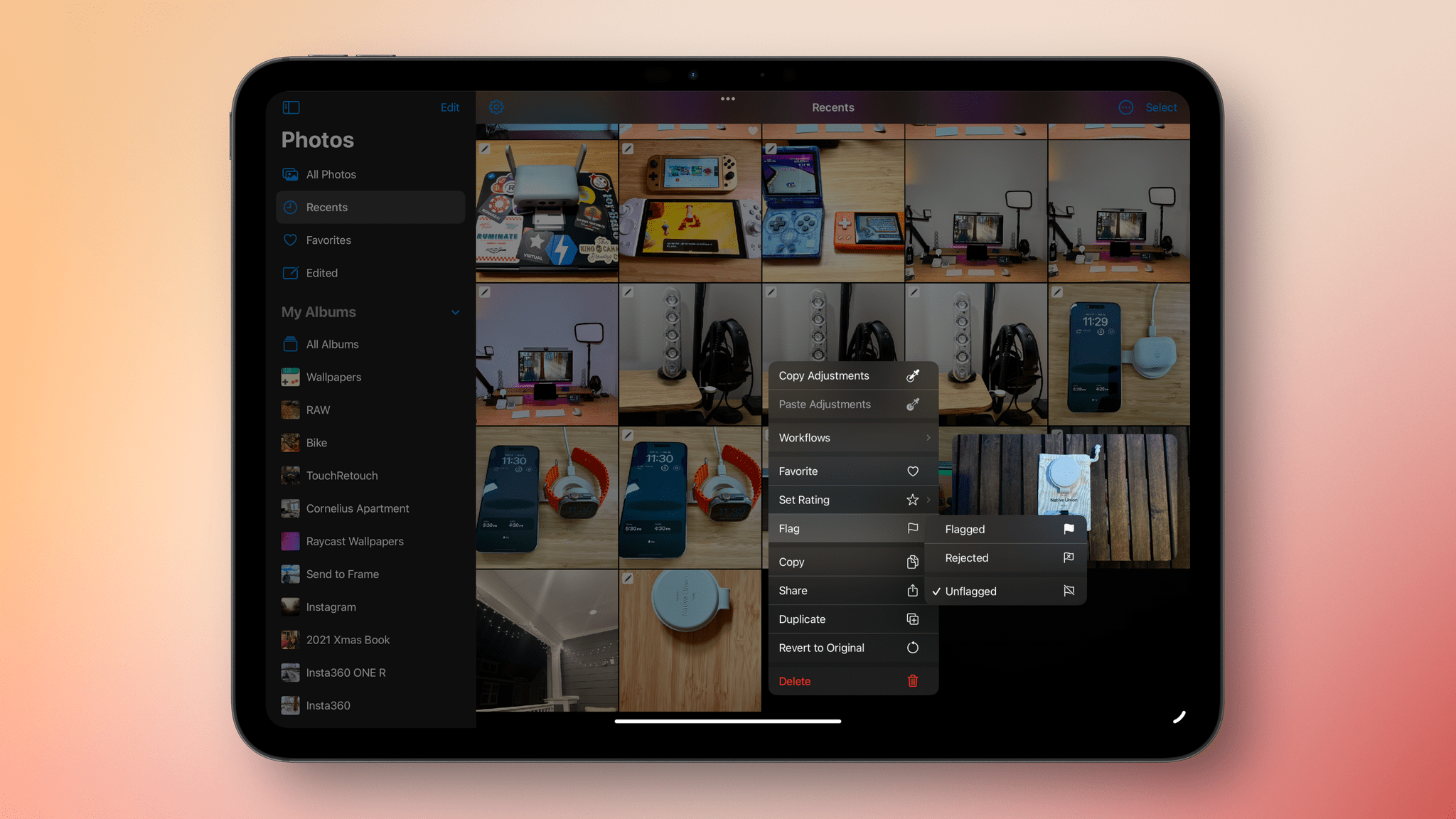

Photomator 3.4 Adds Photo Flagging, Rating, and Filtering

Today, Photomator 3.4 was released, adding flagging, rating, and filtering, which should substantially enhance how photos are organized with the app.

I haven’t spent much time with Photomator 3.4 yet, but the features it adds today will be familiar to anyone who has used other photo editors. The update adds the ability to flag and reject photos and apply a one- to five-star rating. Then, with filters based on flags, rejects, and star ratings, it’s easy to navigate among images to determine which to keep. The process is aided by extensive single-key shortcuts, too.

Photomator’s filtering options extend beyond flags, rejects, and stars. Other filtering options include whether an image is a RAW file or a favorite. You can filter based on a photo’s file name, date, and location, too. Flagged, rejected, and rated images are also gathered in special Utilities Collections, along with a new collection for imported photos. Flags and ratings applied in apps like Adobe Lightroom, which store them in metadata or XMP files, are preserved when those photos are imported, too.

I haven’t tried the iOS or iPadOS versions of Photomator yet, but they share similar features, including the ability to flag and star images in the app’s browser UI. The iPhone and iPad versions support context menus for flagging and rating and batch application of flags and stars. The iOS and iPadOS versions of Photomator also include collections that assemble flagged and starred items in one place but don’t support the Mac version’s other filtering tools.

Photomator 3.4 is available as a free download on the App Store for existing customers. Some features require a subscription or lifetime purchase.