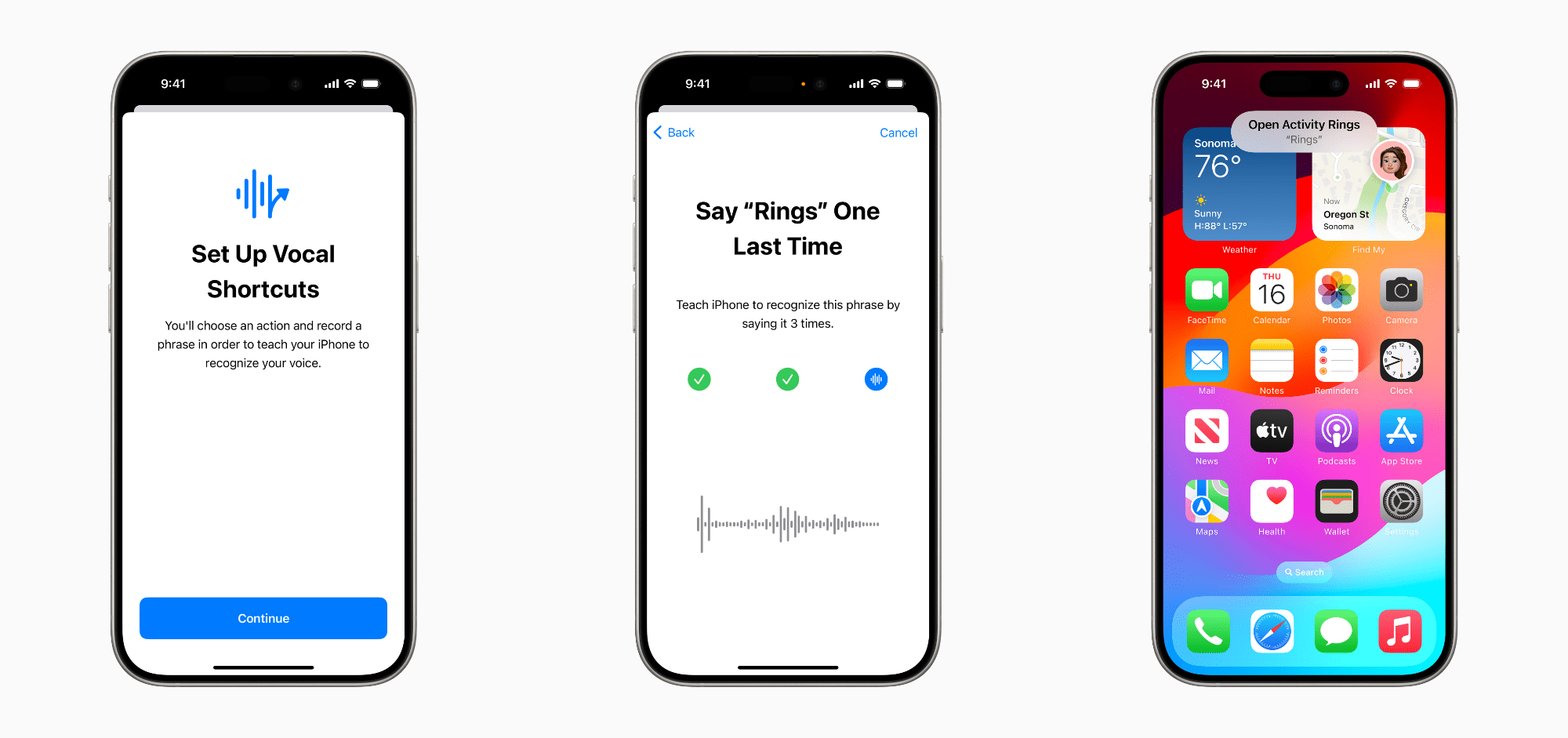

Earlier this week, Apple announced a series of new accessibility features coming to its OSes later this year. There was a lot announced, and it can sometimes be hard to understand how features translate into real-world benefits to users.

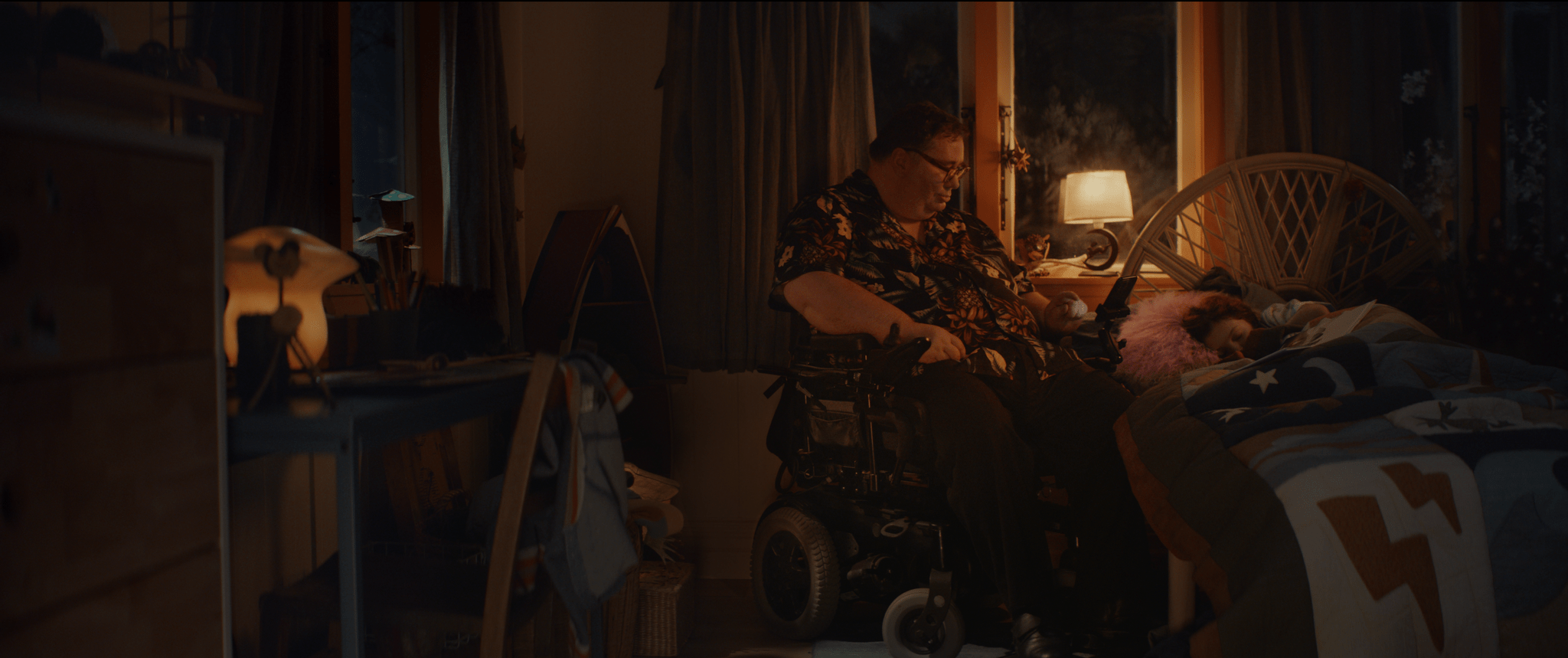

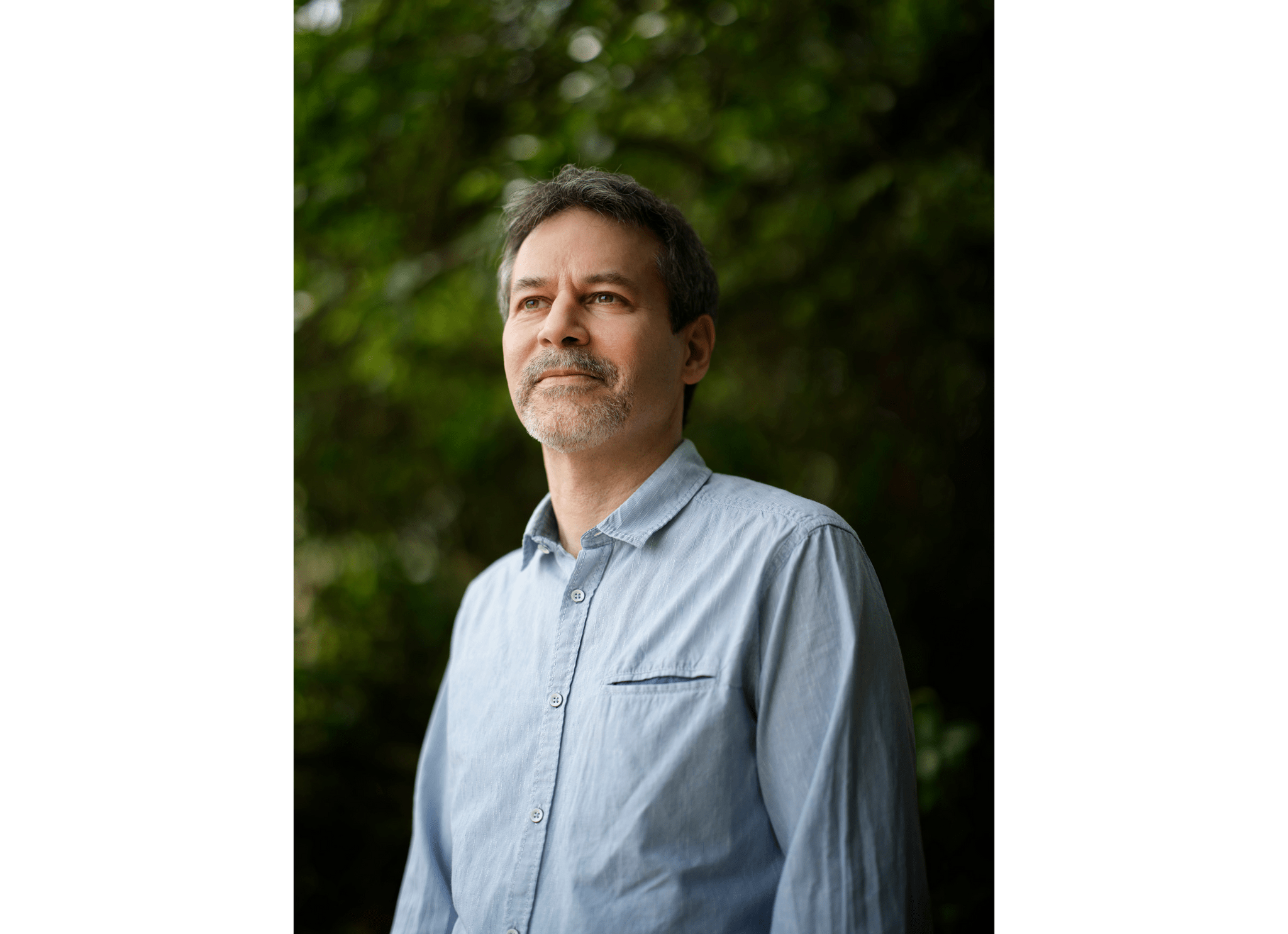

To get a better sense of what some of this week’s announcements mean, I spoke to David Niemeijer, the founder and CEO of AssistiveWare, an Amsterdam-based company that makes augmentative and alternative communication (AAC) apps for the iPhone and iPad, including Proloquo, Proloquo2Go, and Proloquo4Text. Each app addresses different needs, but what they all have in common is helping people who have difficulty expressing themselves verbally.

What follows is a lightly edited version of our conversation.

Let me start by asking you a little bit about AAC apps as a category because I’m sure we have readers who don’t know what augmentative and alternative communication apps are or what they do.

David Niemeijer: So, AAC is really about all ways of communication that do not involve speech. It includes body gestures, it includes things like signing, it includes texting, but in the context of apps, we typically think more about the high-tech kind of solutions that use the technology. All those other things are also considered AAC, though, because they augment or are an alternative for speech. These technologies and practices are used by people who either physically can’t speak, can’t speak in a way that people understand, or have other reasons why speech is difficult for them.

For example, what we see is that a lot of autistic people find speech extremely exhausting. So in many cases, they can speak, but there are many situations where they’d rather not speak because it drains their energy or where, because of, let’s say, anxiety or stress, speech is one of the first functions that drops, and then they can use AAC.

We also see it used by people with cerebral palsy, where it’s actually the muscles that create a challenge. [AAC apps] are used by people who have had a stroke, where the brain system that finds the right words and then sends the signals to the muscles is not functioning correctly. So there are many, many reasons. Roughly 2% of the world population cannot make themselves understood with their own voice.
