Thursday is Global Accessibility Awareness Day, and as in years past, Apple has previewed several new accessibility features coming later this year. This year, Apple is focusing on a wide range of accessibility features covering cognition, vision, hearing, mobility, and speech, all designed with feedback from disability communities. The company hasn’t said when these features will debut in its operating systems, but if past years are any indication, most should be released in the fall as part of the annual OS release cycle.

Assistive Access

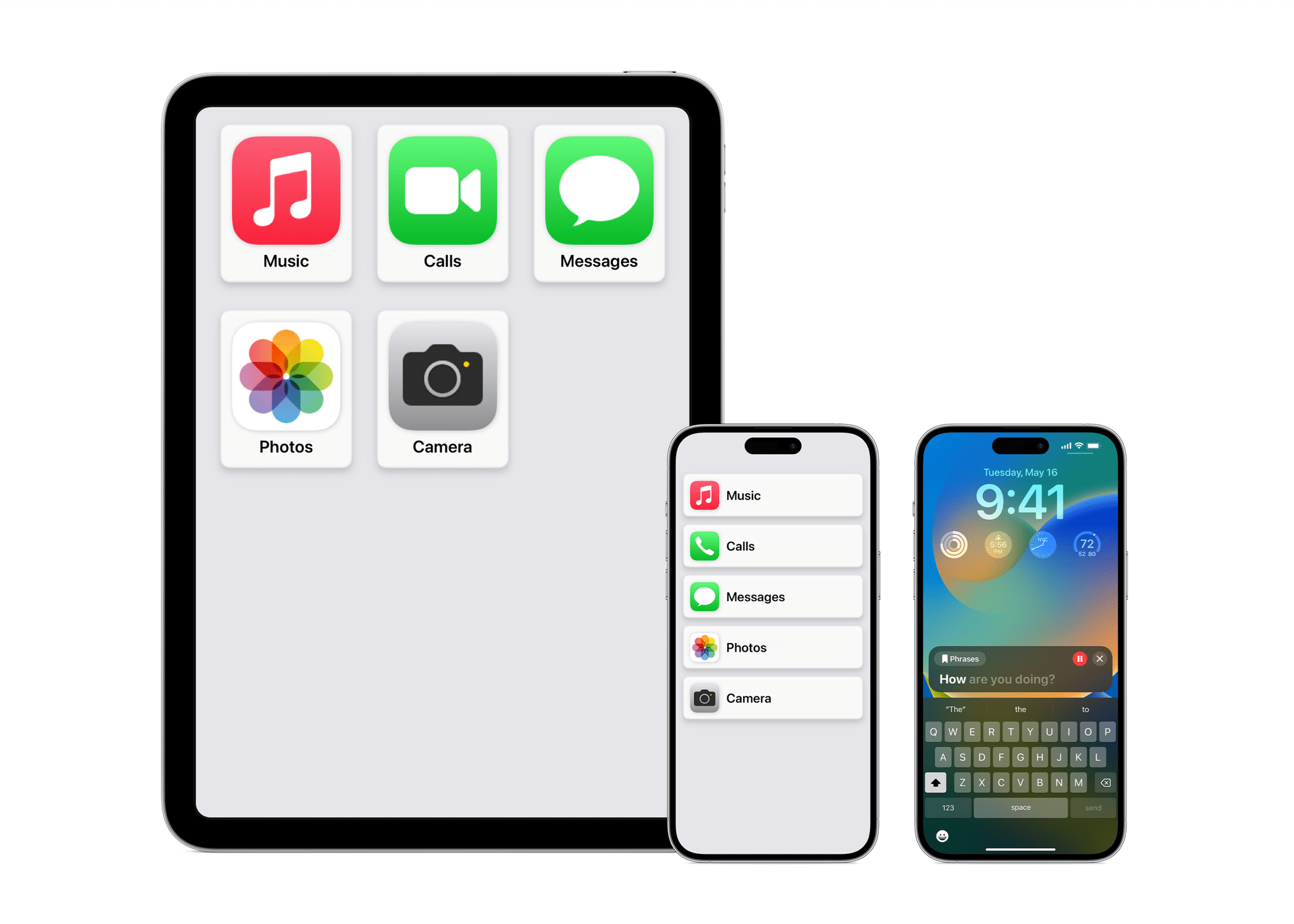

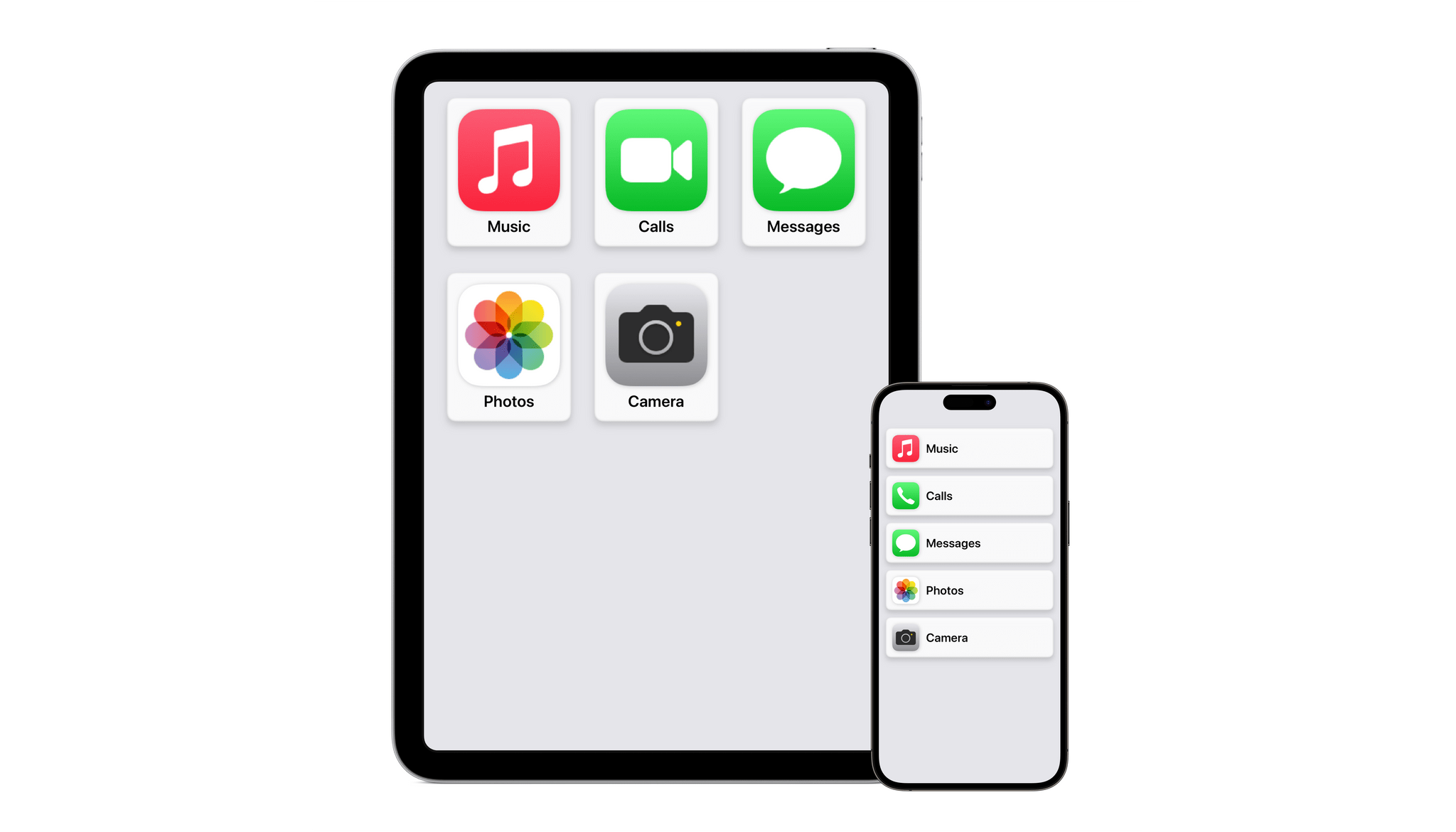

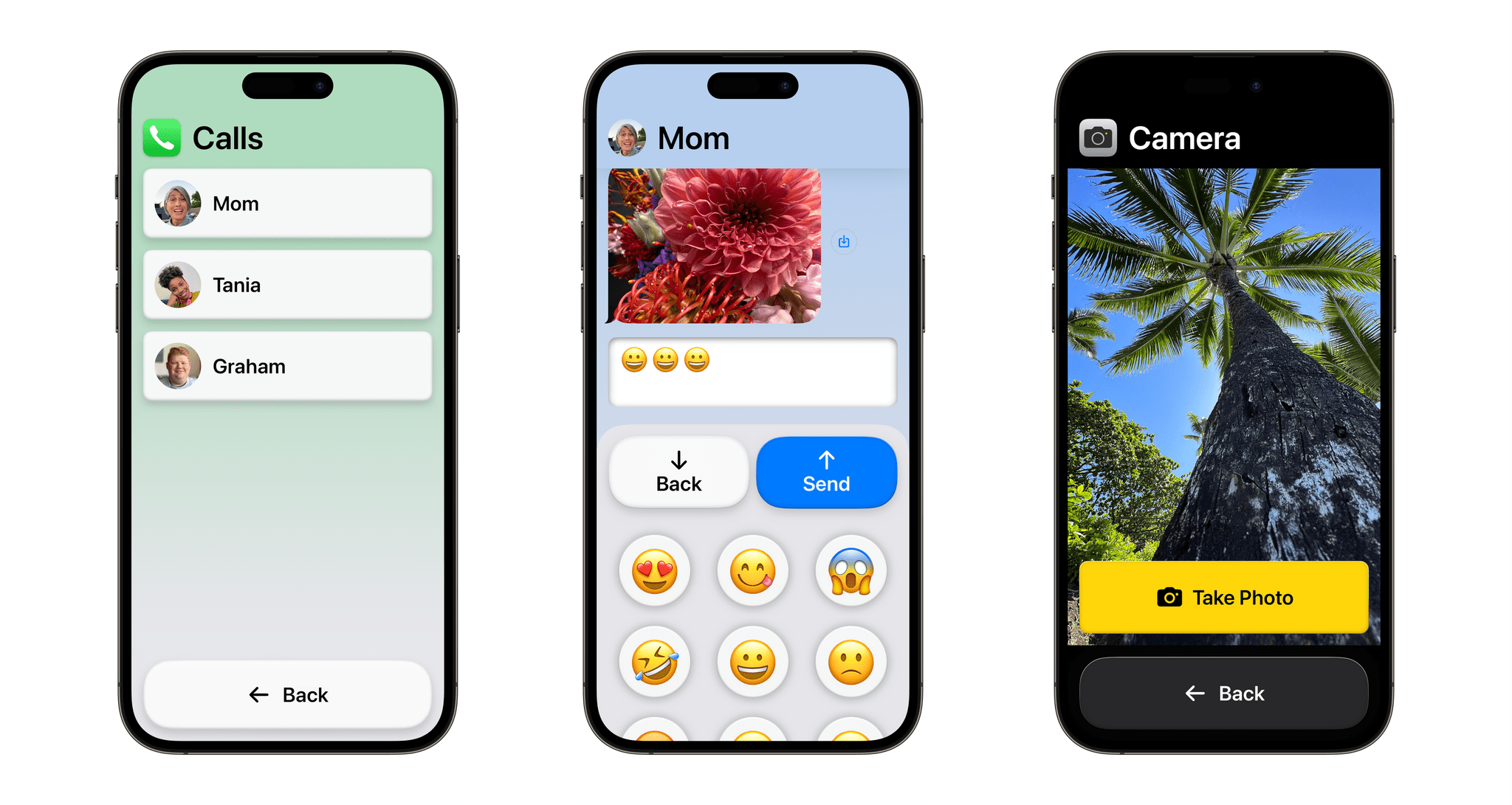

Assistive Access is a new customizable iPhone and iPad mode created for users with cognitive disabilities to lighten the cognitive load of using their favorite apps. Apple worked with users as well as their trusted supporters to focus on the activities they use most, like communicating with friends and family, taking and viewing photos, and listening to music. The result is a distillation of the experiences of the related apps. For instance, Phone and FaceTime have been combined into a single Calls app that handles both audio and video calls.

The UI for Assistive Access is highly customizable, allowing users and their trusted supporters to adapt it to their individual needs. For example, an iPhone’s Home Screen can be streamlined to show just a handful of apps with large, high-contrast buttons with big text labels. Alternatively, Assistive Access can be set up with a row-based UI for people who prefer text.

Live Speech and Personal Voice

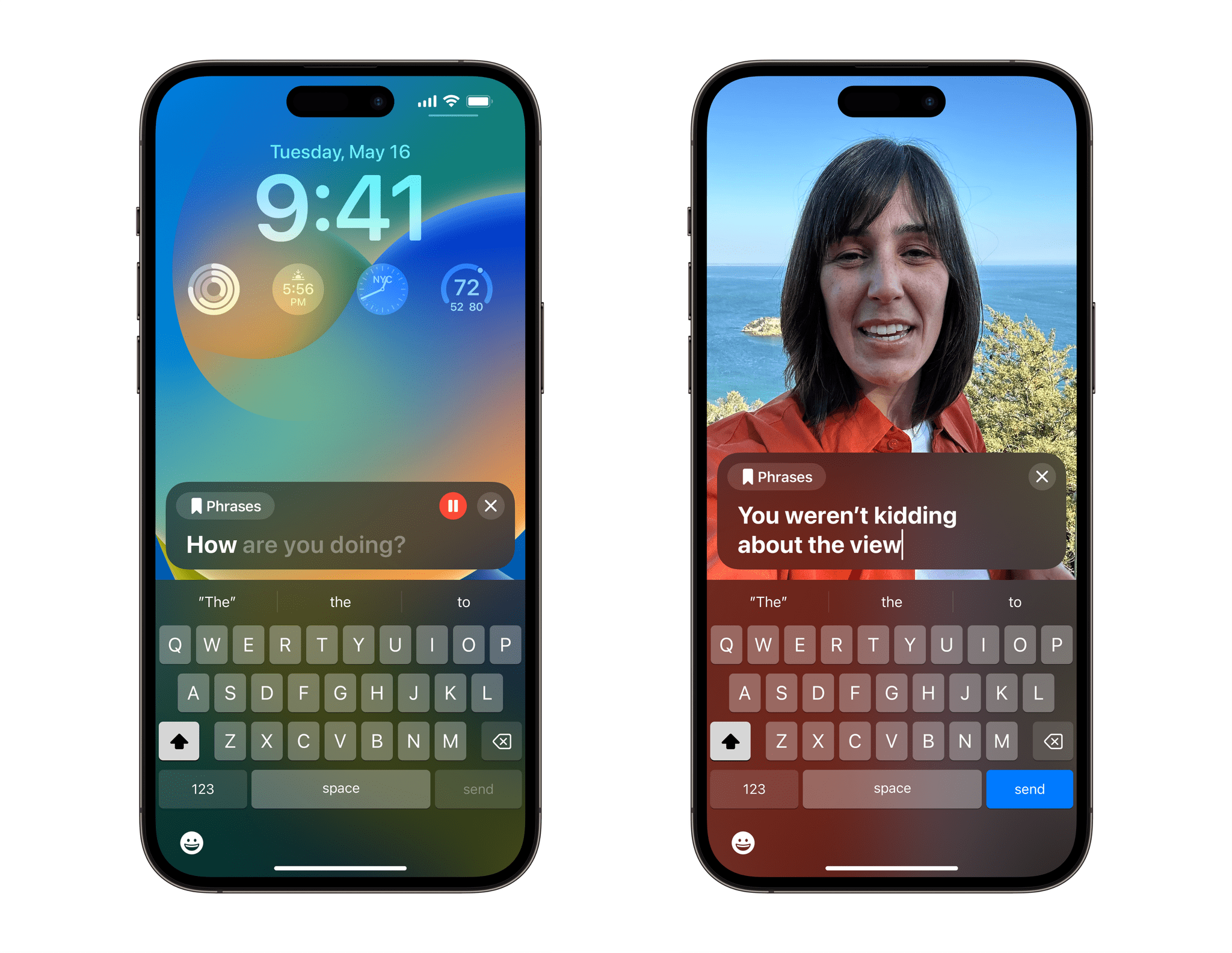

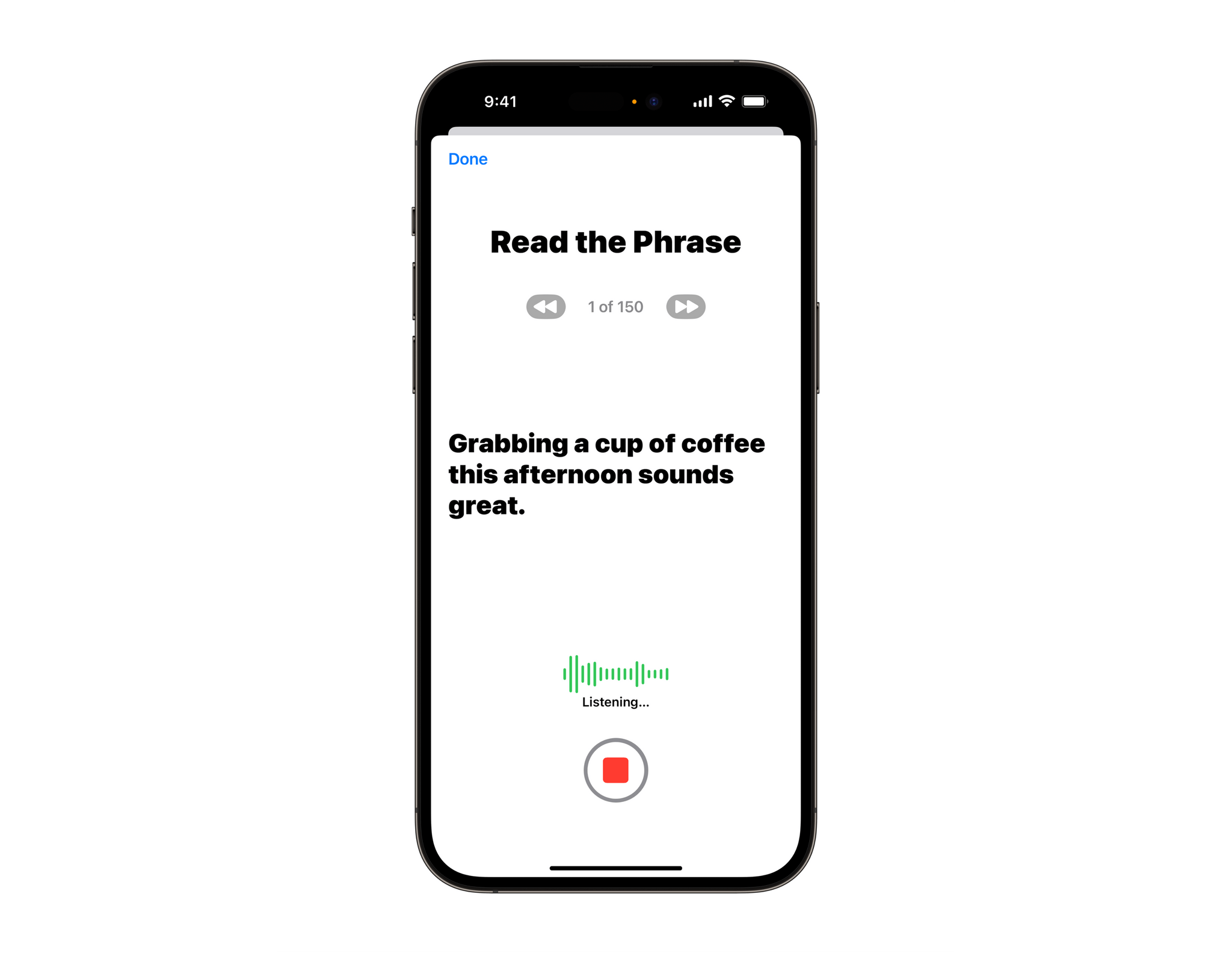

Live Speech is a new feature that works with the iPhone, iPad, and Mac, allowing users who can’t speak to type responses that are spoken aloud on phone and FaceTime calls, as well as during in-person conversations. Common phrases can also be saved and played back without typing them each time.

Apple also announced Personal Voice, which leverages the power of Apple silicon’s Neural Engine to assist users who are at risk of losing their ability to speak. The feature allows users to record 15 minutes of audio by reading randomized text prompts on iPhone, iPad, or Apple silicon Mac, which Personal Voice uses to create a facsimile of their voice. The process takes place on-device using machine learning, and the resulting voice can be synced to other devices using iCloud end-to-end encryption if users opt in. At launch, Personal Voice will be English-only.

Personal Voice is a very big deal for people faced with losing their ability to speak as a result of an ALS diagnosis or other disability:

“At the end of the day, the most important thing is being able to communicate with friends and family,” said Philip Green, board member and ALS advocate at the Team Gleason nonprofit, who has experienced significant changes to his voice since receiving his ALS diagnosis in 2018. “If you can tell them you love them, in a voice that sounds like you, it makes all the difference in the world — and being able to create your synthetic voice on your iPhone in just 15 minutes is extraordinary.”

Personal Voice also demonstrates the extent to which machine learning can be accomplished locally on iPhones and iPads. I’ve played around with speech synthesis myself using ElevenLabs’ tools, but those require you to upload samples of your voice, trusting that the company creating the voice will not misuse your voice data. Personal Voice achieves an important accessibility goal without compromising privacy, which is a big win for users. I haven’t heard enough examples of Personal Voice to evaluate how well it works compared to other options, but what I have heard was very good, so I’m optimistic that users will like it.

Point and Speak in Magnifier

The Magnifier app is a terrific accessibility tool and will be getting even better with Point and Speak. The feature is an extension of Detection Mode, which was introduced last year and helps blind and low-vision users find and navigate doors when they arrive somewhere. Like that feature, Point and Speak combines the power of camera hardware, LiDAR, and text recognition to help users interact with things in their environment, such as home appliances.

For example, as the video above shows, a user can hold an iPhone or iPad up to a microwave oven, and as they move their finger over each button, the Magnifier app will speak the text of the label on the button. It’s a remarkable coordination of several hardware and software technologies that boils down to one simple feature that promises to make everyday life easier for many users. Point and Speak will require an iPhone or iPad with a LiDAR scanner and will support English, French, Italian, German, Spanish, Portuguese, Chinese, Cantonese, Korean, Japanese, and Ukrainian at launch.

Other Features and Events

Apple also announced a grab bag of other new accessibility features that are coming later this year:

- Made for iPhone hearing devices will work with M1 and M2 Macs

- Voice Control is gaining phonetic suggestions for words that sound similar when a user is editing text in English, Spanish, French, and German

- A Voice Control guide will be added to the iPhone, iPad, and Mac to provide tips for using the feature

- Switch Control will add the ability to allow a switch to serve as a game controller

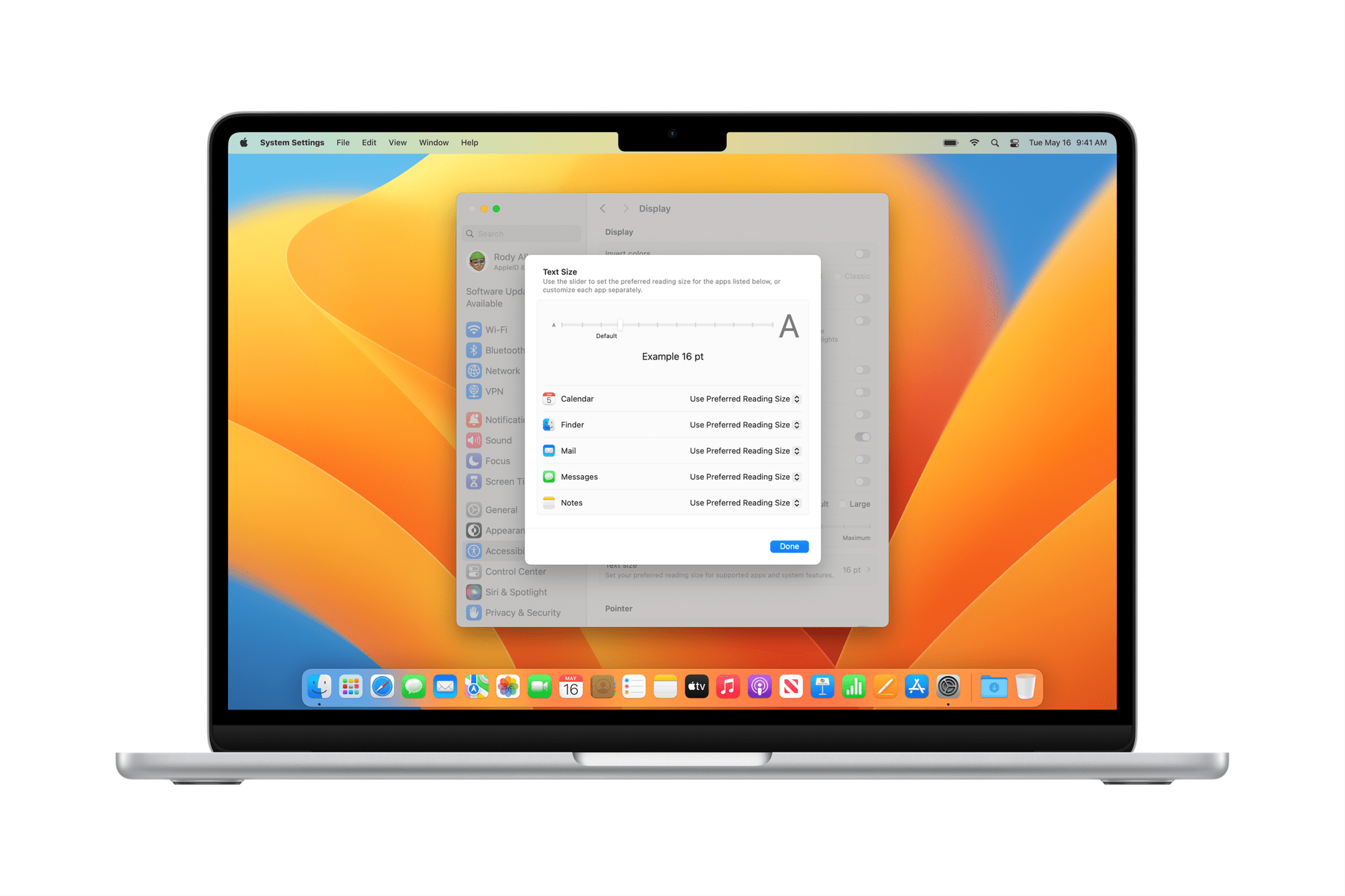

- Adjusting text size will be easier in Mac apps, including Finder, Messages, Mail, Calendar, and Notes

- Animations can be set so they will automatically pause in Messages and Safari for users who are sensitive to fast animations

- Siri voices will sound natural for VoiceOver users when played back between 0.8x and 2x speeds

To celebrate Global Accessibility Awareness Day, Apple is also rolling out SignTime to more of its stores. The program, which offers on-demand sign language interpreters for Apple Store and Apple Support customers, will expand on May 18th to Italy, Germany, Spain, and South Korea. In addition:

- Shortcuts is adding Remember This, a shortcut for users with cognitive disabilities that creates a visual diary in the Notes app

- Apple Podcasts will have a collection of shows about accessibility technology

- Apple TV is curating TV shows and movies from the disability community

- Apple Books is spotlighting the memoir of Judith Heumann, a disability rights pioneer

- Apple Music is featuring American Sign Language (ASL) music videos

- Apple Fitness+ trainer Jamie-Ray Hartshorne will highlight accessibility features of the service’s workouts

- The App Store will spotlight Aloysius Gan, Jordyn Zimmerman, and Bradley Heaven, three disability community leaders, and their experiences with augmentative and alternative communication (AAC) apps for nonspeaking people

Apple has made a tradition of announcing its upcoming accessibility features the week of Global Accessibility Awareness Day. It’s a great way to shine a spotlight on the company’s efforts to make its products available to everyone and how technology can make that a reality. This year, even more than others, there are a lot of accessibility innovations to look forward to in upcoming OS updates.