Keyboard and Autocorrect

The iPhone’s autocorrect feature has been the butt of so many Internet jokes over the years, and rightfully so: while Eat Up Martha may have been a niche problem for a very peculiar device, having to ducking fix constant mistakes on the keyboard we use to chat with friends, family, and colleagues for multiple hours every day is a problem of a completely different order of magnitude.

Imagine my relief, then, when I realized that iOS 17’s brand new autocorrect, based on a transformer model, was not just marketing speak: it actually works and makes typing on the iPhone’s (and iPad’s) software keyboard a…pleasant experience. I can’t believe I’m saying this, but I love Apple’s new autocorrect system, and I never even remotely enjoyed typing on a software keyboard before.

The new autocorrect is so good that it allowed me to write a large chunk of this review without using the Magic Keyboard for iPad at all.[14]

There are a few different layers to Apple’s new autocorrect system, but it all starts with the transformer model that is available for the English, Spanish, and French keyboards in this first version of iOS 17. Understanding the underpinnings of a transformer model is far above my pay grade, but I’d sum up what it does as follows:

- It allows for far greater accuracy when checking for typos and grammatical errors that may span entire sentences;

- It can proactively suggest the next word you want to type more quickly and accurately than before;

- It can also suggest multiple words you may want to type next, offering suggestions for whole parts of sentences if its confidence level is high enough.

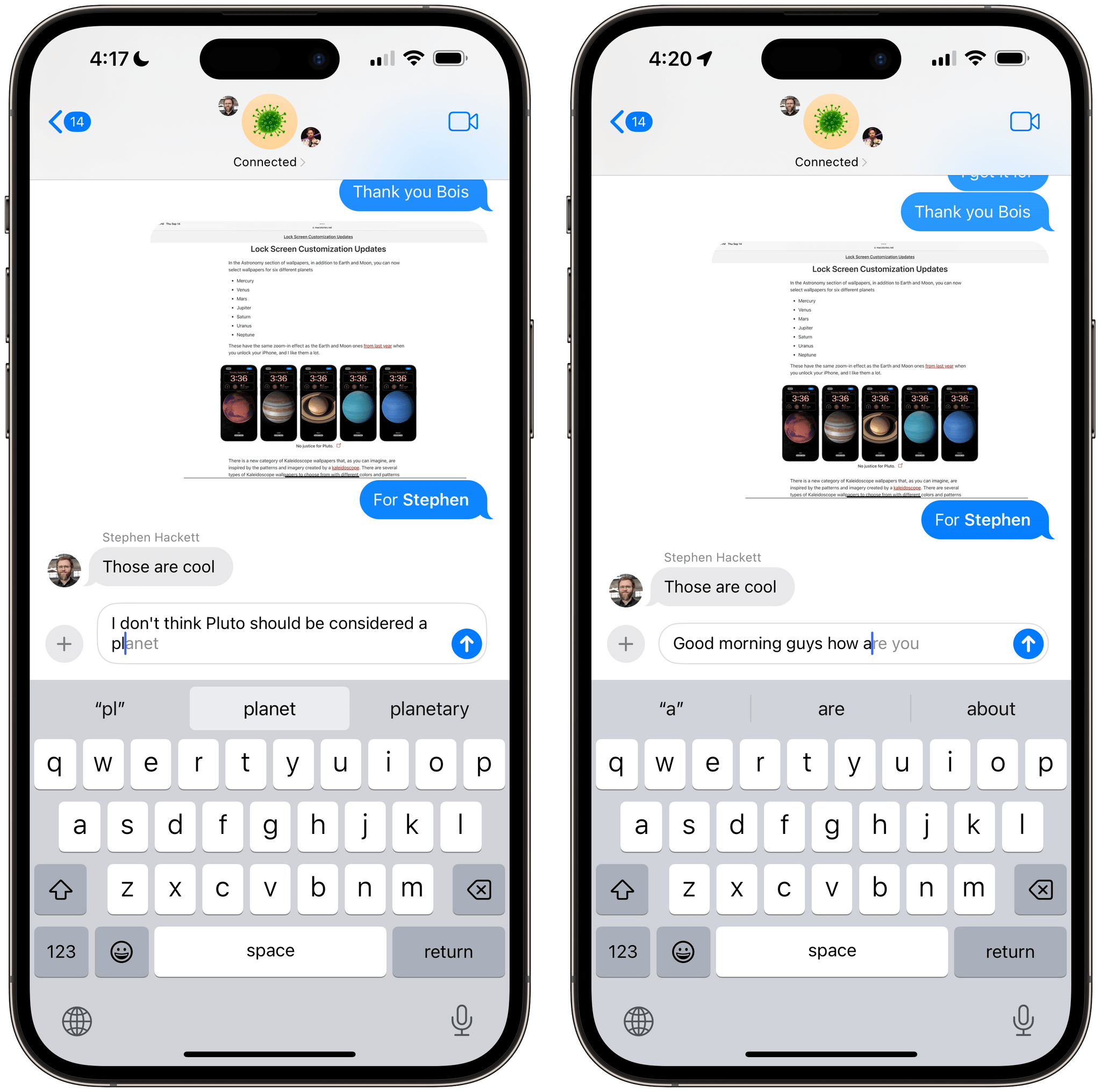

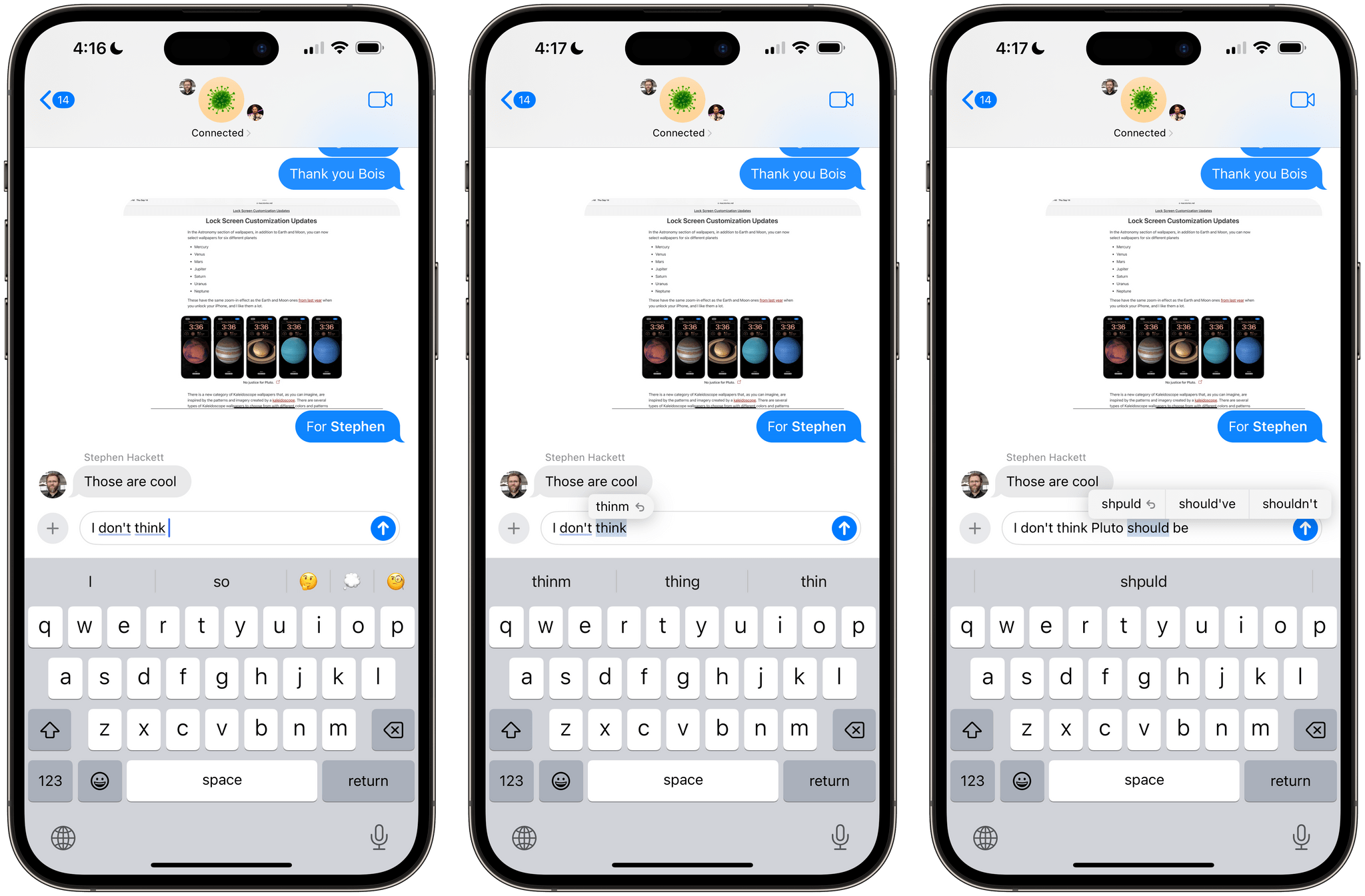

All of these traits are complemented by the refreshed user experience Apple designed for the system keyboard in iOS 17. For starters, when a word is automatically corrected, it gets underlined; tap the underlined word, and a new popup appears, allowing you to revert to what you originally typed or choose from other suggestions.[15]

When a word gets underlined in light blue, it means iOS 17 autocorrected it. If you tap it (center, right), you can see what you actually typed and optionally revert to it or view other suggestions.

In my experience with iOS and iPadOS 17, more often than not, the default automatic correction is good enough and doesn’t need any further tweaking. The system is remarkable and finally feels like it’s working alongside me rather than against me when I want to type something quickly on my phone. That said, I like that I can quickly go back and change a correction or pick a different suggestion. And if I do pick what I originally typed, iOS 17 will learn from my choice and won’t automatically correct that word the next time I type it that way (but it’ll still underline it in red as a typo).

Apps that use native text views on iOS and iPadOS (sadly, this rules out Obsidian, which is built with web technologies[16]) can also take advantage of the new proactive suggestions displayed as you type next to the cursor. The new language model trained by Apple will frequently try to infer the word you want to type and offer to complete it for you by displaying the next few letters in a lighter gray next to the cursor; press the space bar to accept the suggestion, and the word will be autocompleted. Sometimes, iOS 17 will even try to complete multiple words at once, which you can also accept with the space bar.

Multi-word suggestions are rare, but I’m impressed every time they work and I can hit the space bar to fill in an entire sentence with one tap. It feels like magic compared to the past 16 years of typing on an iPhone, and that’s maybe the best way I can describe what the new autocorrect is like in everyday usage. It’s as if we’re finally seeing what a truly modern, intelligent keyboard designed by Apple should be, and I can’t imagine ever going back to the pre-iOS/iPadOS 17 software keyboard now. With Vision Pro and its floating keyboard coming out soon, I’m not surprised Apple prioritized shipping a powerful new autocorrect system this year.

The fact that I was able to write entire chapters of this review using the touch keyboard on my iPad Pro at night should tell you everything you need to know about the quality of the new autocorrect and its proactive suggestions. If a friend or relative asks you for reasons to upgrade to iOS 17, tell them that autocorrect is finally Good.

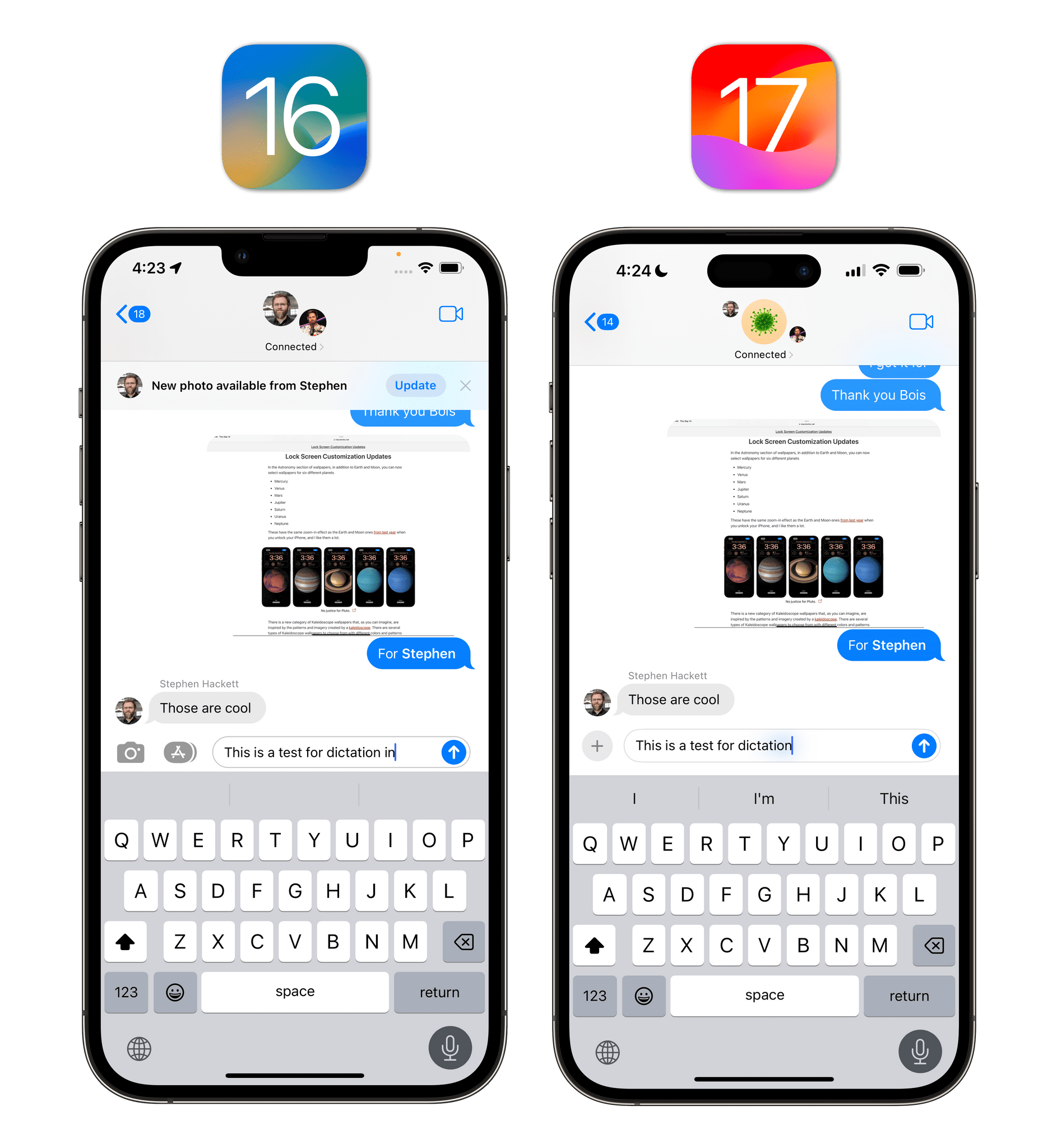

Dictation has undergone a subtle redesign in iOS 17: when dictation is active, the cursor has a slightly different animation and glow around it, which, according to Apple, should “improve its look and feel”. The language picker also looks different from iOS 16 and is consistent with the iPadOS keyboard picker, but it still requires two taps to switch between languages. I honestly don’t think this dictation UI is an improvement over iOS 16’s; it just looks newer.

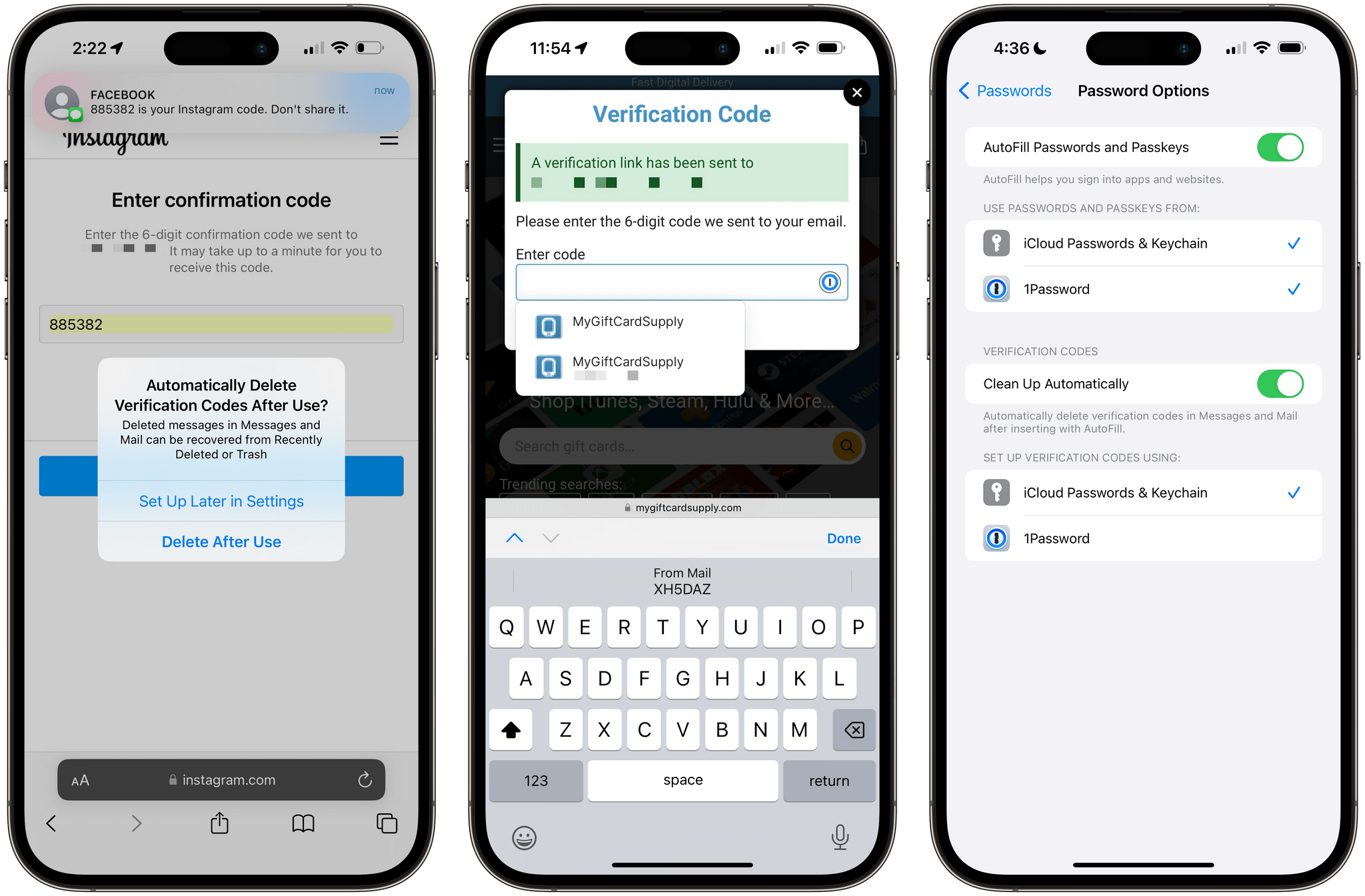

I saved the best for last: in iOS 17, Apple is improving upon one of the absolute best iOS features in modern history – security code autofill from Messages, introduced in iOS 12 – by extending it in two ways: messages with verification codes can now be automatically deleted after they’re autofilled from the keyboard, and codes received over email in the Mail app are supported too.

Security code autofill for Messages and Mail. You can now choose to automatically delete these messages after filling, which is an option you can also change in Settings later (right).

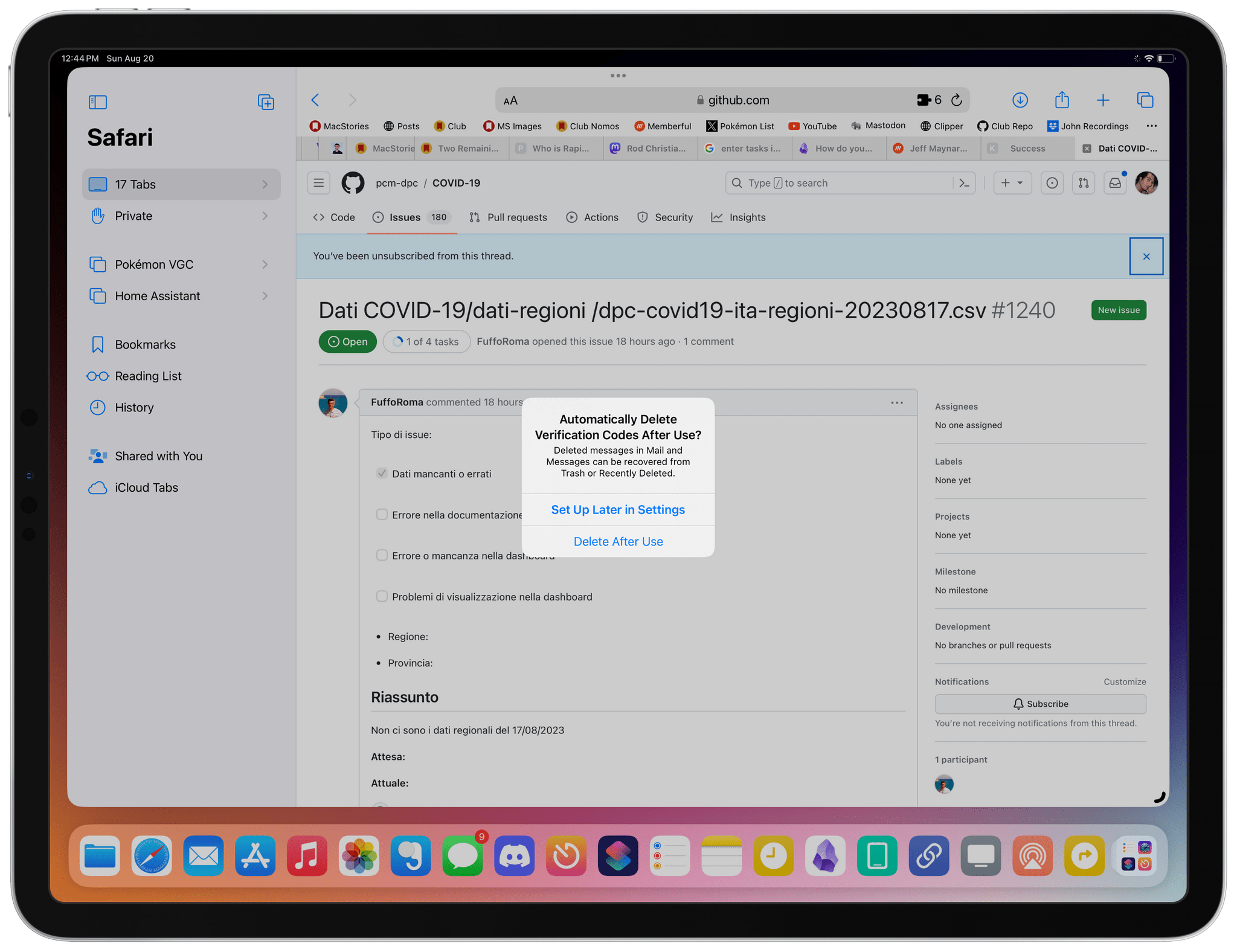

The first time you receive a verification code, iOS and iPadOS 17 will ask you if you want to enable automatic deletion of such messages. The idea is that these messages, once the code they contain is autofilled, are useless: they just clog your Messages or Mail inbox until you manually delete them. I enabled this preference instantly since this is a behavior I’ve always wanted; plus, those messages can always be recovered from the Trash in Mail or the ‘Recently Deleted’ section of Messages anyway.

If you don’t enable this when prompted, you can find the option later in Settings ⇾ Passwords ⇾ Password Options ⇾ Verification Codes.[17]

I was prompted to set this up after I signed into my GitHub account and had to paste a verification code.

Supporting Mail as a source for messages that contain verification codes is nice: the feature works just like it does with codes from Messages, and emails can also be automatically deleted once codes are autofilled. The problem is that, since I’m a Gmail user, those messages don’t arrive fast enough in Apple Mail; if I have to manually refresh my inbox in the Mail app and wait for an incoming message, I might as well copy the code myself. That said, if you’re not a Gmail user and your messages are delivered to Mail immediately thanks to push notifications, I imagine you’ll find this addition especially nice.

Siri

Apple’s assistant didn’t receive a large number of updates this year and, arguably, its conversational and generative skills still pale in comparison to ChatGPT. However, the features it did get in iOS 17 meaningfully improve the experience in ways I wasn’t anticipating back in June, and they’ve led me to use Siri more than ever this summer.

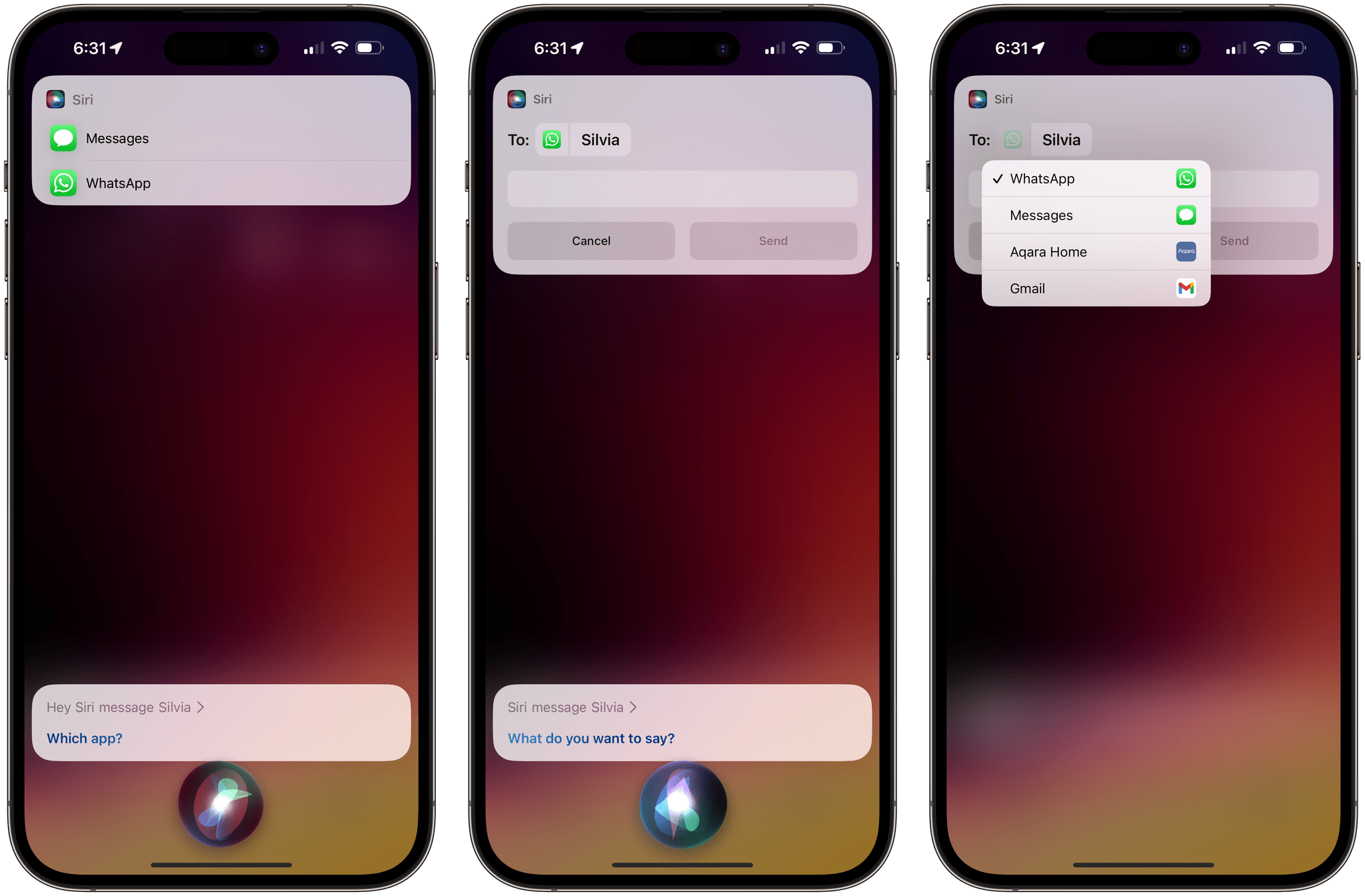

Let’s start with a small nice-to-have: Siri now lets you choose which app you want to use when texting or calling someone. The first time you ask Siri to “text/call [person]”, if that contact is available for calls or messages via other apps that integrate with SiriKit, you’ll be asked which app you want to use. Confirm your selection, and from then on, that app will be used as the default option for that contact.

Selecting a different messaging app for a contact via Siri. I was also prompted to pick an app for regular phone calls, but not as frequently as for messaging requests.

At any point during your conversations with Siri, you can change the app you’re using for texting someone, as shown in the screenshot above. Tap the icon of the app selected by Siri, and you’ll be able to switch to a different one.

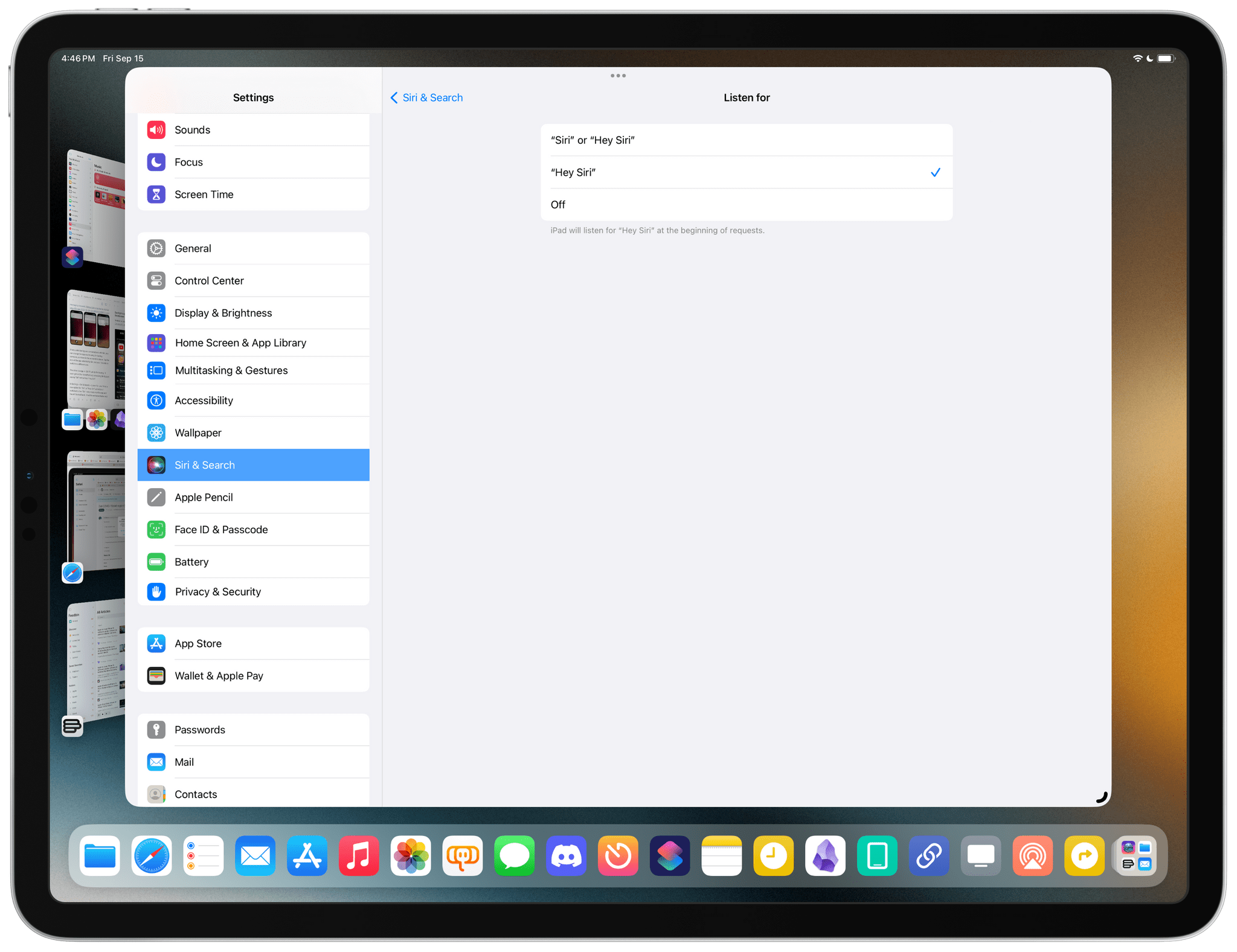

Another change in iOS 17 (which I’m hoping I’ll soon get on the HomePod too) is the ability to invoke Siri by just saying “Siri” rather than “Hey Siri”.

In Settings ⇾ Siri & Search ⇾ Listen For, you’ll find a new option for “Siri” or “Hey Siri” activation. I switched to the “Siri”-only mode months ago and haven’t looked back. I find the shorter phrase faster and more natural to utter than “Hey Siri”, and recognition on my iPhone 14 Pro Max has been reliable. The fewer words I have to use to interact with Siri, the better. You can still use the old “Hey Siri” method if you want to.

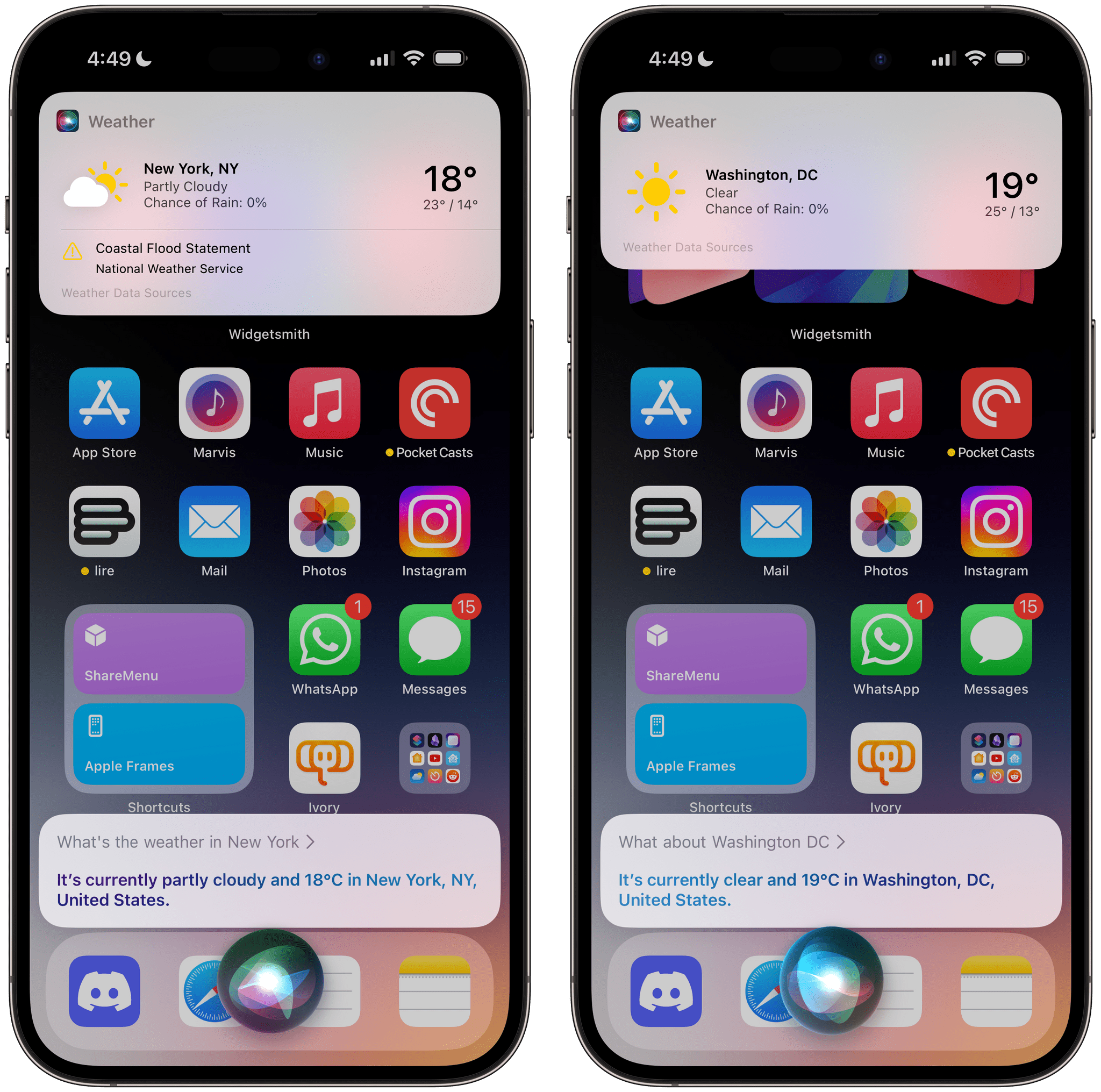

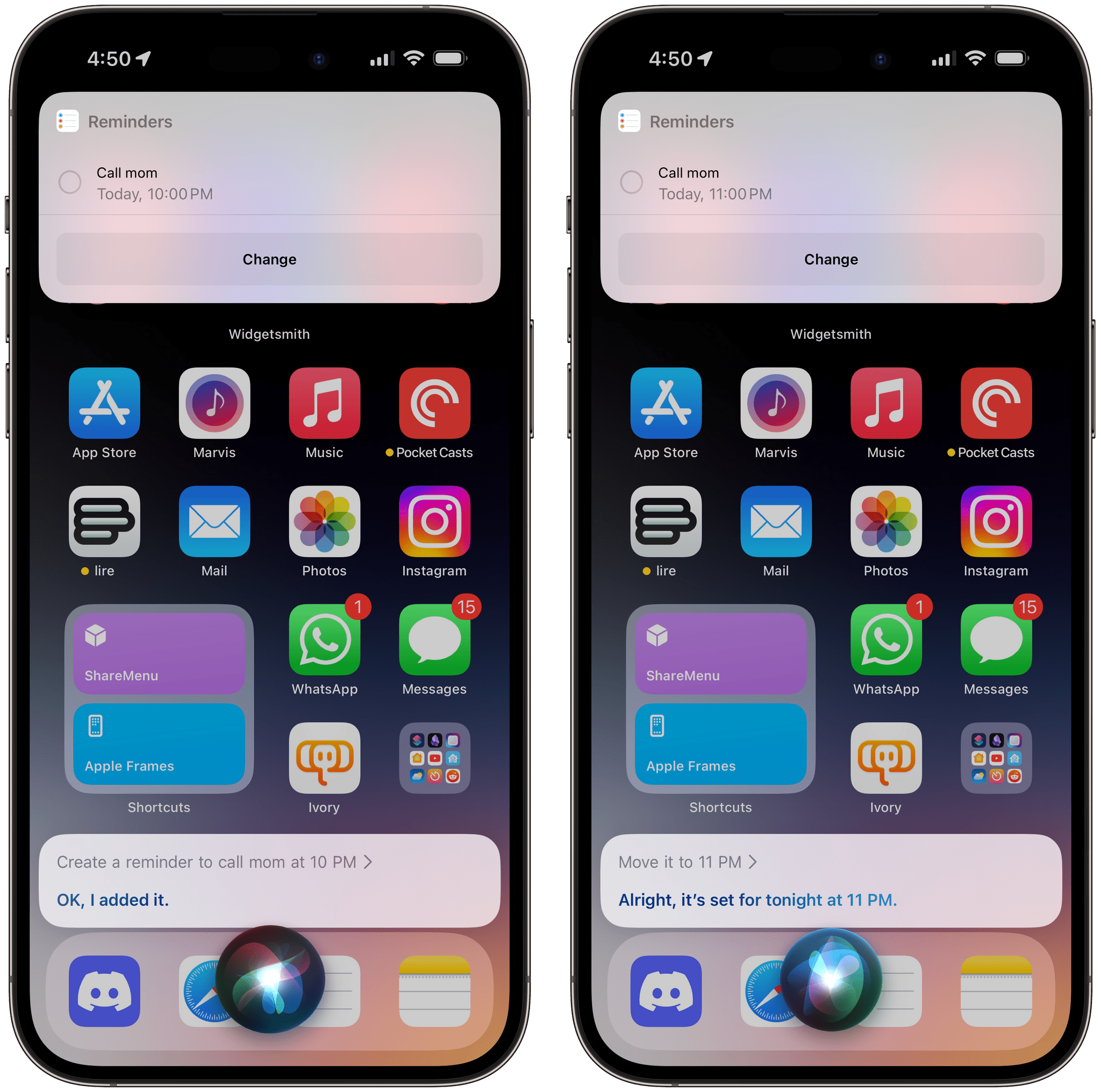

The best change in Siri for iOS 17, in my opinion, is the introduction of back-to-back requests that do not require saying the activation phrase over and over. Now, once you’ve completed a request with Siri, if you don’t exit the Siri UI manually on your device, the assistant will keep listening in the background for about 10 seconds. This way, you’ll be able to issue multiple requests in succession that retain the context of the ongoing conversation if necessary.

I’ve tested this feature extensively, and it works well – within the current limitations of Siri, that is. I can ask Siri to text my girlfriend, then immediately follow up with a request to create a reminder, then another to shuffle a playlist. These are three separate commands that work perfectly one after the other, do not need any more context, and no longer require multiple utterances of “Hey Siri”. I can even ask Siri to create a reminder for 10 PM, then follow up by saying “actually, move it to 11 PM”, and Siri will understand that request too.

Siri gets a lot of flak for often getting some questions hilariously wrong, and rightfully so. It’s undeniable that Siri lags behind the raw conversational capabilities of ChatGPT. But the thing is, I still find Siri useful – and improved compared to a few years ago – for the common things I request every day. Not everything needs to be a question about the meaning of life you ask ChatGPT.

I just want to ask Siri to water my plants, remind me to call my mom, shuffle The Maine, and turn off the lights. And for those common, practical use cases, Siri in iOS 17 is better than ever.

- [14] Landscape keyboard, of course. Who can even thumb-type on a 12.9" iPad Pro?

- [15] Another one: the new autocorrect suggestions do not work with swipe typing – I'm guessing because of the unpredictability of swipes.

- [16] I've noticed this myself, and I think it'll become a topic of conversation in our community soon enough: apps with custom text views that do not fully support the new autocorrect with proactive suggestions feel broken to me now. This is why I've started experimenting with the MWeb app as a native front-end for editing Markdown text in Obsidian. I'll have to write about this soon.

- [17] I love that Passwords, which I continue to think should be its own standalone app, is a Settings screen with its own settings screen now.