Everything Else

As is often the case with new versions of iOS, there are dozens of smaller features and details to be discovered in the nooks and crannies of the OS. iOS 16 is no different, so let’s take a look.

Device Compatibility

For the first time since iOS 13, which dropped support for the iPhone 5s and iPhone 6, Apple is changing the device compatibility matrix in iOS 16, which has higher requirements than last year’s software update.

On the “regular” iPhone side, the iPhone 6s and 7 lines are out, with the iPhone 8 becoming the minimum threshold to update to iOS 16 (with several features, of course, not available for older devices). On the SE side, iOS 16 also drops support for the first-generation iPhone SE and now requires a second-gen SE or later. Lastly, the discontinued, but never forgotten, iPod touch is also out and isn’t supported by iOS 16. If you kept one around because of the good memories, you won’t be able to put a custom Lock Screen on it.

Dictation

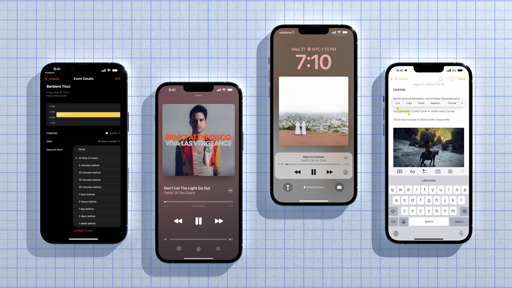

iOS 16 comes with a greatly improved dictation feature that lets you alternate between using voice input and the keyboard in a single, fluid flow that feels much better than dictation in iOS 15.

As you can see from the screenshot above, when tapping the microphone button underneath the keyboard, the dictation UI no longer takes over it. That’s because dictation and keyboard input can now coexist when you’re typing something. The microphone icon simply gets highlighted to indicate that your device is listening, but then you can go ahead and mix and match dictating and typing text together as you please. This new way of dictating text and switching to typing specific words or names makes so much more sense than before; it’s one of those things that will instantly make you wonder how we went so long without it. Of course it’s supposed to work like this.

It’s not just about having the keyboard (and related QuickType suggestions) available when dictating, though. iOS 16’s dictation takes care of automatically inserting punctuation such as commas, periods, and question marks as you dictate. While the feature isn’t 100% accurate, it gets the job done in most scenarios and it has saved me a lot of time when I was dictating text messages. In my tests, the dictation engine did a good job understanding pauses in speech where commas need to be placed, and it also seems to understand the intonation of a question, putting a question mark at the end.

Dictation now supports entering emoji by name, and that has worked reasonably well for me too. Being able to quickly dictate something like “hey, what should I buy for dinner? – smile emoji” while driving and have it transcribed with the proper formatting is nice and deserves a finally from us. I’ve never been a big fan of dictation because of how much it felt like a separate, and more limited, “mode” of the keyboard. I think the changes in iOS 16 are going to make people like me use it a lot more.

Control Center

First introduced in iOS 14, the Privacy menu in Control Center is growing in iOS 16 with the ability to expand it and see a full list of all the apps that recently accessed your location, camera, and microphone.

When you swipe down from the status bar to show Control Center on your iPhone, you’ll see an updated privacy indicator with the name of the app that recently accessed one of the system’s sensitive data types. There can now be multiple apps mentioned in this text label, and there’s a button that indicates you can press the label to navigate into a detail view. This new page is a complete breakdown of all the apps that recently used your location, camera, and microphone. This is a good way to get a detailed overview of what’s accessing what on your device; I just wish it was also possible to tap on the items listed in this screen to open their associated Privacy settings page.

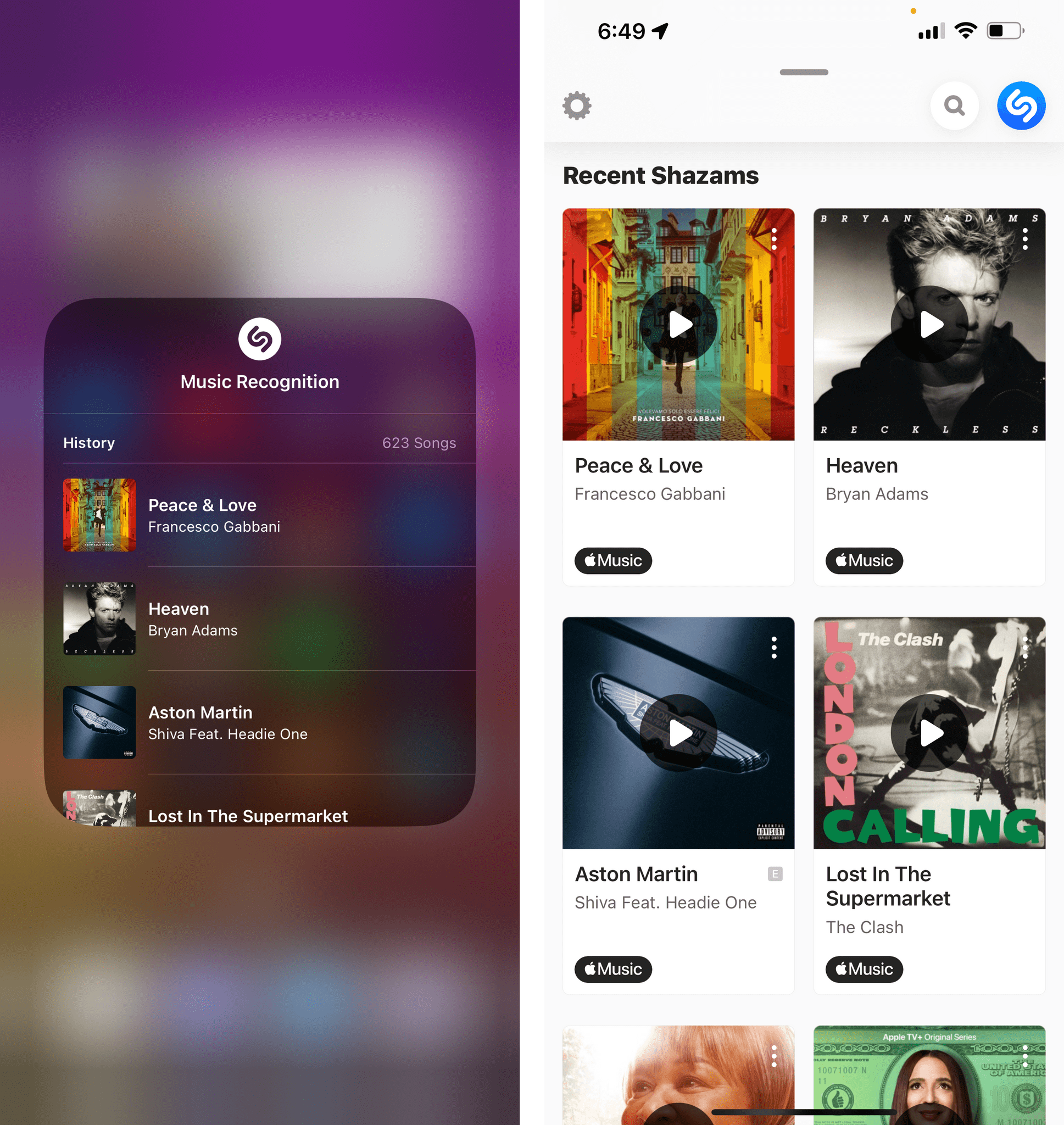

Also in Control Center for iOS 16, there’s another finally for me in the form of the improved music recognition toggle based on Shazam. At long last, songs recognized from Control Center will be automatically synced with the Shazam app.

I long resisted the urge to use the faster, and more accessible, Shazam button from Control Center because it didn’t sync with my history in the app and the Shazam playlist in Apple Music. That’s no longer the case. Even more impressive: because of this change, you can now see your entire history of Shazam tracks inside Control Center by long-pressing the icon and scrolling the list of songs.

Screenshots

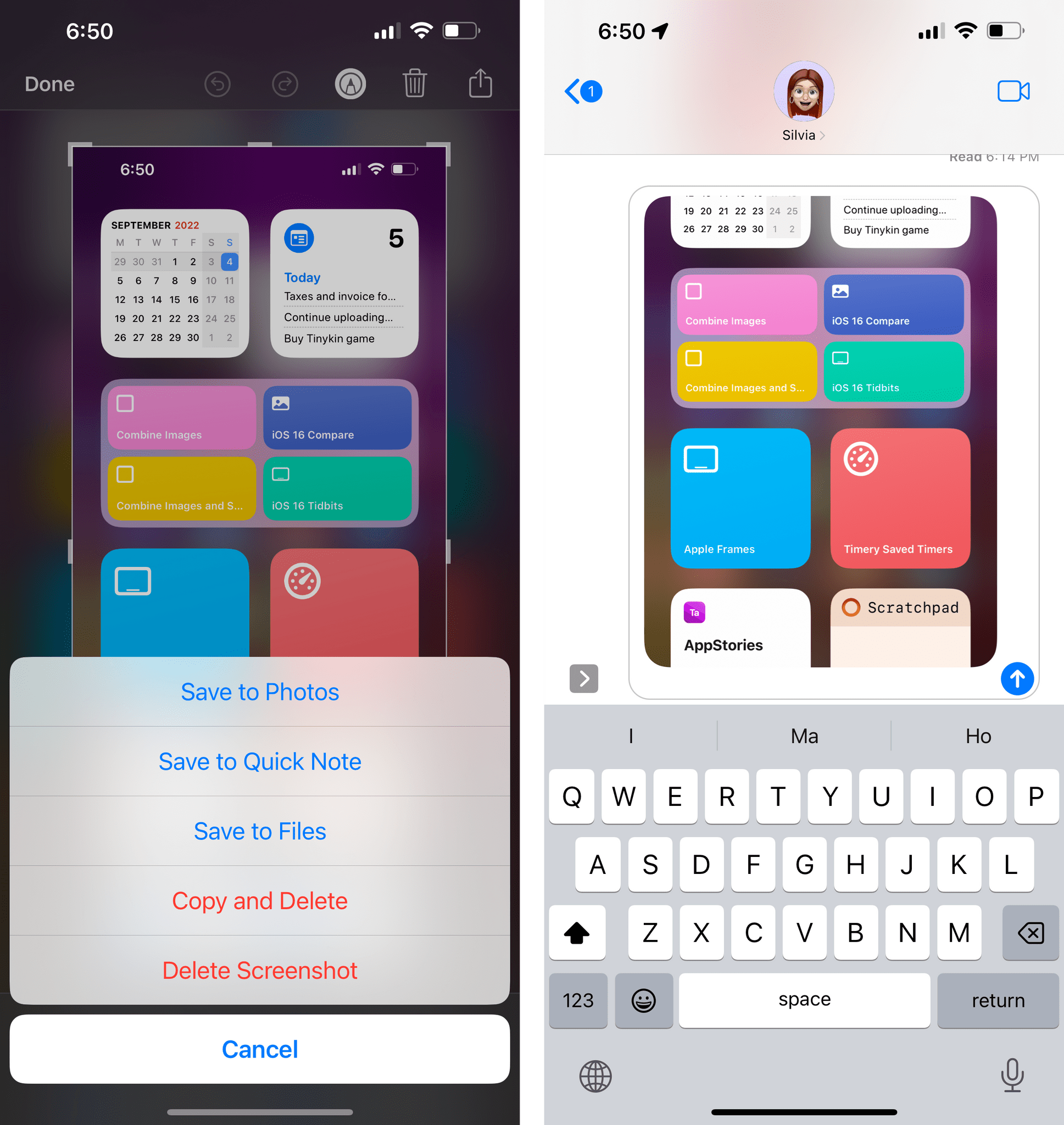

There are two new buttons available when tapping the ‘Done’ button in the screenshot markup UI. One of them is a genius addition for which the engineer/designer who pitched it deserves an immediate raise and promotion. The other is just fine.

First, you can add a screenshot to a quick note. This is nice, but since iOS 16’s Quick Note is limited to creating notes and doesn’t support appending to existing quick notes, it’s not as useful as it could have been.

The real stroke of genius is the new ‘Copy and Delete’ button. It does exactly what it says: it’ll copy the screenshot to your system clipboard and delete it, without saving it to the Photos app at all. I love this feature because it feels like someone at Apple looked directly into how I use my iPhone and created a feature to save me a lot of time.[17]

I do this all the time: I take a screenshot, crop or annotate something, save it to Photos, go to the Photos app, copy it, delete it, then open iMessage or Twitter, and paste it. I don’t want to keep random screenshots in my library forever and pasting images is usually faster and easier than choosing them from a photo picker. With this addition to the screenshot markup tool, Apple has cut all those steps down to a single button, which I’ve already used hundreds of times. Whoever thought of this:

Thank you. Ask for that raise.

Spotlight

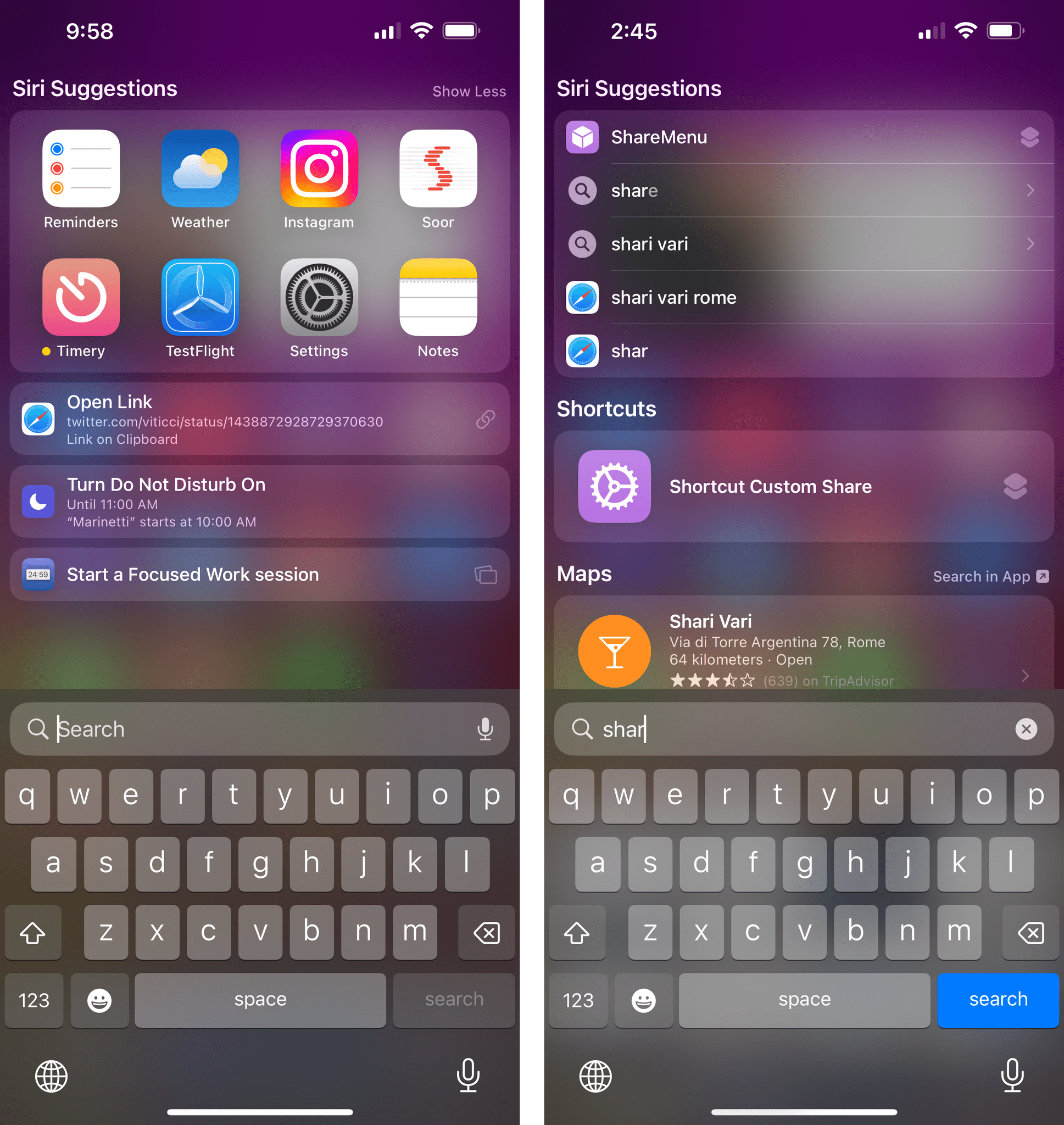

The first thing you’ll notice about Spotlight in iOS 16 is that there’s a new way to get to it: a Search button at the bottom of the Home Screen. The button expands into the Spotlight search box with a neat animation when tapped.

If you don’t like this button and would rather have the page indicators back, you can disable it in Settings ⇾ Home Screen ⇾ Search. You can, of course, still swipe down on the Home Screen to open Spotlight.

In terms of new features for Spotlight, there are some, but they’re not groundbreaking. Running shortcuts is better than before, but that’s because shortcuts are faster to run in iOS 16, period. There are suggestions for recent searches and new kinds of Siri suggestions, but those haven’t been that useful in my experience.

For the most part, I’ve noticed that Spotlight offers to turn on Do Not Disturb if there’s an upcoming calendar event and that’s about it. Spotlight can now find image results (including Live Text matches) for images stored in Messages, Notes, and Files, which is cool, and it should show more information for sports leagues and teams which is…not my forte, exactly.

Shared with You

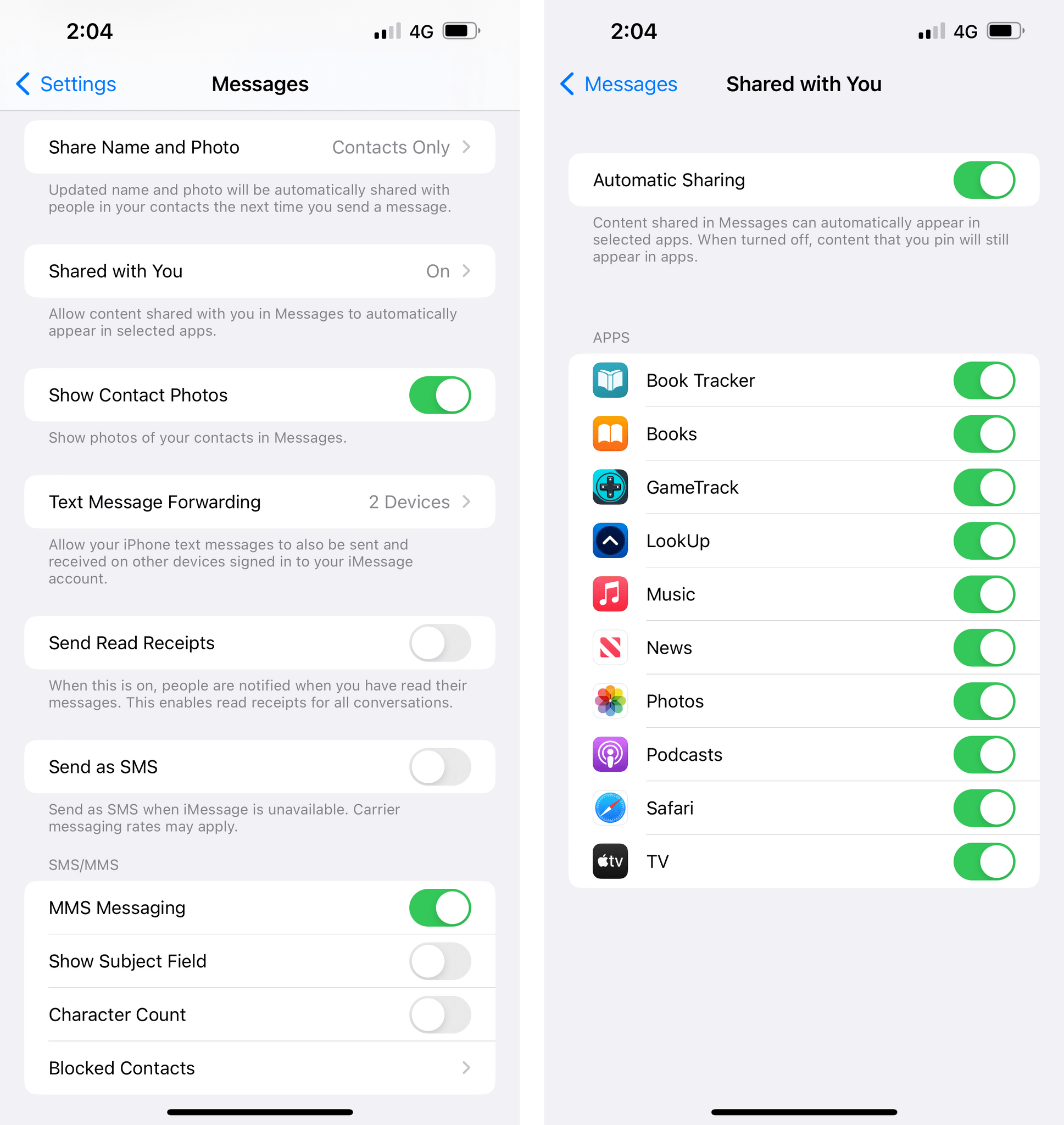

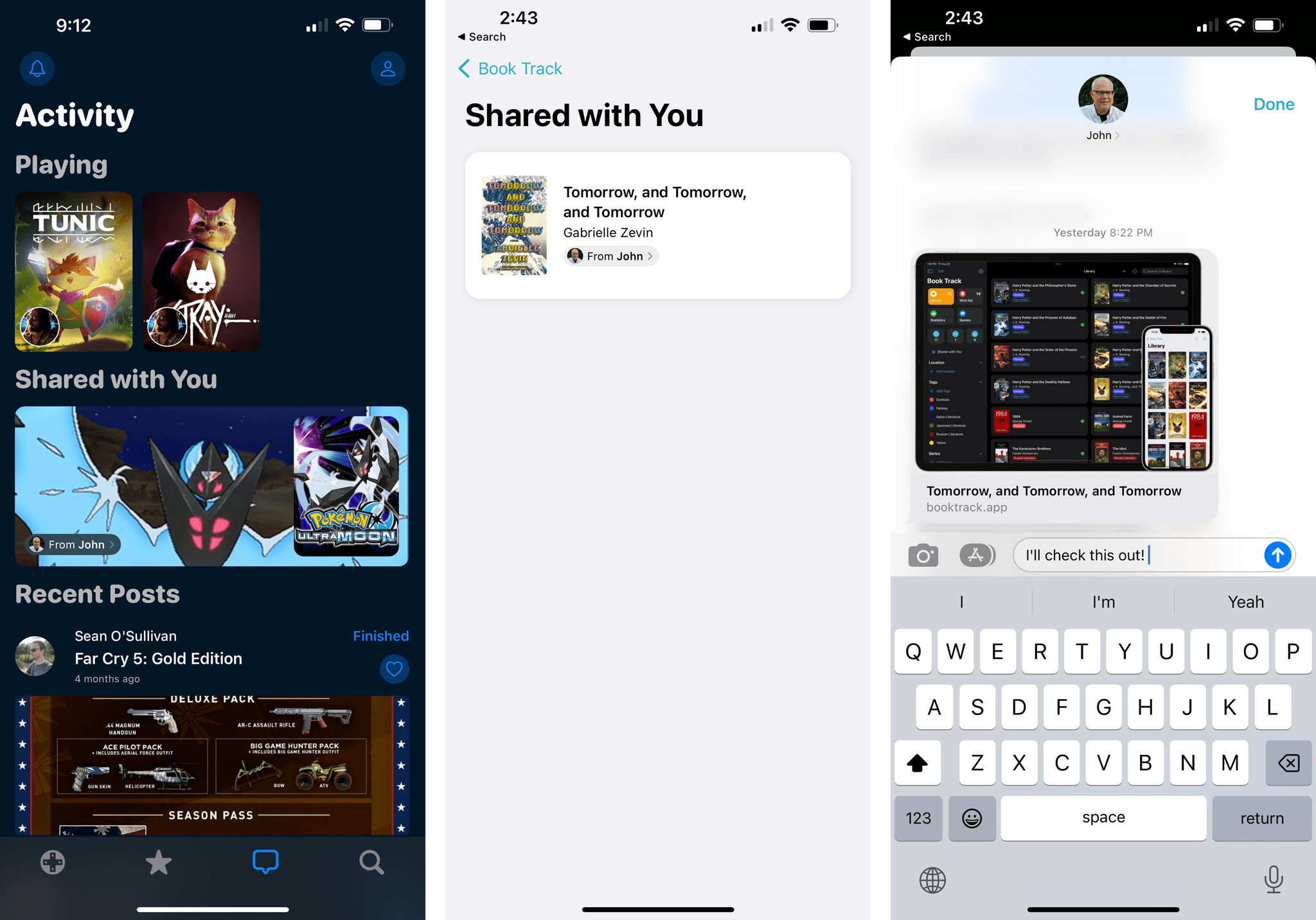

One of the additions to iMessage from last year, the Shared with You system, is growing in iOS 16 with a developer API that will allow third-party apps to piggyback on iMessage with a sharing feature that is native, more contextual, and ideal for Apple users.

In case you missed my coverage of Shared with You and how its integration between apps and iMessage conversations works, you can find it here, and all the details apply to how the feature works for third-party apps in iOS 16.

When you share an item from an app compatible with Shared with You with someone else on iMessage, the app will generate a Universal Link. That link is a form of attribution that tells the system a specific app is responsible for generating certain types of links. In compatible apps, developers can then create dedicated “shelves” for Shared with You items and aggregate all links that were, well, sent to you. Developers are free to customize the UI they want to use, but one consistent element they’ll always have to use is the ‘attribution pill’ – the button that tells you who shared an item with you, which you can tap to reopen the iMessage conversation exactly at the point where the item was shared. It’s just like how it works in apps like Safari and Music, but in third-party apps too.

Shared with You shelves in GameTrack (left) and Book Track. When you reply to an item in Shared with You (right) the message will be a direct reply to the original one.

Opening up Shared with You to third-party developers so soon is a smart decision: it’s another way for Apple to further increase the iMessage lock-in in their ecosystem; and it incentivizes developers to adopt the feature so they can have a modern sharing system without having to do much work themselves. Early examples of apps I tried with Shared with You integration include GameTrack and Book Track. I expect all kinds of apps that deal with media and read-later services to adopt Shared with You in the near future.

Siri

While not revolutionary, there are some nice quality-of-life improvements to Siri in iOS 16.

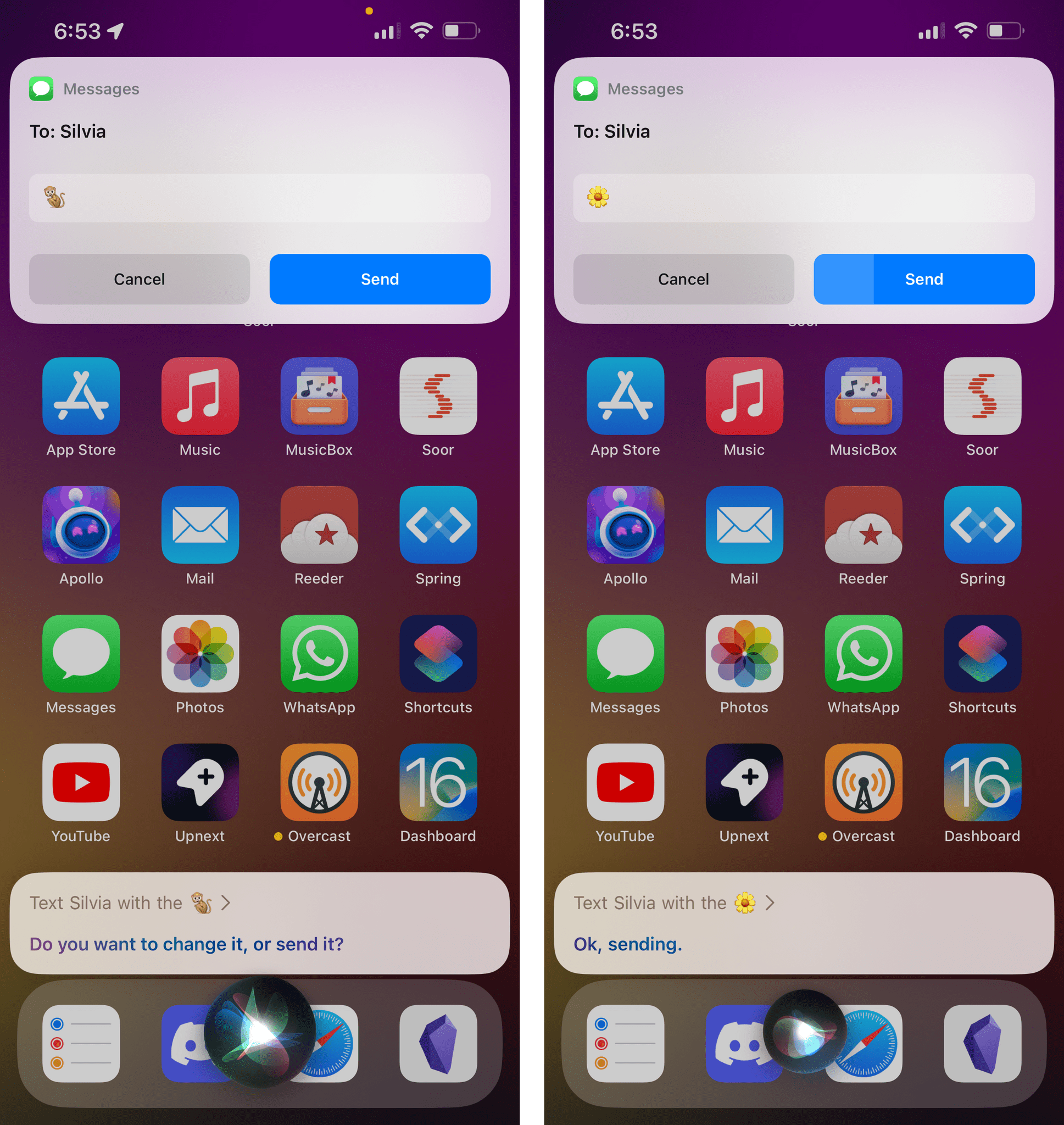

Just like with dictation, you can now dictate emoji names and Siri will transcribe them to the proper character. This is especially nice when combined with the new option to skip the confirmation step when sending an iMessage, which you can enable in Settings ⇾ Siri & Search ⇾ Automatically Send Messages. Now when you have that urge to send a particular emoji to a friend or loved one, you can just say ‘Hey Siri, text Joe with monkey emoji’ and Siri will assemble the message and prepare to send it without you having to confirm anything else.

If you pay attention, you’ll see that the Send button is actually a progress bar in this case, so you have a few seconds to cancel sending the message if you’ve changed your mind about the vibe you’re giving off with your text.

Also new in iOS 16, you can hang up Phone and FaceTime calls by saying “Hey Siri, hang up”. Now, I don’t know why I’d personally ever want to do this without sounding like a jerk, but I know that there are important Accessibility-related reasons for Apple to add this option. Speaking of Accessibility: in Settings ⇾ Accessibility ⇾ Siri Pause Time, you can now adjust how long Siri waits for you to finish speaking before responding to a request. This is a useful option for stutterers or people with other speech impediments or simply folks who like to talk to Siri carefully and deliberately.

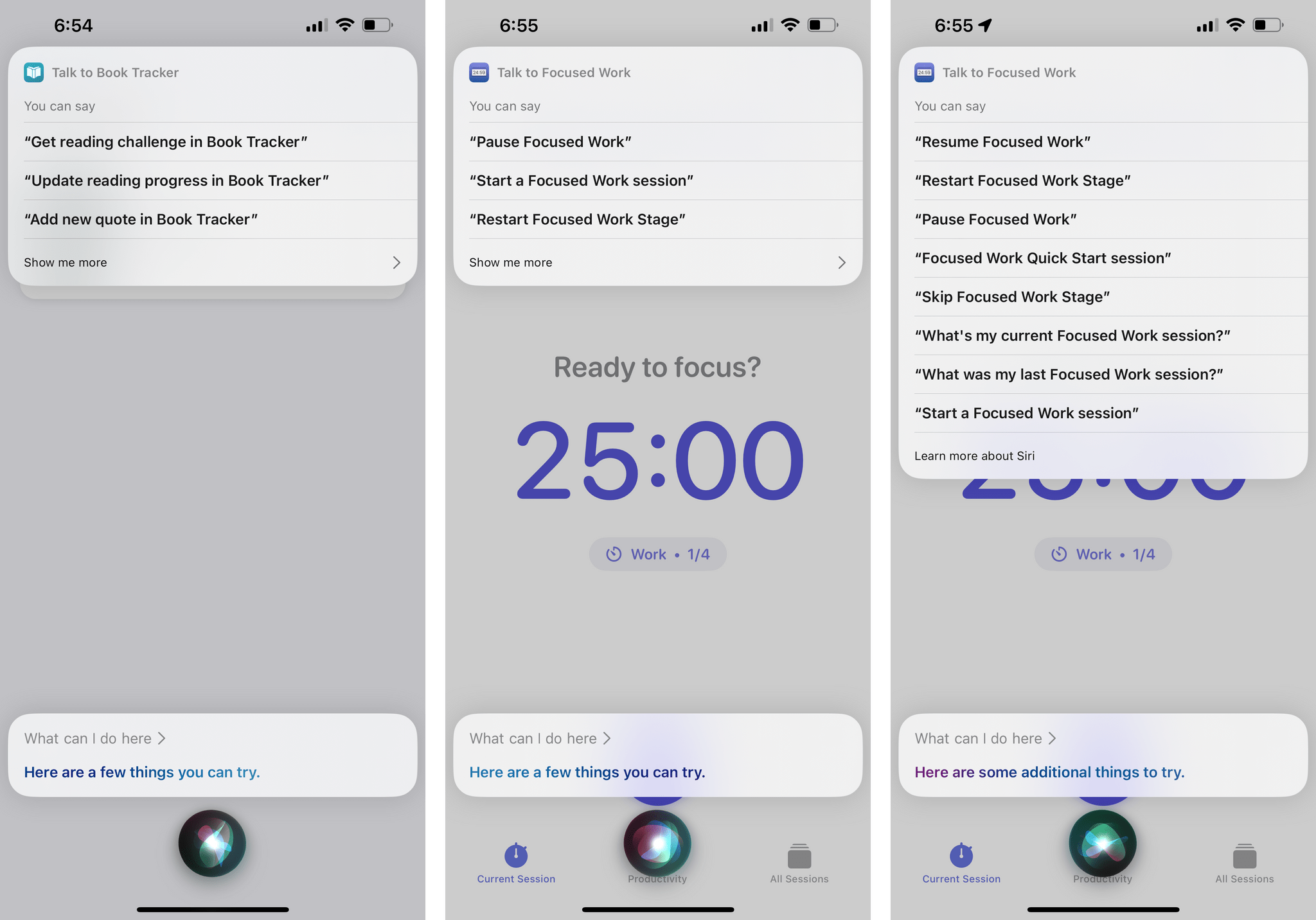

Offline Siri has been expanded to support HomeKit, the Intercom feature, and voicemail. Lastly, if you want to discover the Siri commands or App Shortcuts supported by apps, you can now ask Siri “what can I do here?” when inside a specific app. Doing so will show you a special Siri notification with a list of commands and App Shortcuts you can try.

[17] Now that I think about it, could this be the kind of enhancement that Apple created by observing user patterns aggregated in logs collected from millions of iPhones worldwide? How much of "innovation" is actually the result of parsing logs, understanding what people do with their computers, and optimizing for that?