Messages

The app that plays the biggest role in Apple’s platform lock-in has received two substantial new features in iOS 16 that are playing catch-up to Meta’s WhatsApp and other modern messaging apps, plus some collaboration enhancements that build atop SharePlay, which we’ll explore more in depth with an iPad angle next month.

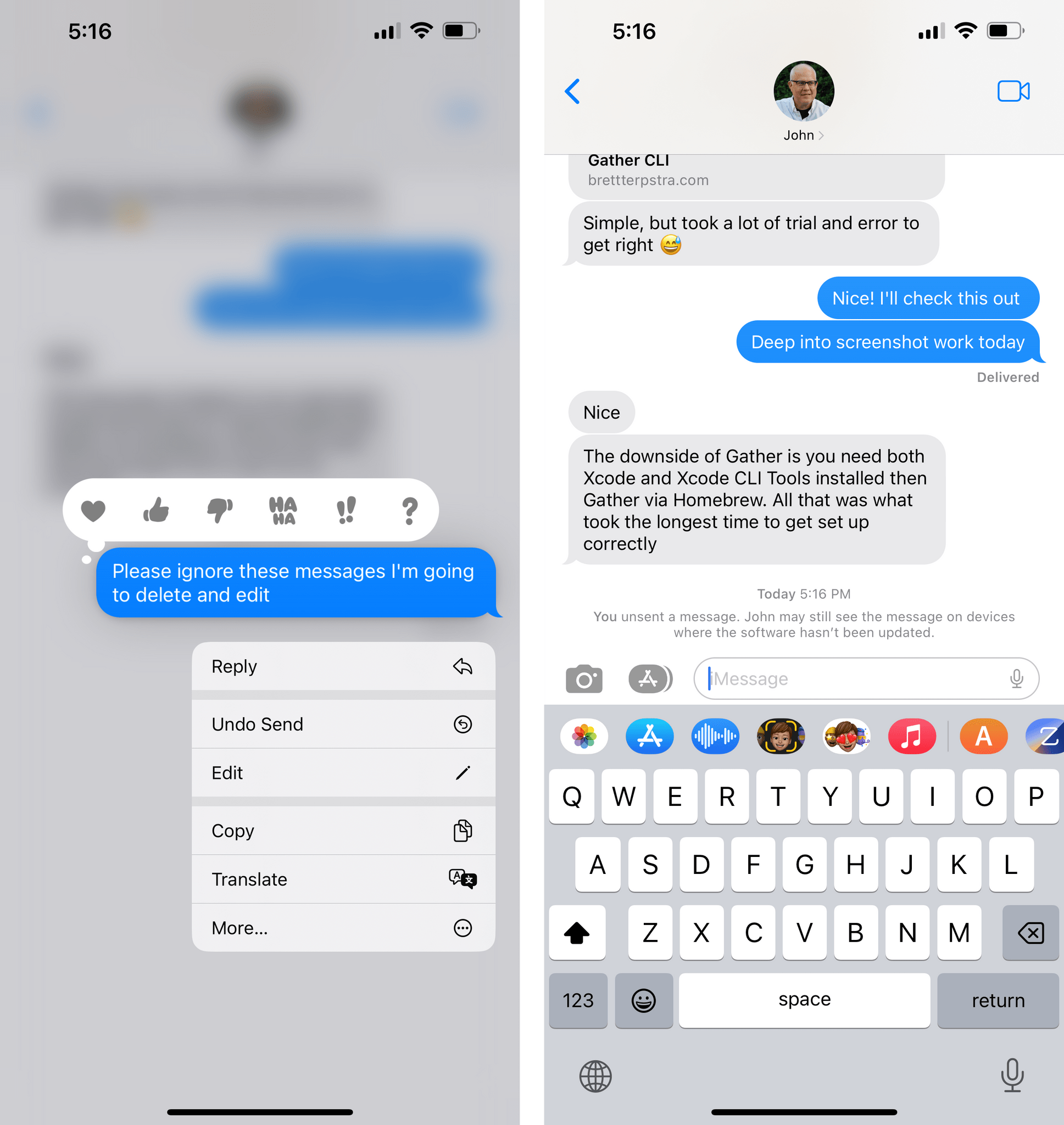

At long last, the Messages app for iOS 16 lets you edit messages and outright un-send them by deleting them. The ability to delete a previously sent message isn’t new in this space: WhatsApp has had this option for years. In iMessage, you can retract a sent message within two minutes after sending it; you can do so by long-pressing a message and selecting the new ‘Undo Send’ button, if available. Amusingly, doing so will burst the message’s bubble in a nice, whimsical touch that feels uniquely Apple.

Deleting an iMessage in iOS 16.

On the recipient’s end, similar to other messaging services, the other person will know that you deleted a message. If they are on older versions of iOS, they may still see the deleted text, and Apple tells you this with an informative notice in the Messages app. As more people update to iOS 16, this message will likely go away. Personally, I’m not the type of person who deletes messages – if you send a message, own it – but since so many other people do, I’m glad Apple has adopted what is an industry standard at this point.

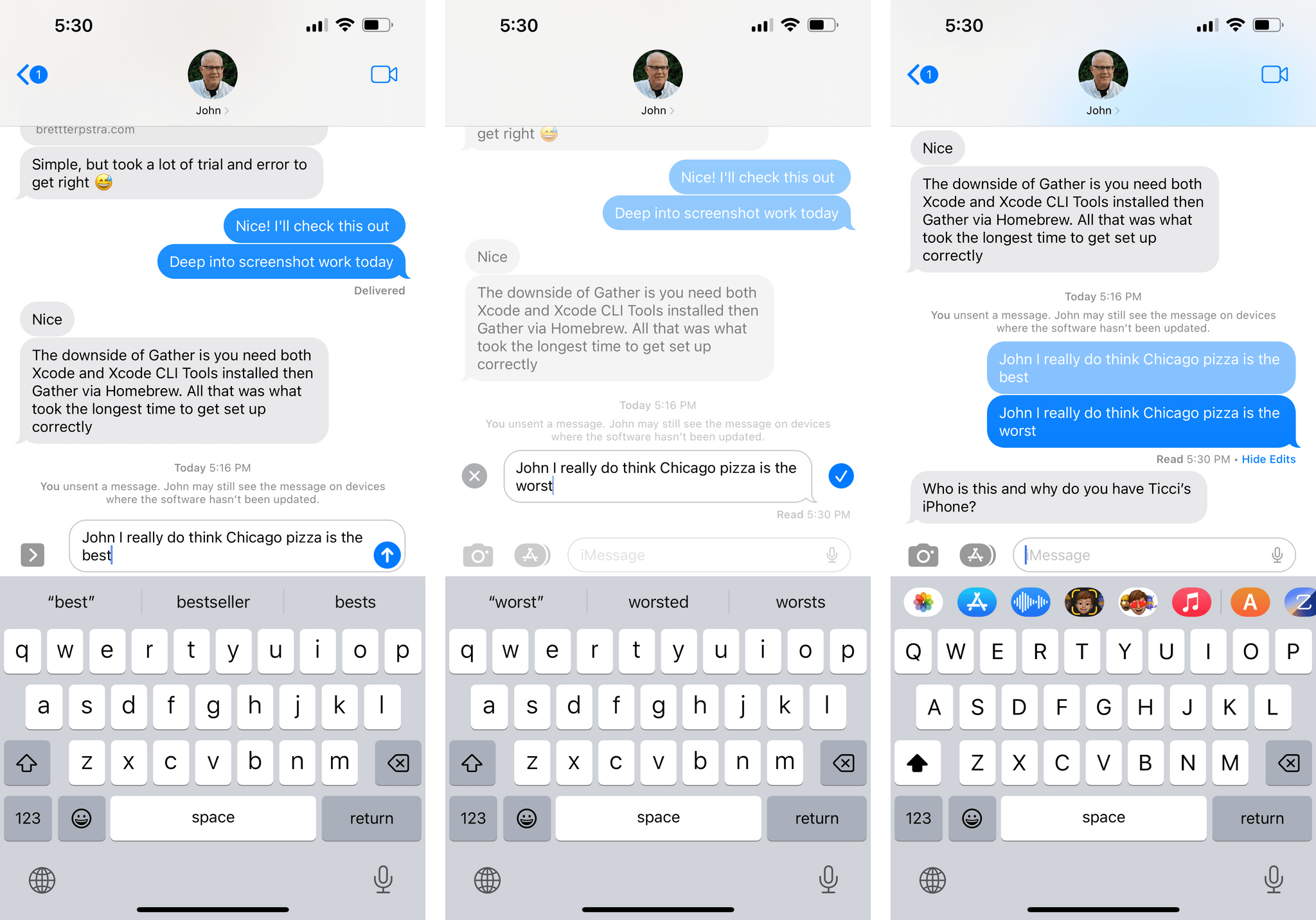

Editing a message is the more interesting option in my opinion. You can edit a message within 15 minutes of sending it, and you can perform up to five edits to a given message. In a notable move, the recipient will be able to see that you edited a message (of course) alongside a full record of all edits made to the message.

Essentially, Apple has created the Edit button we’ve always wanted from Twitter, and they nailed it on their first try. It didn’t even take them five different CEOs to do it.

You can edit a message by long-pressing it and selecting the new Edit option, which will kick you back into the compose field with the text already filled in, so you can make your edits. In the chat transcript, you’ll see an ‘Edited’ button underneath messages that have been edited; tap it, and you’ll expand the message to show all previous versions of it.

What Apple has done with its editing feature may seem trivial, but it’s not. The decision to limit edits by amount and time, as well as allowing recipients to view edits, shows that the company has thought about the potential scenario where some folks may send abusive messages, then edit them, hoping to get away with something they previously said. iMessage in iOS 16 won’t let them do that, and it’s the right call.
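To make those limits concrete, here is a rough Python sketch of the rules as described above: a two-minute window to undo a send, a 15-minute window to edit, a cap of five edits, and a visible record of earlier versions. The `Message` class and its method names are hypothetical and only model the behavior; this is not how Apple implements iMessage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Limits taken from the text; the rest of this model is a hypothetical illustration.
UNDO_SEND_WINDOW = timedelta(minutes=2)
EDIT_WINDOW = timedelta(minutes=15)
MAX_EDITS = 5


@dataclass
class Message:
    text: str
    sent_at: datetime
    history: list[str] = field(default_factory=list)  # previous versions, oldest first
    unsent: bool = False

    def can_undo_send(self, now: datetime) -> bool:
        # A message can be retracted only shortly after it was sent.
        return not self.unsent and now - self.sent_at <= UNDO_SEND_WINDOW

    def can_edit(self, now: datetime) -> bool:
        # Edits are limited both by elapsed time and by how many were already made.
        return (
            not self.unsent
            and now - self.sent_at <= EDIT_WINDOW
            and len(self.history) < MAX_EDITS
        )

    def edit(self, new_text: str, now: datetime) -> bool:
        """Apply an edit if allowed, keeping the old version in the history."""
        if not self.can_edit(now):
            return False
        self.history.append(self.text)
        self.text = new_text
        return True

    def undo_send(self, now: datetime) -> bool:
        """Retract the message if the undo window is still open."""
        if not self.can_undo_send(now):
            return False
        self.unsent = True
        return True
```

Because every edit appends the old text to `history` rather than overwriting it, the recipient-facing record survives no matter how many times the sender rewrites the message, which is the property that makes the feature hard to abuse.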

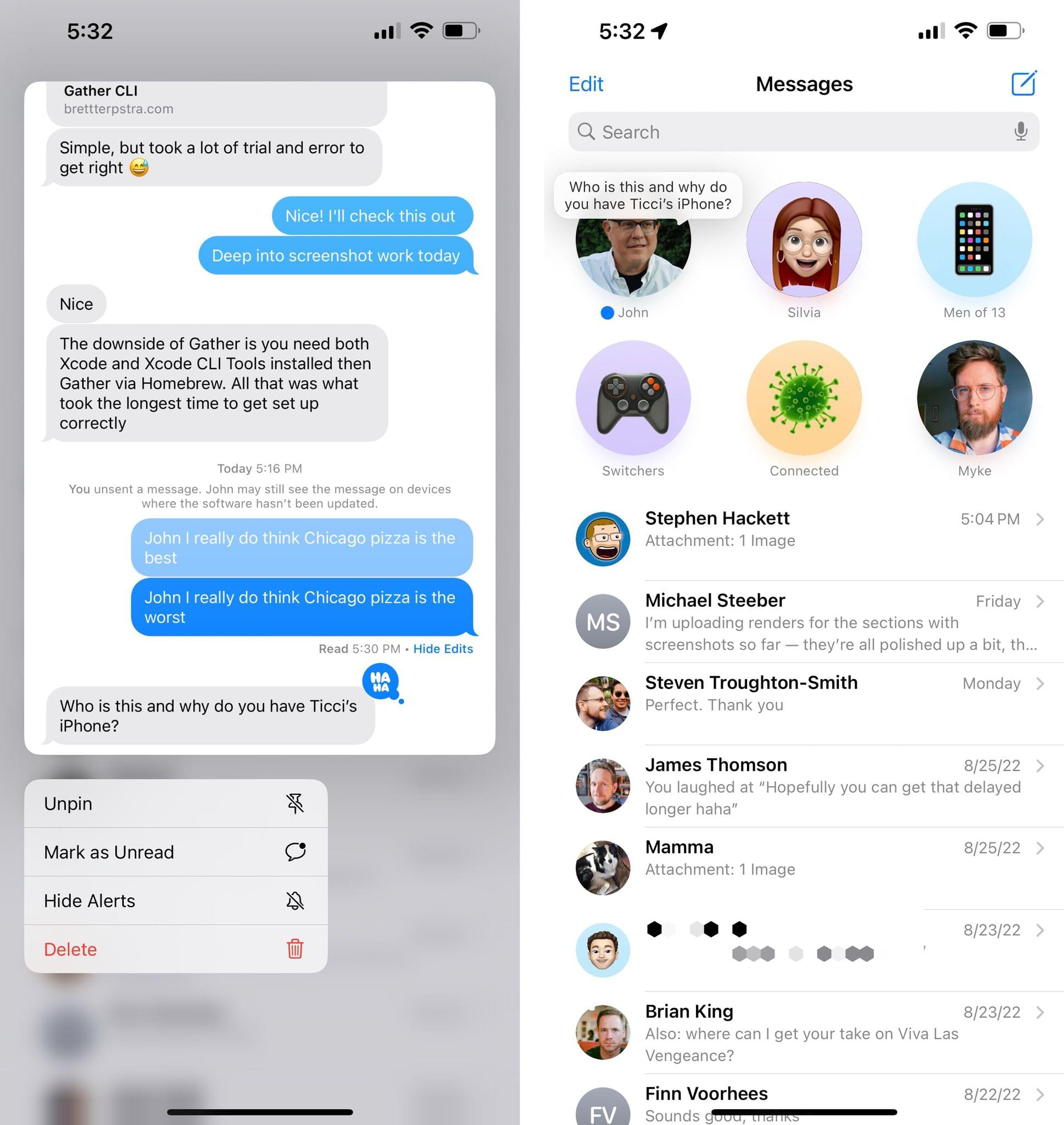

There are some other enhancements to the Messages app worth mentioning. Those of you who use Messages as an inbox may be happy to hear you can now mark messages as unread. So now, even if you did read a message, you can be a terrible friend, mark it as unread, and reply on your own time. I…shall we say, dislike it when others do that to me, but, hey, you do you.

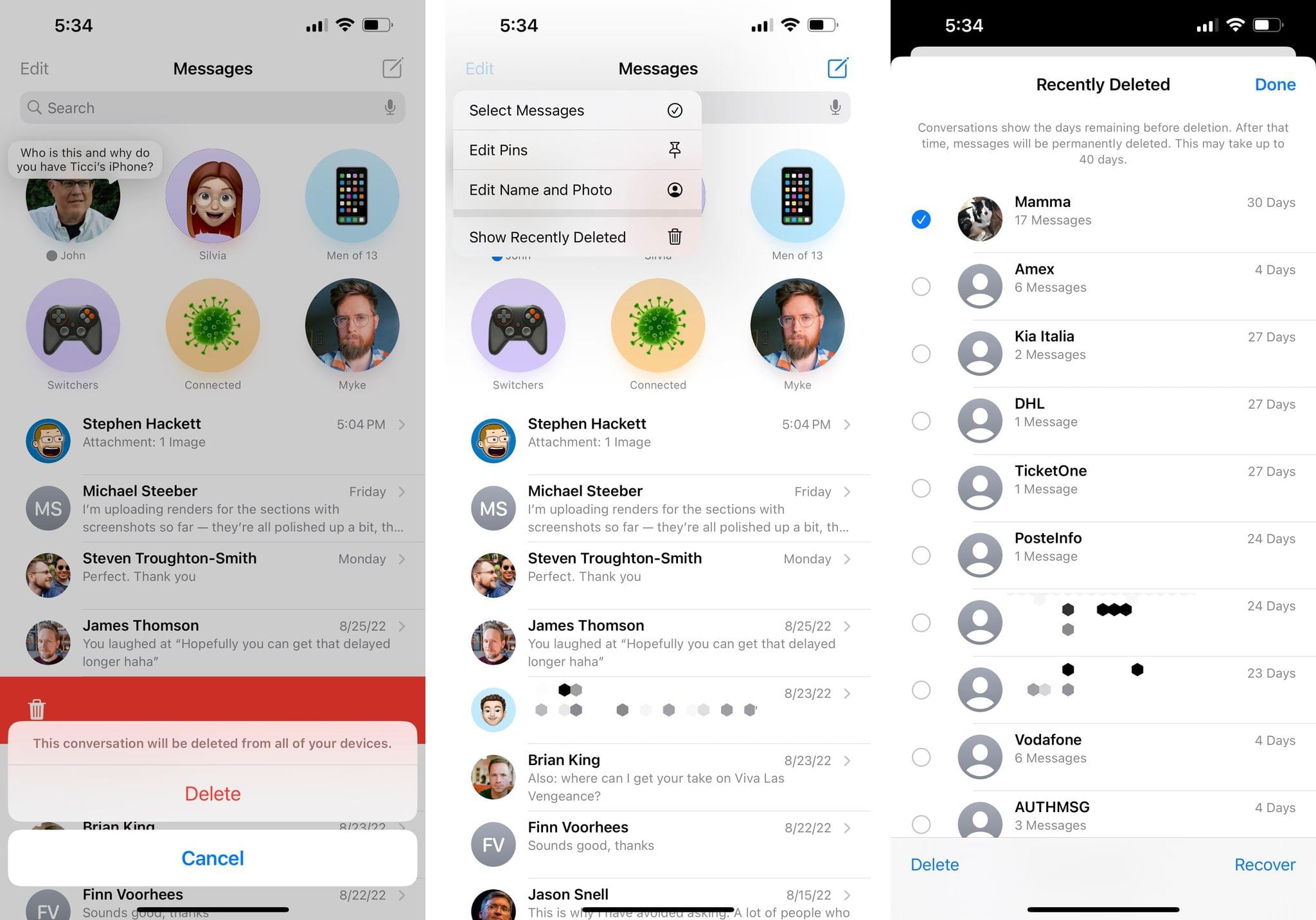

Also new in iOS 16: you can recover previously deleted conversations for up to 30 days, just like Files and Notes. This feature is somewhat hidden though. To access it, tap the ‘Edit’ button in the main Messages view (I know: why is it under an ‘Edit’ menu?) and select ‘Show Recently Deleted’. This will take you into your iMessage trash, where you can find deleted messages and recover them.

Lastly, there are some notable improvements to the collaboration features in Messages, but we’ll explore these in the context of iPadOS with a standalone story next month. For instance, SharePlay now works with iMessage, and Messages has also been integrated with productivity apps such as Notes and Pages for collaborative features. But that’s a topic for another time.

Music

There are many things Apple doesn’t get right about the Music app and Apple Music’s integration with iOS. When I say “many”, I mean we had to do an entire episode of AppStories about our ideas to fix the Music app.

Unfortunately, iOS 16 doesn’t take any meaningful steps toward improving the Music app in what has to be the smallest update to the app in an annual iOS release to date.

There are a total of two new features in the Music app for iOS I can cover this year. The first one is the ability to drag a song from an album or playlist into your queue. Simply hold down the song, drag it onto the mini player, wait a second for the queue to open, and drop it wherever you want. It’s a nice addition.

Dragging songs into the queue.

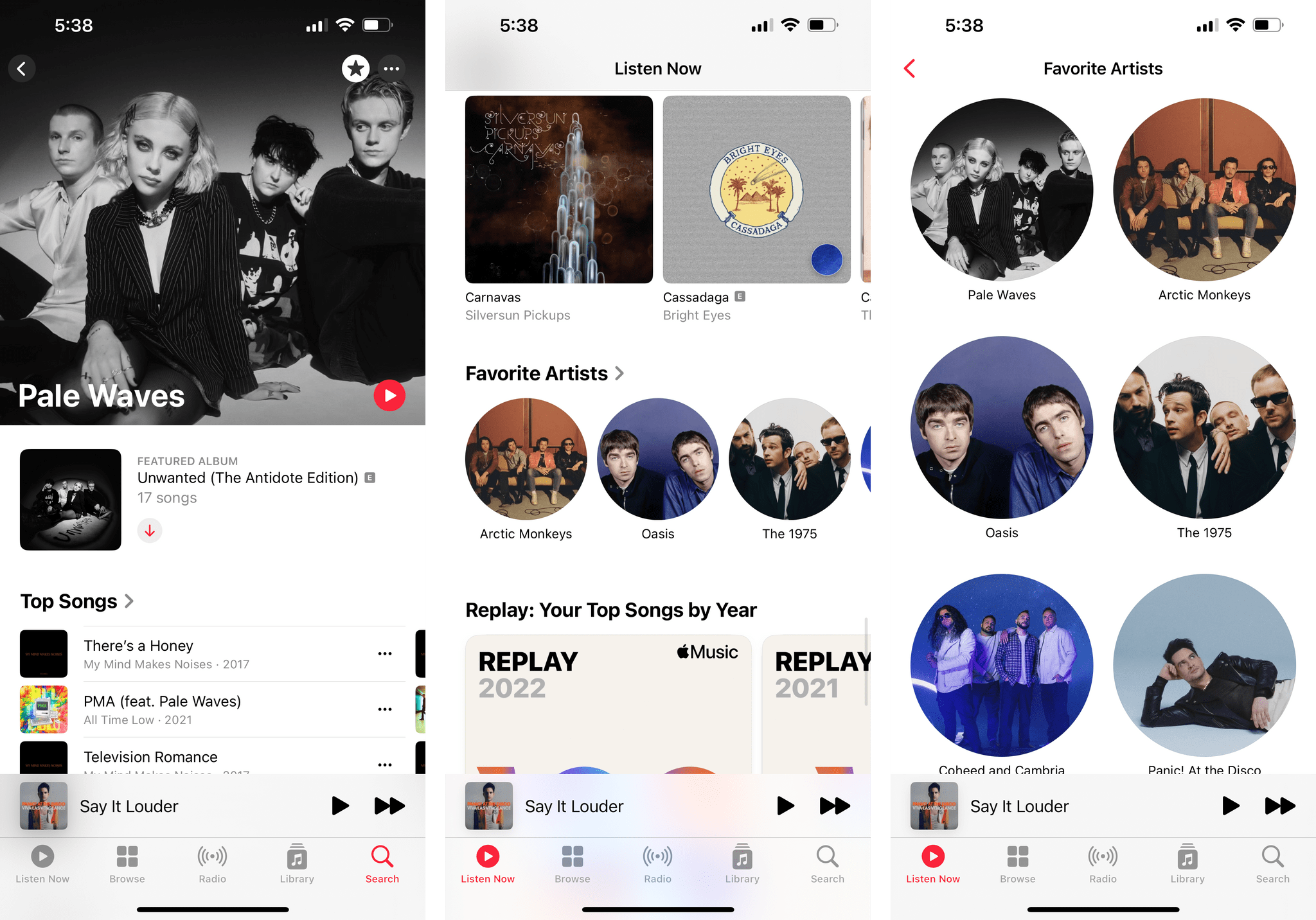

The second feature is the addition of a star button to mark an artist as favorite. It’s not entirely clear to me right now what adding an artist to your favorites is supposed to accomplish. For sure, what I do know is that you can find a list of all your favorite artists buried somewhere in the Listen Now page, which continues to be in dire need of a redesign aimed at increasing its information density.

Beyond that, though, what does favoriting artists achieve? It doesn’t guarantee push notifications for all their new releases, and when notifications do arrive, they’re not as fast as checking MusicHarbor. It doesn’t create any new kind of automatic mix based on your favorite artists, which is a missed opportunity. So what does it do? Does it invisibly train the algorithm behind the scenes? Does it tell Apple anything valuable about my music taste?

But more importantly: it’s 2022, why should I have to manually mark an artist as favorite when the service should understand that about me on its own?

Notes

Notes for iPhone is another example of an app that received quality-of-life improvements and miscellaneous enhancements after the rollout of bigger changes last year.

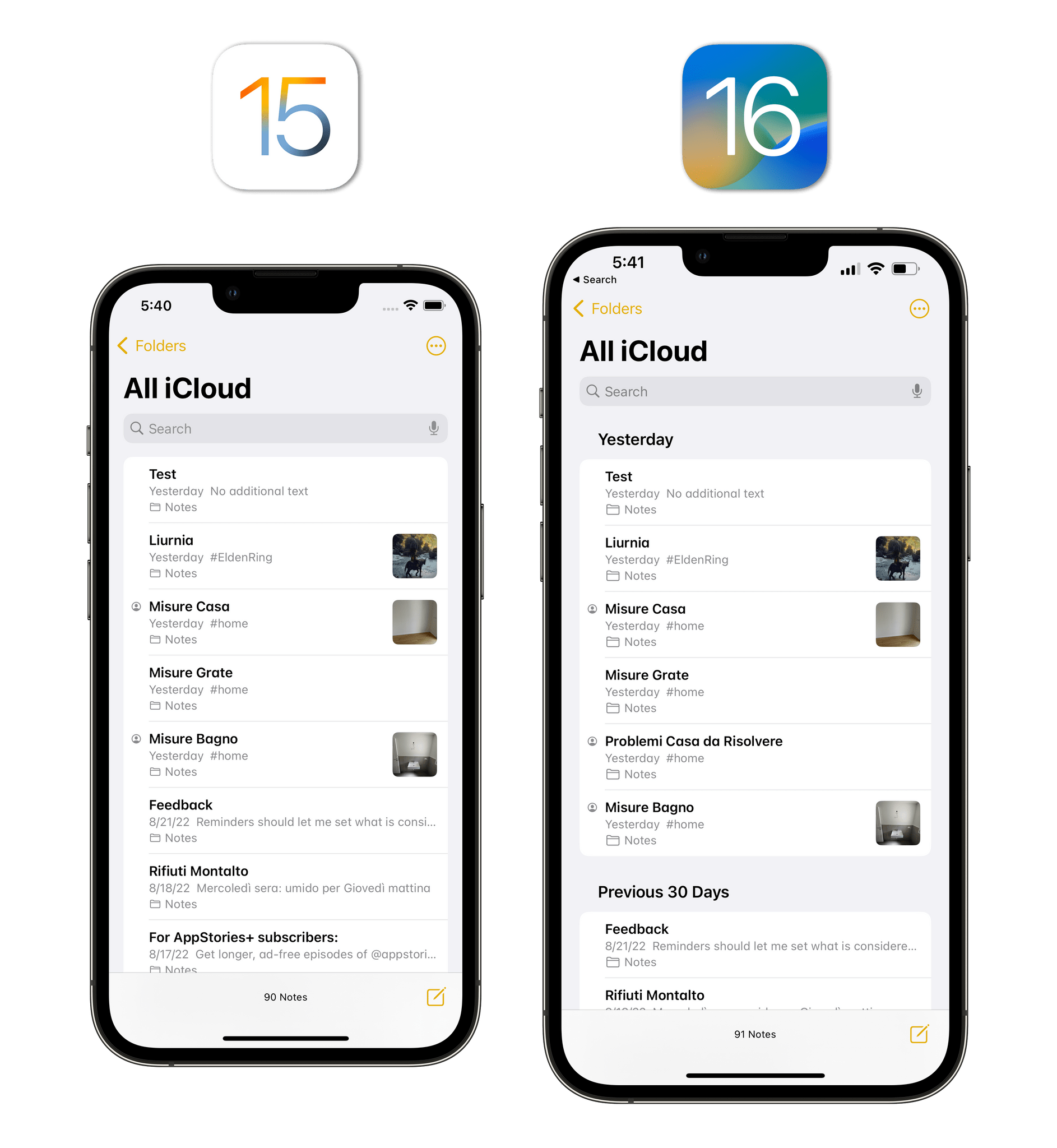

By default, the Notes app now groups notes by date. New grouping and sorting options are available via a menu from the upper-right corner that replaces the custom action sheet Apple was using before, which looks much better. You can disable the grouping if you want, but, personally, I like how it breaks down my library by date, allowing me to find related notes more easily.

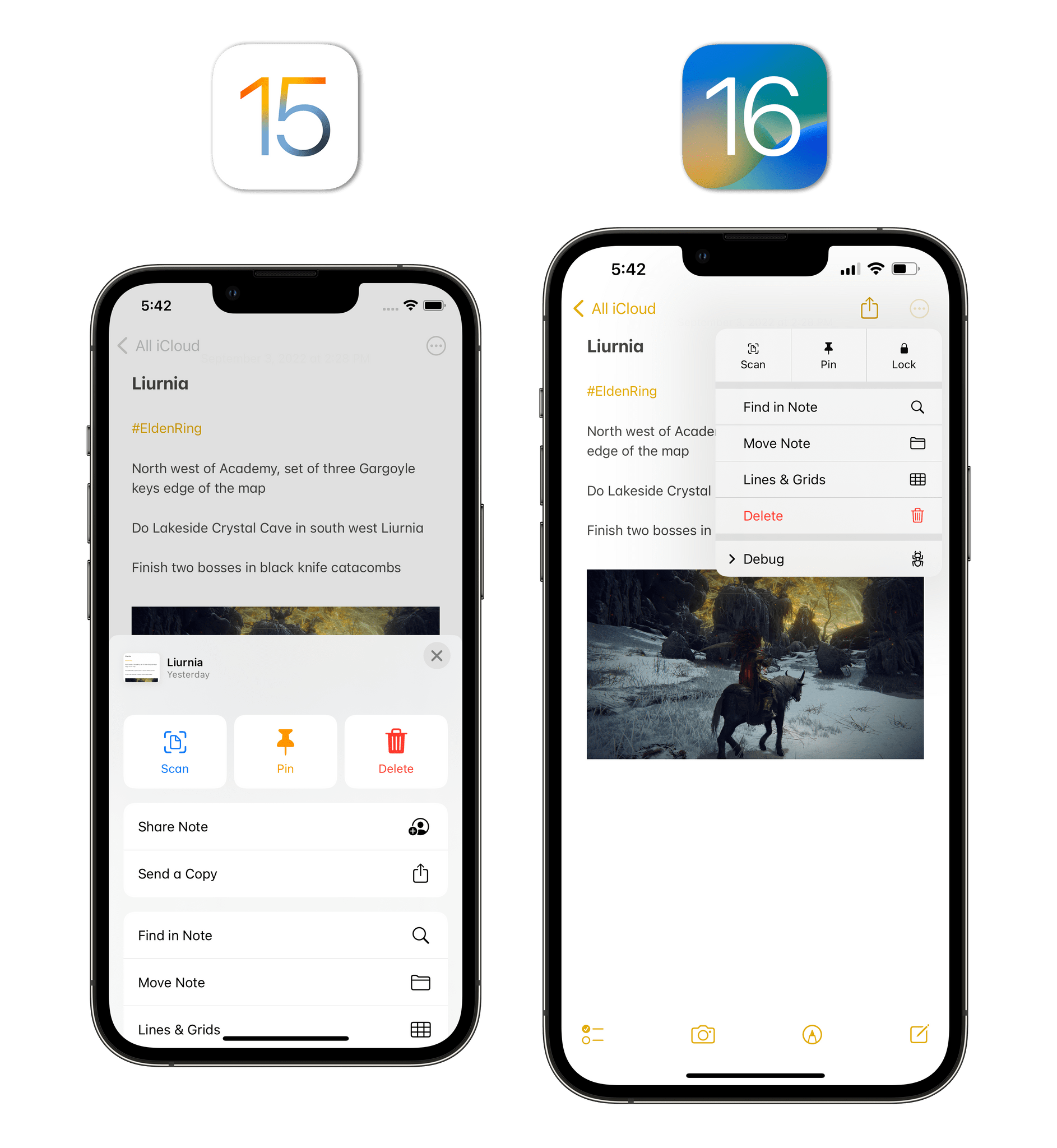

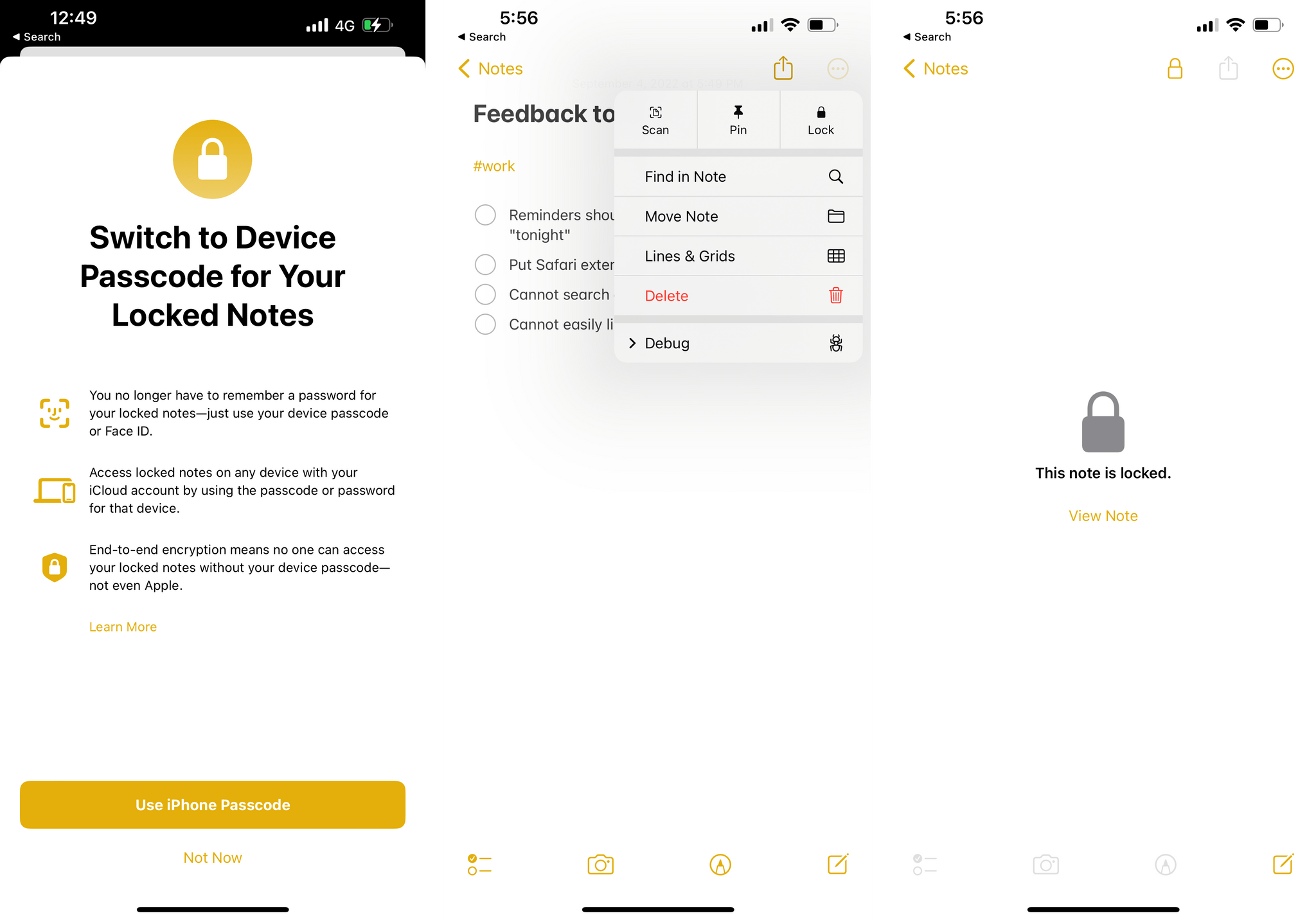

The other action sheet that has been replaced by a native pull-down menu is the one that used to contain options for note settings and sharing. In iOS 16, Apple has separated sharing and note settings. The new menu takes up less space than before, has three small buttons at the top to scan text, pin a note, and lock it, and hides less of the note’s text.

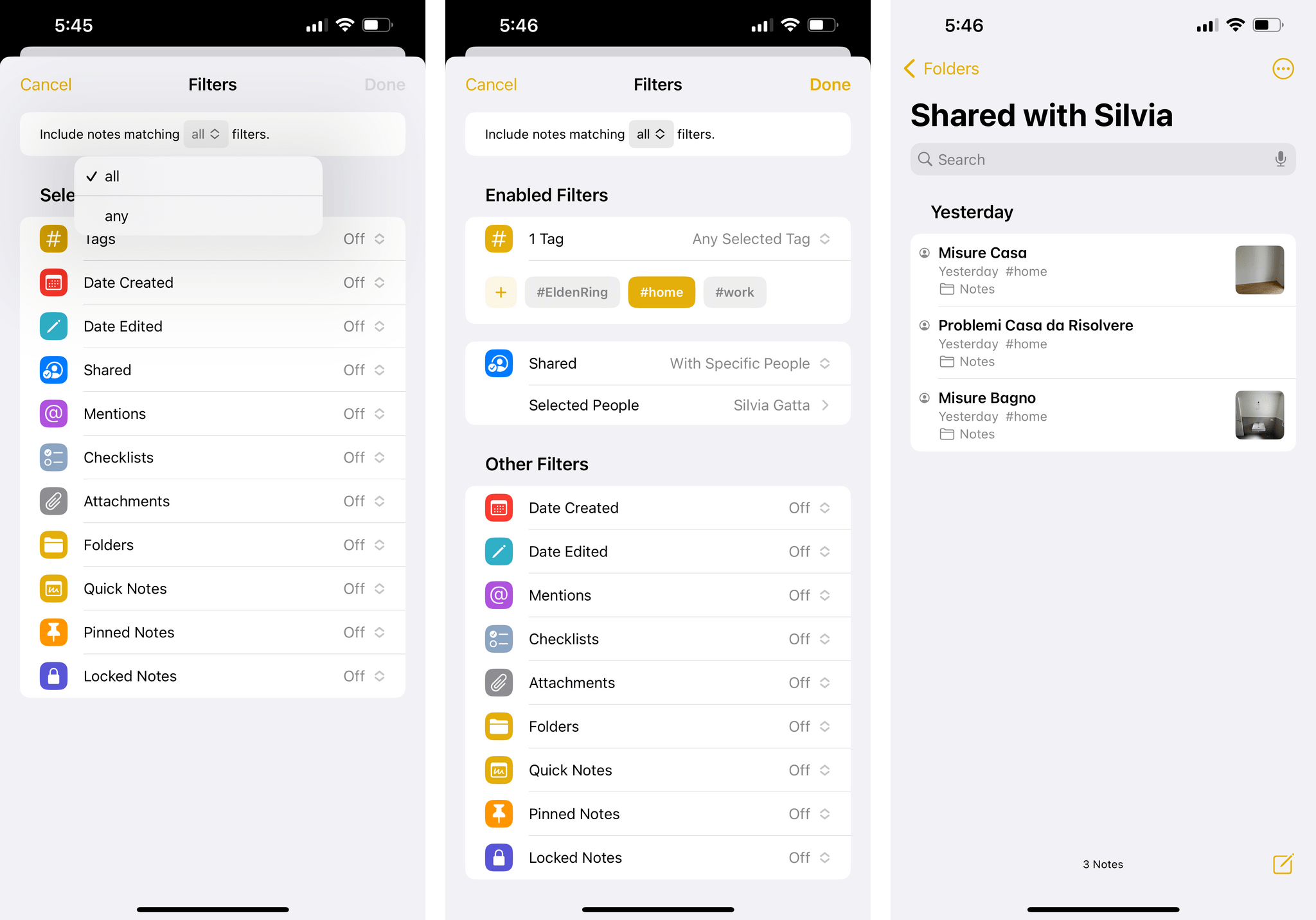

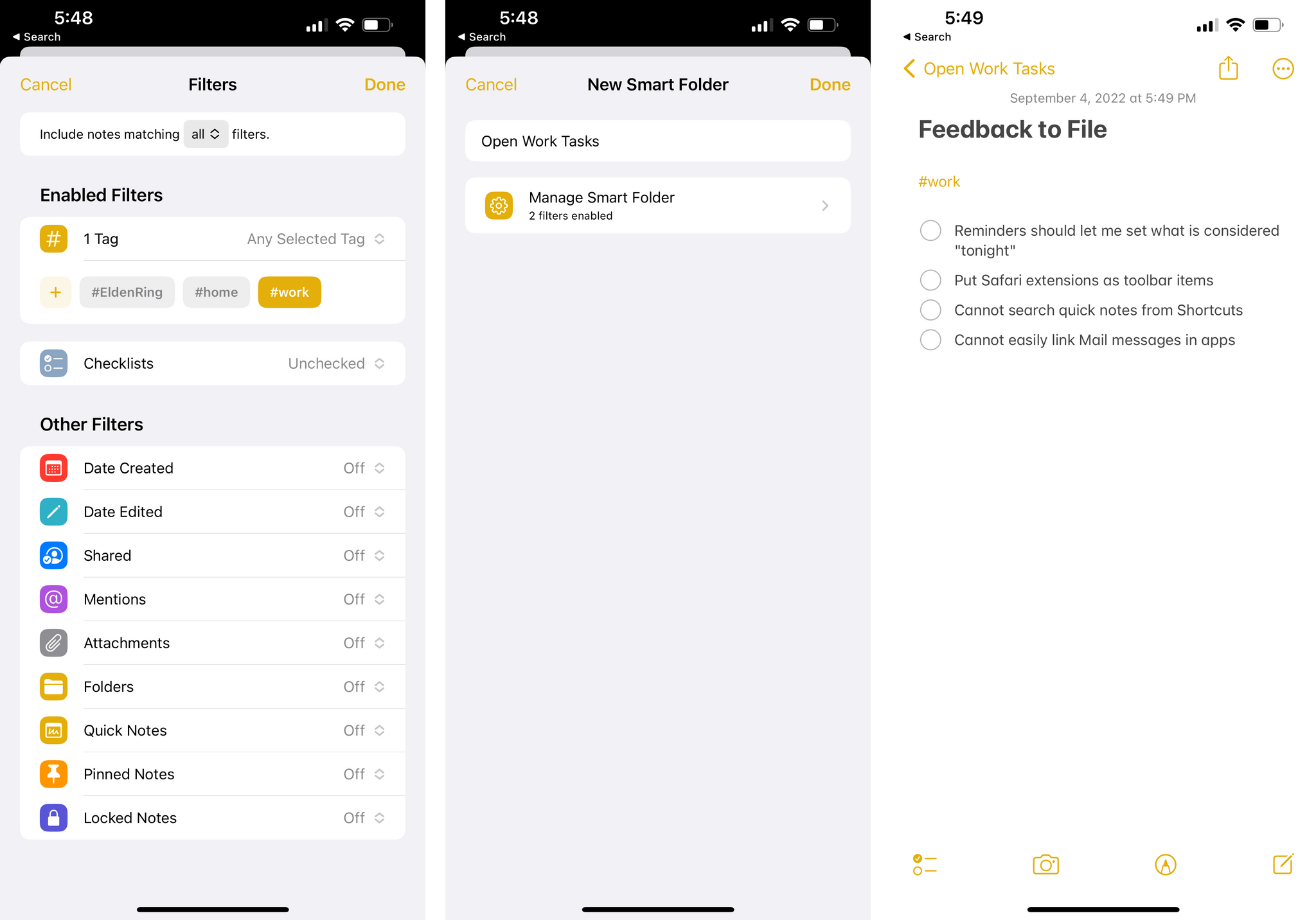

Smart folders were introduced last year as a way to complement Notes’ new tagging feature, but compared to smart lists in Reminders, they felt more like a proof of concept. Apple has gone back to the drawing board for smart folders in Notes this year and they’ve shipped what feels like the true version 1.0 of this feature. You can now choose from a much larger series of filters, including:

- Date Created

- Date Edited

- Shared

- Mentions

- Checklist

- Attachments

- Quick Notes

- Pinned Notes

- Locked Notes

As in Reminders this year, you can match any or all filters – an added flexibility that should allow you to put together reasonably advanced smart folders to surface specific subsets of notes from your library. One of the filters I’m fascinated by at the moment is the checklist one: by choosing between finding checked or unchecked items, it’s now possible to create smart folders that filter notes that contain open checklist items, which is an intriguing way to think about task management inside the Notes app.

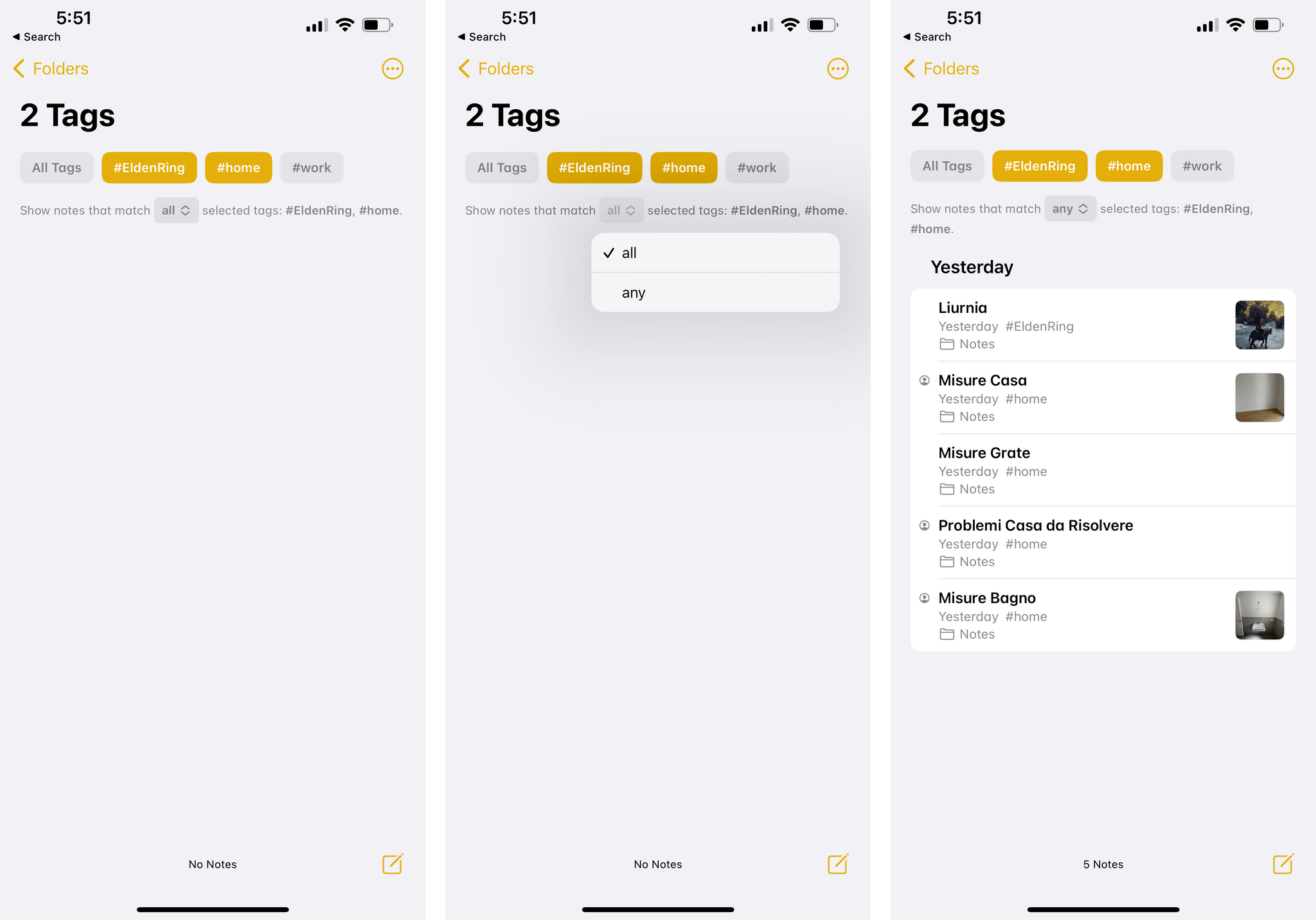

The ‘Shared’ filter is itself a new top-level section of the Notes sidebar, but it can also be used as a standalone filter and combined with others. For instance, you could use this to find shared notes that have open checklists and also have a specific tag. The ability to combine filters by ‘any’ or ‘all’ parameters is also available in the Notes sidebar in iOS 16: when you select multiple tags, you can now choose to filter notes that contain all of them or any of the selected ones.
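For readers who think in code, the ‘any’ versus ‘all’ matching can be sketched in a few lines of Python. This is only an illustrative model under my own assumptions: the `Note` class, the filter functions, and `smart_folder` are hypothetical names, not anything Apple exposes.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Note:
    title: str
    shared: bool = False
    tags: set[str] = field(default_factory=set)
    checklist: list[bool] = field(default_factory=list)  # True means the item is checked


# A filter is any function that says whether a note qualifies.
Filter = Callable[[Note], bool]


def is_shared(note: Note) -> bool:
    return note.shared


def has_open_checklist(note: Note) -> bool:
    # At least one checklist item is still unchecked.
    return any(not done for done in note.checklist)


def has_tag(tag: str) -> Filter:
    return lambda note: tag in note.tags


def smart_folder(notes: list[Note], filters: list[Filter], match: str = "all") -> list[Note]:
    """Return the notes matching all filters or any filter, depending on `match`."""
    if match not in ("all", "any"):
        raise ValueError("match must be 'all' or 'any'")
    combine = all if match == "all" else any
    return [note for note in notes if combine(f(note) for f in filters)]
```

The example from the paragraph above, shared notes with open checklists and a specific tag, would be `smart_folder(notes, [is_shared, has_open_checklist, has_tag("work")], match="all")`, where the `work` tag is made up for illustration.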

Still conspicuously absent from Notes’ filters? The ability to search for notes by title and body text. This is one of my favorite aspects of putting together smart searches via Dataview in Obsidian, and I’m disappointed Apple didn’t have a native, visual answer for this in iOS 16’s Notes app.

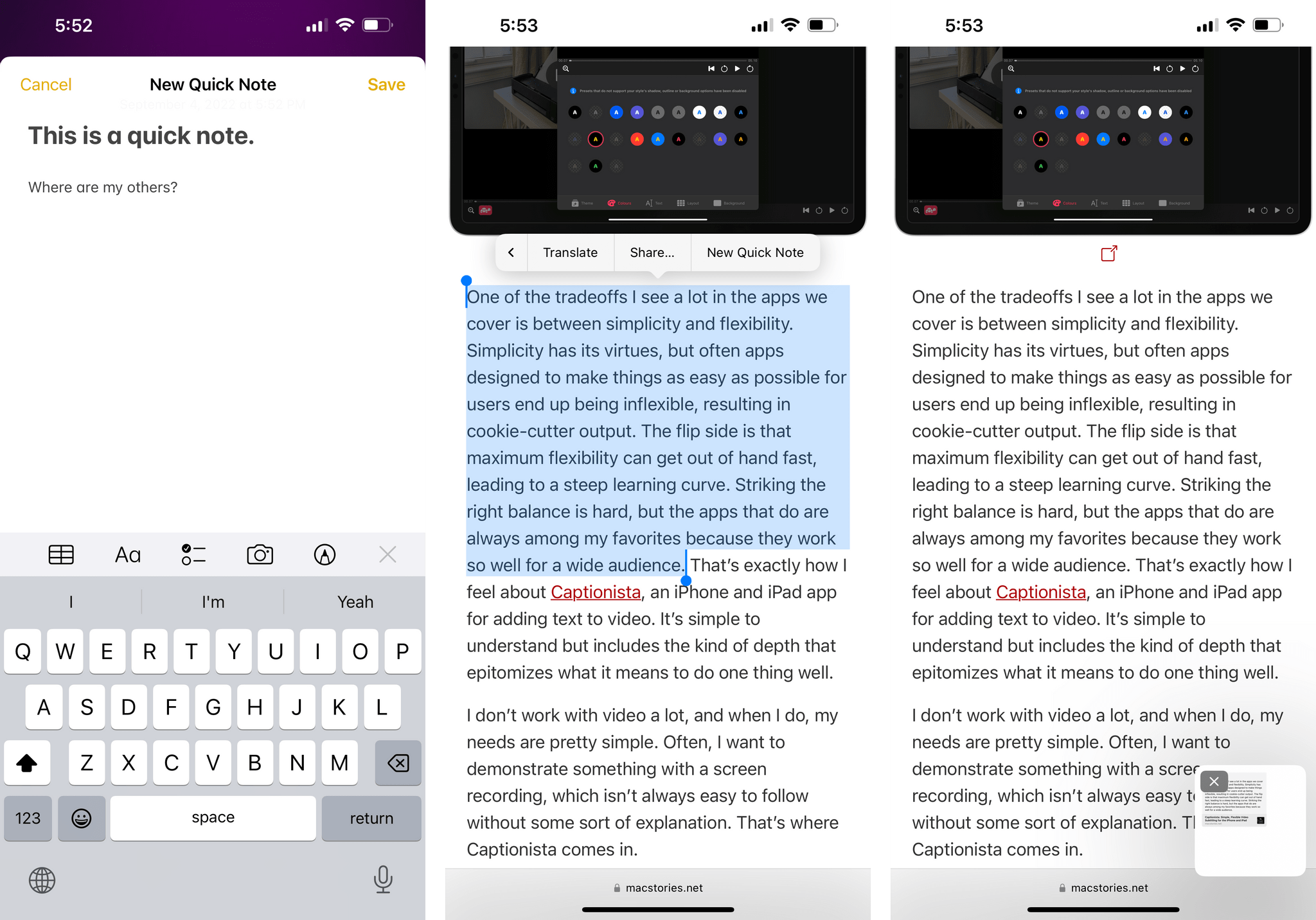

Apple brought the standout addition to last year’s Notes update for iPad – Quick Note – to the iPhone this year…albeit in a limited fashion I don’t understand.

As we’ve seen with Picture in Picture over the past two years, the iPhone is perfectly capable of dealing with small, floating popup windows. And yet for iOS 16’s Quick Note, which can only be activated via a toggle in Control Center, Apple thought it’d be best to make it a full-screen window that only lets you capture new quick notes and does not let you swipe through your existing quick notes, like on the iPad.

Quick Note on the iPhone can be invoked from Control Center (left) or used from Safari to save webpages. The floating panel (right) is only supported to reopen highlights on webpages.

It’s bad enough that Apple didn’t add support for activating Quick Note’s floating window via a gesture, but limiting Quick Note to creation-only on iPhone? That’s unnecessarily punitive and lazy, and I’m sad we’ll probably have to wait an entire year for improvements on this front.

In the privacy department, Apple has vastly simplified the process of locking notes to make them private. Instead of forcing you to create a specific password for the Notes app, private notes are now locked with your device passcode and Touch/Face ID – the same system you use to lock your device. This way, your notes will be end-to-end encrypted and you won’t have to remember an additional password for the Notes app; this is another sign of Apple pushing to move beyond passwords in the near future.

There are some other additions to Notes I’ll discuss next month in my iPadOS 16 coverage, such as the new tools to add shapes and signatures to drawings, handwriting improvements, or the new sharing and collaboration features for iCloud users.

I won’t lie: I was hoping to see changes in the Notes app this year directly inspired by services that have reshaped the note-taking market over the past couple of years such as Notion, Craft, and Obsidian. Instead, despite some very nice improvements, I can’t shake the feeling that Apple’s Notes app feels and works like a pre-pandemic note-taking product.

At this point, every modern note-taking app supports some kind of command palette for keyboard-driven access to commands, has an in-app Spotlight-like search for navigating to notes, and lets you refer to other notes via internal links. These features have become commonplace in every single major note-taking app these days. Some of them even come with a healthy developer ecosystem and support integration with task managers and other services.

Will it take Notes a decade to catch up on this front, like Mail? I hope not. For now, while I like Notes’ design, native feel, and Quick Note for iPad, I’ve gone back to Obsidian for personal notes and Craft for collaborative ones.

Photos

The most important changes in the Photos app this year revolve around its integration with Live Text (which I’ll explore more in depth in the Everything Else chapter), shared libraries, and improved use of context menus for batch operations and other actions.

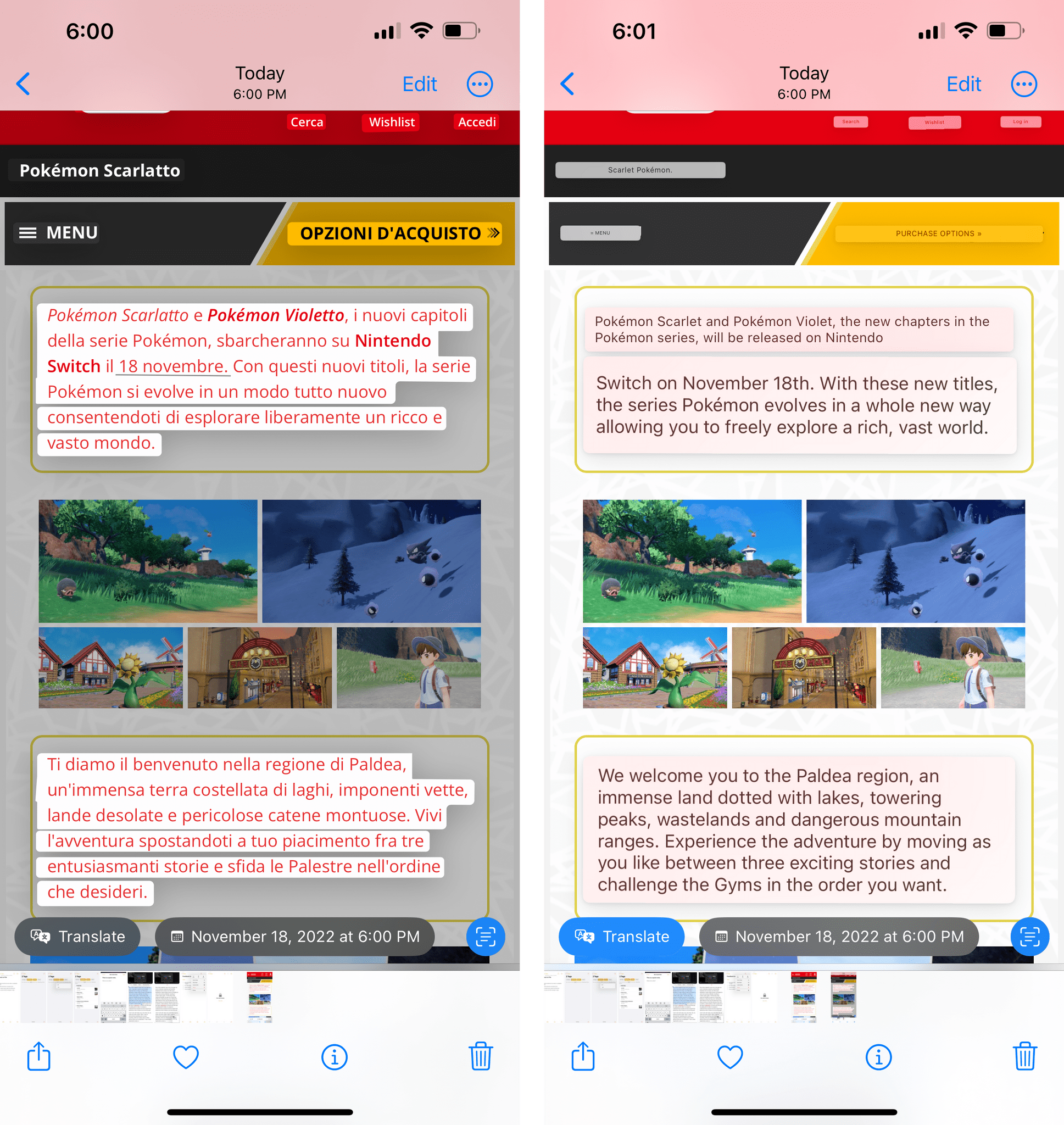

As I’ll explain later, the Live Text interface in the image viewer has been greatly enhanced in iOS 16 with the addition of new smart data detectors to perform more actions contextually within the image. This is no surprise given how remarkable the Live Text technology is and how popular it has gotten over the past year. Among the changes I want to call out in this chapter is the ability to translate text recognized in an image right from the Photos app itself. Translations overlaid on top of images are also a feature of the Translate app this year, but doing the same while in Photos feels magical.

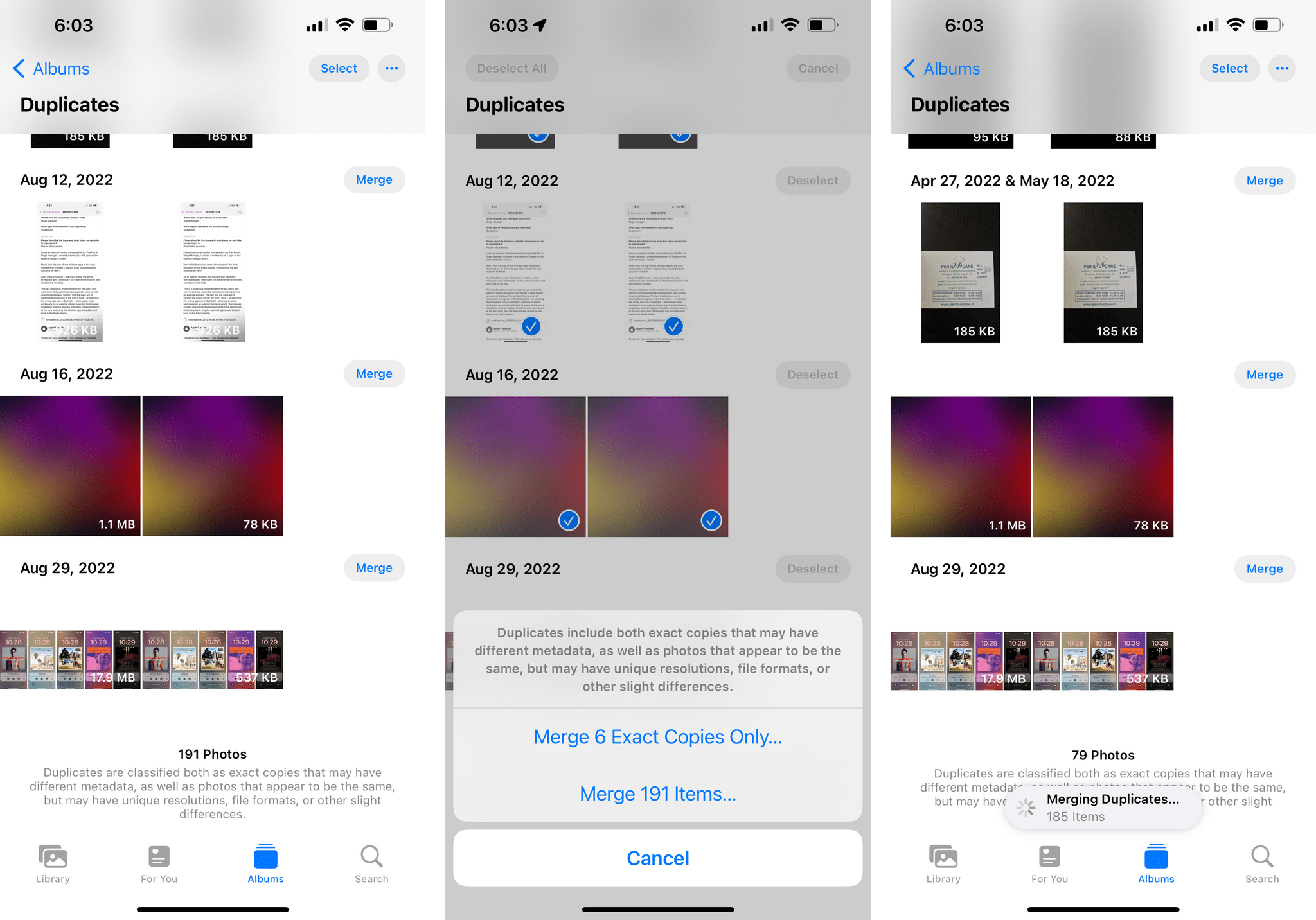

Our John already covered shared libraries and iCloud Family Sharing in Photos, so I’ll focus on the other additions to the Photos app here. In iOS 16, the Photos app can detect duplicate photos and videos. If your Photos library consists of old iPhoto/Aperture/Everpix libraries that were merged multiple times over the years, this feature is for you: head over to Albums ⇾ Duplicates, and – if your library has been indexed (i.e. give it a few days) – you’ll see a list of all the items that iOS considers duplicates. You can then merge duplicates, thus slimming down your library and getting rid of legacy files.

As Apple explains, items may be classified as duplicates both if they’re an exact copy of each other and if they appear to be similar but have different resolutions, file formats, or other “slight” differences. In my experience, this duplicate detection and merging tool has been spot-on. When you merge items, the Photos app will keep one version of the duplicate set, but will combine the highest-quality and most relevant data and move discarded items to your Recently Deleted folder. I did this months ago and removed hundreds of items from my iCloud library, so I recommend it.
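Apple doesn’t document exactly how the merge picks its winner, so the following Python sketch is only a guess at the general idea described above: keep one item, fold in the most relevant metadata from the others, and send the rest to a trash list. The `Photo` class, the use of pixel count as the quality measure, and the metadata rules are all my own assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Photo:
    name: str
    width: int
    height: int
    caption: str = ""
    keywords: set[str] = field(default_factory=set)
    favorite: bool = False


def merge_duplicates(duplicates: list[Photo]) -> tuple[Photo, list[Photo]]:
    """Merge a duplicate set into one photo; return it plus the discarded items."""
    if not duplicates:
        raise ValueError("need at least one photo to merge")

    # Assumption: "highest quality" is approximated by the largest pixel count.
    keeper = max(duplicates, key=lambda p: p.width * p.height)
    discarded = [p for p in duplicates if p is not keeper]

    merged = Photo(
        name=keeper.name,
        width=keeper.width,
        height=keeper.height,
        # Prefer the keeper's caption, else the first non-empty one in the set.
        caption=keeper.caption or next((p.caption for p in discarded if p.caption), ""),
        # Combine keywords from every copy so no metadata is lost.
        keywords=set().union(*(p.keywords for p in duplicates)),
        favorite=any(p.favorite for p in duplicates),
    )
    return merged, discarded
```

In this model, the second return value plays the role of the Recently Deleted folder: nothing is destroyed outright, so a bad merge could still be recovered.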

Speaking of the Recently Deleted album: both it and the Hidden album now require authentication to be opened. You can disable this if you want in Settings ⇾ Photos ⇾ Use Face/Touch ID…but do you really want to?

In the People album, you can now sort people by name or custom order.

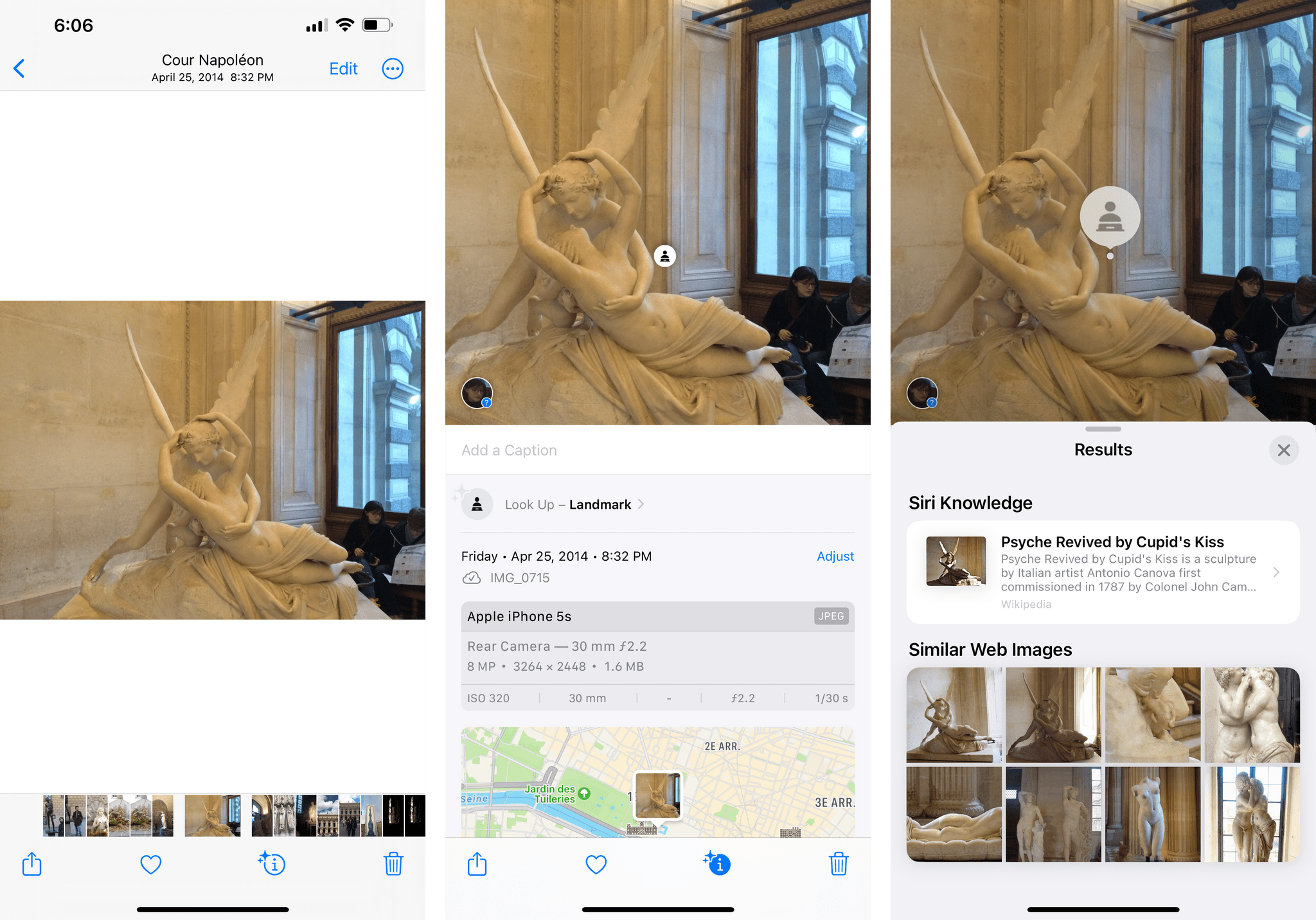

The Visual Look Up feature – the one that can recognize people and dogs and landmarks in photos – has been updated in iOS 16 to support birds, statues, and bugs. In my tests, the improved Look Up worked pretty well for famous statues I photographed at the Louvre in Paris, but it also thought a crab I photographed at the beach was an insect, so, yeah, your mileage may vary.

In theory, in the For You tab, you should find new types of memories for This Day in History and children playing. I haven’t seen either because I don’t have kids and…well, I guess I haven’t seen This Day in History for the same reason why Photos continues to think the woman tattooed on my arm is a person: its underlying intelligence is still hit or miss.

If you use Memories and the Photos widget on a regular basis, you’ll be happy to know iOS 16 brings a new setting to disable featured content from appearing in Spotlight and widgets. This is going to help for those times when you don’t want to see a hurtful memory pop up as a featured item, but I can’t help but think that what I mentioned above also applies here: these apps should be smarter in figuring things out on their own, not require us to manually turn off certain options or hide things.

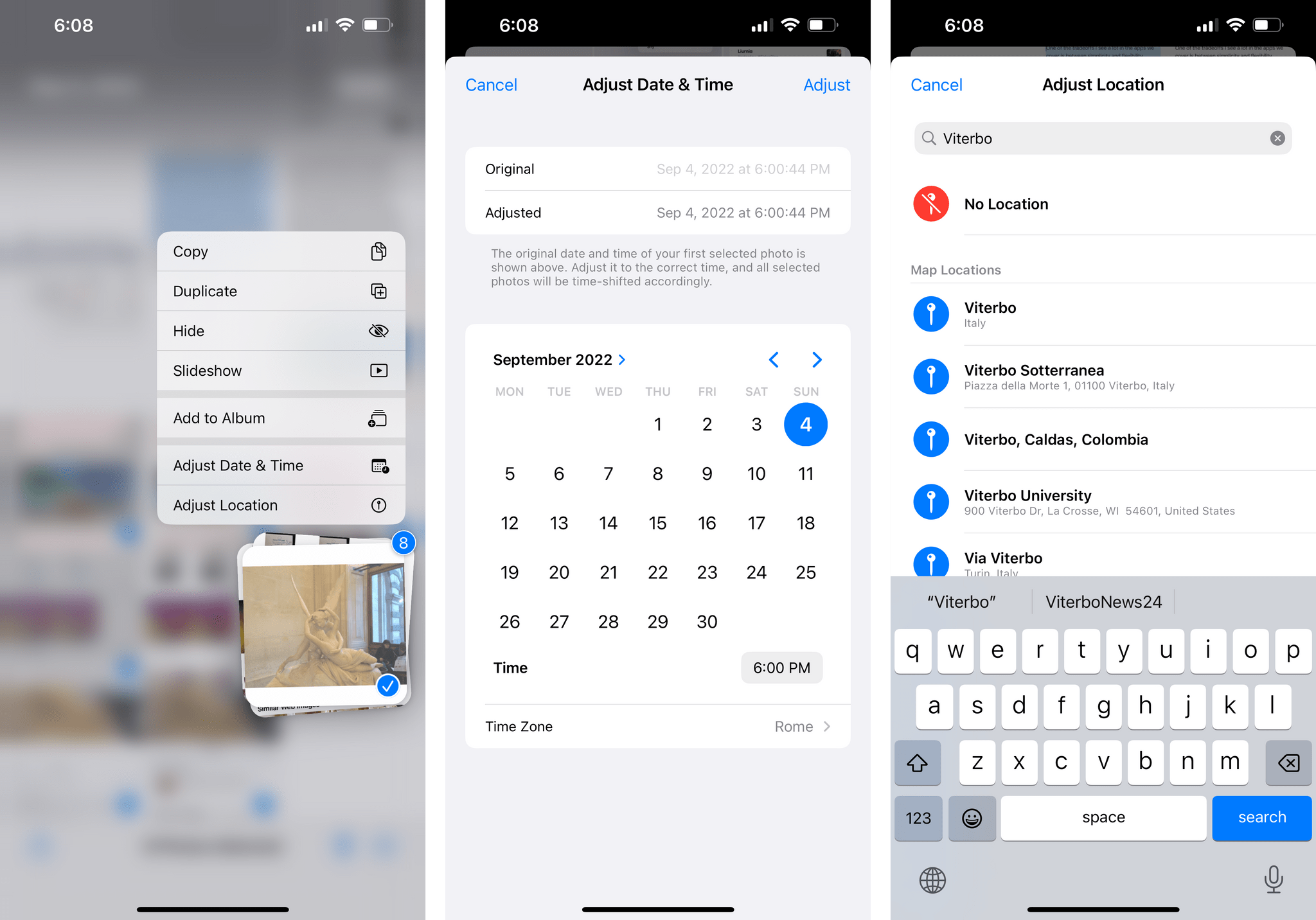

Next up: batch operations. In Photos for iOS 16, you can now select multiple items at once and long-press to show a context menu with actions you can perform for all of them at the same time.

As you can see, you can add multiple images to an album at once (finally!), adjust their timestamps and location (great for old pictures!), as well as copy or hide them. There’s another amazing (and hidden) thing you can do here though: you can now copy and paste edits between photos. To do this, select an edited image and choose ‘Copy Edits’ from its context menu. If there are other images you want to apply the same edits to (for me, it’s usually the magic wand tool + Vivid filter), select them, long-press, and choose ‘Paste Edits’. And voilà, you’ve now brought a series of consistent, non-disruptive edits across images in just a couple taps.

If you’re the kind of person who spends a lot of time editing photos, you should also be happy to hear that the Photos app now lets you undo and redo individual edits in a photo. I can’t believe we’ve gone all these years without having this much better flavor of undo and redo in Photos, but yes, it’s only new this year, and it works perfectly.

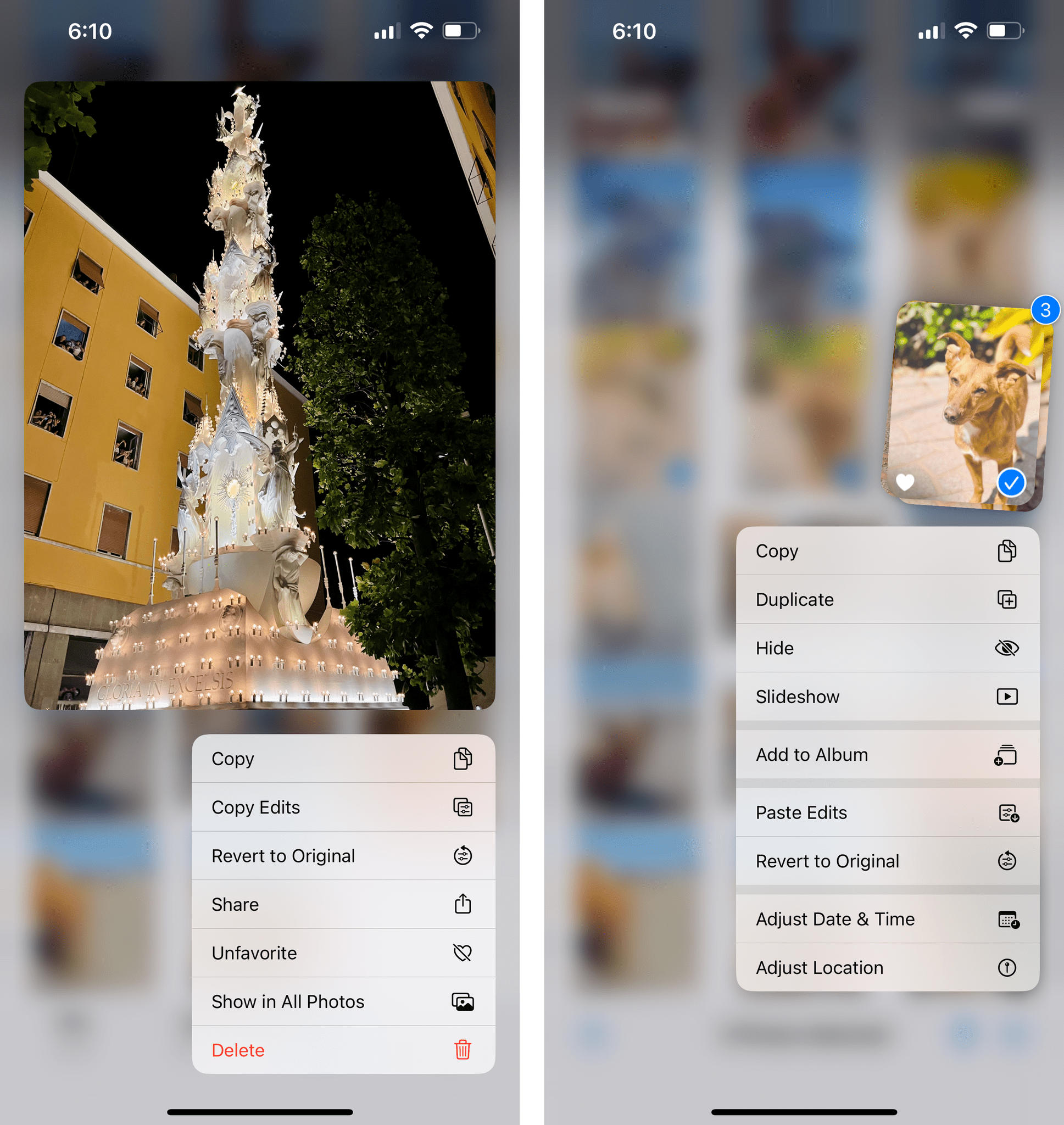

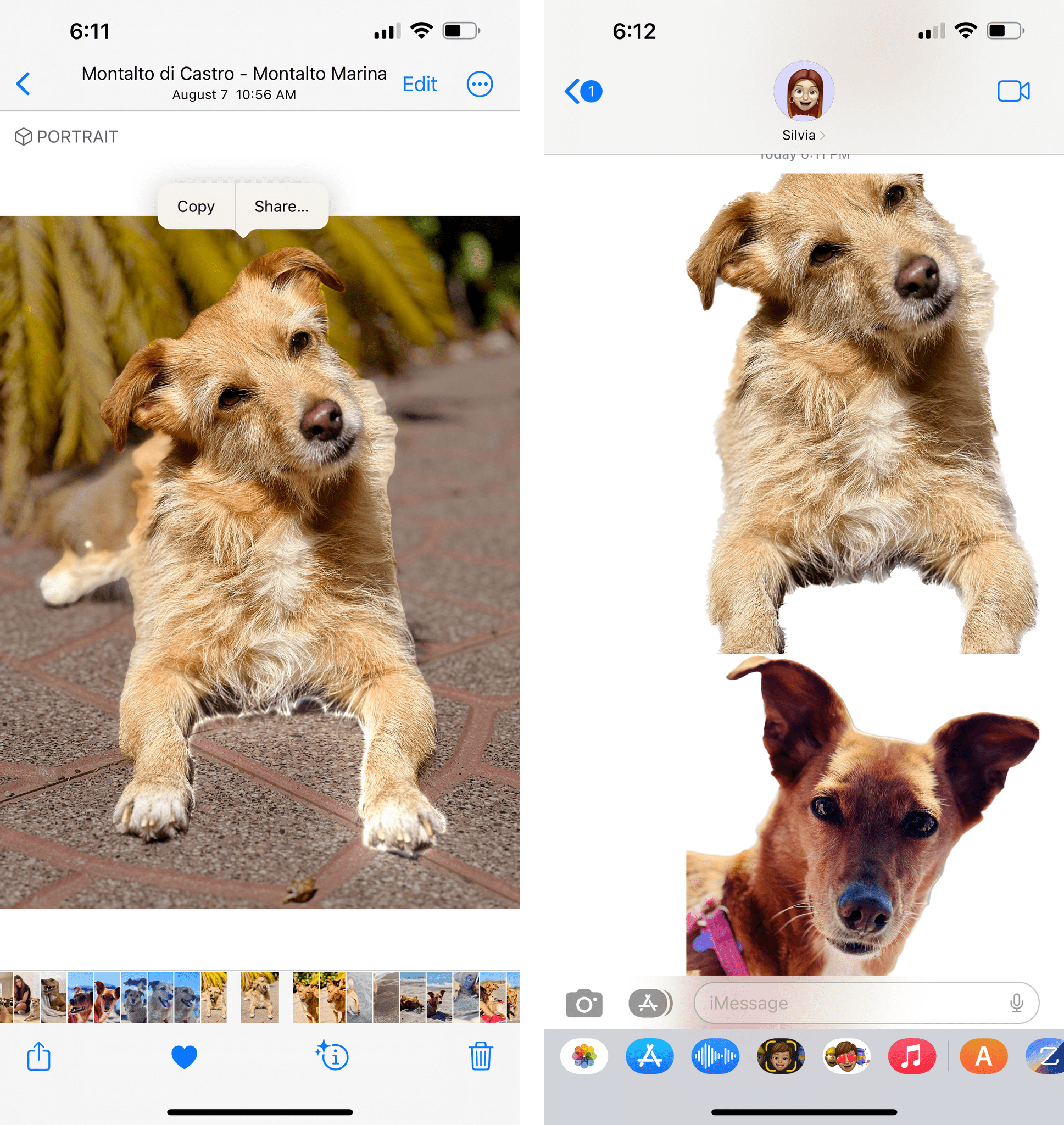

I saved the best – and most ridiculous, in a good way – addition to Photos in iOS 16[13] for last: the ability to lift the subject of any photo and drag it out as a transparent PNG into other apps.

Here’s how you can do this: in any photo – it doesn’t have to be shot in Portrait mode – hold down on what you think the subject is, and an animated, shimmering outline will surround it as long as there’s a clear separation between the subject and background.[14] Then, drag it away and do whatever you want with it:

Dragging subjects out of the Photos app.

Now, don’t ask me why Apple built this because the reason is beyond me. Were they so proud of their subject separation technology that doesn’t require Portrait mode that they just had to put it in Photos (and Shortcuts) too? Did an intern prototype this and Craig thought it was a good idea and here we are? Or, the Galaxy Brain theory: what if this is an ingenious way to teach people about drag and drop between apps on iPhone without making it feel like a Nerd Thing?

I love this feature because, on paper, it has no practical reason to exist and arguably makes watching Live Photos a bit worse than before[15], and yet it works so well and it’s going to be perfect to generate meme material on iOS. I’ve also seen other use cases for it though: a few days ago, for instance, I wanted to mock up some bedroom designs based on IKEA furniture, but the IKEA website has JPEG images and I needed PNGs with alpha transparency. So I saved all the images to the Photos app, used the subject selection feature to isolate the products I wanted, and dragged the resulting PNGs into Pixelmator. What would have required Photoshop or basic graphic editing skills before can now be done with a single drag and drop gesture, so maybe Apple considered this aspect too? Subject selection can be used as a simple design tool in iOS 16, and I have to assume that it’ll come in handy for Apple’s upcoming Freeform app as well.

Regardless of whether or not you’re going to use shared libraries, the new features in the Photos app this year are well thought out, useful, and perfectly integrated with the app’s existing design and structure. The Photos team at Apple should be proud this year…except for those questionable “intelligent” suggestions, which still need some work.

[13] For devices with the A12 chip and later.

[14] This is powered by Apple's image segmentation technology.

[15] If you want to watch a Live Photo now, you need to keep holding longer on an image. By the way, the subject selection feature also works in paused videos.