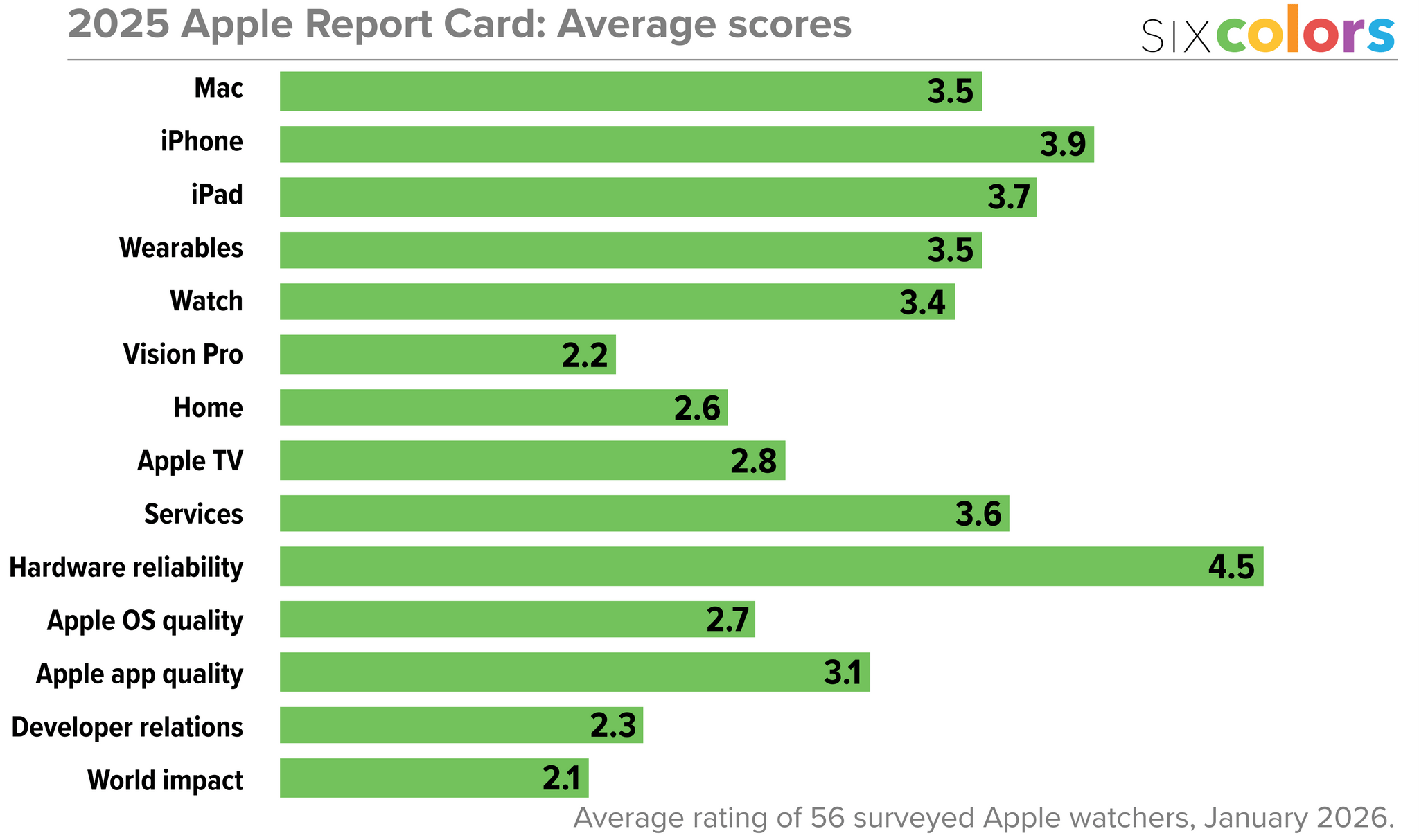

For the past 10 years, Six Colors’ Jason Snell has put together an “Apple report card” – a survey to assess the current state of Apple “as seen through the eyes of writers, editors, developers, podcasters, and other people who spend an awful lot of time thinking about Apple”.

The 2025 edition of the Six Colors Apple Report Card has been published, and you can find a summary of all the submitted comments, along with charts featuring average scores for the different categories, on Six Colors.

I’m so grateful that Jason invited me, once again, to participate in the survey and share my thoughts on Apple’s 2025. As you’ll see from my comments – and as you know if you’ve been listening to AppStories or Connected lately – I’ve spent the past few months focusing on AI agents and hybrid automation, and splitting my work between iPadOS and macOS. The LLM takeoff in the productivity space is accelerating on a weekly basis, and modern AI tools are fundamentally changing the way I get work done. Case in point: my comments were written before OpenClaw went viral, and in the past month alone, many of my habits and automations have been upended by this incredible open-source tool. As I noted in my comments, however, one thing is not changing: iPadOS gets essentially no access to any of these modern AI tools, which are increasingly launching as Mac-only apps or features.

I’ve prepared the full text of my responses for the Six Colors report card, which you can find below.