Fascinating analysis by Allen Pike on how the technology behind LLMs can power user interfaces and interactions beyond the traditional chatbot:

> While chat is powerful, for most products chatting with the underlying LLM should be more of a debug interface – a fallback mode – and not the primary UX.
>
> So, how is AI making our software more useful, if not via chat? Let’s do a tour.

There are plenty of useful, practical examples in the story showing how natural language understanding and processing can be embedded in different features of modern apps. My favorite example is search, as Pike writes:

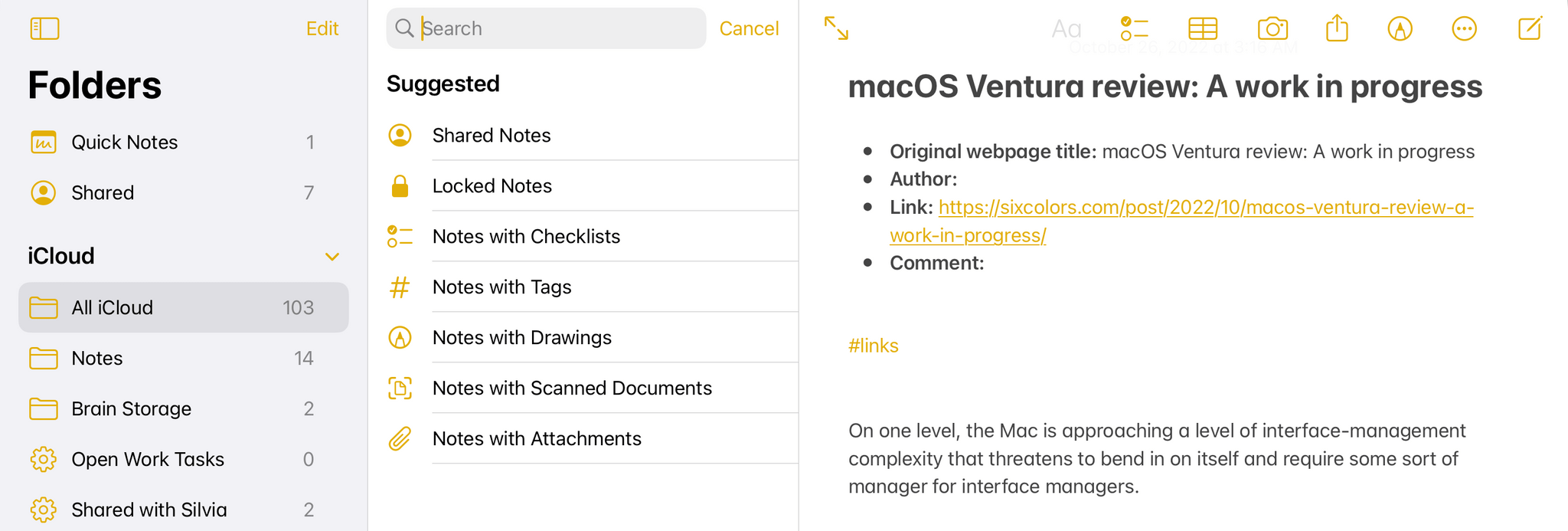

> Another UI convention being reinvented is the search field.
>
> It used to be that finding your flight details in your email required typing something exact, like “air canada confirmation”, and hoping that’s actually the phrasing in the email you’re thinking of.
>
> Now, you should be able to type “what are the flight details for the offsite?” and find what you want.

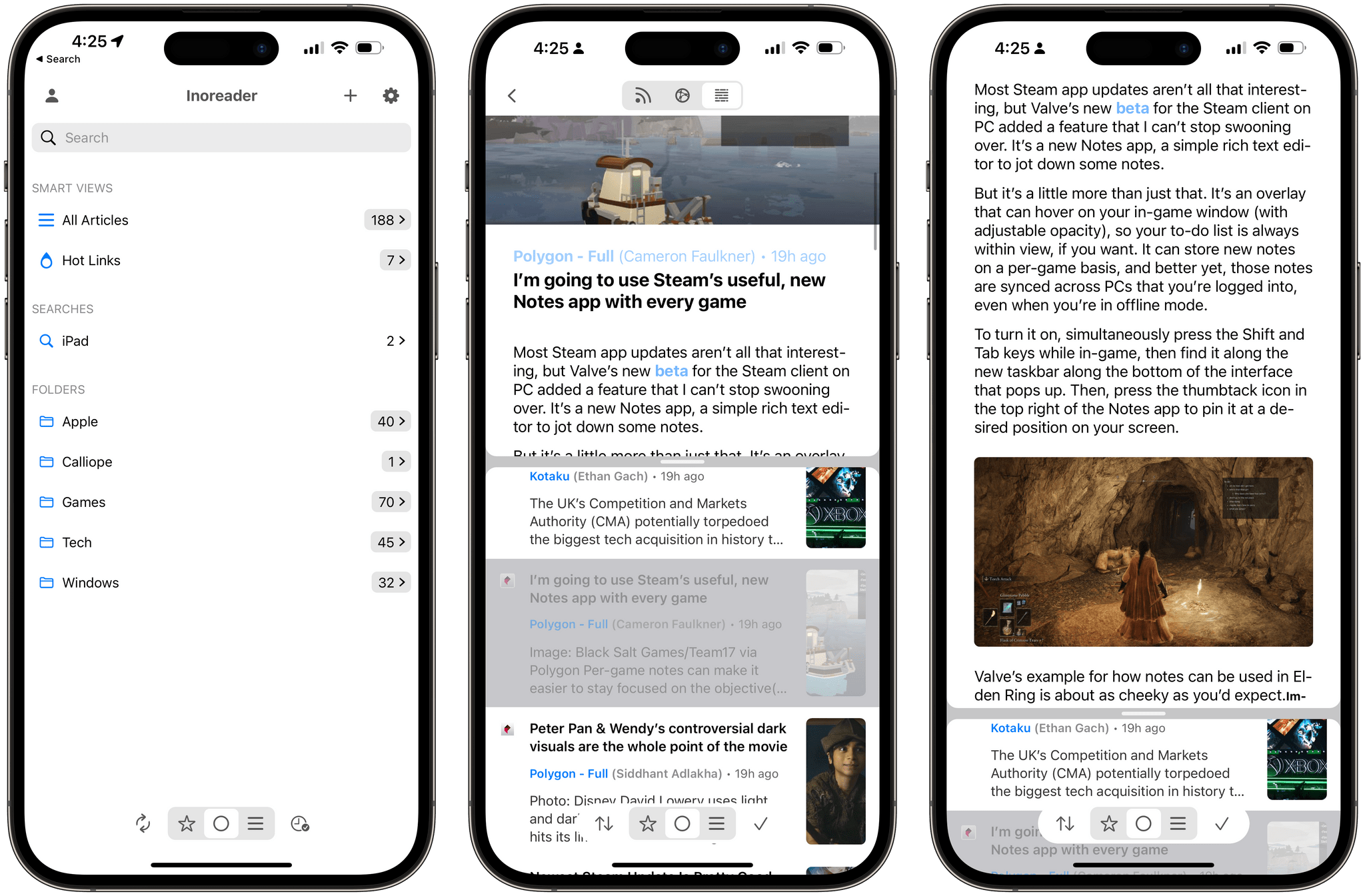

Having used Shortwave and its AI-powered search for the past few months, I couldn’t agree more. The moment you get used to searching without exact queries or specific operators, there’s no going back.

> Experience this once, and products with an old-school text-match search field feel broken. You should be able to just find “tax receipts from registered charities” in your email app, “the file where the login UI is defined” in your IDE, and “my upcoming vacations” in your calendar.

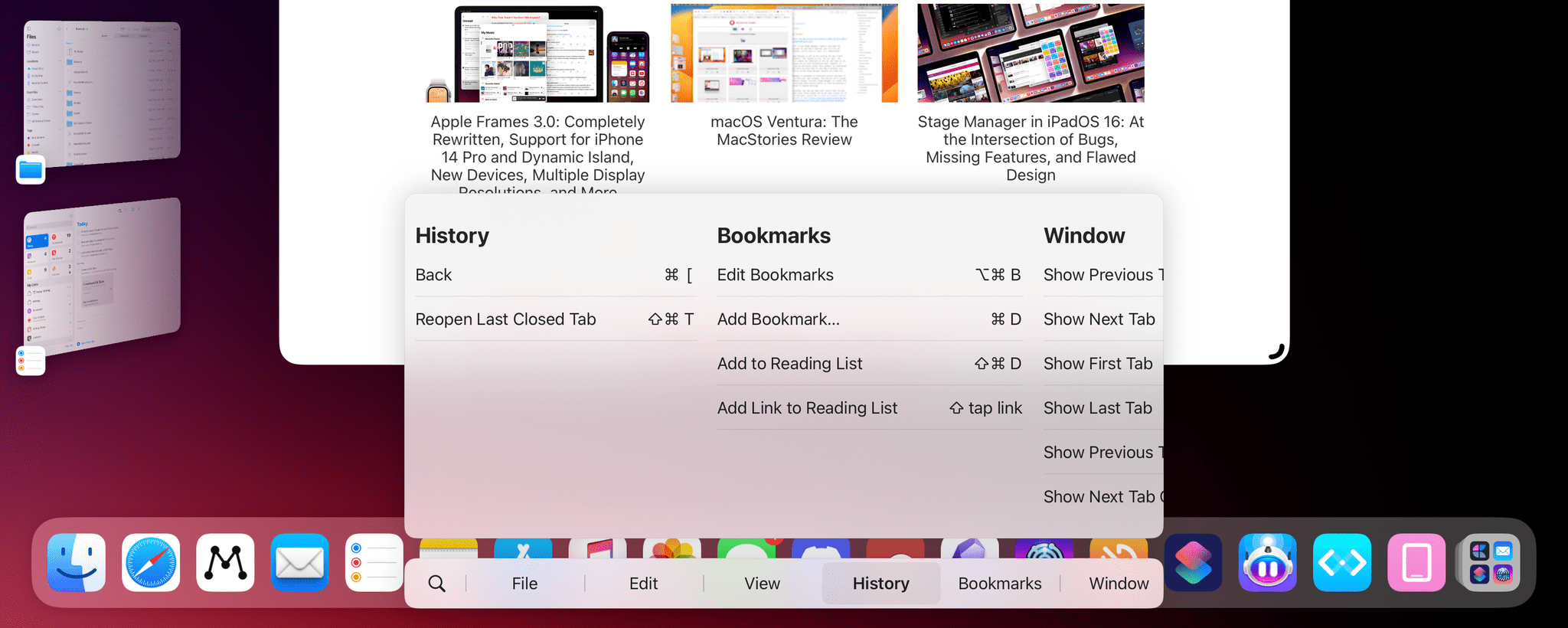

Interestingly, Pike mentions Command-K bars as another interface pattern that can benefit from LLM-infused interactions. I knew that sounded familiar – I covered the topic in mid-November 2022, and I still think it’s a shame that Apple hasn’t natively implemented these anywhere in their apps, especially now that commands can be fuzzier (just consider what Raycast is doing). Funnily enough, that post was published just two weeks before the public debut of ChatGPT on November 30, 2022. That feels like forever ago now.