External Displays

There have been a couple of additions to my writing workflow since I last wrote about using a 2018 iPad Pro with an external USB-C display. They’re not groundbreaking, but I wanted to include this section at the end of the story for one reason: despite its severe limitations, I still enjoy using the iPad Pro with my UltraFine 4K display.

I wrote about my decision to buy an UltraFine 4K display and the issues with iOS and external displays back in December. For context, here’s the key part of the story:

I went with the UltraFine 4K for two reasons:

First, I strongly believe that the future of iPad should be a self-contained computer that can transform from a tablet into a full-fledged workstation depending on what you need to work on. A piece of glass that can be a tablet with cellular connectivity, a laptop with a built-in keyboard, or a “desktop” computer that puts iOS on the big screen. A compatible USB-C display is the first step to start building that future.

Second, while I could have bought another display and used it as an external monitor for the iPad via HDMI adapters, I didn’t want to run into latency-related issues that have always affected how iOS needs to encode video-out signals to H.264 over Lightning. Due to its limited bandwidth, Lightning cannot send raw HDMI data through the HDMI adapter, generating latency and causing various video artifacts that have frequently been observed by many in games or other high-performance apps with video-out support. USB-C, on the other hand, can send a native, lossless signal over a compatible cable, with the added benefit of power delivery to charge an iPad from the display and treating the display itself as a hub for other downstream USB devices. As I mentioned in the first article of this series, one of my goals over the next year is to use the iPad Pro as a workstation for video and photo editing as well as a console for graphically intensive games; thus, finding a compatible USB-C display that tapped into the iPad Pro’s native support for the USB 3.1 Gen. 2 spec was an obvious choice.

Using an iPad Pro with an external display is far from an ideal experience at this point due, again, to limitations of iOS.

Essentially, iOS mirrors the same content you see on the main iOS device to the bigger screen, but app developers can integrate with the barebones external display API (which has existed since 2012) to show different content on the iOS device and the external display. Adding a secondary display to an iPad, whether wirelessly or via cable, doesn’t work like on a Mac, where you get a second desktop and more room for different app windows; on iOS, the system just mirrors what is already on screen, projecting the primary interface to an external monitor. While some app and game developers have done the work to show full-screen content on an external display when an iPad is connected (e.g. VLC and Real Racing 3), most of the time you’ll be looking at an enlarged, pillarboxed version of the iPad’s UI because, by default, iOS doesn’t optimize the interface to fill the screen. And, of course, forget about being able to use an external pointing device to control the iOS UI on the bigger screen: the iPad can mirror its UI to a 4K display, but you can’t connect a mouse or trackpad and control the mirrored UI “remotely” – you’ll always have to touch the screen of the iPad if you need to select UI elements.
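The bandwidth argument in the passage quoted above can be made concrete with some back-of-the-envelope arithmetic. The sketch below computes the raw, uncompressed bit rate of a video signal and compares it to a link budget. The 24 bits per pixel and the 480 Mbit/s budget (the USB 2.0 signaling rate, used here as a stand-in for a constrained link) are my own assumptions for illustration, not figures from Apple, and blanking intervals are ignored.

```python
def raw_video_bitrate(width, height, fps, bits_per_pixel=24):
    """Return the uncompressed bit rate in bits per second (active pixels only)."""
    return width * height * fps * bits_per_pixel


def needs_compression(width, height, fps, link_bits_per_second, bits_per_pixel=24):
    """True when the raw stream cannot fit into the given link budget."""
    raw = raw_video_bitrate(width, height, fps, bits_per_pixel)
    return raw > link_bits_per_second


# Hypothetical link budget: 480 Mbit/s, assumed purely for illustration.
CONSTRAINED_LINK = 480_000_000

modes = {"1080p60": (1920, 1080, 60), "4K60": (3840, 2160, 60)}
for label, (w, h, fps) in modes.items():
    gbit = raw_video_bitrate(w, h, fps) / 1e9
    squeezed = needs_compression(w, h, fps, CONSTRAINED_LINK)
    print(f"{label}: {gbit:.2f} Gbit/s raw, compression needed: {squeezed}")
```

Even 1080p at 60 frames per second works out to roughly 3 Gbit/s uncompressed, several times the assumed budget, which is consistent with a signal that has to be encoded before it leaves the device.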

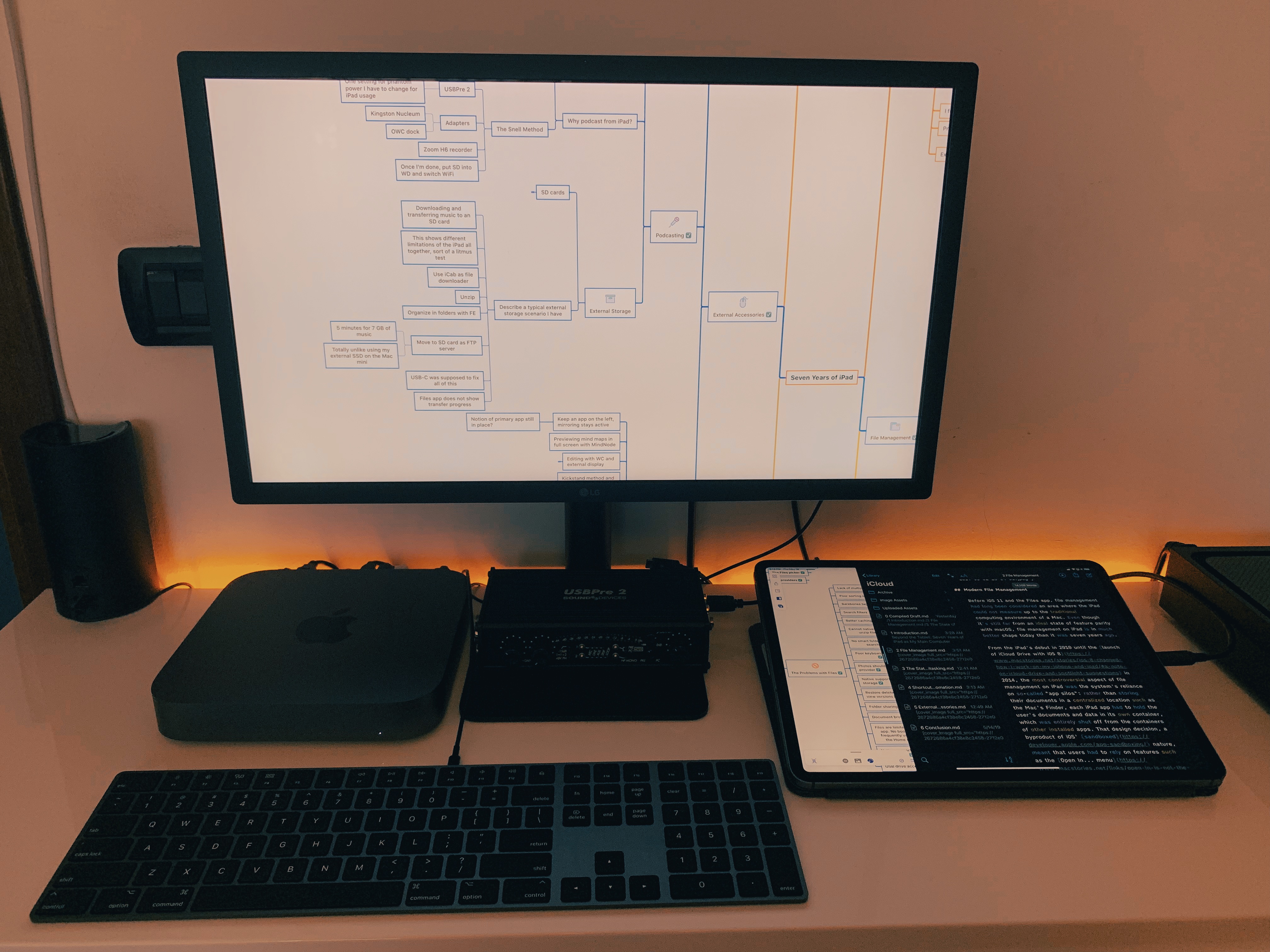

With a single USB-C cable (compatible with USB 3.1 Gen. 2 speeds), the iPad Pro can mirror its UI to an external 4K monitor, support second-screen experiences for apps that integrate with that API, and be charged at the same time. In the case of the UltraFine 4K display, the monitor can also act as a USB hub for the iPad Pro thanks to its four USB-C ports in the back; as I mentioned last year, this allows me to plug the Magic Keyboard (which I normally use via Bluetooth with the Mac mini) into the UltraFine and use it to type on the iPad Pro. To the best of my knowledge, there are no portable USB-C hubs that support 4K@60 mirroring to an external display via USB-C’s DisplayPort alt mode.

Despite the fact that I can’t touch the UltraFine to control the iOS interface or use a trackpad to show a pointer on it, I’ve gotten used to working with iOS apps on the big screen while the iPad sits next to the keyboard, effectively acting as a giant trackpad with a screen. For instance, when I want to concentrate on writing while avoiding neck strain or eye fatigue, I just plug the iPad Pro into the UltraFine, connect the Magic Keyboard in the back, and type in iA Writer on a larger screen. No, pillarboxing is not ideal, but the bigger fonts and UI elements are great for my eyesight, and I still get to work on iOS, which is the operating system I prefer for my writing tasks.
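To get a feel for how much of the big screen pillarboxing leaves unused, here is a minimal sketch of aspect-fit scaling. It assumes a 12.9-inch iPad Pro (2732×2048) mirrored onto a 16:9 panel of 3840×2160 pixels; both the panel resolution and the function name `fit_mirrored_ui` are assumptions for illustration, and this models the geometry only, not how iOS actually renders the mirrored signal.

```python
def fit_mirrored_ui(src_w, src_h, dst_w, dst_h):
    """Scale a source frame to fit a destination while keeping its aspect ratio.

    Returns (out_w, out_h, bar_x, bar_y): the scaled size plus the width of
    each side bar (pillarbox) and the height of each top/bottom bar (letterbox).
    """
    # The smaller scale factor is the one that keeps the whole frame visible.
    scale = min(dst_w / src_w, dst_h / src_h)
    out_w = round(src_w * scale)
    out_h = round(src_h * scale)
    bar_x = (dst_w - out_w) // 2
    bar_y = (dst_h - out_h) // 2
    return out_w, out_h, bar_x, bar_y


# Assumed example: 4:3 iPad UI on a 16:9 4K panel.
out_w, out_h, bar_x, bar_y = fit_mirrored_ui(2732, 2048, 3840, 2160)
print(f"UI area: {out_w}x{out_h}, side bars: {bar_x}px each")
print(f"Share of panel width used: {out_w / 3840:.0%}")
```

Under these assumptions the mirrored UI fills the full height of the panel but only about three quarters of its width, with a black bar of roughly 480 pixels on each side.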

When I’m working at my desk with the UltraFine, I use the kickstands I attached to the Smart Keyboard Folio to prop up the iPad at an angle; if I have to control the iOS interface with touch (because, again, there is no trackpad support yet), I do so with the Pencil. This setup feels perfectly normal to me at this point, and it’s a terrific aid to productivity when I want to focus on one app at a time. I’ve written long sections of this story with the iPad Pro plugged into the UltraFine showing iA Writer’s text editor on a big monitor, and I loved it.

In doing some research around iPad apps that integrate with external displays, I recently came across a lesser known detail of iOS that has helped me bring two more apps into my hybrid iPad-UltraFine setup.

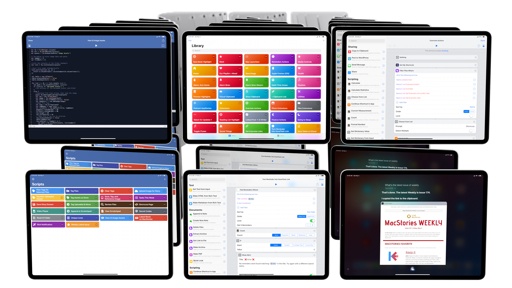

In iOS 12, there is still the concept of a “primary” and “secondary” app in Split View: this is not communicated anywhere in the iOS UI, and it’s a leftover from the days of iOS 9 and old multitasking, but the app on the left side of Split View still acts as the primary one behind the scenes. This matters for apps that ship with special support for external displays, like MindNode or iMovie: if the app is on the right side of Split View, it will not be able to push a custom interface in full-screen to the external display; iOS will mirror the entire Split View (with pillarboxing) instead. However, if an app that is capable of showing additional full-screen content on an external display is on the left side of Split View, it’ll be considered the primary one: this means you’ll be able to have the primary app’s full-screen content on the external display and a separate Split View on the iPad Pro’s display.

MindNode is open on the left side of Split View, so it can output full-screen content to an external display.

This discovery has opened up some interesting possibilities in terms of multitasking and external displays. For instance, when I’m writing a story on the iPad Pro, if I want to have a large mind map on the UltraFine rather than a mirrored version of the iPad Pro’s UI, I can put MindNode on the left side of Split View and iA Writer on the right, enable MindNode’s external display mode, and end up with a full-screen, 4K mind map on the UltraFine while I’m still working with Split View on the iPad Pro.
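The primary/secondary rule is easy to lose track of, so here is a tiny model of the behavior as I've observed it. This is not an iOS API: the function name `external_display_output` and the set of apps are hypothetical, and the logic only encodes what I described above (the left-hand app is primary, and only the primary app can take over the external display).

```python
from typing import Optional, Set


def external_display_output(
    left_app: str,
    right_app: Optional[str],
    supports_external: Set[str],
) -> str:
    """Model what ends up on the external display.

    left_app is the full-screen app, or the left side of Split View.
    right_app is the right side of Split View, or None when there is none.
    """
    # Only the primary (left) app is allowed to push its own full-screen UI.
    if left_app in supports_external:
        return f"custom:{left_app}"
    # Otherwise the whole iPad UI, Split View included, is mirrored.
    return "mirror"


# Hypothetical set of apps with external display support, for illustration.
apps = {"MindNode", "Working Copy"}
print(external_display_output("MindNode", "iA Writer", apps))
print(external_display_output("iA Writer", "MindNode", apps))
```

Swapping the two apps is enough to change the result from MindNode's custom output to plain mirroring, which is exactly the kind of hidden state the system never surfaces.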

What I like about this approach is that MindNode allows me to lock the viewport of an external display and have different zoom levels for the same map on the UltraFine and iPad Pro. This lets me keep a zoomed-out overview of the entire map on the external 4K display and edit individual sub-sections while MindNode is running in compact mode in Split View next to iA Writer.

Another fascinating use case for this hidden functionality is using Working Copy in combination with a text editor that supports open-in-place, such as iA Writer. Working Copy comes with a special integration for external displays that lets you visualize a document’s preview in full-screen (without pillarboxing) on a connected display. This mode can be accessed from a contextual menu that also provides the option to run a local web server for document previews:[22]

Previewing a document from iA Writer (iPad, right) that was opened-in-place in Working Copy (iPad, left), which can mirror it in full-screen to an external display.

Here’s where this gets interesting: Working Copy is set up to read files directly from iA Writer; if I put Working Copy and iA Writer side-by-side in Split View (with Working Copy on the left), open the same document in both apps, and start making changes in iA Writer, edits will be automatically reflected in Working Copy’s full-screen preview on the UltraFine display whenever iA Writer saves changes back to the filesystem.[23] Combine this with the ability to use custom CSS for HTML previews of Markdown documents, and you have a pretty sweet system to type in Markdown on iPad Pro and have a real-time visual preview on an external display – and it’s all made possible by iOS’ external display APIs, USB-C, Split View multitasking, and the Files framework for developers.
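The "save in one app, refresh in the other" loop can be sketched in a few lines. To be clear, this is not how Working Copy does it: the app reportedly relies on file coordination and kernel events, while the sketch below swaps in simple polling of the file's modification time and size. The names `snapshot`, `PreviewWatcher`, and `render` are hypothetical.

```python
import os


def snapshot(path):
    """Return a cheap fingerprint of a file: (modification time, size)."""
    st = os.stat(path)
    return (st.st_mtime_ns, st.st_size)


class PreviewWatcher:
    """Re-render a preview whenever the watched file changes on disk."""

    def __init__(self, path, render):
        self.path = path
        self.render = render  # callback that receives the file's text
        self._last = None     # fingerprint seen at the previous poll

    def poll(self):
        """Check the file once; return True if the preview was refreshed."""
        current = snapshot(self.path)
        if current == self._last:
            return False
        self._last = current
        with open(self.path, encoding="utf-8") as fh:
            self.render(fh.read())
        return True
```

In use, something would call `poll()` on a timer and pass a `render` callback that converts Markdown to HTML and updates the preview. Polling is wasteful compared to event-based notifications, but it shows the shape of the idea: the editor only has to write to disk, and the previewer notices on its own.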

I was only able to find a couple of apps with native support for external displays that I could use, and I accidentally stumbled upon iOS’ secret differentiation between primary/secondary apps for mirroring. This should tell you everything you need to know about the current state of using external displays with an iPad Pro: adoption is sparse among third-party developers, and it’s mostly limited to professional tools that have a specific use case for an additional monitor.

From a system perspective, there are no settings in iOS to control what happens on a secondary display because the iPad was not designed to work in that kind of environment. The fact that I’ve found ways to enjoy basic display mirroring or use apps that properly support external displays doesn’t mean I’m satisfied with this functionality. There’s an odd dichotomy between the iPad supporting external displays up to 5K resolution via a single USB-C cable and iOS’ lackluster support for the technology. As is often the case with the iPad Pro, the hardware is all there, but the software isn’t ready for the task yet.

I’d like to see Apple tackle this aspect of the professional iPad user experience in two ways. First, as I mentioned before, the iPad Pro should support external pointing devices as an option for users who need them. Second, there should be native system controls to send specific app “windows” to an external monitor. I shouldn’t have to know that iOS secretly treats the app on the left side of Split View as the more important one that can display a separate UI on an external display; instead, I should be able to decide (from the app switcher, or from Control Center – it doesn’t matter how) which app (or instance of an app) I want to see on an external display, or if I want to use basic mirroring instead.

Having used the iPad Pro as a workstation paired with an external 4K display for months now, I know that there are real benefits to being able to see a bigger iOS UI or a different interface from an iPad app on a bigger screen. And it’s amazing how, with just one cable, the same computer I can hold in my hands can transform into a professional desktop environment. But the entire system has to become more flexible and easier to support for third-party developers if Apple wants to move beyond the basic, limited UI mirroring that constitutes the majority of external display interactions for iPad Pro users now.

I dream of the day when my existing 4K monitor, trackpad, and external SSDs will work with my iPad Pro just like they do with my Mac mini. And I want to believe that day is nearly upon us.

22. Which technically means you could use Safari on another device on the same local network to display the HTML preview of a Markdown document from Working Copy on the iPad. I, for example, have also been using Safari for Mac to display the local web server of Working Copy for iOS.

23. According to developer Anders Borum, Working Copy uses file coordination as well as kernel events to detect when there are changes to the synced directory of the currently open repository. The process is entirely automatic, but I’ve found that hitting ⌘S in iA Writer will instantly force saving document edits back to Working Copy.