Posts tagged with "keyboard"

Logitech’s Flip Folio: A Modular iPad Keyboard for Occasional Typing

Shortly before WWDC, Logitech sent me their brand new Flip Folio case/keyboard combo to test. It’s a cleverly designed iPad case that’s a bit heavy but has a lot of other things going for it, like a very stable kickstand and an excellent travel keyboard. The Flip Folio is also more affordable than the Apple Magic Keyboard, which I expect will make it an attractive option for many iPad users.

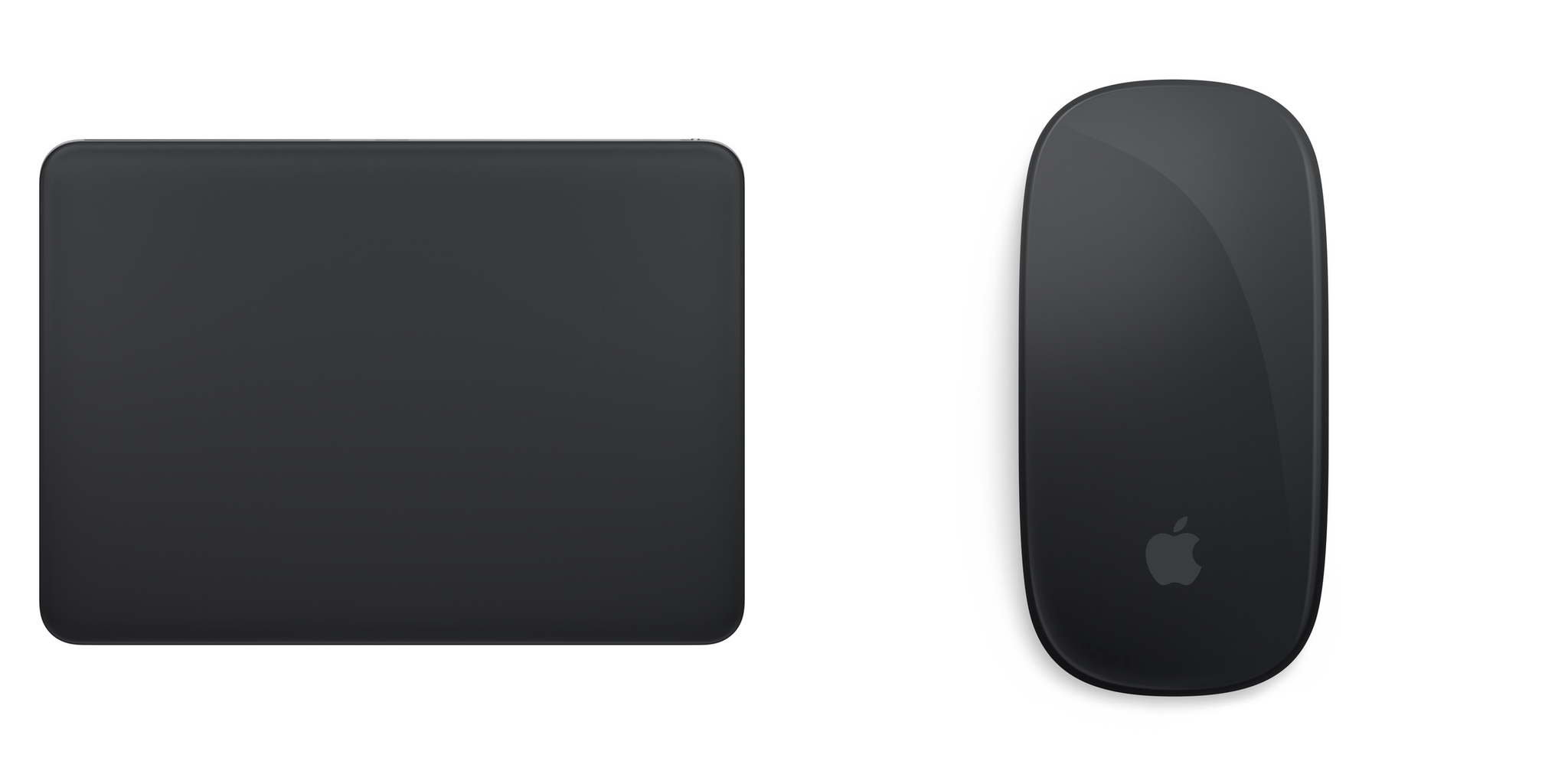

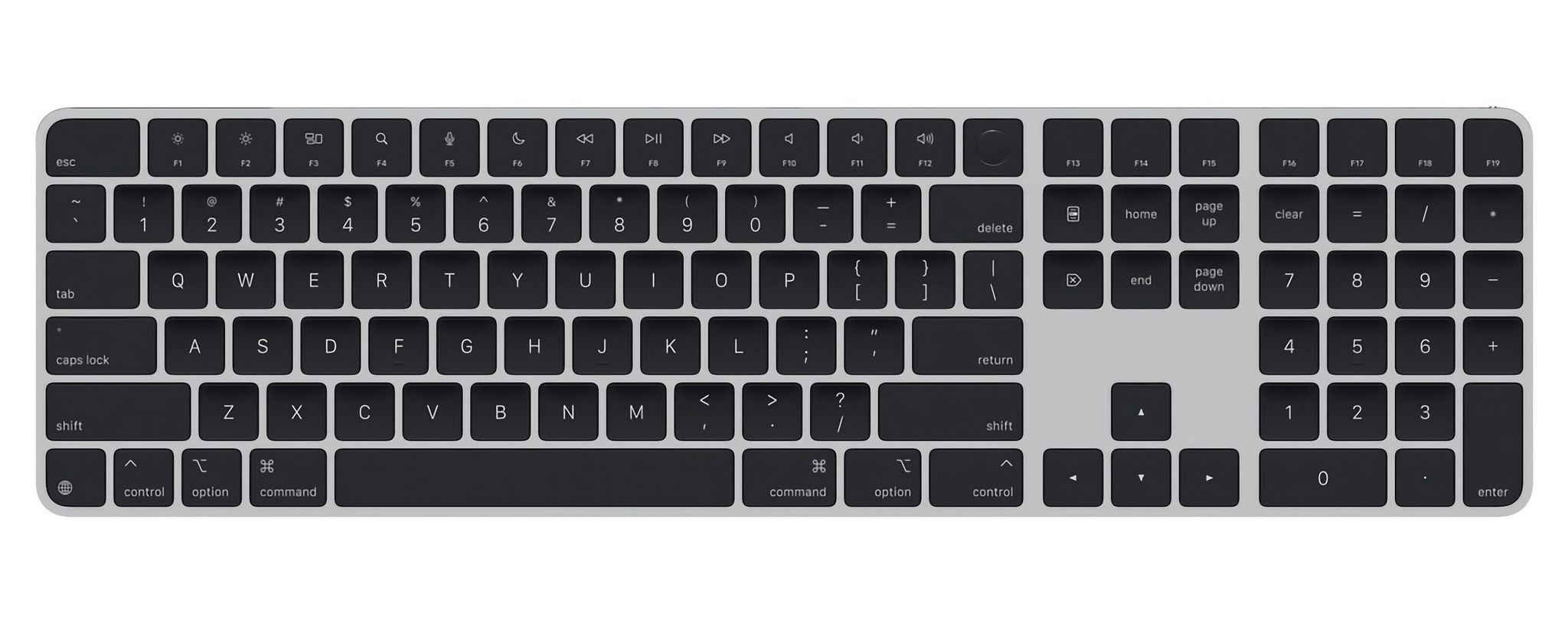

Apple Releases Magic Keyboard, Trackpad, and Mouse with USB-C

Today, Apple finally released USB-C versions of the Magic Keyboard, Magic Mouse, and Magic Trackpad in white and black. The accessories don’t appear to have changed substantially from the models they replace, except for the fact that they can be connected to a Mac and charged using a standard USB-C cable instead of a Lightning cable.

That’s a big win and a change I’ve been waiting for, but there appears to be one big problem with the peripheral update: as of publication, there no longer seems to be a Magic Keyboard available without a numeric keypad. I hope that changes, because I greatly prefer the more compact version.

The new accessories are available to order now from Apple’s Online Store. The Magic Keyboard starts at $179, the Magic Mouse starts at $79, and the Magic Trackpad starts at $129, with a $20 markup to get each accessory in black.

Update: The smaller Magic Keyboard is now available with a USB-C connector for $149.

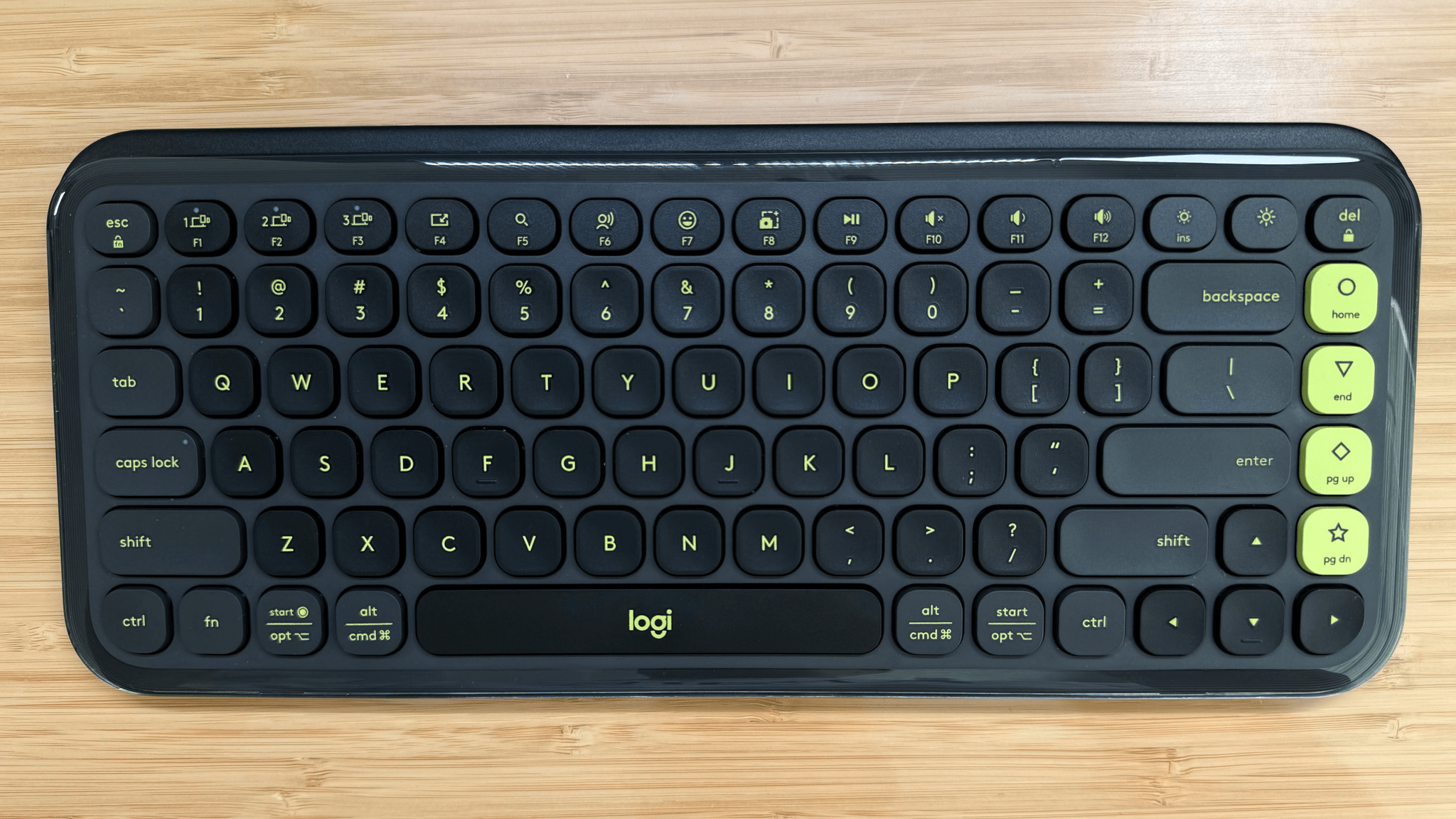

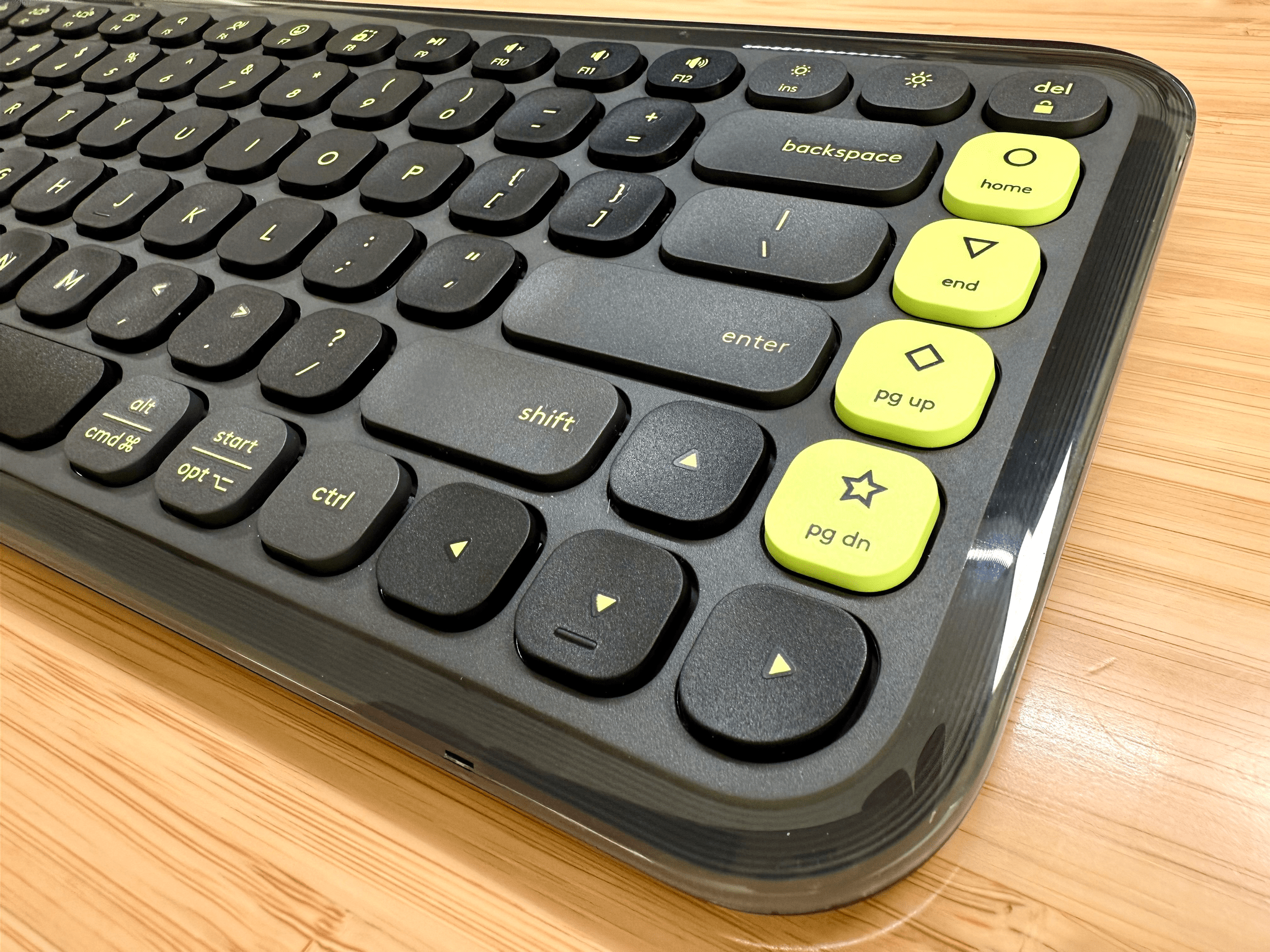

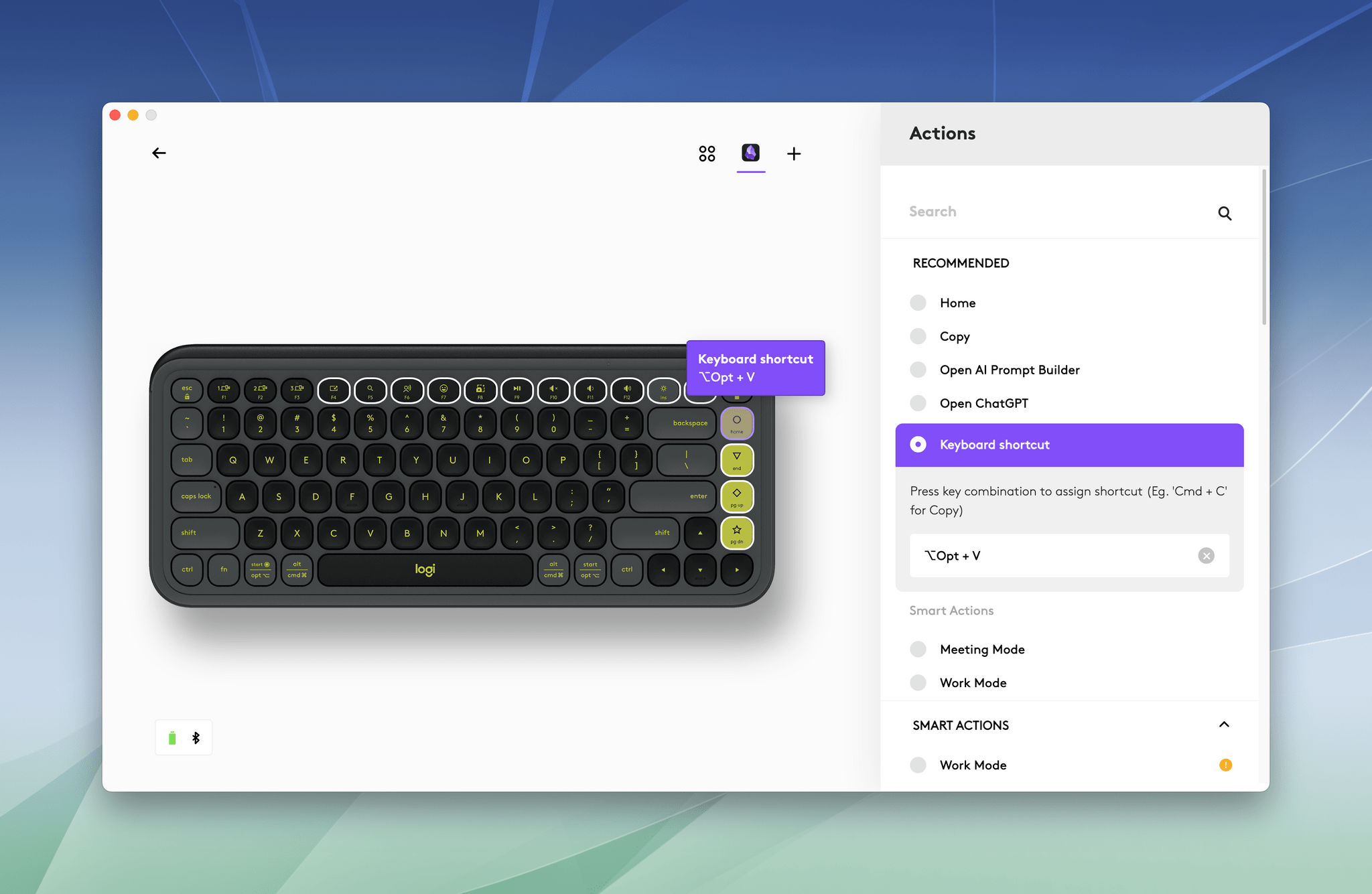

POP Icon Keys: Logitech Brings Automation to a Budget-Friendly Keyboard

A couple of weeks ago, I wrote about and showed off Logitech’s MX Creative Console, a two-piece device made up of a keypad and dialpad that takes the Elgato Stream Deck head-on. Well, today, Logitech is back with a slightly different approach in the form of its POP Icon Keys keyboard, which borrows some tricks from the Creative Console.

The $49.99 keyboard, shipping later this month, is solidly built and low-profile. It weighs 530g and has four big rubber pads on the bottom corners, giving it a sturdy, stable feel on my desk. The keys use scissor switches and feature aggressively rounded corners. They’re quiet, with more throw and resistance than an Apple Magic Keyboard, but are easy to adapt to if you’re used to Apple’s keyboards. I particularly like the texture of the keys, which have a nice feel and don’t show fingerprints, although that could be partially due to the fact that I’ve been using a worn-down Magic Keyboard.

The body of the keyboard is made of a similar plastic, and the keys are surrounded by a strip of glossy, transparent plastic that adds a little flair to the entire package. The color options available for the POP Icon Keys are fun, too. I’ve been testing a black keyboard with neon yellow accents for about a week, and I like it a lot, but there are other color combinations available, including pink, orange and white, and a purplish-blue color scheme. Also, the POP Icon Keys runs on two AAA batteries, which Logitech says can provide 36 months of operation thanks to the keyboard’s onboard power management.

If that’s where the story ended for the POP Icon Keys, I’d recommend it because it’s a very good keyboard for the price. What sets the POP Icon Keys apart, though, is that it goes a step further, adding automation features similar to those found on the more expensive MX Creative Console.

Logitech has designated the Home, End, Page Up, Page Down, F4-F12, and brightness keys as programmable via its Logi Options+ app. Among other things, you can use these keys to control system settings, execute keyboard shortcuts, and run multiple actions combined into macros. The keys’ original functionality remains available, too, if you hold down the function button. The POP Icon Keys also shares the MX Creative Console’s ability to set up app-specific profiles, meaning you can program keys to perform different tasks depending on which app is active.

For example, you could use the Home, End, Page Up, and Page Down buttons to open different sets of apps for work, a special project, or relaxing with a game. Or you could use the function keys to trigger keyboard shortcuts in your favorite apps or Shortcuts automations.
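To make the idea of app-specific profiles concrete, here is a minimal sketch of how that kind of lookup could be modeled. It is not the Logi Options+ API or file format: the profile names, the action strings, and the fallback order (app profile first, then a global profile, then the key’s original function) are all assumptions made for illustration.

```python
# Hypothetical model of programmable keys with app-specific profiles.
# None of these names come from Logi Options+; they are illustrative only.

# Assumed global assignments that apply when no app profile overrides them.
GLOBAL_PROFILE = {
    "Home": "open work apps",
    "End": "open gaming apps",
    "F4": "run Shortcuts automation",
}

# Assumed per-app overrides, keyed by the name of the active app.
APP_PROFILES = {
    "Safari": {"F4": "toggle Reader view"},
    "Photos": {"F4": "export selection"},
}


def resolve_action(key: str, active_app: str, fn_held: bool = False) -> str:
    """Return the action a key press would trigger in this sketch."""
    original = f"original {key} function"
    # Holding the function button restores the key's original behavior.
    if fn_held:
        return original
    # An app-specific assignment wins over the global one.
    app_profile = APP_PROFILES.get(active_app, {})
    if key in app_profile:
        return app_profile[key]
    # Keys with no assignment at all keep their original behavior.
    return GLOBAL_PROFILE.get(key, original)


if __name__ == "__main__":
    print(resolve_action("F4", "Safari"))
    print(resolve_action("F4", "Notes"))
    print(resolve_action("F4", "Safari", fn_held=True))
```

In this sketch, the same F4 press does something different in Safari than in Notes, and holding the function button always falls back to the key’s original role, which mirrors the behavior described above.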

There are a couple of things I love about this functionality. First, the flexibility is fantastic, especially since you can access the programmable keys without taking your hands off the keyboard, which is an advantage over the MX Creative Console. Second, for just $50, the POP Icon Keys is a great entry point into the world of push-button automation. If it turns out that keyboard-driven automation isn’t your thing, you still have an excellent keyboard, but if it is, you can go a long way with the POP Icon Keys’ options before you graduate to the MX Creative Console or another similar device.

All in all, I like the POP Icon Keys a lot. It’s nicely built and a great way to get started with keyboard automation or supplement other automation workflows you already use. The device is available directly from Logitech and Amazon.

First Look: Logitech’s MX Creative Console Is Poised to Compete with Elgato’s Stream Deck Lineup

Today, Logitech revealed the MX Creative Console, the company’s first product that takes advantage of technology from Loupedeck, a company it acquired in July 2023.

I’ve been a user of Loupedeck products since 2019. When I heard about the acquisition last summer, I was intrigued. Loupedeck positioned itself as a premium accessory for creatives. The company’s early products were dedicated keyboard-like accessories for apps like Adobe Lightroom Classic. With the Loupedeck Live and, later, the Live S, Loupedeck’s focus expanded to encompass the needs of streamers and automation more generally.

Suddenly, Loupedeck was competing head-to-head with Elgato and its line of Stream Deck peripherals. I’ve always preferred Loupedeck’s more premium hardware to the Stream Deck, but that came at a higher cost, which I expect made it hard to compete.

Fast forward to today, and the first Logitech product featuring Loupedeck’s know-how has been announced: the MX Creative Console. It’s a new direction for the hardware, coupled with familiar software. I’ve had Logitech’s new device for a couple of weeks, and I like it a lot.

The MX Creative Console is first and foremost built for Adobe users. That’s clear from the three-month free trial to Creative Cloud that comes with the $199.99 device. Logitech has not only partnered with Adobe for the free trial, but it has worked with Adobe to create a series of plugins specifically for Adobe’s most popular apps, although plugins for other apps are available, too.

Apple’s May 2024 Let Loose Event: By the Numbers

Today’s Let Loose online Apple event was packed with facts, figures, and statistics throughout the presentation and elsewhere. We’ve pulled together the highlights.

iPad Pro

- The new iPad Pro’s GPU is 10x faster than the original model and 4x faster than the M2 iPad Pro

- The M4 chip is 50% faster than the M2

- The M4’s 16-core Neural Engine is 60x faster than the original Neural Engine

- The M4 uses a 2nd generation 3nm process

- The M4 features 28 billion transistors

- Apple has improved the thermal performance of the iPad Pro by 20% compared to the previous model

- The Neural Engine can handle 38 trillion operations per second

- The unified memory bandwidth is 120GB/s

- The iPad Pro display supports 1000 nits of brightness for SDR and HDR content and 1600 nits peak brightness for HDR

- Storage capacities range from 256GB to 2TB

- The Wi-Fi version of the 11” iPad Pro is 0.98 pounds (444 grams), and the 13” model is 1.28 pounds (579 grams). Adding cellular adds 2 grams to the 11” model and 3 grams to the 13” version.

- The 256 and 512GB models have 8GB of RAM, while the 1TB and 2TB models have 16GB of RAM

- The new models support Bluetooth 5.3

- The 11” iPad Pro is 5.3mm thick, and the 13” model is 5.1mm thick

- It’s possible to spend $3,077 on a fully-spec’d 13” iPad Pro with Apple Pencil Pro and Magic Keyboard for iPad
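As a quick sanity check on that last figure, the arithmetic can be worked backward using the accessory prices quoted elsewhere in this coverage ($129 for the Apple Pencil Pro and $349 for the 13” Magic Keyboard). The implied iPad price below is derived by subtraction and is not a number stated in the list above, so treat it as an inference.

```python
# Prices in US dollars, taken from the figures quoted in this coverage.
TOTAL_FULLY_SPECCED = 3077  # 13" iPad Pro + Apple Pencil Pro + Magic Keyboard
APPLE_PENCIL_PRO = 129
MAGIC_KEYBOARD_13_INCH = 349

# Combined cost of the two accessories.
accessories = APPLE_PENCIL_PRO + MAGIC_KEYBOARD_13_INCH

# Whatever remains is the implied price of the iPad Pro itself (an inference).
implied_ipad_price = TOTAL_FULLY_SPECCED - accessories

print(f"Accessories: ${accessories:,}")
print(f"Implied iPad Pro price: ${implied_ipad_price:,}")
```

In other words, the two accessories account for $478 of the total, leaving $2,599 for the tablet.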

iPad Air

- There’s a new 13” model

- The M2 chip in the new Air is 50% faster than the M1 and 3x faster than the iPad Air with the A12 Bionic chip

- The iPad Air can be configured with up to 1TB of storage

- The front and back cameras both have 12MP sensors

- There are 4 colors available, 2 of which are new

- The 11” Air is 1.02 pounds, and the 13” model is 1.36 pounds, both of which are heavier than their iPad Pro counterparts

- Both models are 6.1mm thick

- The 11” iPad Air maxes out at 500 nits of brightness and the 13” model at 600 nits for a 100-nit difference

Accessories and Other

- The Magic Keyboard for iPad Pro has a 14-key function row

- The price of the existing 10th generation iPad was reduced to $349

- Apple paid 0 tributes to Warren Buffett’s Paper Wizard

You can follow all of our May 2024 Apple event coverage through our May 2024 Apple event hub or subscribe to the dedicated May 2024 Apple event RSS feed.

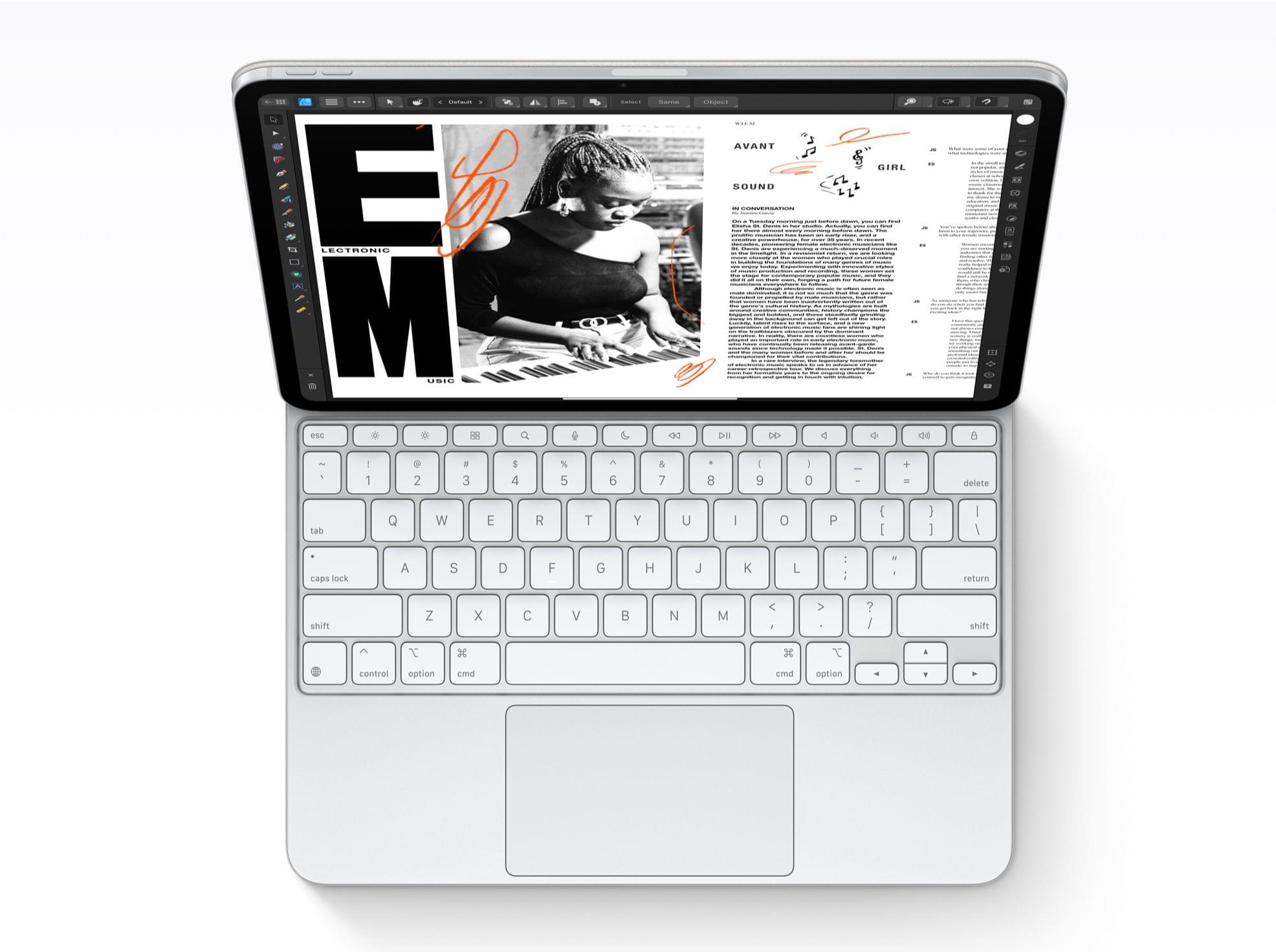

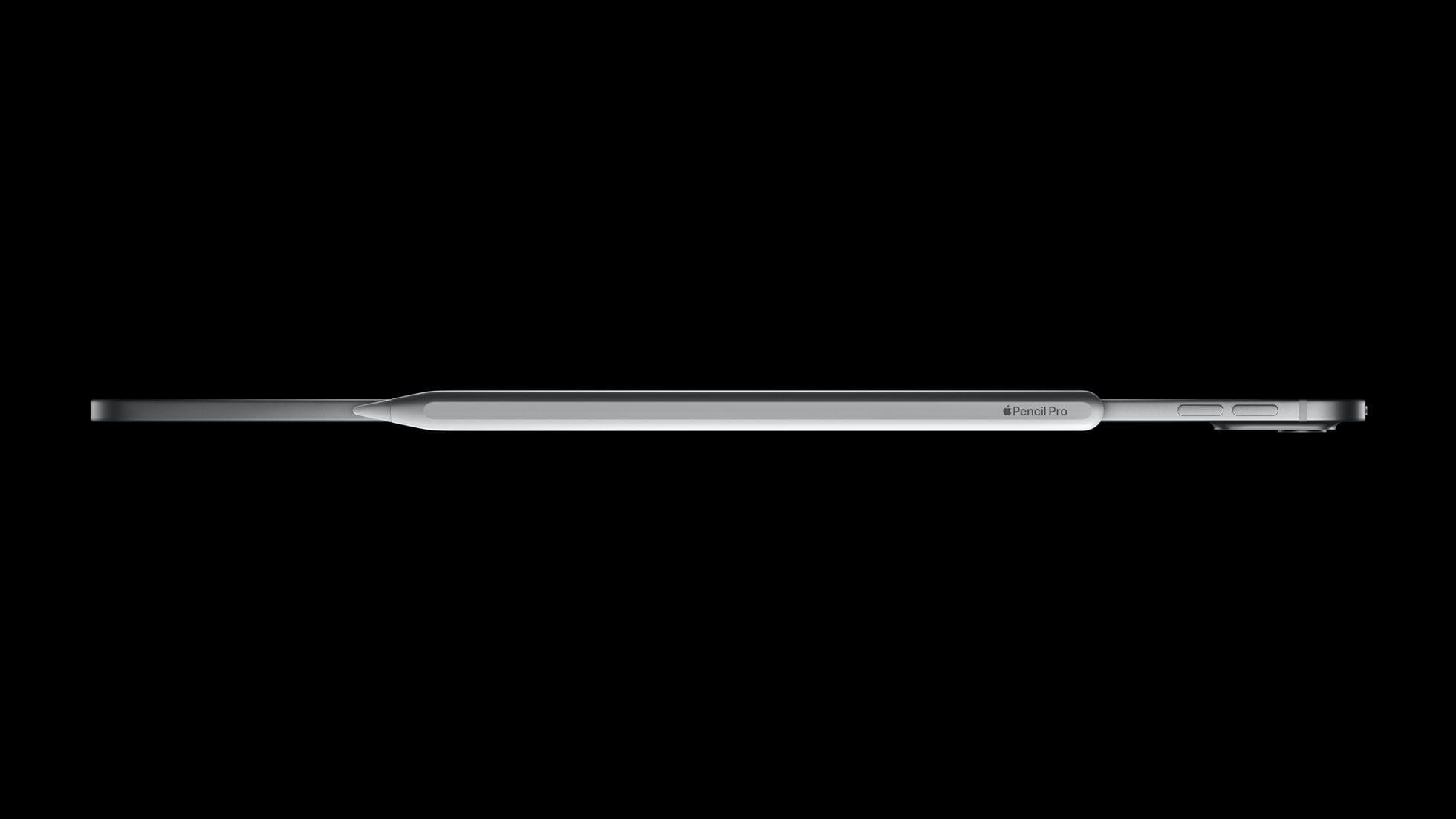

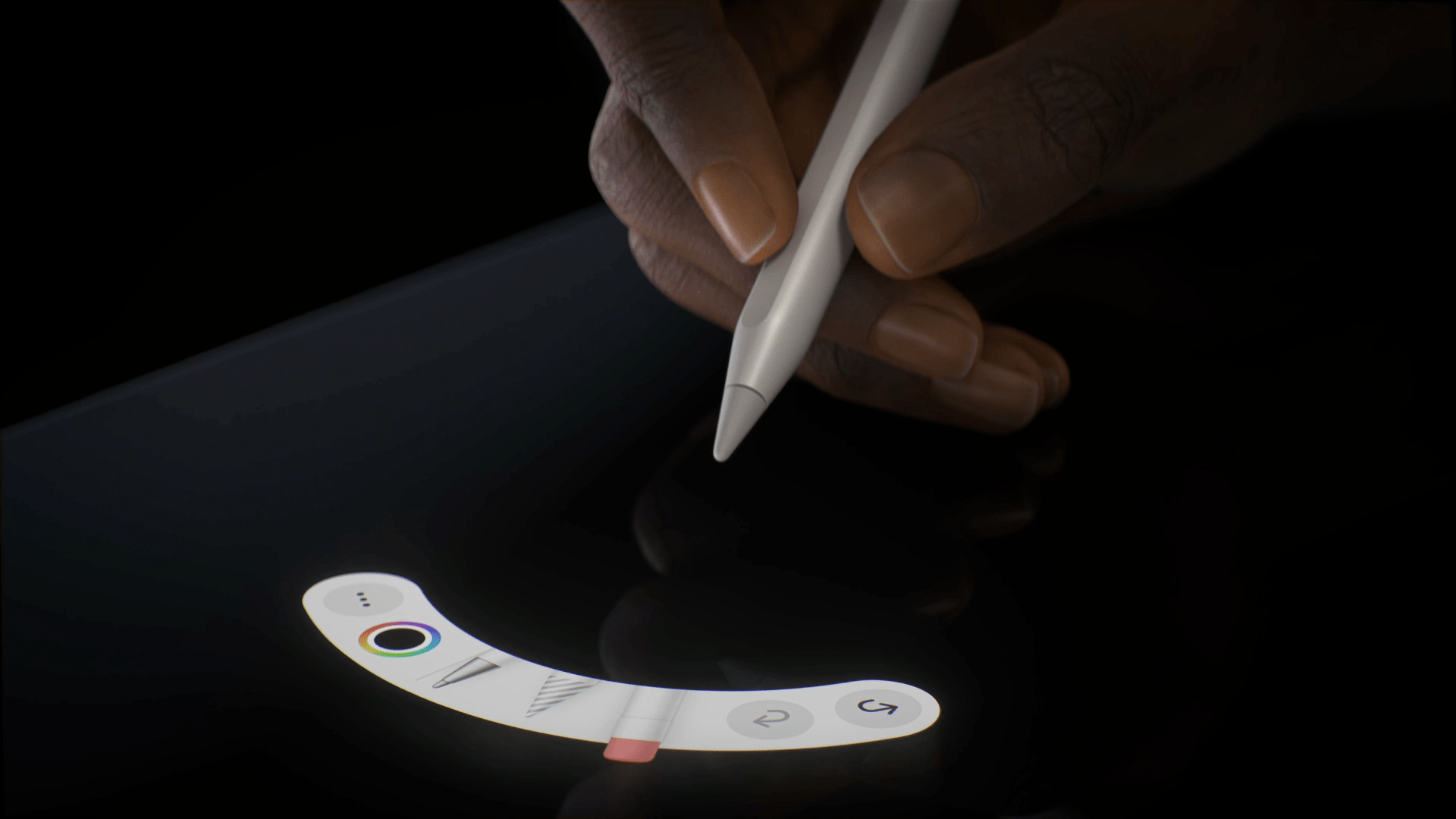

Apple Reveals New Keyboards and the Apple Pencil Pro

Along with the iPad Pros, Apple today introduced new keyboards and an Apple Pencil Pro.

The new Magic Keyboard for iPad Pro, at long last, has a function row of half-height keys similar to what you’d find on a Mac. The trackpad is bigger, the palm rest is aluminum, and the whole thing is thinner and lighter than before, which are all great additions. The keyboards come in black (with a black aluminum palm rest) and white (with a silver palm rest). What hasn’t changed is the cantilevered design of the keyboard, which some had predicted would be replaced by a more laptop-like hinge.

There’s a new Smart Folio for iPad Pro, too. According to Apple’s press release:

The new Smart Folio for iPad Pro attaches magnetically and now supports multiple viewing angles for greater flexibility. Available in black, white, and denim, it complements the colors of the new iPad Pro.

The new Apple Pencil Pro sounds as though it’s a big step forward. There’s a sensor in the device’s barrel so that, with a squeeze, users can summon a tool palette on the iPad Pro or iPad Air. The new Pencil also incorporates haptics, allowing it to provide a bit of feedback when a user squeezes the Pencil. There’s even a built-in gyroscope that senses when the barrel is rotated, which can be used to rotate brushes and onscreen objects. The rotation functionality is incorporated into the hover feature, allowing users to see the rotation of a brush before placing the Pencil on the screen, for example. The new model Pencil also supports Find My. As before, the new Apple Pencil Pro connects to the iPad Pro magnetically for pairing, charging, and storing.

I like the look of the Magic Keyboard for iPad Pro and Apple Pencil Pro a lot. The previous Magic Keyboard always felt too cramped, and it was frustrating to not have function keys. And, although I’m not a heavy Apple Pencil user, I’m excited to see how developers implement the new Pro features.

The new Smart Folio comes in black, white, and denim and is $79 for the 11” iPads and $99 for the 13” iPads. The new Magic Keyboard comes in white and black and is $299 for the 11” iPad Pro and $349 for the 13” iPad Pro, with $20 off those prices for education customers. The Apple Pencil Pro is $129, or $119 for education customers.


Logitech’s Casa Pop-Up Desk Elevates Your MacBook for More Comfortable Computing

When I’m sitting at home in my office, the ergonomics are perfect. I have a comfortable chair with plenty of back support, my keyboard is at the right height, and my Studio Display is at eye level. The trouble is, that’s not the only place I work or want to work. As a result, I spend time almost daily using a laptop in less-than-ideal conditions. That’s why I was eager to try Logitech’s Casa Pop-Up Desk, which debuted in the UK, Australia, and New Zealand last summer and is now available in North America, too.

Logitech sent me the Casa to test, and I’ve been using it on and off throughout the past 10 days as I work at home, away from my desk, and in various other locations. No portable desktop setup is going to rival the ergonomics of my home office, but despite a few downsides, I’ve been impressed with the Casa. By making it more comfortable to use my laptop anywhere, the Casa has enabled me to get away from my desk more often, which has been wonderful as the weather begins to warm up.

Brydge Ceases Operations, Leaving Employees in the Lurch

Chance Miller published an excellent exposé on the downfall of Brydge, an iPad, Mac, and Microsoft Surface accessory maker. The company got its start with a Kickstarter campaign in 2012, and for a time, its keyboard accessories were a popular choice among iPad users, including me.

However, as Chance explains, Brydge’s fortunes took a turn for the worse as it was forced to compete head-on with Apple’s Magic Keyboard, later spiraling out of control as its cash flow ran out:

Brydge, a once thriving startup making popular keyboard accessories for iPad, Mac, and Microsoft Surface products, is ceasing operations. According to nearly a dozen former Brydge employees who spoke to 9to5Mac, Brydge has gone through multiple rounds of layoffs within the past year after at least two failed acquisitions.

As it stands today, Brydge employees have not been paid salaries since January. Customers who pre-ordered the company’s most recent product have been left in the dark since then as well. Its website went completely offline earlier this year, and its social media accounts have been silent since then as well.

From what former employees told 9to5Mac, it appears that a number of factors contributed to its downfall, but the saddest part of the story is how Brydge treated its employees, keeping them in the dark and, in many cases, unpaid to this day.

Apple Releases New Mac Keyboards and Pointing Devices

Apple has updated its online store with new accessories that debuted with the M1 iMac. The updated accessories were spotted by Rene Ritchie, who tweeted about them:

https://twitter.com/reneritchie/status/1422531729894613017?s=21

Among the items listed, which each come with a woven USB-C to Lightning cable and come in white and silver only, are:

- Magic Keyboard ($99). The Magic Keyboard features rounded corners and some changes to its keys, including a dedicated Globe/Fn key and Spotlight, Dictation, and Do Not Disturb functionality mapped to the F4 - F6 keys.

- Magic Keyboard with Touch ID ($149). Along with the design and key changes of the Magic Keyboard, this model includes Touch ID, which works with M1 Macs only.

- Magic Keyboard with Touch ID and Numeric Keypad ($179)

- Magic Trackpad ($129). The corners of the new Magic Trackpad are more rounded than before, but it’s functionally the same as prior models.

- Magic Mouse ($79). The Magic Mouse is listed as new, too, although apart from the woven USB-C to Lightning cable in the box, there don’t appear to be any other differences between this model and the prior model.

I’ve been using the Magic Keyboard with Touch ID, Magic Trackpad, and Magic Mouse for a couple of months with an M1 iMac. Based on my experience, the trackpad and mouse haven’t changed enough to warrant purchasing one unless you need one anyway. However, if you’ve got an M1 Mac mini or an M1 laptop that you run in clamshell mode, the Magic Keyboard with Touch ID is a nice addition to your setup. Having Touch ID always available is fantastic, and I’ve grown used to using the Do Not Disturb button along with the Globe + Q keyboard shortcut for Quick Note, the new Notes feature coming to macOS Monterey this fall. The same shortcut works on an iPad running the iPadOS 15 beta with a Magic Keyboard attached.