Paul Lamkin, writing at The Ambient:

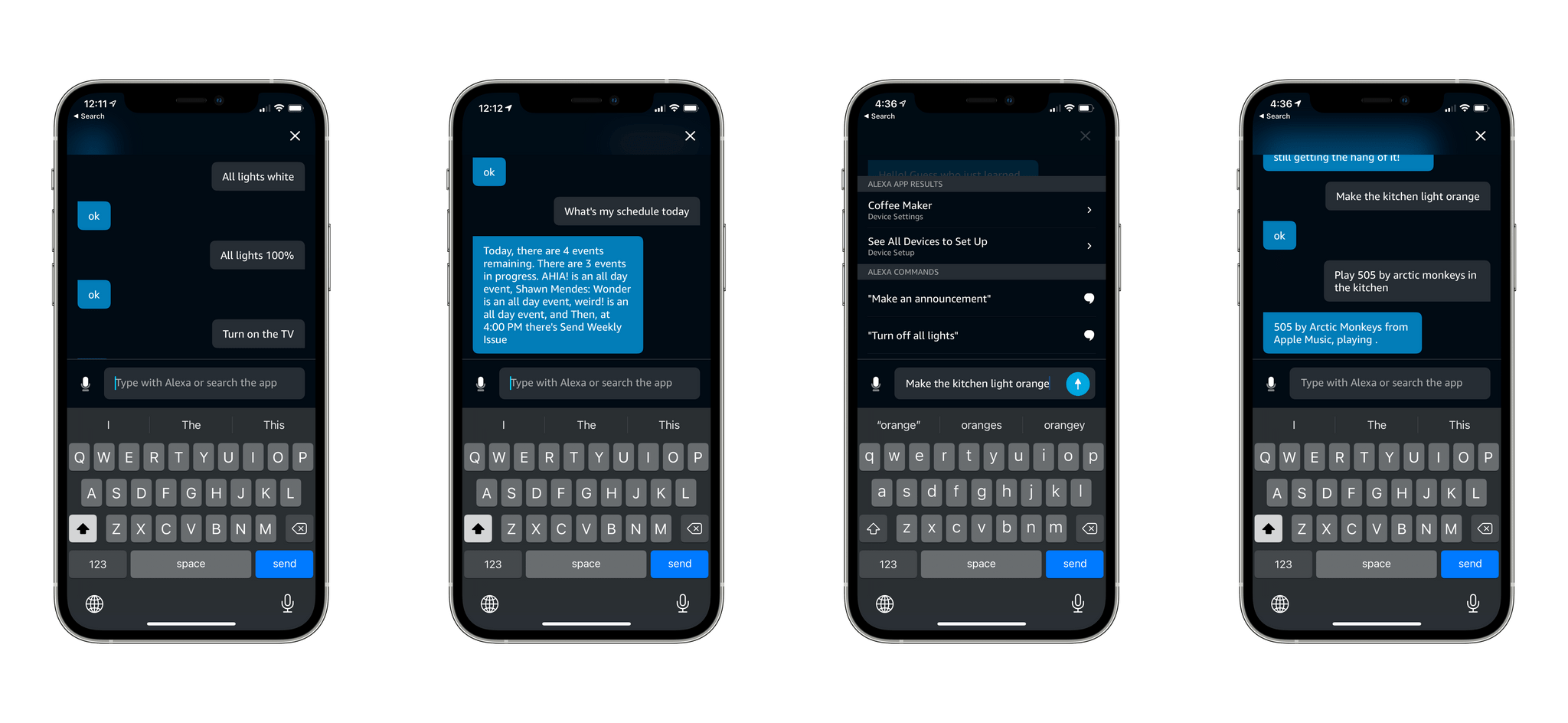

Amazon is rolling out a new feature within its smart home app. Type with Alexa allows you to send messages to your digital assistant using a keyboard and text messages, rather than using your voice.

The new feature, which is rolling out as part of a public preview - _The Ambient_ contributor Jennifer Pattison Tuohy noticed it pop up on her phone - means you can send discreet messages to Alexa for occasions when your voice might not be the best option; think cinemas, on the train, at a funeral and so on.

Sure, you could already search within the app for Alexa Routines and smart home device controls, but the new keyboard-based input also allows you to ask queries such as diary updates, calculations, news headlines and the like - as well as acting as a pretty nifty search tool for smart home routines and devices within your Alexa ecosystem.

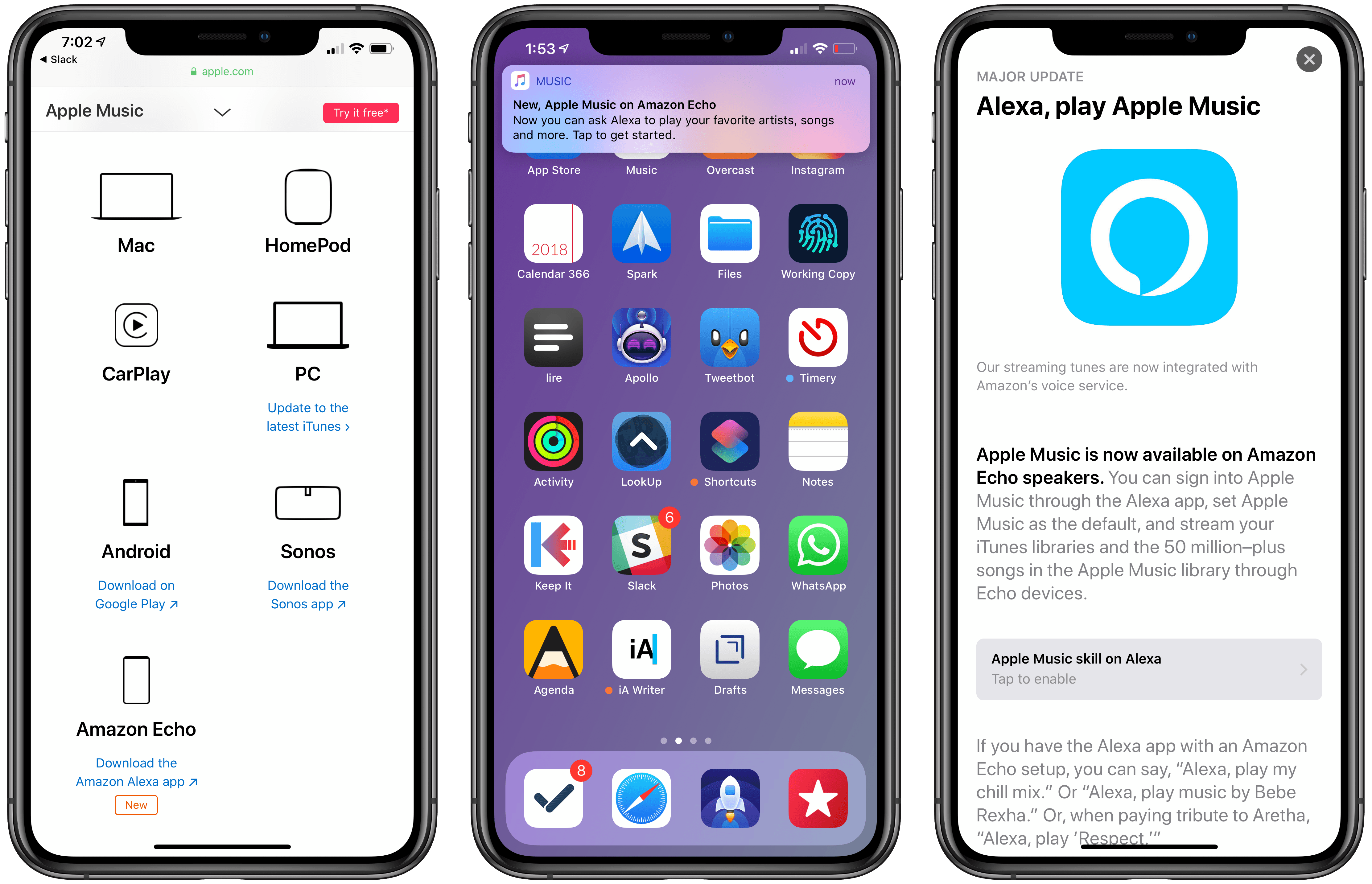

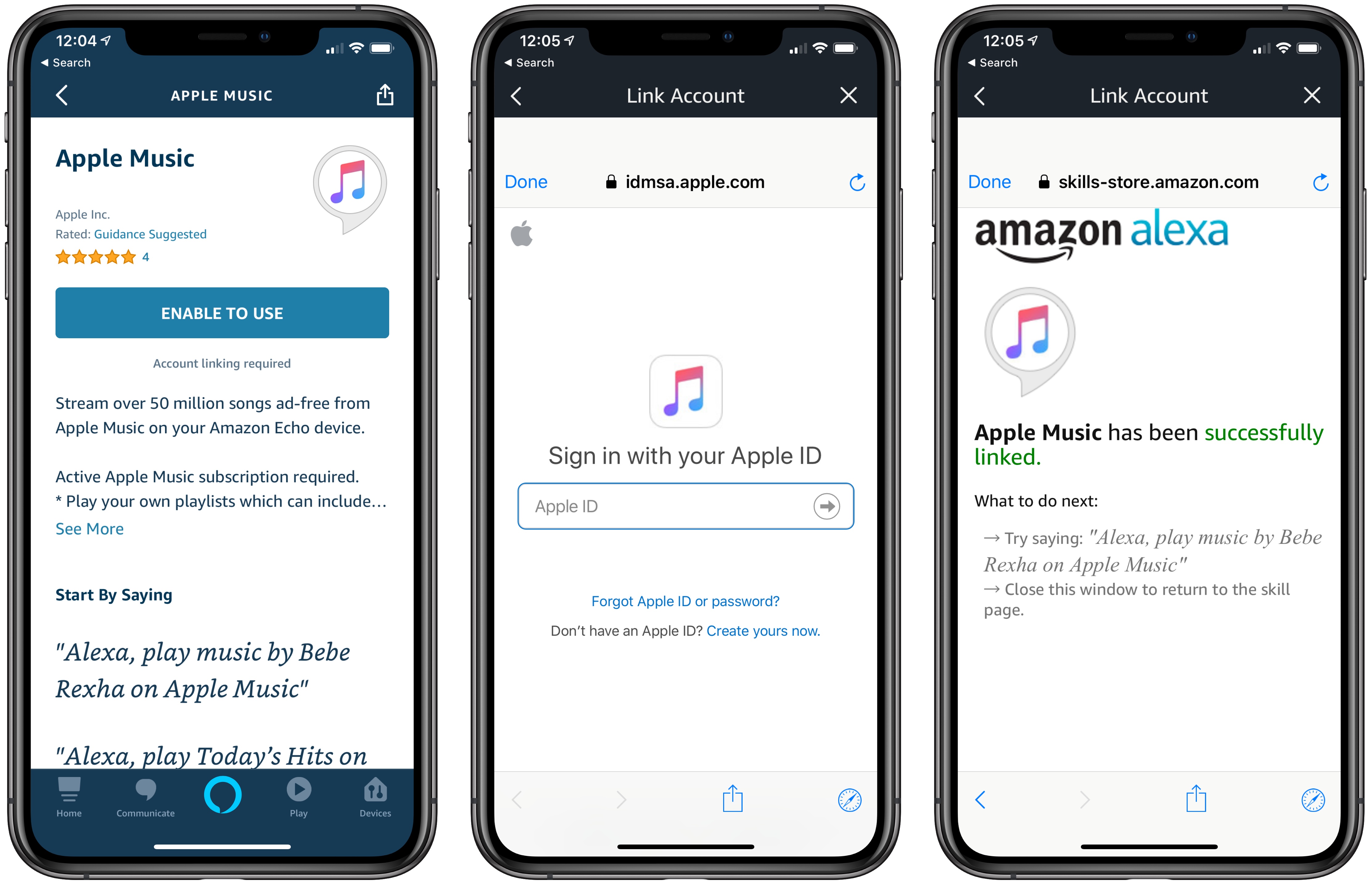

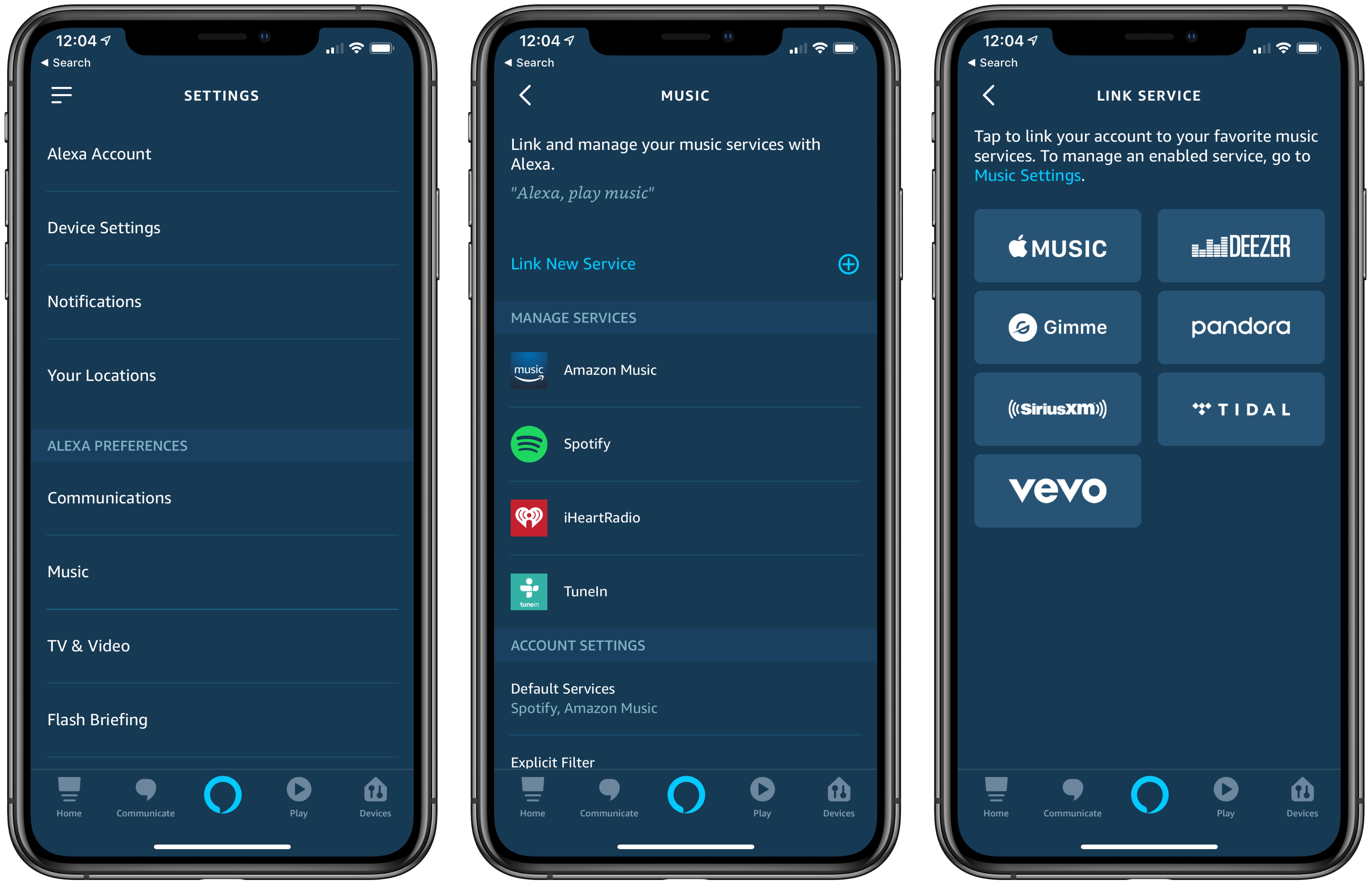

I also noticed the public preview of this feature in the Alexa app on my iPhone, and I’ve been playing around with it since last night. My first impression is that ‘Type with Alexa’ is what I’ve long wanted from Siri: having a silent conversation with a smart assistant that can control smart home accessories, interact with web services, and play music or podcasts is terrific. Anything you can ask Alexa with normal voice commands can also be typed now, so sending a message such as “play 305 by Shawn Mendes in the kitchen” from your iPhone will result in Alexa playing that song via an Echo speaker in the kitchen. (I’m aware that Google Assistant has offered a typing mode for a long time; however, I don’t use Google’s smart home products.)

I could achieve something similar with Siri by enabling iOS’ ‘Type to Siri’ Accessibility setting. The problem with that option, as I’ve mentioned several times before, is that it replaces Siri’s voice interactions: if you enable ‘Type to Siri’, you’ll no longer be able to issue voice commands and the keyboard will always be displayed instead. I’m not the first one to ask this, but I’d love the ability to have a separate conversation with Siri in iMessage in a future version of iOS.