I was recently watching a movie with my girlfriend, and it had a great soundtrack. After scrubbing the video back for the third time so I could open Shazam on my iPhone, I remembered that Shazam offers an automatic tagging feature that lets the app listen continuously in the background to recognize songs. Shazam’s auto-tagging isn’t meant to be active all the time, but we were home and my iPhone was charging next to me, so it seemed like the perfect time to try it.

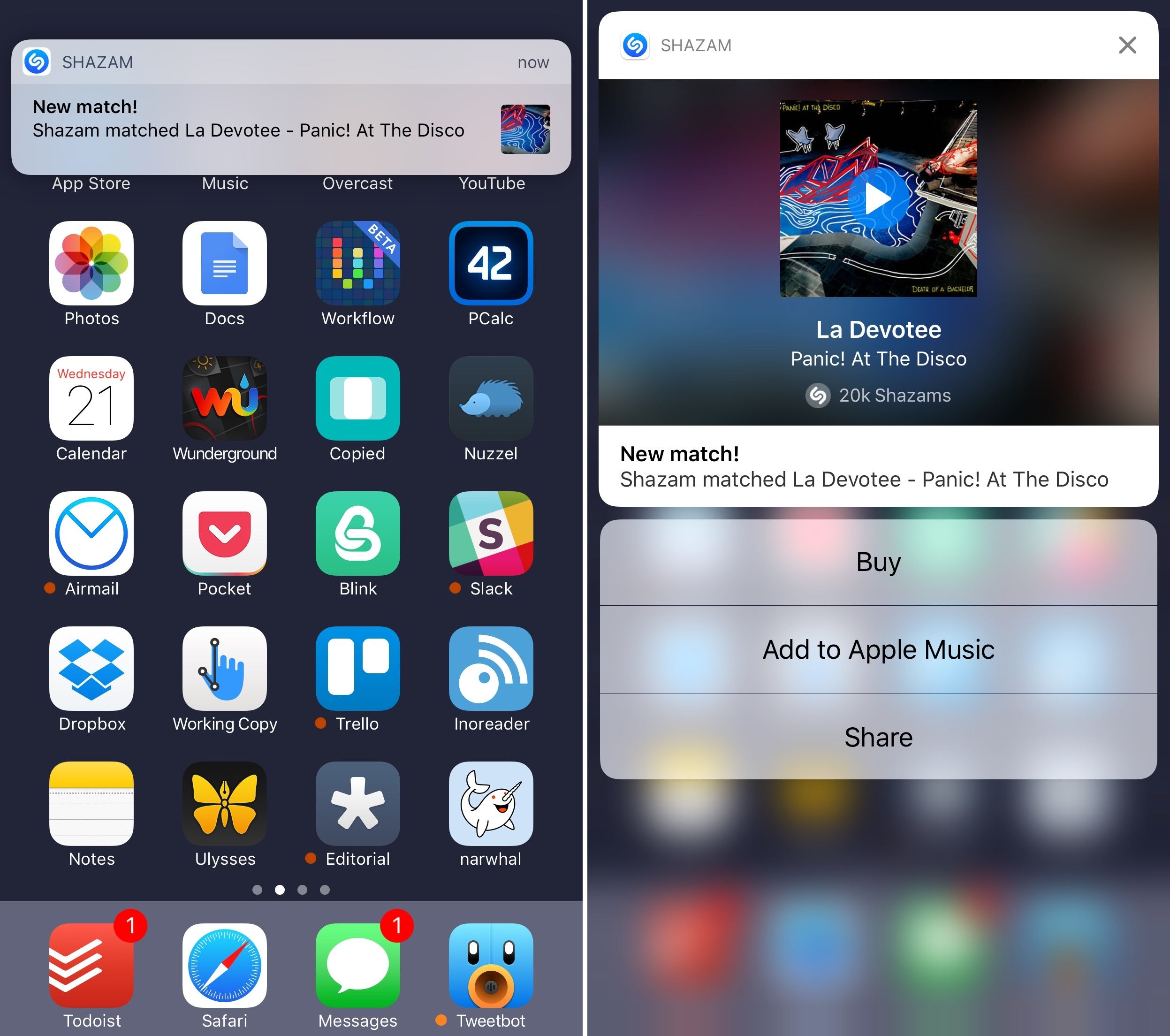

To my surprise, Shazam started sending a notification for each tagged song using iOS 10’s new notification framework. Its implementation is a great example of what developers can achieve with rich notifications: expanding a notification presents a custom view with the song’s title, artist, album artwork, and the total number of Shazams by users worldwide. But that’s not all: you can also tap the artwork to play a preview of the song inside the notification without opening the Shazam app. If you want to act on the notification, there are three quick actions (another change made possible by iOS 10) to buy the song, add it to a playlist on Apple Music, or share it.

Once I realized I could catch up on tagged songs from Notification Center, I left Shazam running and enjoyed the rest of the movie. At the end, I went through my notifications, listened to each audio snippet, and saved a few songs to my Apple Music playlists.

The final result would have been the same in iOS 9, but the experience wouldn’t have been as nice (or as fast) without rich notifications. I’m looking forward to more apps adopting similar notification features in the next few months.