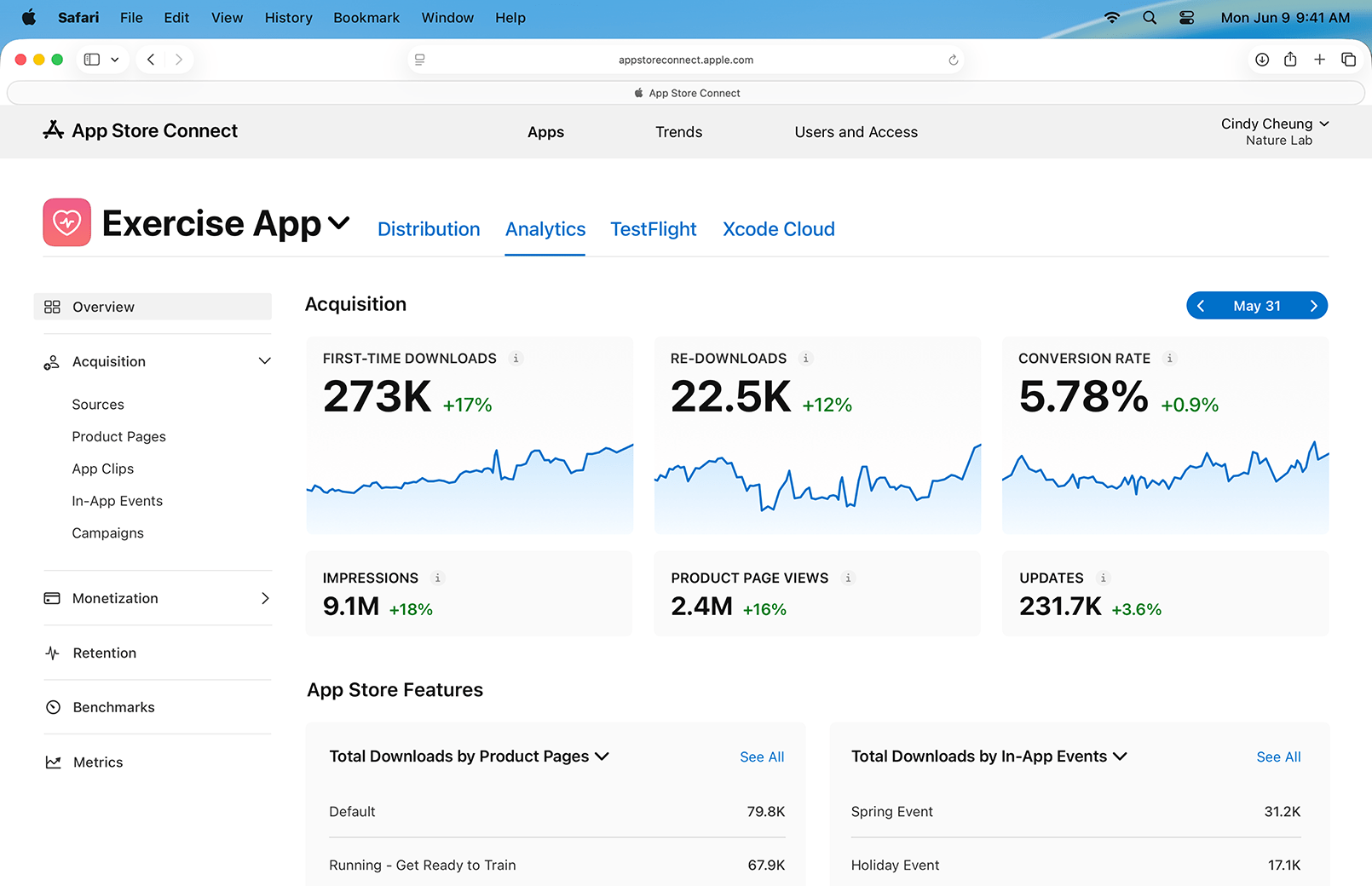

Overnight, Apple rolled out a big update to App Store Connect with new sales and analytics tools for developers. App Store Connect is the online portal developers use to manage everything related to selling their apps, from TestFlight betas to App Store listings to sales data and analytics.

It’s that last piece that was overhauled with this release. In fact, Apple’s post on its developer site says there are over 100 new metrics developers can use to measure the performance of their apps, all of which have been designed in a privacy-first way to protect users.

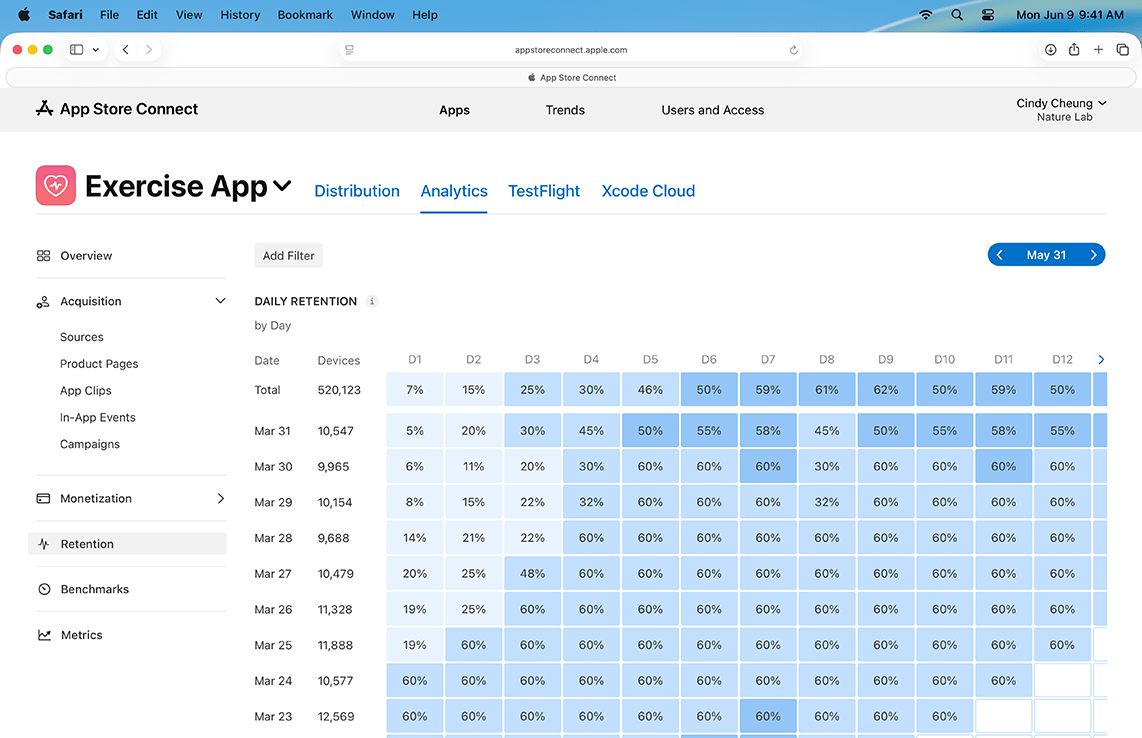

The granularity is impressive. For example, developers can track where their sales are coming from, including search, App Store browsing, web sources, and more. Conversion rates are a big part of the analytics, allowing developers to see how many people have seen their apps on the Store and downloaded them, breaking down first-time downloads and re-downloads. Analytics also tie into App Store features like In-App Events, custom product pages, and developer marketing efforts across a multitude of channels using campaign links. There’s a lot more, including metrics that track app pre-orders, user engagement and retention, and good old-fashioned sales data sliced and diced to allow developers to better understand the sources of their income.

And that’s really just the tip of the iceberg of what has changed in App Store Connect. So if you’re a developer, it’s worth spending some time with your app data and reading the new guide Apple published that covers it all.

Since the changes rolled out, I've seen two concerns expressed online: that there will no longer be a single place to view the aggregate performance of multiple apps, and that the new default reporting period is three months. Those concerns are well founded. The new analytics are organized app by app, and as a banner in App Store Connect notes, the Dashboards in the Trends section and the related reports where that aggregate data was available are being deprecated later this year and next. So, while the per-app data Apple offers is deep, the new tools fall short by not providing a bird's-eye view of a developer's entire app catalog.

For what it’s worth, Apple is aware of the feedback regarding cross-app reporting. Also, the shorter sales reporting periods, such as the past 24 hours and seven days, are still available, but they’re less visible because three months is the new default.

This is a big update to App Store Connect that will take developers time to get used to, but it's also a welcome change that provides meaningful new insights into App Store performance. I expect there will be more areas where the changes fall short of developers' expectations. However, it's also clear to me that Apple has heard the early feedback, so I wouldn't be surprised if adjustments are made in the future. On balance, I think the changes give developers valuable new ways to think about and manage their businesses in an increasingly competitive app landscape.