OpenAI announced GPT-5.5 and GPT-5.5 Pro today, which it says are faster and able to work more autonomously than the company’s previous models. It’s a message that is sure to interest business users, whether their goal is accelerating software development or increasing productivity more generally. The areas where OpenAI says GPT-5.5 and GPT-5.5 Pro excel include:

- writing and debugging code;

- analyzing data;

- conducting web research;

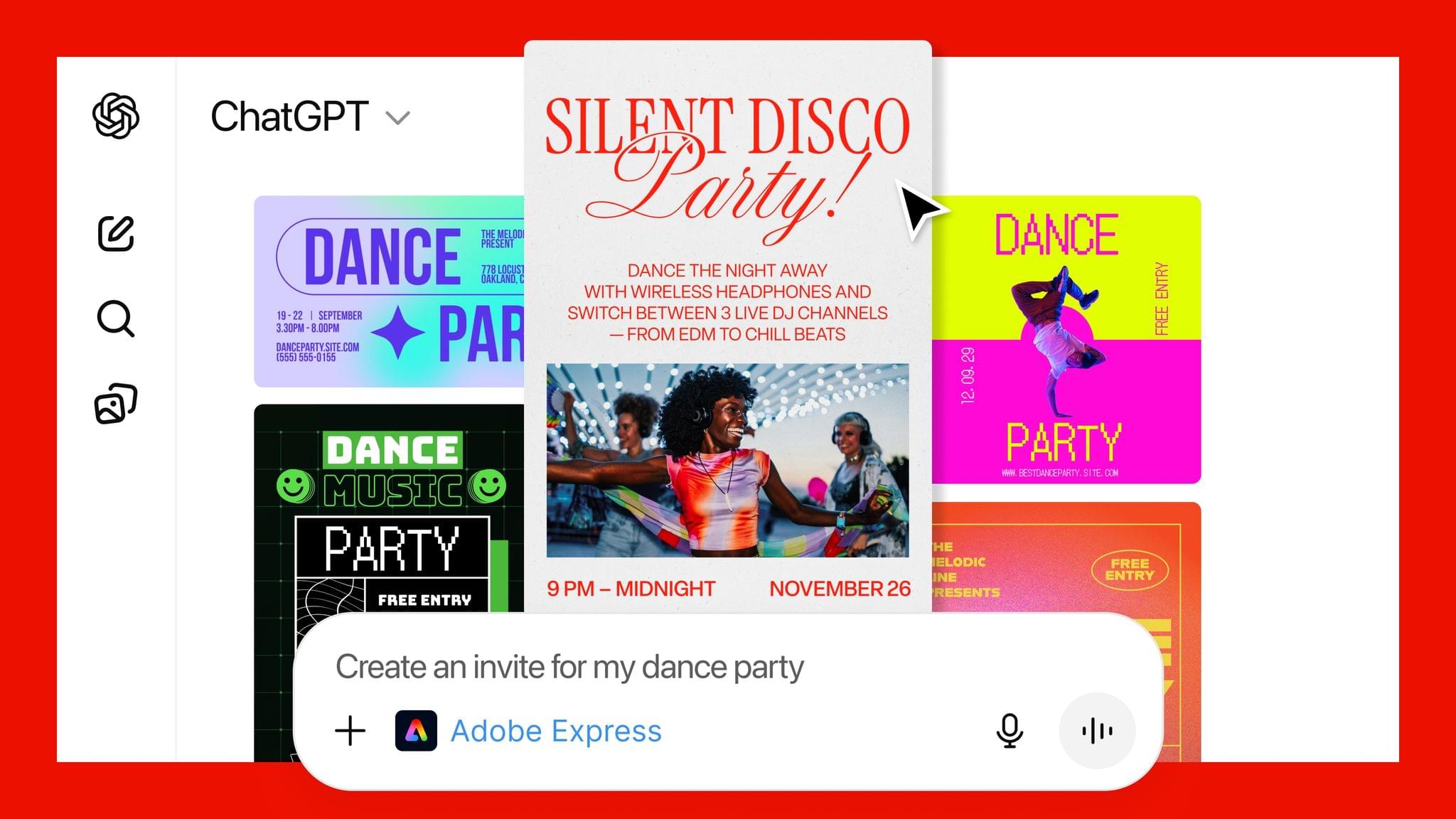

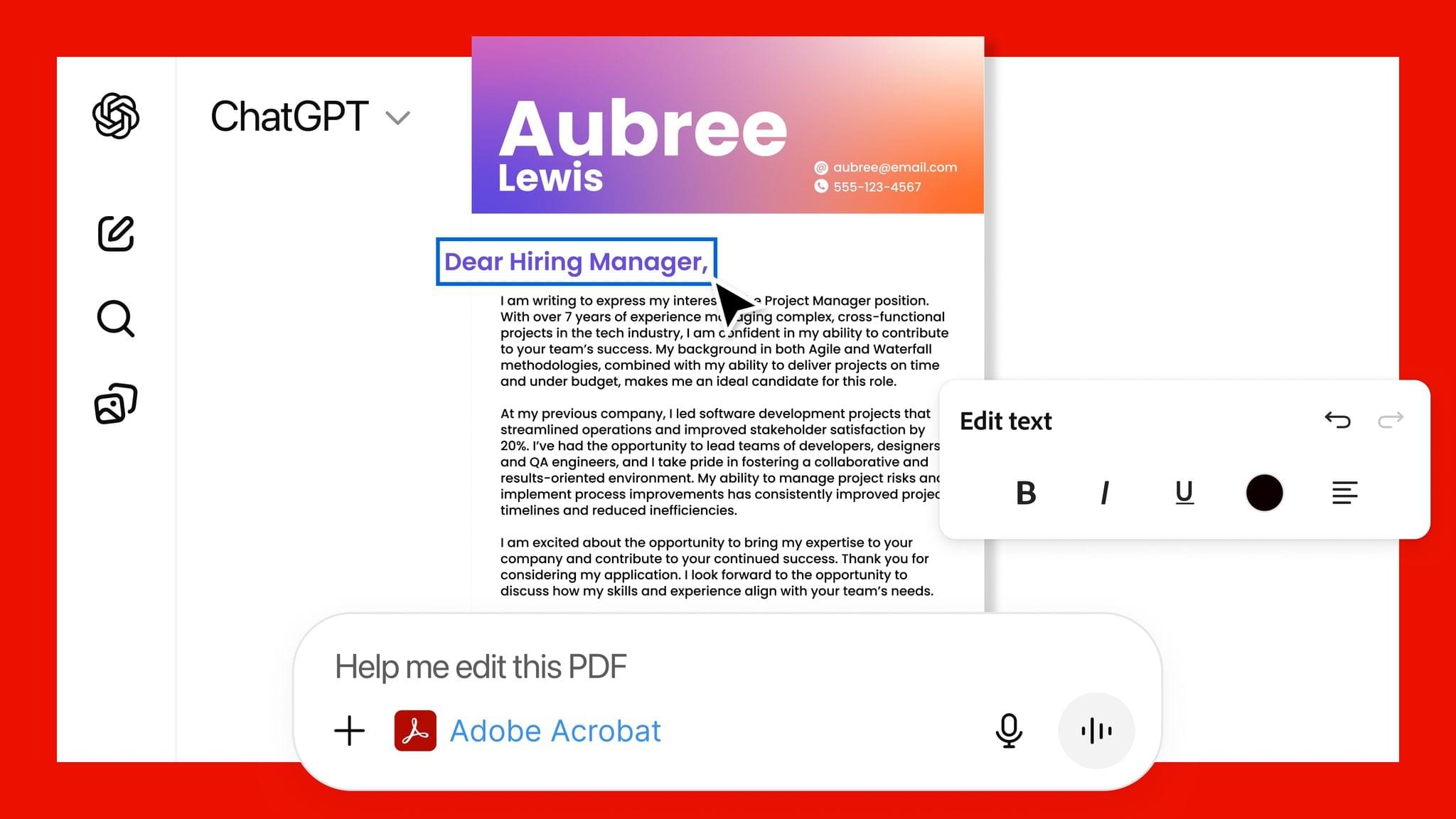

- creating business documents such as spreadsheets and presentations;

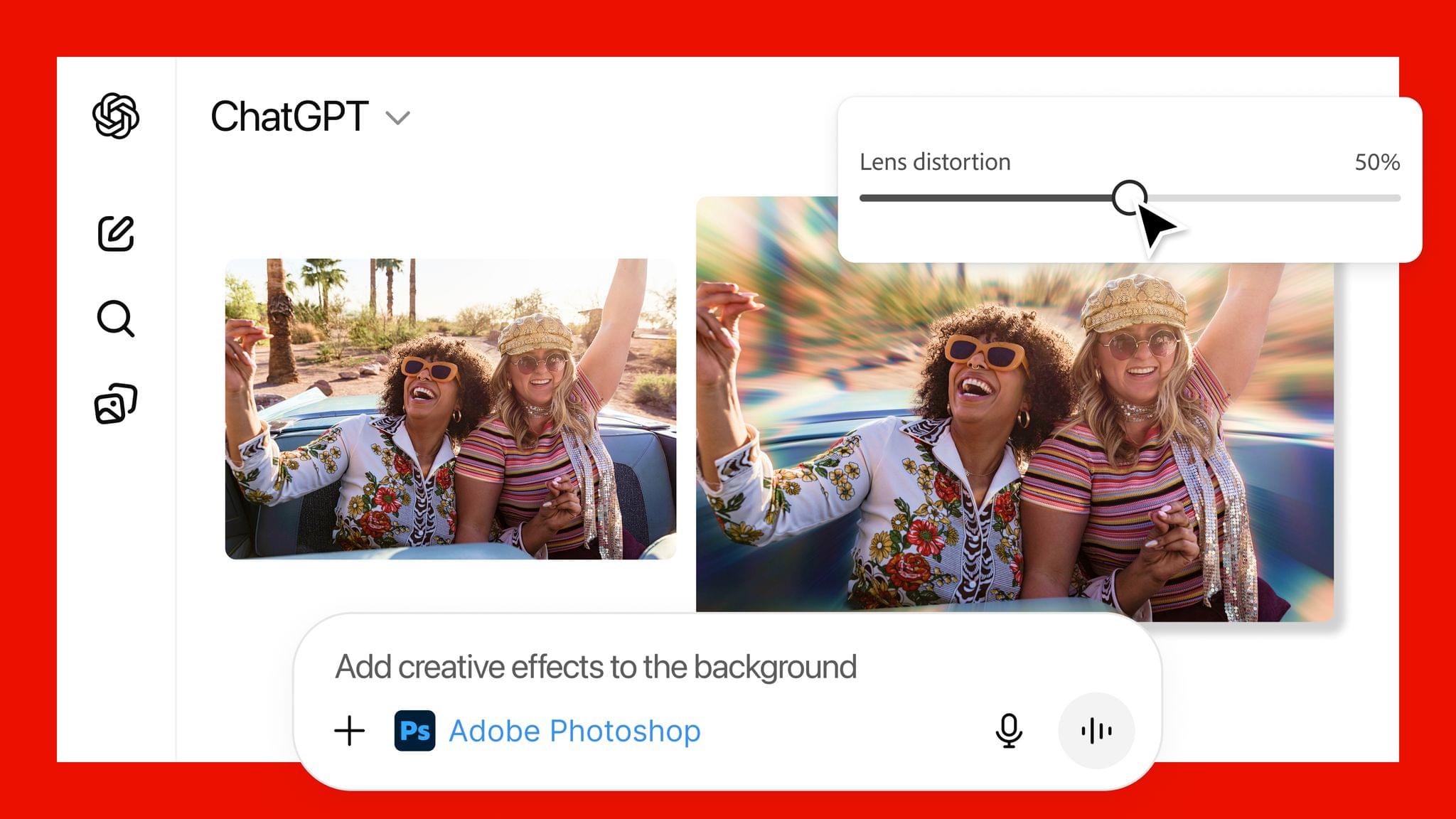

- using apps; and

- juggling multiple tools.

In its press release, OpenAI claims that:

> The gains are especially strong in agentic coding, computer use, knowledge work, and early scientific research—areas where progress depends on reasoning across context and taking action over time. GPT‑5.5 delivers this step up in intelligence without compromising on speed: larger, more capable models are often slower to serve, but GPT‑5.5 matches GPT‑5.4 per-token latency in real-world serving, while performing at a much higher level of intelligence. It also uses significantly fewer tokens to complete the same Codex tasks, making it more efficient as well as more capable.

I haven’t tried either model yet, but early reactions seem to support OpenAI’s claim that GPT-5.5 understands user intent better and requires less precise instructions. The company says it is also better at using the tools at its disposal and at checking its own work. OpenAI says the Pro model takes that up a notch, working faster on more complex tasks, such as programming, research, and document-intensive workflows. Whether the early hype translates into real-world gains that are noticeable in everyday work remains to be seen, but we shouldn’t have long to wait, since GPT-5.5 is rolling out to users now.

GPT-5.5 is available in ChatGPT and Codex to Plus, Pro, Business, and Enterprise subscribers, while GPT-5.5 Pro is limited to Pro, Business, and Enterprise subscribers in ChatGPT. Neither model is available through OpenAI’s API yet, but the company says both will be soon.