Textual Siri

Here’s a good article by Rene Ritchie from June 2012 about a textual interface for Siri:

> If Spotlight could access Siri’s contextually aware response engine, the same great results could be delivered back, using the same great widget system that already has buttons to touch-confirm or cancel, etc.

I completely agree. Spotlight lets you find apps and data to launch on your device; aside from its “assistant” functionality, Siri lets you search for specific information (either on your device or the web). There’s no reason find and search shouldn’t be together. Siri gained app-launching capabilities, but Spotlight still can’t accept Siri-like text input.

The truth is, I think using Siri in public is still awkward. My main use of Siri is adding calendar events or quick alarms when I’m a) cooking or b) driving my car. When I’m working in front of an iPad, I just don’t see the point of using voice input when I have a keyboard and the speech recognition software still fails to recognize moderately complex Italian queries. When I’m waiting for my doctor or in line at the grocery store, I just don’t want to be that guy who pulls out his phone and starts talking with a robotic assistant. Ten years after my first smartphone, I still prefer avoiding phone calls in public because a) other people don’t need to know my business and b) I was taught that talking on the phone in public can be rude. How am I supposed to tell Siri to “read me” my schedule when I have 10 people around me?

I think a textual Siri, capable of accepting written input instead of spoken commands, would provide a great middle ground for those situations when you don’t want to, or can’t, talk in public. Like Rene, I think putting the functionality in Spotlight would be a fine choice; apps like Fantastical have shown that “natural language input” with text can still be a modern, useful addition to our devices.

Text input brings different challenges: how would Siri handle typos? Would it wait until you’ve finished writing a sentence or refresh results as you type? Would Siri lose its “conversational” approach, or provide buttons to reply with “Yes” or “No” to its further questions?

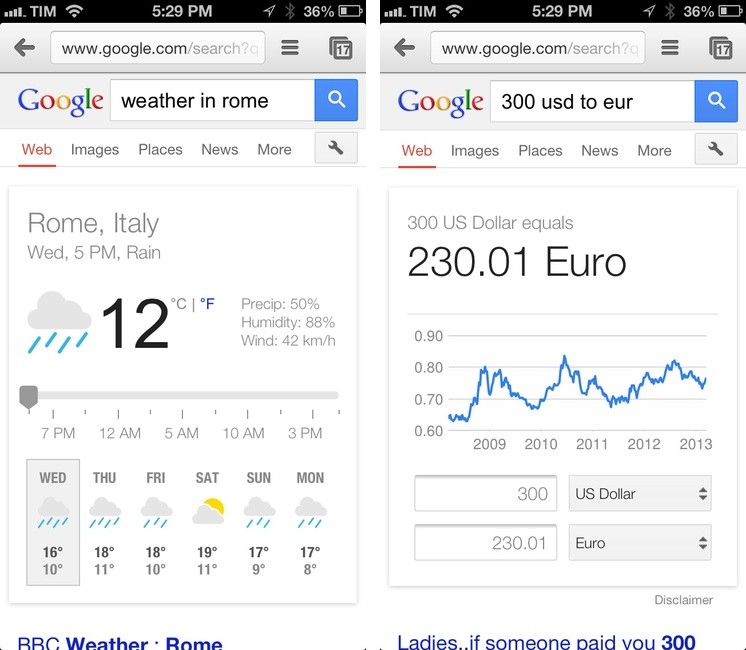

Text, however, also has its advantages: text is universal, free of voice alterations (think accents and dialects), and independent of surrounding noise and microphone proximity. With a textual Siri, Apple could keep its users within its control by letting them ask for restaurant suggestions, weather information, unit conversions, or sports results without having to open other apps or launch Google.

It’s just absurd to think semantic search integration can only be applied to voice recognition, especially in the current version of Siri. I agree with Kontra: Siri isn’t really about voice.

More importantly: if Google can do it, why can’t Apple?