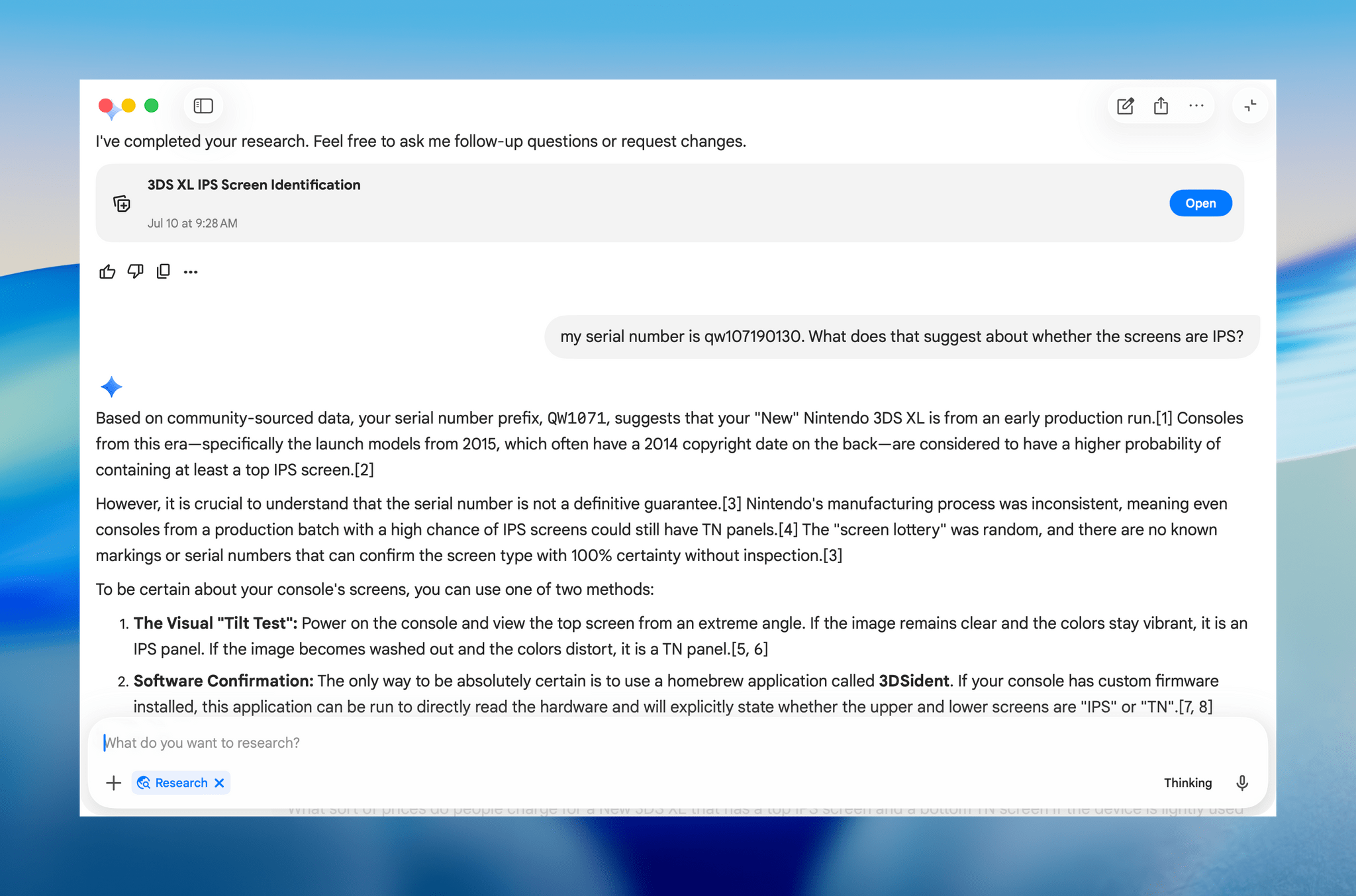

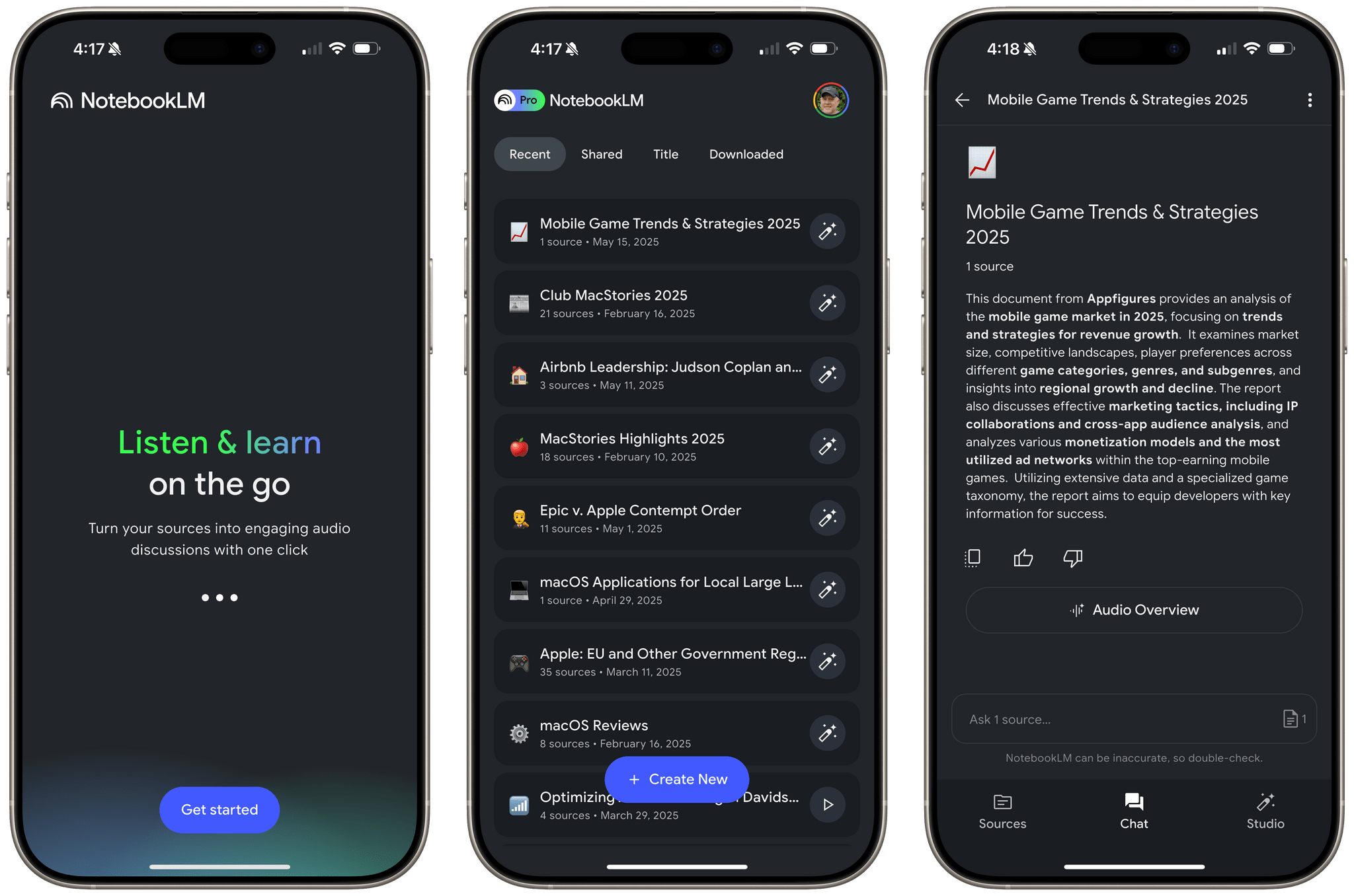

Google’s AI research tool NotebookLM dropped on the App Store for iOS and iPadOS a day earlier than expected. If you haven’t used NotebookLM before, you feed it source materials like PDFs, text files, MP3s, and more. Once your sources are uploaded, you can use Google’s AI to query them, asking questions and creating materials that draw on what you’ve added.

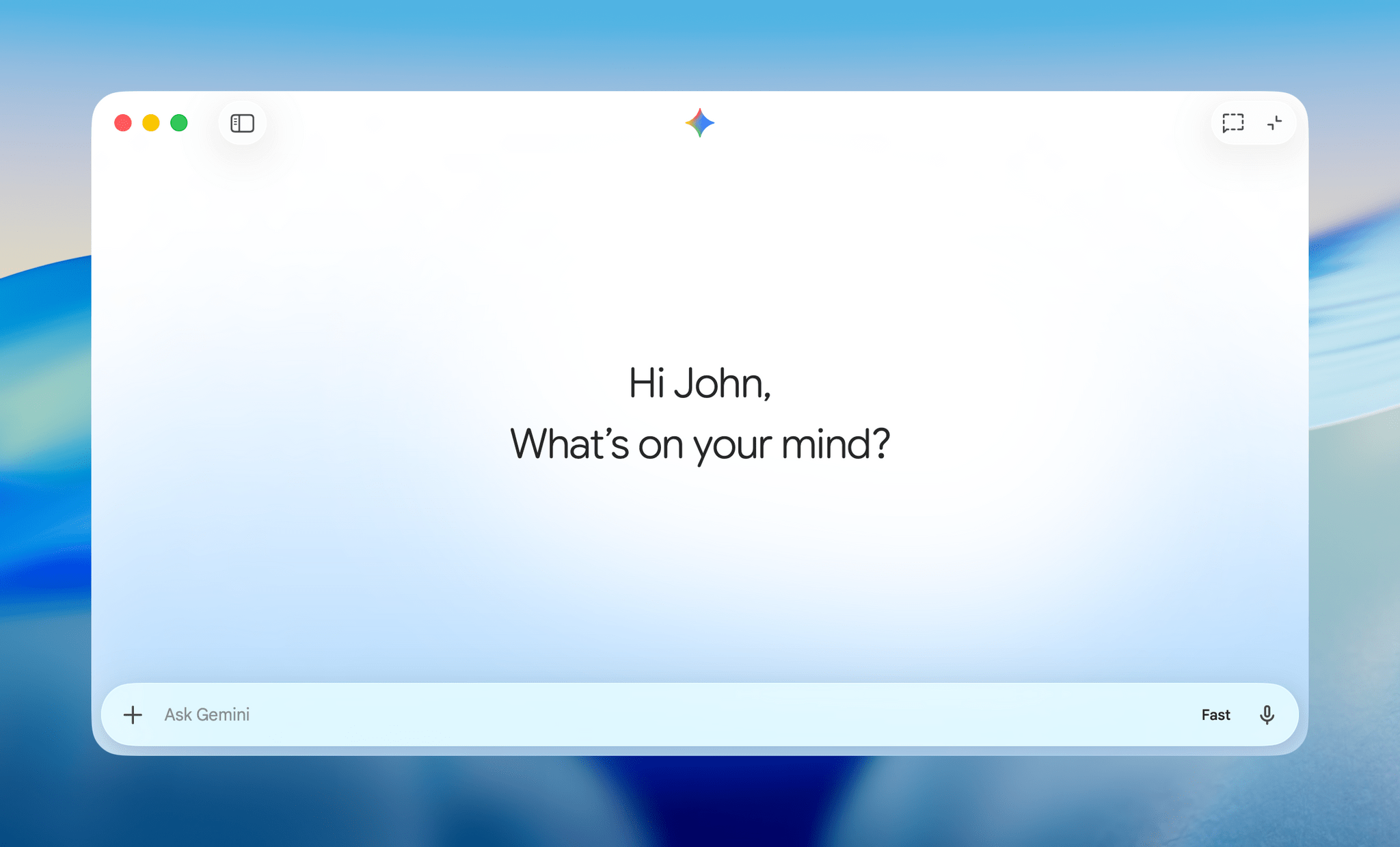

NotebookLM’s web app is one of the best AI tools I’ve used, which is why I was excited to try it on the iPhone and iPad. I’ve only played with it for a short time, but so far, I like it a lot.

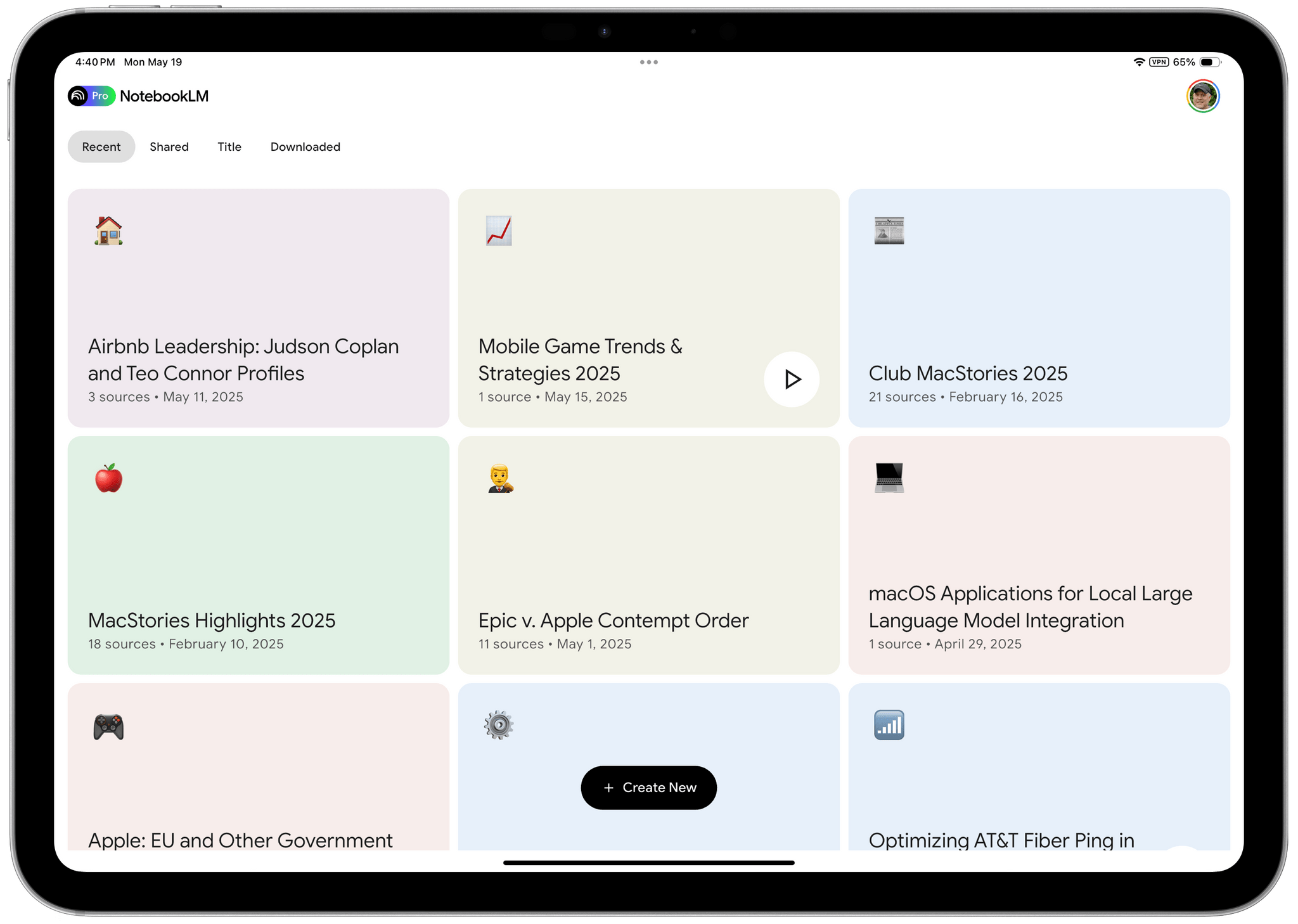

Just like the web app, you can create, edit, and delete notebooks, add new sources using the native file picker, view existing sources, chat with your sources, create summaries and timelines, and use the Studio tab to generate a faux podcast of the materials you’ve added to the app. Notebooks can also be filtered and sorted by Recent, Shared, Title, and Downloaded. Unlike the web app, you won’t see predefined prompts for things like a study guide, a briefing document, or FAQs, but you can still generate those materials by asking for them from the Chat tab.

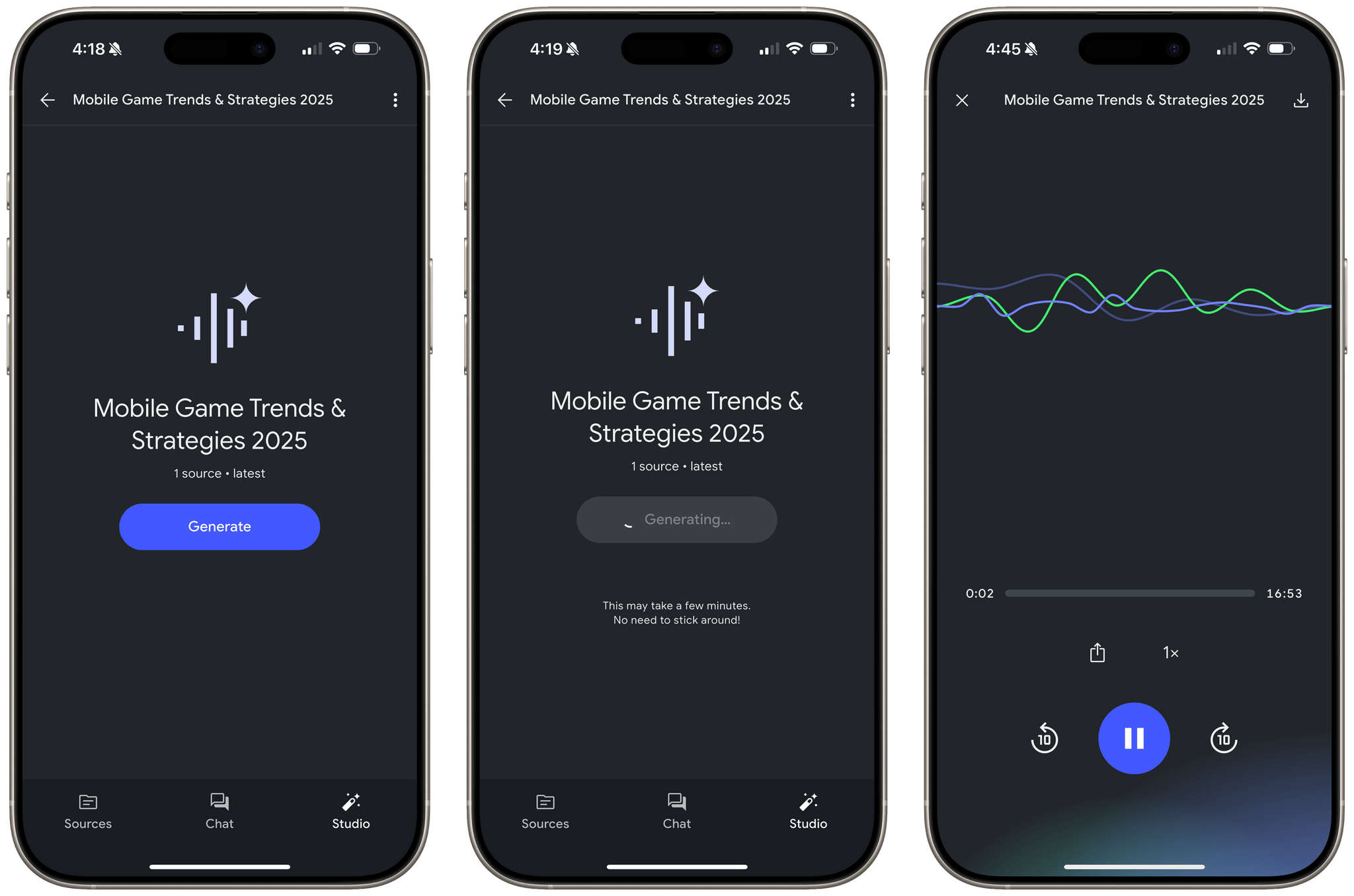

NotebookLM’s native iOS and iPadOS app is primarily focused on audio. The app lets you generate audio overviews from the Chat tab and ‘deep dive’ podcast-style conversations that draw from your sources. The generated audio can also be downloaded, so you can listen later whether or not you have an Internet connection. Playback controls are basic and include buttons to play and pause, skip forward and back by 10 seconds at a time, control playback speed, and share the audio with others.

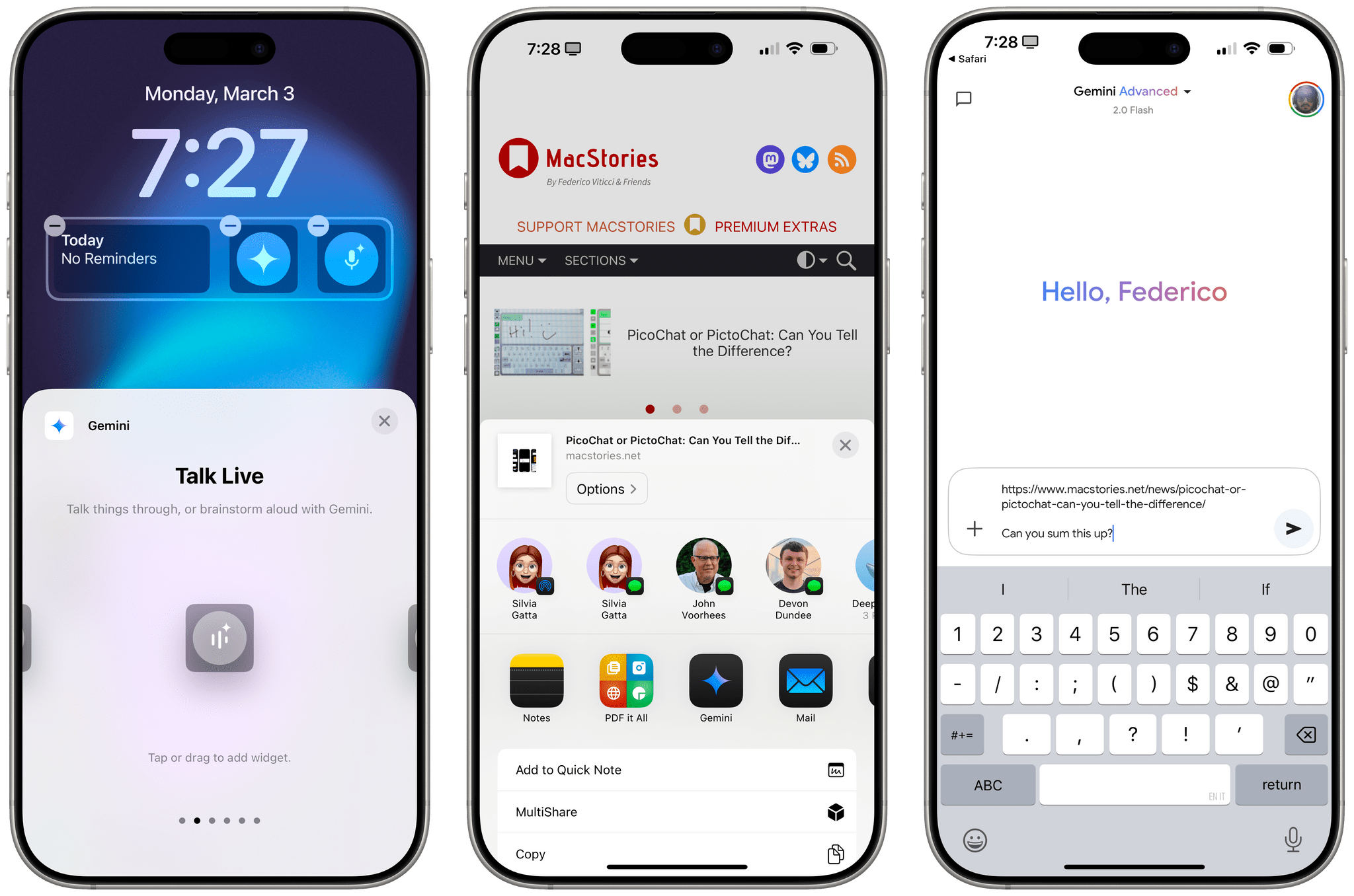

What you won’t find is any integration with features tied to App Intents. That means notebooks don’t show up in Spotlight Search, and there are no widgets, Control Center controls, or Shortcuts actions. Still, for a 1.0, NotebookLM is an excellent addition to Google’s AI tools for the iPhone and iPad.

NotebookLM is available to download from the App Store for free. Some NotebookLM features are free, while others require a subscription that can be bought via In-App Purchase in the App Store or from Google directly. You can learn more about the differences between the free and paid versions of NotebookLM on Google’s blog.