Joanna Stern of The Wall Street Journal, who interviewed Craig Federighi, Apple’s Senior Vice President of Software Engineering, about the new security features coming to Apple’s platforms, reports that Apple has abandoned its plan to detect child sexual-abuse material on its devices. According to Stern:

Last year, Apple proposed software for the iPhone that would identify child sexual-abuse material on the iPhone. Apple now says it has stopped development of the system, following criticism from privacy and security researchers who worried that the software could be misused by governments or hackers to gain access to sensitive information on the phone.

Federighi told Stern:

Child sexual abuse can be headed off before it occurs. That’s where we’re putting our energy going forward.

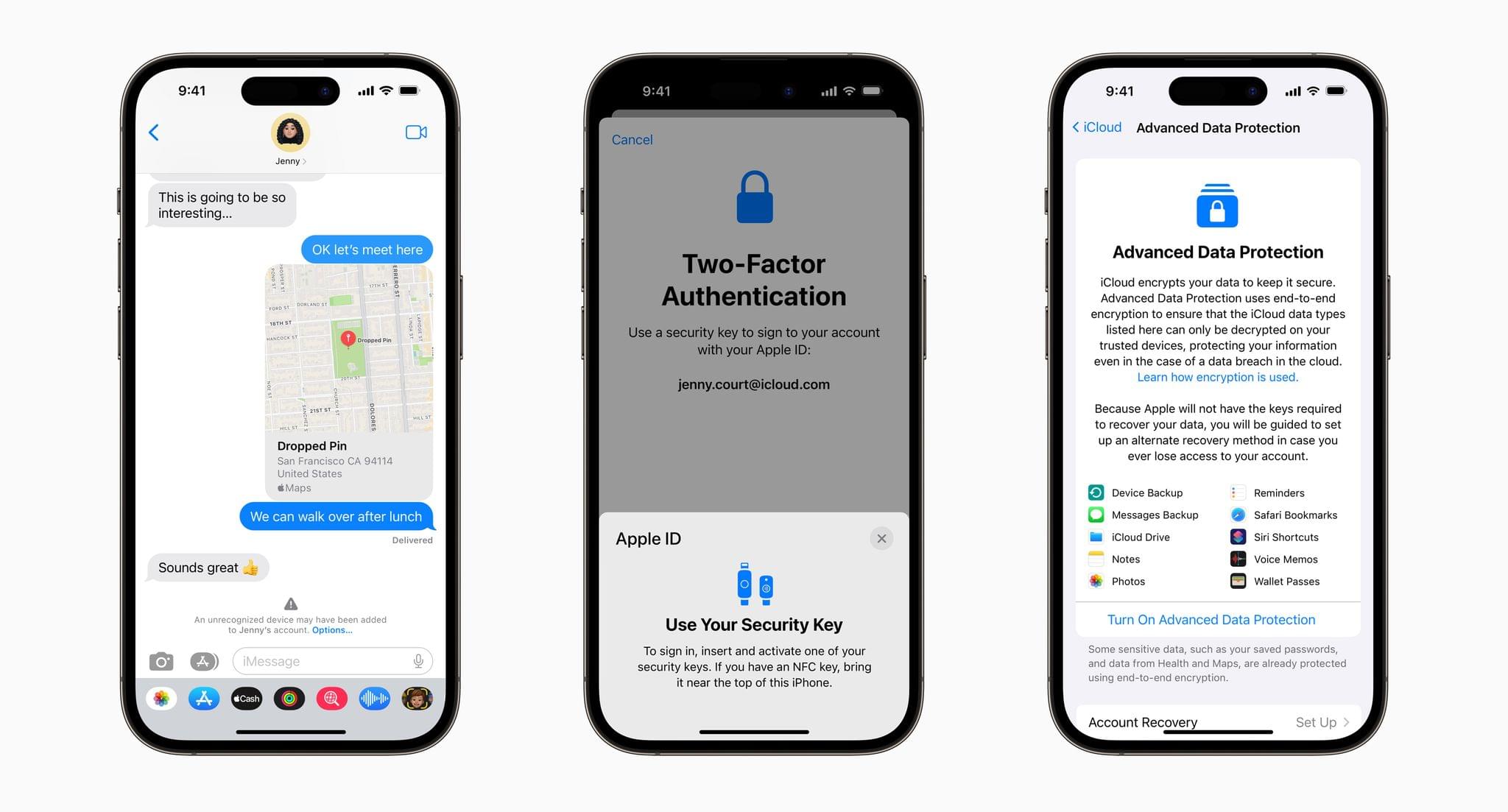

Apple also told The Wall Street Journal that Advanced Data Protection, which allows users to opt into end-to-end encryption of new categories of personal data stored in iCloud, will launch in the US this year and globally in 2023.

For an explanation of the new security protections announced today, be sure to catch Joanna Stern’s full interview with Craig Federighi.