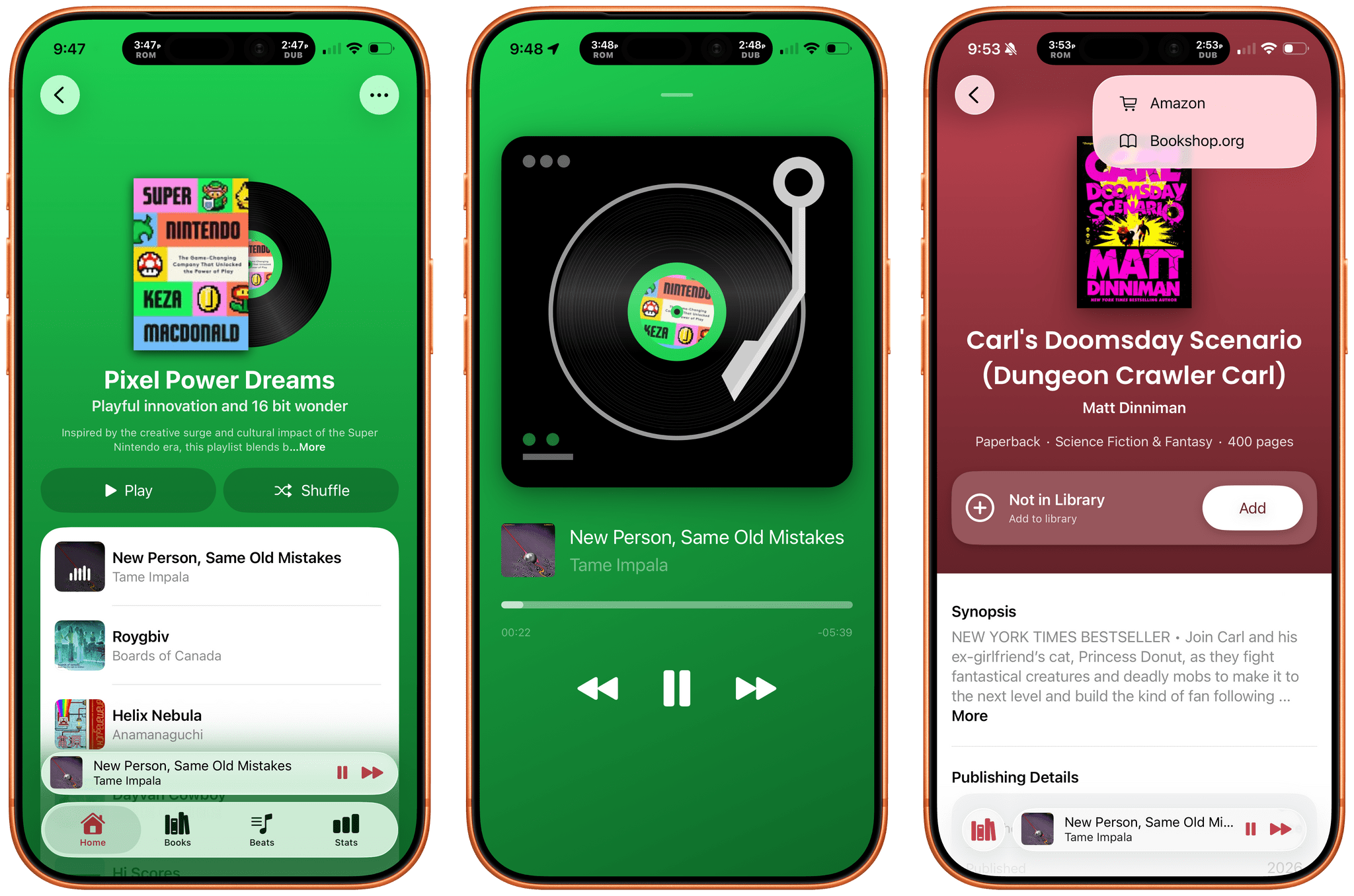

Tracking the books you read is well-trodden App Store ground. So is making music playlists. But what if you combined the two? The result is Book Beats, a new iPhone and iPad app from Olea Studios’ Casey and Lisa Doyle, that does just that, managing to elevate books and music in a new and unique way by bringing reading and listening together. It’s a terrific example of an app made by people who deeply understand its subject matter and bring AI to bear in a focused, tasteful way that elevates the app’s experience without being gimmicky.

Posts tagged with "music"

Book Beats: Reading to the Rhythm

Apple Is Working on an AI Music Tagging System→

Music Business Worldwide (via MacRumors) is reporting that Apple is rolling out a voluntary metadata system for identifying AI-generated content on Apple Music called Transparency Tags. Introduced by Apple in a newsletter sent to music industry partners, Transparency Tags is:

> a system of disclosure labels that record labels and music distributors can begin applying to content delivered to Apple Music immediately, and will be required to use when delivering new content in [the] future.

According to Music Business Worldwide, the tagging system covers artwork, tracks, composition elements such as lyrics, and music videos. The publication quotes Apple’s newsletter as explaining that it views Transparency Tags as part of an initial effort toward giving the music industry what it needs to develop AI policies.

Although there are currently no consequences for failing to properly tag AI-generated music, Transparency Tags are a step in the right direction. The music industry and other creative industries are all grappling with how to deal with a flood of AI-generated content in a rapidly evolving environment. I don’t expect to see one approach sweep across industries any time soon, but it’s encouraging to see Apple taking a lead in pushing the conversation forward.

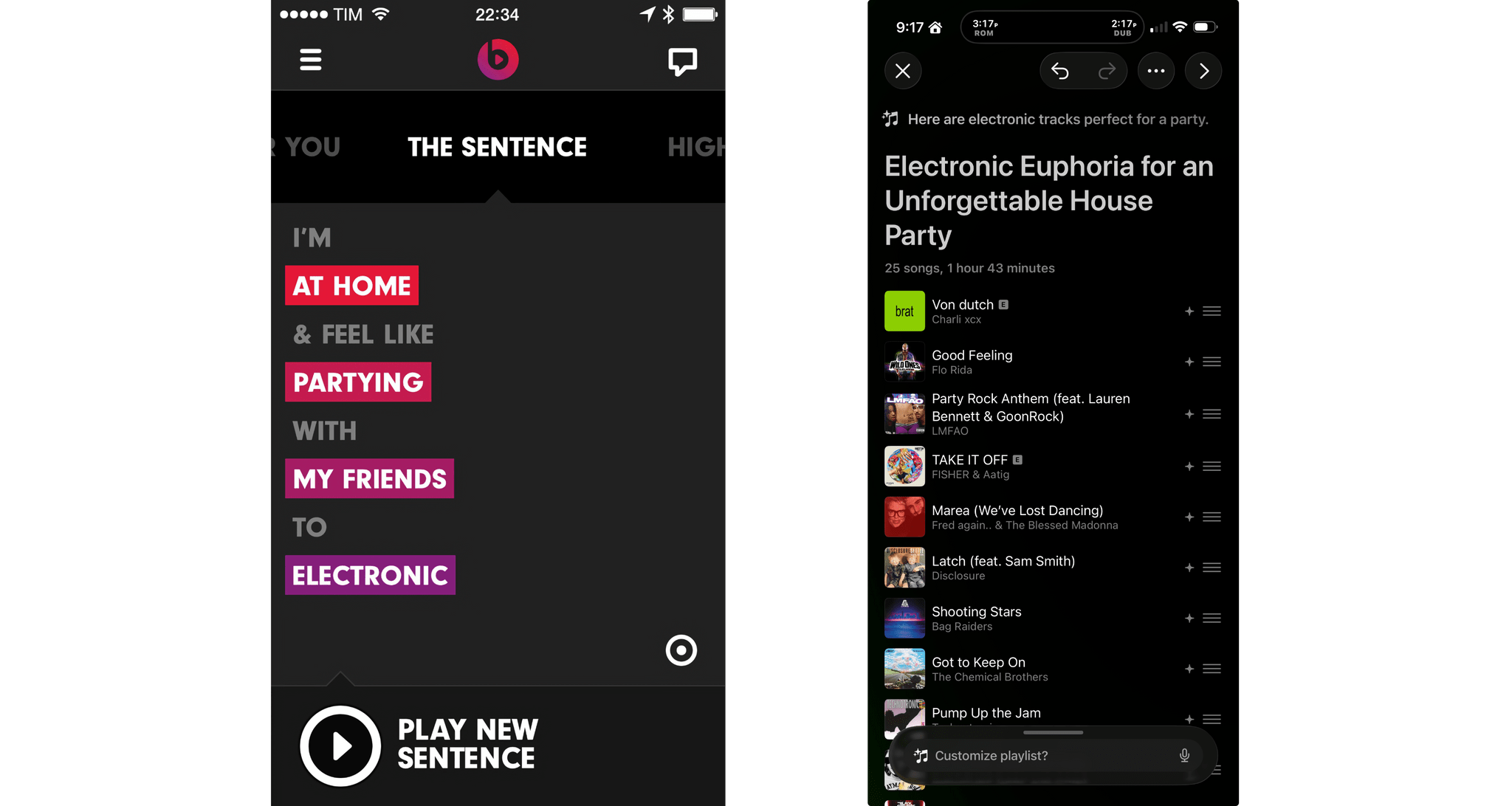

The Sentence Returns with iOS 26.4, Sort of

Yesterday, Apple released developer beta 1 of iOS 26.4, which among other things adds a feature to the Music app that uses Apple Intelligence to generate a playlist from a short description of what the user wants to hear. That immediately reminded Federico and me of The Sentence, a Beats Music feature that sadly didn’t survive the app’s acquisition by Apple.

The Sentence allowed subscribers to describe the music they wanted to hear based on a Mad Libs-style sentence construction. Every sentence was structured as “I’m [location] & feel like [mood] with [person/group] to [music genre].” The feature was a fantastic innovation that made playlist creation fun and easy. As Federico described it in 2014:

> It’s The Sentence, though, that steals the spotlight in how it combines regular, Pandora-like song shuffling with a context/mood-based menu to tell Beats what you want to listen to. The Sentence, as the name implies, lets you construct a sentence using variable tokens for location, mood, user, and music genre. You can request things like “I’m at my computer and feel like dancing with myself to pop”, “I’m in the car and feel like driving with my friends to indie”, or more absurd contexts such as “I’m underpaid and I feel like shoveling snow with my lover to metal”. As reported by Re/code [Ed. note: This is a dead link], Beats explained that “the content, and the filters, are selected and tuned by humans, and an algorithm generates the playlist from your choices”.

Our Final 2025 MacStories Setups Update→

John: As 2025 comes to an end, Federico and I thought we’d cap off the year with a final update on our setups. We just went through this in November, but both Federico and I decided to take advantage of Black Friday sales to improve our setups in very different ways. Let’s take a look.

My changes were primarily to my office setup. I’ve wanted a gaming PC for a long time, but I never had a good place to set one up. The solution was to go with a high-end mini PC, the GMKtec EVO-X2, which features a Strix Halo processor, 64GB of RAM, and a 2TB SSD. It came with Windows installed, but after a few days, I installed Bazzite, an open-source version of SteamOS, which makes it dead simple to access my Steam videogame library.

Two things kept me from getting a PC earlier. The first was space, which the EVO-X2 takes care of nicely because it’s roughly the size of the Mac mini before its recent redesign.

The second and bigger issue, though, was my Studio Display. It’s an excellent screen, but it’s showing its age with its 60Hz refresh rate and 600 nits of brightness. Plus, with one Thunderbolt port for connecting to your Mac and three USB-C ports, the Studio Display is limiting. Without HDMI or DisplayPort, connecting it to other video sources like a PC or game console is nearly impossible.

So I also bought a deeply discounted ASUS ROG Swift 32” 4K OLED Gaming Monitor, which is attached to my desk using a VIVO VESA desk mount. I’d wanted a bigger screen for work anyway, and with its 240Hz refresh rate and bright OLED panel, the ASUS has been excellent. However, the ASUS display really shines when connected to my GMKtec and Nintendo Switch 2. As I covered on NPC: Next Portable Console recently, the mini PC combined with a great monitor, which also allows me to stream games to my handhelds over my local network, was the missing link in my setup, delivering a flexibility I just didn’t have before.

Along with the gaming part of my desktop setup, I updated my desktop lighting with two Philips Hue Play Wall Washer lights and a Hue Play HDMI Sync Box 8K, which casts light against the wall behind my desk that’s synced with what’s onscreen. In fact, the Sync Box 8K works with all the Hue lights in my office, allowing me to create a more immersive environment when I’m gaming.

I’ve been using a handful of other accessories lately, too, including:

- an Anker C300 battery that has the capacity of roughly nine 10,000 mAh batteries, allowing me to charge multiple devices without thinking about whether my battery has enough charge,

- the Philips Hue Bridge Pro, an updated version of the bridge that connects the Philips Hue lights throughout my house,

- Kuxiu’s clever K1 Ultra Qi 2.2 battery pack, which can charge multiple devices quickly and act as an iPhone stand,

- Native Union’s Weighter, a paperweight-like accessory for preventing cables from slipping off your desk, and

- the MOFT Dynamic Folio, an iPad case that folds into a wide variety of configurations for propping it up while working.

That’s it from me for 2025, folks. Enjoy the holidays! Things will be a little quieter at MacStories over the next couple of weeks as we unwind and spend time with family and friends, but we’ll be back with lots more before long.

Federico: For this final update to my setup before the end of the year, I focused on two key areas: audio and my living room TV setup.

The biggest – literally – upgrade for me this month has been switching from my previous LG 65” TV to a flagship LG G5 77” model. I’d been keeping an eye on this TV for a while: it’s LG’s first model to use Tandem OLED technology, and it boasts higher brightness in both SDR and HDR with reduced reflections thanks to the new panel. I took advantage of an incredible Black Friday deal in Italy to buy it at 50% off, and we love it. The TV rests almost flush against the wall thanks to its compact design, but since it’s not completely flush, it allowed us to re-install our Philips Hue Gradient Light Strip behind it. Since I was in a renovation mood and I also wanted to future-proof my setup for the Steam Machine in 2026, I also upgraded to a Hue Bridge Pro and replaced my previous Hue Sync Box with the latest 8K edition that is certified for HDMI 2.1 connections. Speaking of gaming: as I discussed this week on NPC, I got a Beelink SER9 Pro mini PC and installed Bazzite on it to get a taste for SteamOS in the living room; this one will eventually be replaced by a more powerful Steam Machine.

The other area of improvement was audio. I recently realized that I wanted to fully take advantage of Apple Music and Spotify’s support for lossless playback with wireless headphones, which is something that, alas, Apple’s AirPods Max do not support. So after much research, I decided to treat myself to a pair of Bowers & Wilkins Px8 S2, which are widely considered some of the best Bluetooth headphones that you can buy right now. But you may be wondering: how do you even connect these headphones to Apple devices that do not support Qualcomm’s aptX Lossless or Adaptive codecs? That’s where the BT-W6 Bluetooth dongle comes in. In researching this field, I came across this relatively new category of small Bluetooth adapters that plug into an iPhone’s USB-C port (they work on a Mac or iPad, too) and essentially override the device’s built-in Bluetooth chip. Once headphones are paired with the dongle rather than the phone, wireless streaming from Apple Music or Spotify will use aptX Lossless instead of Apple’s legacy SBC protocol. The difference in audio quality is outstanding, and it makes me appreciate the Px8 S2 for all they have to offer.

While I was at it, I also took advantage of another deal for a Sonos Move 2 portable speaker; we’ll have to decide whether this one will be permanently docked on my desk or next to a record player that Silvia is getting me for Christmas. (We don’t like surprises for each other, especially when it comes to furniture-adjacent shopping.)

So that’s my update before we go on break for a couple of weeks. I can already feel that, when I’m back, I’ll have some changes to cover on the software front. But we’ll talk about those in 2026.

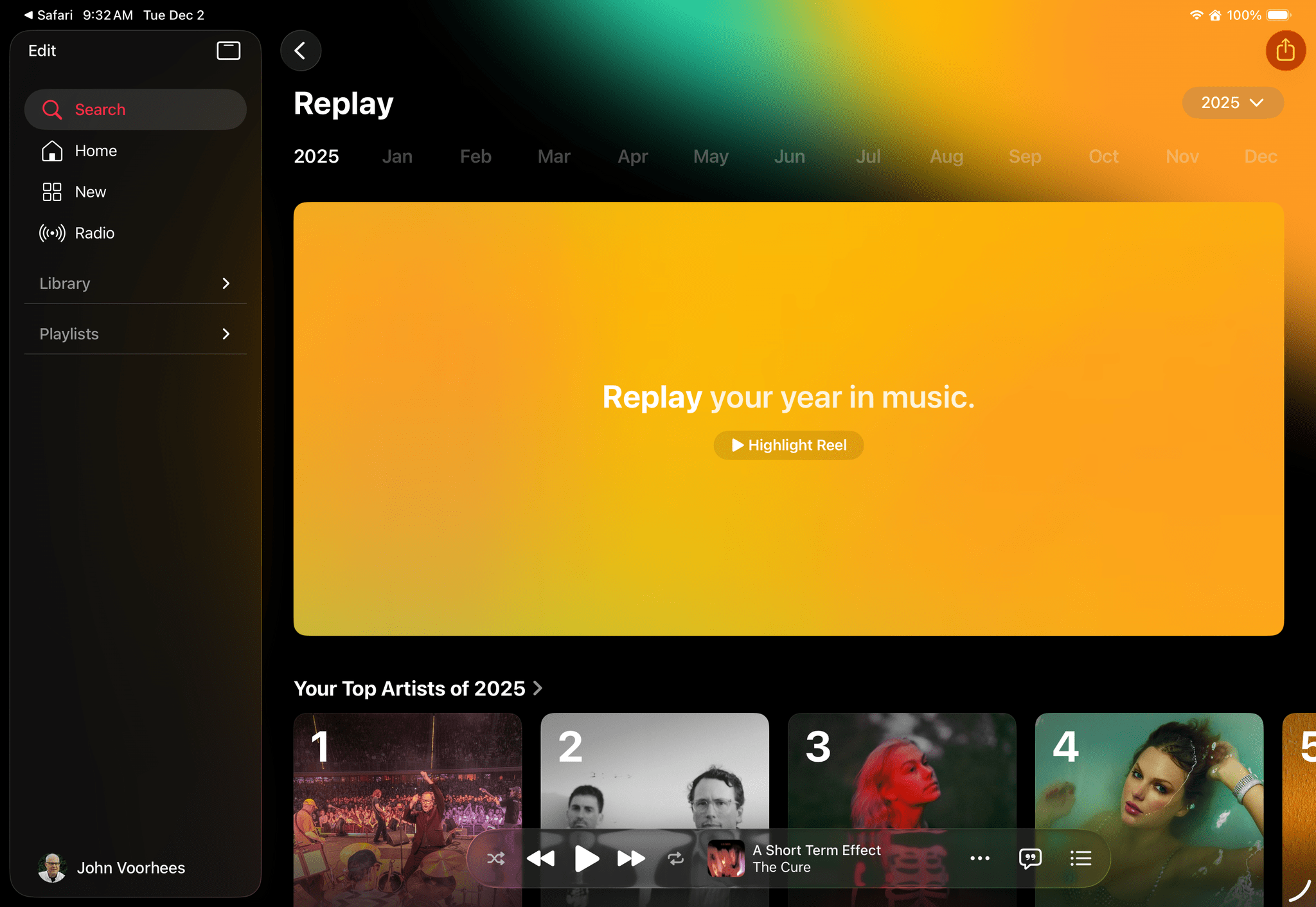

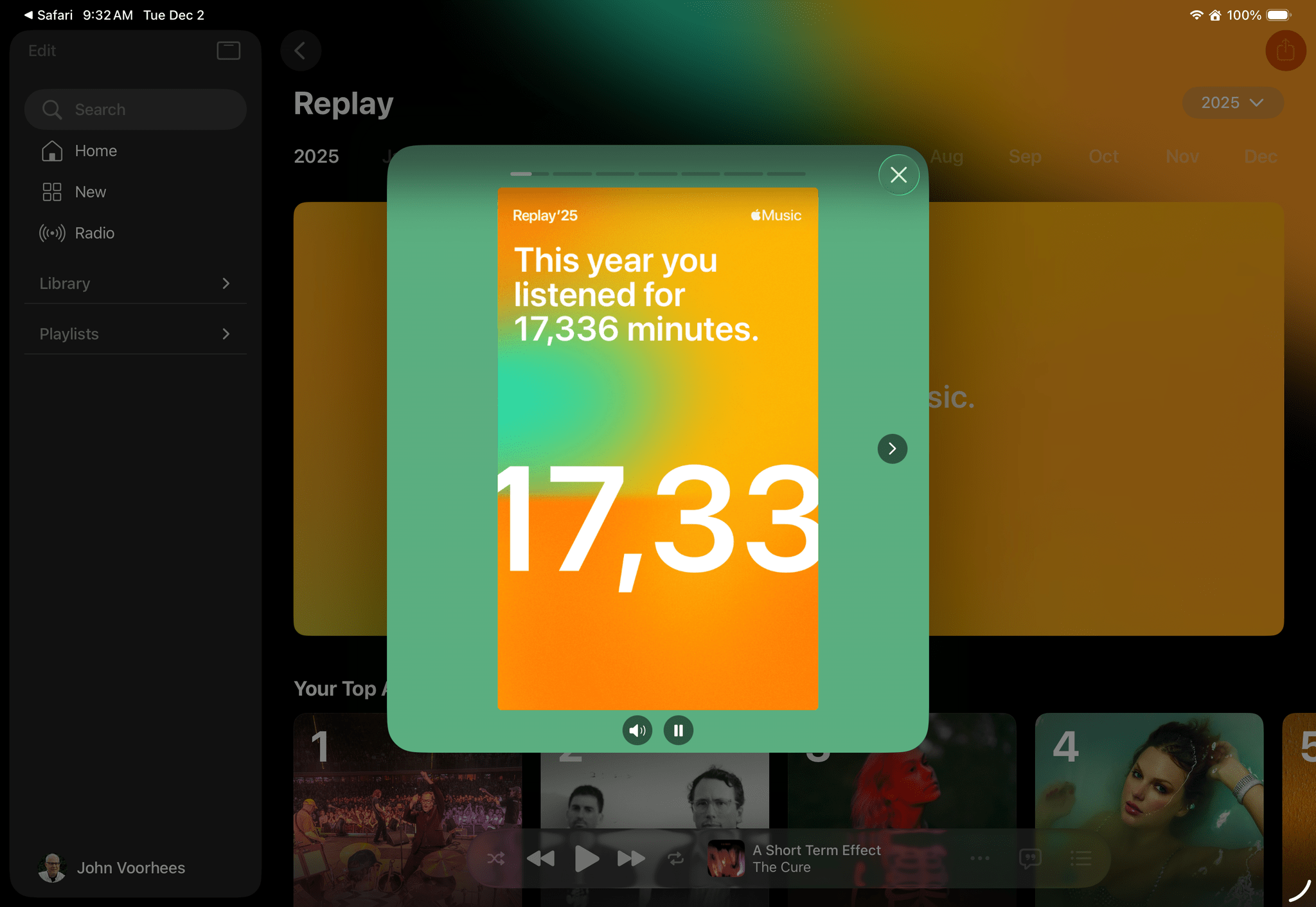

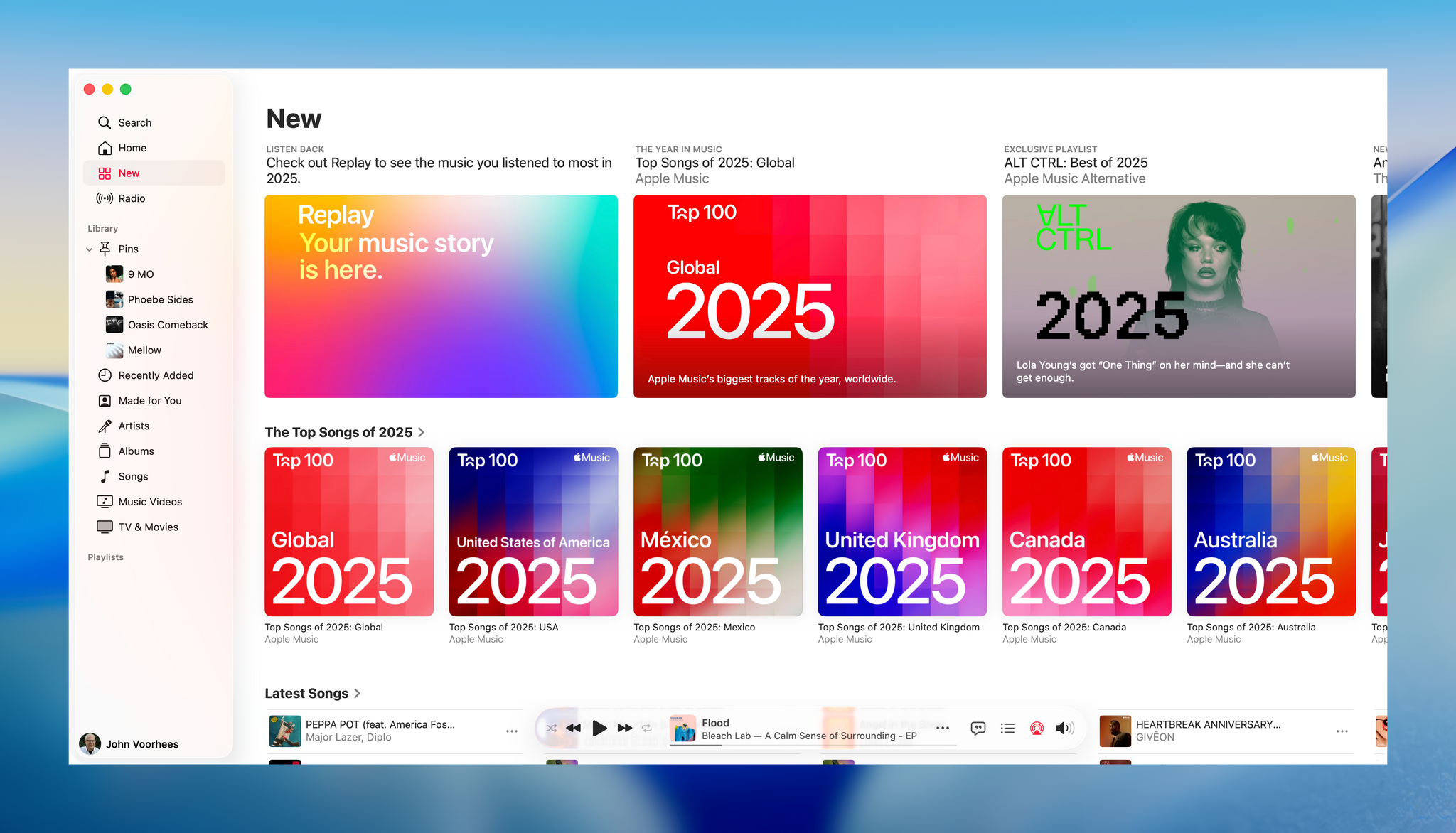

Apple Music’s Replay 2025 Is Live→

Apple Music has released its annual Apple Music Replay overview of subscribers’ listening statistics for 2025 along with top charts for 2025. The recap can be accessed on https://replay.music.apple.com, where you’ll find details about the music you listened to throughout the year, including your top albums, songs, artists, playlists, and genres.

As in past years, Replay ’25 begins with an animated highlights reel of your year in music set to the songs you enjoyed throughout the year. Replay also spotlights listening milestones like the total number of minutes listened and the number of artists and songs played. Subscribers can browse through their statistics by month, too. Your Replay ’25 playlist, which includes your 100 most listened-to songs, is available from the Replay website and Apple’s Music app. In addition to Replay ’25, Apple Music released top charts by genre and for countries around the world.

The timing of Replay ’25 is opportune for me, since Federico and I are beginning to assemble our lists of favorite music of 2025 for an upcoming episode of MacStories Unwind. Most of all, though, I just enjoy using Replay ’25 as a way to revisit my favorite music of the year.

To view your own Replay 2025 statistics, visit music.apple.com/replay.

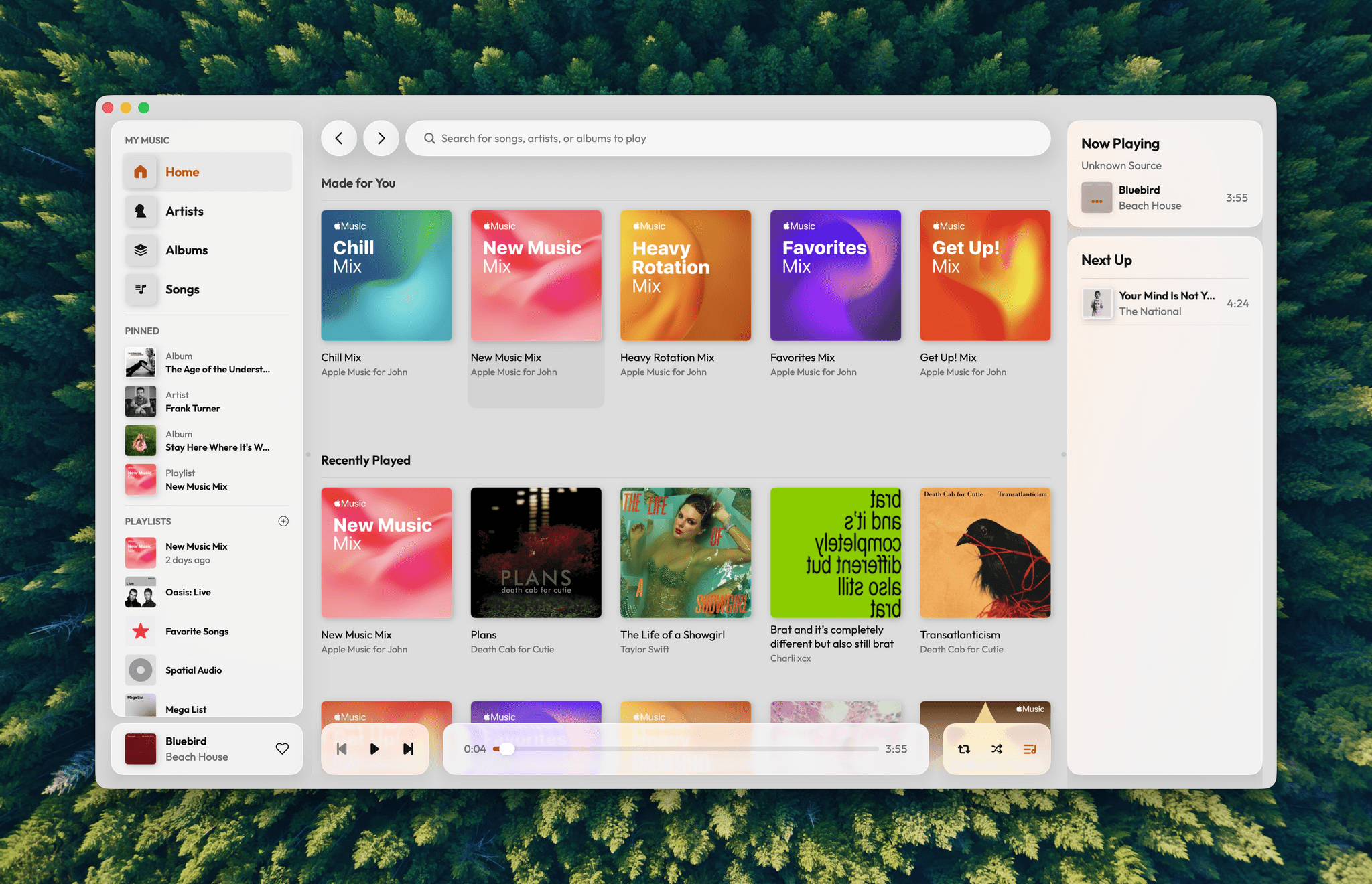

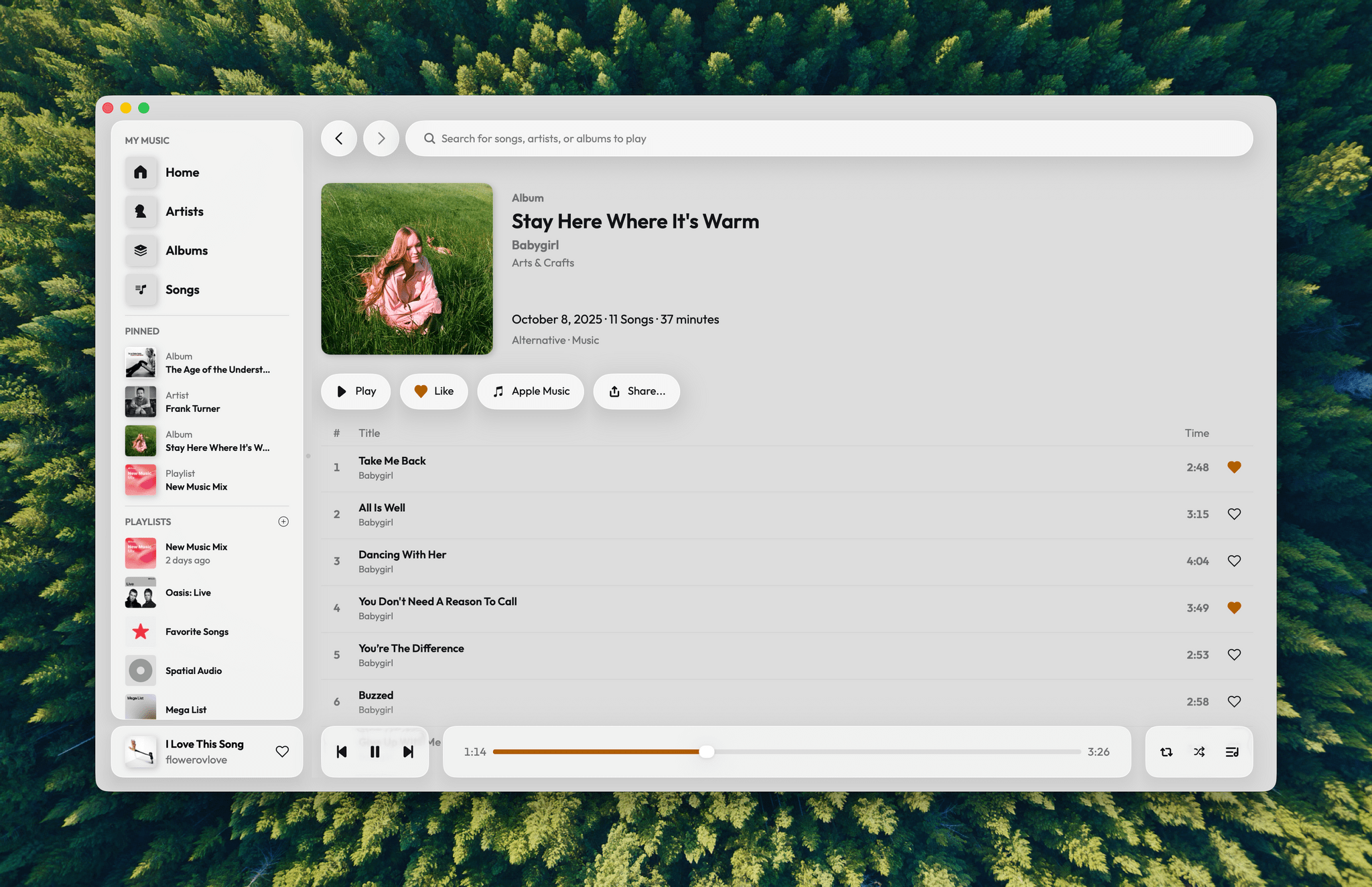

A Fresh Spin on Apple Music: Exploring Daft Music’s Liquid Glass Design

Daft Music asks a question that’s been on my mind for a long while: what if Apple Music started over with a new Mac app? As a service, I love Apple Music. I’ve been a subscriber since day one. But I’m less enamored with the Music app, especially on the Mac.

Music on the Mac has a long history dating back nearly 25 years to Apple’s acquisition of SoundJam MP, which became iTunes, an app for organizing your music collection, syncing it to your iPod, and, later, buying music. Over the years, iTunes expanded to encompass TV, movies, books, apps, and even courses, which was too much for one app. So Apple began dismantling iTunes, with the final blow coming in 2019 with the release of macOS Catalina. The update retired iTunes, replacing it with Apple Music and dedicated apps for other types of media.

Music was a significant break from the design of iTunes, but as a long-time user of both iTunes and Music, I found that what didn’t seem to change as much was the app’s underlying code. That’s consistent with reporting at the time that Music was an AppKit app built on the bones of iTunes. The choice to build Music for macOS on top of the iTunes foundation had the advantage of allowing Apple to preserve iTunes features that the Music app lacked on other platforms. However, the decision had a big downside, too. Built on what was already a nearly 20-year-old code base, Music inherited iTunes’ bugs, which have hung around unfixed for years.

That’s where Daft Music by Dennis Oberhoff comes in. It’s a simple, elegant Apple Music “do-over” that also happens to be the first Mac app I’ve tried that was built from the ground up for Liquid Glass. There’s a lot to cover, so let’s dig in.

Oasis Just Glitched the Algorithm→

Beautiful, poignant story by Steven Zeitchik, writing for The Hollywood Reporter, on the magic of going to an Oasis concert in 2025.

> It would have been weird back in Oasis’ heyday to talk about a big stadium-rock show being uniquely “human” — what the hell else could it be? But after decades of music chosen by algorithm, of the spirit of listen-together radio fracturing into a million personalized streams, of social media and the politics that fuel it ordering acts into groups of the allowed and prohibited, of autotuning and overdubbing washing out raw instruments, of our current cultural era’s spell of phone-zombification, of the communal spaces of record stores disbanded as a mainstream notion of gathering, well, it’s not such a given anymore. Thousands of people convening under the sky to hear a few talented fellow humans break their backs with a bunch of instruments, that oldest of entertainment constructs, now also feels like a radical one.

And:

> The Gallaghers seemed to be coming just in time, to remind us of what it was like before — to issue a gentle caveat, by the power of positive suggestion, that we should think twice before plunging further into the abyss. To warn that human-made art is fragile and too easily undone — in fact in their case for 16 years it was undone — by its embodiments acting too much like petty, well, humans. And the true feat, the band was saying triumphantly Sunday, is that there is a way to hold it together.

I make no secret of the fact that Oasis are my favorite band of all time, one that, very simply, defined my teenage years. They’re responsible for some of my most cherished memories with my friends, enjoying music together.

I was lucky enough to be able to see Oasis in London this summer. To be honest with you, we didn’t have great seats. But what I’ll remember from that night won’t necessarily be the view (eh) or the audio quality at Wembley (surprisingly great). I’ll remember the sheer joy of shouting Live Forever with Silvia next to me. I’ll remember doing the Poznan with Jeremy and two guys next to us who just went for it because Liam asked to hug the stranger next to you. I’ll remember the thrill of witnessing Oasis walk back on stage after 16 years with 80,000 other people feeling the same thing as me, right there and then.

This story by Zeitchik hit me not only because it’s Oasis, but because I’ve always believed in the power of music recommendations that come from other humans – not algorithms – who would like you to also enjoy something. And to do so together.

If only for two hours one summer night in a stadium, there’s beauty to losing your voice to music not delivered by an algorithm.

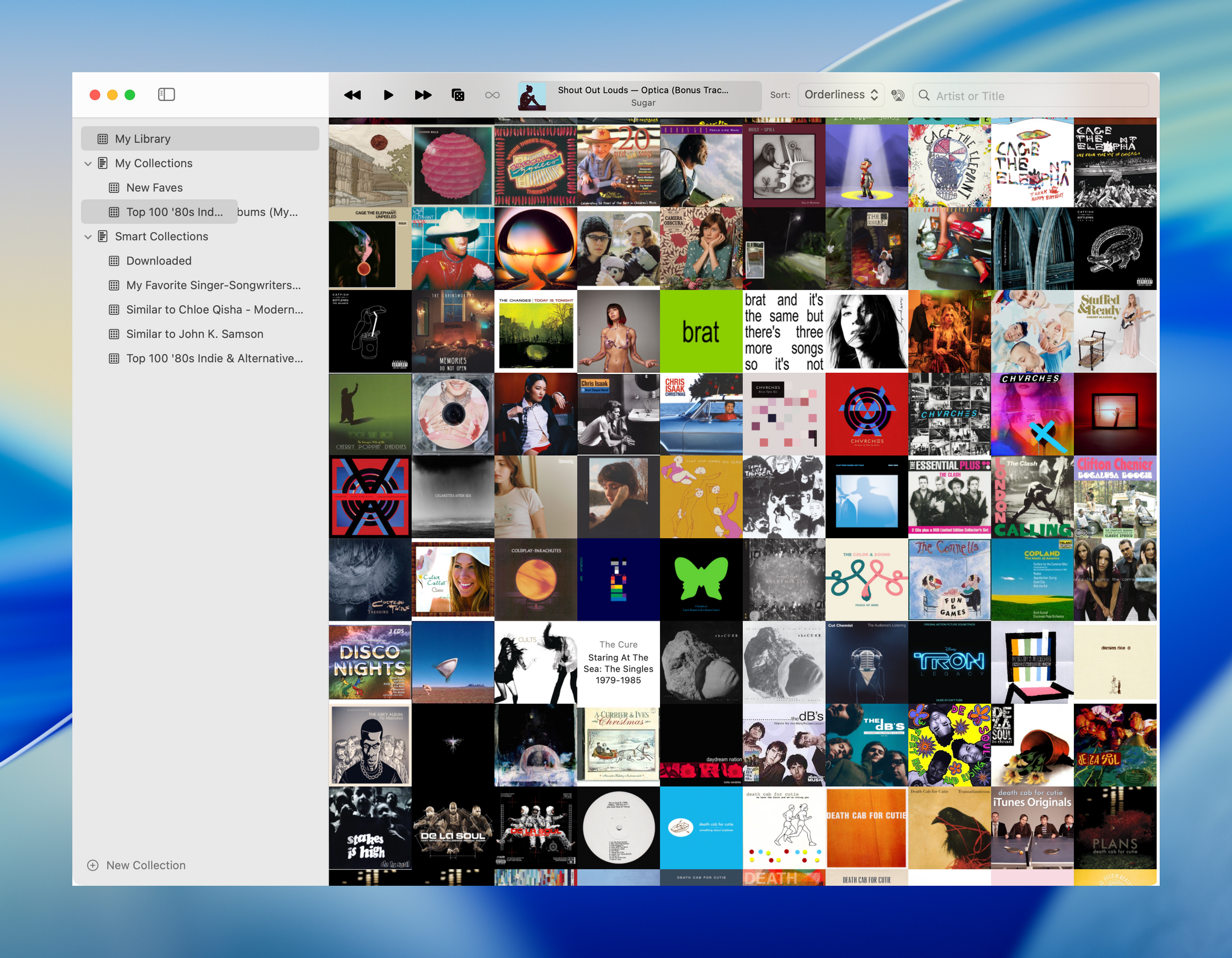

Longplay for Mac Launches with Powerful AI and Shortcuts Integration

Longplay by Adrian Schönig is an excellent album-oriented music app that integrates with Apple Music. The app started on iOS and iPadOS, then later added support for visionOS. With today’s update, Longplay is available on macOS, too, where it adds unique automation features.

If you aren’t familiar with Longplay, be sure to check out my reviews of version 2.0 for iOS and iPadOS and the app’s debut on the Vision Pro. I love the app’s album art-forward design, collection and queuing systems for navigating and organizing large music libraries, and many other ways to sort, filter, and rediscover your favorite albums. Here’s how Adrian describes Longplay in a post introducing the Mac version:

> It filters out the albums where you only have a handful of tracks, and focusses on those complete or nearly complete albums in your library instead. It analyses your album stats to help you rediscover forgotten favourites and explore your library in different ways. You can organise your albums into collections, including smart ones. And you can go deep with automation support.

With the introduction of Longplay for Mac, the app is now available everywhere, with feature parity across all versions. Plus, Longplay syncs across all devices, so your Collections and Smart Collections are available on every platform.

Hands-On with Sound Therapy on Apple Music

I’ve always been envious of people who can listen to music while they work. For whatever reason, music-listening activates a part of my brain that pulls me away from the task at hand. My mind really wants to focus on the lyrics, the style, the mix – all distractions from whatever it is I’m currently trying to do. It just doesn’t work for me.

But under the right circumstances and with the right kind of music, you can create an environment that is conducive to focus. At least, that’s the idea behind Apple’s recent collaboration with Universal Music Group. It’s called Sound Therapy, a research-based collection of songs meant to promote not only focus, but also relaxation and even healthy sleep.

The effort comes out of UMG’s Sollos venture, a group of scientists and music professionals focused on the relationship between music and wellness. Founded in 2023, the London-based incubator has used its findings to put together a library of music that, as Apple says, “harnesses the power of sound waves, psychoacoustics, and cognitive science to help listeners relax or focus the mind.”