Apple events are always full of little details that don’t make it into the main presentation. Some tidbits are buried in footnotes and others in release notes. Yesterday’s event was no exception, so after having a chance to dig in a little deeper, here is an assortment of details about what Apple announced.

HomePod

- You send an Intercom message on the HomePod by saying “Hey Siri, Intercom” and then speaking your message.

- You cannot combine an original HomePod and a HomePod mini into a stereo pair, but music playback will sync across a mix of HomePod models.

- Apple TV 4K is adding support for 5.1 and 7.1 surround sound and Dolby Atmos when paired with one or two original HomePods, but not with the HomePod mini.

- Apple is adding a feature to the HomePod that lets users set Apple Music tracks as alarms.

iPhone 12

- The spot on the side of the iPhone 12 is the mmWave 5G antenna, which is only included on US models.

- Apple has emailed iPhone Upgrade Program customers offering pre-approval ahead of iPhone 12 and 12 Pro pre-orders, which begin October 16 at 5 a.m. Pacific time.

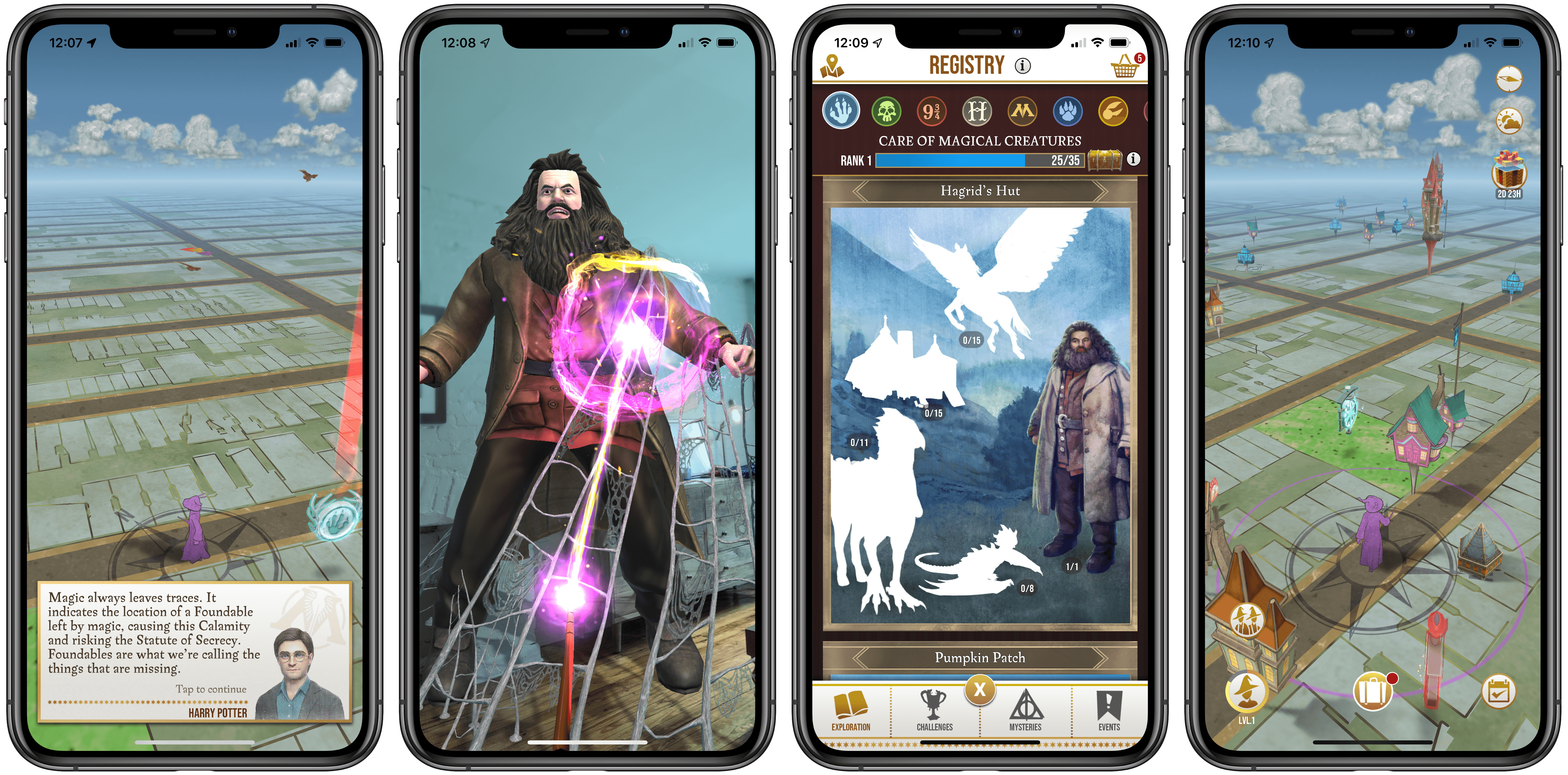

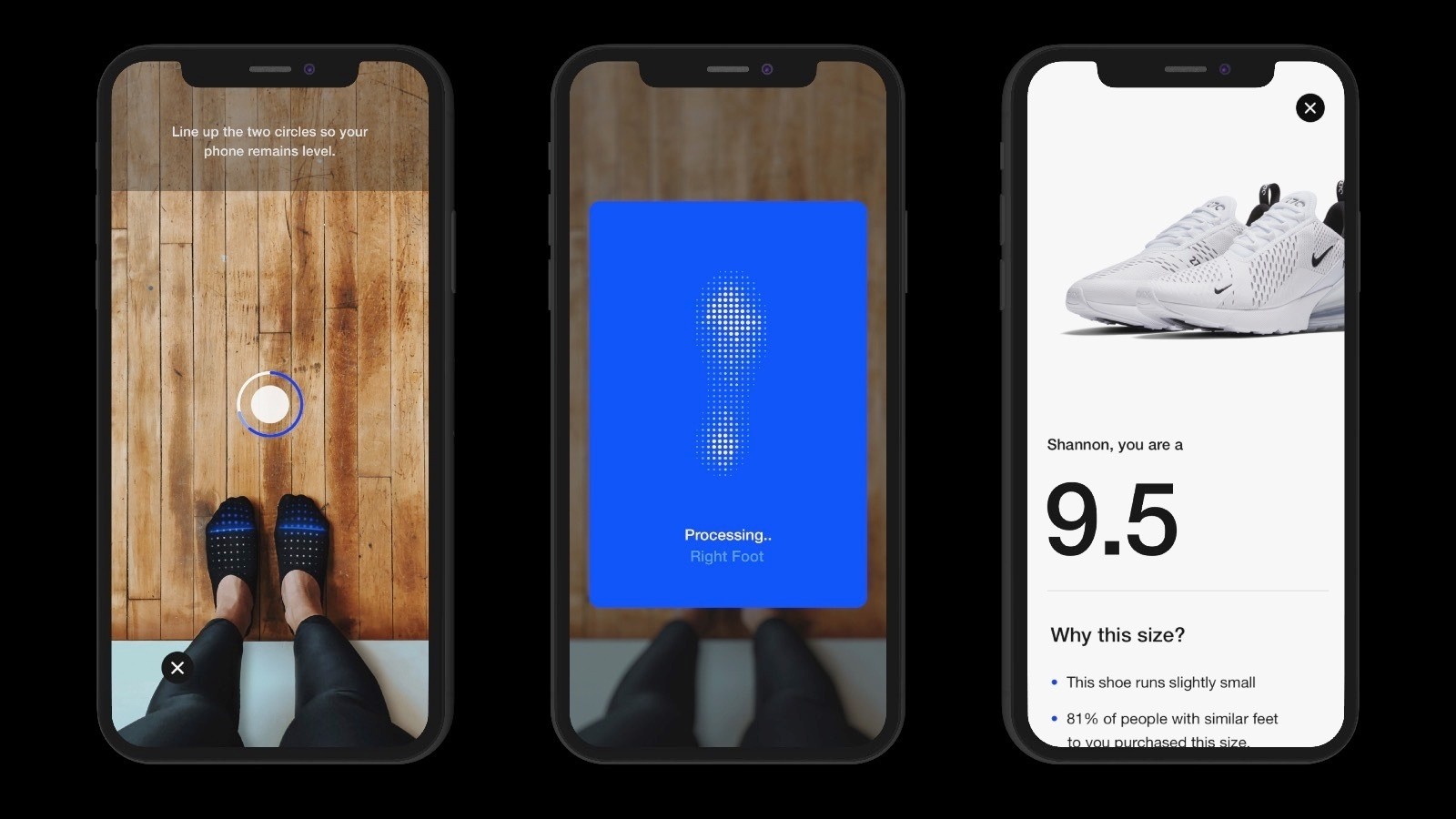

- Apple has published a story on its developer site about building apps with ARKit and RealityKit that take advantage of the LiDAR Scanner.

- The iOS 14.1 beta includes the updated Home app with the new Discover tab that recommends HomeKit accessories to users.

- Screen resolutions are all over the place with the new iPhones. It’s a little mind-bending, but for the details, check out this Twitter thread from Steve Troughton-Smith and chart from Joe Beninato.

- Apple is giving away three months of Apple Arcade to anyone who purchases an iPhone, iPad, iPod touch, Apple TV, or Mac.

Accessories

- Apple has reduced the price of EarPods by $10, to $19. The new 20W charger, which replaces the 18W model, is also $19, down from the $29 Apple charged for the 18W version.

- Belkin has announced a 3-in-1 MagSafe charging stand and a MagSafe car mount.

You can follow all of our October event coverage through our October 2020 event hub, or subscribe to the dedicated RSS feed.