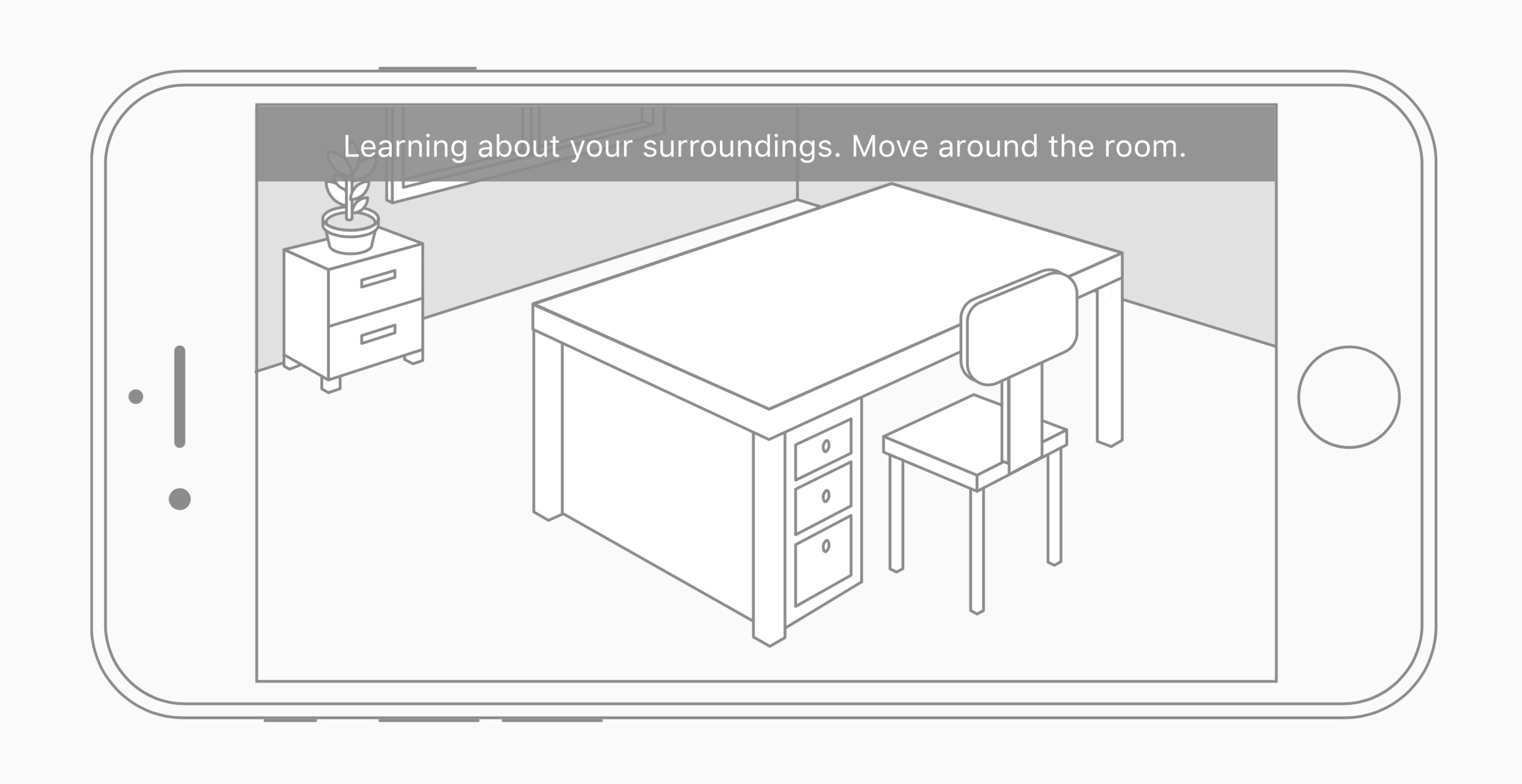

Apple’s developer site now contains Human Interface Guidelines for augmented reality apps. The guidelines are not hard-and-fast rules for developers working with ARKit, but rather best practices that Apple suggests for an ideal user experience. Guidelines that stand out include:

Use audio and haptic feedback to enhance the immersive experience. A sound effect or bump sensation is a great way to provide confirmation that a virtual object has come into contact with a physical surface or other virtual object.

To the extent possible, provide hints in context. Placing a three-dimensional rotation indicator around an object, for example, is more intuitive than presenting text-based instructions in an overlay.

Favor direct manipulation over separate onscreen controls. It’s more immersive and intuitive when a user can touch an object onscreen and interact with it directly, rather than interact with separate controls on a different part of the screen.

Suggest possible fixes if problems occur. Analysis of the user’s environment and surface detection can fail for a variety of reasons—there’s not enough light, a surface is too reflective, a surface doesn’t have enough detail, or there’s too much camera motion. If your app is notified of insufficient detail or too much motion, or if surface detection takes too long, offer suggestions for resolving the problem.
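As a rough illustration of that last guideline, here is a minimal Swift sketch that maps a detected problem to a user-facing suggestion. The `TrackingProblem` enum, the `suggestion(for:)` function, and the hint wording are all hypothetical names and text made up for this example, not part of Apple's guidelines. In a real ARKit app, the first two cases would most likely be derived from `ARCamera.TrackingState.Reason` (`.insufficientFeatures` and `.excessiveMotion`) inside the `session(_:cameraDidChangeTrackingState:)` callback, while the timeout would come from the app's own timer.

```swift
// Hypothetical stand-in for the problems an AR session might report.
// Assumption: a real app would build these from ARKit's
// ARCamera.TrackingState.Reason plus its own surface-detection timer.
enum TrackingProblem {
    case insufficientDetail
    case excessiveMotion
    case surfaceDetectionTimeout(seconds: Double)
}

// Returns a short, user-facing hint for the given problem.
// The wording is illustrative only.
func suggestion(for problem: TrackingProblem) -> String {
    switch problem {
    case .insufficientDetail:
        return "Try pointing at a surface with more texture, or turn on more lights."
    case .excessiveMotion:
        return "Try moving your device more slowly."
    case .surfaceDetectionTimeout(let seconds):
        return "Still looking for a surface after \(Int(seconds)) seconds. "
            + "Try pointing at a flat, well-lit area."
    }
}

// Example: show the hint for too much camera motion.
print(suggestion(for: .excessiveMotion))
```

Keeping the mapping in one plain function makes it easy to adjust the wording or add cases without touching the session-handling code.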

ARKit is a brand-new technology that opens up a world of possibilities to app developers. But alongside its potential for magical, immersive experiences is the potential for user frustration as developers learn the hard way what works best. Apple’s guidelines – though released later than I’m sure many developers would like – should help minimize those frustrations.