Aside from keeping our iPhones in our pockets more, I think the Apple Watch is compelling for another reason: communication. The ways in which Apple is allowing people to communicate via Apple Watch – taps, doodles, and, yes, even heartbeats – add up to a clever, discreet new paradigm that epitomizes the company’s mantra that the Watch is the most intimate and personal device it has ever created. I, for one, am very much looking forward to trying these features.

What’s even more compelling, though, in my view, is the engine that’s powering the delivery of said communication – namely, the Taptic Engine. Beyond its use for notifications and communication on the Watch, Apple has implemented its Taptic Engine in one other form: trackpads. Apple has put the tech into the new MacBook and the refreshed 13-inch Retina MacBook Pro. I had an opportunity to play with the Force Touch trackpad for about 30 minutes at my favorite Apple Store here in San Francisco, and came away very, very impressed.

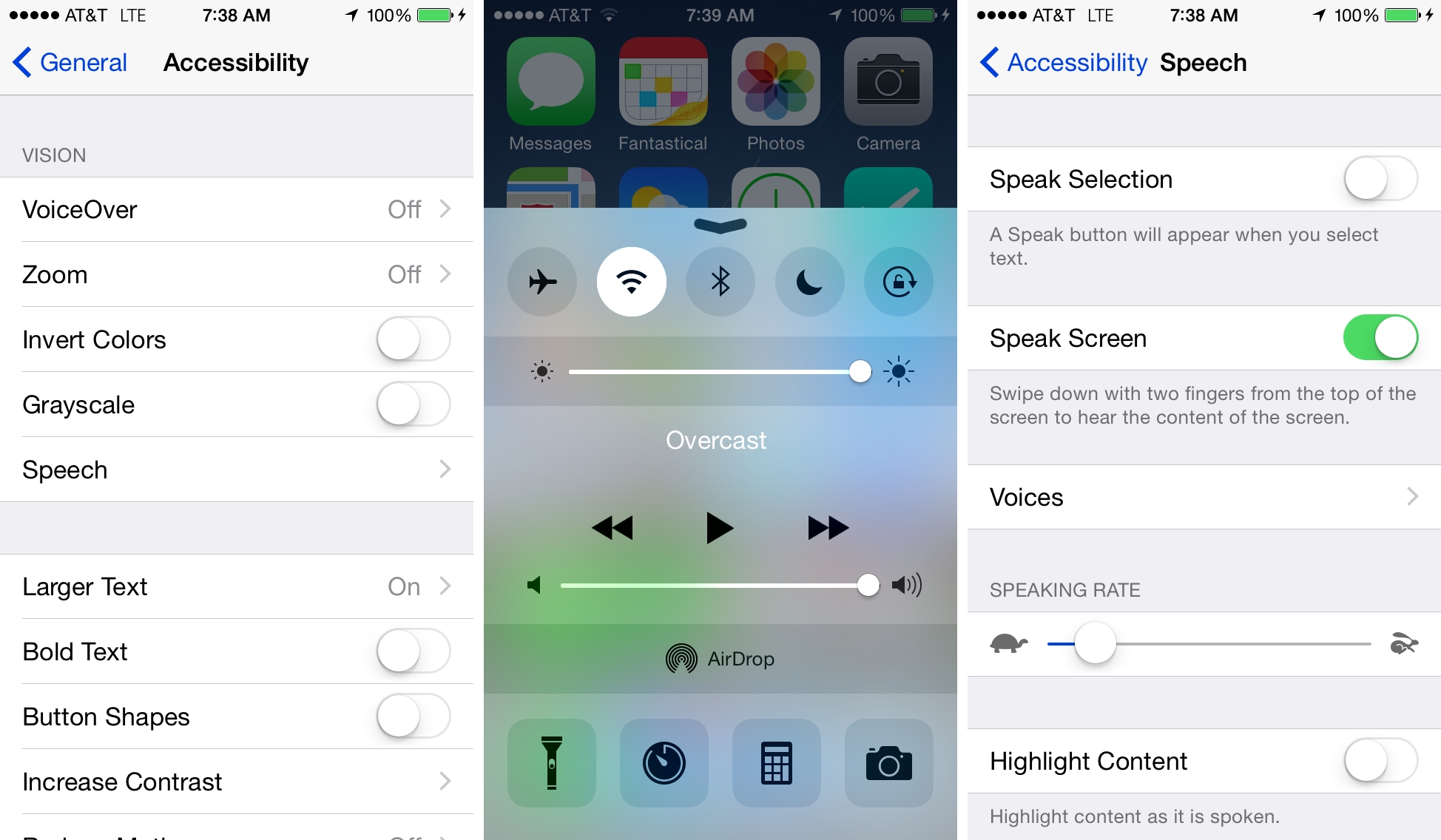

I find Apple’s embrace of haptic feedback fascinating and exciting, because the use of haptic technology has some very real benefits in terms of accessibility.